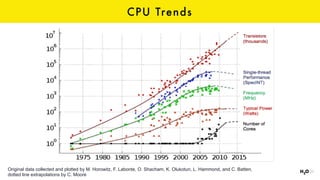

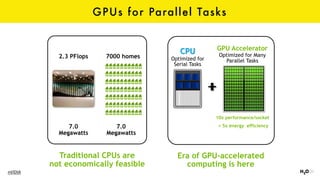

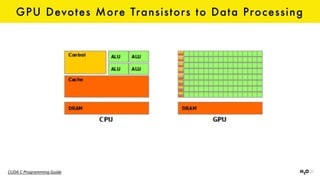

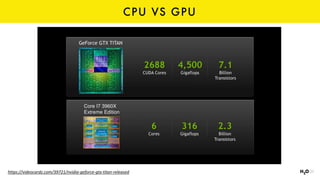

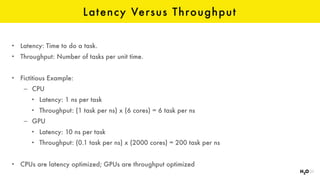

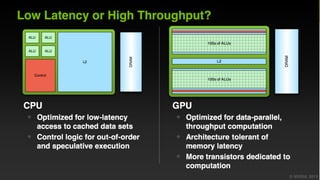

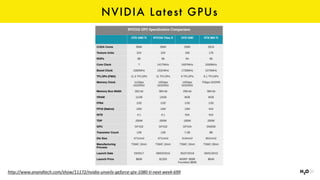

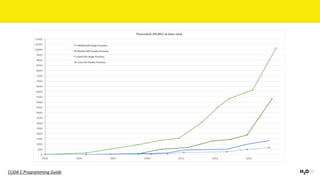

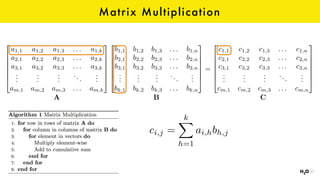

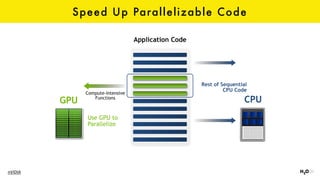

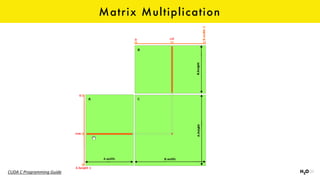

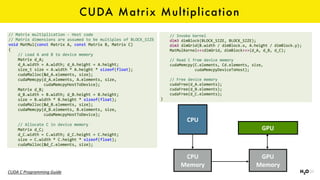

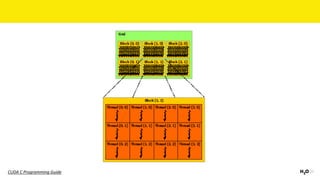

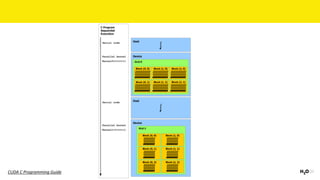

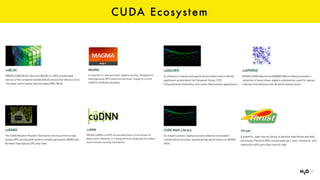

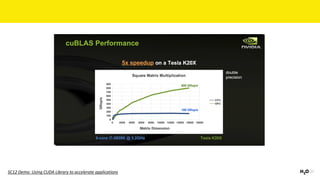

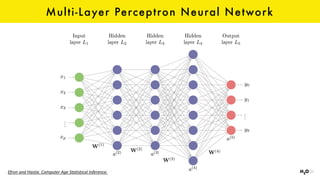

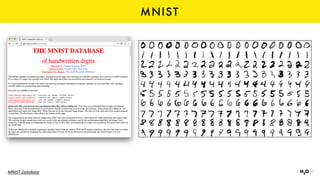

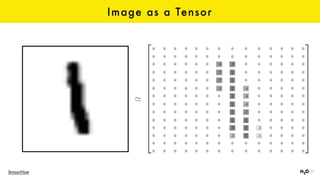

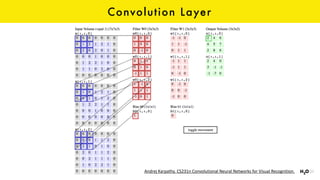

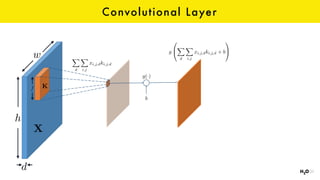

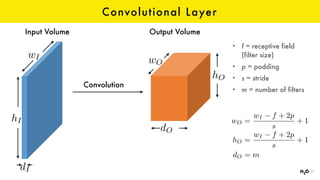

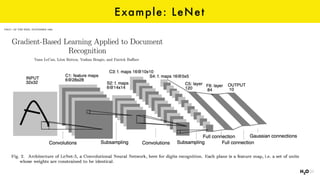

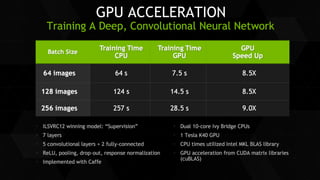

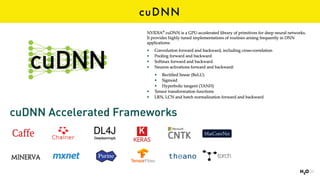

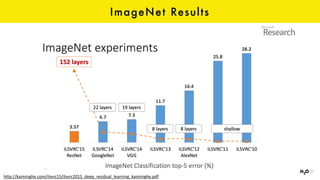

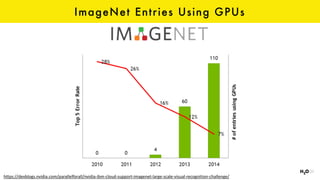

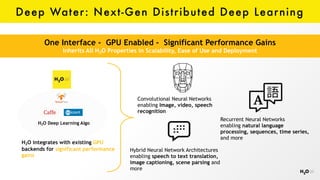

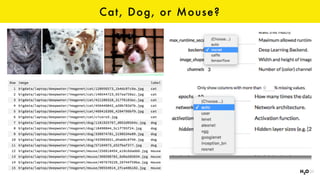

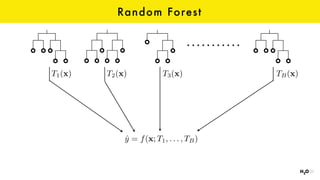

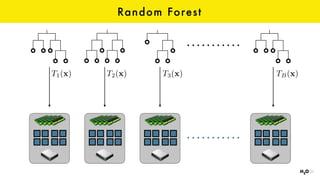

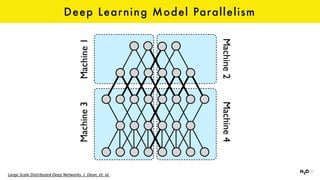

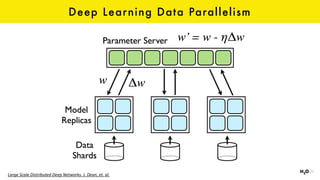

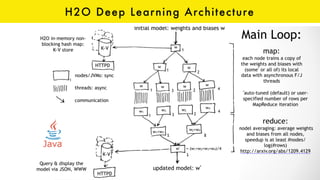

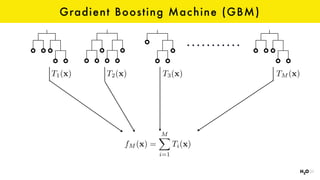

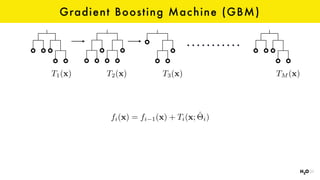

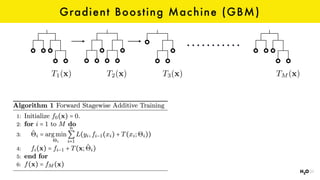

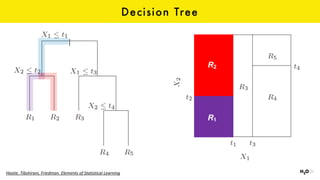

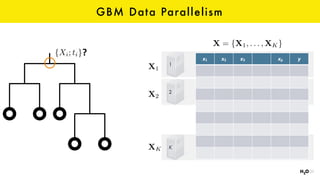

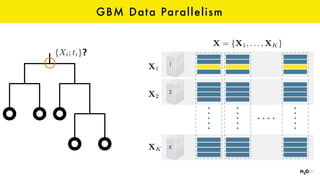

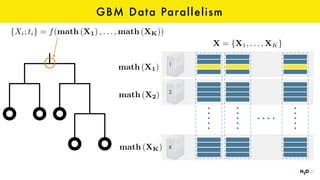

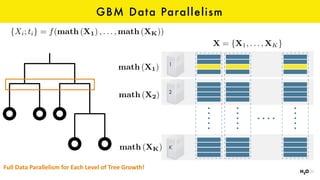

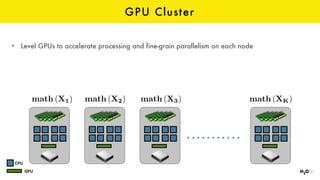

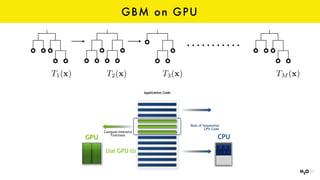

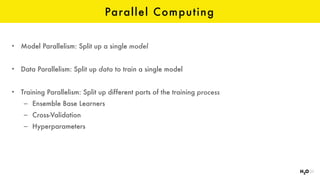

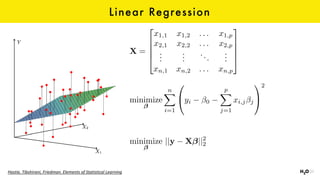

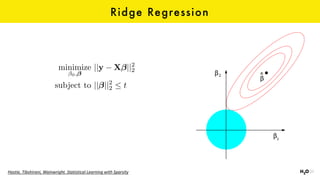

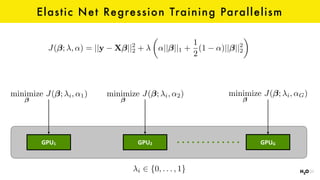

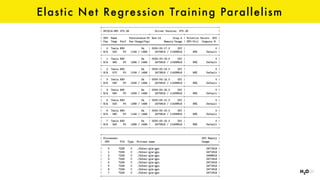

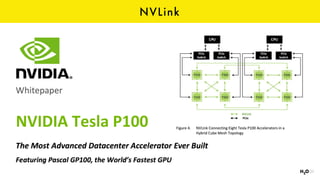

The document discusses the role of GPUs in machine learning, highlighting their efficiency advantage over traditional CPUs for parallel workloads, particularly in deep learning. It introduces CUDA as a programming model for harnessing GPU hardware and explores parallel computing paradigms such as model parallelism and data parallelism. The advantages of GPU-accelerated computing are presented alongside their impact on machine learning methods such as convolutional neural networks and gradient boosting machines.
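To make the data-parallelism idea concrete, here is a minimal CPU-only sketch. It assumes a toy one-parameter linear model (`y_hat = w * x`) trained with mean squared error, and a batch that splits into equal-sized shards. Threads stand in for GPUs, and the function names (`mse_grad`, `data_parallel_grad`) are hypothetical, not taken from the slides or any framework. Each simulated "device" computes the gradient on its own shard, and the per-shard gradients are then averaged, mirroring the all-reduce step used in real data-parallel training.

```python
from concurrent.futures import ThreadPoolExecutor


def mse_grad(w, xs, ys):
    """Gradient of mean squared error w.r.t. w for the toy model y_hat = w * x."""
    n = len(xs)
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def data_parallel_grad(w, xs, ys, num_workers):
    """Hypothetical data-parallel step: split the batch into equal shards,
    compute each shard's gradient concurrently (threads stand in for GPUs),
    then average the results (the 'all-reduce' step)."""
    # Assumption: equal shard sizes, so the average of shard means equals the full-batch mean.
    assert len(xs) % num_workers == 0, "sketch assumes equal-sized shards"
    size = len(xs) // num_workers
    shards = [
        (xs[i * size:(i + 1) * size], ys[i * size:(i + 1) * size])
        for i in range(num_workers)
    ]
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        grads = list(pool.map(lambda shard: mse_grad(w, shard[0], shard[1]), shards))
    # Average the per-replica gradients so every replica applies the same update.
    return sum(grads) / num_workers


if __name__ == "__main__":
    xs = [1.0, 2.0, 3.0, 4.0]
    ys = [2.0, 4.0, 6.0, 8.0]
    print(mse_grad(1.0, xs, ys))               # full-batch gradient
    print(data_parallel_grad(1.0, xs, ys, 2))  # same value, computed shard by shard
```

With equal shard sizes, averaging the shard gradients reproduces the full-batch gradient exactly, which is why data parallelism can spread one training step across devices without changing the result. Unequal shards would need a size-weighted average instead.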