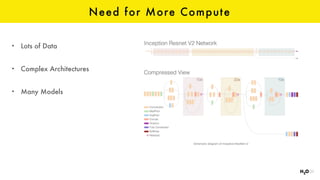

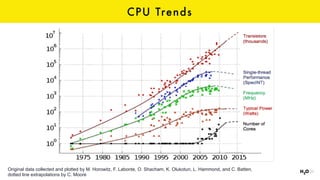

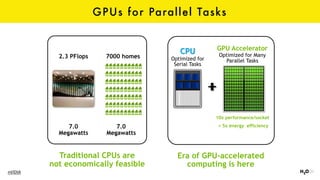

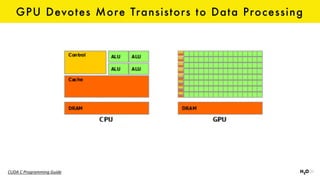

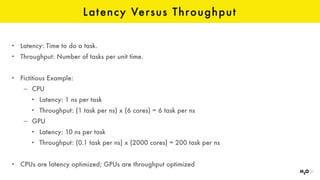

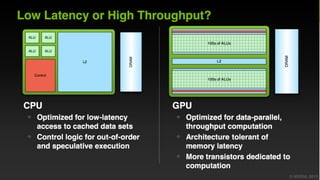

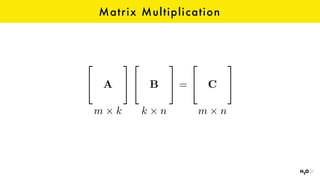

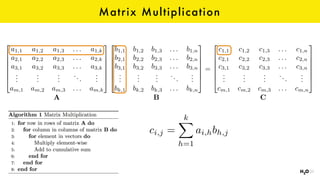

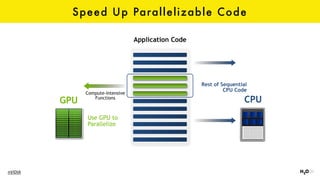

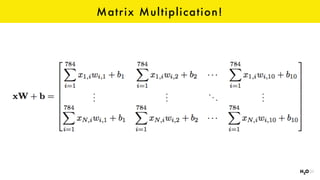

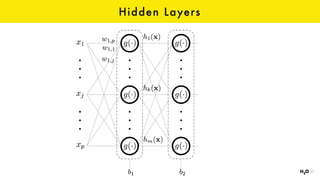

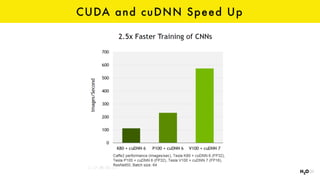

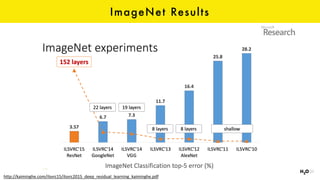

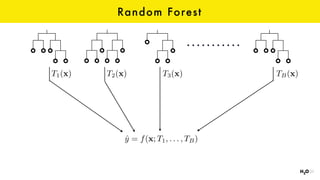

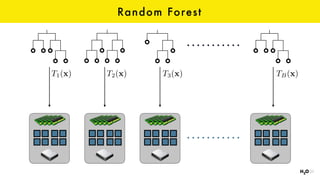

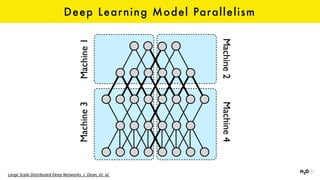

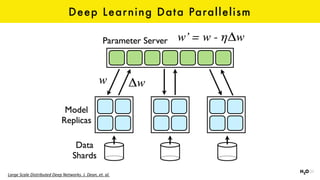

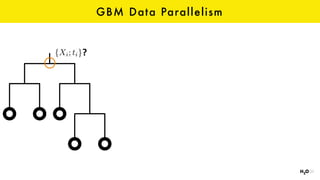

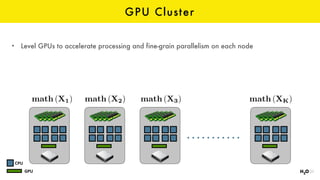

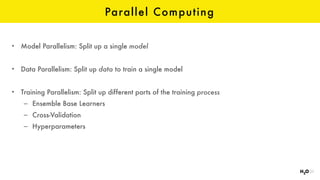

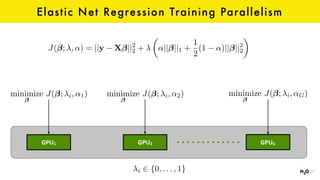

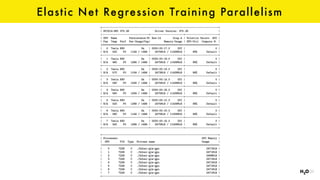

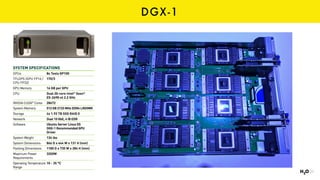

1. The document discusses GPUs and their advantages for parallel computing and for machine learning workloads such as deep learning. A GPU contains many processors that run in parallel, which accelerates matrix multiplication and the other computations that machine learning algorithms rely on.

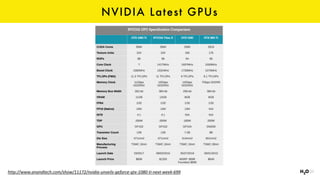

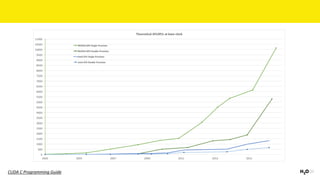

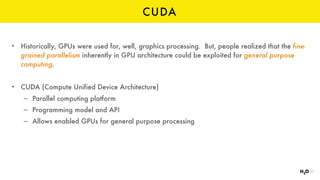

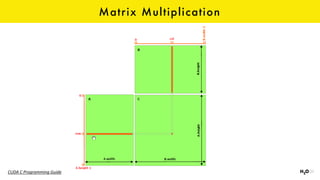

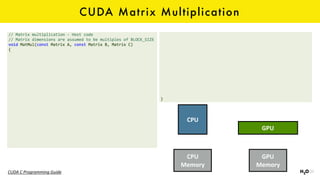

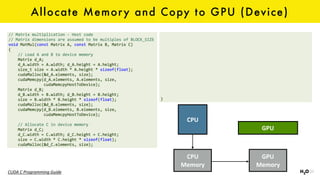

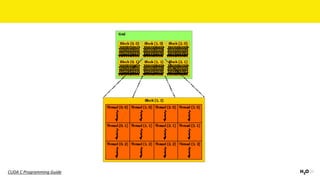

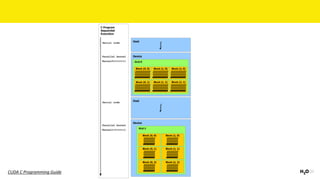

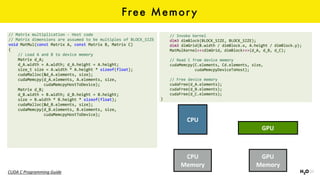

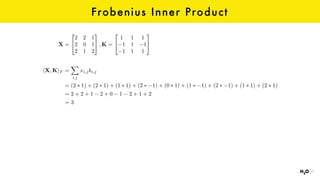

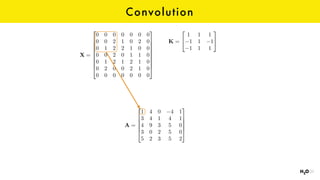

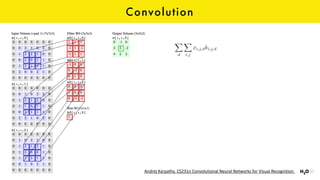

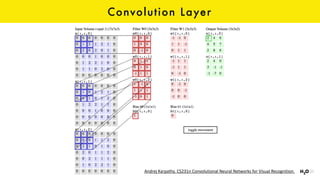

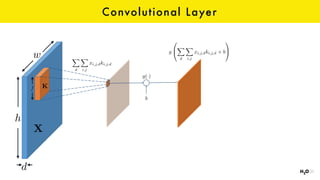

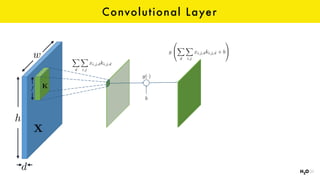

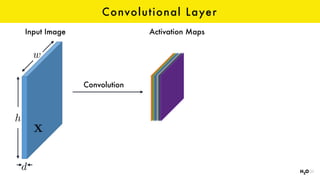

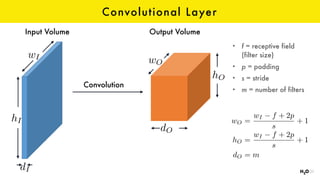

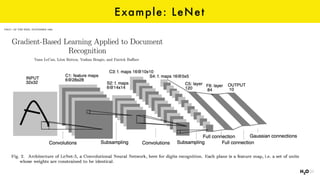

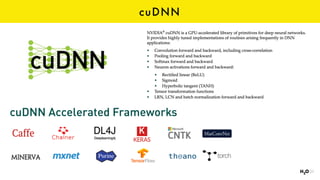

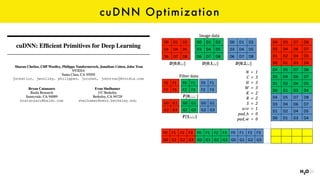

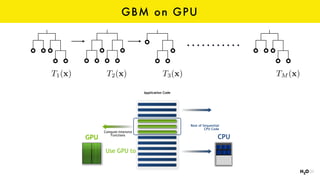

2. It introduces CUDA, a parallel computing model that allows GPUs to be programmed for general-purpose processing. Examples show how matrix multiplication and convolutional neural network operations can be parallelized on GPUs.

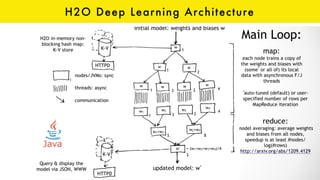

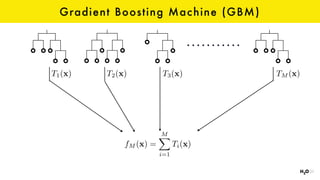

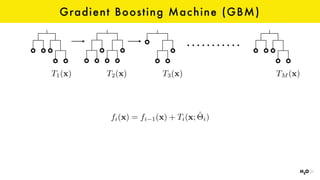

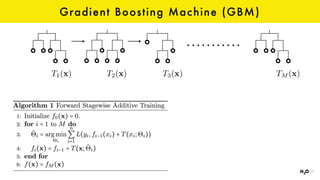

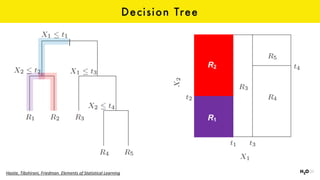

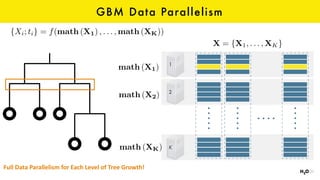

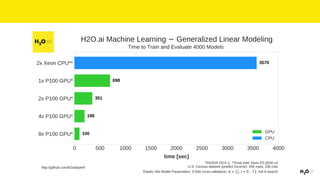

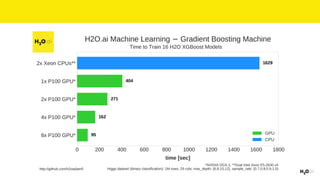

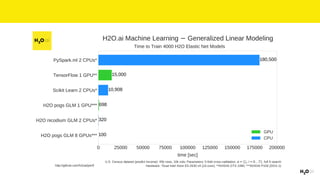

3. H2O is presented as a machine learning platform that supports GPU acceleration for algorithms such as gradient boosted machines, enabling faster training on large datasets. Instructions are provided for getting started with CUDA and cuDNN and for using GPUs for machine learning.
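
The parallelization of matrix multiplication mentioned in point 2 can be illustrated with a small sketch. It is plain Python that imitates, on the CPU, the way a CUDA kernel assigns one output element to each thread; it is not code from the slides and it does not run on a GPU. The names `matmul_kernel` and `launch`, and the 2x2 block size, are assumptions chosen for illustration.

```python
def matmul_kernel(a, b, c, n, m, k, block_idx, thread_idx, block_dim):
    """Work done by one simulated thread: compute a single element of c."""
    # Same index arithmetic a CUDA kernel would use:
    # global index = block index * block size + thread index within the block.
    row = block_idx[1] * block_dim[1] + thread_idx[1]
    col = block_idx[0] * block_dim[0] + thread_idx[0]
    # Threads that fall outside the matrix do nothing (bounds guard).
    if row < n and col < k:
        acc = 0.0
        for i in range(m):
            acc += a[row][i] * b[i][col]
        c[row][col] = acc


def launch(a, b, block_dim=(2, 2)):
    """Simulate a kernel launch for c = a @ b (hypothetical helper).

    On a GPU the threads would run concurrently; here they are
    visited one after another, which gives the same result because
    no thread reads what another thread writes.
    """
    n, m, k = len(a), len(b), len(b[0])
    c = [[0.0] * k for _ in range(n)]
    # Round up so the grid of blocks covers the whole output matrix.
    grid_x = (k + block_dim[0] - 1) // block_dim[0]
    grid_y = (n + block_dim[1] - 1) // block_dim[1]
    for by in range(grid_y):
        for bx in range(grid_x):
            for ty in range(block_dim[1]):
                for tx in range(block_dim[0]):
                    matmul_kernel(a, b, c, n, m, k,
                                  (bx, by), (tx, ty), block_dim)
    return c


if __name__ == "__main__":
    a = [[1, 2, 3], [4, 5, 6]]
    b = [[7, 8], [9, 10], [11, 12]]
    print(launch(a, b))
```

Because every output element depends only on one row of `a` and one column of `b`, the simulated threads are independent of each other, and that independence is what lets a GPU compute them at the same time.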