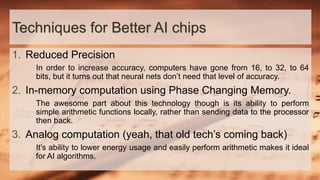

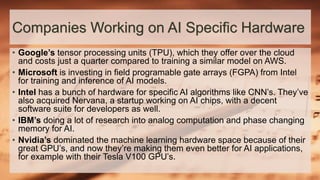

The document discusses the rapid growth of artificial intelligence (AI) and the associated hardware challenges, particularly in relation to deep neural networks (DNNs) that require significant processing power and memory. It emphasizes the importance of selecting appropriate hardware, such as CPUs or GPUs, based on specific AI goals and highlights various technological advancements and companies involved in AI hardware development. Key techniques for improving AI chips include reduced precision, in-memory computation, and analog computation.
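Reduced precision makes a DNN's memory demands smaller. This sketch computes weight storage for a hypothetical 100-million-parameter model at 32-bit, 16-bit and 8-bit precision. It also applies symmetric per-tensor int8 quantization. That is one common reduced-precision scheme, but the source does not say which scheme the chips use. The model size and all function names are illustrative assumptions.

```python
# Minimal sketch of reduced precision, assuming symmetric per-tensor int8
# quantization (one common scheme; not necessarily what any given chip uses).

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_mb(num_params: int, dtype: str) -> float:
    """Storage for num_params weights at the given precision, in MB (10^6 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e6


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer code and clip to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the int8 codes."""
    return [v * scale for v in q]


if __name__ == "__main__":
    n = 100_000_000  # hypothetical 100M-parameter DNN
    for dtype in ("fp32", "fp16", "int8"):
        print(f"{dtype}: {weight_memory_mb(n, dtype):.0f} MB")

    w = [0.5, -1.27, 0.01, 1.0]  # illustrative weights
    q, s = quantize_int8(w)
    print(q, [round(a, 3) for a in dequantize_int8(q, s)])
```

Running it prints 400, 200 and 100 MB for the three precisions, so each halving of the bit width halves weight storage. Because the largest weight maps exactly to 127, nothing is clipped. Each dequantized value therefore differs from its original by at most about half the scale factor.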

![GPUs

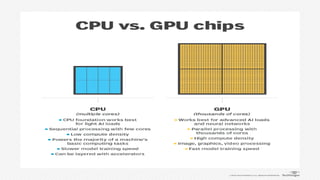

• If a company knows its AI strategy includes advanced neural networks

and AI algorithms, choosing a CPU chip processor would require

numerous accelerators to match the multicore, fast processing speed

of a GPU.

• The current industry standard is to build your AI system using GPUs.

GPUs are optimized to render graphics and images but have the

speed and computational power to support AI, machine learning and

neural network development. Nvidia, Intel and Arm are some of the

primary GPU vendors.

• GPUs are very effective for training. If you have enough deep learning

[models that you're training], use an architecture that [is] designed for

that: GPUs.](https://image.slidesharecdn.com/aihardware-191207183817/85/AI-Hardware-7-320.jpg)
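This pure-Python sketch makes the GPU's advantage for neural network training concrete. A fully connected layer's forward pass is a matrix multiply, and every output element is an independent dot product. The sketch splits the rows of the product into chunks that share no intermediate results. That is the kind of data parallelism a GPU's many cores exploit. The function names and layer sizes are hypothetical. The code runs sequentially on a CPU and only illustrates the decomposition, not actual GPU execution.

```python
# Sketch: a dense layer's forward pass is a matrix multiply whose output
# elements are independent dot products. Names and sizes are hypothetical.

Matrix = list[list[float]]


def matmul_rows(a: Matrix, b: Matrix, rows: range) -> Matrix:
    """Compute only the given rows of a @ b (one worker's share of the work)."""
    n_inner, n_cols = len(b), len(b[0])
    return [
        [sum(a[i][k] * b[k][j] for k in range(n_inner)) for j in range(n_cols)]
        for i in rows
    ]


def split_rows(n_rows: int, n_workers: int) -> list[range]:
    """Divide row indices into contiguous chunks, one per worker."""
    step = max(1, -(-n_rows // n_workers))  # ceiling division, at least 1
    return [range(s, min(s + step, n_rows)) for s in range(0, n_rows, step)]


def matmul_partitioned(a: Matrix, b: Matrix, n_workers: int) -> Matrix:
    """Compute a @ b chunk by chunk. The chunks share no intermediate results,
    so on parallel hardware each could run on a separate core."""
    out: Matrix = []
    for rows in split_rows(len(a), n_workers):
        out.extend(matmul_rows(a, b, rows))
    return out


def dense_layer_macs(batch: int, d_in: int, d_out: int) -> int:
    """Multiply-accumulate operations for one forward pass of a dense layer."""
    return batch * d_in * d_out


if __name__ == "__main__":
    x = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # batch of 3 inputs, 2 features
    w = [[0.5, -1.0], [0.25, 2.0]]  # 2-in, 2-out weight matrix
    # Splitting the work across "workers" gives the same result as one pass.
    assert matmul_partitioned(x, w, n_workers=2) == matmul_rows(x, w, range(len(x)))
    print(f"{dense_layer_macs(batch=64, d_in=1024, d_out=1024):,} MACs")
```

Consider a batch of 64 through a hypothetical 1024-by-1024 layer. One forward pass costs 64 × 1024 × 1024 = 67,108,864 multiply-accumulates, spread over 65,536 independent outputs. Training repeats this work for every layer and every batch, and adds a backward pass on top.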