This document introduces adaptive signal processing, defined as the design of adaptive systems for signal-processing applications. The course covers adaptive algorithm families, including Newton's method, steepest descent, LMS, RLS, and Kalman filtering, as well as applications in communications and blind equalization. Evaluation is based on assignments, a midterm, and a final exam.

![Adaptive Signal Processing

• Definition: Adaptive signal processing is the design of adaptive systems for signal-processing applications.

[http://encyclopedia2.thefreedictionary.com/adaptive+signal+processing]

CESdSP

EECS0712 Adaptive Signal Processing

http://embedsigproc.wordpress.com/eecs0712

Assoc. Prof. Dr. P.Yuvapoositanon

ASP1-7](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-7-320.jpg)

![Input

• From the input samples

$$x(3),\ x(2),\ x(1),\ x(0),\ x(-1),\ x(-2),\ \ldots$$

• We construct the vector $\mathbf{x}$ whose first element is the most recent sample:

$$\mathbf{x} = \begin{bmatrix} x(3) & x(2) & x(1) & x(0) & \cdots \end{bmatrix}^T$$

ASP1-20](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-20-320.jpg)

![Regression input signal vector

• If the current time is n, we have the “regression input signal vector”

$$\mathbf{x} = \begin{bmatrix} x(n) & x(n-1) & x(n-2) & x(n-3) & \cdots \end{bmatrix}^T$$

ASP1-22](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-22-320.jpg)
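As a minimal sketch of how a regression input signal vector could be assembled in code: the function name `regression_vector`, the dictionary `samples`, its values, and the choice to treat missing time indices as zero are all illustrative assumptions, not part of the slides.

```python
def regression_vector(x, n, length):
    """Return [x(n), x(n-1), ..., x(n-length+1)], most recent sample first.

    `x` maps an integer time index to a sample value. Indices missing from
    `x` are treated as 0.0 (an assumption made for this sketch).
    """
    return [x.get(n - k, 0.0) for k in range(length)]


# Hypothetical sample values for x(-2) ... x(3).
samples = {-2: 0.5, -1: -1.0, 0: 2.0, 1: 4.0, 2: 3.0, 3: 1.0}

x3 = regression_vector(samples, n=3, length=4)
print(x3)  # [1.0, 3.0, 4.0, 2.0]
```

Storing the samples in a dictionary keyed by time index keeps negative indices such as x(-1) unambiguous, which a plain Python list would not.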

![$$\mathbf{w} = \begin{bmatrix} w_0 & w_1 \end{bmatrix}^T = \begin{bmatrix} w_0 \\ w_1 \end{bmatrix}$$

$$\hat{\mathbf{w}} = \begin{bmatrix} w_0^I & w_1^I \end{bmatrix}^T = \begin{bmatrix} w_0^I \\ w_1^I \end{bmatrix}$$

ASP1-23](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-23-320.jpg)

![• We can use a vector-matrix multiplication

• For example, for n=3 we construct y(3) as

$$y(3) = w_0 x(3) + w_1 x(2) = \begin{bmatrix} w_0 & w_1 \end{bmatrix} \begin{bmatrix} x(3) \\ x(2) \end{bmatrix} = \mathbf{w}^T \mathbf{x}(3)$$

• For example, for n=1 we construct y(1) as

$$y(1) = w_0 x(1) + w_1 x(0) = \begin{bmatrix} w_0 & w_1 \end{bmatrix} \begin{bmatrix} x(1) \\ x(0) \end{bmatrix} = \mathbf{w}^T \mathbf{x}(1)$$

ASP1-26](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-26-320.jpg)
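The two-tap products for y(3) and y(1) can be checked numerically with a short sketch. The function name `filter_output`, the weight values, and the sample values are hypothetical choices made for illustration; missing time indices are assumed to be zero.

```python
def filter_output(w, x, n):
    """Compute y(n) = w^T x(n) for tap weights w = [w_0, w_1, ...].

    `x` maps an integer time index to a sample value; indices missing
    from `x` are treated as 0.0 (an assumption made for this sketch).
    """
    regressor = [x.get(n - k, 0.0) for k in range(len(w))]
    return sum(wk * xk for wk, xk in zip(w, regressor))


# Hypothetical tap weights and samples.
w = [0.5, 0.25]
samples = {-1: -1.0, 0: 2.0, 1: 4.0, 2: 3.0, 3: 1.0}

y3 = filter_output(w, samples, 3)  # 0.5 * x(3) + 0.25 * x(2) = 1.25
y1 = filter_output(w, samples, 1)  # 0.5 * x(1) + 0.25 * x(0) = 2.5
print(y3, y1)
```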

![$$y(3) = w_0 x(3) + w_1 x(2) = \begin{bmatrix} w_0 & w_1 \end{bmatrix} \begin{bmatrix} x(3) \\ x(2) \end{bmatrix} = \mathbf{w}^T \mathbf{x}(3)$$

$$y(2) = w_0 x(2) + w_1 x(1) = \begin{bmatrix} w_0 & w_1 \end{bmatrix} \begin{bmatrix} x(2) \\ x(1) \end{bmatrix} = \mathbf{w}^T \mathbf{x}(2)$$

$$y(1) = w_0 x(1) + w_1 x(0) = \begin{bmatrix} w_0 & w_1 \end{bmatrix} \begin{bmatrix} x(1) \\ x(0) \end{bmatrix} = \mathbf{w}^T \mathbf{x}(1)$$

$$y(0) = w_0 x(0) + w_1 x(-1) = \begin{bmatrix} w_0 & w_1 \end{bmatrix} \begin{bmatrix} x(0) \\ x(-1) \end{bmatrix} = \mathbf{w}^T \mathbf{x}(0)$$

ASP1-27](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-27-320.jpg)
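The four scalar outputs y(3), y(2), y(1), y(0) can also be stacked into a single matrix-vector product. The symbols $\mathbf{y}$ and $\mathbf{X}$ are notation assumed here for illustration and do not appear on the slides; each row of $\mathbf{X}$ is one transposed regression vector.

$$\mathbf{y} = \begin{bmatrix} y(3) \\ y(2) \\ y(1) \\ y(0) \end{bmatrix} = \begin{bmatrix} x(3) & x(2) \\ x(2) & x(1) \\ x(1) & x(0) \\ x(0) & x(-1) \end{bmatrix} \begin{bmatrix} w_0 \\ w_1 \end{bmatrix} = \mathbf{X}\,\mathbf{w}$$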

![Cumulative Distribution Function

$$P_x(x(n)) = \Pr[X \le x(n)]$$

ASP1-43](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-43-320.jpg)
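When only observed values are available, one common way to approximate a cumulative distribution function is the empirical CDF: the fraction of observations at or below a threshold. The sketch below assumes this estimator; the function name `empirical_cdf` and the observation values are hypothetical.

```python
def empirical_cdf(observations, x):
    """Estimate Pr[X <= x] as the fraction of observations that are <= x."""
    if not observations:
        raise ValueError("need at least one observation")
    return sum(1 for value in observations if value <= x) / len(observations)


# Hypothetical observations of the random variable X.
observations = [0.2, -1.0, 0.7, 1.5, 0.0, -0.3, 0.9, 2.1]

print(empirical_cdf(observations, 0.0))  # 0.375 (3 of 8 values are <= 0.0)
```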

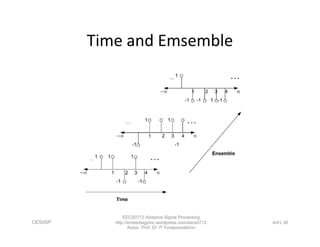

![Ensemble Average

$$\text{Ensemble average of } x(1) = x_1(1)\Pr[x_1(1)] + x_2(1)\Pr[x_2(1)] + \cdots + x_N(1)\Pr[x_N(1)]$$

(Figure labels: 1 ensemble, i ensembles)

ASP1-50](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-50-320.jpg)
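The ensemble average at a fixed time index is a probability-weighted sum over the realizations. A minimal sketch follows; the function name `ensemble_average`, the three realization values at time 1, and their probabilities are hypothetical.

```python
def ensemble_average(values, probabilities):
    """Return sum_i x_i(n) * Pr[x_i(n)] over realizations at a fixed time n."""
    if len(values) != len(probabilities):
        raise ValueError("values and probabilities must have the same length")
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(v * p for v, p in zip(values, probabilities))


# Hypothetical values x_1(1), x_2(1), x_3(1) and their probabilities.
values_at_1 = [1.0, 2.0, 4.0]
probabilities = [0.5, 0.25, 0.25]

print(ensemble_average(values_at_1, probabilities))  # 2.0
```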

![Covariance

$$c_{xx}(n, m) = E\{[x(n) - \mu(n)][x(m) - \mu(m)]\}$$

ASP1-59](https://image.slidesharecdn.com/eecs07122of25581-160510025725/85/Introduction-to-adaptive-signal-processing-59-320.jpg)
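In practice the expectation in the covariance is often approximated by averaging over a finite set of realizations. The sketch below assumes real-valued, equally likely realizations and divides by their count; the function name `sample_covariance` and the data are hypothetical.

```python
def sample_covariance(realizations, n, m):
    """Estimate c_xx(n, m) by averaging over equally likely realizations.

    Each realization is a list indexed by time. The means at times n and m
    are estimated from the same realizations.
    """
    count = len(realizations)
    if count == 0:
        raise ValueError("need at least one realization")
    mean_n = sum(r[n] for r in realizations) / count
    mean_m = sum(r[m] for r in realizations) / count
    return sum((r[n] - mean_n) * (r[m] - mean_m) for r in realizations) / count


# Four hypothetical realizations observed at times 0, 1, 2.
realizations = [
    [1.0, 2.0, 3.0],
    [3.0, 2.0, 1.0],
    [2.0, 4.0, 2.0],
    [2.0, 0.0, 2.0],
]

print(sample_covariance(realizations, 0, 0))  # 0.5
print(sample_covariance(realizations, 0, 2))  # -0.5
```

With n equal to m the estimate reduces to the variance at that time index.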