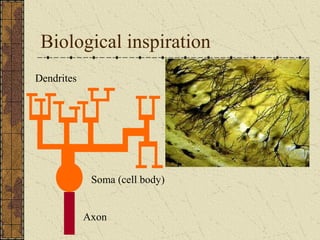

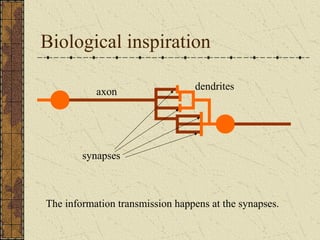

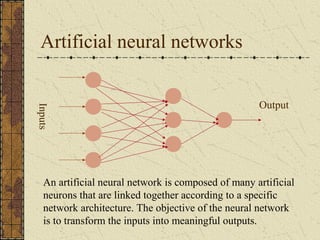

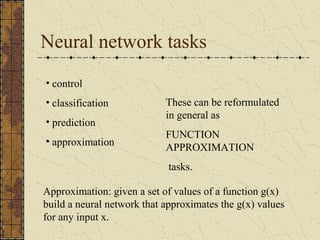

- Artificial neural networks are inspired by biological neural networks and mimic their learning mechanism by adjusting synaptic strengths (weights) through an optimization process.

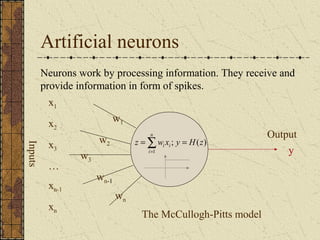

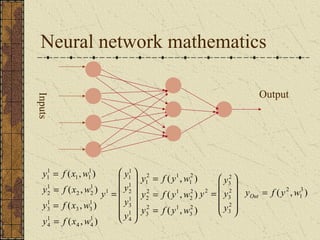

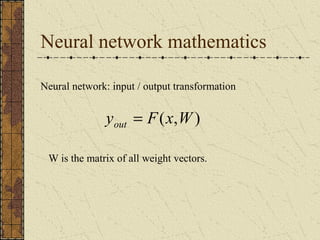

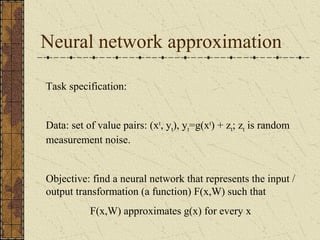

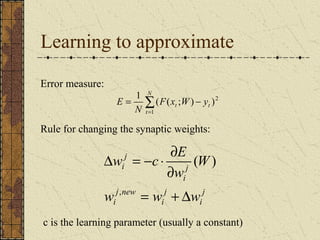

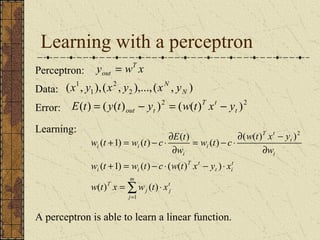

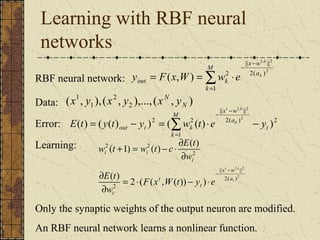

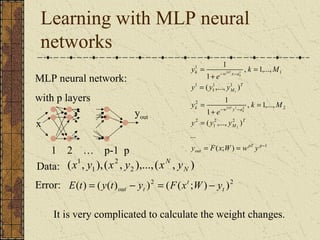

- Learning in a neural network can be formulated as a function approximation task: the network approximates a target function by optimizing its synaptic weights to minimize an error measure.
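
One way to write this formulation down is sketched below. The notation is an assumption introduced for illustration, not taken from the slides: $f(\mathbf{x};\mathbf{w})$ is the network output for input $\mathbf{x}$ with weights $\mathbf{w}$, $(\mathbf{x}_i, y_i)$ are $N$ training samples of the target function, and the squared error is only one possible choice of error measure.

$$
E(\mathbf{w}) = \frac{1}{2N}\sum_{i=1}^{N}\bigl(f(\mathbf{x}_i;\mathbf{w}) - y_i\bigr)^2,
\qquad
\mathbf{w}^{*} = \arg\min_{\mathbf{w}} E(\mathbf{w})
$$

If gradient descent is chosen as the optimizer (again an assumption; other methods fit the same formulation), the weights are updated iteratively with a step size $\eta > 0$:

$$
\mathbf{w} \leftarrow \mathbf{w} - \eta\,\nabla_{\mathbf{w}} E(\mathbf{w})
$$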

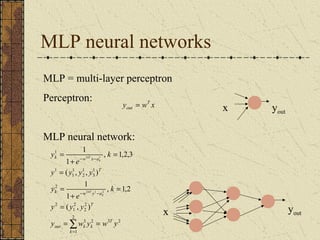

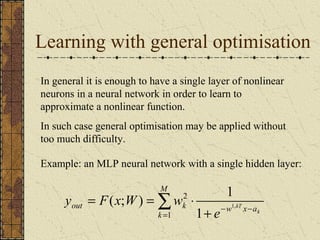

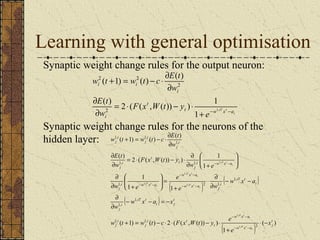

- A neural network with a single hidden layer can learn nonlinear function approximations when a general optimization method is used to update its synaptic weights.
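
A minimal sketch of this idea, assuming NumPy, a tanh hidden layer, a linear output unit, a halved mean squared error, and plain full-batch gradient descent as the optimizer. None of these choices come from the slides, and the function names `train_single_hidden_layer` and `predict` are hypothetical. XOR is used as the target because no purely linear model can represent it.

```python
import numpy as np


def train_single_hidden_layer(X, y, n_hidden=8, lr=0.1, n_steps=5000, seed=0):
    """Fit a one-hidden-layer network with full-batch gradient descent.

    Illustrative assumptions: tanh hidden units, linear output,
    loss = 0.5 * mean squared error.
    """
    rng = np.random.default_rng(seed)
    n_samples, n_in = X.shape

    # Synaptic weights and biases (small random initial values).
    W1 = rng.normal(0.0, 0.5, size=(n_in, n_hidden))
    b1 = np.zeros(n_hidden)
    W2 = rng.normal(0.0, 0.5, size=(n_hidden, 1))
    b2 = np.zeros(1)

    losses = []
    for _ in range(n_steps):
        # Forward pass.
        h = np.tanh(X @ W1 + b1)       # hidden activations, shape (n_samples, n_hidden)
        pred = h @ W2 + b2             # network output, shape (n_samples, 1)
        err = pred - y
        losses.append(0.5 * np.mean(err ** 2))

        # Backward pass: gradients of the loss with respect to each parameter.
        grad_pred = err / n_samples
        grad_W2 = h.T @ grad_pred
        grad_b2 = grad_pred.sum(axis=0)
        grad_z = (grad_pred @ W2.T) * (1.0 - h ** 2)  # tanh'(z) = 1 - tanh(z)^2
        grad_W1 = X.T @ grad_z
        grad_b1 = grad_z.sum(axis=0)

        # Gradient descent update of the weights.
        W1 -= lr * grad_W1
        b1 -= lr * grad_b1
        W2 -= lr * grad_W2
        b2 -= lr * grad_b2

    return (W1, b1, W2, b2), losses


def predict(params, X):
    """Evaluate the trained network on inputs X."""
    W1, b1, W2, b2 = params
    return np.tanh(X @ W1 + b1) @ W2 + b2


# XOR: a nonlinear target function defined on four input points.
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
y = np.array([[0.0], [1.0], [1.0], [0.0]])

params, losses = train_single_hidden_layer(X, y)
print("initial loss:", losses[0])
print("final loss:  ", losses[-1])
print("predictions: ", predict(params, X).ravel())
```

The error recorded in `losses` should fall as training proceeds. How closely the predictions match the XOR targets depends on the hidden layer size, step size, number of steps, and random seed chosen here.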