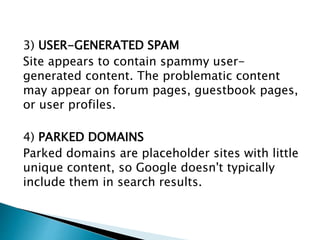

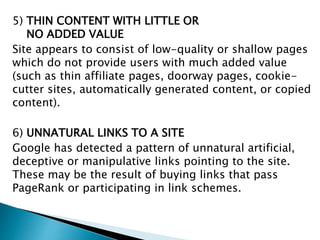

Google uses software robots called spiders to crawl and index the web. The spiders start from popular sites and pages, then follow the links they find to build an index of the words that appear on each page. This index currently contains over 100 million gigabytes of data. When a user submits a search, Google consults the index and weighs over 200 factors to select and rank relevant pages in under a second. Google also works to filter out spam, using both automated techniques and manual review.
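The crawl-then-index idea described above can be illustrated with a minimal Python sketch. It is a toy under stated assumptions, not Google's implementation: the "web" is a hypothetical in-memory dictionary (`TOY_WEB`), the function names `crawl_and_index` and `search` are invented for illustration, and the search step only intersects word matches without any ranking or spam filtering.

```python
from collections import defaultdict, deque
import re

# Hypothetical in-memory "web": URL -> (page text, outgoing links).
# A real spider would fetch pages over HTTP instead.
TOY_WEB = {
    "a.example": ("Spiders crawl the web", ["b.example", "c.example"]),
    "b.example": ("Spiders follow links", ["a.example"]),
    "c.example": ("An index maps words to pages", ["d.example"]),
    "d.example": ("Pages are ranked later", []),
    "e.example": ("Nobody links here", []),  # never reached from the seed
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def crawl_and_index(seeds, web):
    """Breadth-first crawl from the seed URLs, building word -> set of URLs."""
    index = defaultdict(set)
    seen = set(seeds)
    queue = deque(seeds)
    while queue:
        url = queue.popleft()
        if url not in web:
            continue  # dead link: nothing to index
        text, links = web[url]
        for word in tokenize(text):
            index[word].add(url)
        for link in links:
            if link not in seen:  # visit each page at most once
                seen.add(link)
                queue.append(link)
    return index


def search(index, query):
    """Return the URLs that contain every word in the query (no ranking)."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


if __name__ == "__main__":
    index = crawl_and_index(["a.example"], TOY_WEB)
    print(sorted(search(index, "spiders")))        # ['a.example', 'b.example']
    print(sorted(search(index, "spiders links")))  # ['b.example']
```

Because `e.example` has no inbound links, the crawl never reaches it and its words never enter the index, which mirrors how a link-following spider only discovers pages that something else points to.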