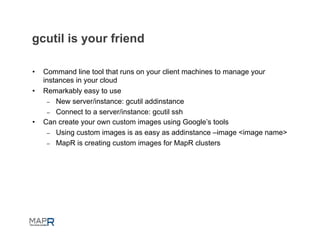

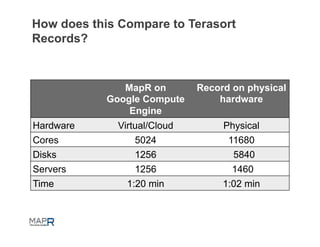

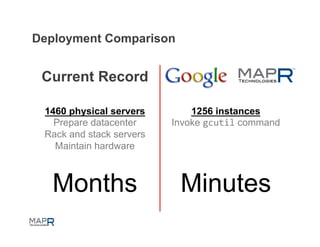

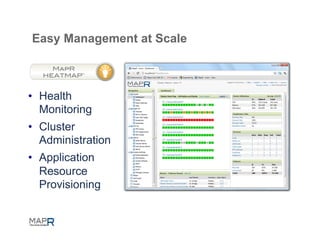

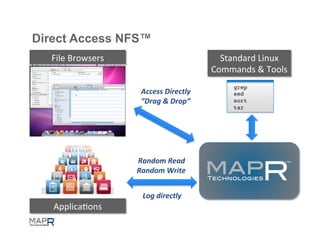

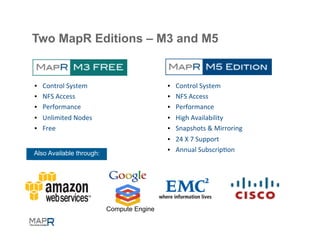

MapR provides a Hadoop distribution that runs on Google Compute Engine (GCE). Clusters are fast and easy to provision, and cost-effective compared to on-premises hardware: a MapR cluster on GCE can be started with a single command and provides a fully functional Hadoop environment within minutes. This makes it practical to create very large clusters on demand and tear them down when they are no longer needed, at low cost. MapR also provides features for high availability, security, and multi-tenancy on GCE.