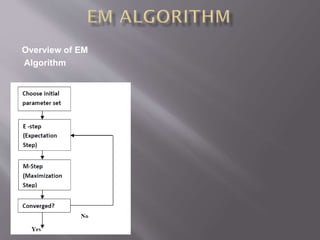

This document discusses Gaussian mixture models (GMMs) and their use in applications such as speaker recognition and language identification. A GMM represents a probability density function as a weighted sum of Gaussian component densities, with non-negative mixture weights that sum to one. Its parameters (mixture weights, means, and covariances) are estimated from training data, either by maximum-likelihood training with the Expectation-Maximization (EM) algorithm or by Maximum A Posteriori (MAP) estimation. GMMs are computationally inexpensive and well suited to text-independent tasks, where there is no strong prior knowledge of the content.
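
The weighted-sum density and a single EM iteration can be sketched in a few lines of NumPy. This is a minimal illustration, not the document's own implementation: it assumes diagonal covariance matrices, and the function names (`gmm_log_components`, `gmm_log_likelihood`, `em_step`) and the `var_floor` parameter are hypothetical choices made for this example.

```python
import numpy as np

def logsumexp(a, axis):
    """Numerically stable log(sum(exp(a))) along one axis."""
    m = np.max(a, axis=axis, keepdims=True)
    return np.squeeze(m, axis=axis) + np.log(np.sum(np.exp(a - m), axis=axis))

def gmm_log_components(X, weights, means, variances):
    """Return log(w_k * N(x_n | mu_k, diag(var_k))) with shape (N, K).

    Assumption: diagonal covariances, stored as a (K, D) array of variances.
    """
    X = np.asarray(X, dtype=float)
    diff = X[:, None, :] - means[None, :, :]                           # (N, K, D)
    log_norm = -0.5 * np.sum(np.log(2.0 * np.pi * variances), axis=1)  # (K,)
    log_exp = -0.5 * np.sum(diff ** 2 / variances[None, :, :], axis=2) # (N, K)
    return np.log(weights)[None, :] + log_norm[None, :] + log_exp

def gmm_log_likelihood(X, weights, means, variances):
    """Per-sample log p(x_n), i.e. the log of the weighted sum of Gaussians."""
    return logsumexp(gmm_log_components(X, weights, means, variances), axis=1)

def em_step(X, weights, means, variances, var_floor=1e-6):
    """One EM iteration: compute responsibilities, then re-estimate parameters."""
    X = np.asarray(X, dtype=float)
    # E-step: posterior probability of each component for each sample.
    log_comp = gmm_log_components(X, weights, means, variances)
    resp = np.exp(log_comp - logsumexp(log_comp, axis=1)[:, None])     # (N, K)
    # M-step: weighted counts, means, and variances.
    n_k = np.maximum(resp.sum(axis=0), np.finfo(float).tiny)           # avoid 0/0
    new_weights = n_k / X.shape[0]
    new_means = (resp.T @ X) / n_k[:, None]
    new_vars = (resp.T @ (X ** 2)) / n_k[:, None] - new_means ** 2
    # Variance floor (an assumption of this sketch) keeps components from collapsing.
    return new_weights, new_means, np.maximum(new_vars, var_floor)
```

Calling `em_step` repeatedly until the sum of `gmm_log_likelihood` stops improving gives a basic maximum-likelihood training loop. MAP estimation would instead combine these weighted statistics with the parameters of a prior model and is not shown here.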