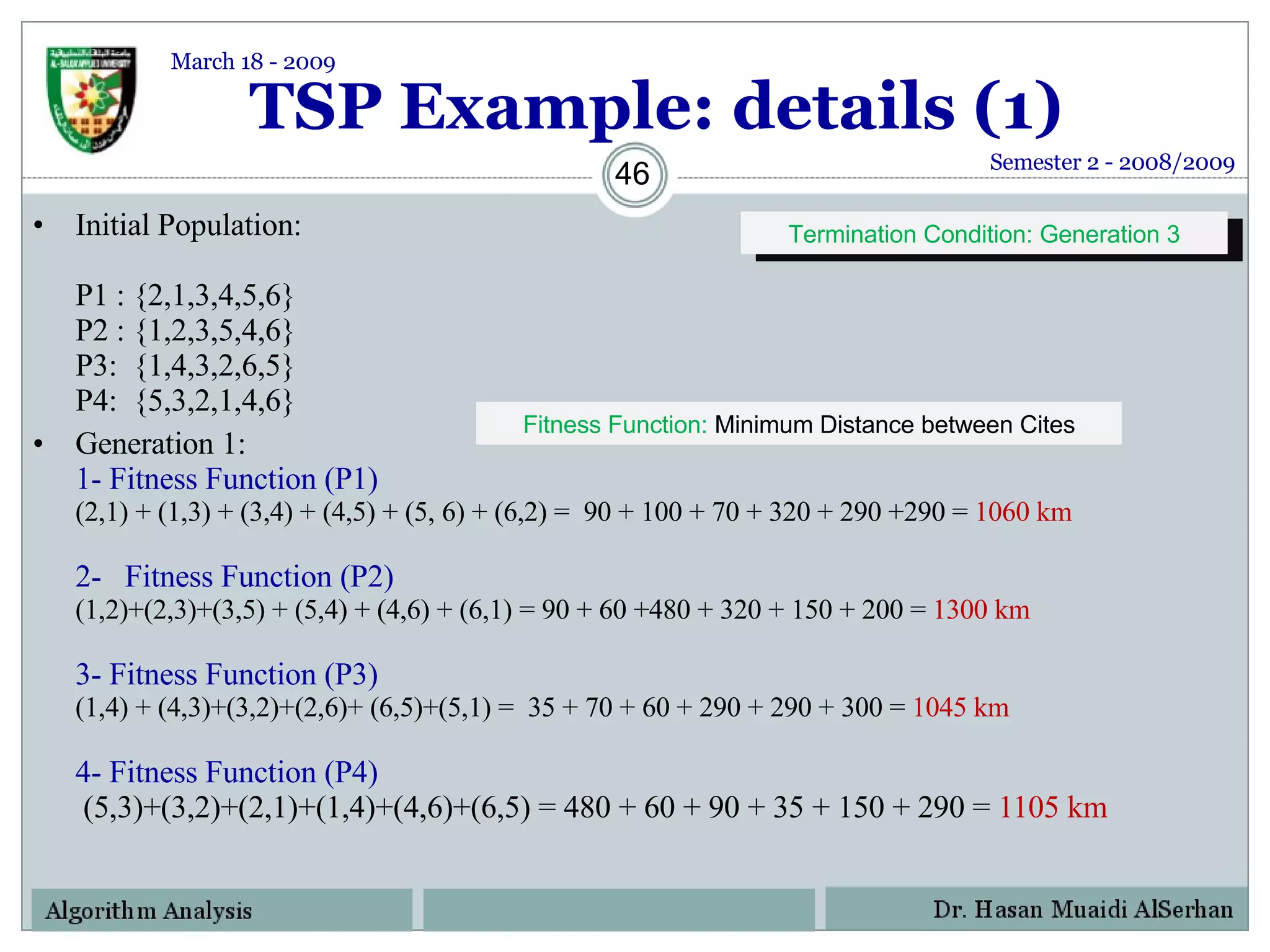

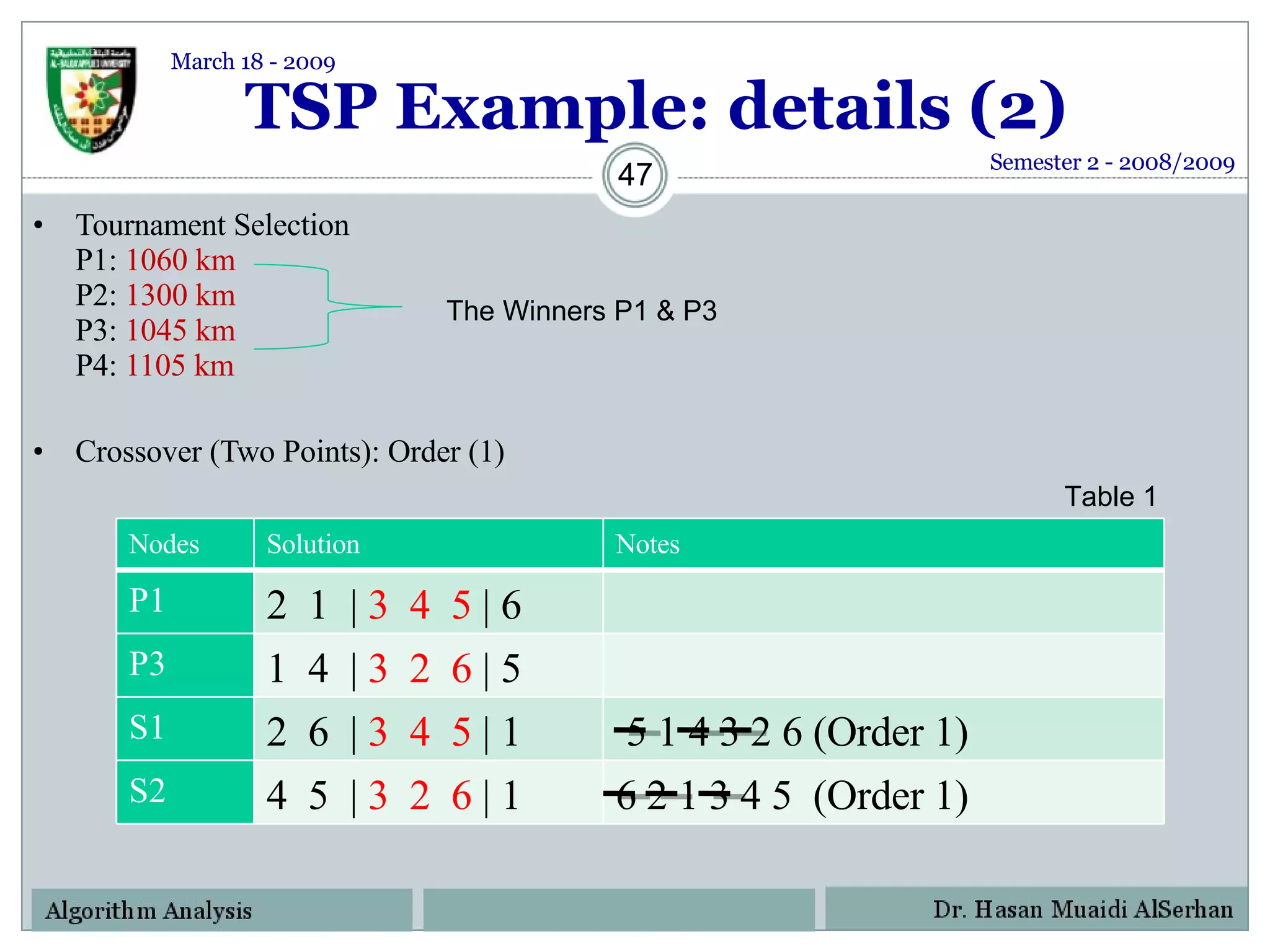

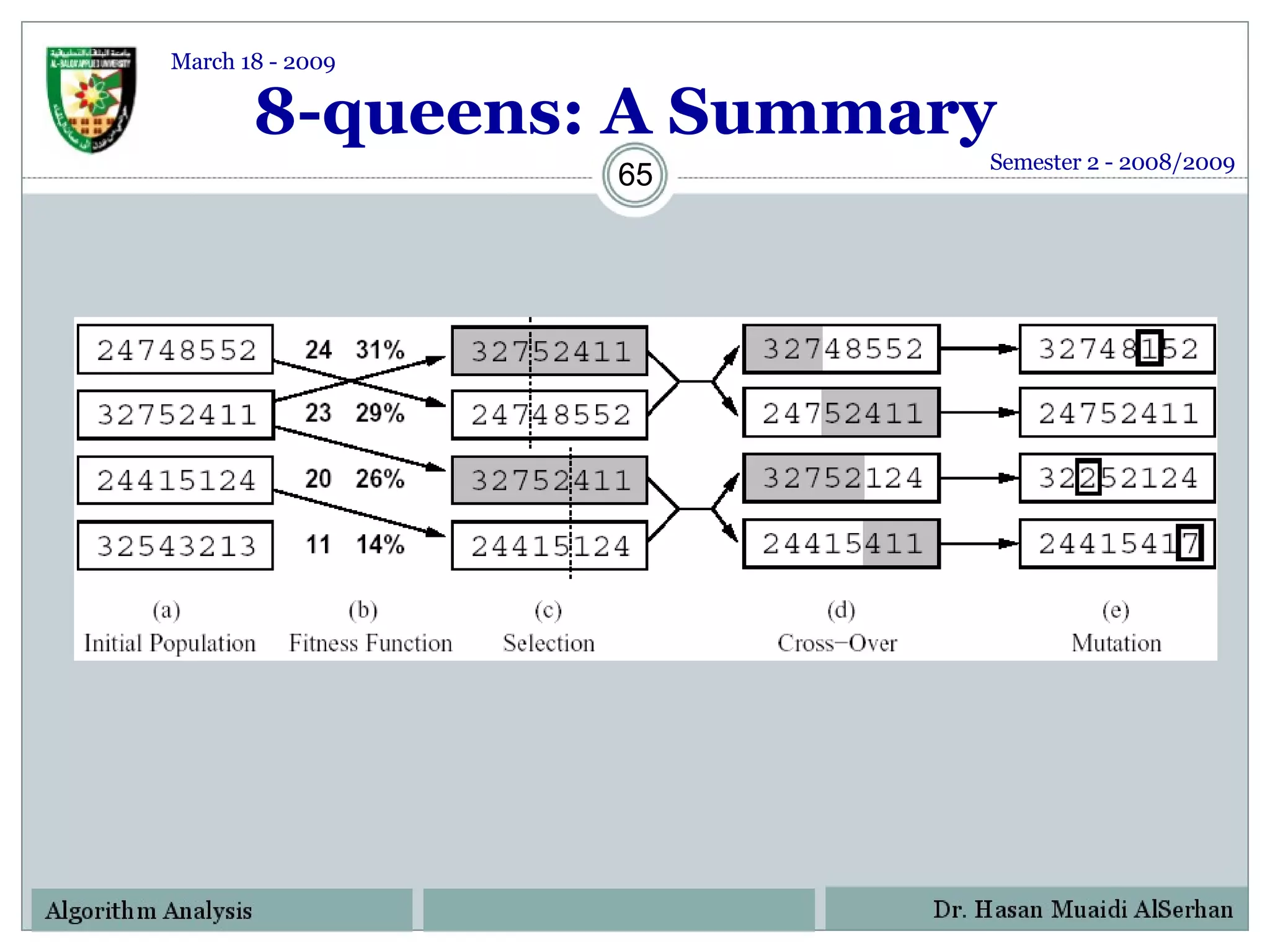

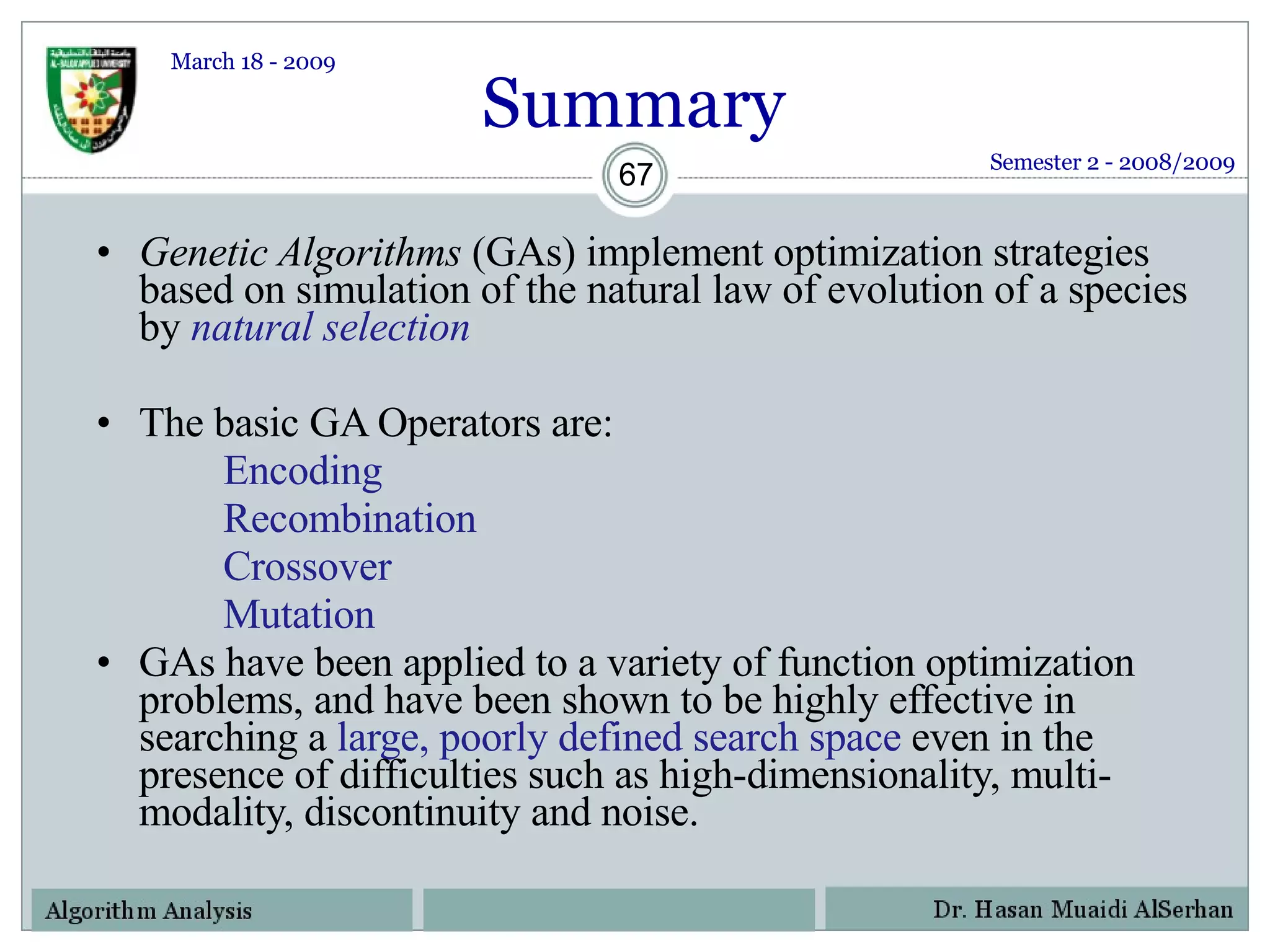

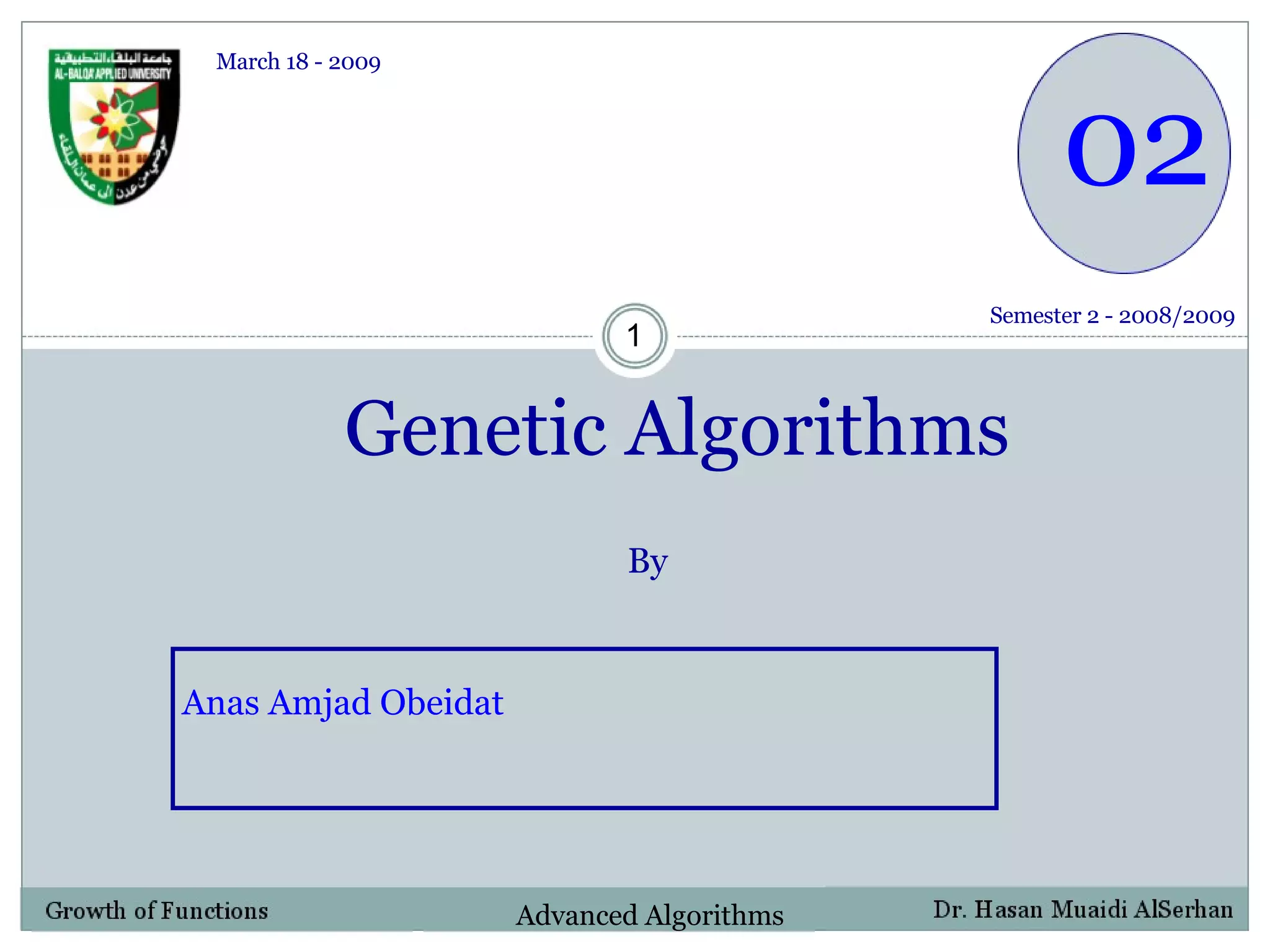

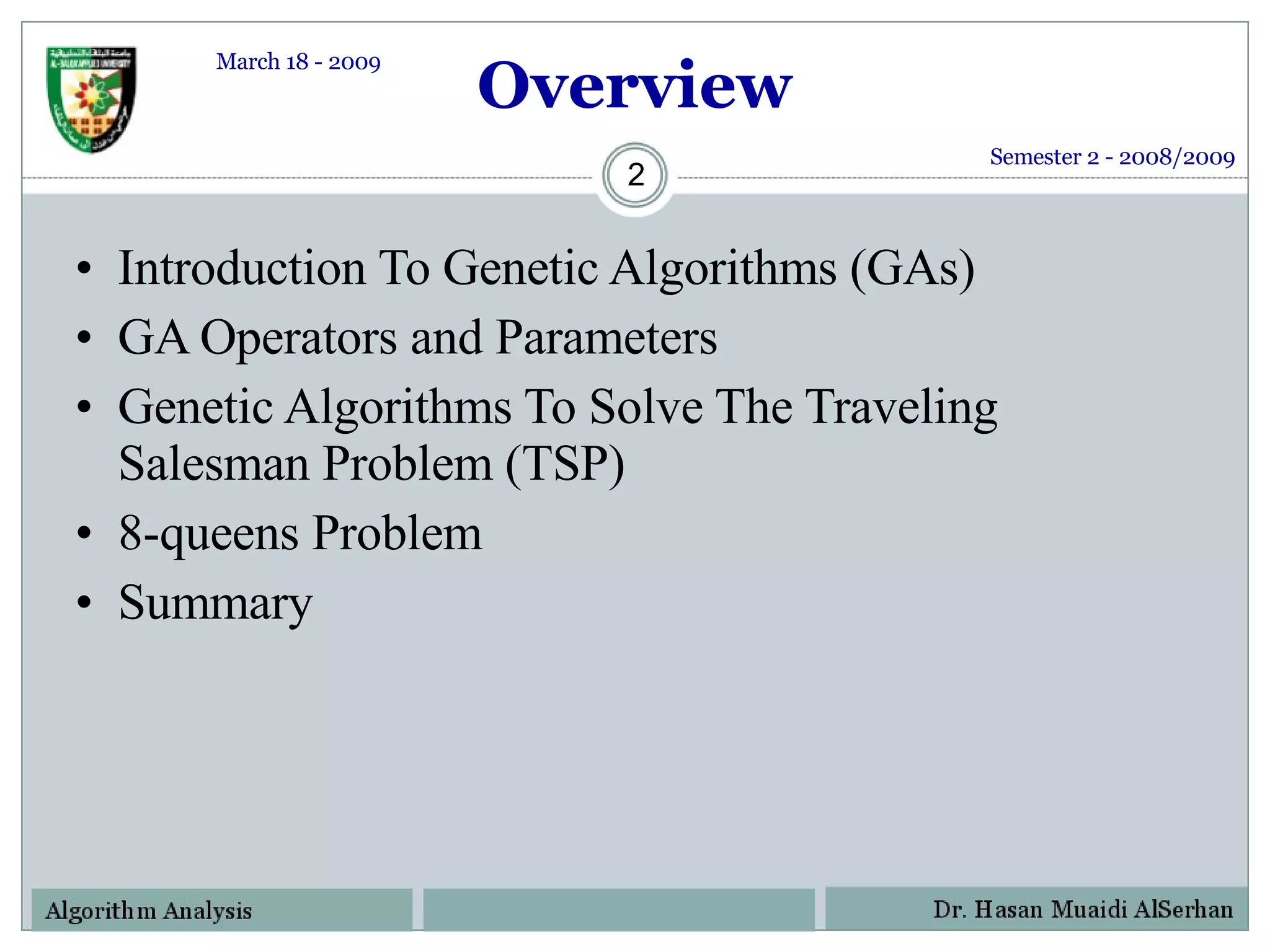

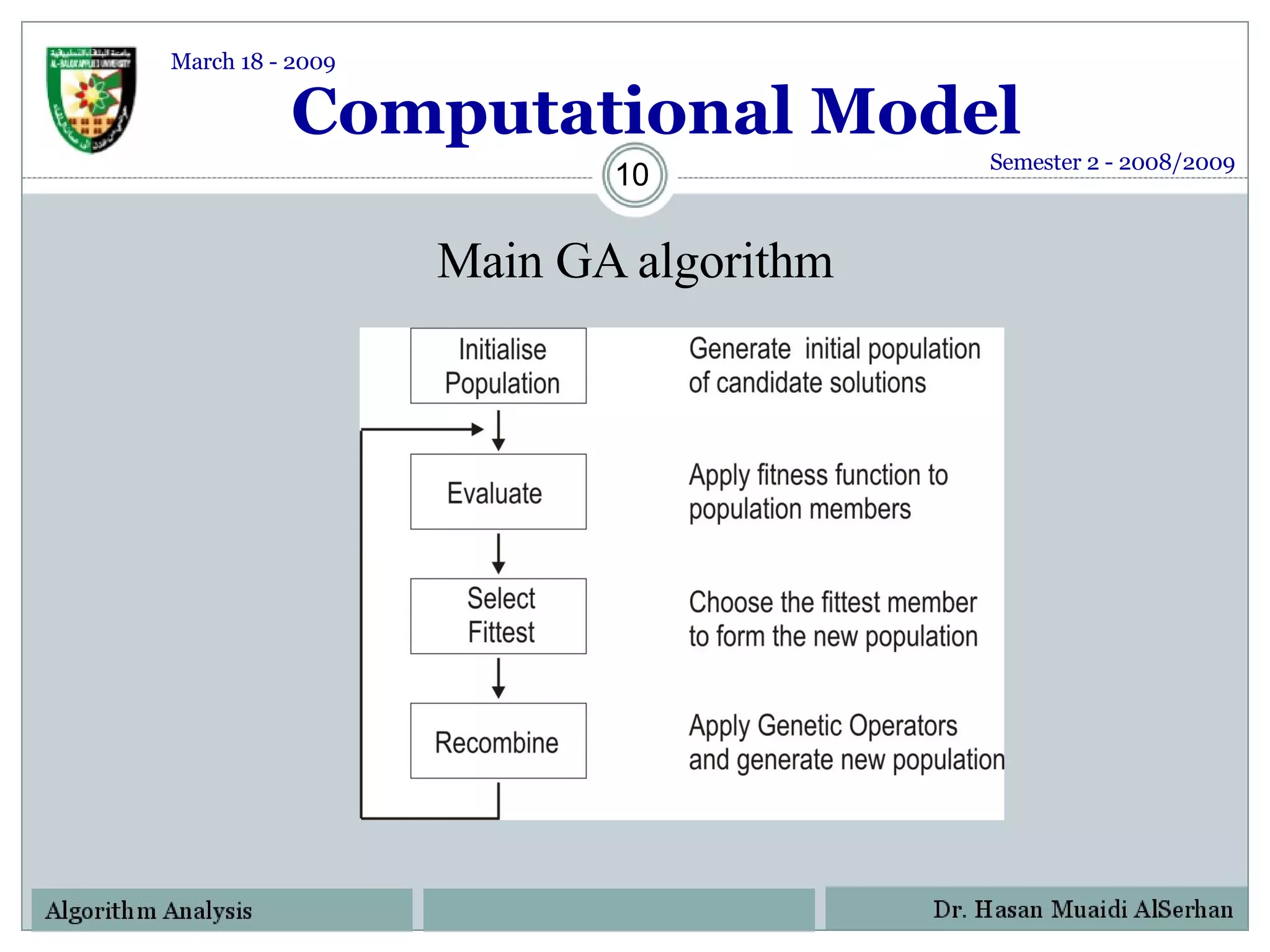

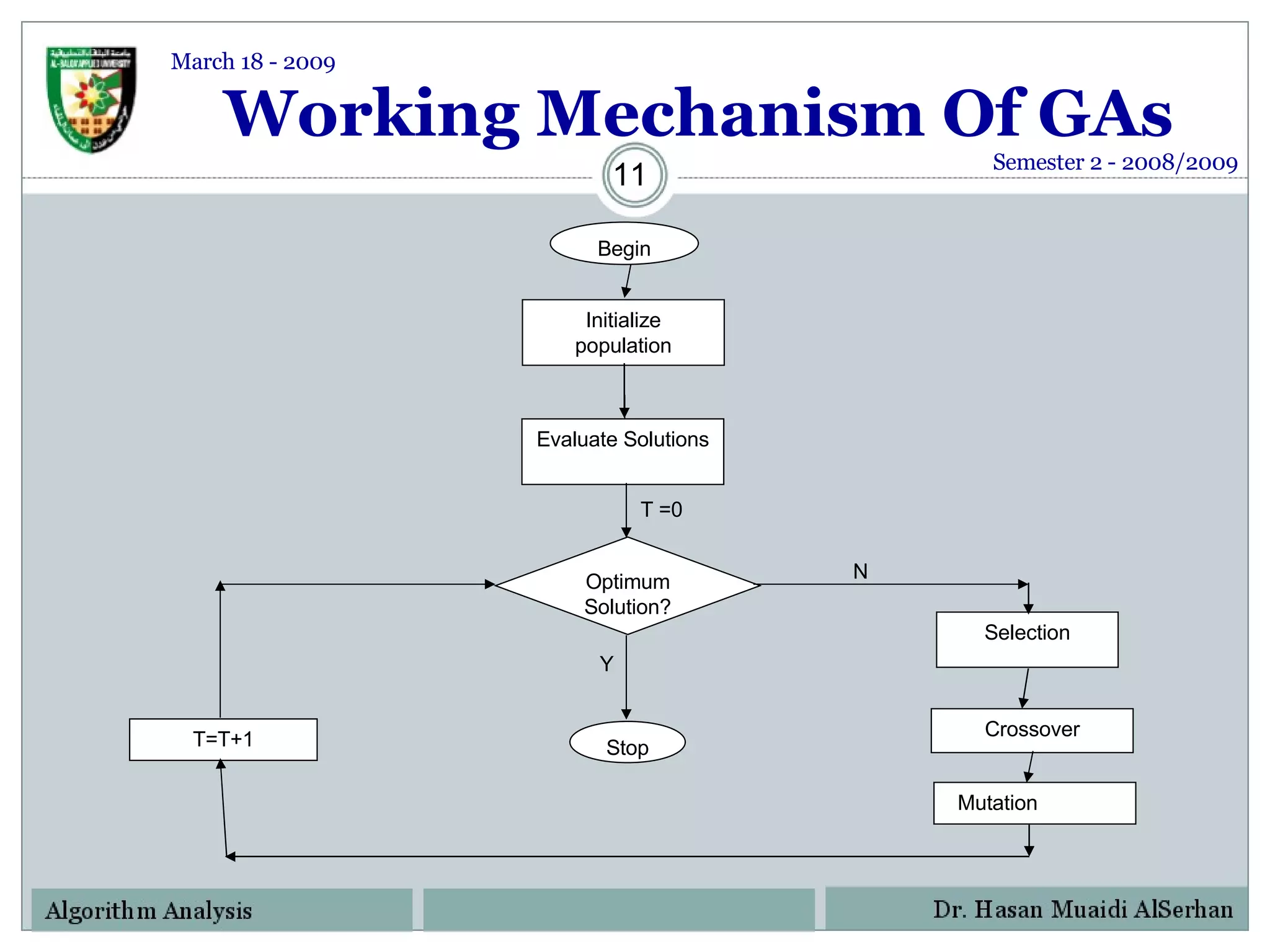

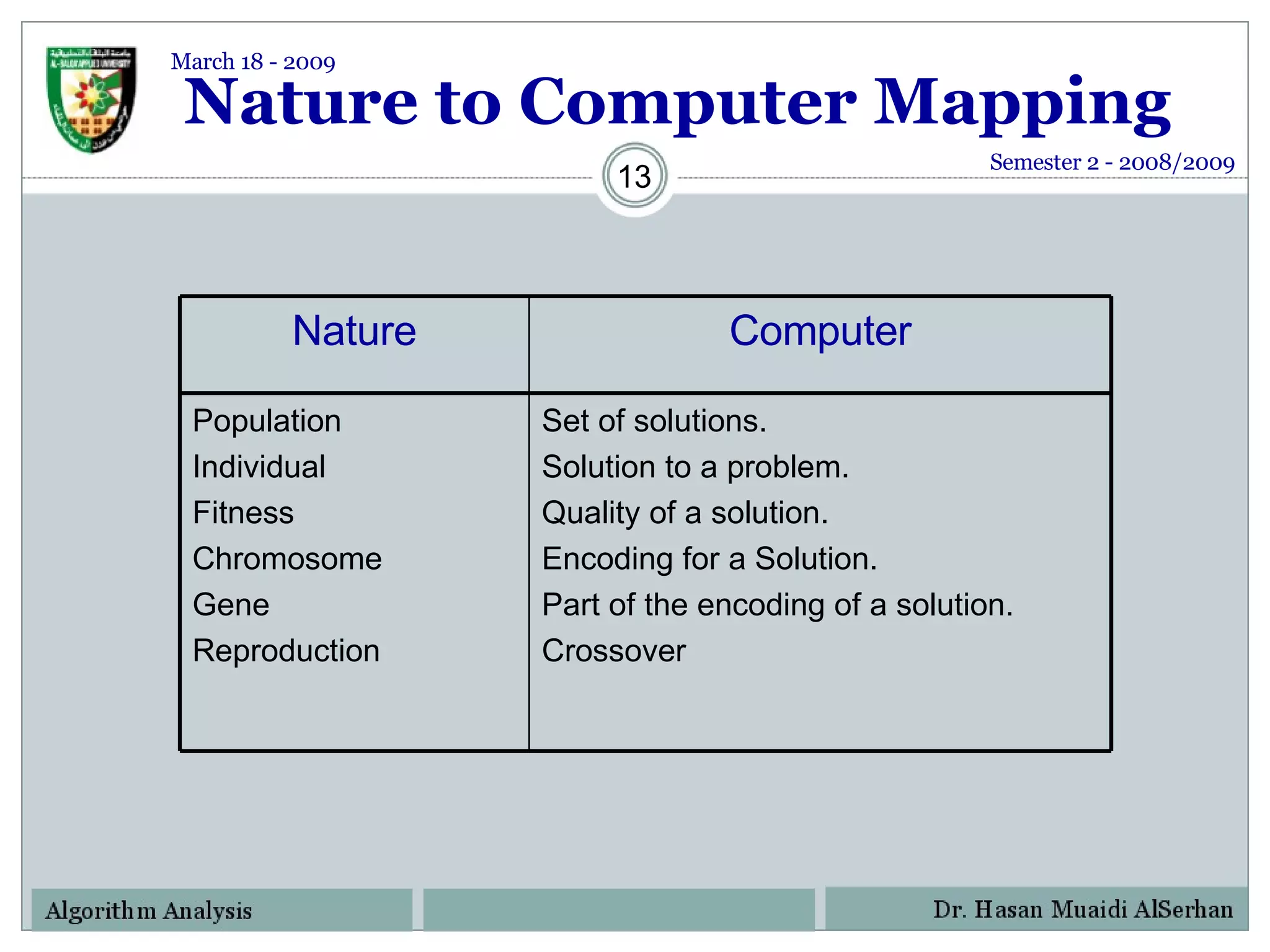

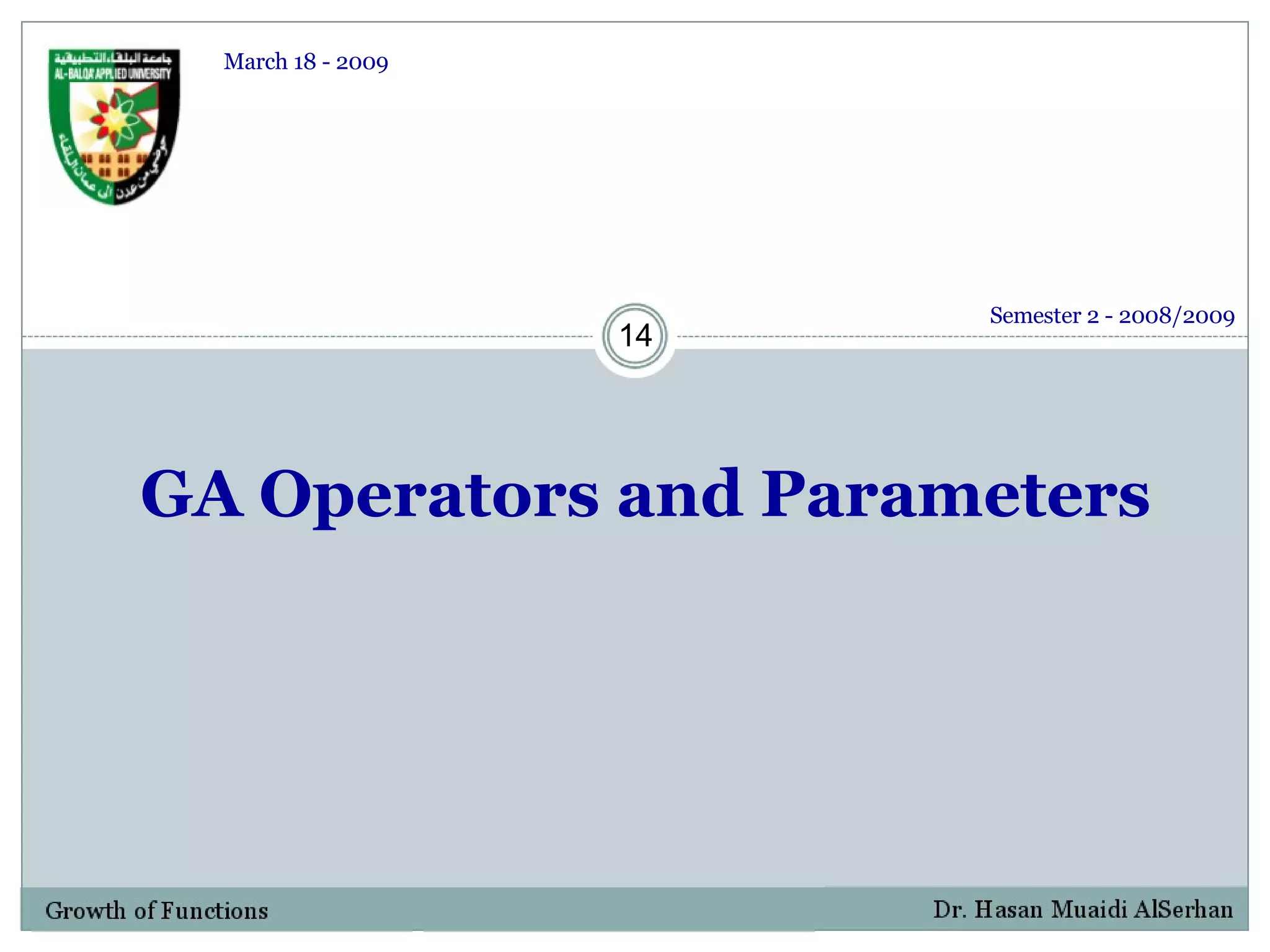

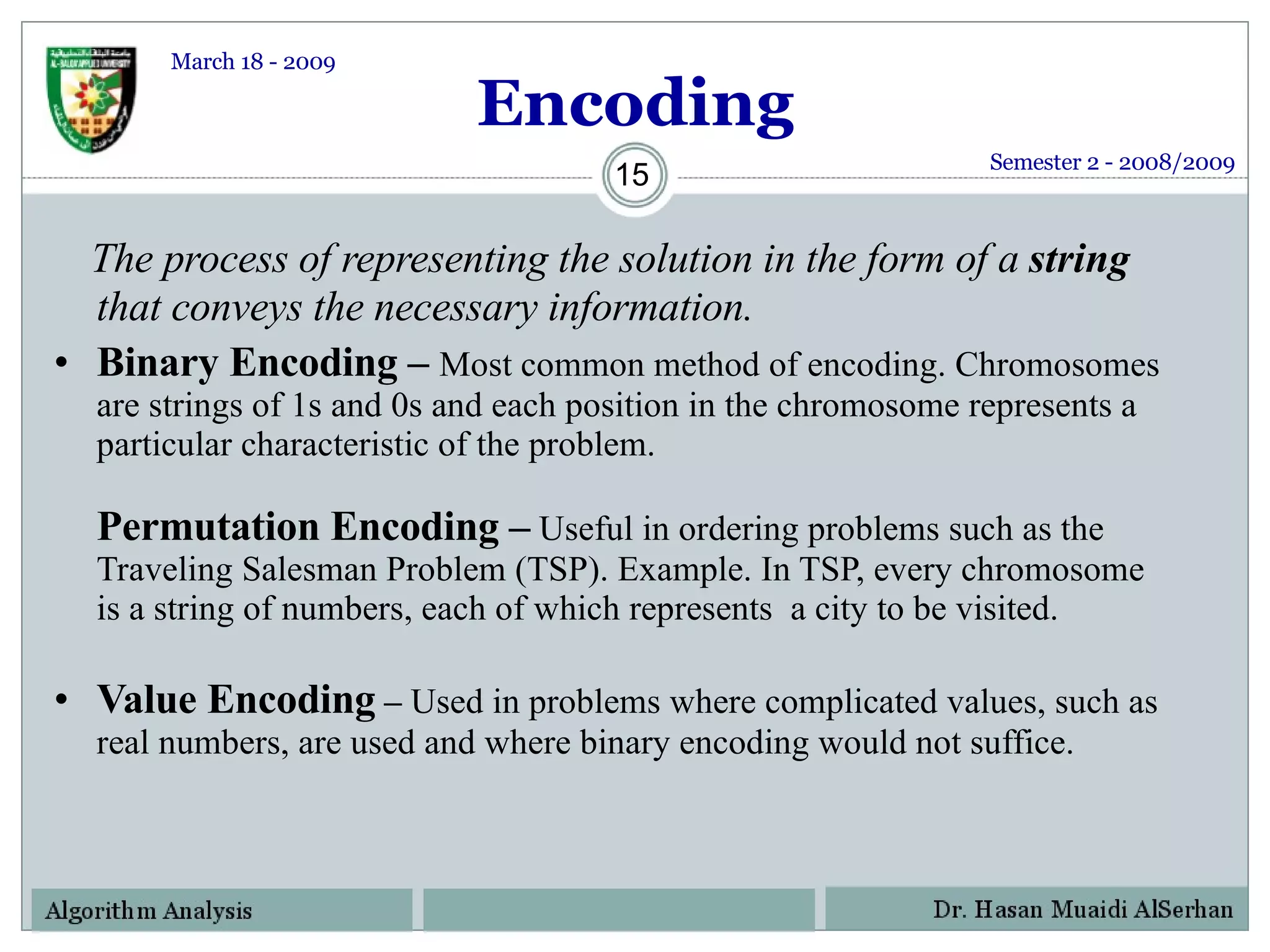

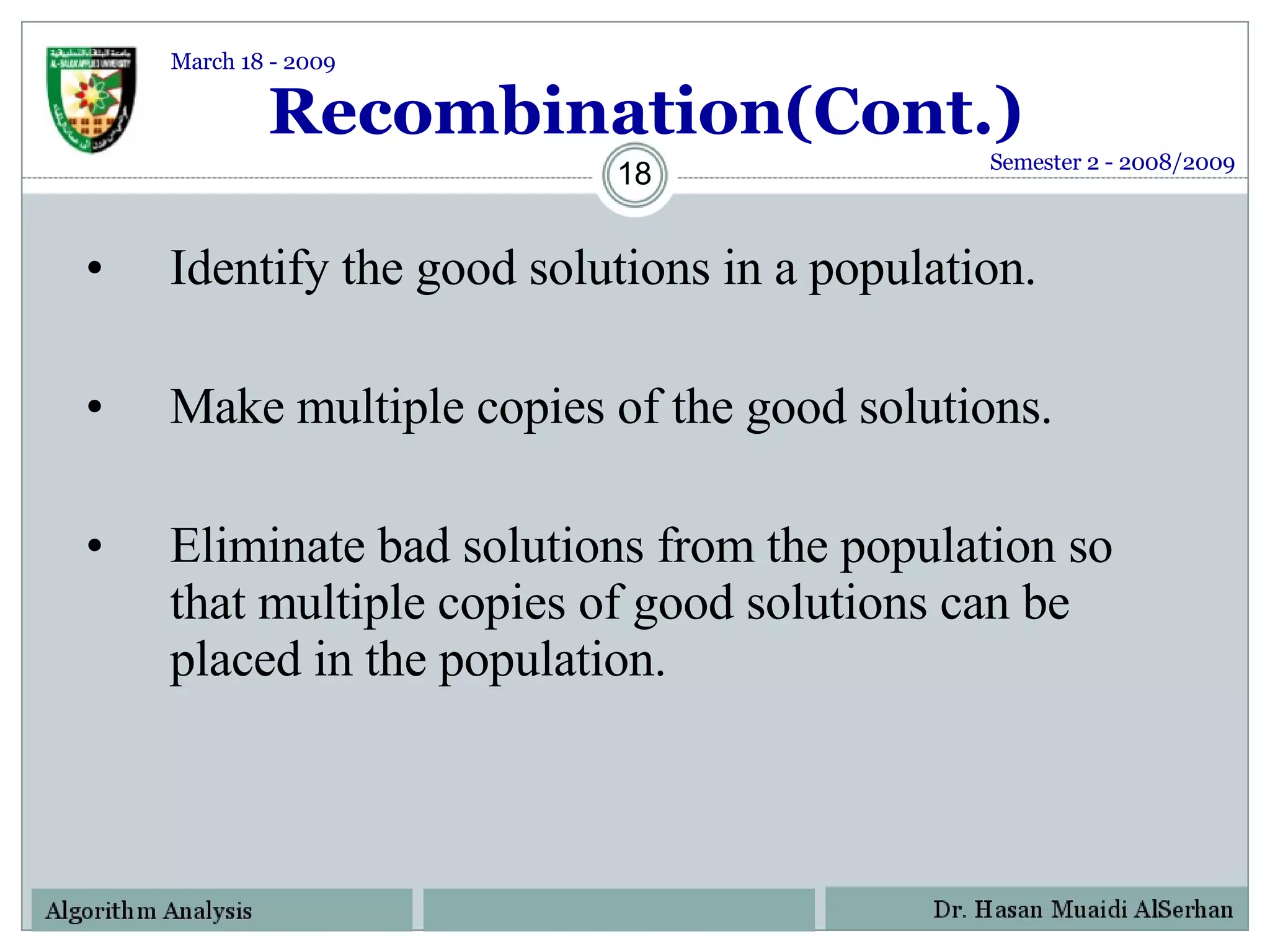

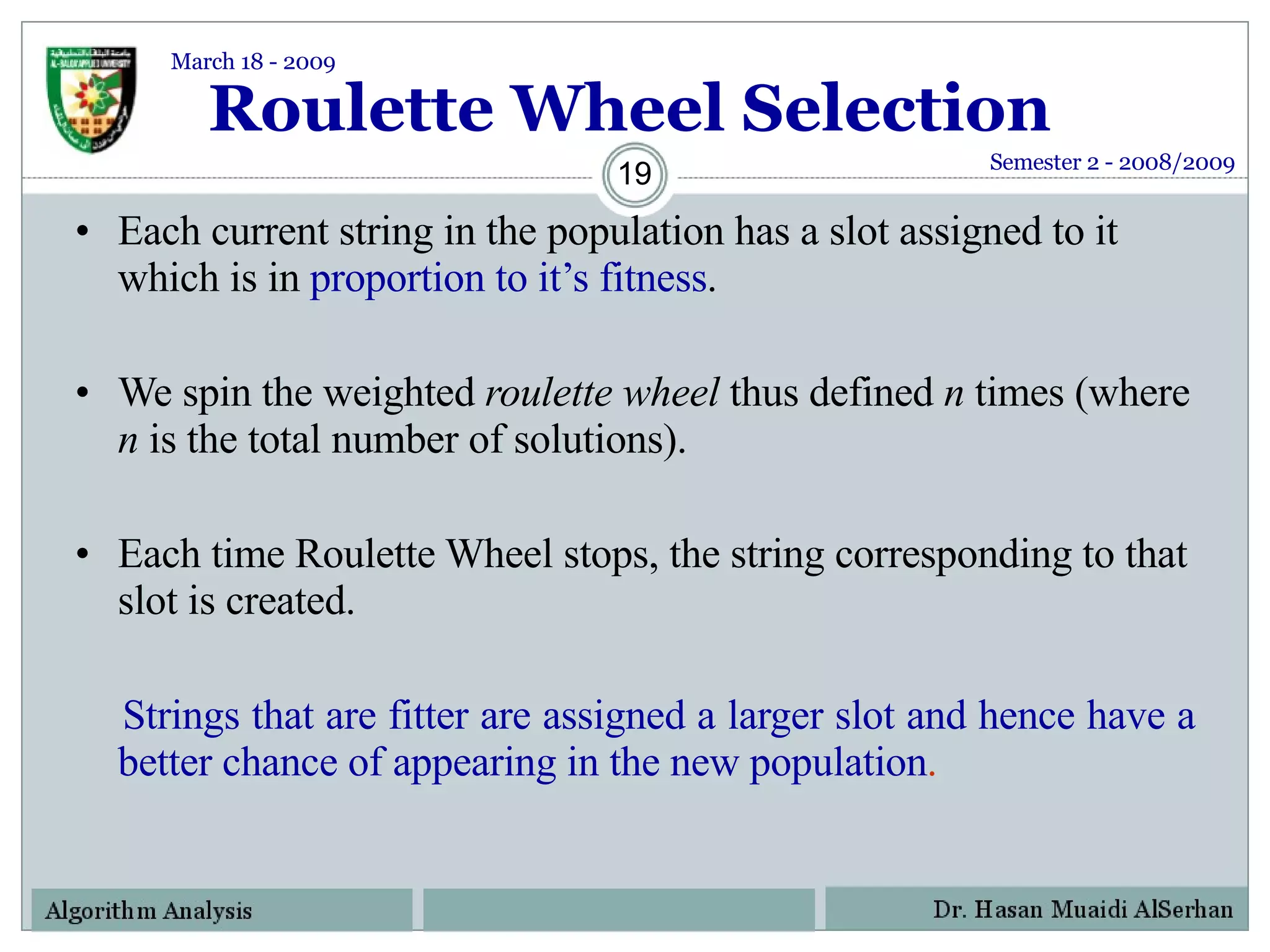

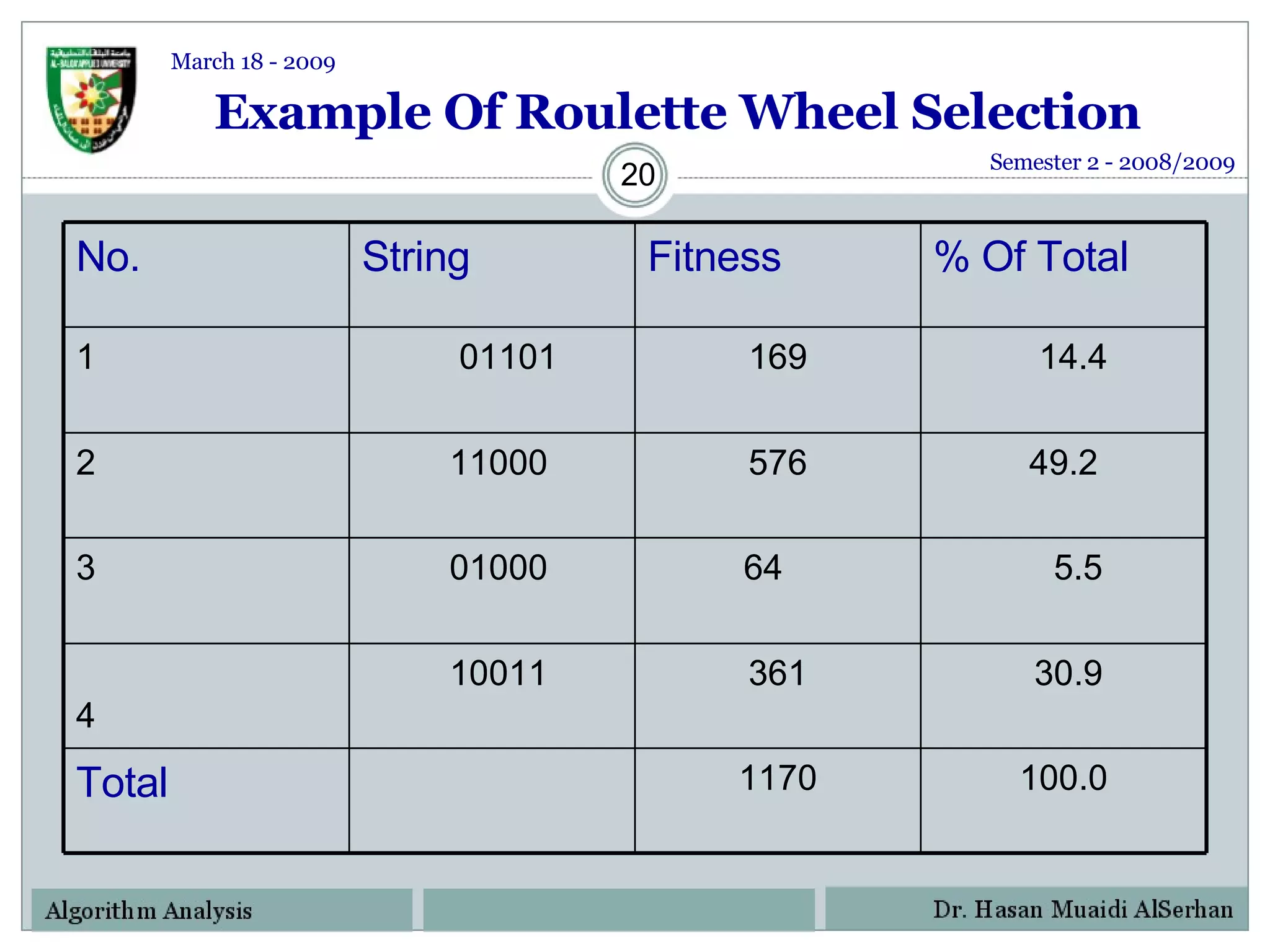

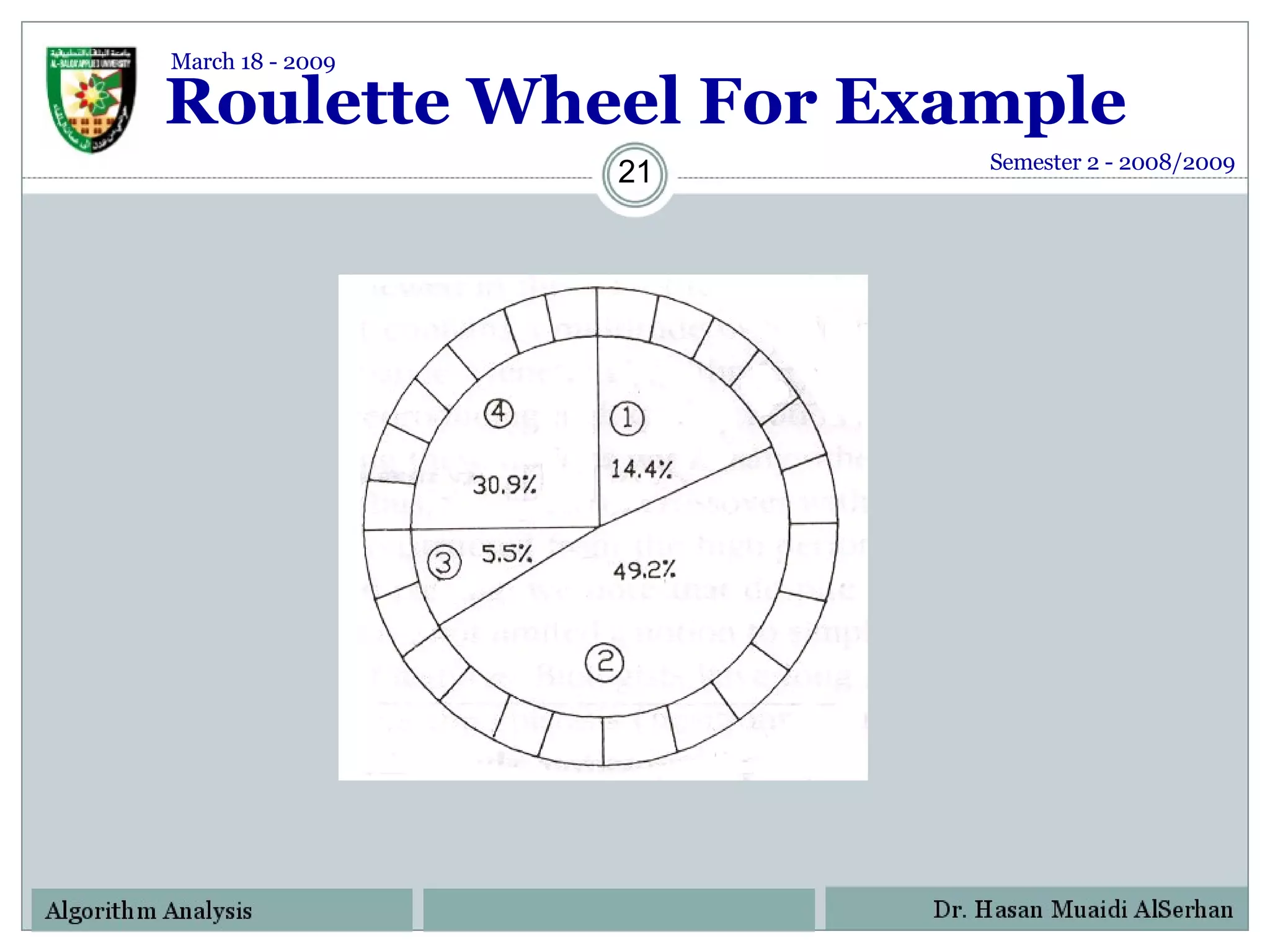

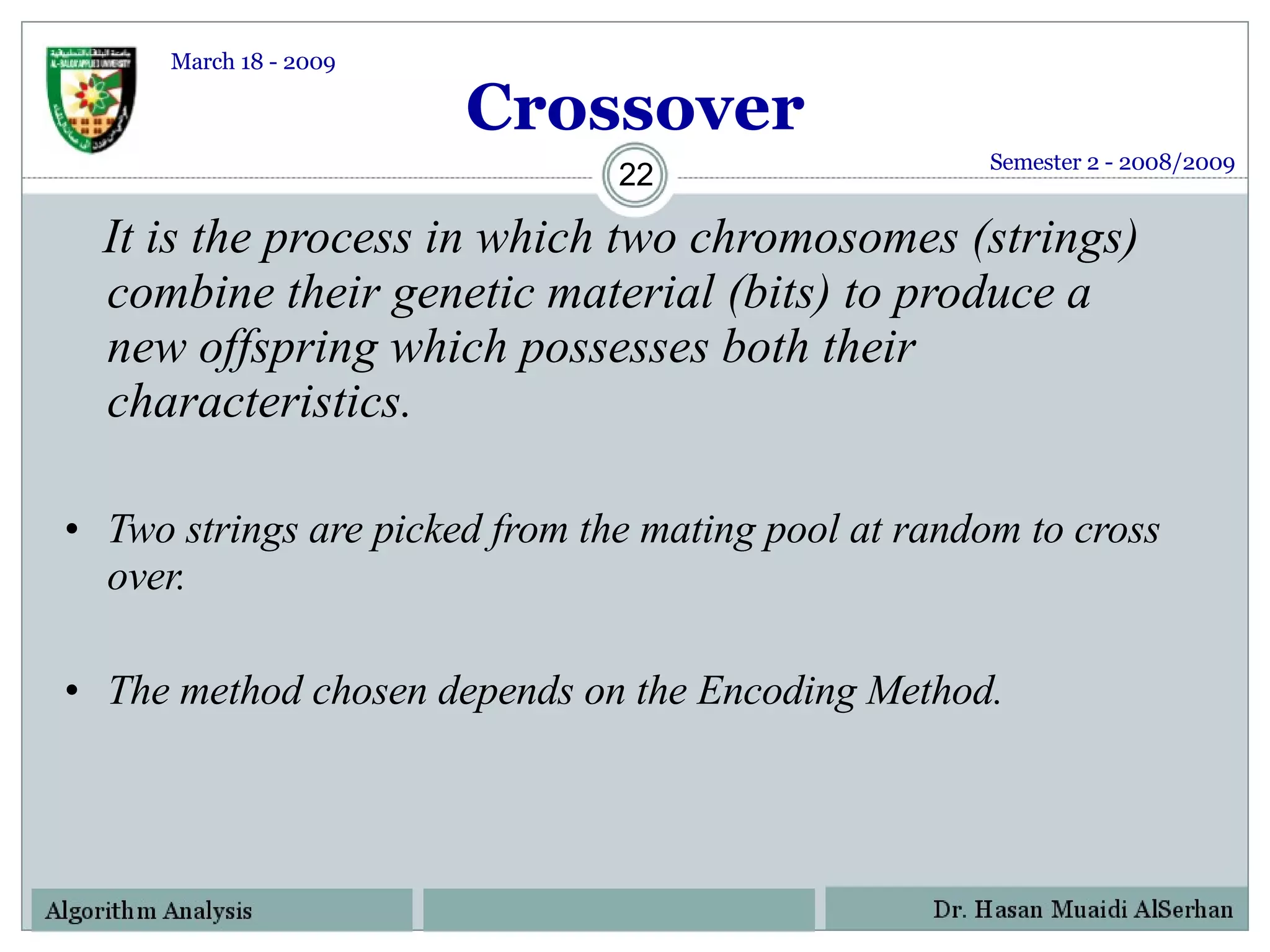

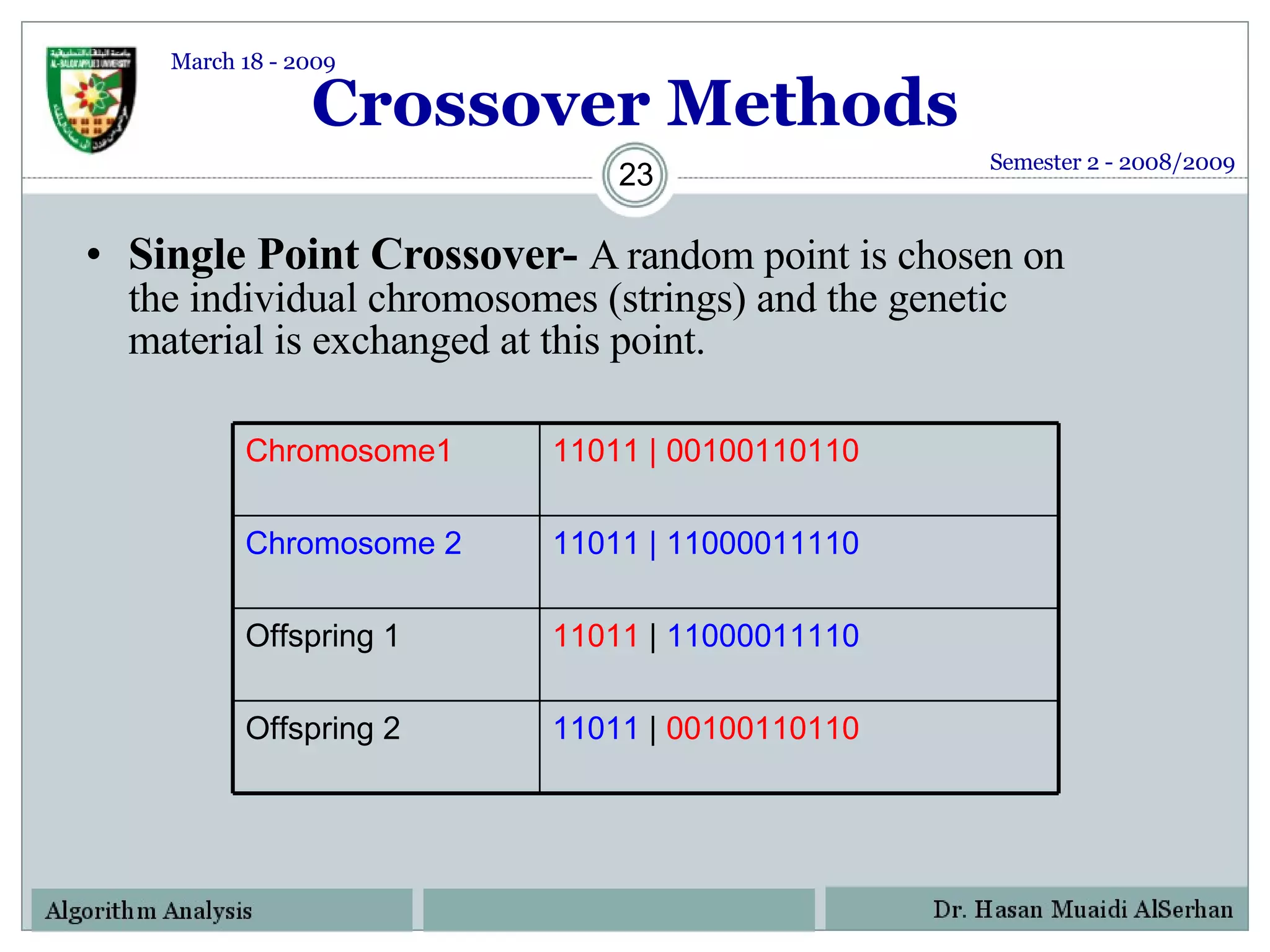

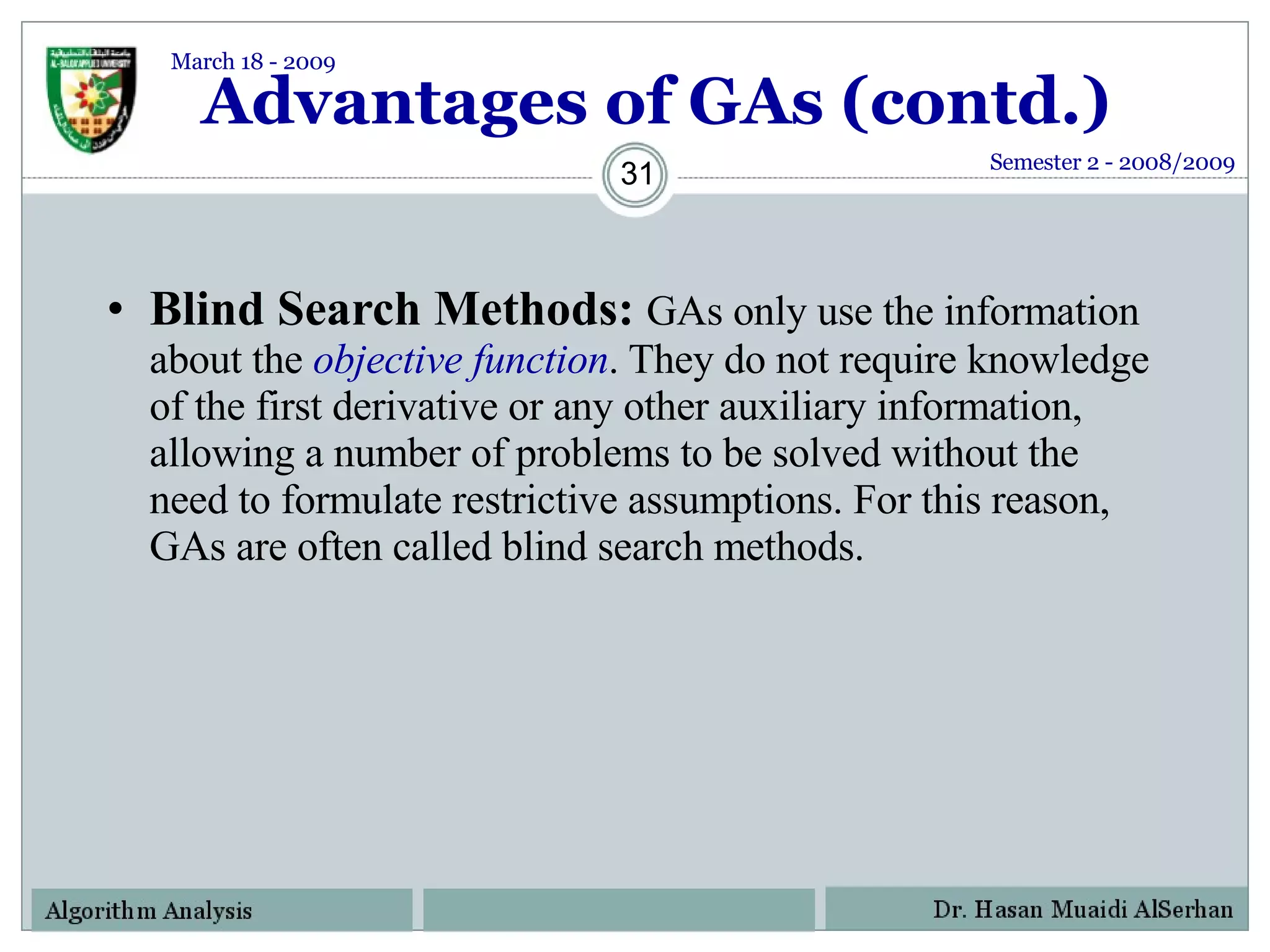

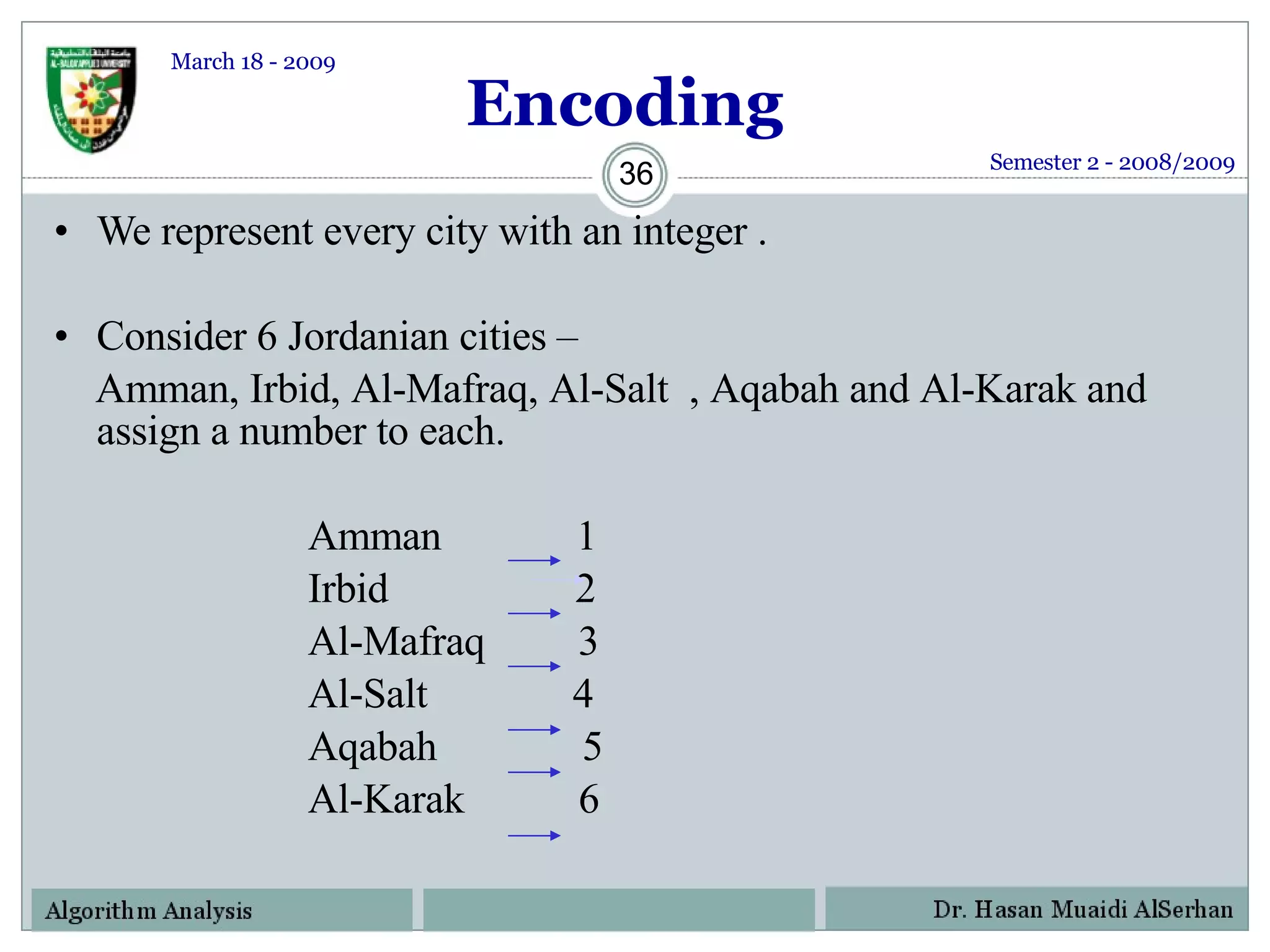

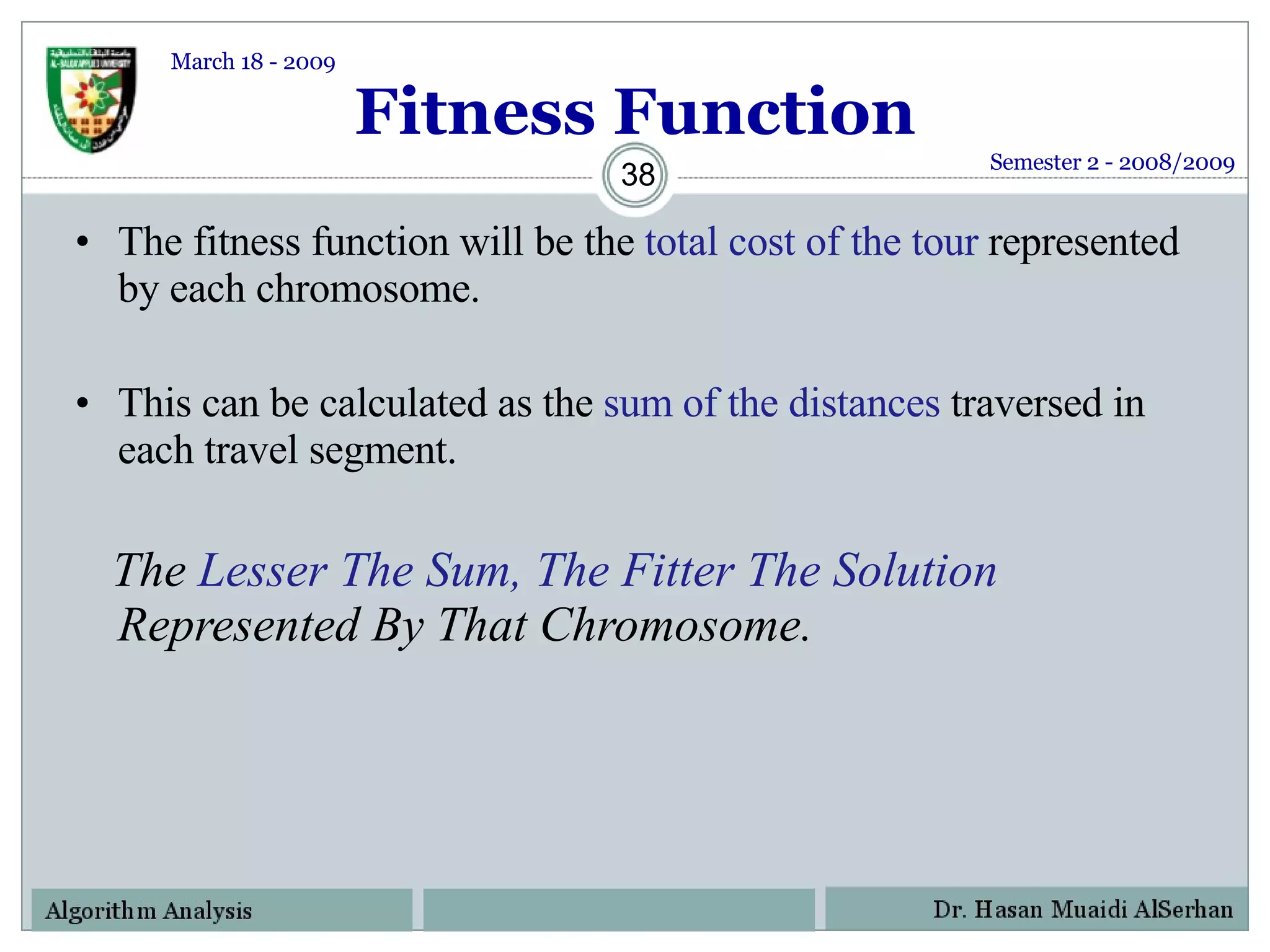

The document provides an overview of genetic algorithms, including their history, principles, components, and applications. Specifically, it discusses how genetic algorithms can be used to solve the traveling salesman problem (TSP): cities are encoded as a permutation, fitness is the total tour distance, and operators such as order-1 crossover preserve the relative order of cities so that offspring remain valid tours.
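As an illustration of the order-1 crossover mentioned above, here is a minimal Python sketch. It assumes one common variant of the operator: a slice of the first parent is copied into the child, and the remaining positions are filled with the second parent's cities in the order they appear, starting after the slice and wrapping around. The function name `order1_crossover` and the explicit `start`/`end` cut points are illustrative choices, not taken from the slides, and other variants fill from the start of the second parent instead.

```python
def order1_crossover(parent1, parent2, start, end):
    """Order-1 crossover sketch: keep parent1[start:end], fill the rest from parent2."""
    size = len(parent1)
    child = [None] * size

    # Copy the chosen slice of the first parent unchanged.
    child[start:end] = parent1[start:end]
    kept = set(parent1[start:end])

    # Cities of parent2 not already in the child, read from the second
    # cut point onward and wrapping around to the beginning.
    fill = [
        parent2[(end + i) % size]
        for i in range(size)
        if parent2[(end + i) % size] not in kept
    ]

    # Empty positions of the child, visited in the same wrap-around order.
    positions = [
        (end + i) % size for i in range(size) if child[(end + i) % size] is None
    ]

    for pos, city in zip(positions, fill):
        child[pos] = city
    return child


child = order1_crossover([4, 1, 3, 2, 5, 6], [1, 2, 3, 4, 5, 6], 2, 4)
print(child)  # [1, 4, 3, 2, 5, 6]
```

Because every city is placed exactly once, the child is always a valid permutation, which is the property that makes this operator suitable for TSP tours.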

![Principle Of Natural Selection “Select The Best, Discard The Rest” [1] Semester 2 - 2008/2009 March 18 - 2009](https://image.slidesharecdn.com/geneticalgorithms-124130142809-phpapp02/75/Genetic-Algorithms-6-2048.jpg)

![Encoding (contd.) Thus a path would be represented as a sequence of integers from 1 to 6. The path [1 2 3 4 5 6] represents a path from Amman to Irbid, Irbid to Al-Mafraq, Al-Mafraq to Al-Salt, Al-Salt to Aqabah, Aqabah to Al-Karak, and finally Al-Karak to Amman. This is an example of Permutation Encoding, as the position of the elements determines the fitness of the solution.](https://image.slidesharecdn.com/geneticalgorithms-124130142809-phpapp02/75/Genetic-Algorithms-37-2048.jpg)
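The permutation encoding described on this slide can be sketched in a few lines of Python. The city numbering follows the slides; the names `CITIES`, `decode`, and `random_chromosome` are hypothetical helpers introduced only for illustration.

```python
import random

# City numbering as used in the slides.
CITIES = {
    1: "Amman",
    2: "Irbid",
    3: "Al-Mafraq",
    4: "Al-Salt",
    5: "Aqabah",
    6: "Al-Karak",
}


def decode(chromosome):
    """Return the legs of the closed tour as (from_city, to_city) name pairs."""
    n = len(chromosome)
    return [
        (CITIES[chromosome[i]], CITIES[chromosome[(i + 1) % n]])
        for i in range(n)
    ]


def random_chromosome(rng=random):
    """Return a random permutation of the city numbers (one candidate tour)."""
    genes = list(CITIES)
    rng.shuffle(genes)
    return genes


for leg in decode([1, 2, 3, 4, 5, 6]):
    print(" -> ".join(leg))
```

The last leg wraps from the final gene back to the first, which matches the slide's closing step from Al-Karak to Amman.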

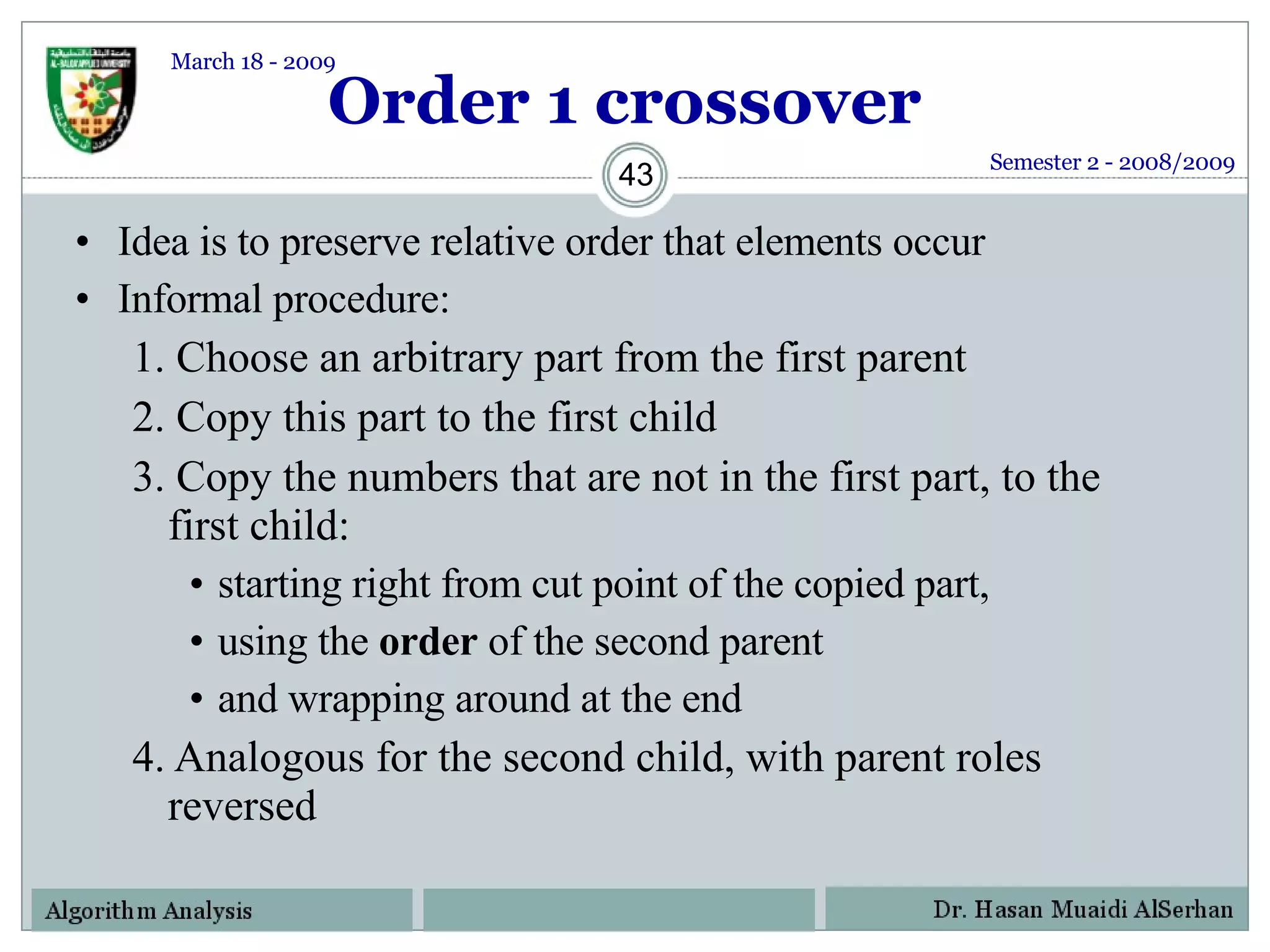

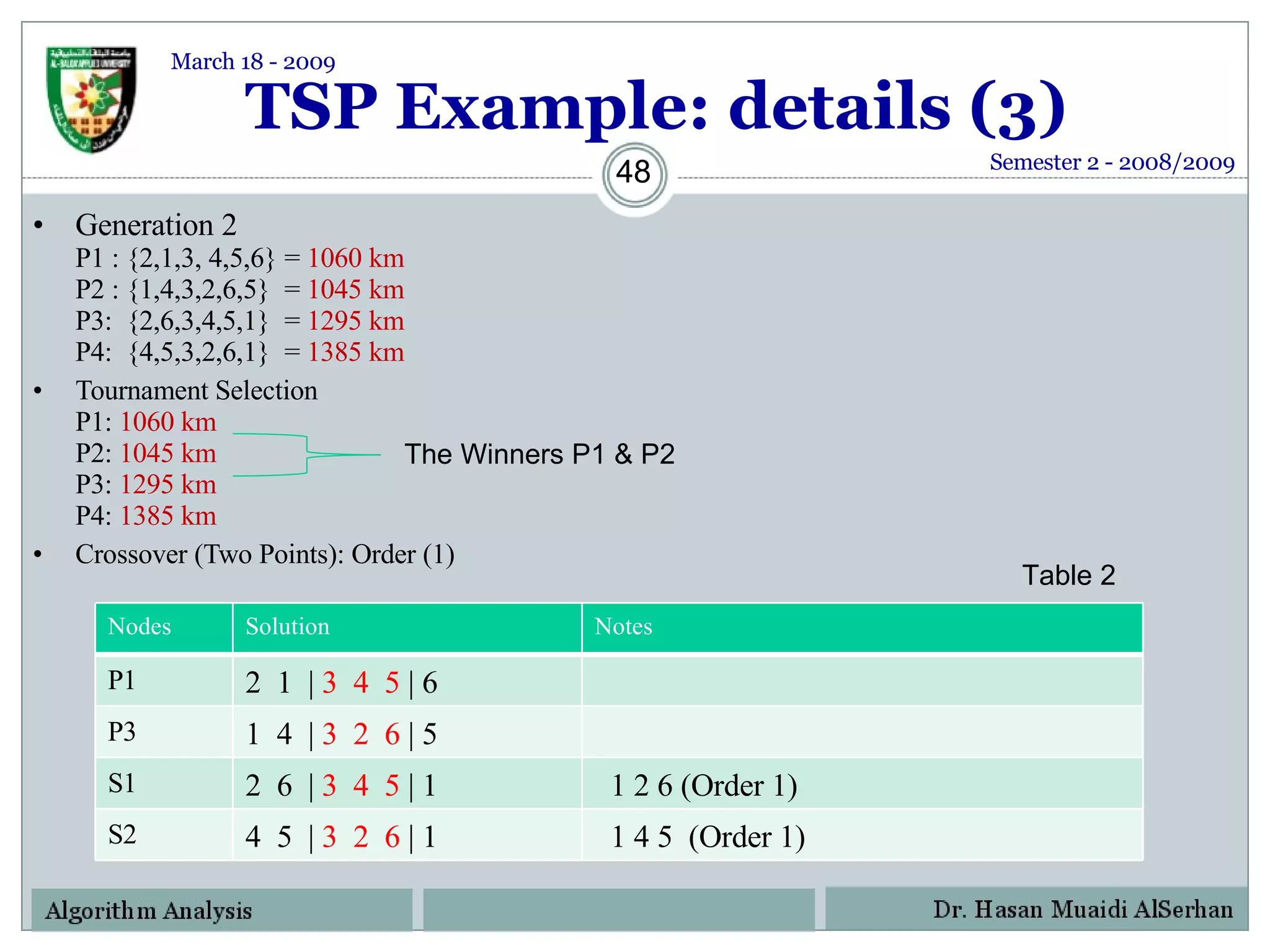

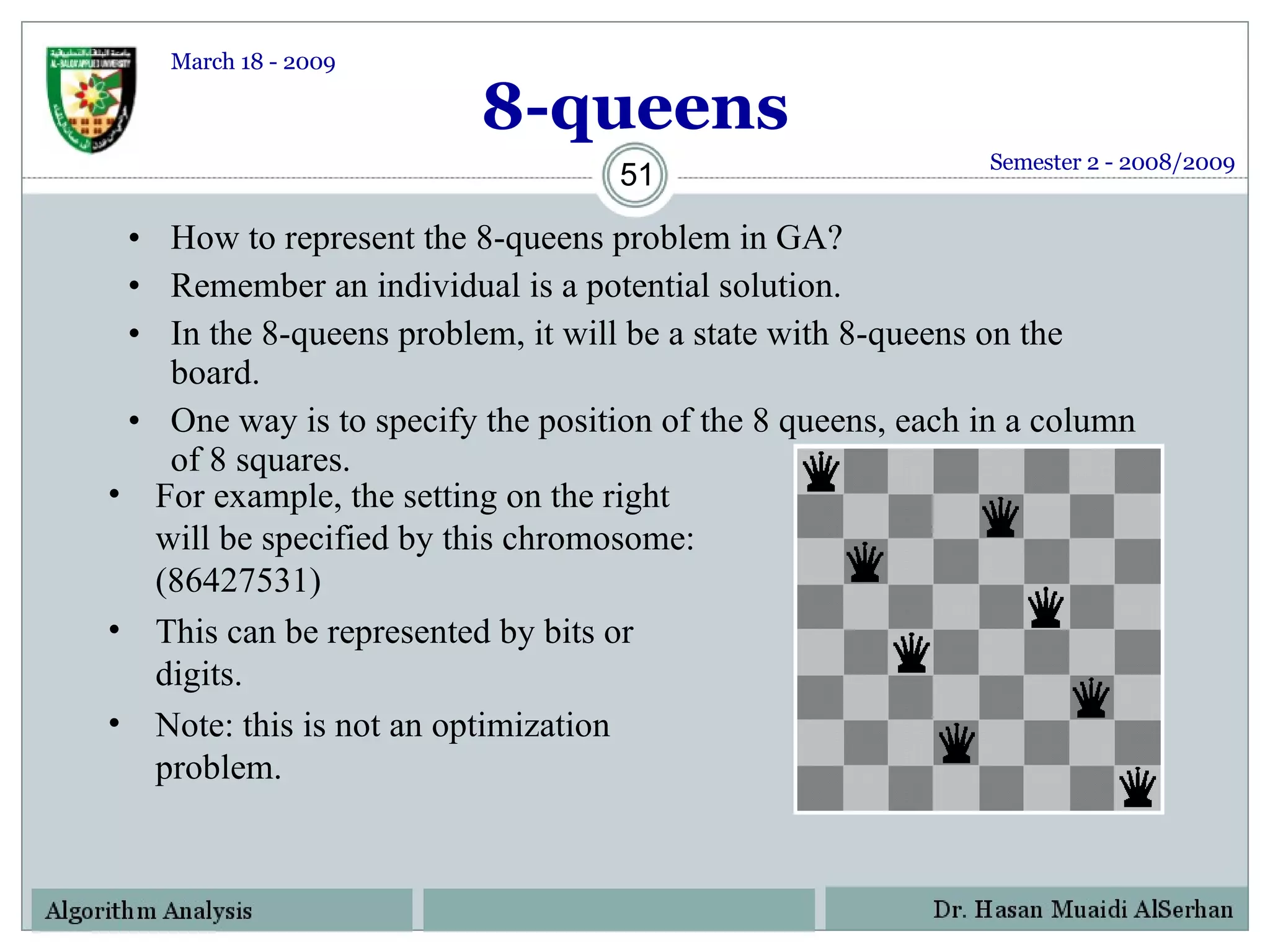

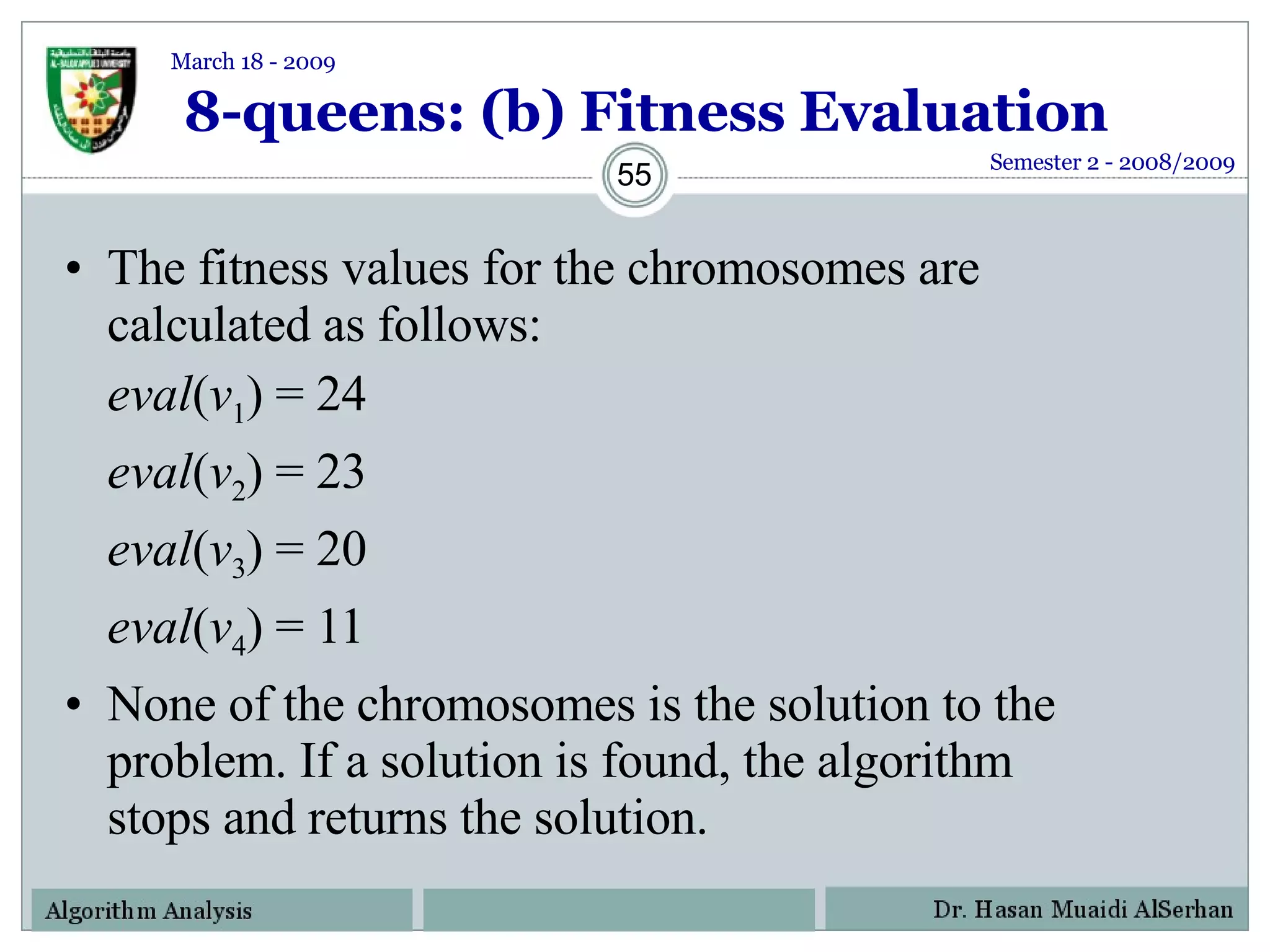

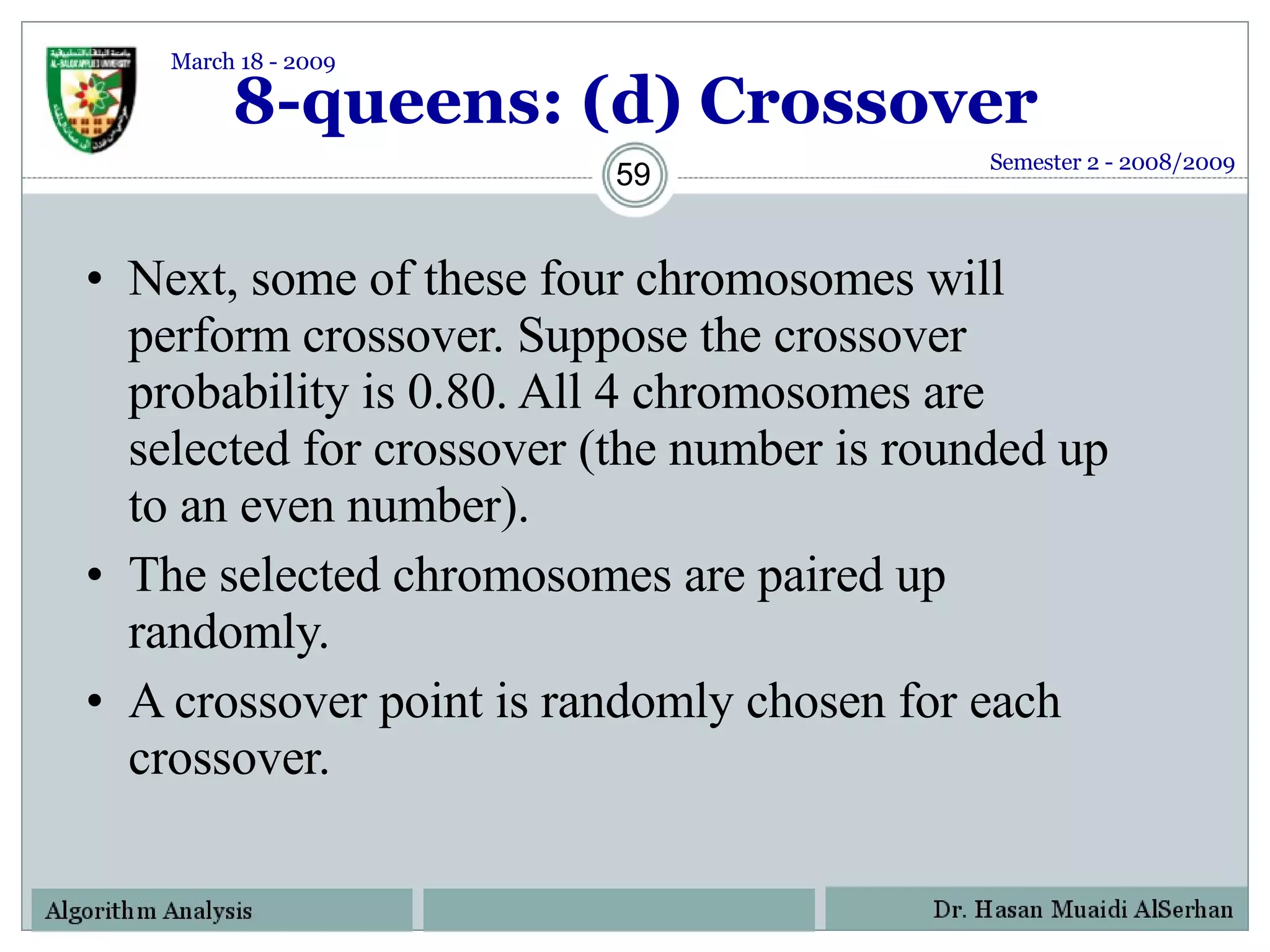

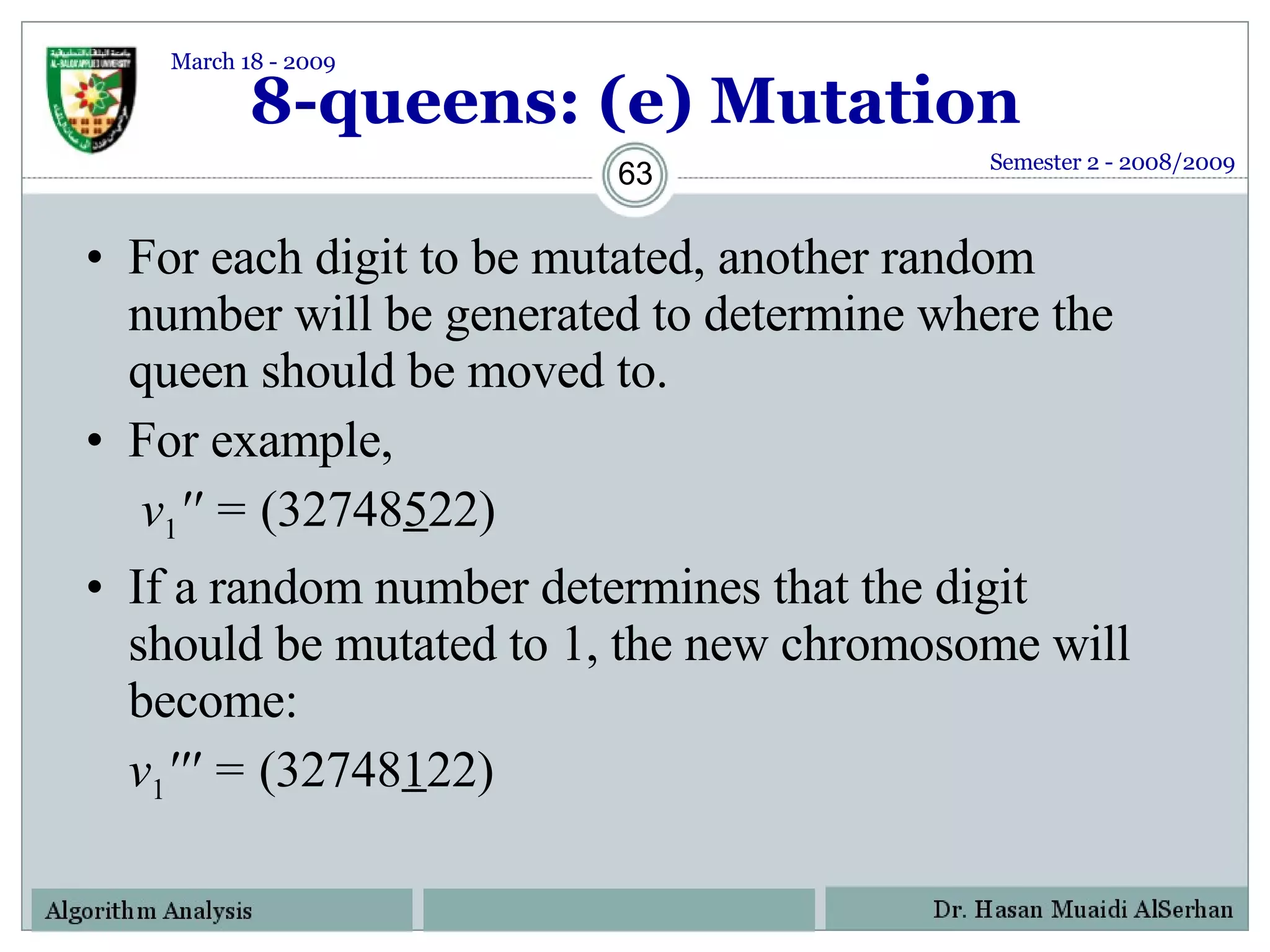

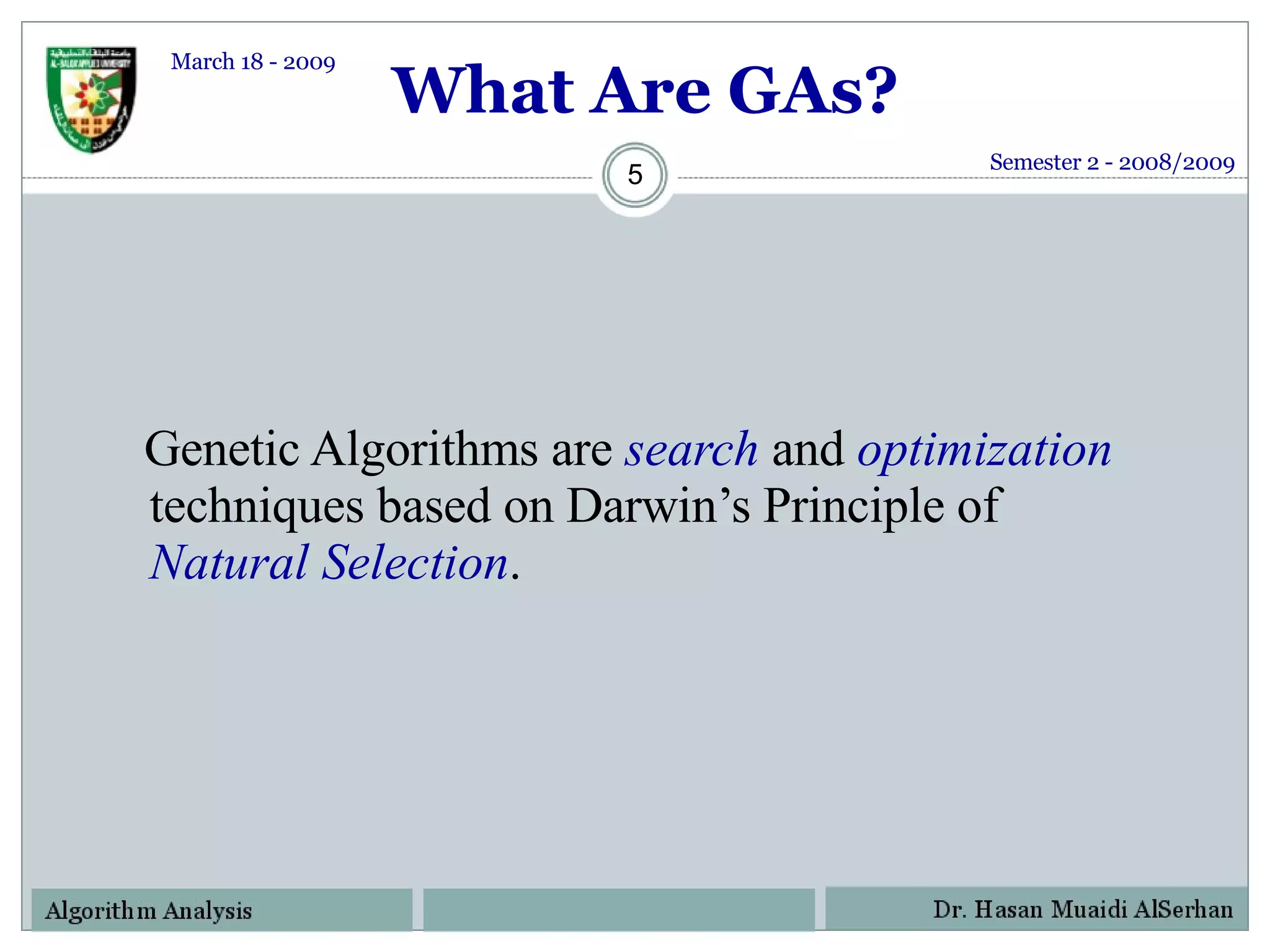

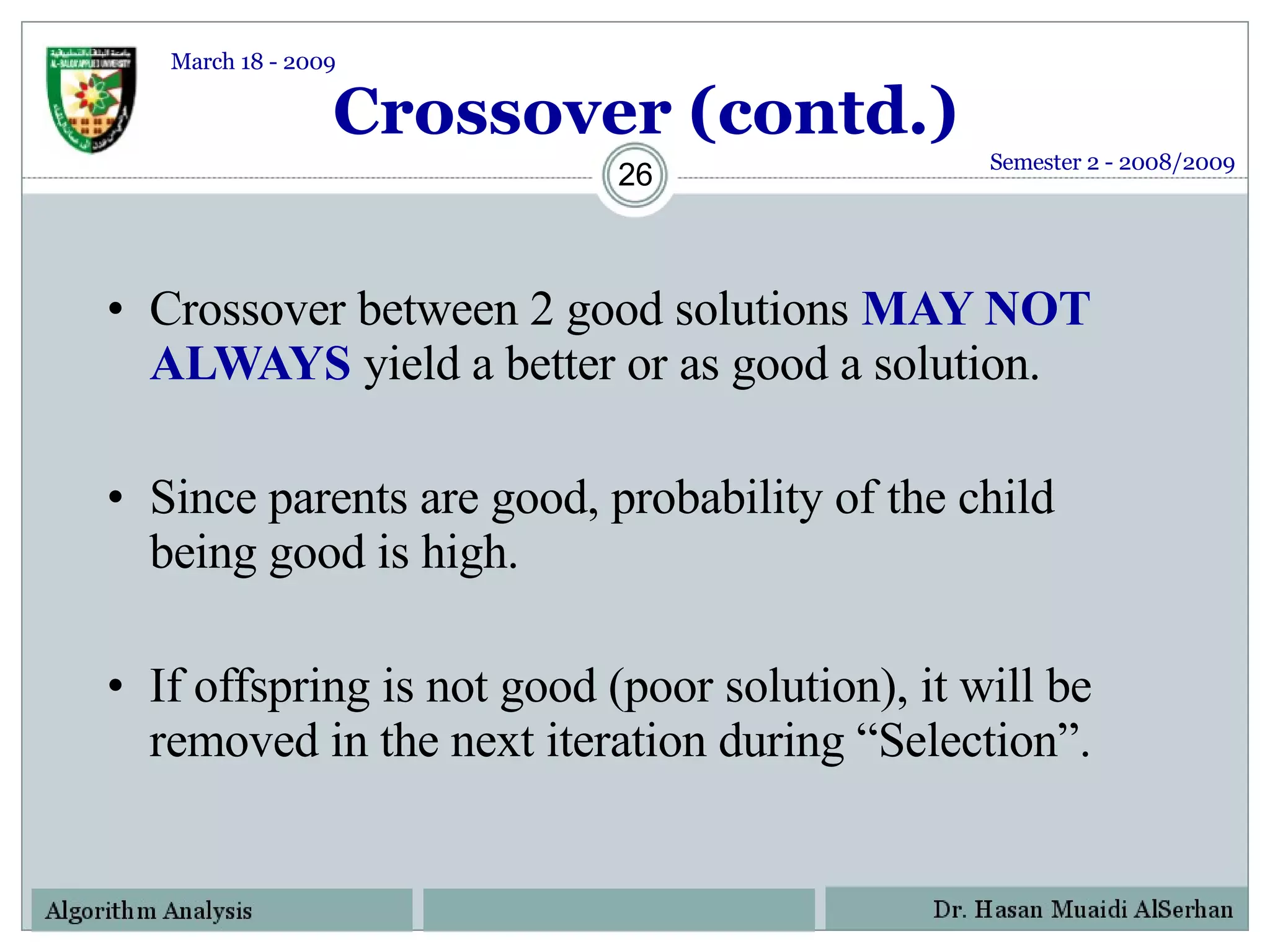

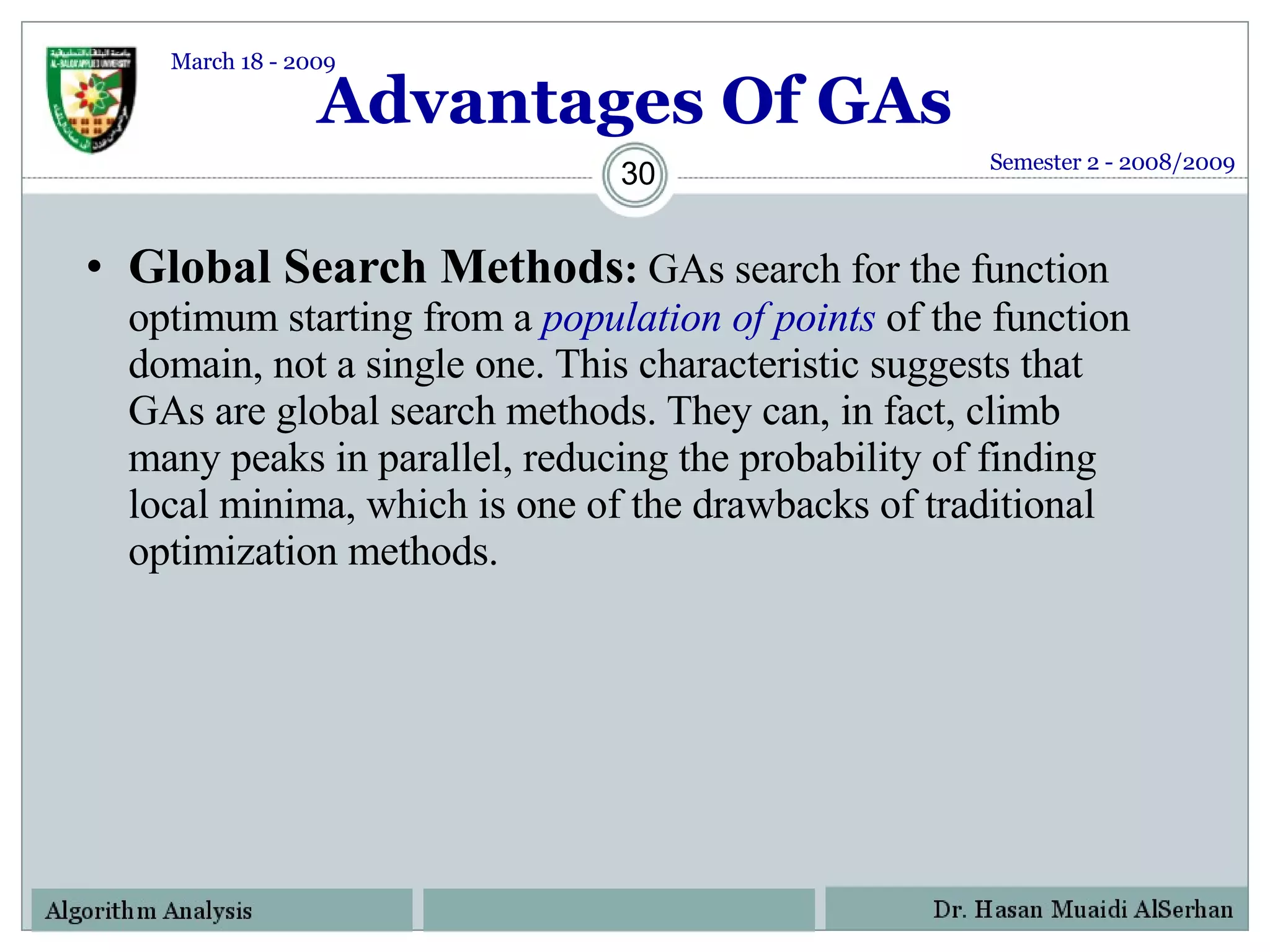

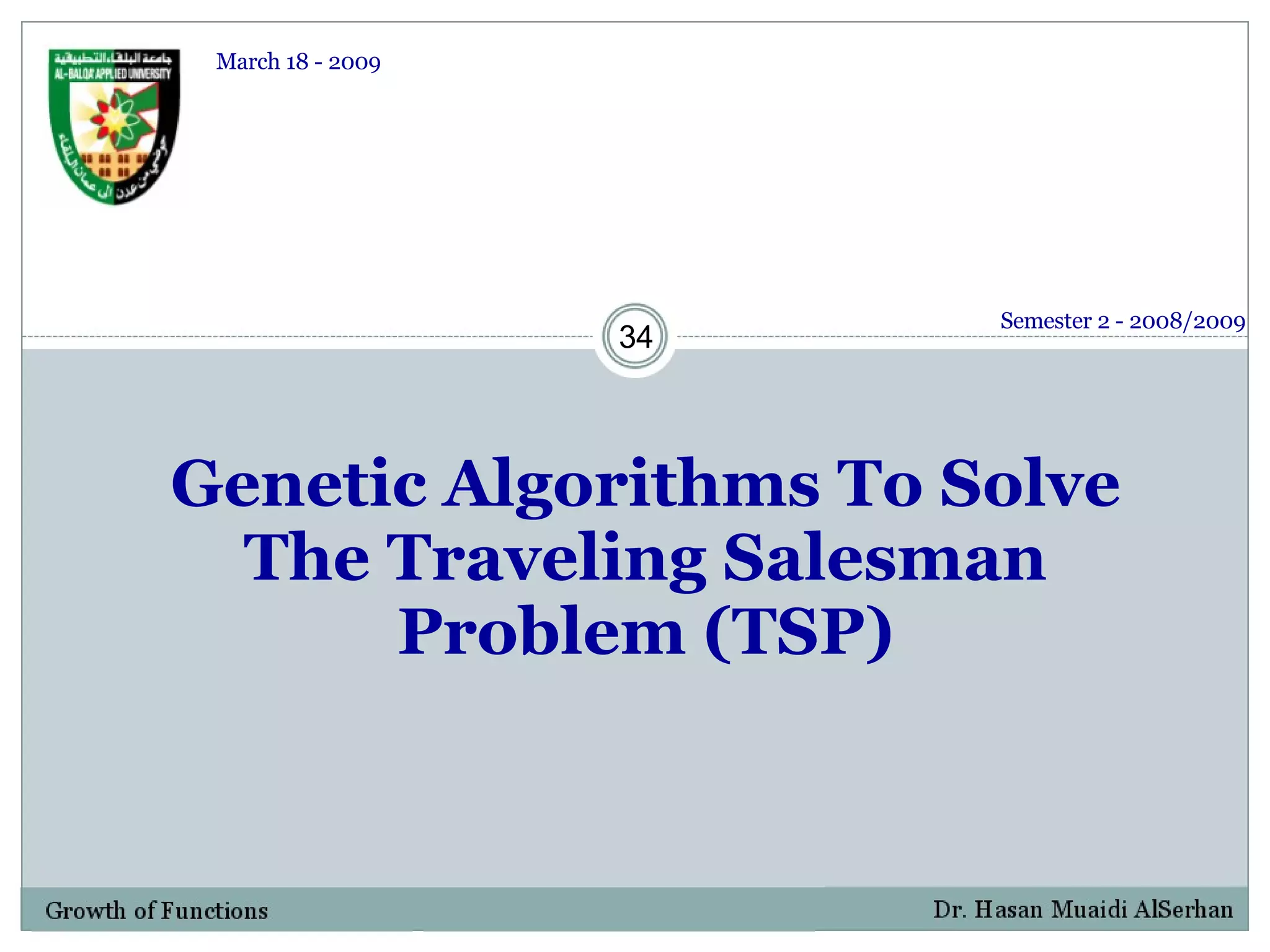

![Distance/Cost Matrix For TSP. Cost matrix for the six-city example. Distances in kilometers.](https://image.slidesharecdn.com/geneticalgorithms-124130142809-phpapp02/75/Genetic-Algorithms-39-2048.jpg)

| Distance (km) | Amman [1] | Irbid [2] | Al-Mafraq [3] | Al-Salt [4] | Aqabah [5] | Al-Karak [6] |
|---|---|---|---|---|---|---|
| Amman [1] | 0 | 90 | 100 | 35 | 300 | 200 |
| Irbid [2] | 90 | 0 | 60 | 120 | 400 | 290 |
| Al-Mafraq [3] | 100 | 60 | 0 | 70 | 480 | 225 |
| Al-Salt [4] | 35 | 120 | 70 | 0 | 320 | 150 |
| Aqabah [5] | 300 | 400 | 480 | 320 | 0 | 290 |
| Al-Karak [6] | 200 | 290 | 225 | 150 | 290 | 0 |

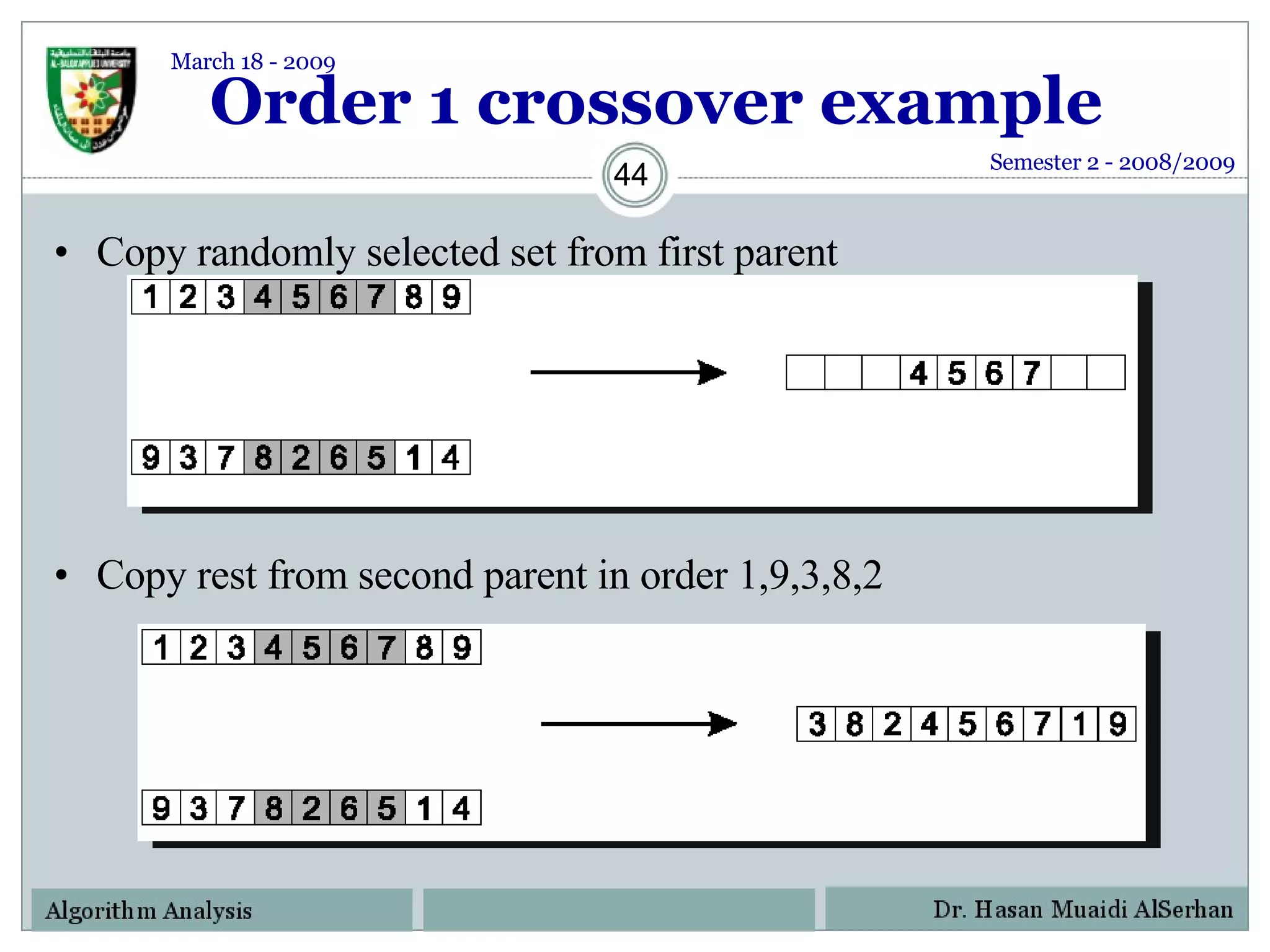

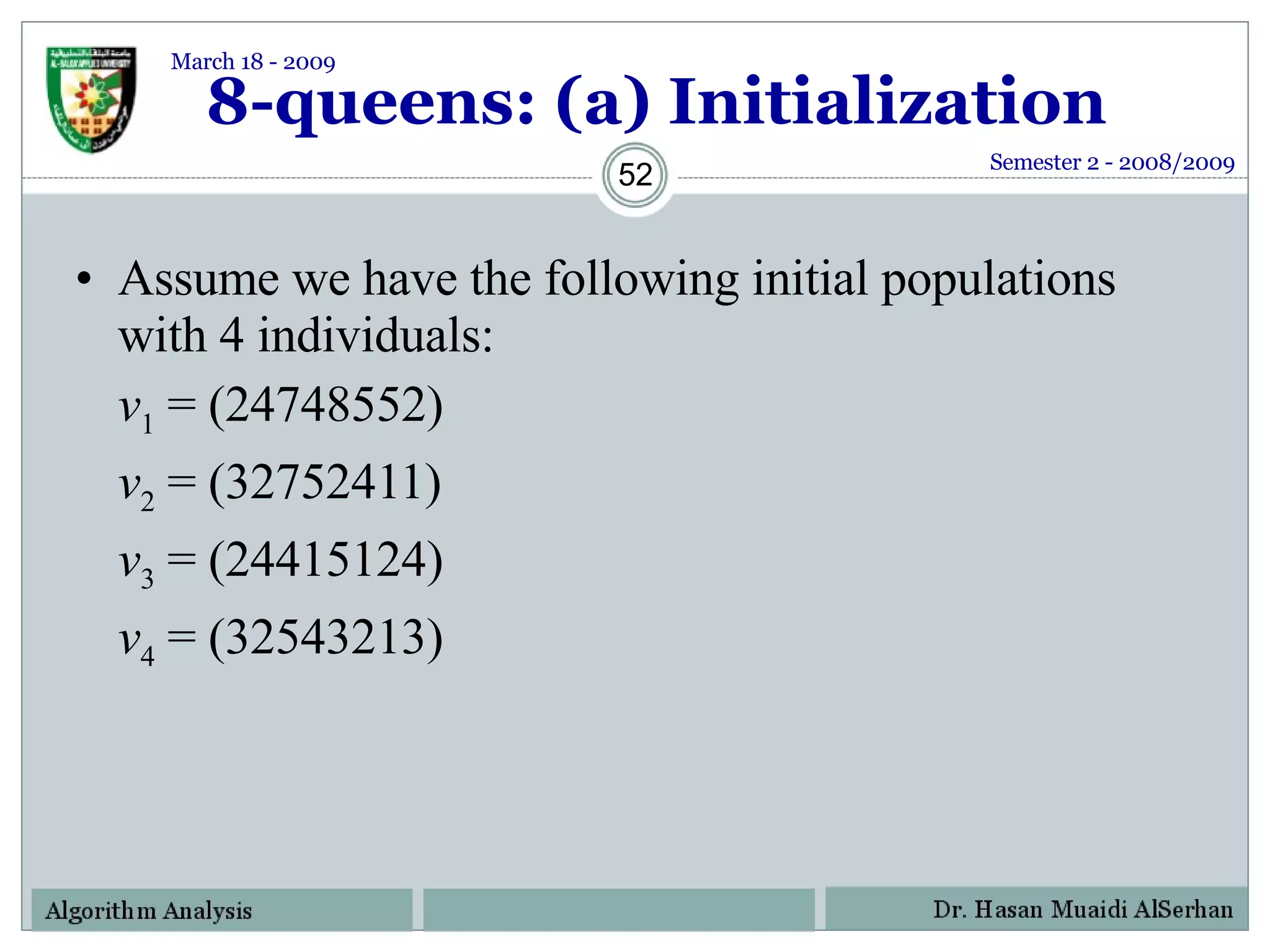

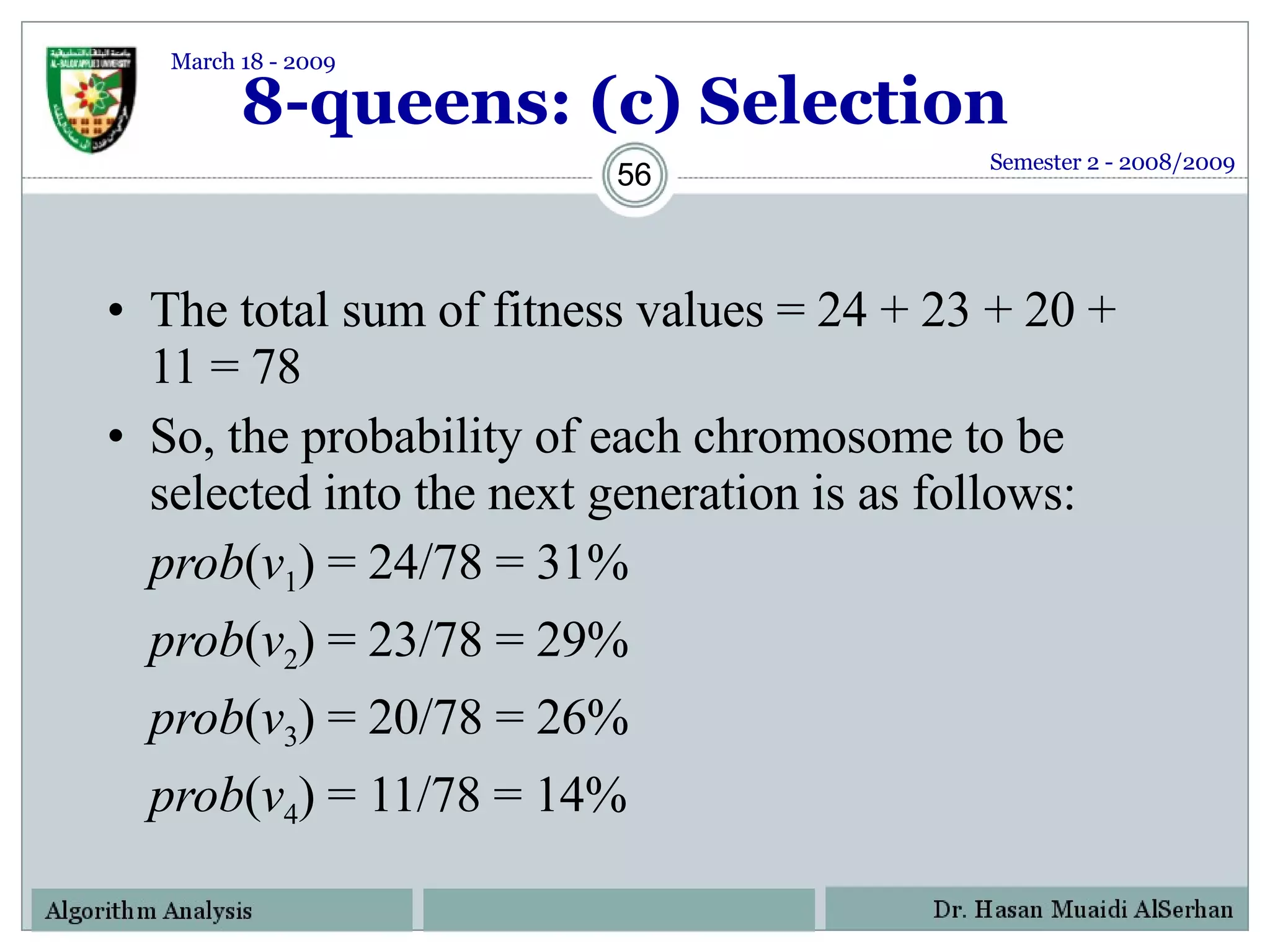

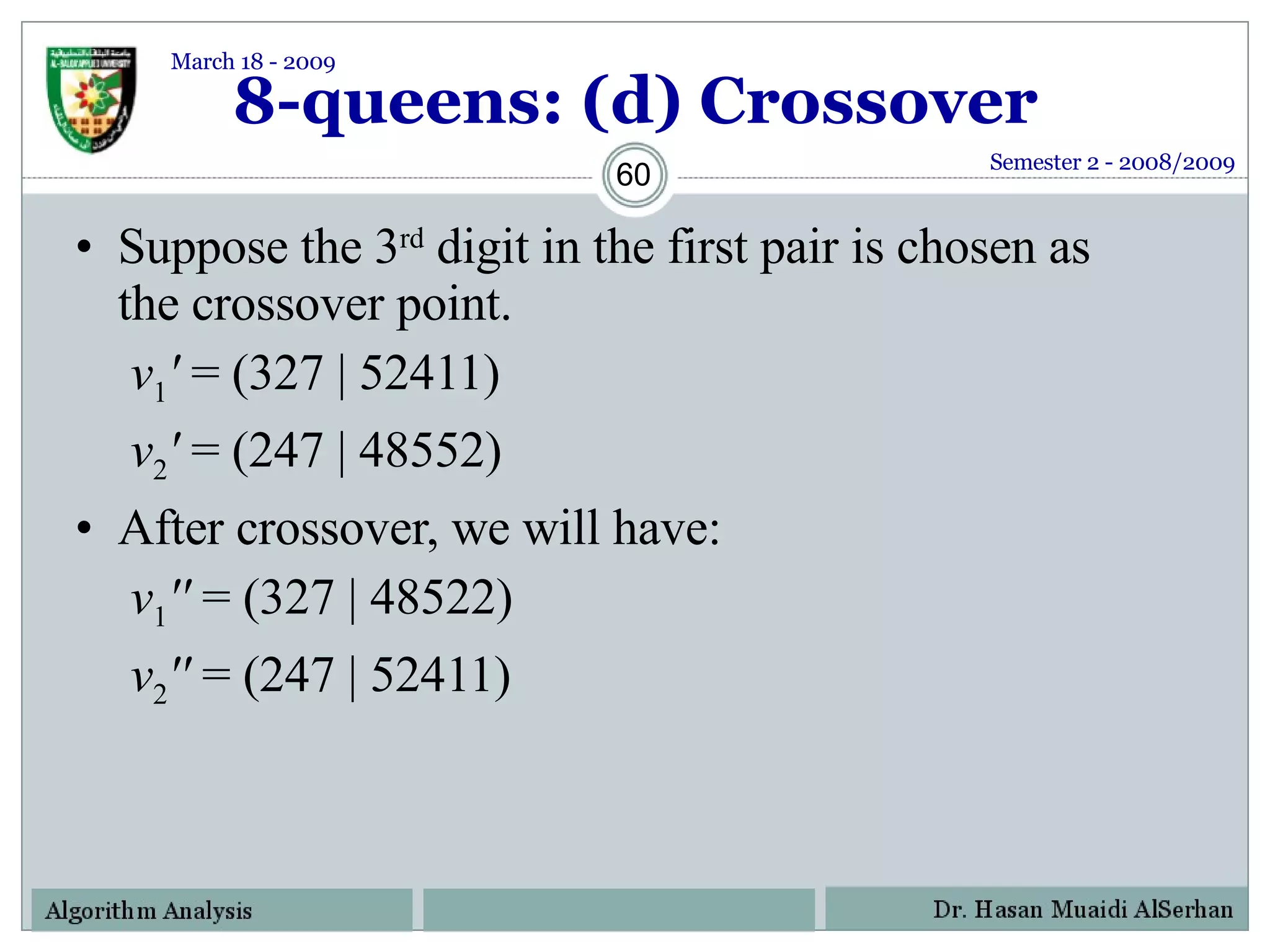

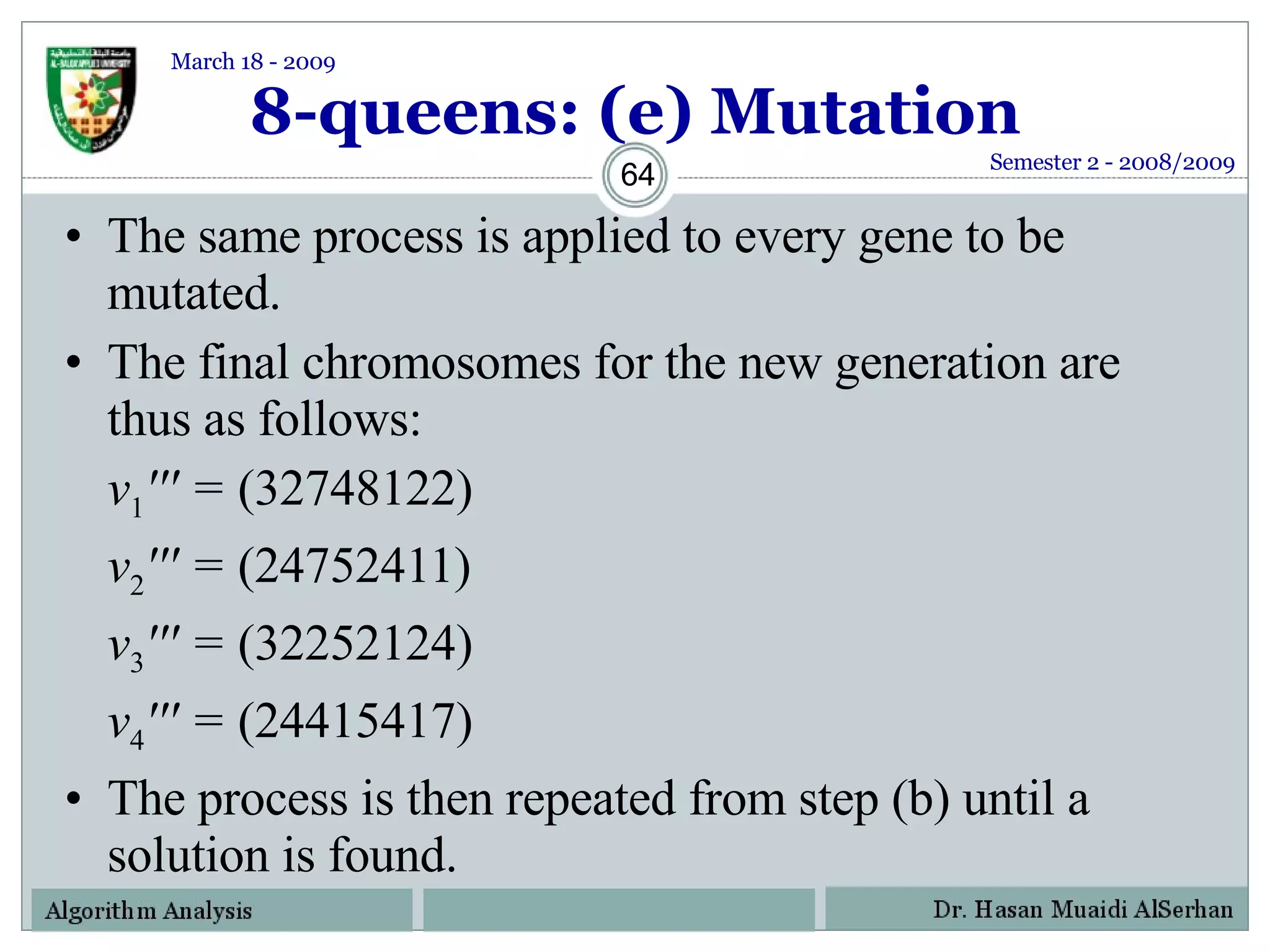

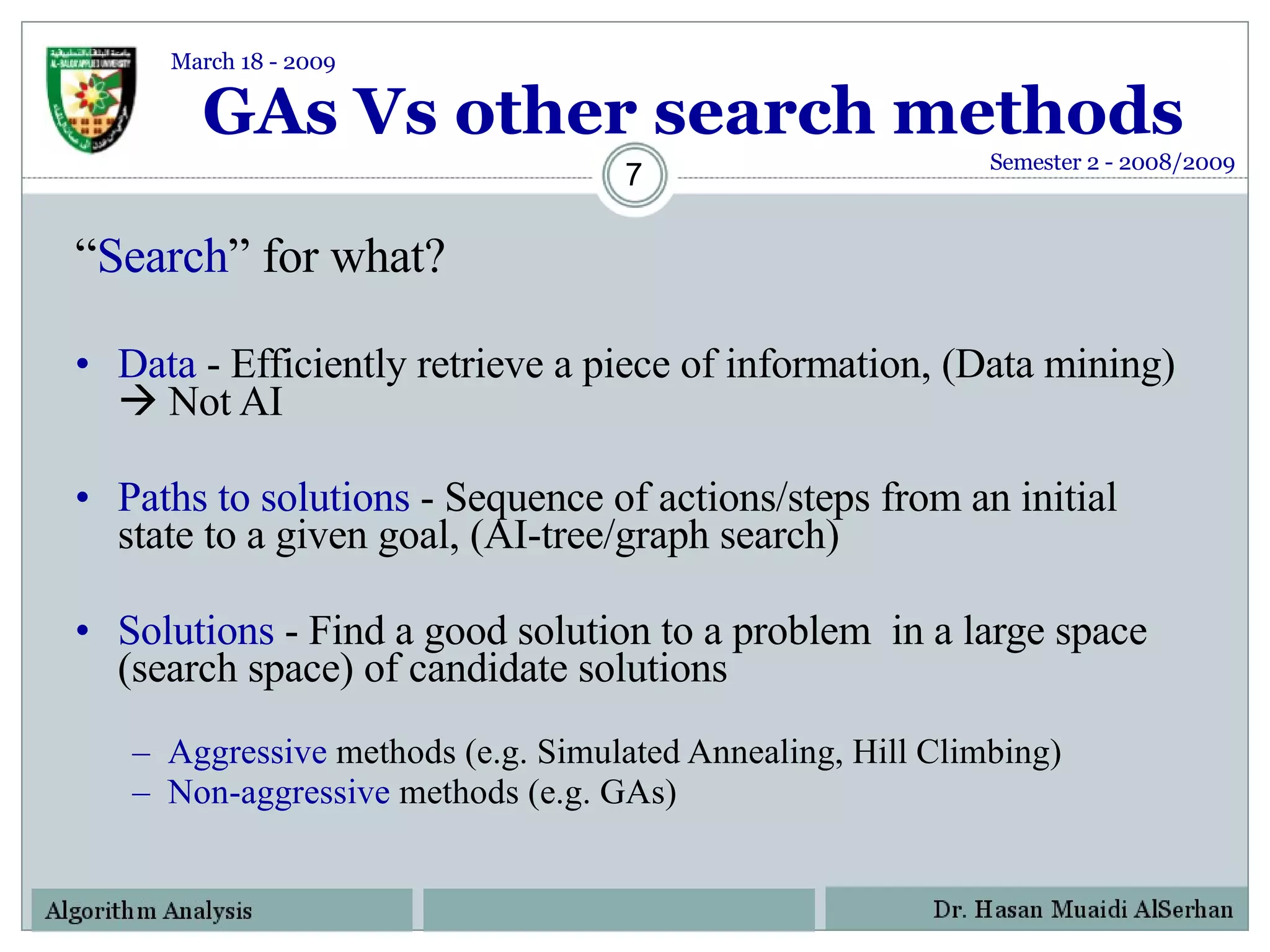

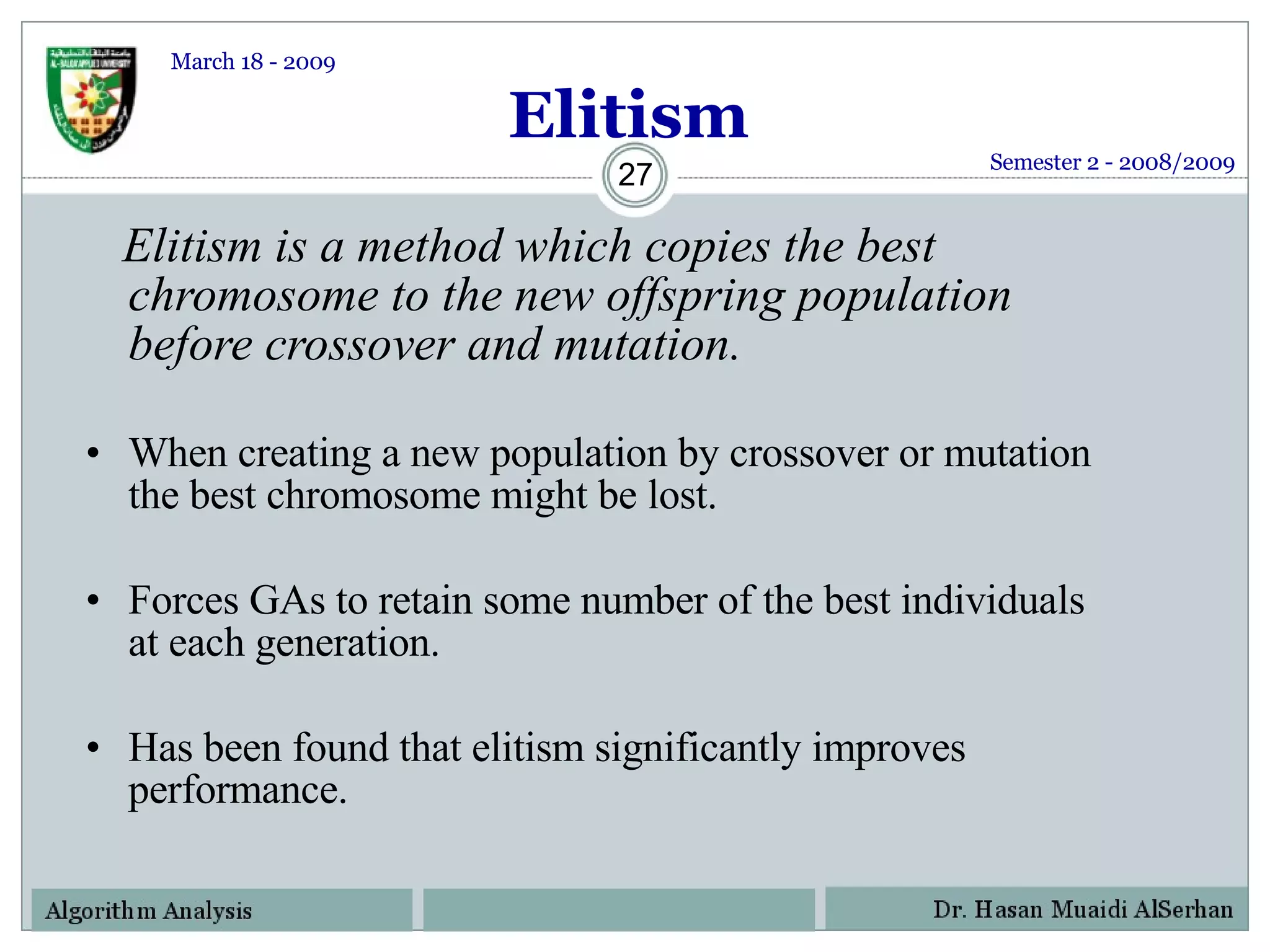

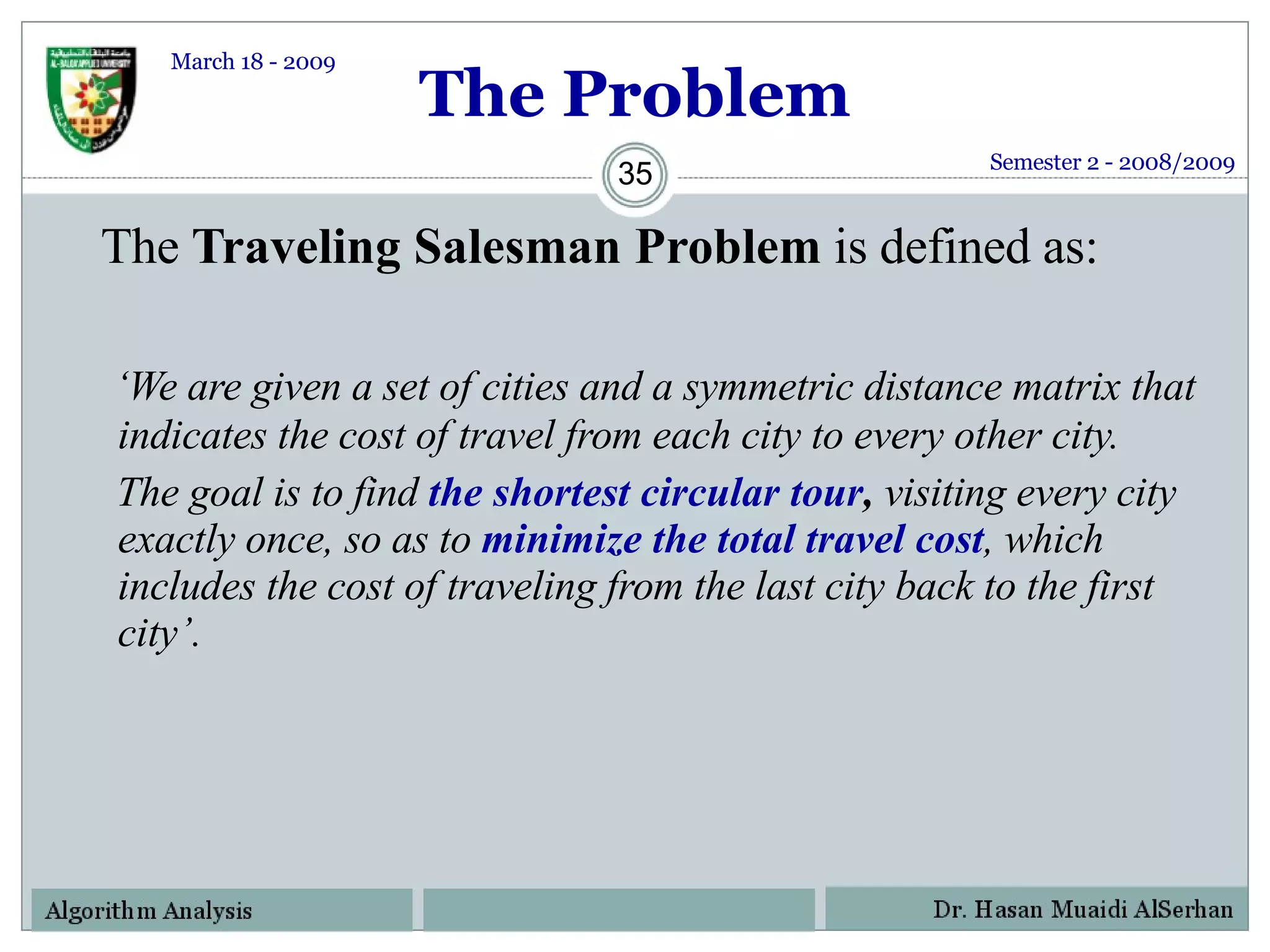

![Fitness Function (contd.) So, for a chromosome [4 1 3 2 5 6], the total cost of travel, or fitness, will be calculated as shown below. Fitness = 35 + 100 + 60 + 400 + 290 + 150 = 1035 km. Since our objective is to minimize the distance, the shorter the total distance, the fitter the solution.](https://image.slidesharecdn.com/geneticalgorithms-124130142809-phpapp02/75/Genetic-Algorithms-40-2048.jpg)
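The fitness calculation on this slide can be reproduced with a short Python sketch. The distances are transcribed from the six-city cost matrix in the slides; the names `DISTANCE_KM` and `tour_length` are illustrative, and the code assumes the tour is closed (the last city connects back to the first), as in the slide's sum.

```python
# Distances in kilometers, transcribed from the six-city cost matrix.
# Row/column order: Amman, Irbid, Al-Mafraq, Al-Salt, Aqabah, Al-Karak.
DISTANCE_KM = [
    [0, 90, 100, 35, 300, 200],
    [90, 0, 60, 120, 400, 290],
    [100, 60, 0, 70, 480, 225],
    [35, 120, 70, 0, 320, 150],
    [300, 400, 480, 320, 0, 290],
    [200, 290, 225, 150, 290, 0],
]


def tour_length(chromosome):
    """Total length of the closed tour; city numbers are 1-based as in the slides."""
    n = len(chromosome)
    return sum(
        DISTANCE_KM[chromosome[i] - 1][chromosome[(i + 1) % n] - 1]
        for i in range(n)
    )


print(tour_length([4, 1, 3, 2, 5, 6]))  # 1035, matching the slide
print(tour_length([1, 2, 3, 4, 5, 6]))  # 1030
```

Since the objective is to minimize distance, a genetic algorithm built on this sketch would rank the second tour (1030 km) as slightly fitter than the first (1035 km).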