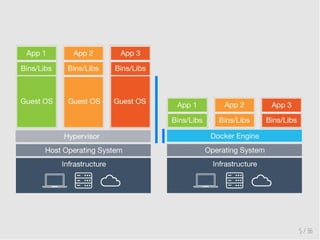

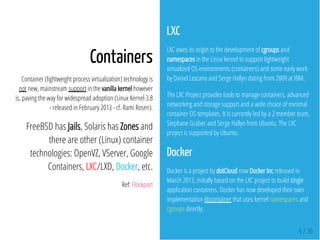

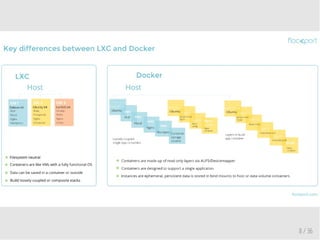

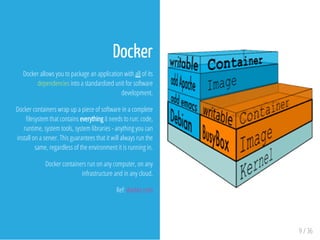

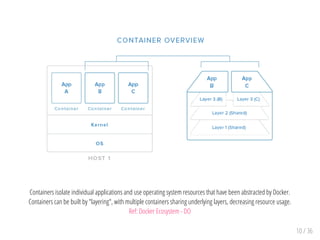

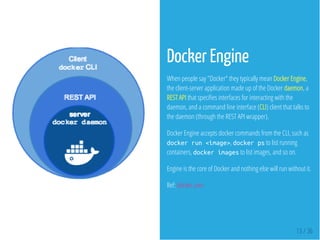

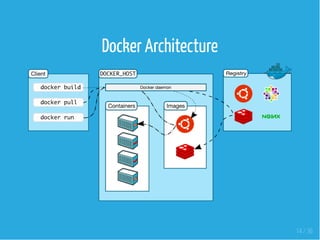

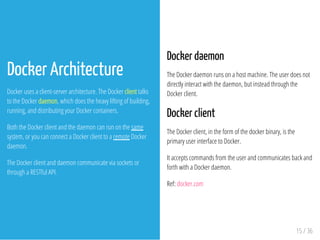

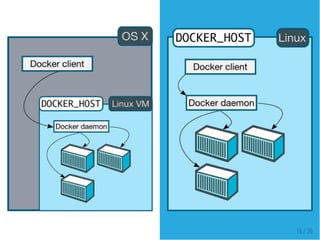

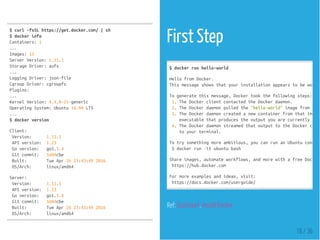

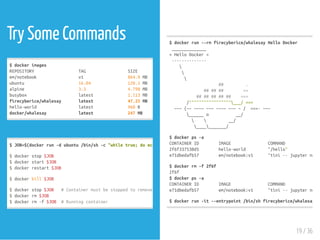

This document discusses containers, virtual machines, and Docker. It provides an overview of containers and how they differ from virtual machines by sharing the host operating system kernel and making more efficient use of system resources. The document then covers Docker specifically, explaining that Docker uses containerization to package applications and dependencies into standardized units called containers. It also provides examples of Docker commands to build custom images and run containers.

![Example Dockerfile](https://image.slidesharecdn.com/docker-basics-160502003155/85/Docker-Basics-24-320.jpg)

docker/whalesay

    FROM ubuntu:14.04

    RUN apt-get update \
      && apt-get install -y cowsay --no-install-recommends \
      && rm -rf /var/lib/apt/lists/* \
      && mv /usr/share/cowsay/cows/default.cow /usr/share/cowsay/cows/orig-default.cow

    # "cowsay" installs to /usr/games
    ENV PATH $PATH:/usr/games

    COPY docker.cow /usr/share/cowsay/cows/
    RUN ln -sv /usr/share/cowsay/cows/docker.cow /usr/share/cowsay/cows/default.cow

    CMD ["cowsay"]

firecyberice/whalesay

    FROM alpine:3.2

    RUN apk update \
      && apk add git perl \
      && cd /tmp/ \
      && git clone https://github.com/jasonm23/cowsay.git \
      && cd cowsay && ./install.sh /usr/local \
      && cd .. \
      && rm -rf cowsay \
      && apk del git

    ENV PATH $PATH

    COPY docker.cow /usr/local/share/cows/

    # Move the "default.cow" out of the way so we can overwrite it
    RUN \
      mv /usr/local/share/cows/default.cow /usr/local/share/cows \
      && ln -sv /usr/local/share/cows/docker.cow /usr/local/shar

    ENTRYPOINT ["cowsay"]

(The two lines of the final `RUN` instruction are cut off at the right edge of the slide; the paths are left as they appear.)
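The two Dockerfiles above end differently: docker/whalesay uses `CMD ["cowsay"]`, while firecyberice/whalesay uses `ENTRYPOINT ["cowsay"]`. The following is a minimal sketch of how the two instructions combine, not taken from the slides. It assumes a hypothetical image in which `echo` stands in for `cowsay`, so nothing has to be installed; the tag `test/echo:sketch` used below is also invented for illustration.

```dockerfile
# Hypothetical image for illustration; "echo" stands in for "cowsay"
FROM ubuntu:14.04

# ENTRYPOINT fixes the executable, as in firecyberice/whalesay
ENTRYPOINT ["echo"]

# CMD supplies default arguments; anything passed after the image name
# on "docker run" replaces these defaults
CMD ["Hello from a container"]
```

After `docker build -t test/echo:sketch .`, running `docker run test/echo:sketch` executes `echo` with the default argument, and `docker run test/echo:sketch boo` executes `echo boo`. With only `CMD ["cowsay"]`, as in docker/whalesay, arguments after the image name replace the whole command, so the caller has to repeat the program name: `docker run docker/whalesay cowsay boo`.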

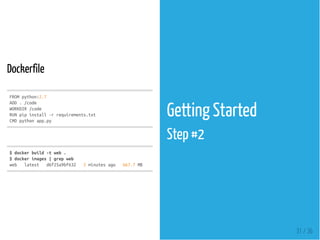

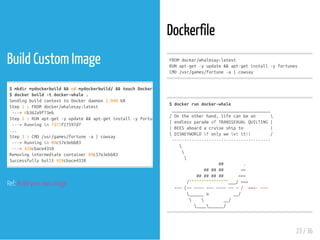

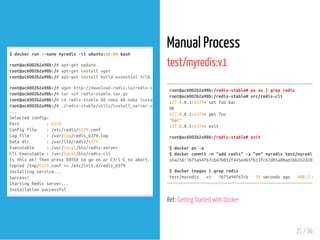

![Dockerfile](https://image.slidesharecdn.com/docker-basics-160502003155/85/Docker-Basics-26-320.jpg)

test/myredis:df

    FROM ubuntu:16.04
    RUN apt-get update
    RUN apt-get install -y wget
    RUN apt-get install -y build-essential tcl8.5
    RUN wget http://download.redis.io/redis-stable.tar.gz
    RUN tar xzf redis-stable.tar.gz
    RUN cd redis-stable && make && make install
    RUN ./redis-stable/utils/install_server.sh
    EXPOSE 6379
    ENTRYPOINT ["redis-server"]

Building the image, running it, and testing it from the host:

    $ docker build -t test/myredis:df .
    $ docker images | grep redis
    test/myredis   df   9a450ae418d8   About a minute ago   408.6
    test/myredis   v1   9b75a94f67cb   13 minutes ago       408.3
    $ docker run -d -p 6379:6379 test/myredis:df
    1240a12b56e4a87dfe89e4ca4400eb1cafde802ac0187a54776f9ea54bb7f74f
    $ docker ps
    CONTAINER ID   IMAGE             COMMAND          CREATED   STATUS   PORTS
    1240a12b56e4   test/myredis:df   "redis-server"
    $ sudo apt-get install redis-tools
    $ redis-cli
    127.0.0.1:6379> set bat man
    OK
    127.0.0.1:6379> get bat
    "man"
    127.0.0.1:6379> quit
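The Dockerfile above uses one `RUN` per step, and every `RUN` adds an image layer. A standalone `apt-get update` layer can also be served from the build cache and go stale. The following is a hedged sketch of a consolidated variant, not the author's file. It makes several assumptions: `install_server.sh` is omitted, because `make install` already places `redis-server` on the `PATH`; `ca-certificates` is added in case the download redirects to HTTPS; and cleanup steps are added to keep layers small. It has not been verified against current Redis releases, and newer releases may refuse connections arriving through the published port unless protected mode is configured.

```dockerfile
# Sketch only: same base image and Redis source URL as the slide
FROM ubuntu:16.04

# Update, install, and clean up in a single layer so the package
# index is never reused from a stale cached layer
RUN apt-get update \
 && apt-get install -y --no-install-recommends \
      wget ca-certificates build-essential tcl8.5 \
 && rm -rf /var/lib/apt/lists/*

# Download, build, and install Redis in a single layer,
# then remove the source tree and the tarball
RUN wget http://download.redis.io/redis-stable.tar.gz \
 && tar xzf redis-stable.tar.gz \
 && make -C redis-stable \
 && make -C redis-stable install \
 && rm -rf redis-stable redis-stable.tar.gz

EXPOSE 6379
ENTRYPOINT ["redis-server"]
```

It builds and runs with the same commands as on the slide, for example `docker build -t test/myredis:sketch .` (the tag is hypothetical). Comparing the sizes reported by `docker images` for the two tags shows how much the cleanup steps save.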