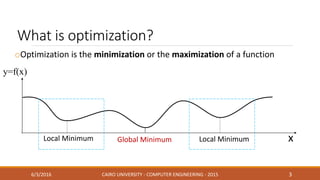

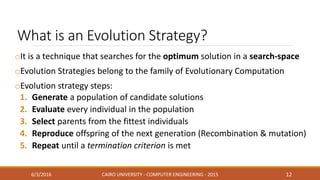

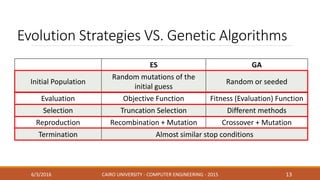

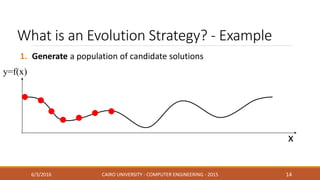

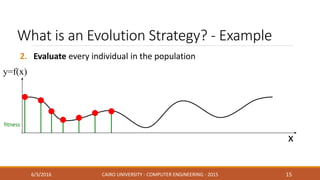

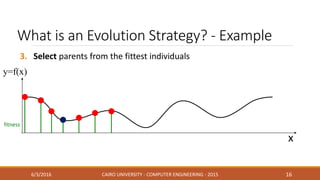

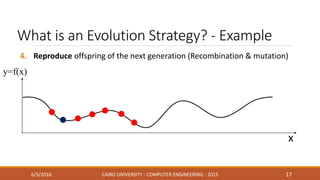

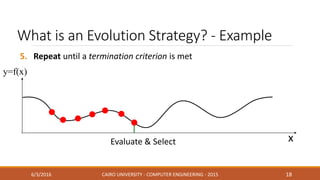

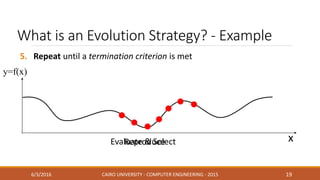

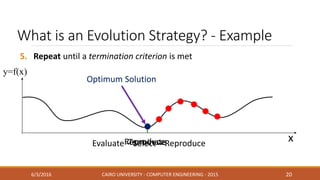

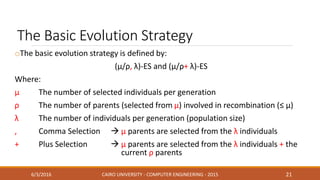

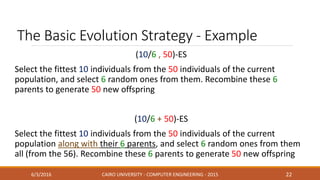

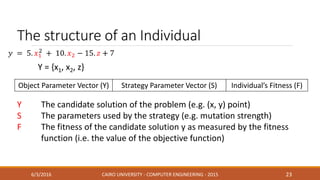

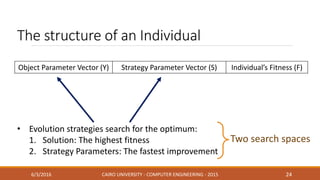

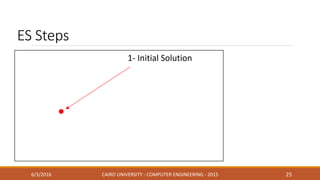

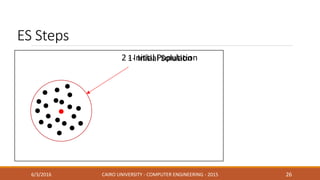

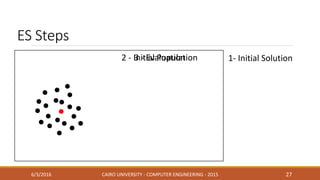

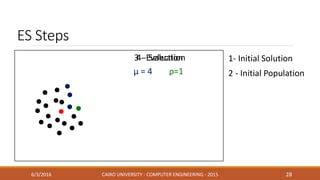

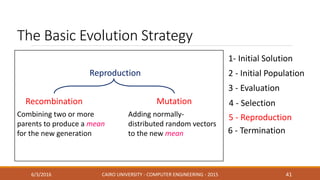

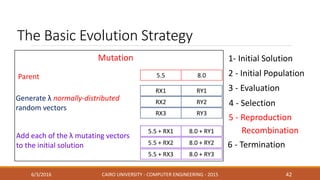

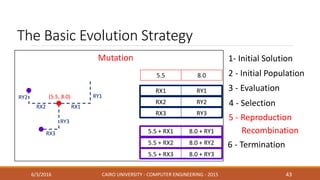

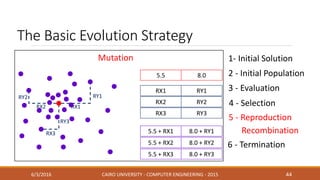

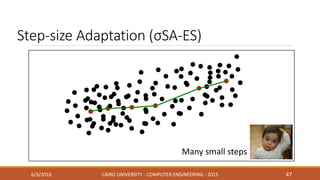

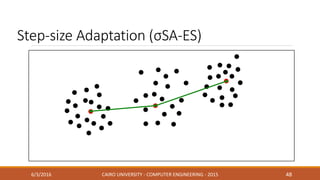

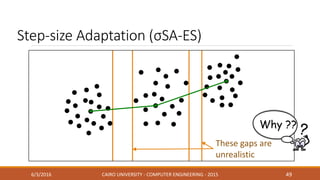

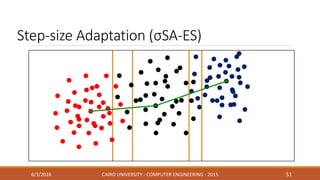

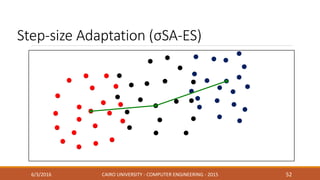

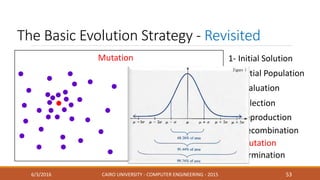

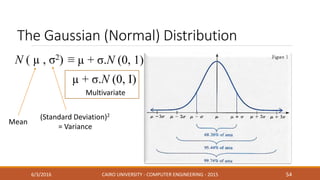

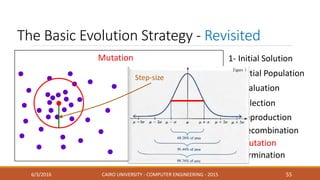

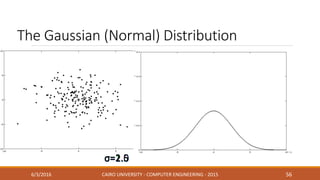

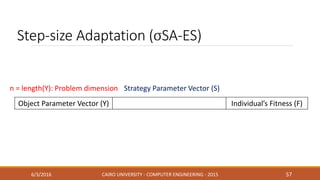

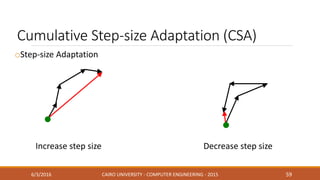

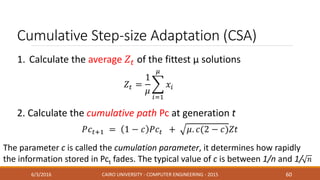

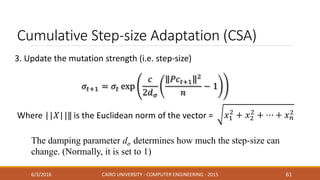

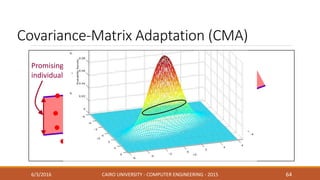

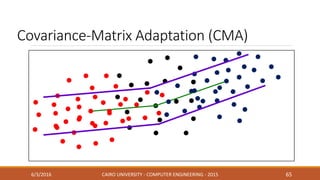

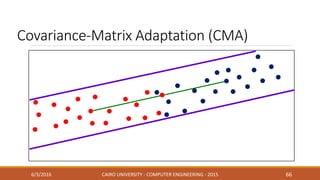

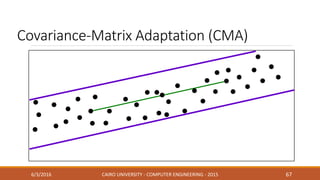

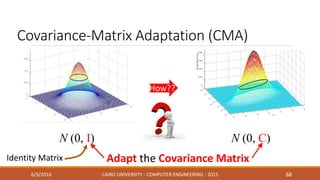

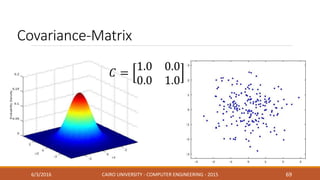

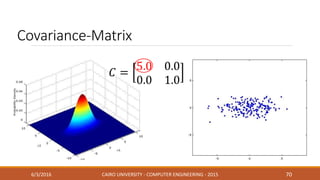

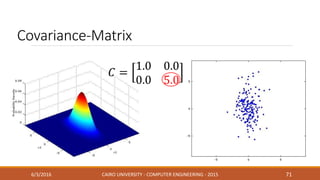

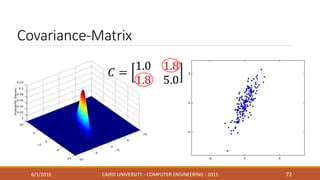

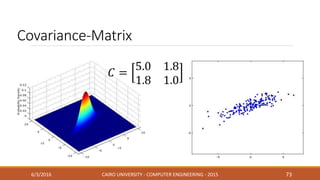

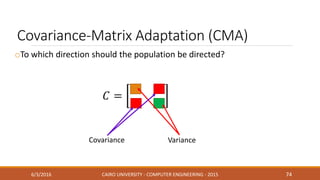

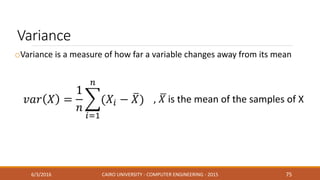

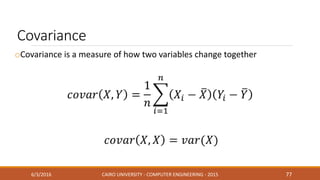

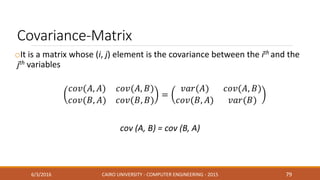

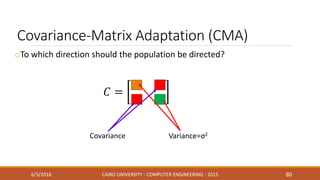

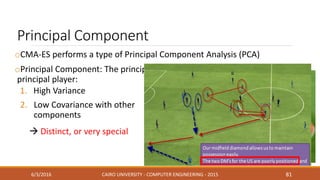

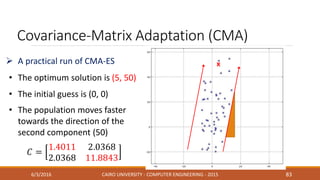

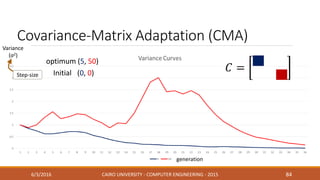

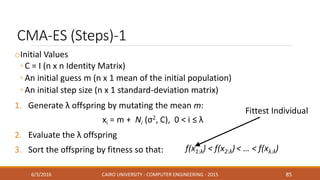

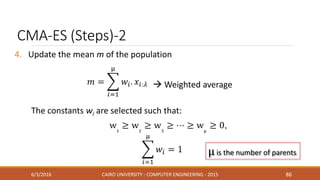

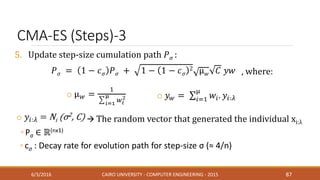

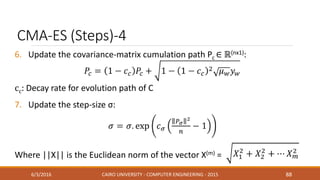

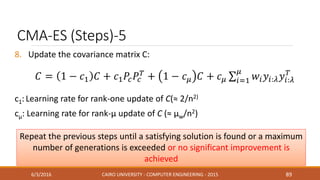

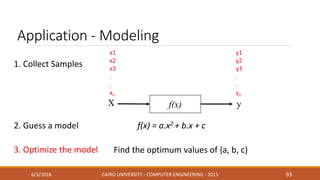

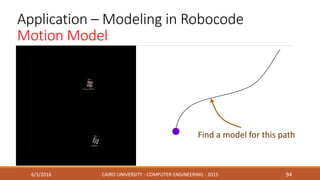

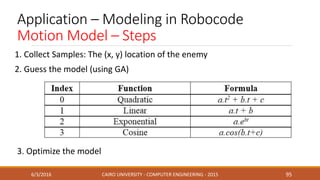

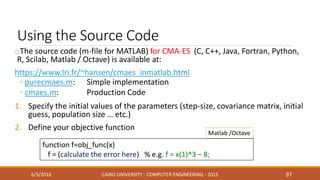

This document discusses the covariance matrix adaptation evolution strategy (CMA-ES), a stochastic, derivative-free method for numerical optimization. It begins with an introduction to optimization and to evolution strategies. CMA-ES adapts the covariance matrix of the multivariate normal distribution from which new candidate solutions are sampled. This lets the search distribution learn the shape of the objective function's landscape, for example by stretching along directions in which the objective improves slowly. The document covers step-size adaptation, cumulative step-size adaptation, and covariance matrix adaptation, and includes examples.
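To make the three mechanisms concrete, here is a minimal sketch of one CMA-ES loop in pure Python, so it needs no third-party packages. It combines weighted recombination of the best samples, cumulative step-size adaptation through an evolution path, and a rank-one plus rank-mu covariance update. The strategy parameters mirror the commonly published defaults (for example, in Hansen's CMA-ES tutorial). The function names `cma_es` and `cholesky`, the `ftarget` stopping rule, and the test functions are illustrative assumptions, not the method shown in the slides. One deliberate simplification: the reference algorithm whitens the mean step with C^{-1/2} from an eigendecomposition, whereas this sketch reuses the standard-normal samples z (that is, the inverse Cholesky factor). This is cheaper but not identical.

```python
import math
import random


def cholesky(C):
    """Return lower-triangular L with C = L L^T (C assumed symmetric positive definite)."""
    n = len(C)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][i] = math.sqrt(max(C[i][i] - s, 1e-300))  # guard against round-off
            else:
                L[i][j] = (C[i][j] - s) / L[j][j]
    return L


def cma_es(f, x0, sigma0, max_gens=1000, ftarget=1e-12, seed=0):
    """Minimal (mu/mu_w, lambda)-CMA-ES sketch that minimizes f; returns (best_x, best_f)."""
    rng = random.Random(seed)
    n = len(x0)
    # Default strategy parameters.
    lam = 4 + int(3 * math.log(n))                  # population size
    mu = lam // 2                                   # number of selected parents
    raw = [math.log(mu + 0.5) - math.log(i + 1) for i in range(mu)]
    w = [r / sum(raw) for r in raw]                 # positive recombination weights, sum to 1
    mueff = 1.0 / sum(wi * wi for wi in w)          # variance-effective selection mass
    cc = (4 + mueff / n) / (n + 4 + 2 * mueff / n)  # learning rate of the rank-one path
    cs = (mueff + 2) / (n + mueff + 5)              # learning rate of the step-size path
    c1 = 2 / ((n + 1.3) ** 2 + mueff)               # rank-one update weight
    cmu = min(1 - c1, 2 * (mueff - 2 + 1 / mueff) / ((n + 2) ** 2 + mueff))
    damps = 1 + 2 * max(0.0, math.sqrt((mueff - 1) / (n + 1)) - 1) + cs
    chi_n = math.sqrt(n) * (1 - 1 / (4 * n) + 1 / (21 * n * n))  # approx. E||N(0, I)||

    m, sigma = list(x0), sigma0
    C = [[float(i == j) for j in range(n)] for i in range(n)]
    p_sigma, p_c = [0.0] * n, [0.0] * n
    best_x, best_f = list(x0), f(x0)

    for gen in range(max_gens):
        A = cholesky(C)
        # Sample lam candidates x = m + sigma * A z with z ~ N(0, I).
        pop = []
        for _ in range(lam):
            z = [rng.gauss(0.0, 1.0) for _ in range(n)]
            y = [sum(A[i][k] * z[k] for k in range(i + 1)) for i in range(n)]
            x = [m[i] + sigma * y[i] for i in range(n)]
            pop.append((f(x), x, y, z))
        pop.sort(key=lambda t: t[0])
        if pop[0][0] < best_f:
            best_f, best_x = pop[0][0], pop[0][1]
        if best_f < ftarget:
            break
        sel = pop[:mu]
        # Weighted recombination of the mu best steps moves the mean.
        y_w = [sum(w[k] * sel[k][2][i] for k in range(mu)) for i in range(n)]
        z_w = [sum(w[k] * sel[k][3][i] for k in range(mu)) for i in range(n)]
        m = [m[i] + sigma * y_w[i] for i in range(n)]
        # Cumulative step-size adaptation: evolution path in whitened coordinates.
        # (Simplification: z_w = A^{-1} y_w stands in for C^{-1/2} y_w.)
        a = math.sqrt(cs * (2 - cs) * mueff)
        p_sigma = [(1 - cs) * p_sigma[i] + a * z_w[i] for i in range(n)]
        ps_norm = math.sqrt(sum(v * v for v in p_sigma))
        h_sig = ps_norm / math.sqrt(1 - (1 - cs) ** (2 * (gen + 1))) / chi_n < 1.4 + 2 / (n + 1)
        # Evolution path for the rank-one covariance update (stalled if h_sig is False).
        b = math.sqrt(cc * (2 - cc) * mueff) if h_sig else 0.0
        p_c = [(1 - cc) * p_c[i] + b * y_w[i] for i in range(n)]
        # Covariance matrix adaptation: rank-one plus rank-mu update.
        delta = 0.0 if h_sig else cc * (2 - cc)
        for i in range(n):
            for j in range(n):
                rank_mu = sum(w[k] * sel[k][2][i] * sel[k][2][j] for k in range(mu))
                C[i][j] = ((1 - c1 - cmu) * C[i][j]
                           + c1 * (p_c[i] * p_c[j] + delta * C[i][j])
                           + cmu * rank_mu)
        # Step-size update: grow sigma if the path is longer than expected, else shrink it.
        sigma *= math.exp((cs / damps) * (ps_norm / chi_n - 1))
    return best_x, best_f
```

For example, `cma_es(lambda v: v[0] ** 2 + 100 * v[1] ** 2, [1.0, 1.0], 0.5)` minimizes an axis-aligned ellipsoid. On such a function, the covariance matrix gradually takes on the elongated shape of the level sets, which is the behavior the covariance adaptation step is meant to produce. The Cholesky factor keeps the code short. A production implementation would typically use an eigendecomposition, updated only every few generations, and add further stopping criteria.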