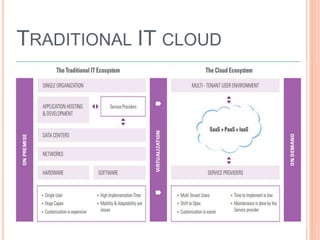

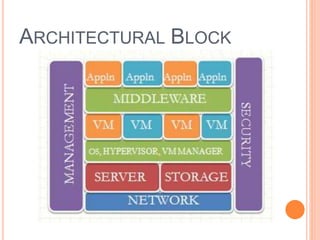

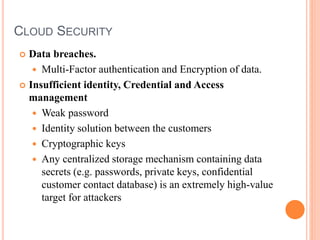

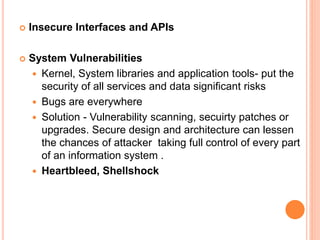

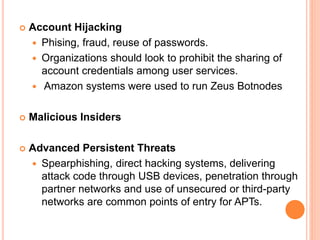

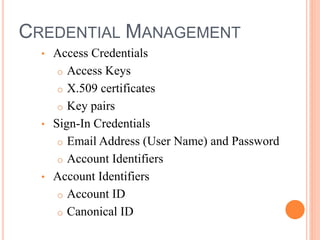

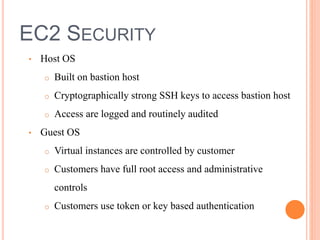

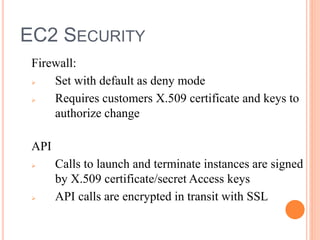

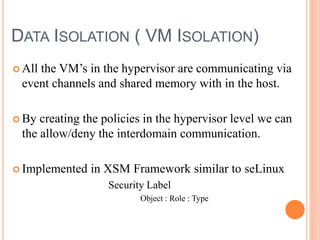

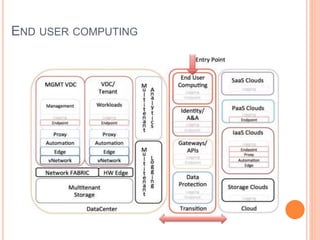

Cloud computing is a model that provides convenient network access to shared pools of configurable computing resources such as storage, networks, servers and applications. Key cloud security threats include data breaches, insecure interfaces, account hijacking, insider threats and data loss. Physical security of data centers is also important and relies on access control, environmental controls and backup power. Network security addresses attacks such as denial of service, port scanning, man-in-the-middle and IP spoofing. Middleware and EC2 security rely on mechanisms such as security groups, firewalls, access keys and digital certificates. Privacy can be improved through policies that give users more control over how their personal data is collected and used.
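
To make the security-group idea mentioned above more concrete, here is a minimal sketch of how an allow-list of inbound rules might be evaluated. It is an illustrative model only, not the AWS API: the `Rule` class, the `is_allowed` function, the `WEB_GROUP` rule set and the addresses used are all hypothetical. The one assumption carried over from how security groups are generally described is that traffic is denied unless some rule explicitly allows it.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Rule:
    """One inbound allow rule (hypothetical model, not the AWS API)."""
    protocol: str   # e.g. "tcp"
    port_from: int  # first port in the allowed range
    port_to: int    # last port in the allowed range (inclusive)
    cidr: str       # permitted source range in CIDR notation


def is_allowed(rules, protocol, port, source_ip):
    """Return True if any rule permits the traffic; deny by default."""
    src = ip_address(source_ip)
    for rule in rules:
        if (rule.protocol == protocol
                and rule.port_from <= port <= rule.port_to
                and src in ip_network(rule.cidr)):
            return True
    return False


# Hypothetical rule set for a web server (documentation-range addresses).
WEB_GROUP = [
    Rule("tcp", 443, 443, "0.0.0.0/0"),     # HTTPS from anywhere
    Rule("tcp", 22, 22, "203.0.113.0/24"),  # SSH from one admin range only
]
```

With this rule set, `is_allowed(WEB_GROUP, "tcp", 22, "198.51.100.7")` is rejected because the source address falls outside the admin range, while the same request from `203.0.113.9` is accepted. Restricting management ports to a narrow source range in this way also reduces what a port scan can discover.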