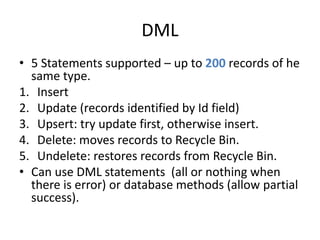

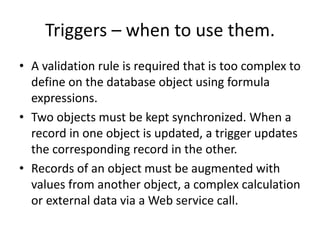

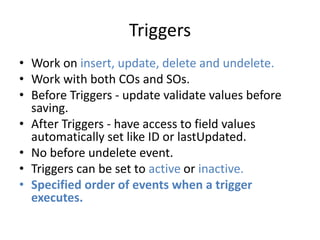

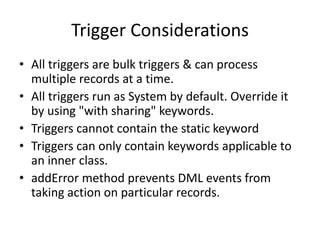

This document provides an overview of Apex and Force.com development. It covers Apex language basics, data types, collections, exceptions, asynchronous execution, database integration, triggers, debugging, limits, and unit testing. Key topics include the similarities between Apex and Java, SOQL, DML statements, polymorphism in Apex, and the requirements to deploy code changes to production.

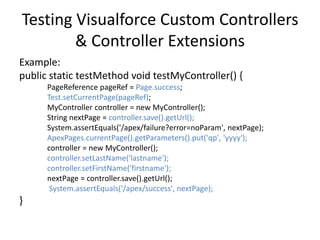

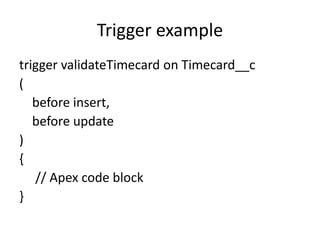

![SOQL For Loop

• Allows it to run when the Project object contains up to

50,000 records for this year without consuming 50,000

records worth of heap space at one time.

Decimal totalHours = 0;

for (Proj__c project : [ SELECT Total_Billable_Hours_Invoiced__c

FROM Proj__c

WHERE Start_Date__c = THIS_YEAR ]) {

totalHours += project.Total_Billable_Hours_Invoiced__c;

}

• Above code is still inefficient & run out of governor limits.

• Change the type of variable in the For loop to a list of

Project records, Force.com provides up to 200 records per

loop iteration. This allows you to modify a whole list of

records in a single operation.](https://image.slidesharecdn.com/chapter5apexbasics-140917112513-phpapp01/85/SFDC-Introduction-to-Apex-21-320.jpg)

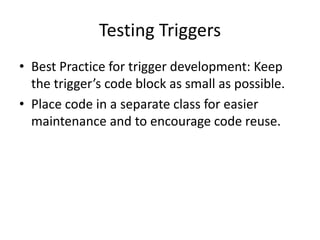

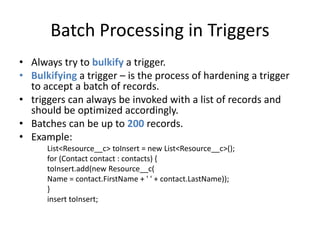

![Implicit Variables in Triggers

• Trigger.new, Trigger.newMap, Trigger.old,

Trigger.oldMap, isInsert, isUpdate, isExecuting, …

• Trigger.newMap and Trigger.oldMap map IDs to

sObjects.

• Trigger.new is a collection. When used as a bind

variable in a SOQL query, Apex transforms the records

into corresponding ids.

Example:

Contact[] cons = [SELECT LastName FROM Contact

WHERE AccountId IN :Trigger.new];](https://image.slidesharecdn.com/chapter5apexbasics-140917112513-phpapp01/85/SFDC-Introduction-to-Apex-26-320.jpg)

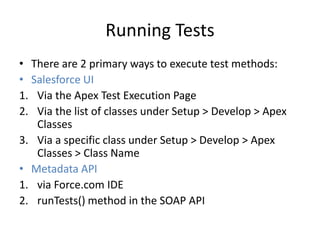

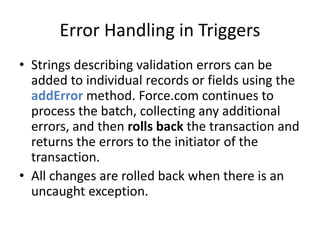

![Debug Log Example

EXECUTION_STARTED

CODE_UNIT_STARTED|[EXTERNAL]execute_anonymous_apex

CODE_UNIT_STARTED|[EXTERNAL]MyTrigger on Account trigger event

BeforeInsert for [new]

CODE_UNIT_FINISHED <-- The trigger ends

CODE_UNIT_FINISHED <-- The executeAnonymous ends

EXECUTION_FINISHED](https://image.slidesharecdn.com/chapter5apexbasics-140917112513-phpapp01/85/SFDC-Introduction-to-Apex-37-320.jpg)

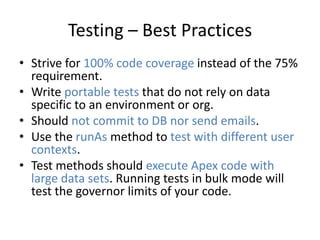

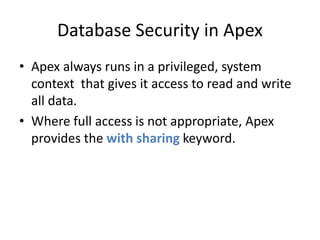

![Debug Log Line

• Log lines are made up of a set of fields, delimited by a pipe (|).

The format is:

• timestamp: consists of the time when the event occurred and a

value between parentheses. The time is in the user's time zone and

in the format HH:mm:ss.SSS.

• event identifier: consists of the specific event that triggered the

debug log being written to, such

as SAVEPOINT_RESET or VALIDATION_RULE, and any additional

information logged with that event, such as the method name or

the line and character number where the code was executed.

• Example:

11:47:46.038 (38450000)|USER_DEBUG|[2]|DEBUG|Hello World!](https://image.slidesharecdn.com/chapter5apexbasics-140917112513-phpapp01/85/SFDC-Introduction-to-Apex-38-320.jpg)