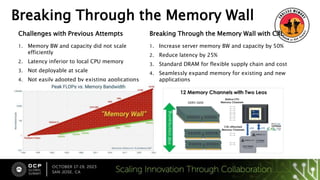

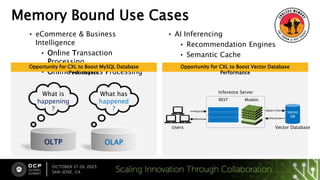

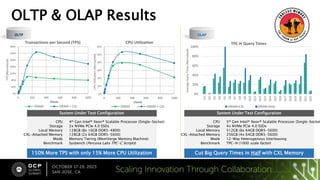

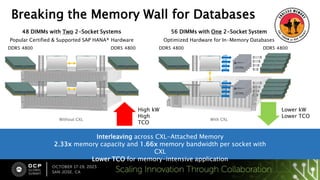

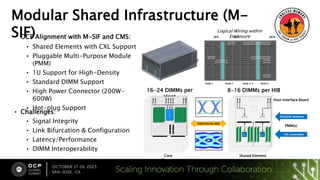

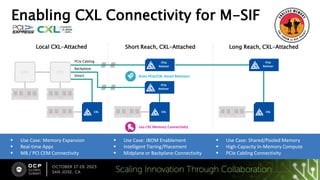

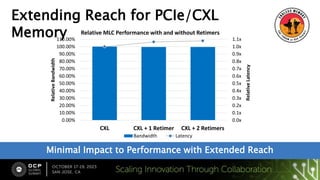

The presentation describes how Compute Express Link (CXL) helps overcome memory bottlenecks by increasing memory bandwidth and capacity. It reviews use cases across several industries, including performance gains for database and analytics applications. It also highlights current collaborations and the benefits of a modular, shared infrastructure for memory expansion and efficient computing.

![Call to Action](https://image.slidesharecdn.com/cxlforumasteralabsfinalpresentation-231025005123-2a50a8f2/85/Breaking-the-Memory-Wall-12-320.jpg)

Call to Action

Visit

- Check out how we smashed through Memory
- [OCP Map and where we are]

Learn More: www.opencompute.org

- wiki/server/DC-MHS/M-SIF Base Spec: 0.5
- wiki/server/CMS: CMM Proposal
- wiki/hardware_management/DC-SCM: 2.0

CXL Resources

- Linux: https://pmem.io/ndctl/collab
- Interop: https://www.asteralabs.com/interop

Ecosystem Alliance Contact

- michael.ocampo@asteralabs.com

See the Demos: Booth A11

Diagram labels

- Ethernet
- Scale memory bandwidth and capacity
- Scale parallel processing of accelerators
- Scale rack connectivity over copper