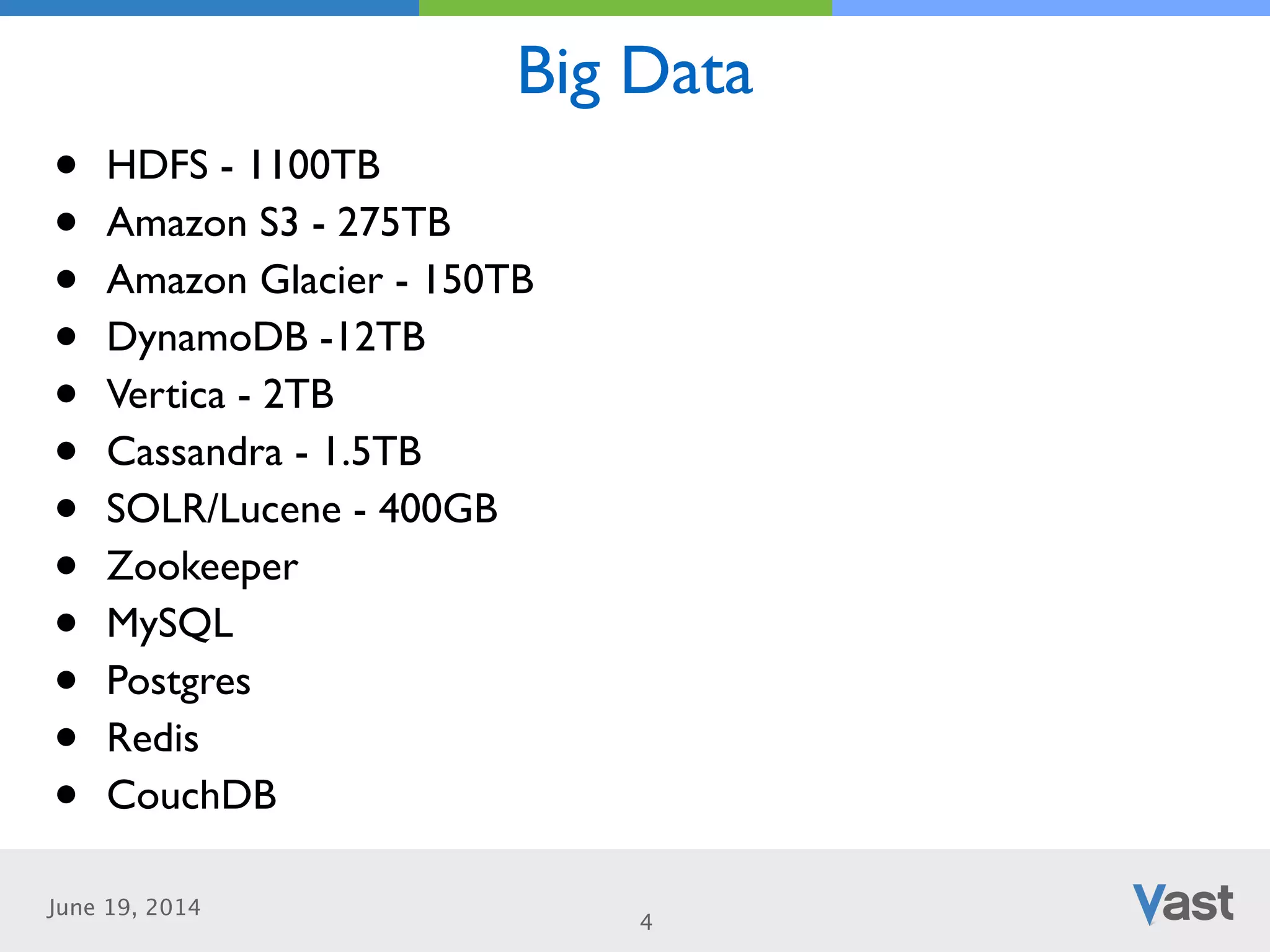

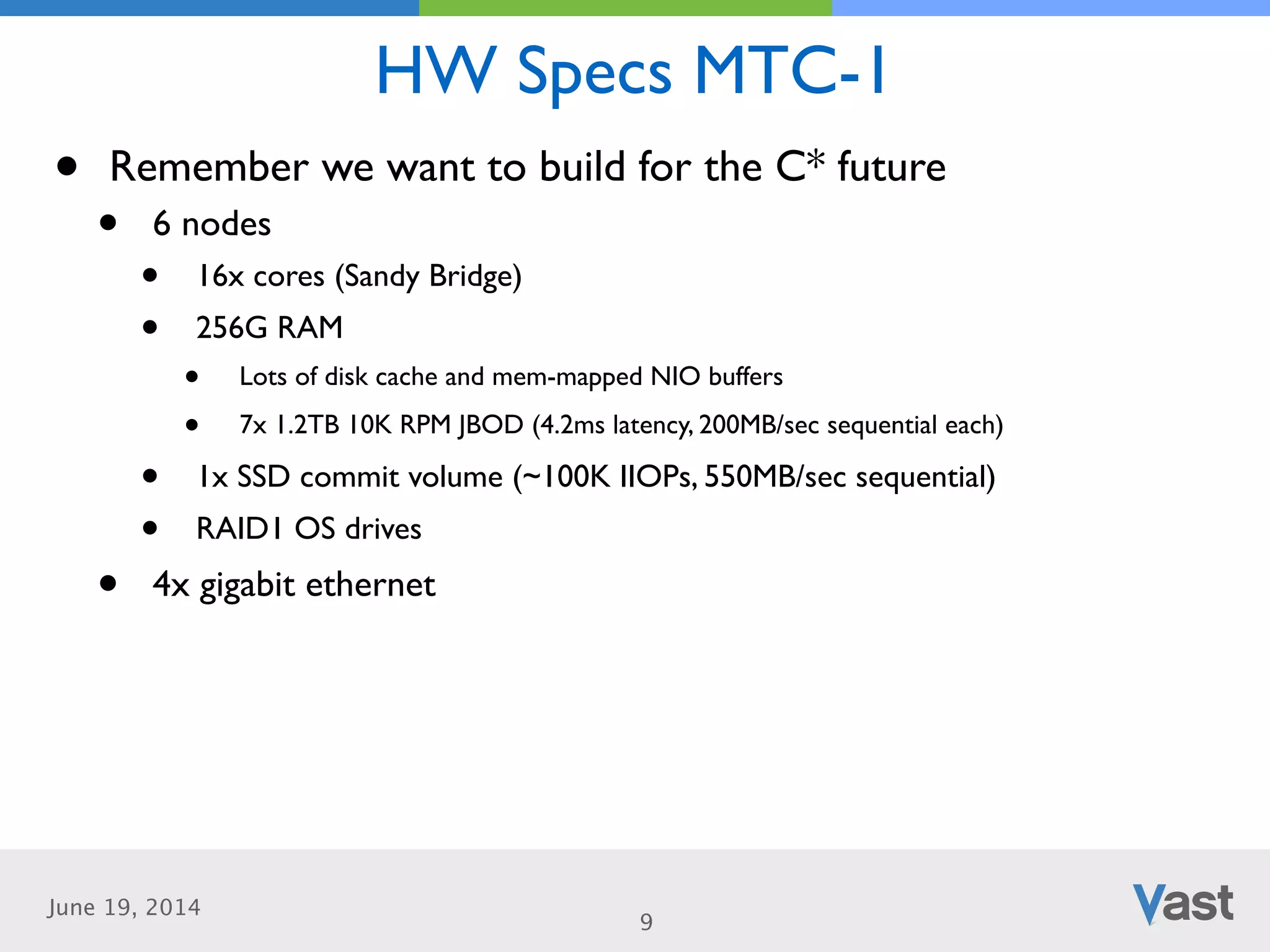

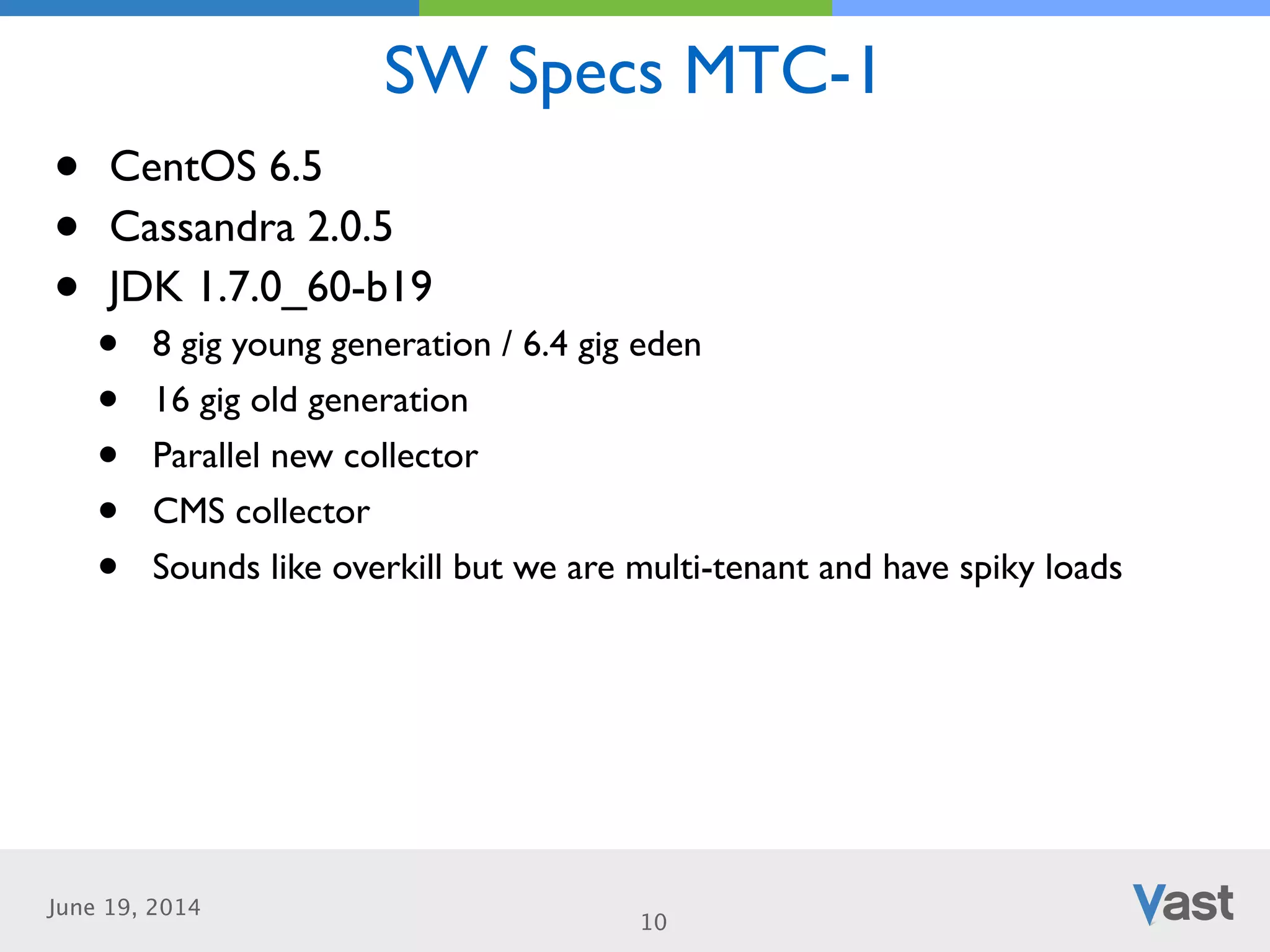

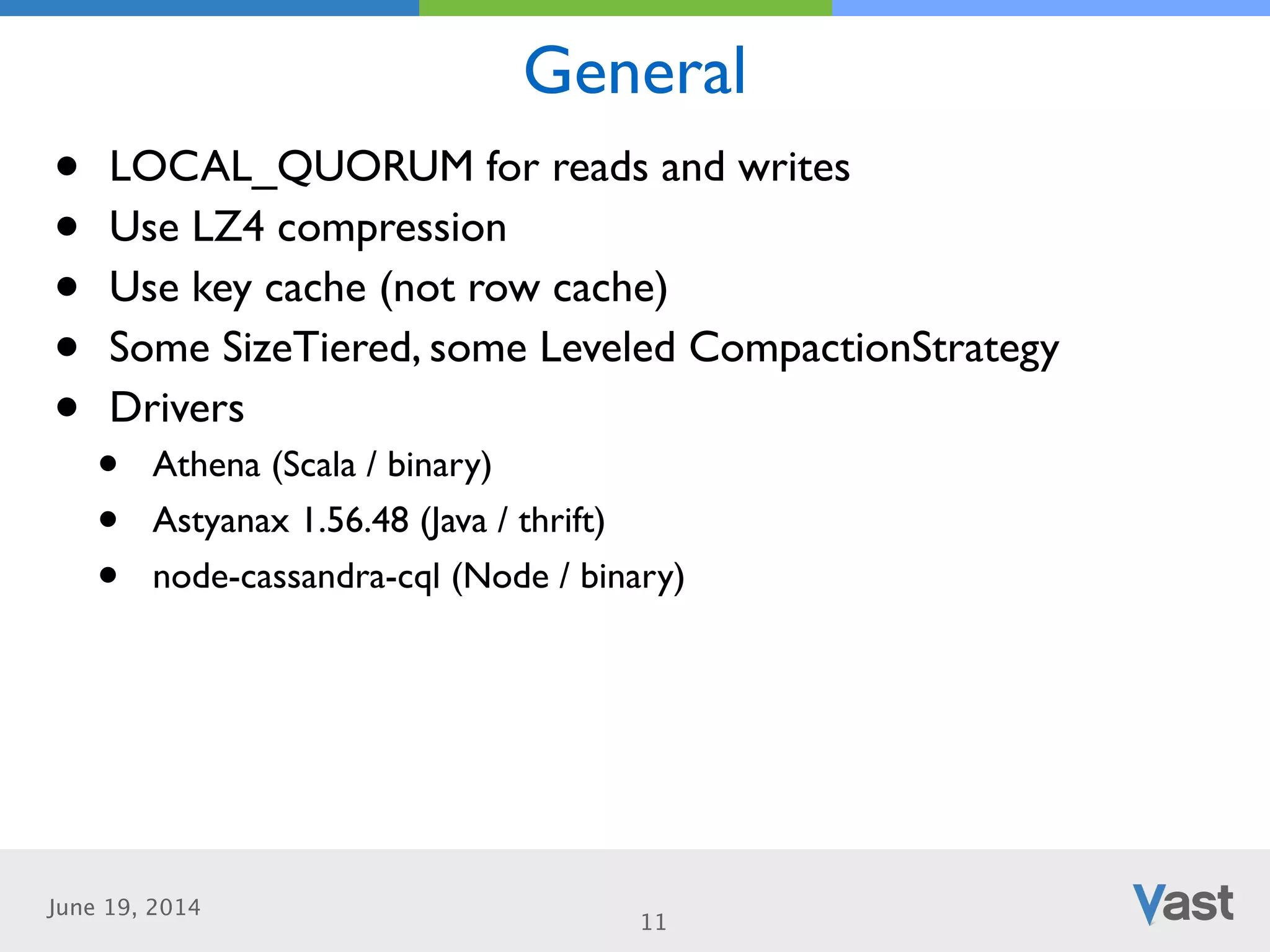

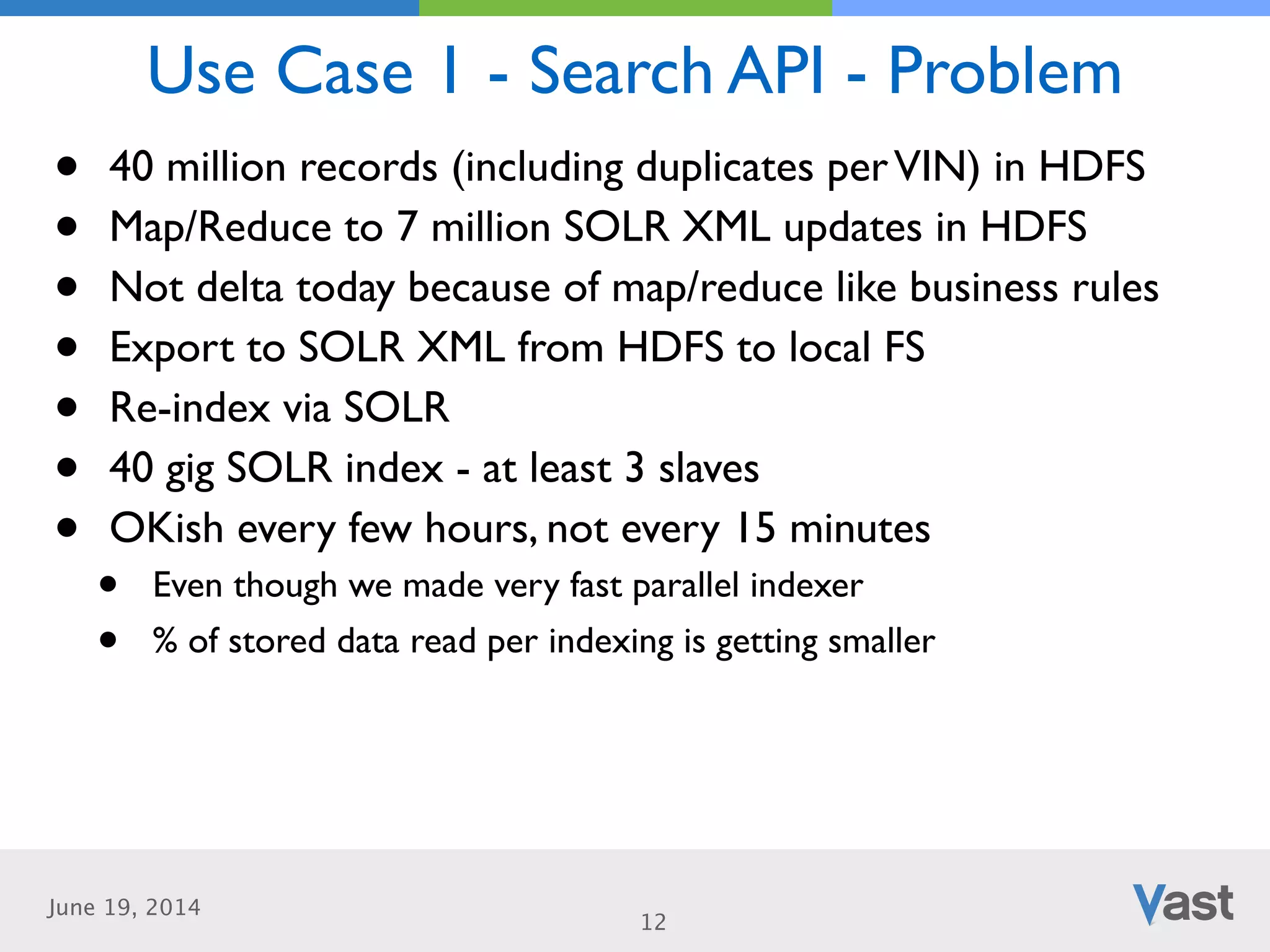

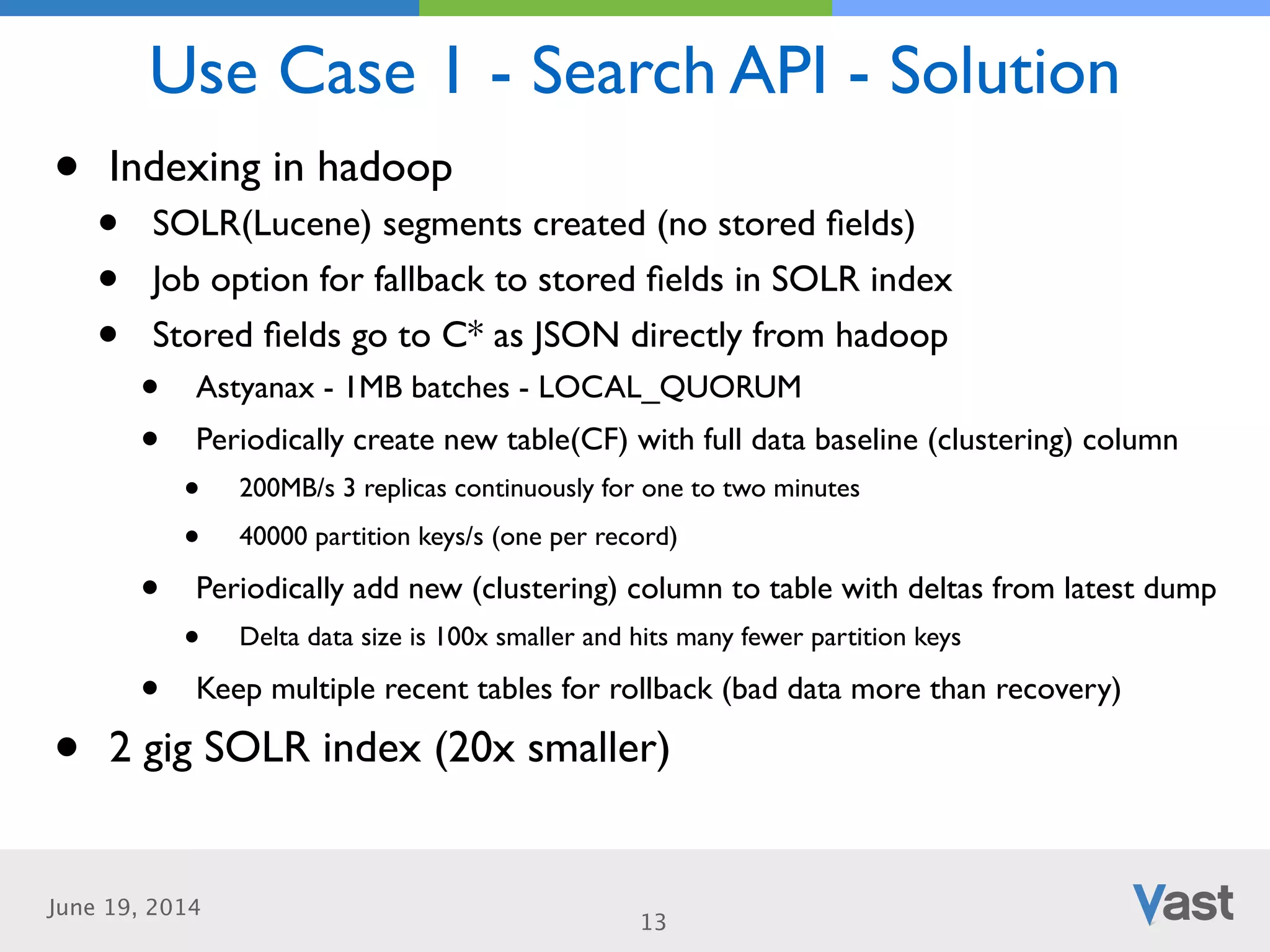

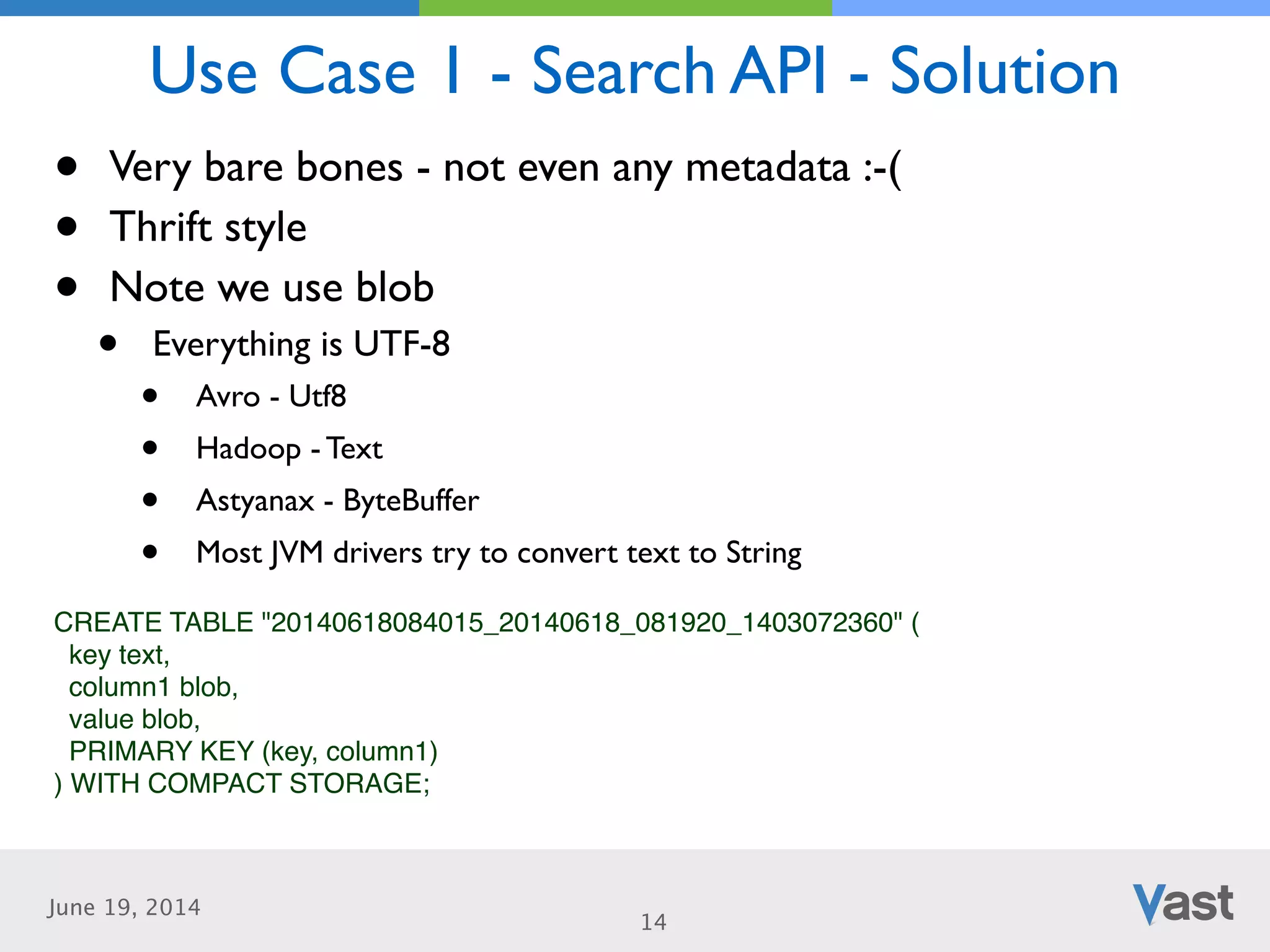

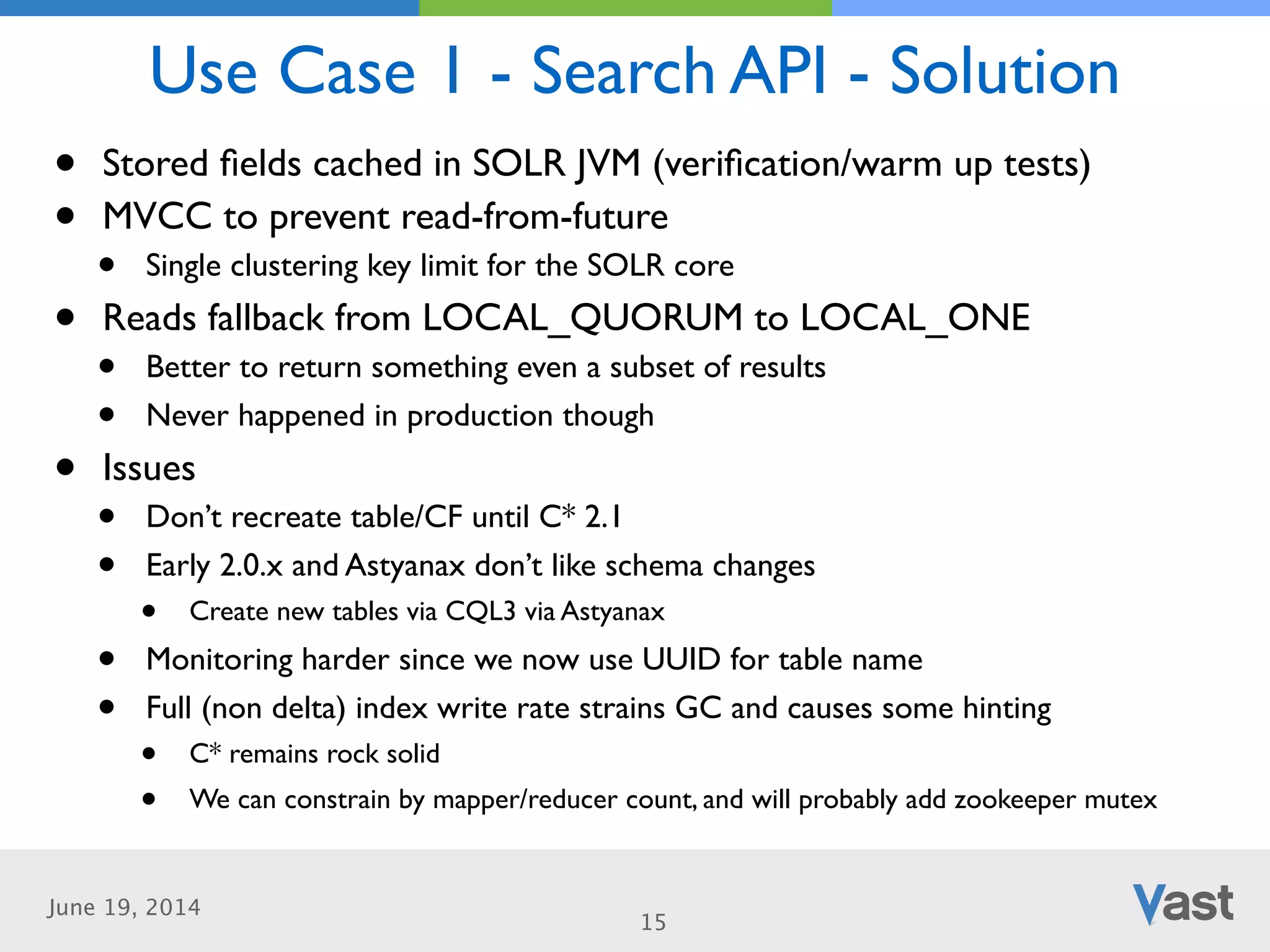

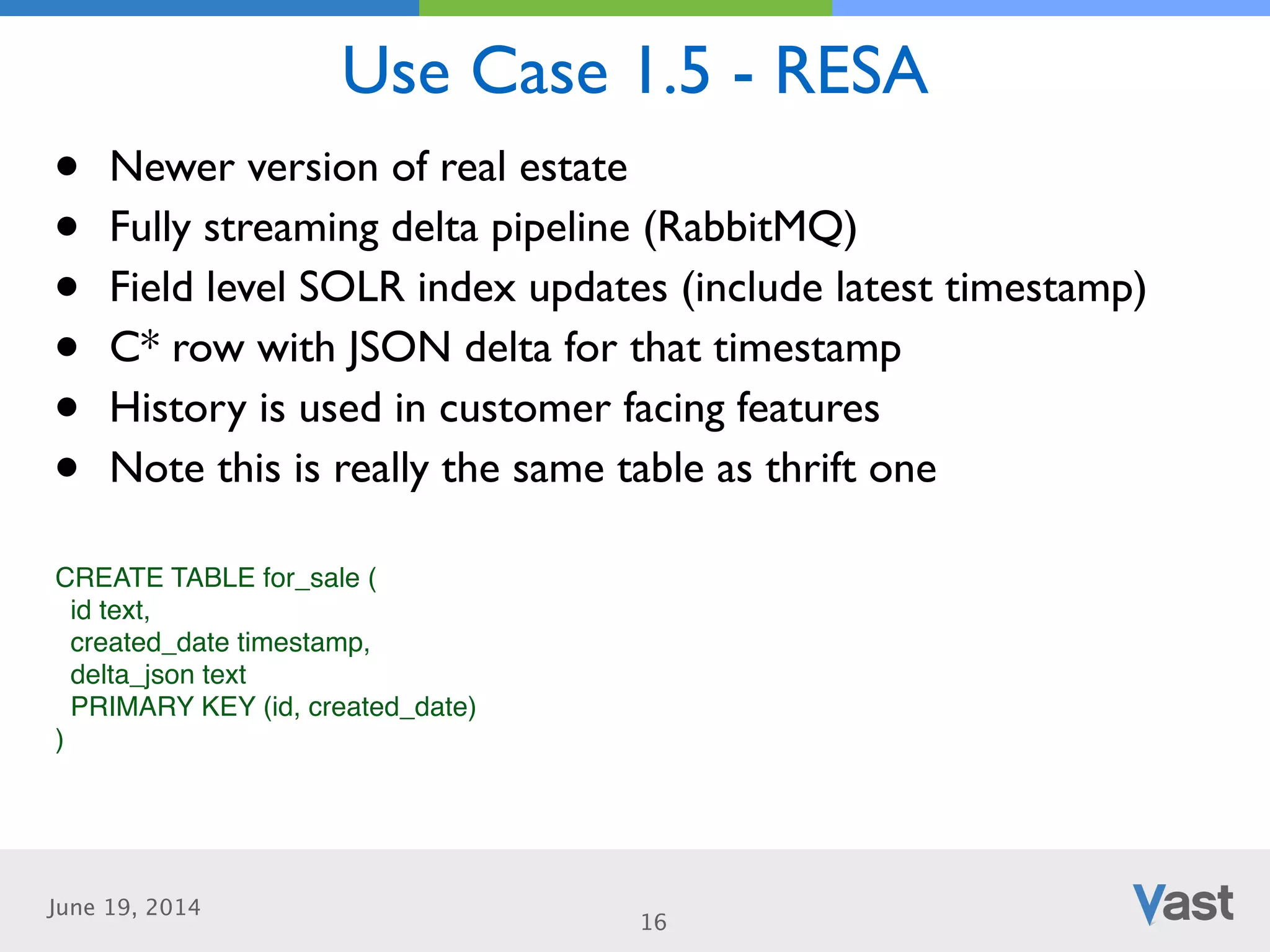

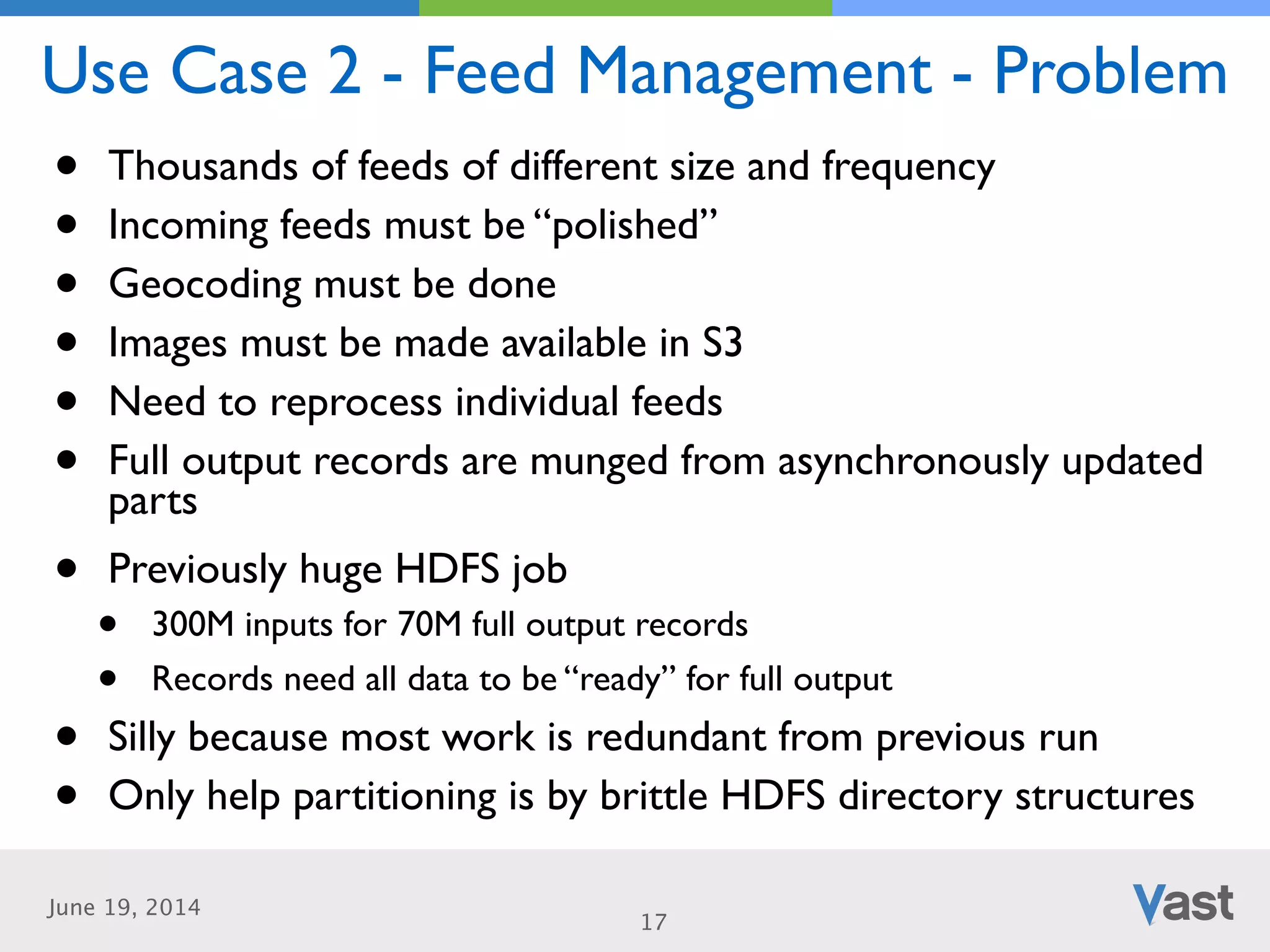

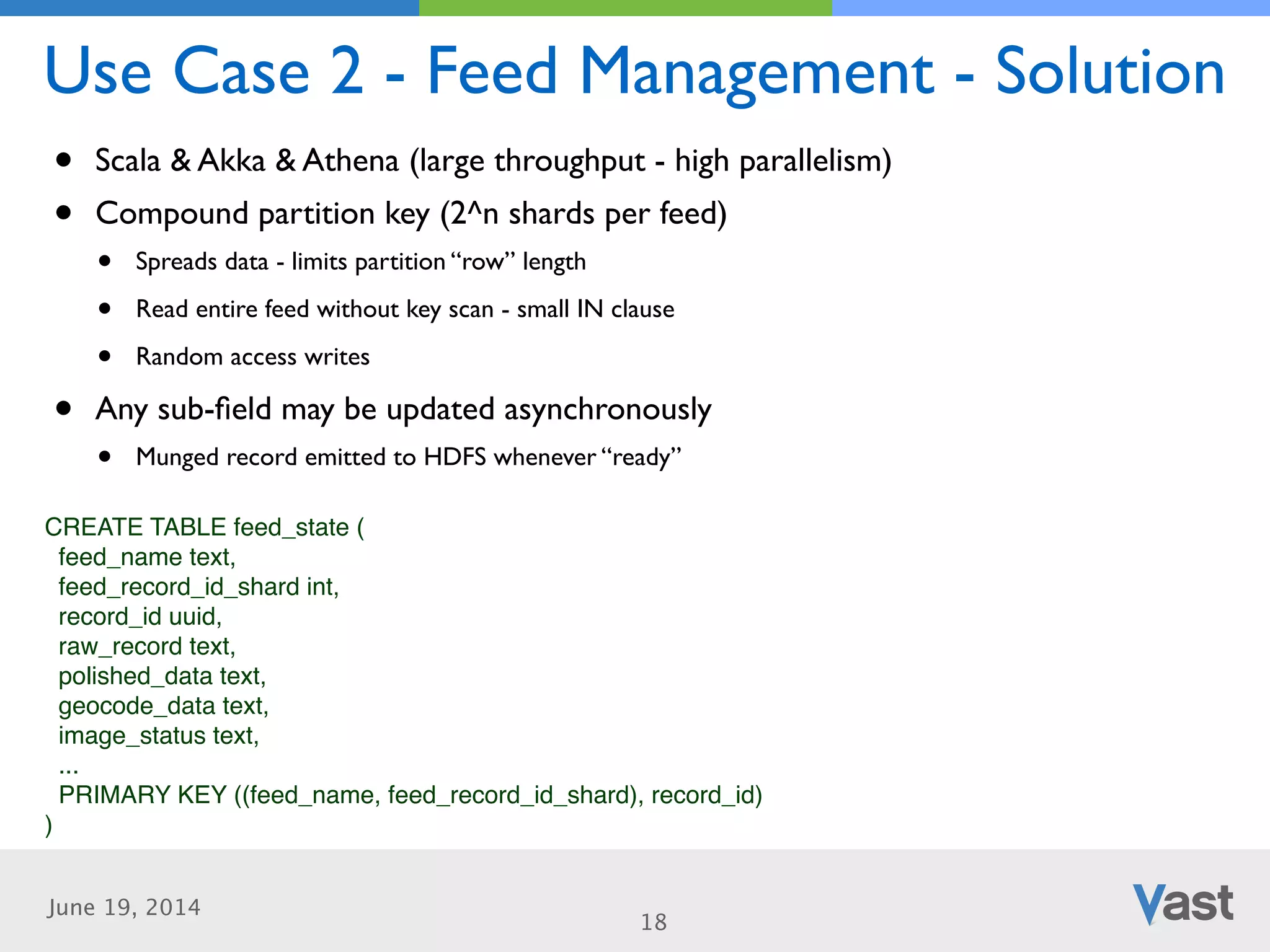

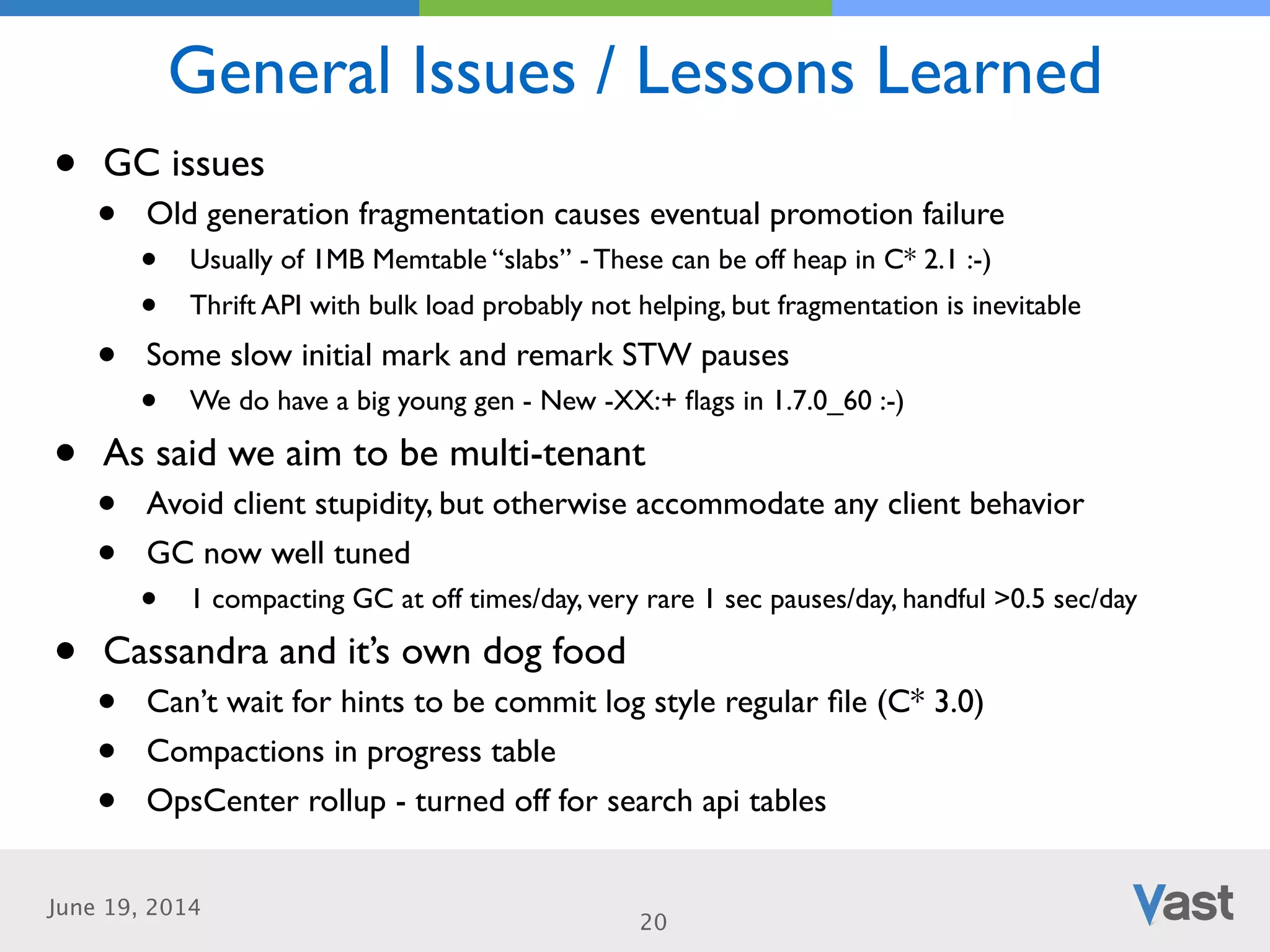

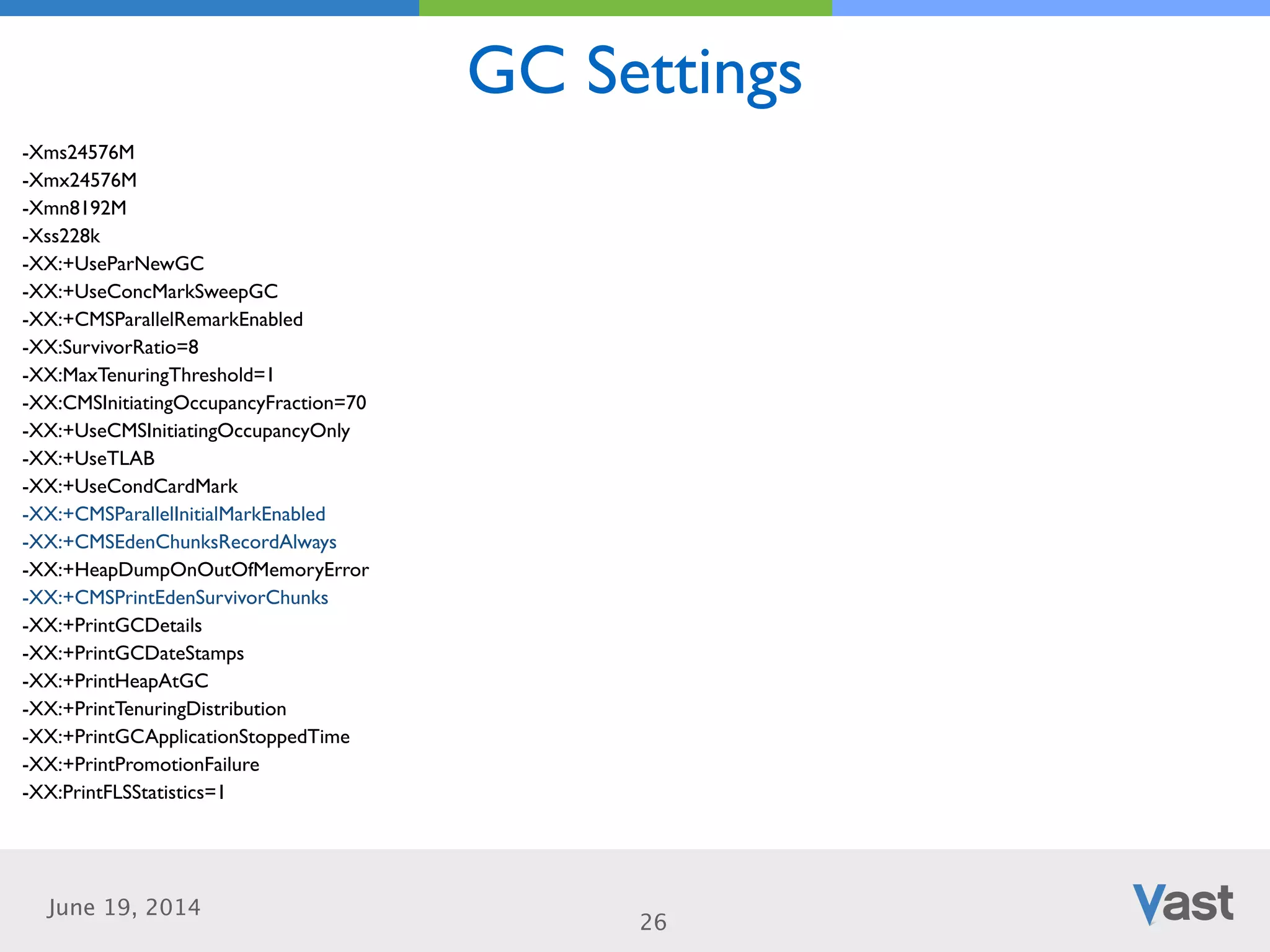

This presentation describes Vast's transition to Cassandra for data handling in its performance-based marketplaces. It outlines Vast's goals of reducing latency and improving data flow, highlights the challenges of managing large datasets in Cassandra and the solutions adopted, and shares lessons from the implementation, including plans for future enhancements. The presentation frames the work as a learning journey and emphasizes adaptability and innovation in big data applications.