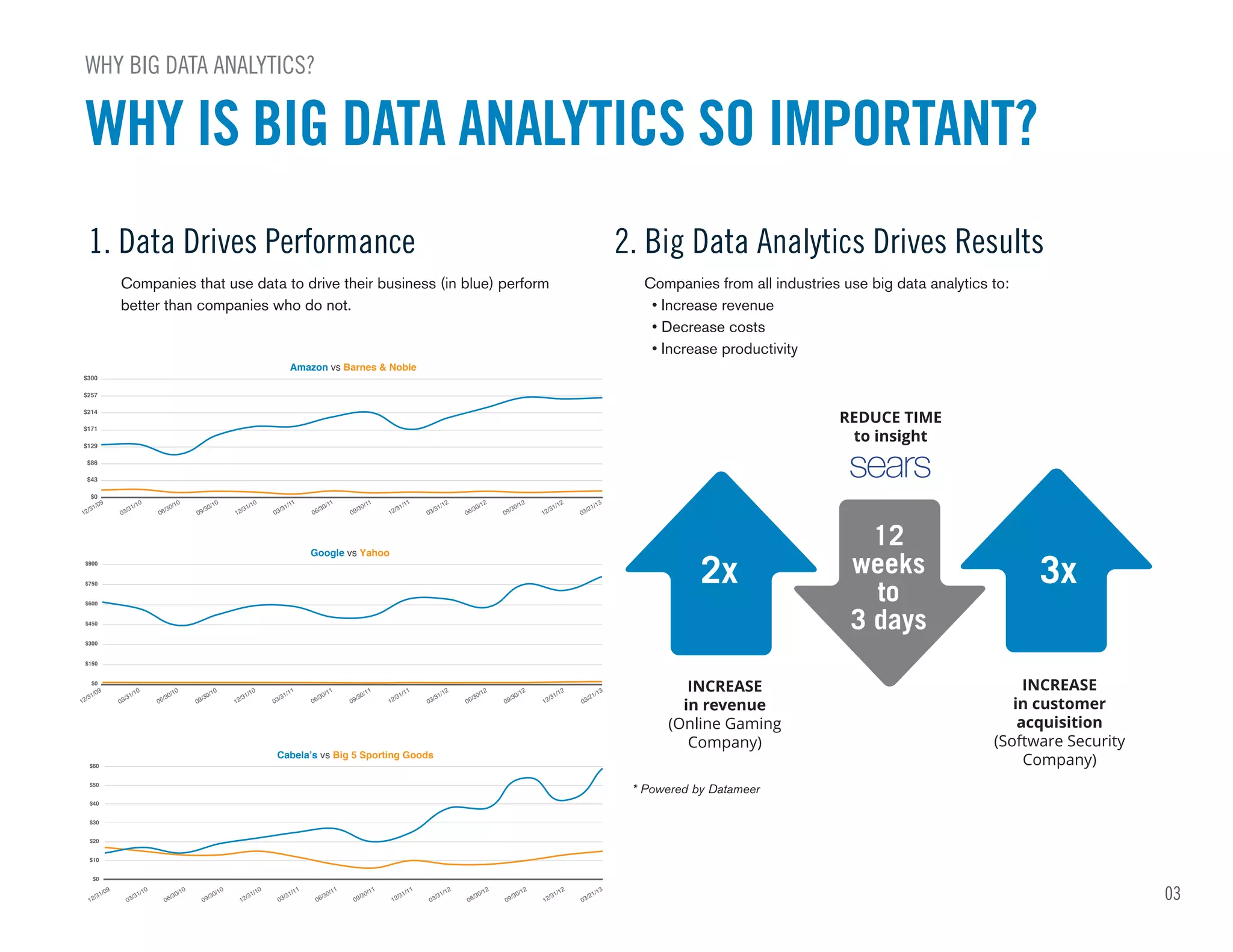

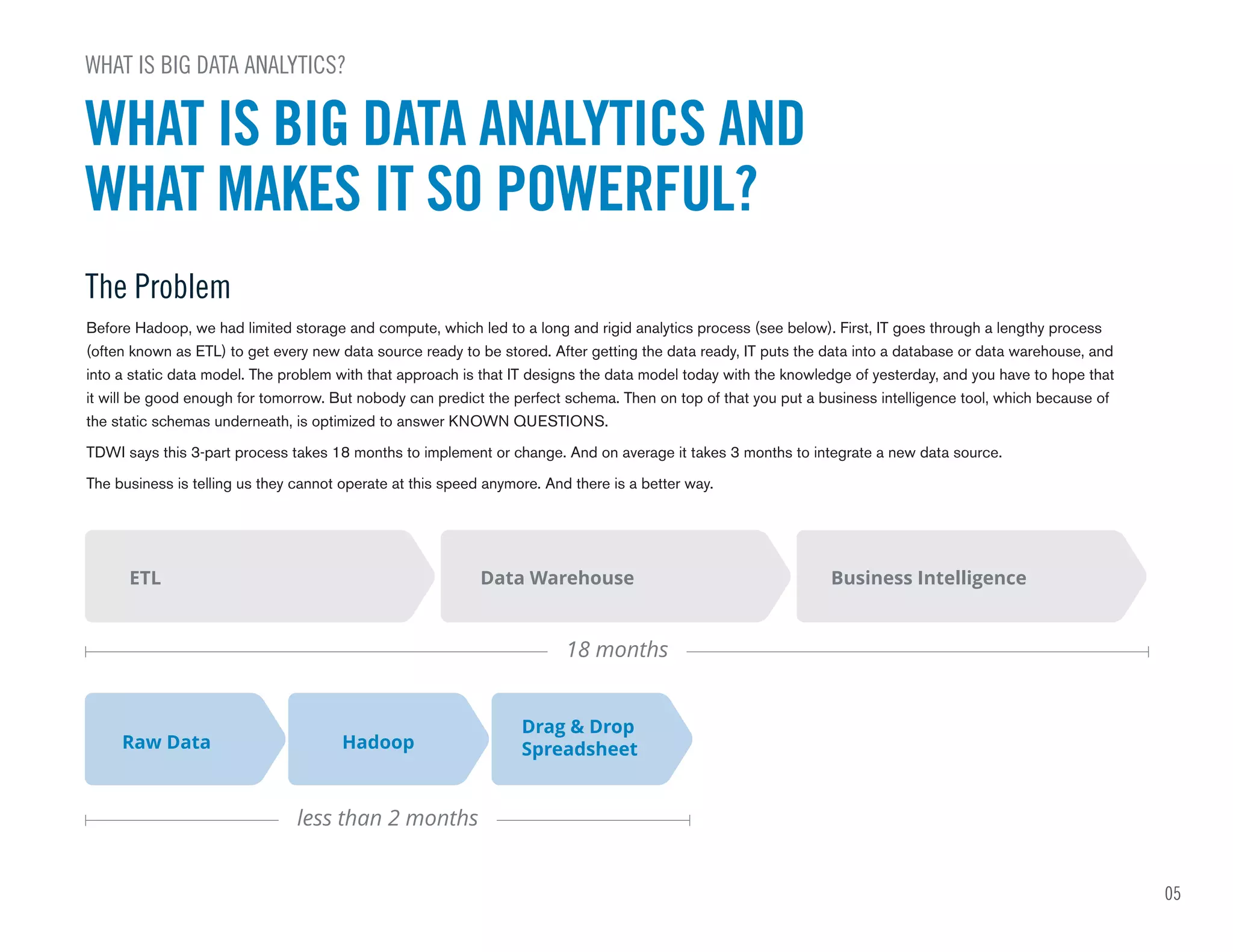

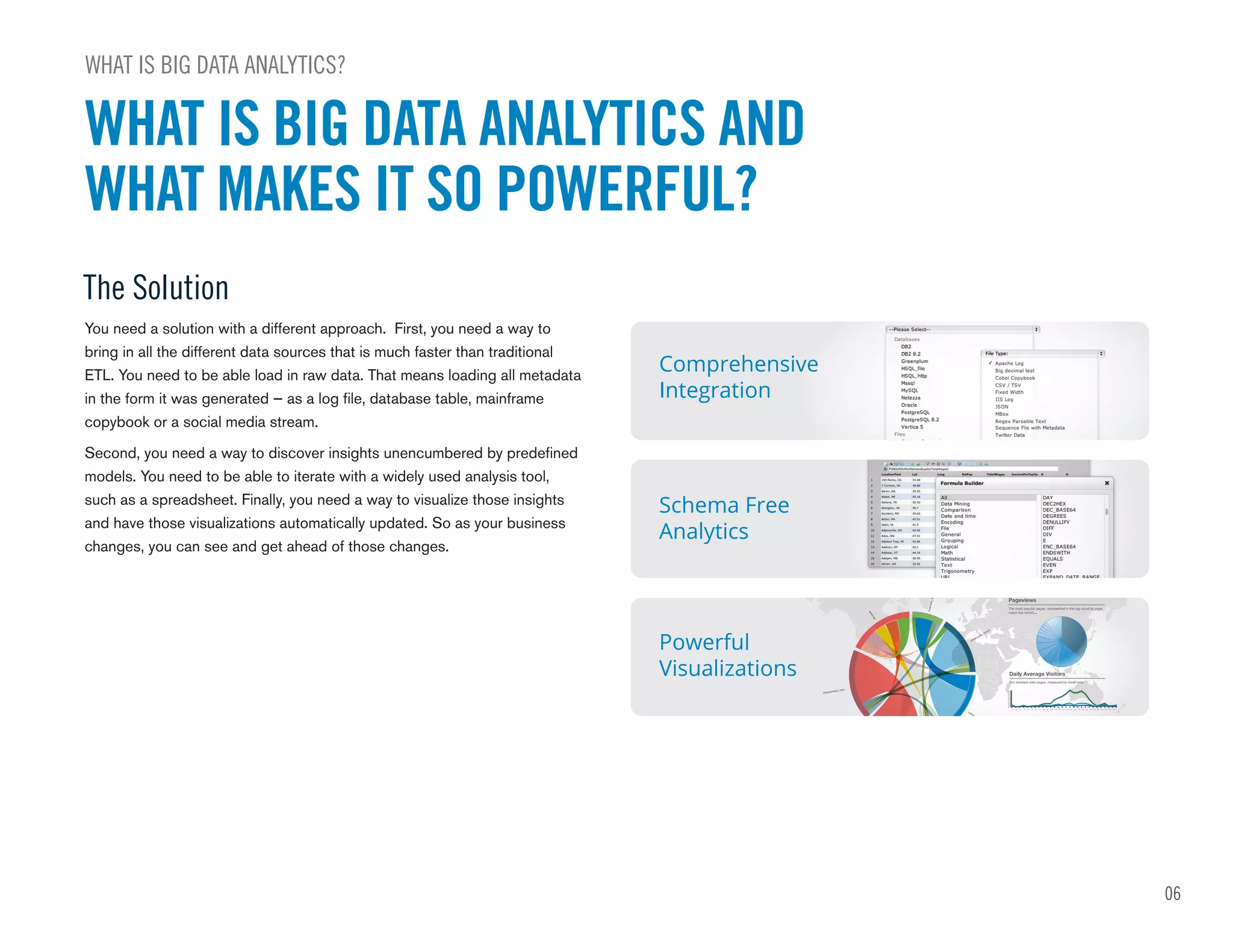

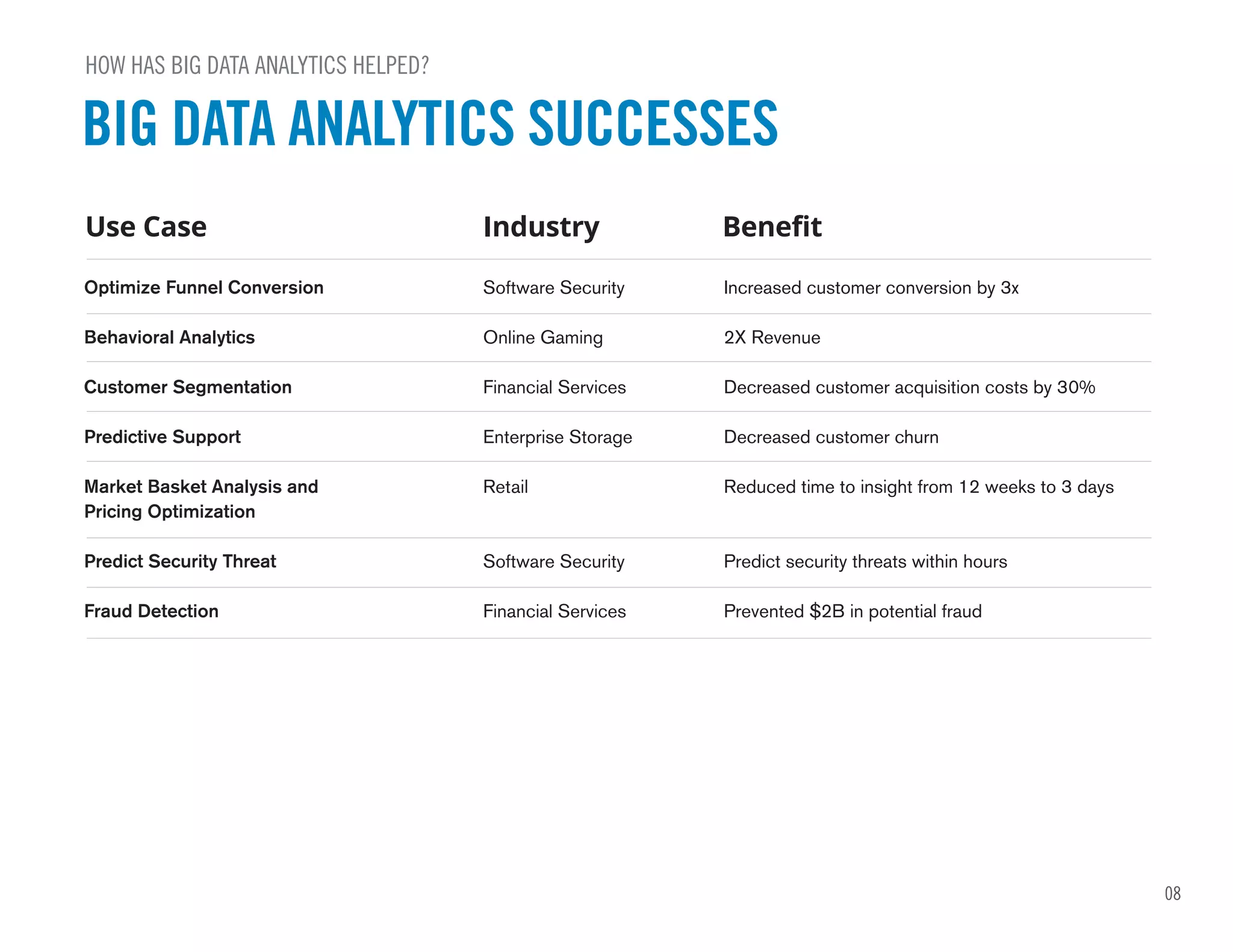

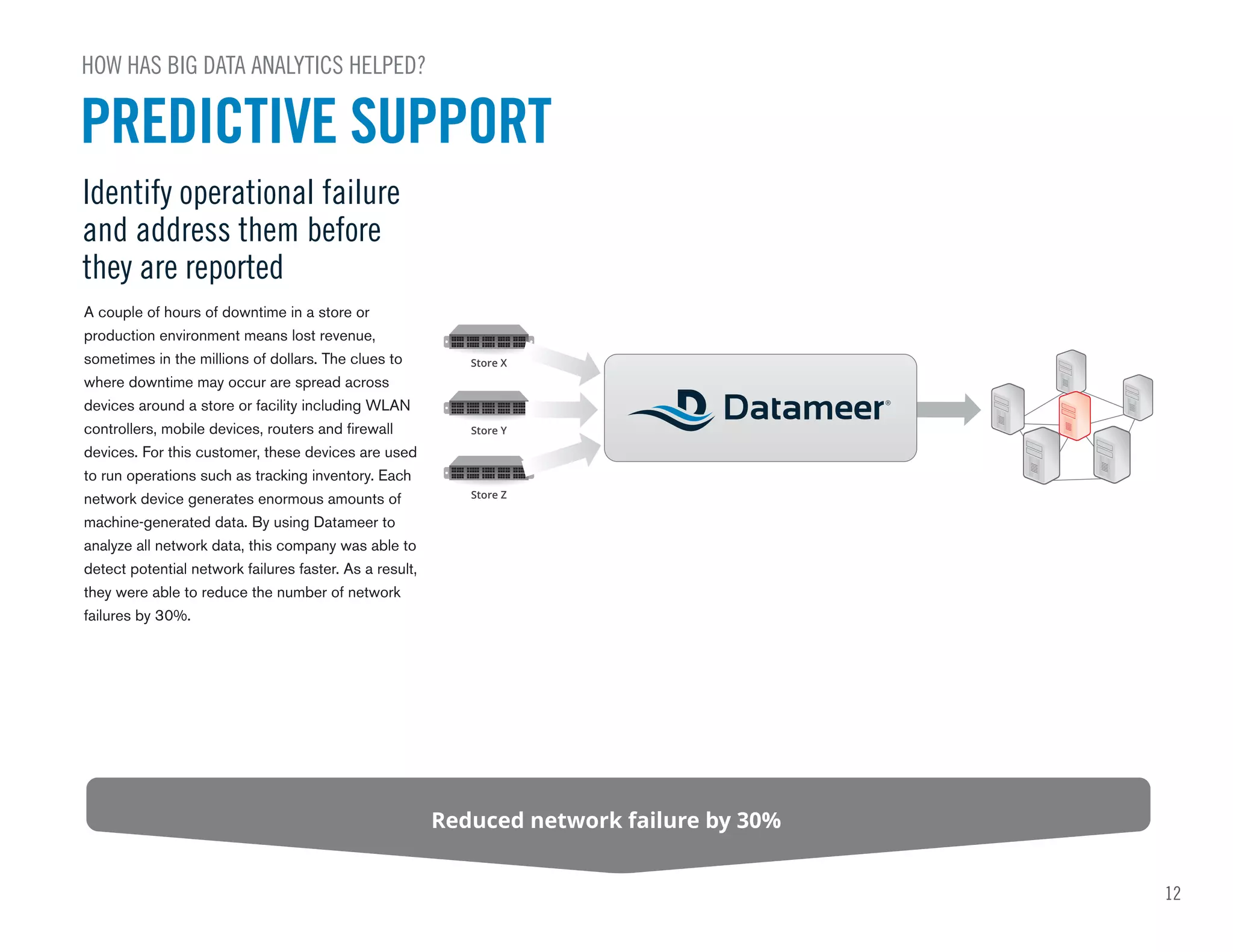

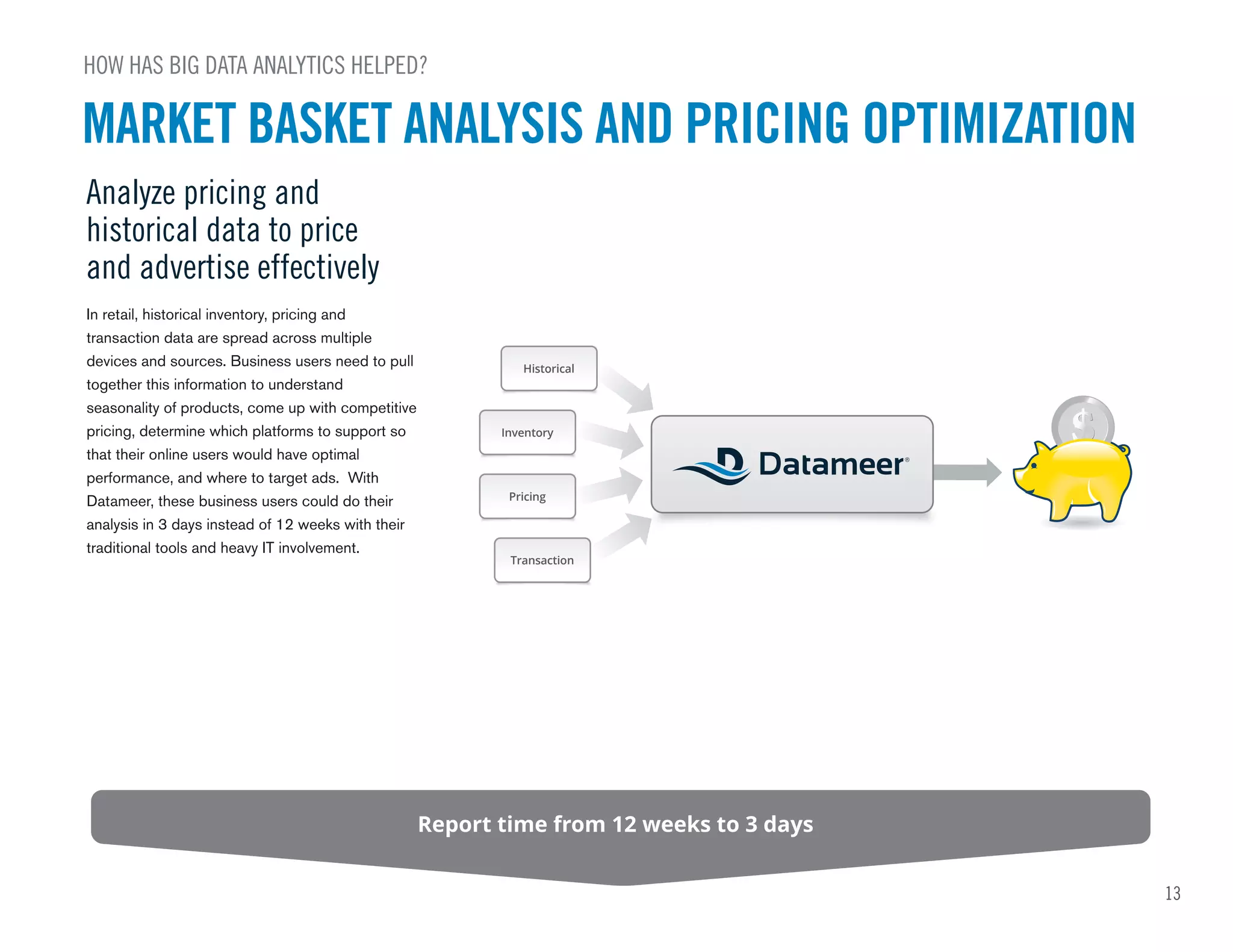

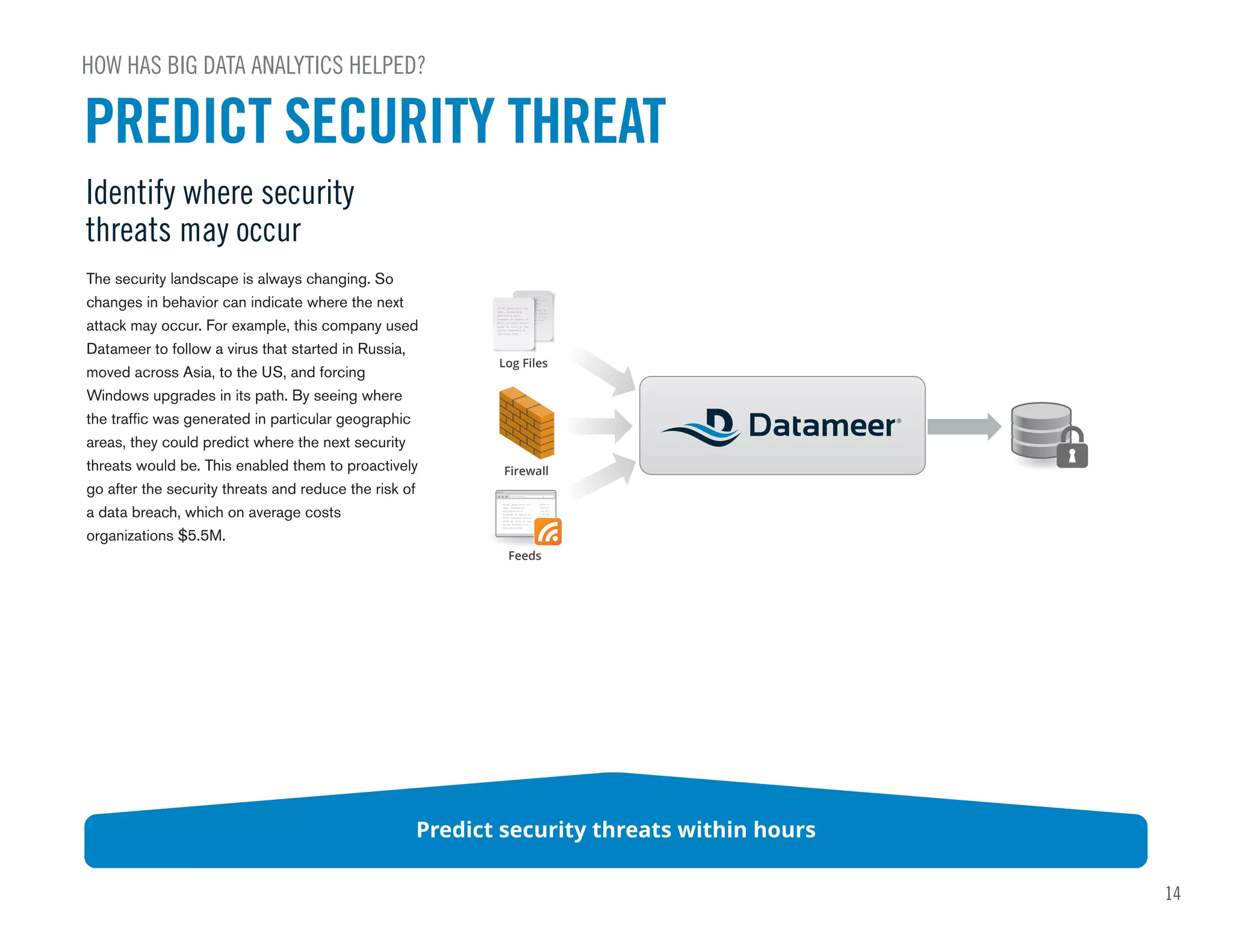

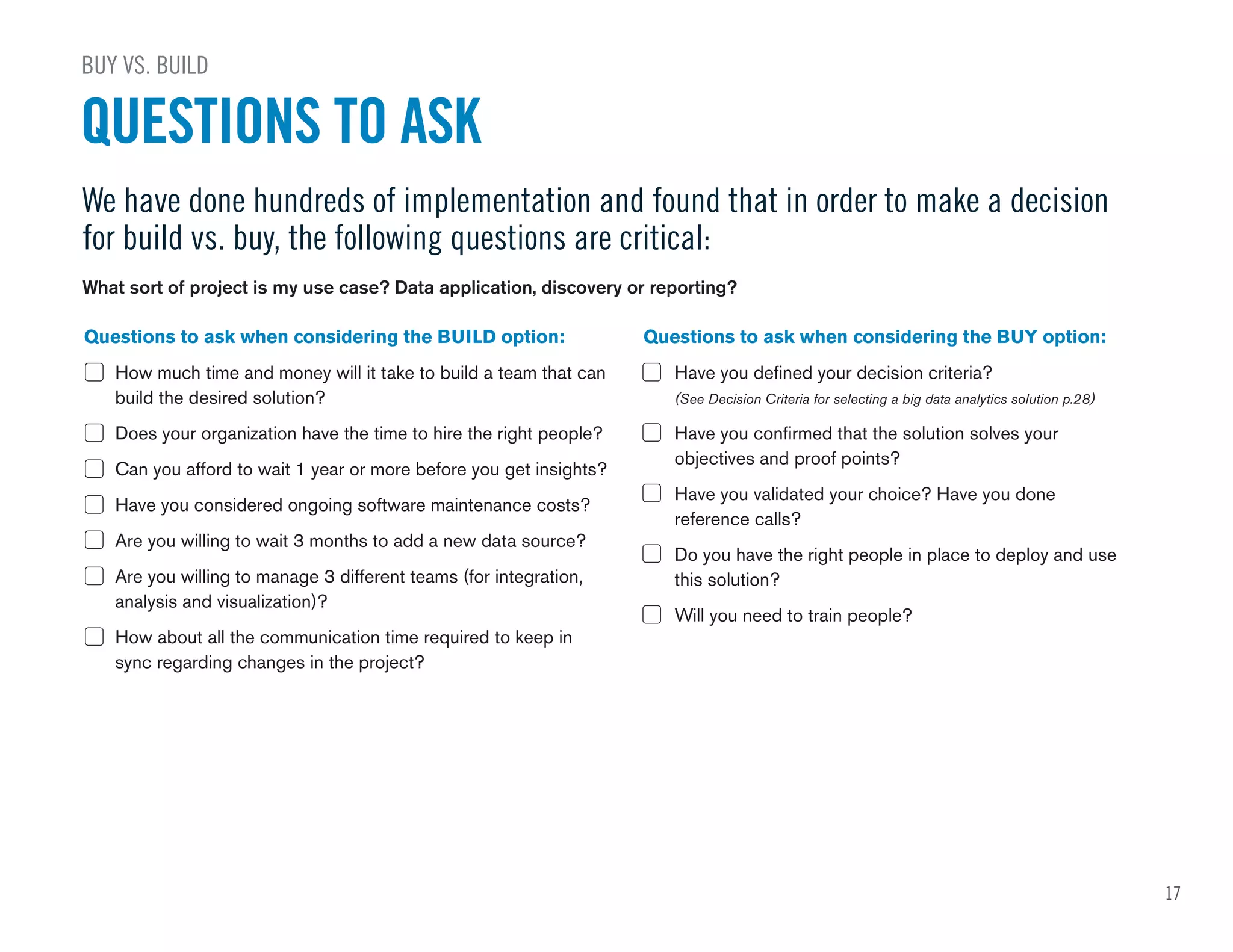

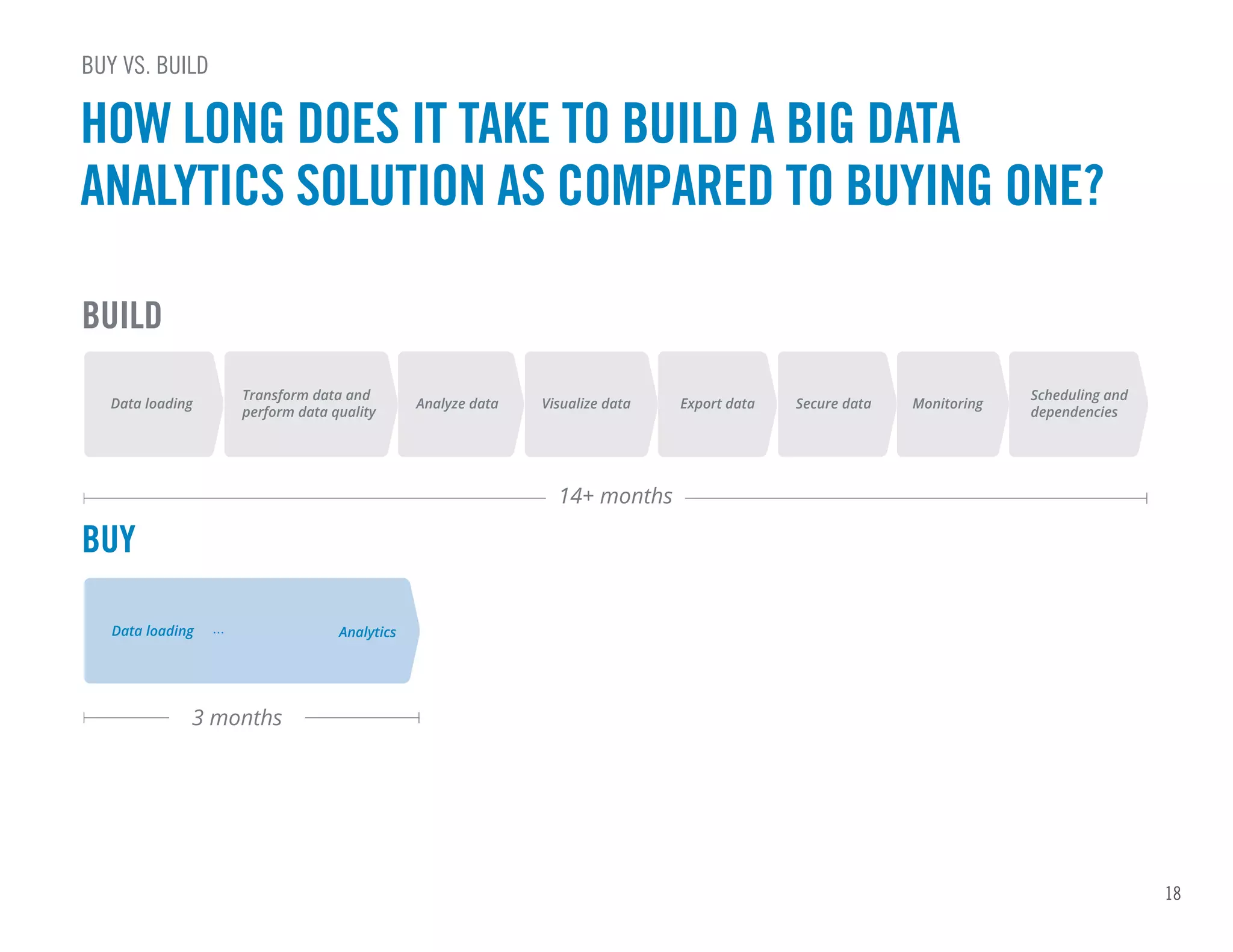

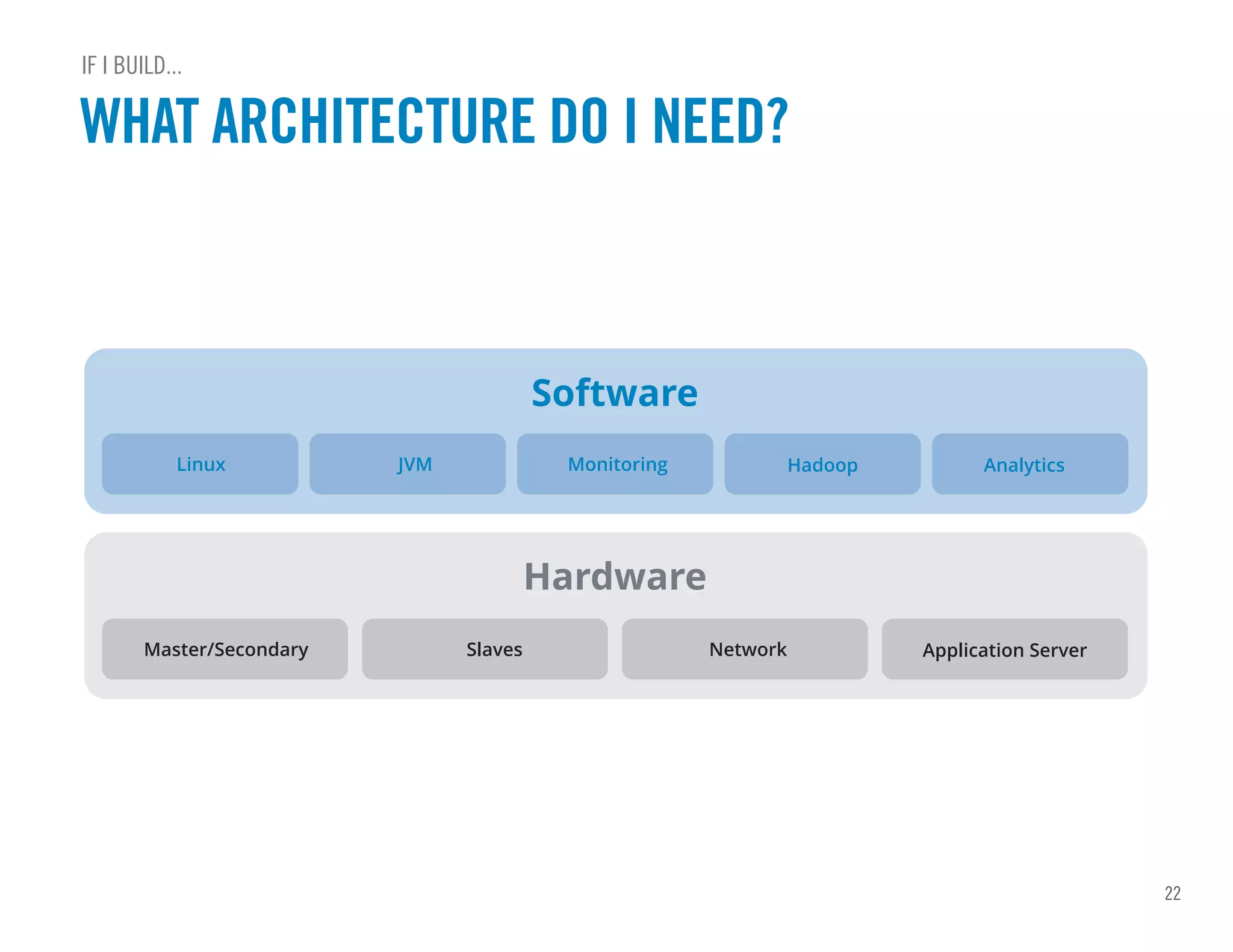

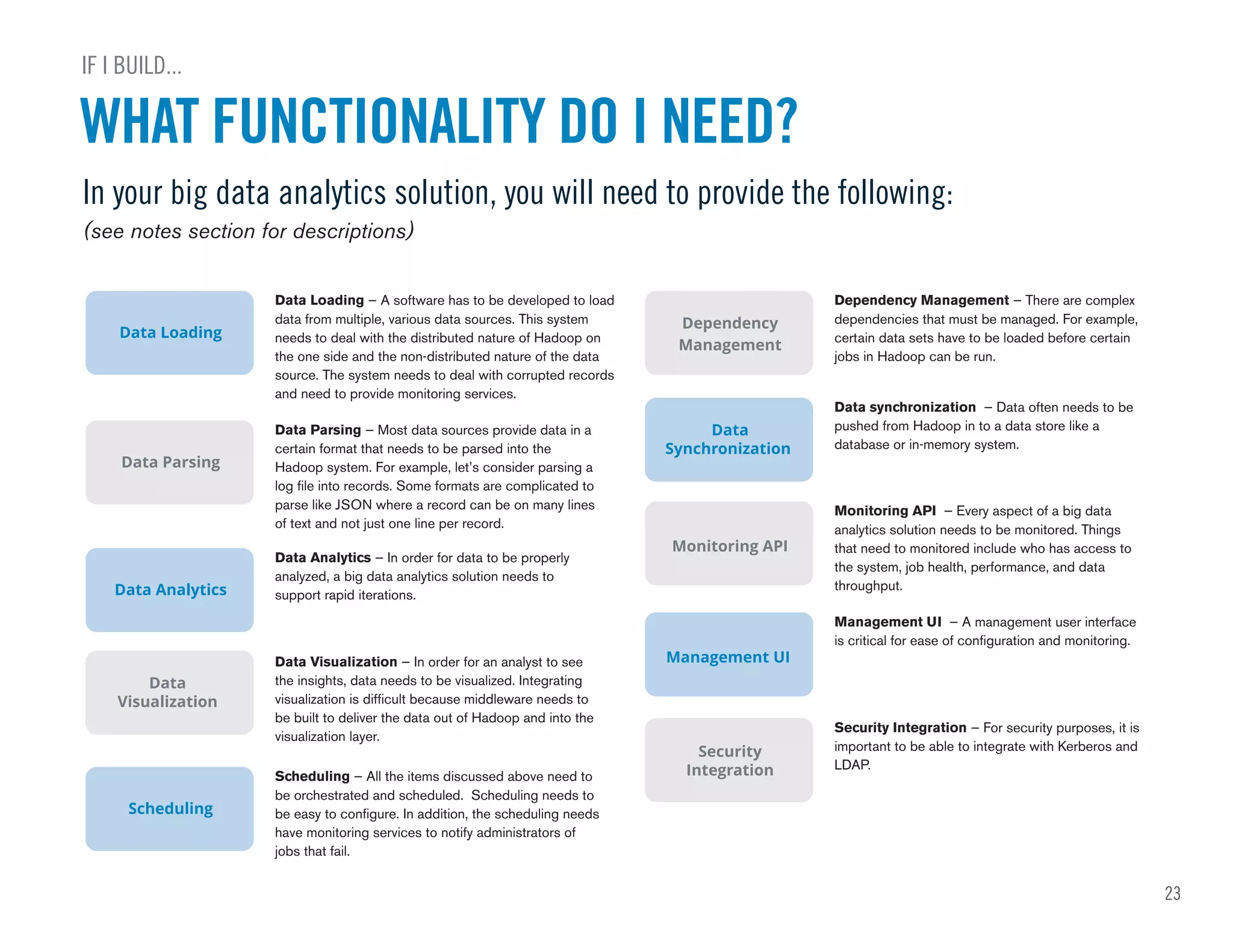

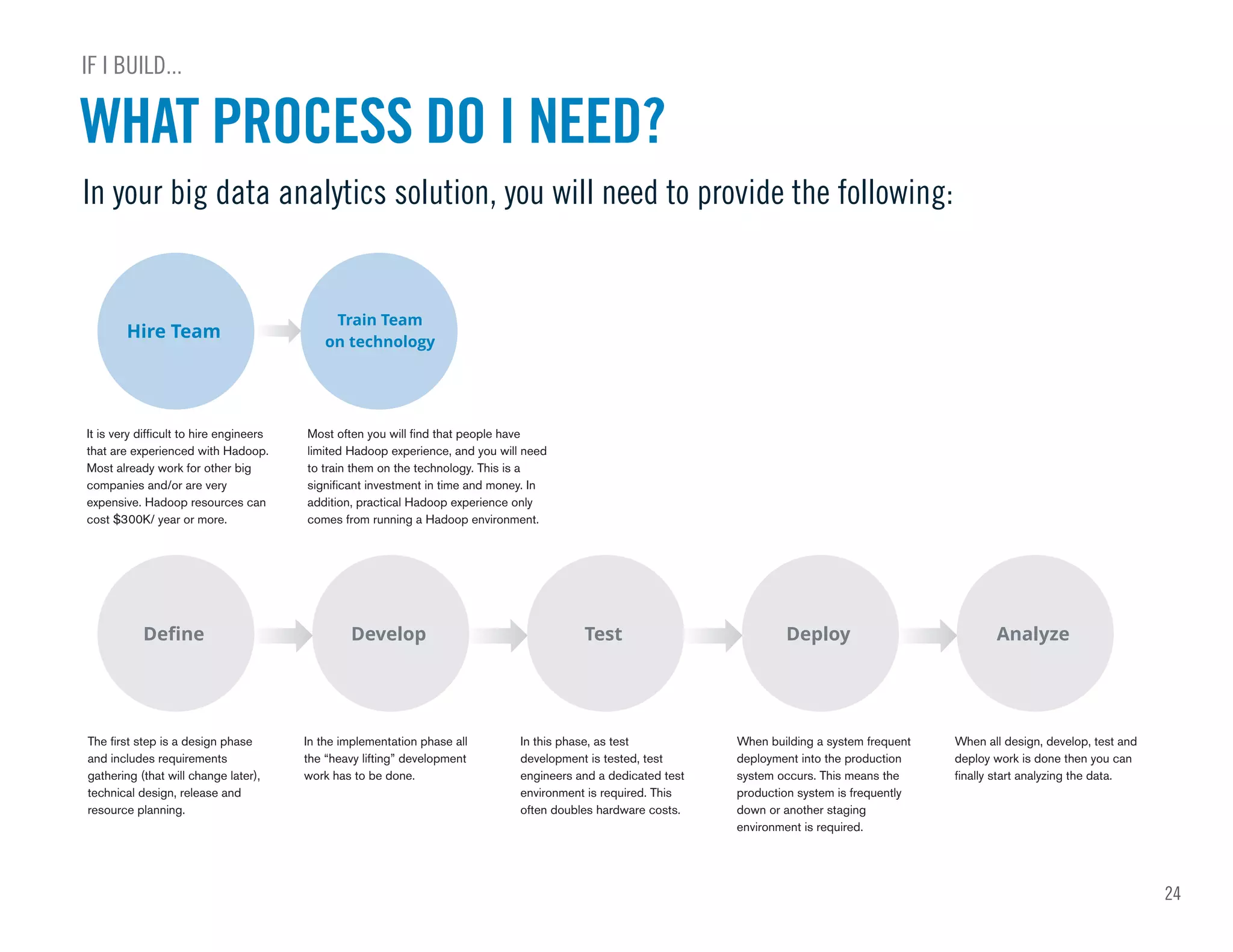

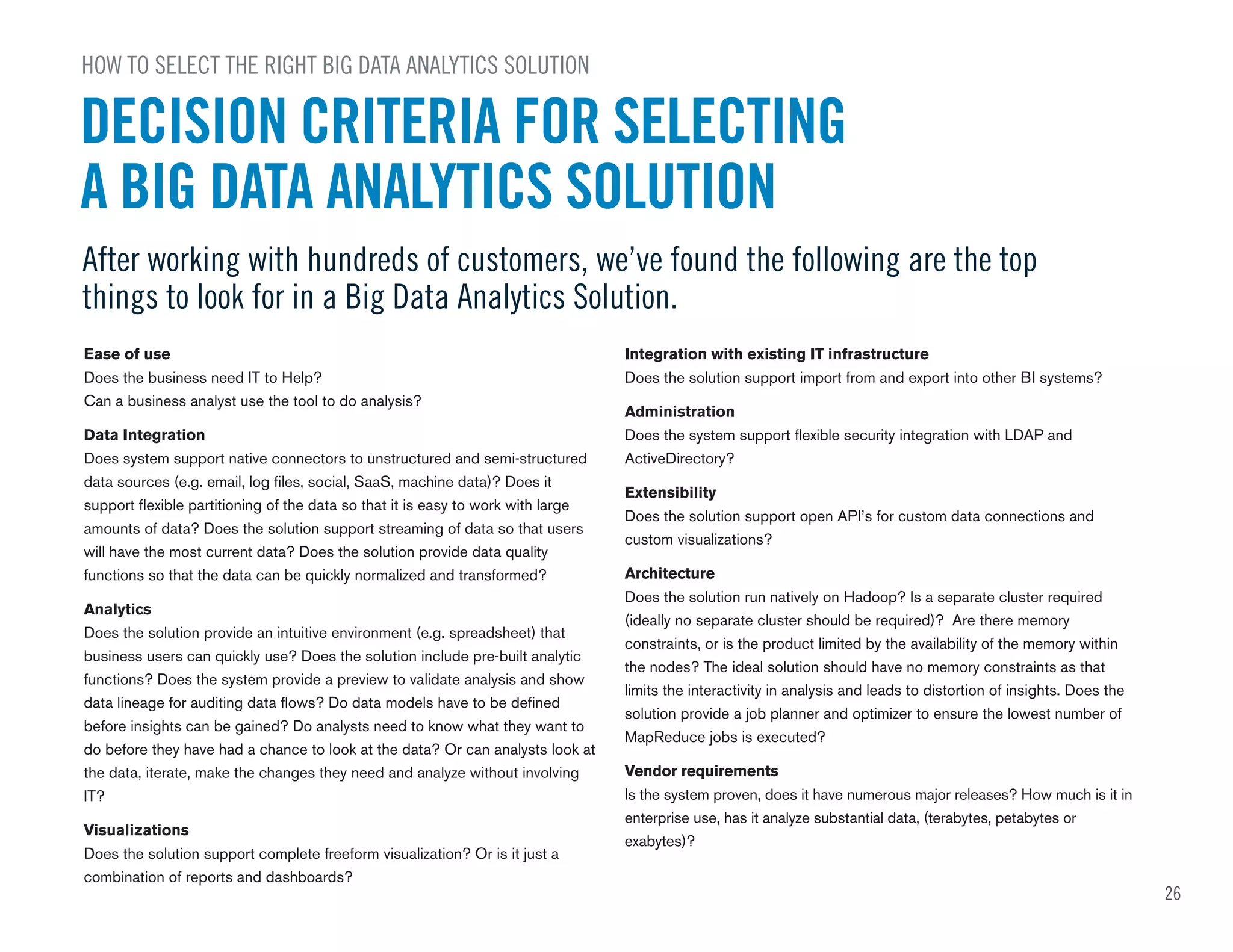

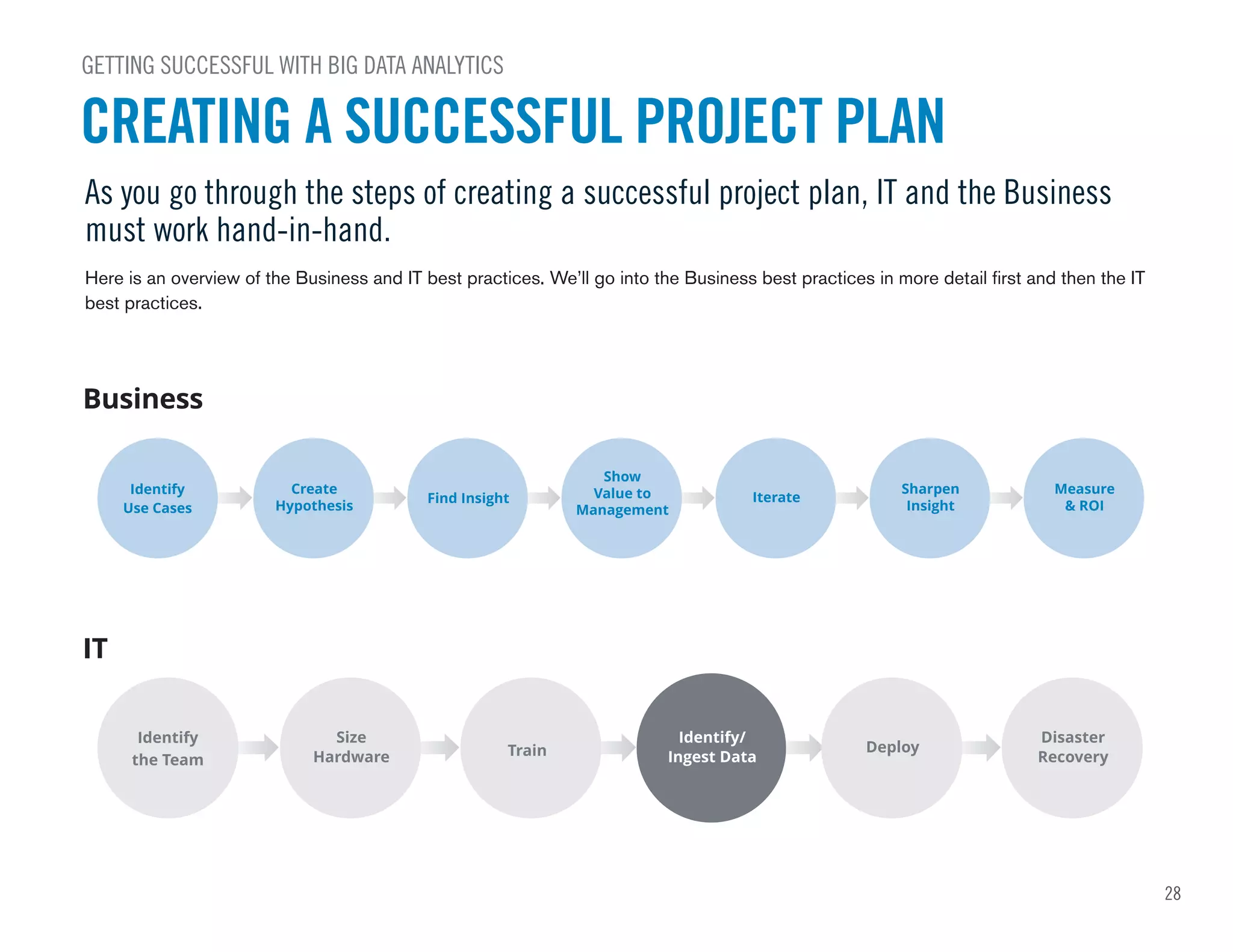

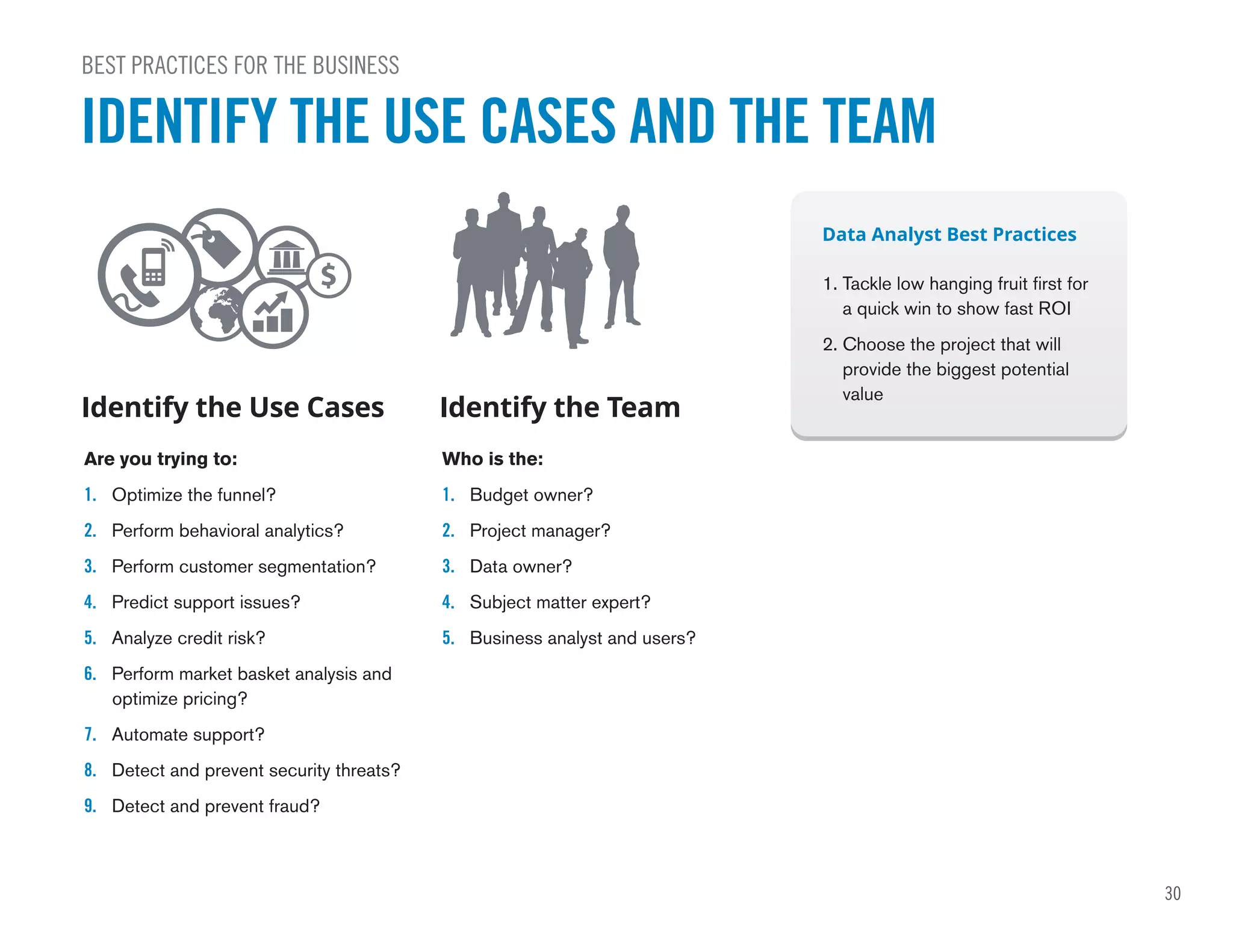

This document offers guidance on big data analytics. It explains why big data analytics matters for companies seeking to improve performance and results, and defines it as applying analytics to large, diverse datasets to discover insights faster. It then gives examples of how big data analytics has helped companies increase revenue, reduce costs and time to insight, and improve customer acquisition, retention, and security. Finally, it addresses common questions about whether to buy or build a big data analytics solution.