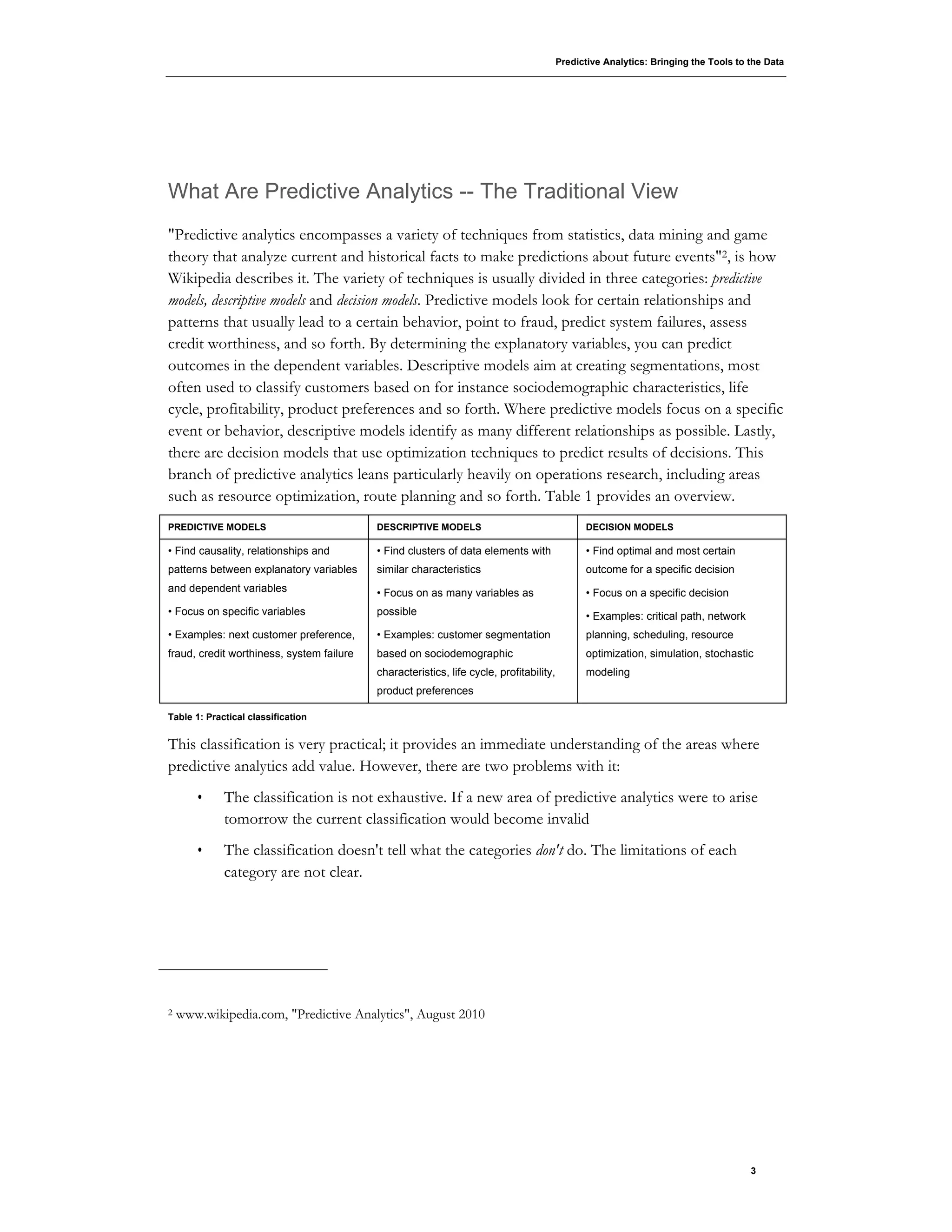

This document discusses predictive analytics and provides an overview of Oracle's predictive analytics tools.

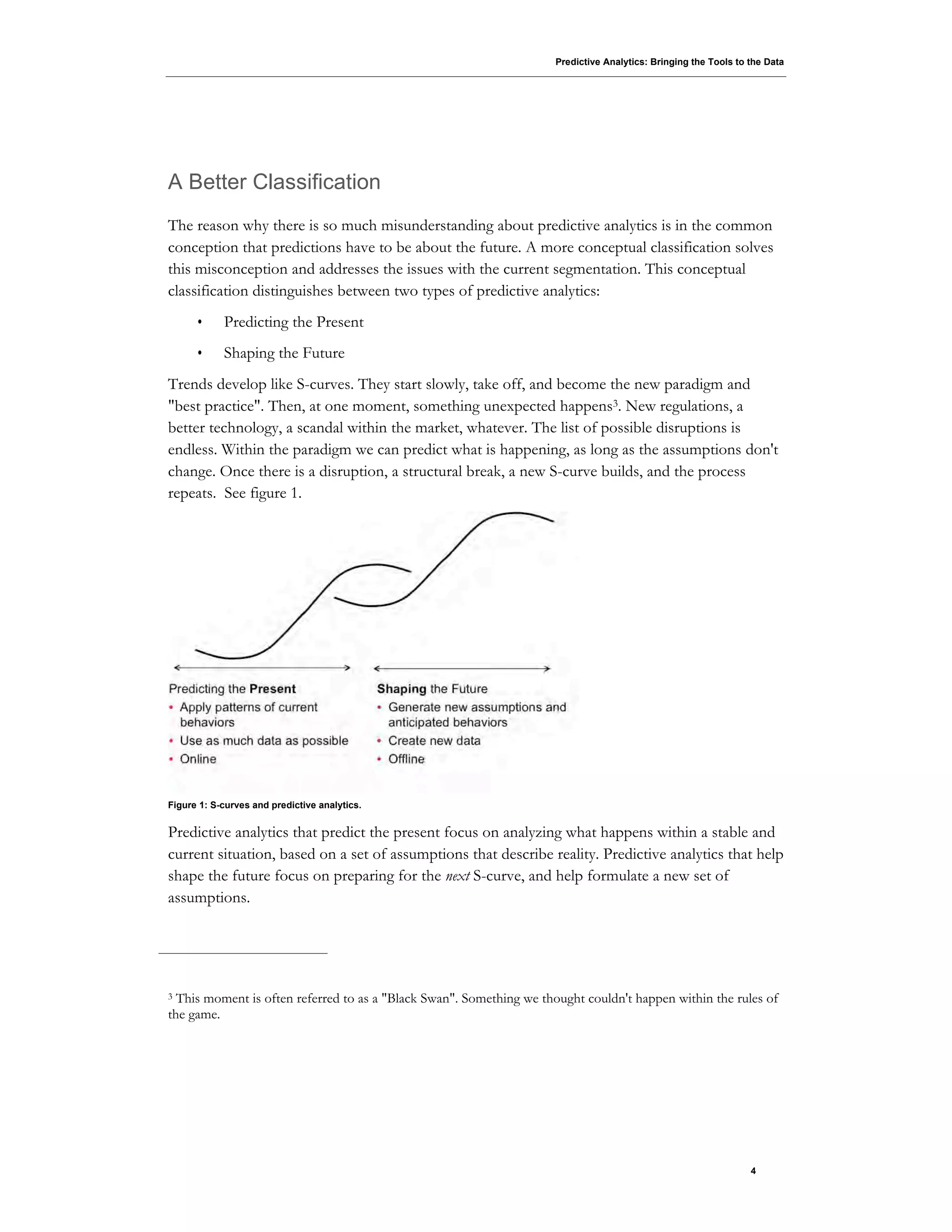

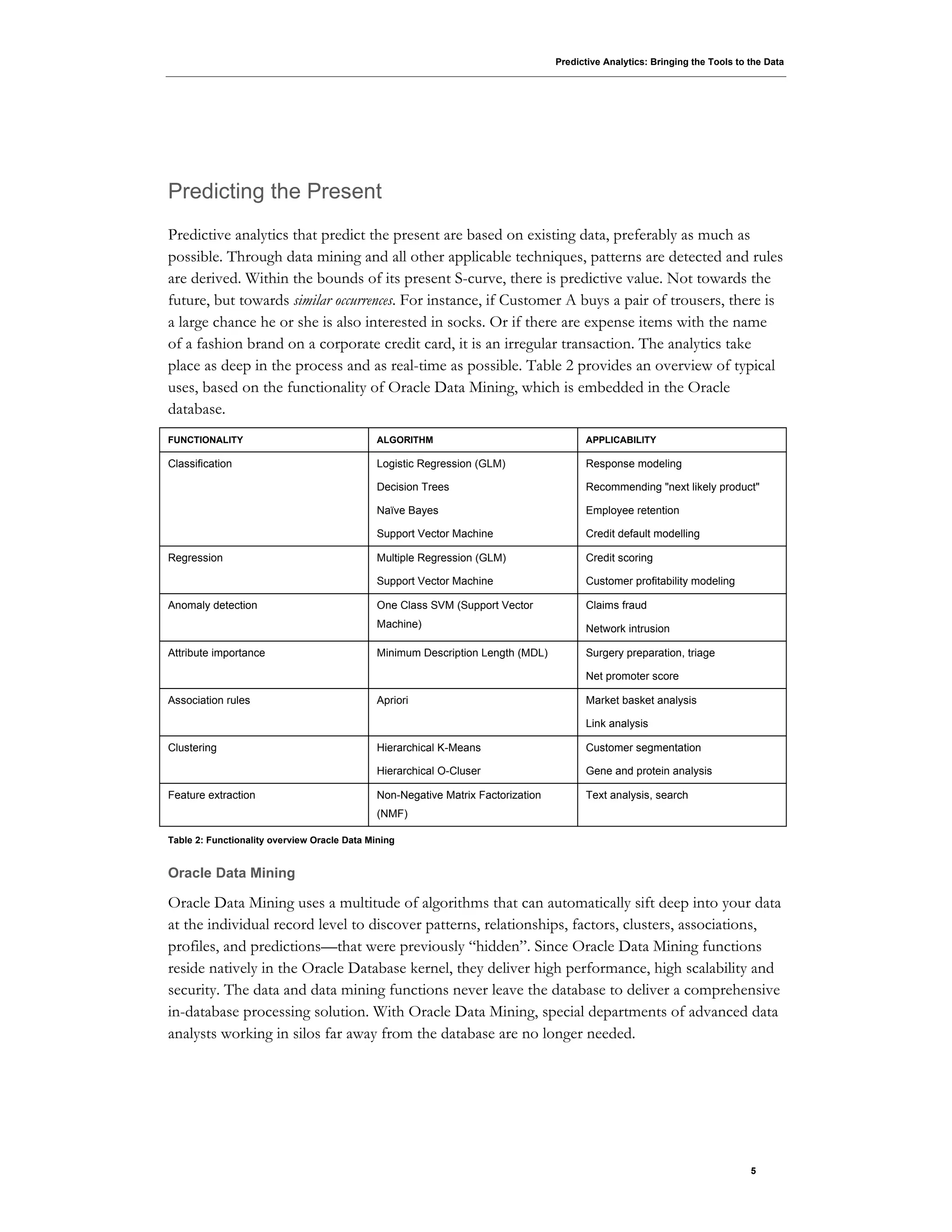

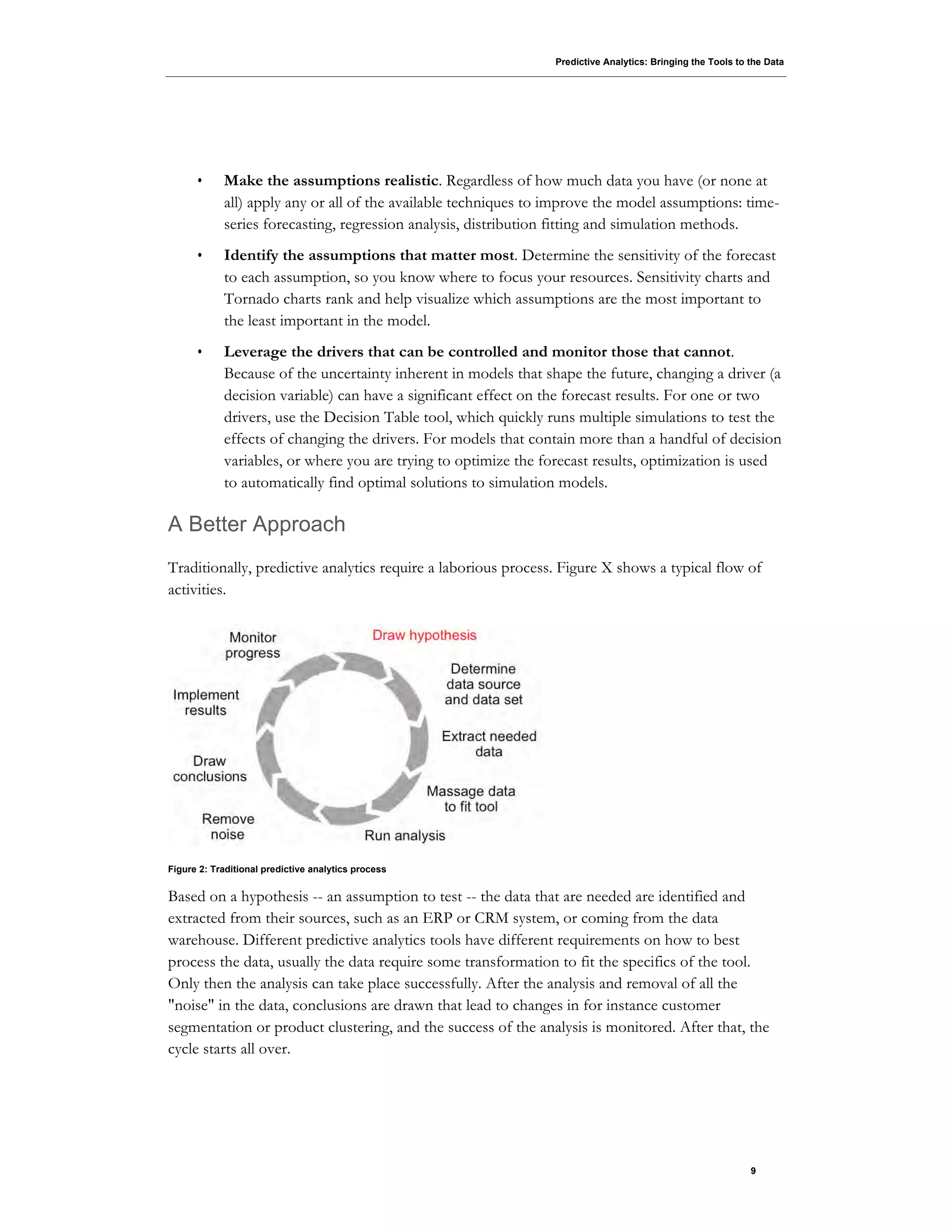

It argues that predictive analytics is commonly misunderstood as a way only to predict the future, when it can also be used to predict the present from patterns in existing data. It proposes a new conceptual classification of predictive analytics into "predicting the present" and "shaping the future". The document then gives examples of how Oracle Data Mining can be used to predict the present, such as inferring customer preferences, detecting fraud, and scoring credit. It also discusses how Oracle Real-Time Decisions integrates predictive analytics into real-time processes.