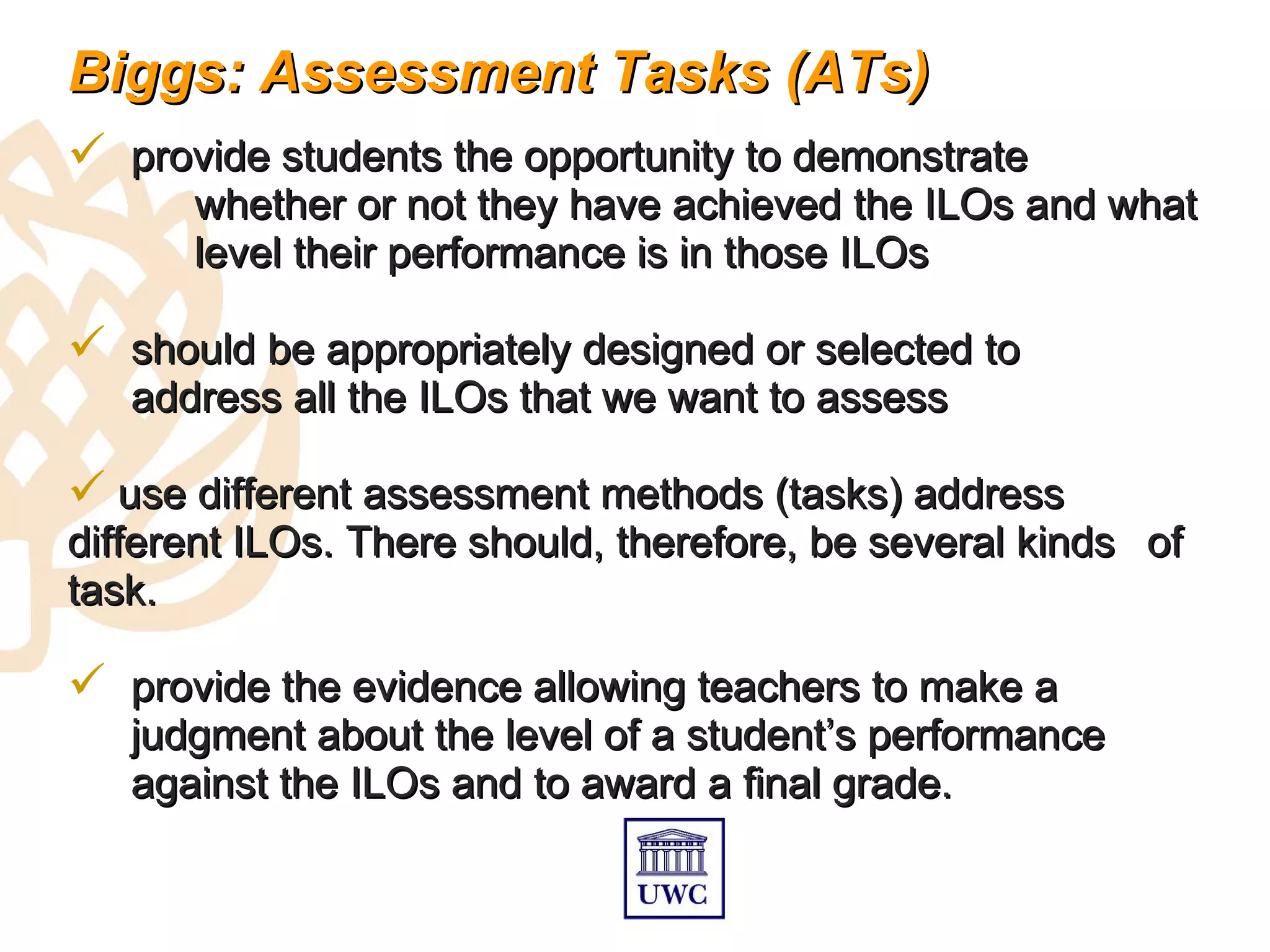

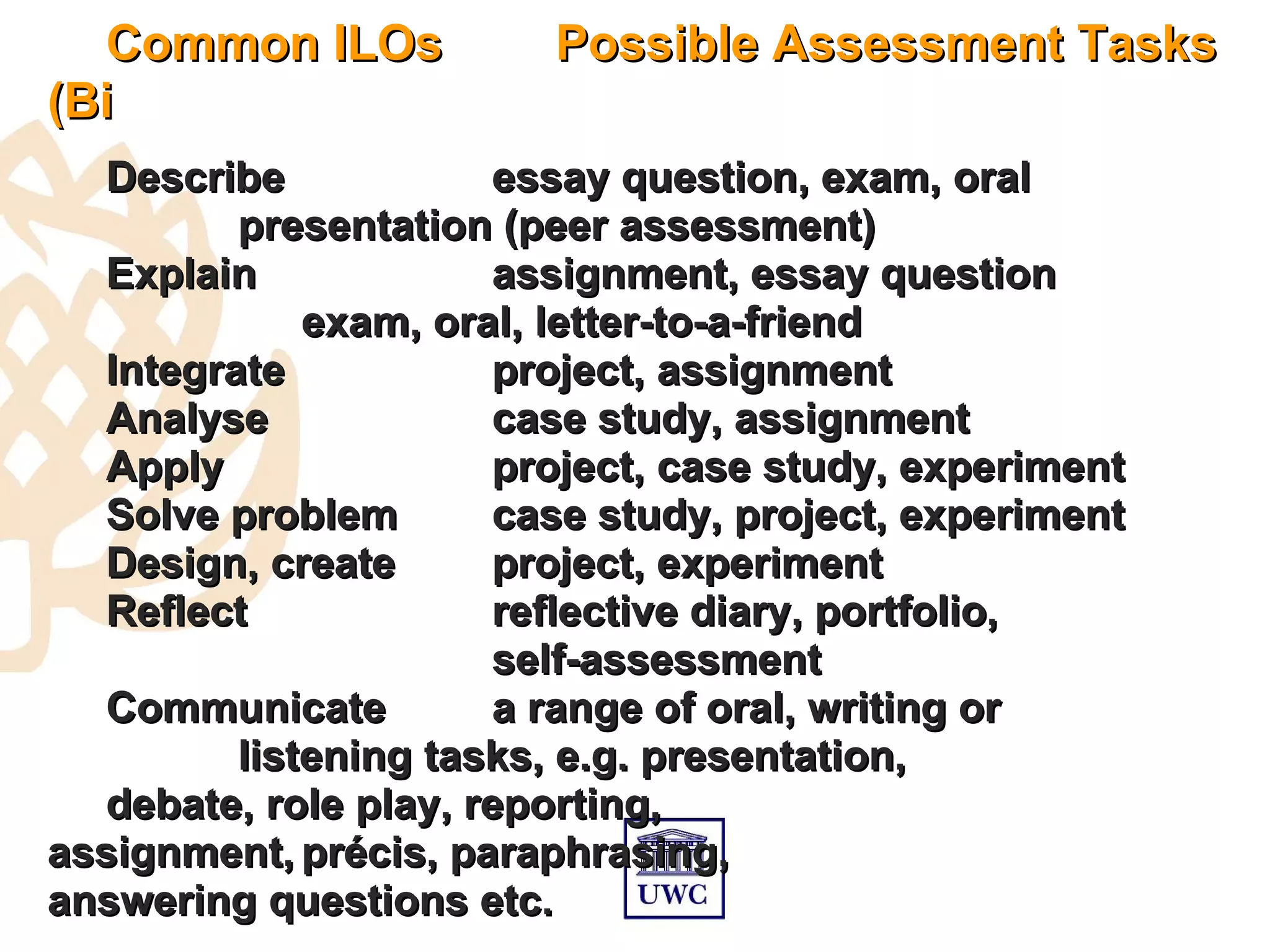

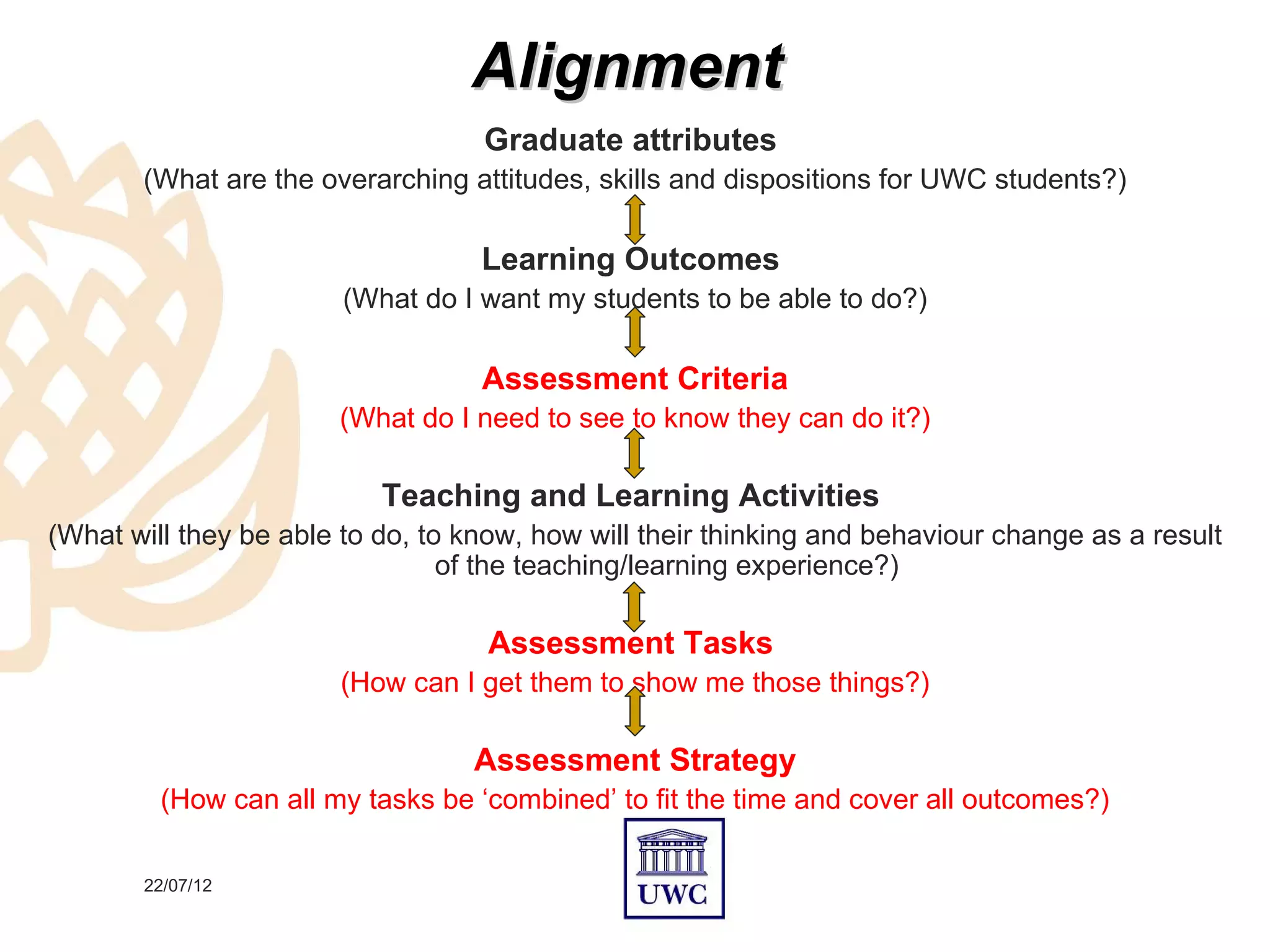

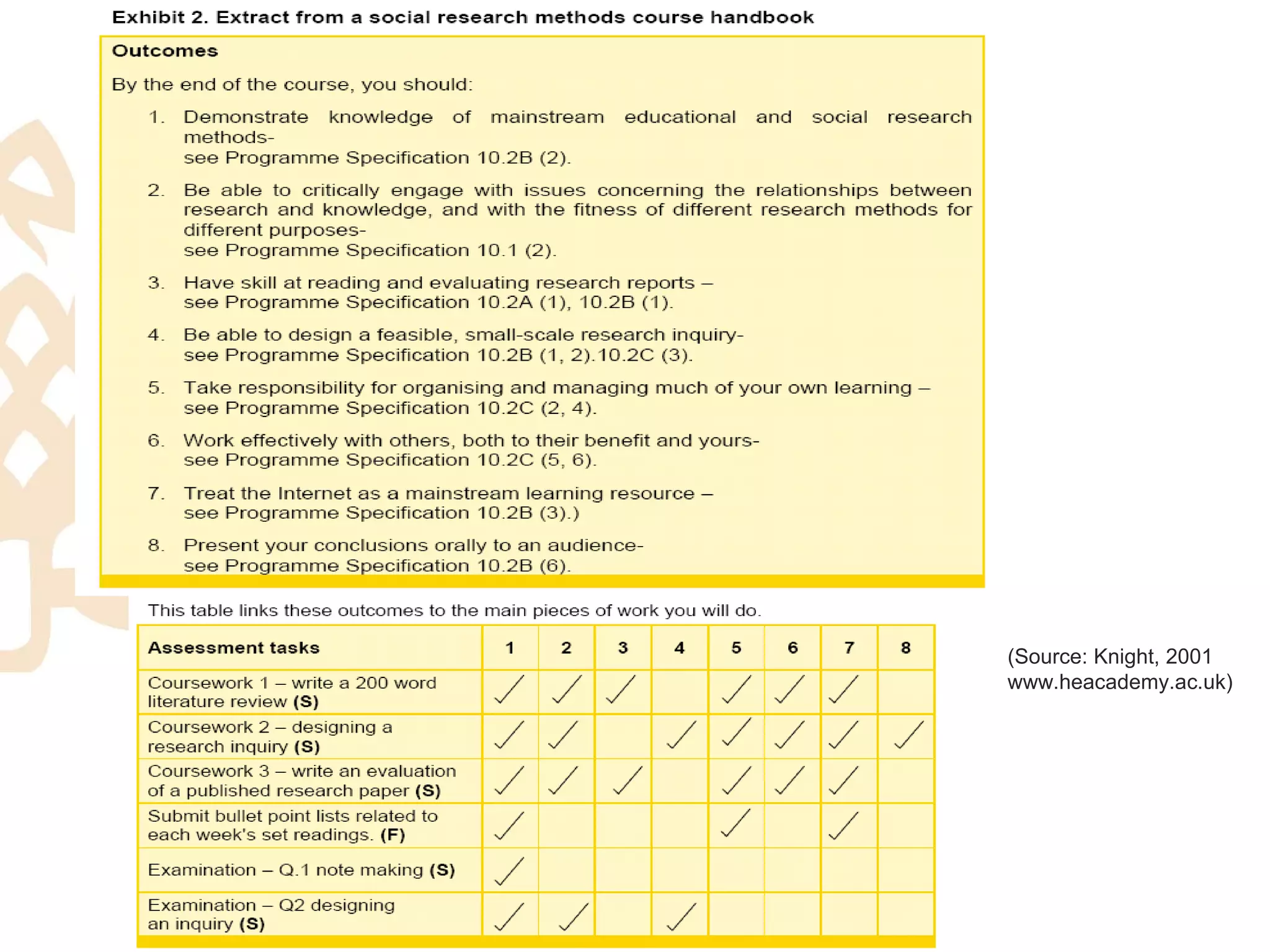

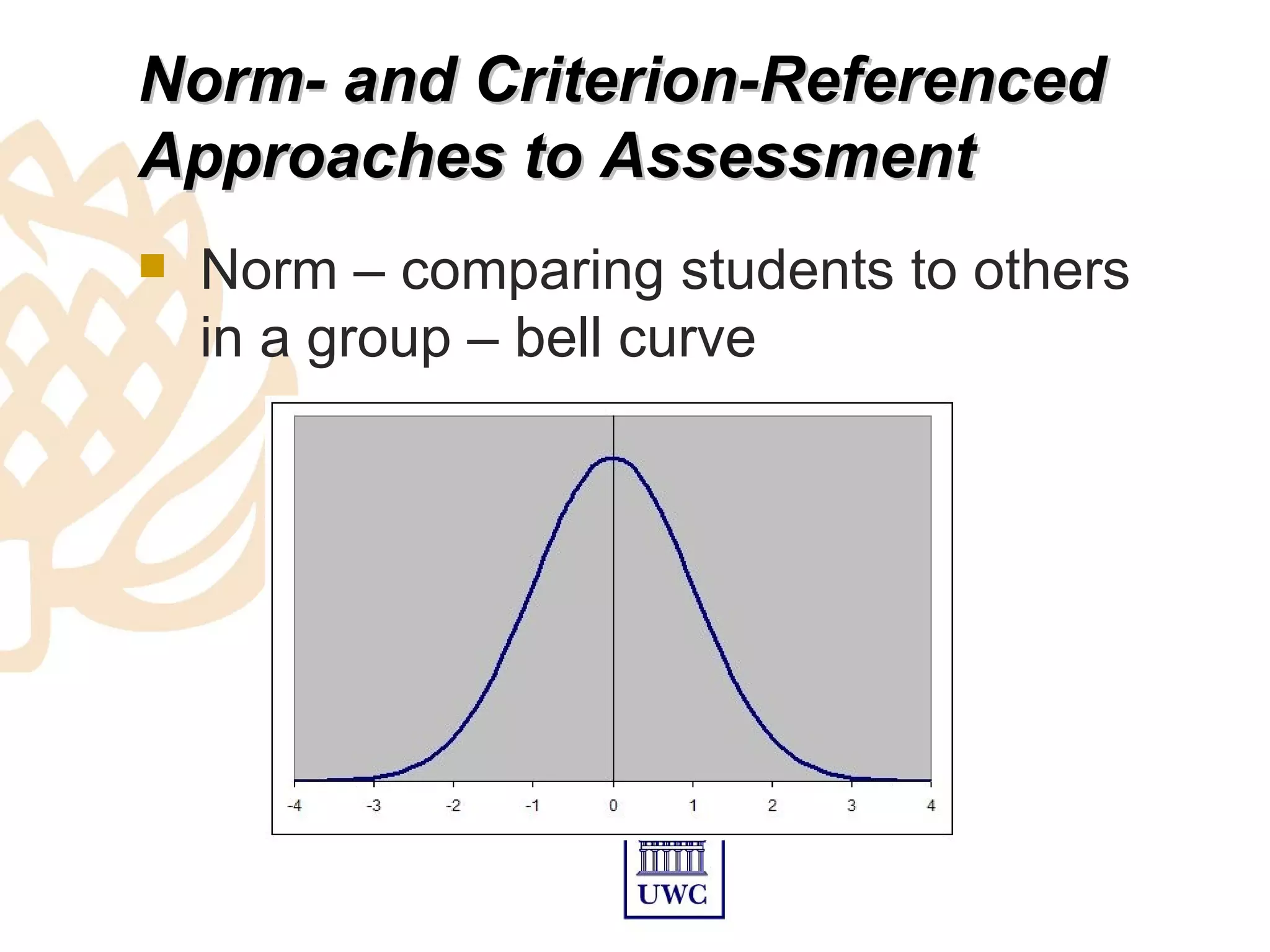

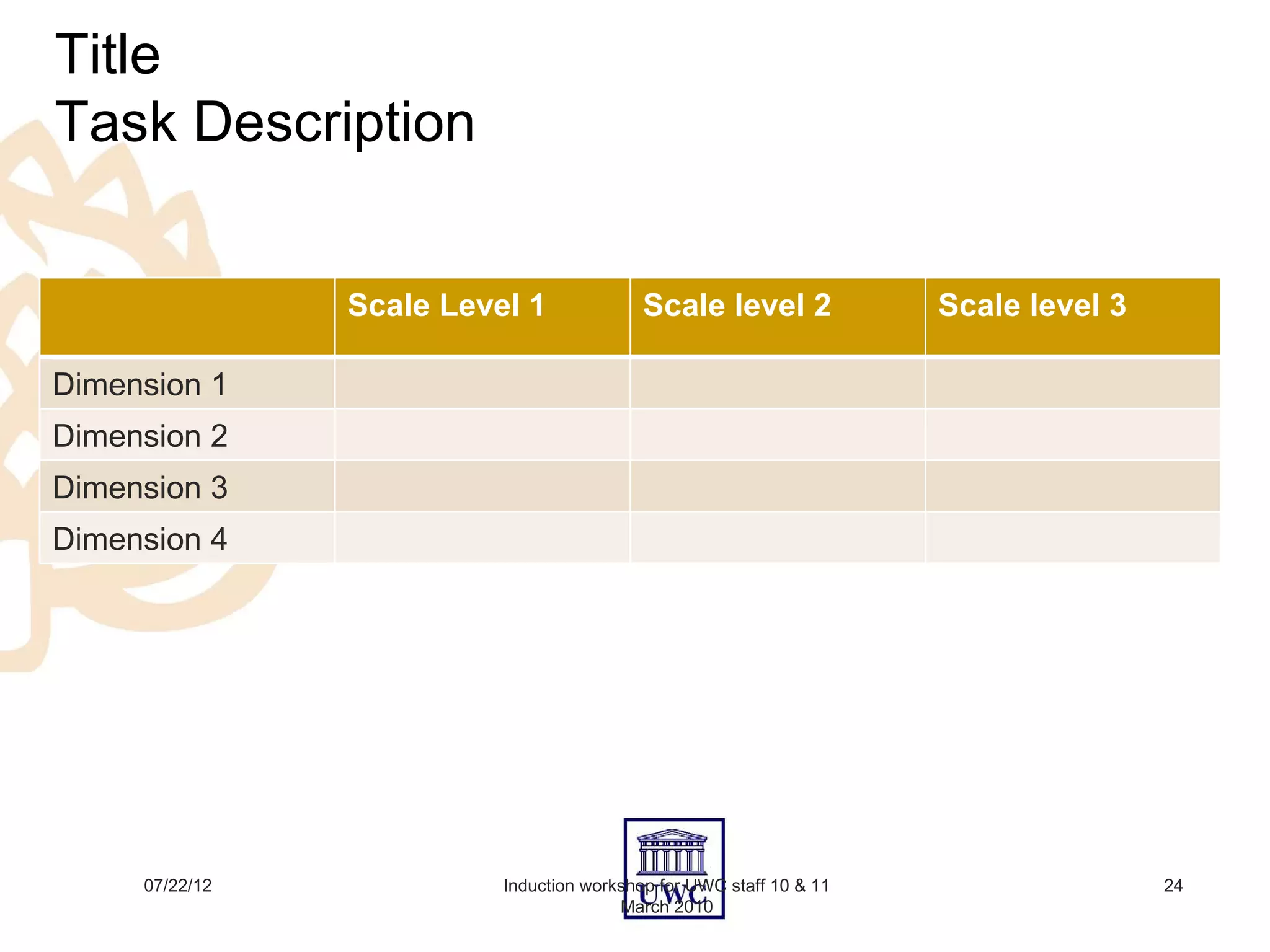

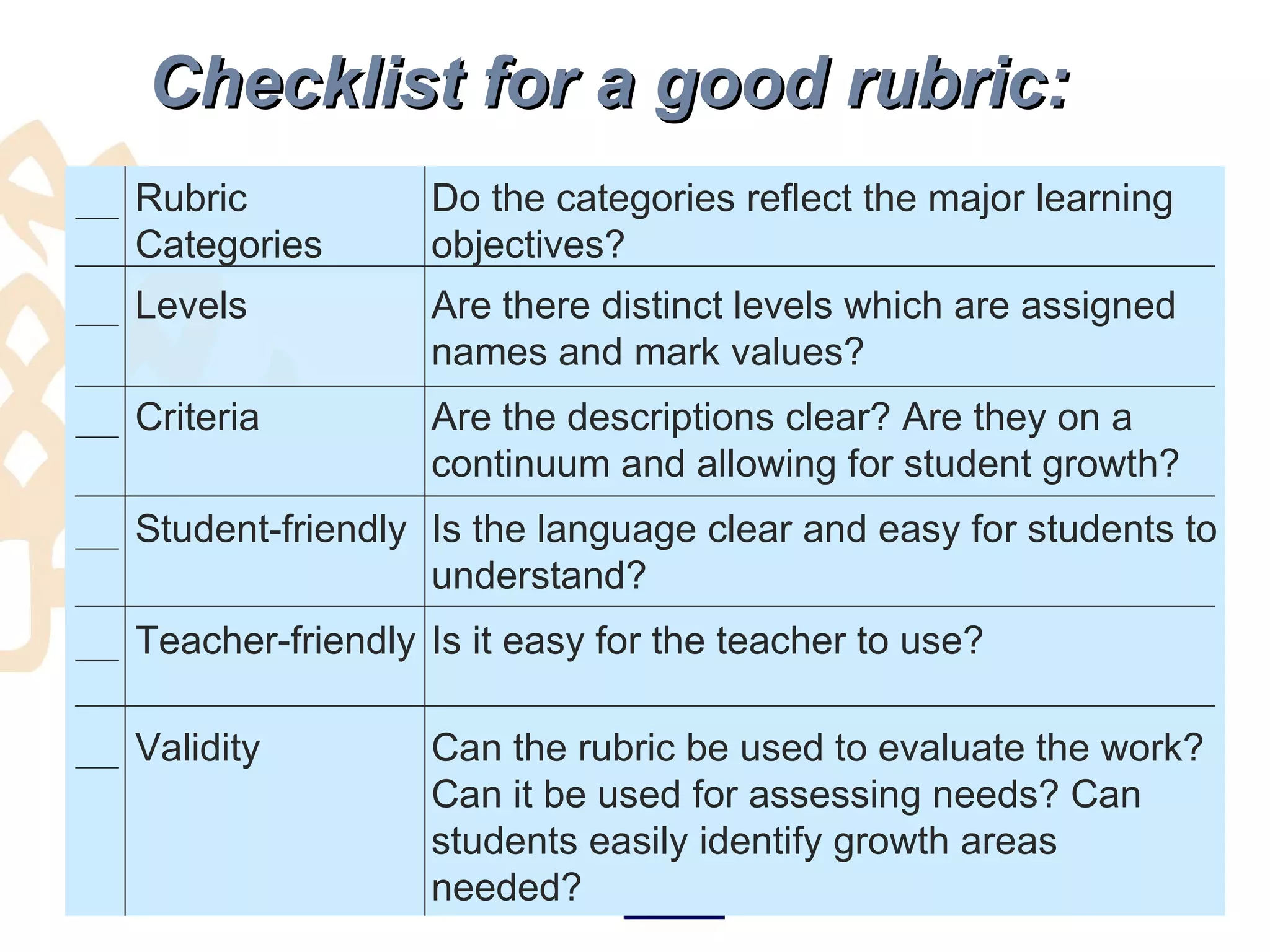

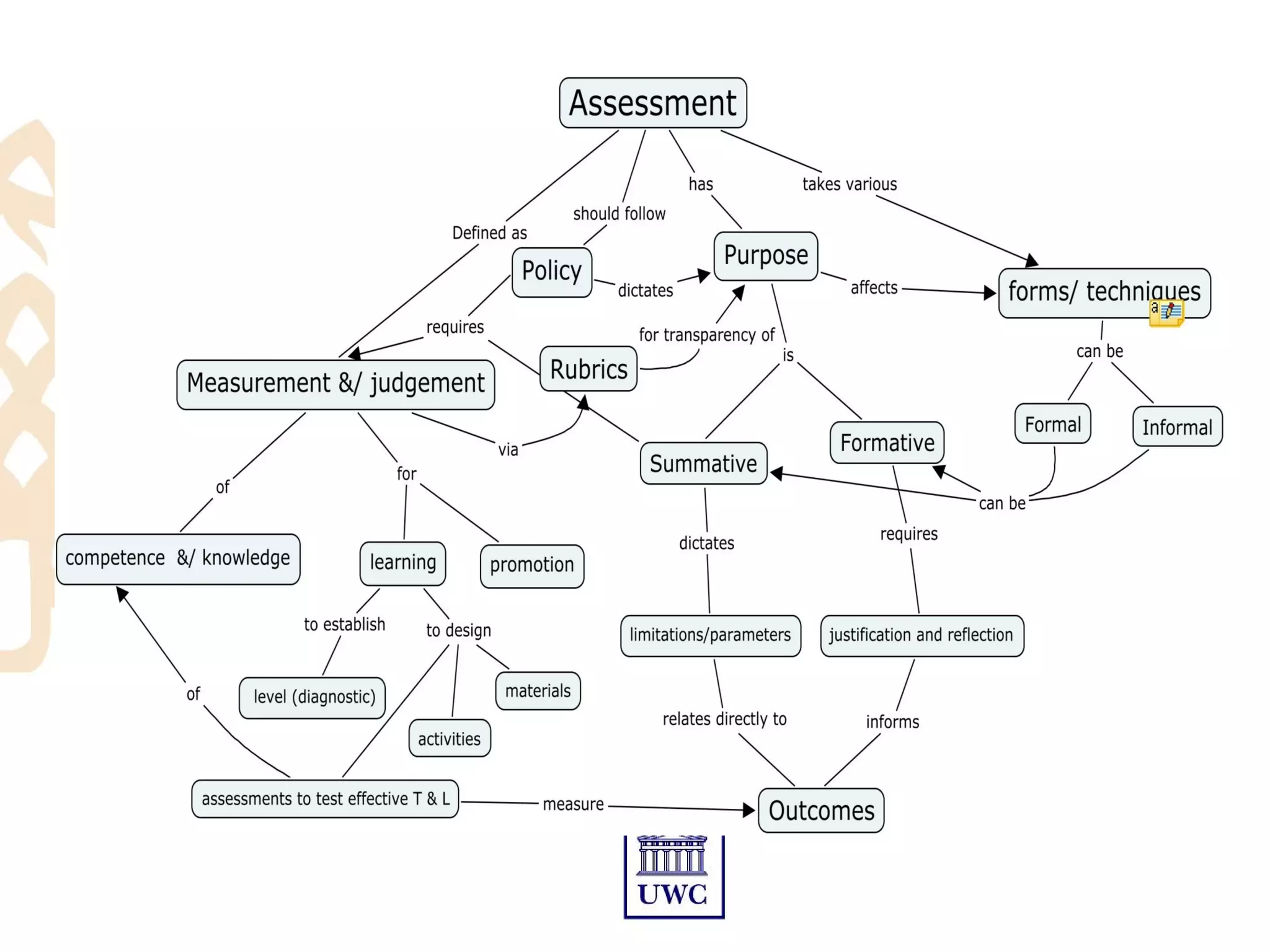

This document covers assessment and rubrics for a workshop on teaching and learning. It explains how to align assessment with learning outcomes, select appropriate forms of assessment, justify assessments, and create rubrics. Assessment should measure what students have learned against the intended outcomes and provide feedback. The document also addresses formative and summative assessment, reliability and validity, different assessment methods, feedback, and the assessment policy at UWC.