This document provides an introduction to artificial intelligence and intelligent agents. It discusses key concepts including:

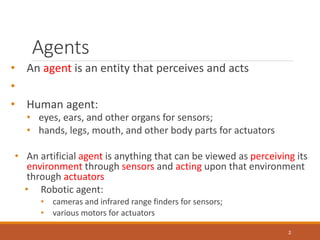

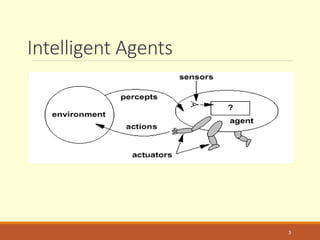

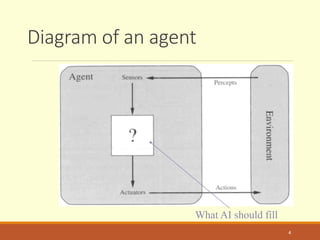

- Agents can be human, robotic, or software entities that perceive their environment and act upon it.

- Agent programs come in several types, including simple reflex agents, model-based reflex agents, goal-based agents, and utility-based agents.

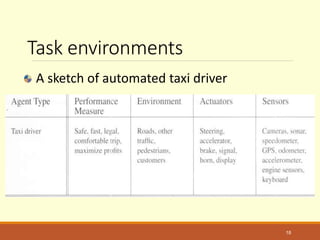

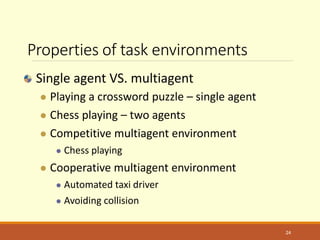

- Task environments for agents can have different properties such as being fully or partially observable, deterministic or stochastic, and single-agent or multi-agent.

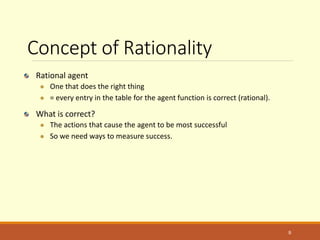

- Rational agents are designed to perform well according to a defined performance measure in their given environment. Learning agents can improve their performance over time by learning from experience.
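As a rough illustration of how a simple reflex agent differs from a model-based reflex agent, the sketch below uses a hypothetical thermostat whose sensor sometimes returns no reading (a stand-in for partial observability). The thermostat scenario, the 20.0 threshold, and all names are assumptions made for this example and do not come from the slides.

```python
from typing import Optional

# A percept is a temperature reading, or None when the sensor gives no reading
# (a hypothetical stand-in for a partially observable environment).
Percept = Optional[float]

THRESHOLD = 20.0  # assumed set point, chosen only for illustration


def simple_reflex_thermostat(percept: Percept) -> str:
    """Chooses an action from the current percept only (condition-action rules)."""
    if percept is None:
        return "idle"  # no reading, so no heating rule fires
    return "heat" if percept < THRESHOLD else "idle"


class ModelBasedThermostat:
    """Keeps internal state (the last known temperature) between percepts."""

    def __init__(self) -> None:
        self.last_known: Optional[float] = None

    def __call__(self, percept: Percept) -> str:
        if percept is not None:
            self.last_known = percept  # update the internal model of the world
        if self.last_known is None:
            return "idle"  # nothing has been observed yet
        return "heat" if self.last_known < THRESHOLD else "idle"


percepts = [18.0, None, 22.0, None]
model_based = ModelBasedThermostat()
reflex_actions = [simple_reflex_thermostat(p) for p in percepts]
model_actions = [model_based(p) for p in percepts]
print(reflex_actions)
print(model_actions)
```

On the second percept the sensor reading is missing: the simple reflex agent falls back to `idle`, while the model-based agent keeps heating because it remembers the last reading of 18.0.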

![Slide 8: The program implements the agent function. function Reflex-Vacuum-Agent([location, status]) returns an action: if status = Dirty then return Suck; else if location = A then return Right; else if location = B then return Left](https://image.slidesharecdn.com/lecture-2-200920145038/85/Artificial-Intelligent-Agents-8-320.jpg)
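The Reflex-Vacuum-Agent pseudocode on this slide translates fairly directly into Python. The sketch below is one possible rendering. The `run` simulator, the two-square world represented as a dictionary, and the scoring rule (one point per clean square per time step) are assumptions added here to show how a performance measure might be applied; they are not part of the slide.

```python
def reflex_vacuum_agent(percept):
    """Maps a (location, status) percept to an action, as in the slide's pseudocode."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    elif location == "A":
        return "Right"
    elif location == "B":
        return "Left"
    raise ValueError(f"unknown location: {location!r}")


def run(agent, world, location, steps):
    """Hypothetical simulator: awards one point per clean square per time step."""
    world = dict(world)  # copy so the caller's dictionary is not modified
    score = 0
    for _ in range(steps):
        action = agent((location, world[location]))
        if action == "Suck":
            world[location] = "Clean"
        elif action == "Right":
            location = "B"
        elif action == "Left":
            location = "A"
        # Performance measure (assumed): count the clean squares after each step.
        score += sum(1 for status in world.values() if status == "Clean")
    return score, world


score, final_world = run(reflex_vacuum_agent, {"A": "Dirty", "B": "Dirty"}, "A", 4)
print(score, final_world)
```

Starting in square A with both squares dirty, the agent sucks, moves right, sucks again, and moves left, so both squares are clean after four steps.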