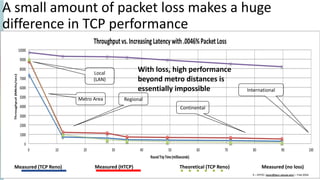

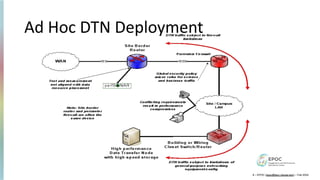

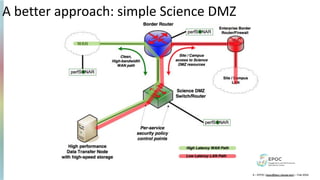

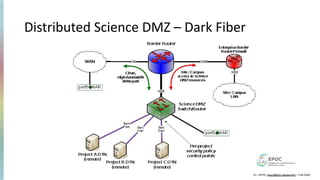

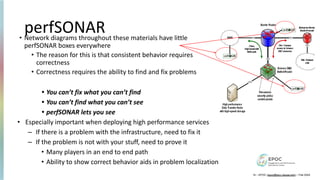

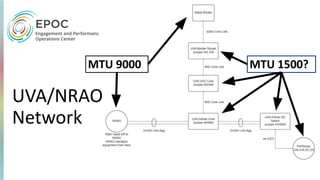

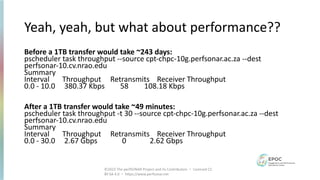

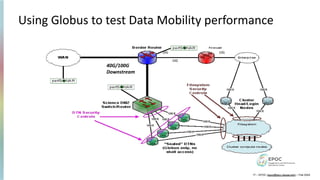

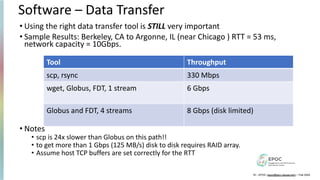

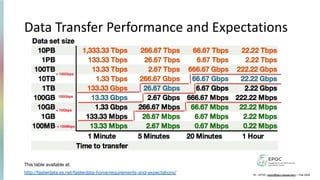

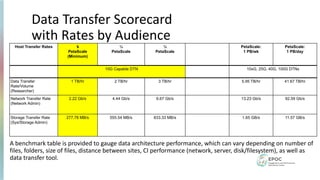

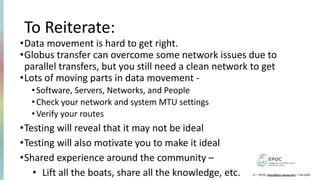

The document describes work under NSF award #2328479 on improving data transfer performance using Globus and the Science DMZ framework, as presented at the Globus World event in Chicago in May 2024. It highlights the components that a high-performance data transfer network needs: optimized hardware, dedicated transfer nodes, and purpose-built transfer tools. It also discusses challenges such as packet loss and limited network capacity, and recommends regular testing and maintenance of network integrity so that research data can be moved efficiently.
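
To make the packet loss concern concrete, the sketch below estimates an upper bound on single-stream TCP throughput using the well-known Mathis et al. approximation, throughput ≤ (MSS / RTT) · (C / √p). The summary above does not name this model, so treat it as an illustrative assumption rather than the presenters' method. The function name, the constant C = √(3/2), and the sample MSS, RTT, and loss values are all hypothetical choices for illustration.

```python
import math


def mathis_throughput_bps(mss_bytes: float, rtt_s: float, loss_rate: float) -> float:
    """Approximate upper bound on single-stream TCP throughput, in bits/s.

    Uses the Mathis approximation: (MSS / RTT) * (C / sqrt(p)).
    C = sqrt(3/2) is one commonly quoted constant (an assumption here).
    """
    if mss_bytes <= 0 or rtt_s <= 0 or not (0 < loss_rate <= 1):
        raise ValueError("mss_bytes and rtt_s must be > 0, and 0 < loss_rate <= 1")
    c = math.sqrt(1.5)
    return (mss_bytes * 8 / rtt_s) * (c / math.sqrt(loss_rate))


if __name__ == "__main__":
    # Hypothetical path: 1460-byte MSS, 50 ms round-trip time.
    for loss in (1e-6, 1e-5, 1e-4, 1e-3):
        bound = mathis_throughput_bps(1460, 0.050, loss)
        print(f"loss={loss:.0e}  bound ~ {bound / 1e6:.1f} Mbit/s")
```

Under this model the bound falls with the square root of the loss rate and with the round-trip time, which is one way to see why even small loss rates matter on long-distance research paths.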