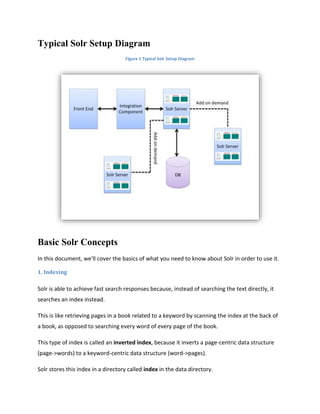

The document provides a comprehensive overview of Apache Solr, an enterprise search server that allows for fast indexing and querying of data using various formats such as XML and JSON. It covers installation, basic concepts, features like faceting, spell checking, and relevance, as well as advanced capabilities in SolrCloud, emphasizing fault-tolerant clusters and efficient data management. Limitations and integration with .NET using the SolrNet library are also discussed, highlighting Solr's ability to handle large volumes of data with optimized performance.

!["numFound": 1,

"start": 0,

"docs": [

{

"id": "3007WFP",

"name": "Dell Widescreen UltraSharp 3007WFP",

"manu": "Dell, Inc.",

"includes": "USB cable",

"weight": 401.6,

"price": 2199,

"popularity": 6,

"inStock": true,

"store": "43.17614,-90.57341",

"cat": [

"electronics",

"monitor"

],

"features": [

"30" TFT active matrix LCD, 2560 x 1600, .25mm dot pitch, 700:1 contrast"

]

}

]

}

}

Faceting

Faceting is the arrangement of search results into categories based on indexed terms. Searchers

are presented with the indexed terms along with numerical counts of how many matching

documents were found were each term. Faceting makes it easy for users to explore search

results, narrowing in on exactly the results they are looking for.](https://image.slidesharecdn.com/apachesolrtechdoc-150416051405-conversion-gate02/85/Apache-solr-tech-doc-9-320.jpg)