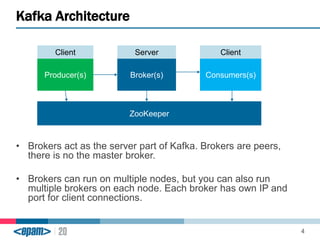

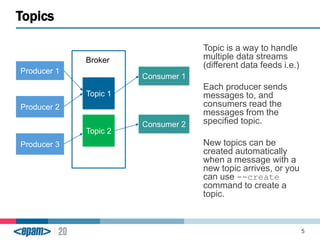

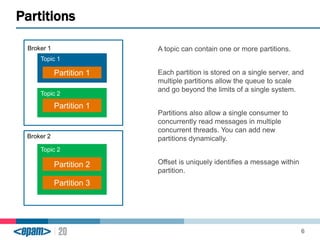

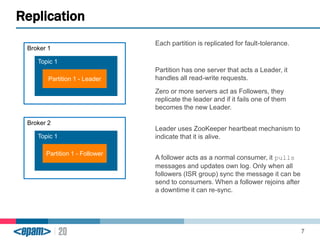

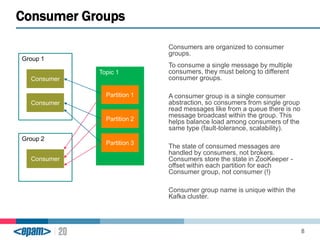

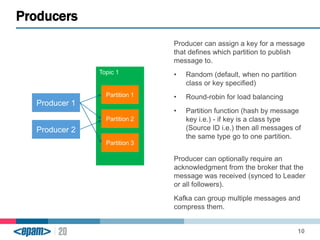

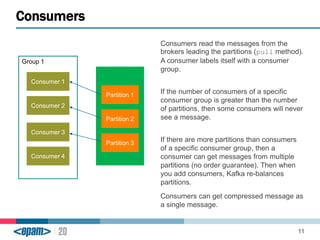

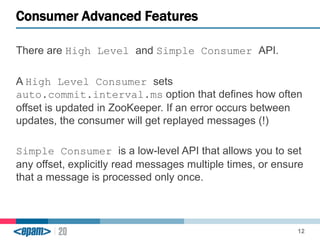

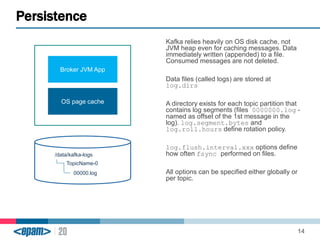

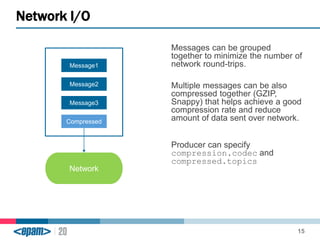

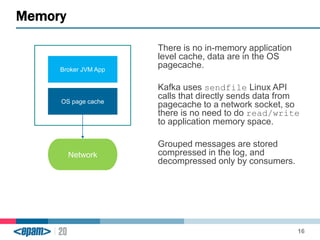

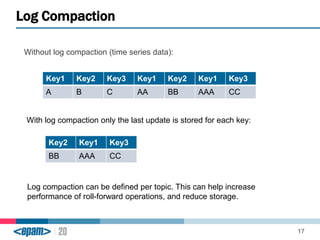

Apache Kafka is a scalable, fault-tolerant distributed messaging system built on the publish-subscribe model: producers write messages to topics, and consumers read from them. Each topic is divided into partitions, which brokers store as append-only logs that can be replicated across brokers, so messages remain durable if a broker fails. Consumers organized into a consumer group divide a topic's partitions among themselves, so each partition is read by one member of the group at a time. Kafka also supports message compression, log compaction, and retention policies that control how much data is kept and for how long.
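
To make partitions, consumer groups, and log compaction more concrete, here is a minimal toy model in plain Python. It does not use a Kafka client and is not how Kafka is implemented. The function names (`choose_partition`, `assign_partitions`, `compact`) are hypothetical, CRC32 is an assumed stand-in for the hash used by a real partitioner, and the round-robin assignment is a simplification of the strategies a real consumer group can use.

```python
import zlib
from typing import Dict, List, Optional, Tuple

Record = Tuple[str, Optional[str]]  # (key, value); a value of None is a tombstone


def choose_partition(key: bytes, num_partitions: int) -> int:
    """Map a key to a partition so the same key always lands on the same partition."""
    # Assumption: CRC32 is only a stand-in hash for this sketch.
    return zlib.crc32(key) % num_partitions


def assign_partitions(partitions: List[int], consumers: List[str]) -> Dict[str, List[int]]:
    """Spread partitions over the members of one consumer group, round-robin."""
    assignment: Dict[str, List[int]] = {c: [] for c in consumers}
    for i, partition in enumerate(partitions):
        # Each partition goes to exactly one consumer in the group.
        assignment[consumers[i % len(consumers)]].append(partition)
    return assignment


def compact(log: List[Record]) -> List[Record]:
    """Keep only the latest record per key, preserving the original log order."""
    last_index = {key: i for i, (key, _) in enumerate(log)}
    # Simplification: tombstoned keys are dropped immediately. Real Kafka keeps
    # tombstones for a configurable period before removing them.
    return [
        (key, value)
        for i, (key, value) in enumerate(log)
        if last_index[key] == i and value is not None
    ]


if __name__ == "__main__":
    log = [("a", "1"), ("b", "2"), ("a", "3"), ("b", None), ("c", "4")]
    print(compact(log))  # [('a', '3'), ('c', '4')]
    print(assign_partitions([0, 1, 2, 3, 4], ["c1", "c2"]))  # {'c1': [0, 2, 4], 'c2': [1, 3]}
    print(choose_partition(b"user-1", 6))
```

In the sketch, compaction keeps the last value written for `a`, removes `b` because its latest record is a tombstone, and leaves `c` untouched. A time- or size-based retention policy would instead discard the oldest records regardless of key.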