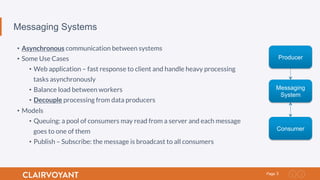

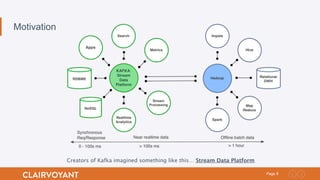

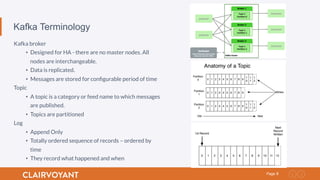

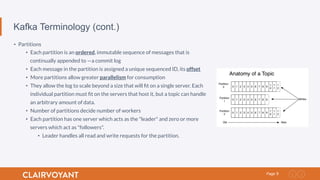

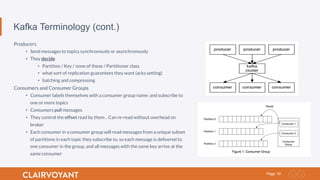

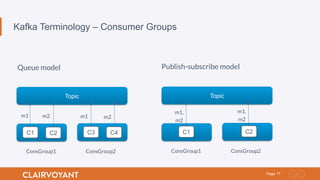

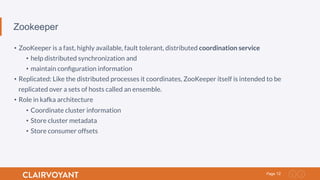

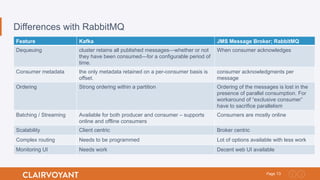

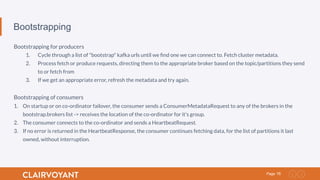

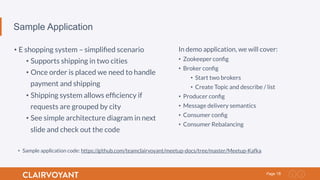

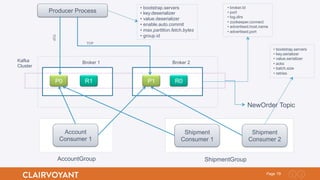

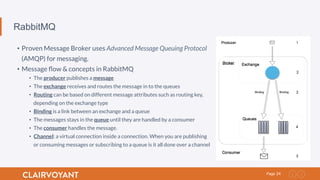

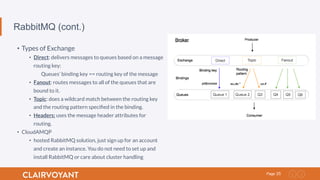

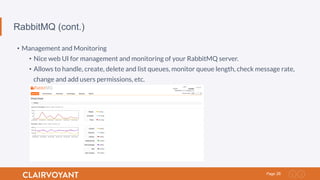

The document gives an overview of Kafka, an open-source message broker designed for asynchronous communication between systems, covering its architecture, features, and use cases. It explains Kafka's terminology and ZooKeeper's role in coordination, and compares Kafka with RabbitMQ, with emphasis on Kafka's scalability and message-handling capabilities. It also includes a sample application for an e-shopping system that demonstrates how Kafka works.
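
To make the idea of asynchronous communication through a broker concrete, here is a minimal in-memory sketch. It is not Kafka's API and not the sample application from the slides. The class `InMemoryTopicLog`, the topic name `orders`, and the reader names `billing` and `shipping` are all hypothetical, chosen only to echo the e-shopping scenario. It illustrates one idea: producers append messages to a per-topic log, and each group of readers tracks its own position in that log independently.

```python
from collections import defaultdict


class InMemoryTopicLog:
    """Toy stand-in for a broker (hypothetical, not Kafka's API)."""

    def __init__(self):
        self._logs = defaultdict(list)     # topic name -> append-only list of messages
        self._offsets = defaultdict(int)   # (group, topic) -> next offset to read

    def publish(self, topic, message):
        """Append a message to the topic and return the offset it was stored at."""
        log = self._logs[topic]
        log.append(message)
        return len(log) - 1

    def poll(self, group, topic, max_messages=10):
        """Return unread messages for this reader group and advance its offset."""
        start = self._offsets[(group, topic)]
        batch = self._logs[topic][start:start + max_messages]
        self._offsets[(group, topic)] = start + len(batch)
        return batch


broker = InMemoryTopicLog()

# The producer (for example, a checkout service) publishes without waiting for readers.
broker.publish("orders", {"order_id": 1, "item": "book"})
broker.publish("orders", {"order_id": 2, "item": "lamp"})

# Two independent reader groups each see every message, at their own pace.
billing_batch = broker.poll("billing", "orders")
shipping_batch = broker.poll("shipping", "orders")

# A second poll by the same group returns nothing new until more is published.
billing_again = broker.poll("billing", "orders")
```

Messages are not removed when read, so a new reader group can start from offset 0 and see the full history of the topic. A real deployment adds partitions, replication, and network clients, none of which this sketch attempts.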