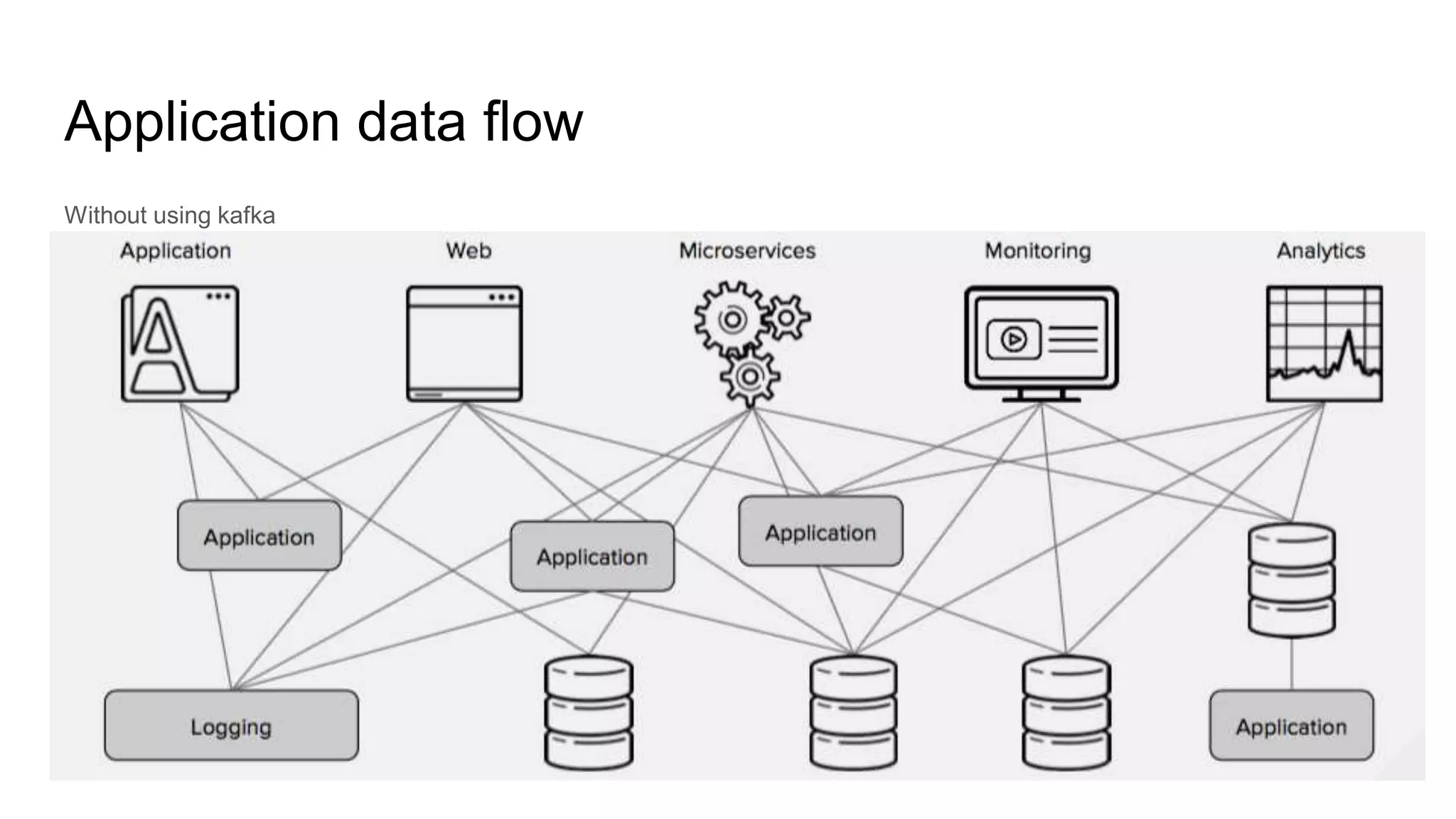

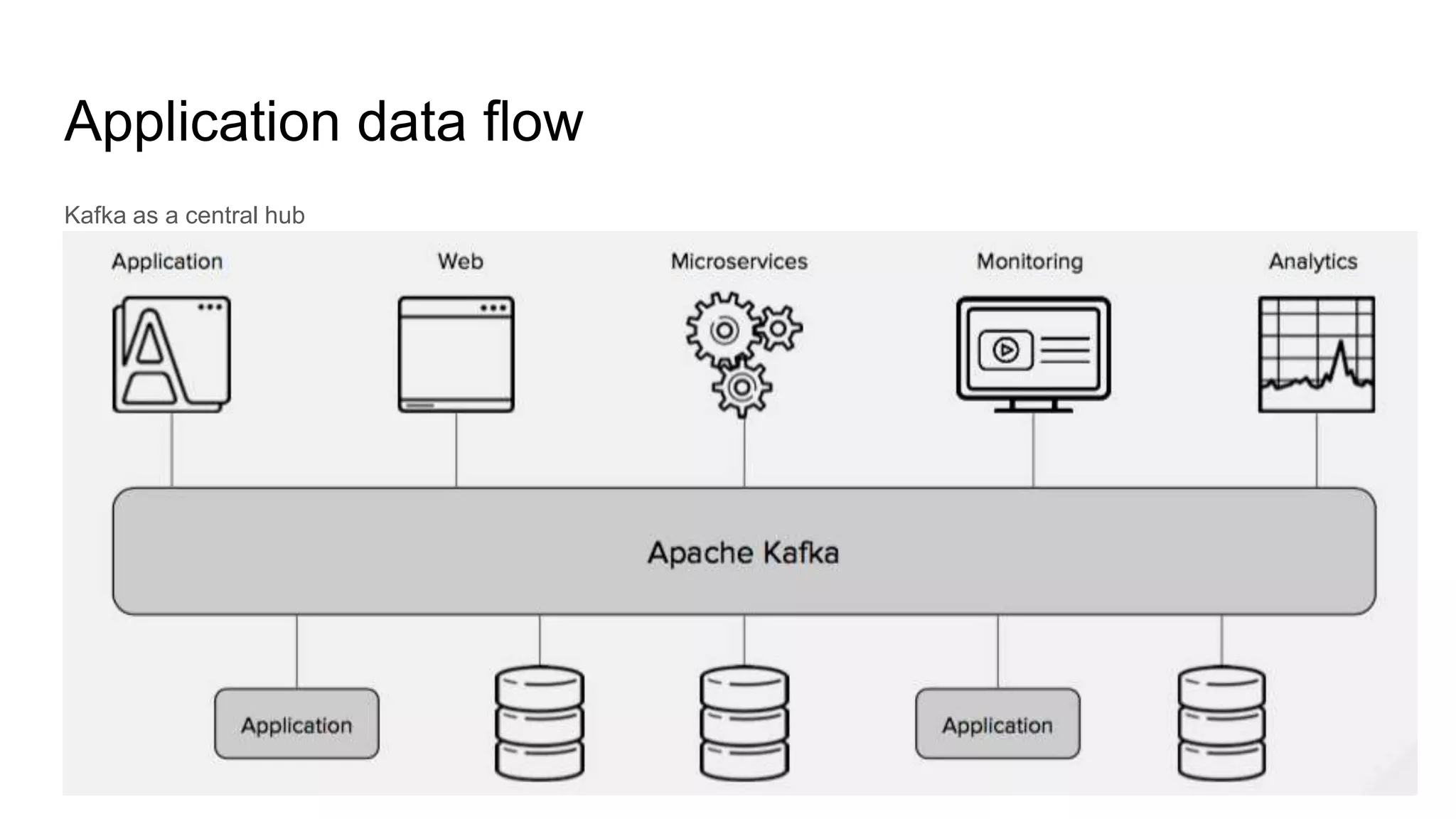

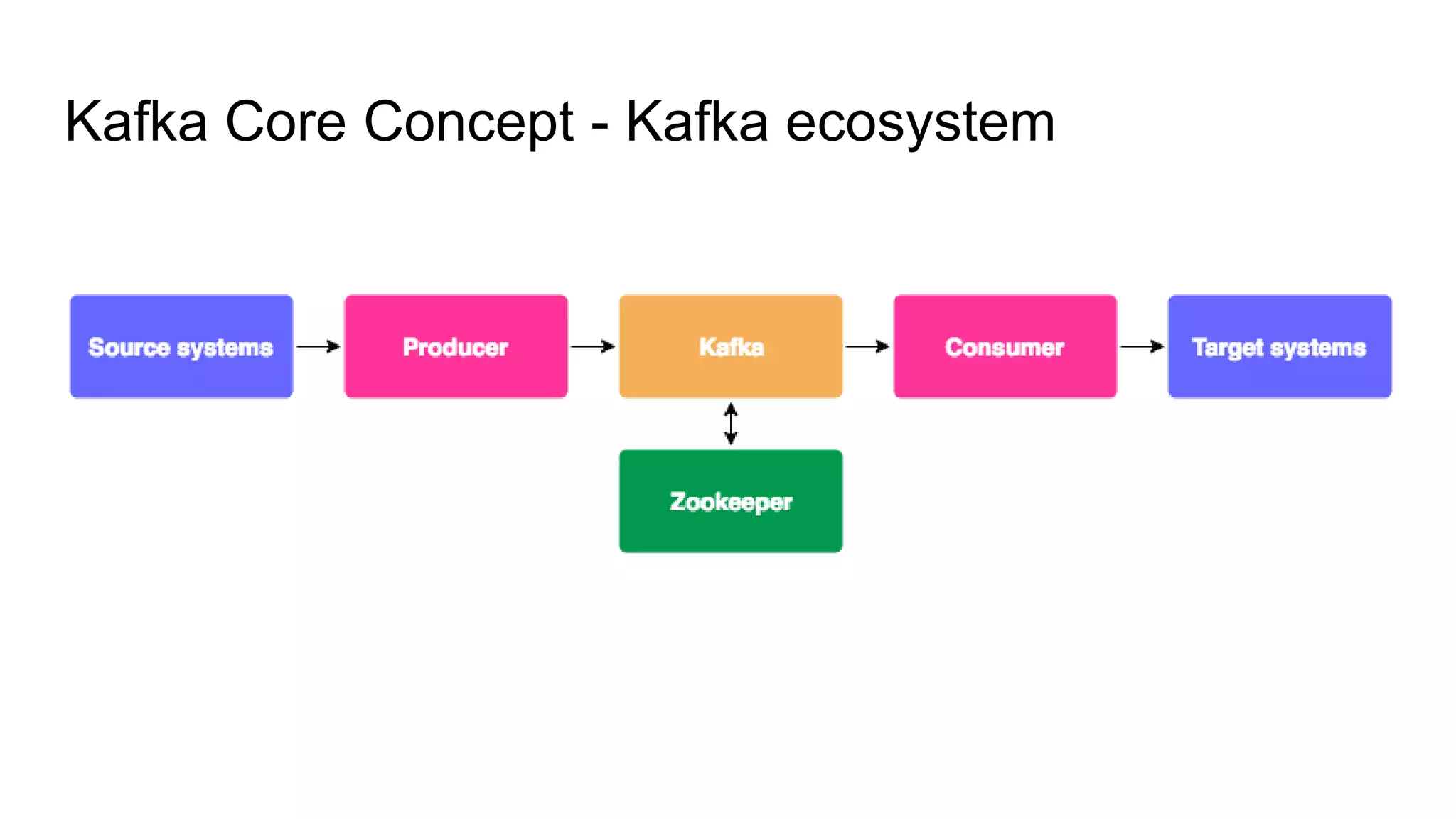

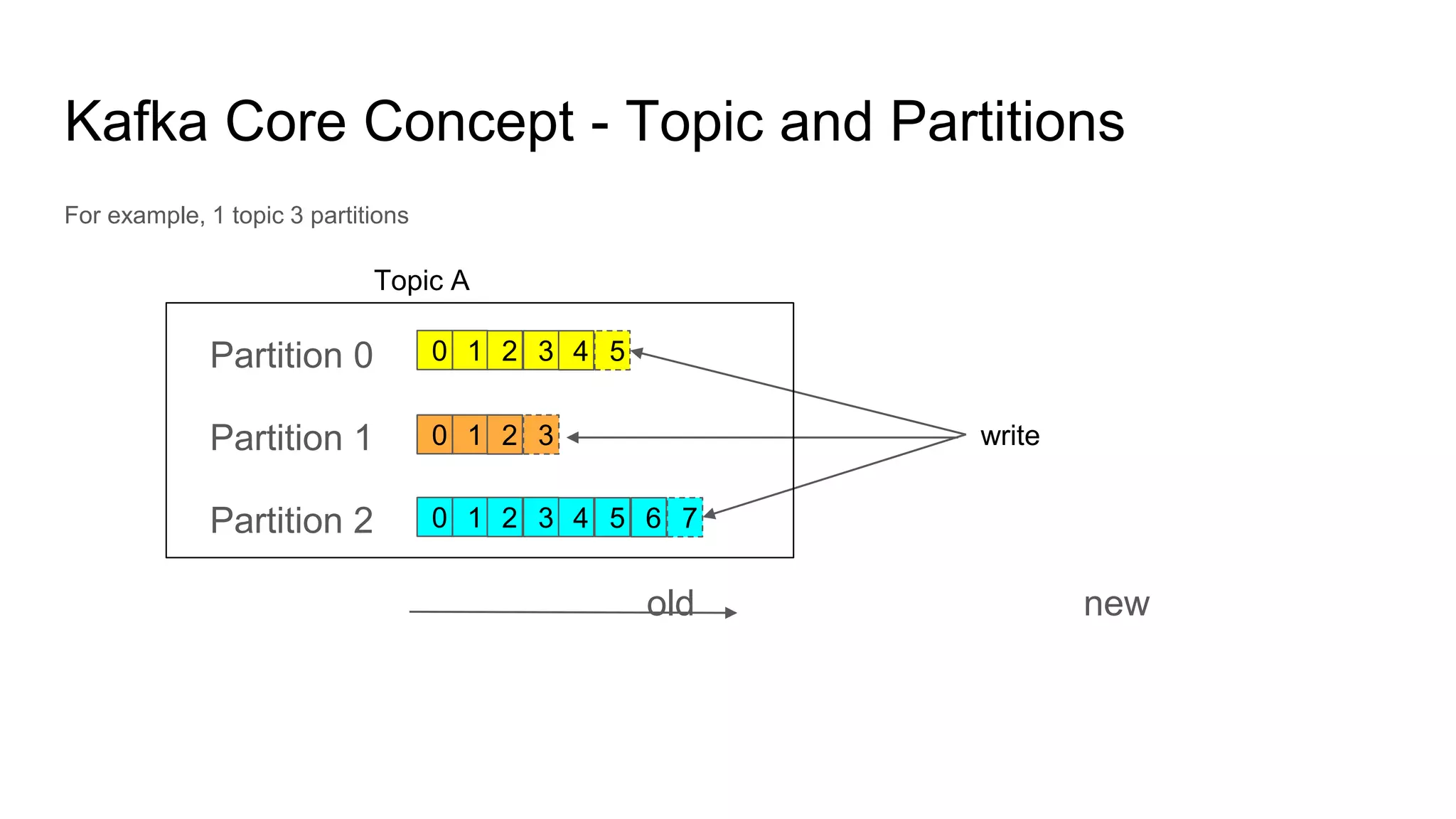

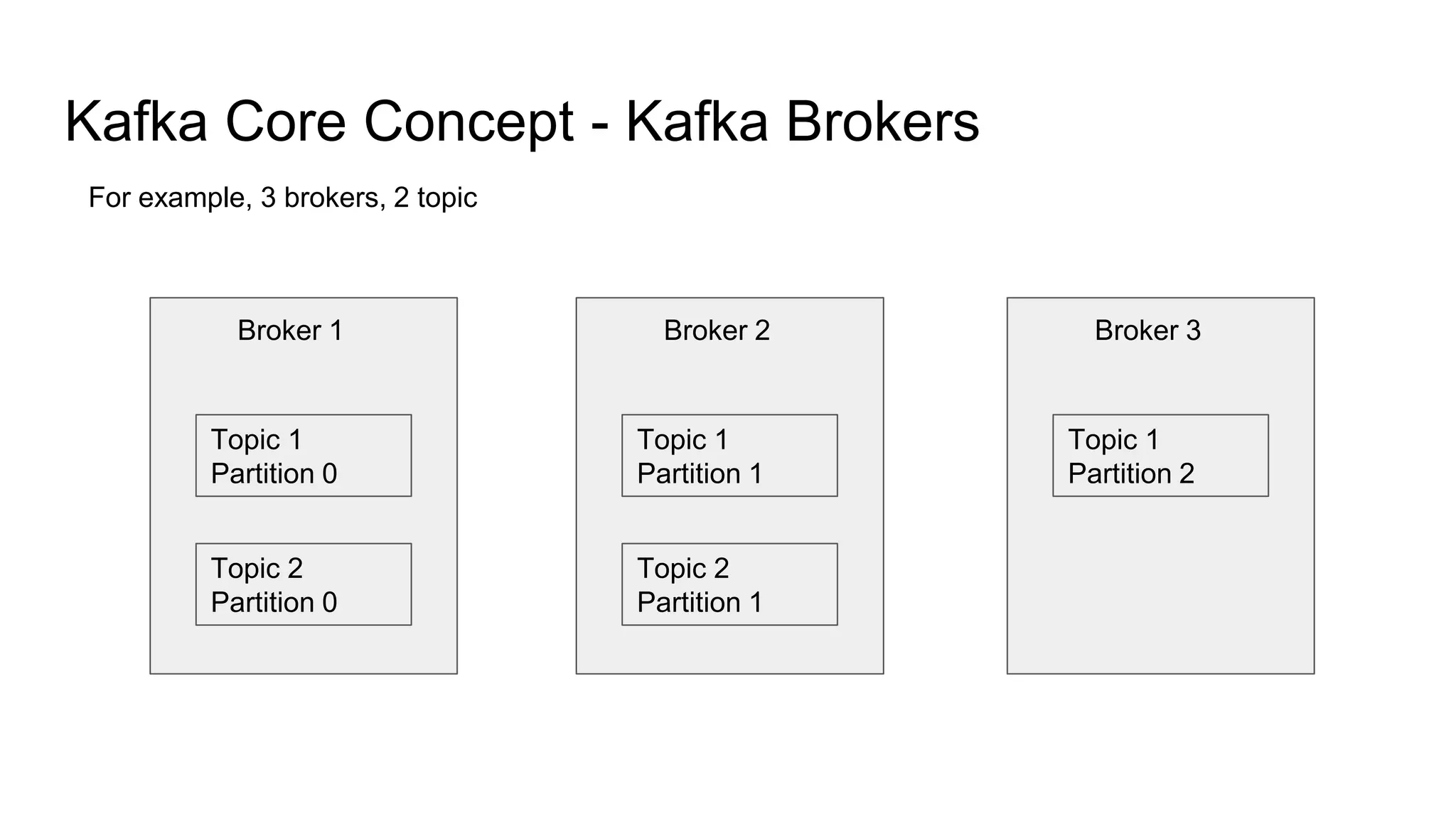

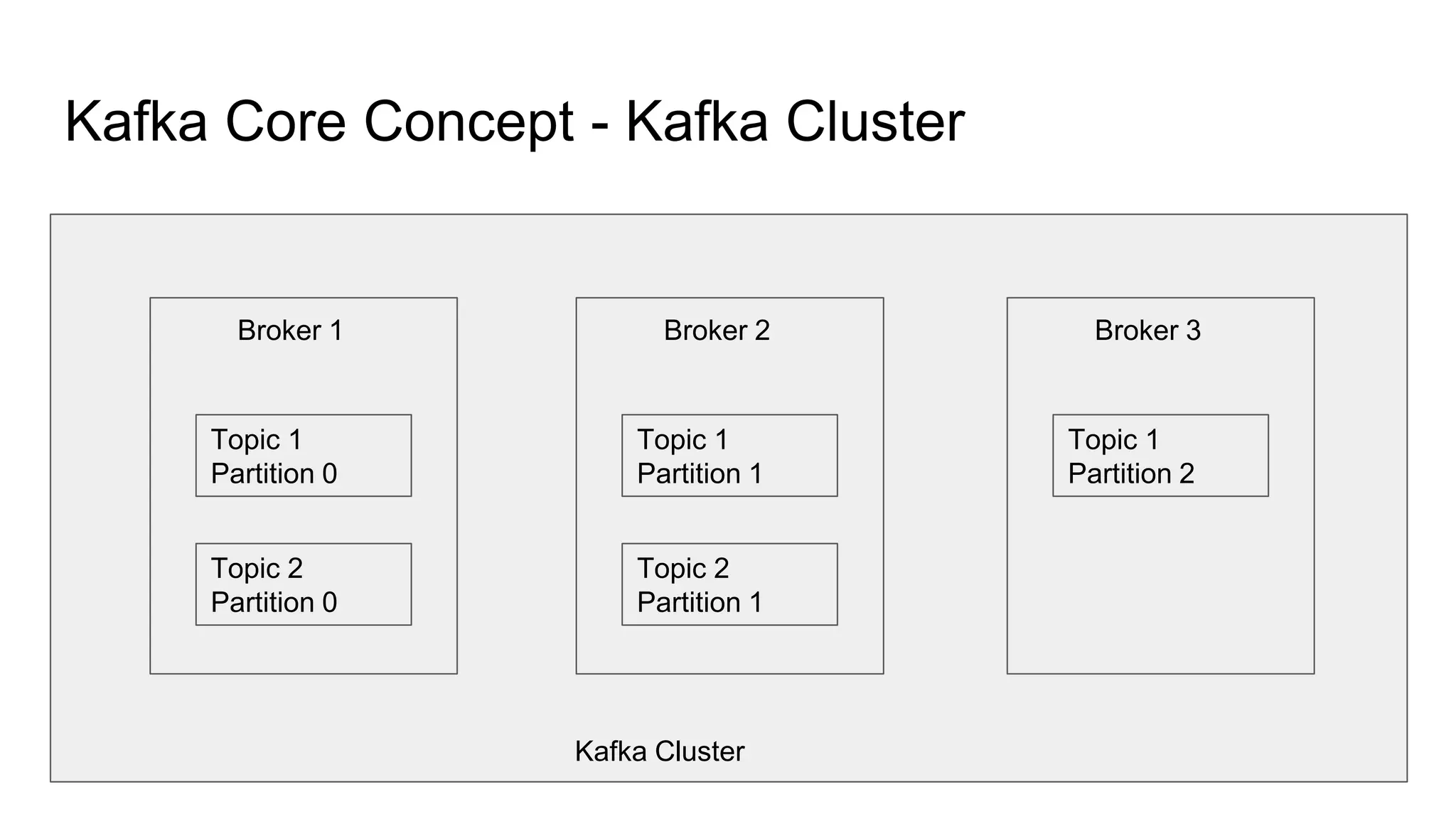

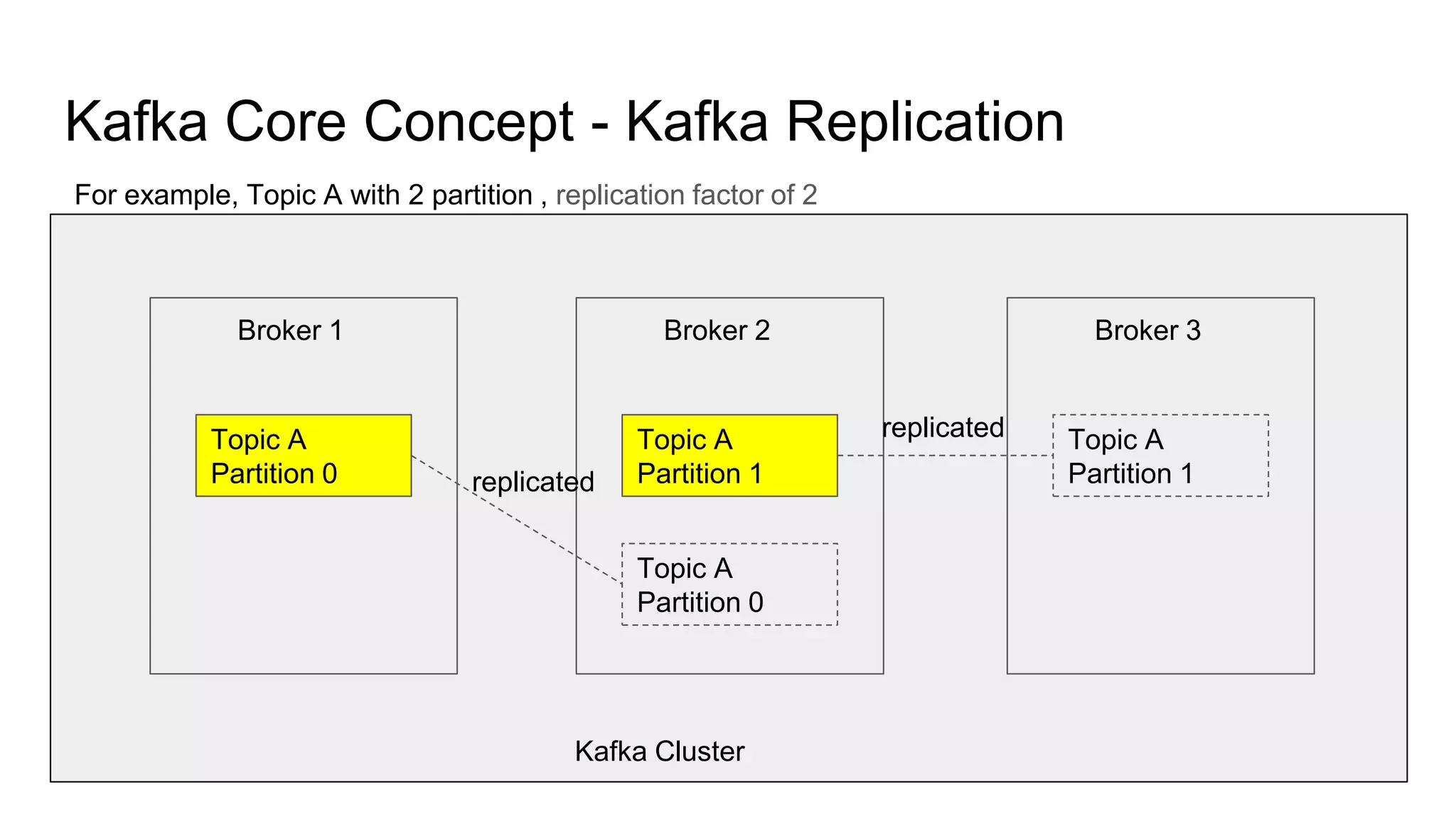

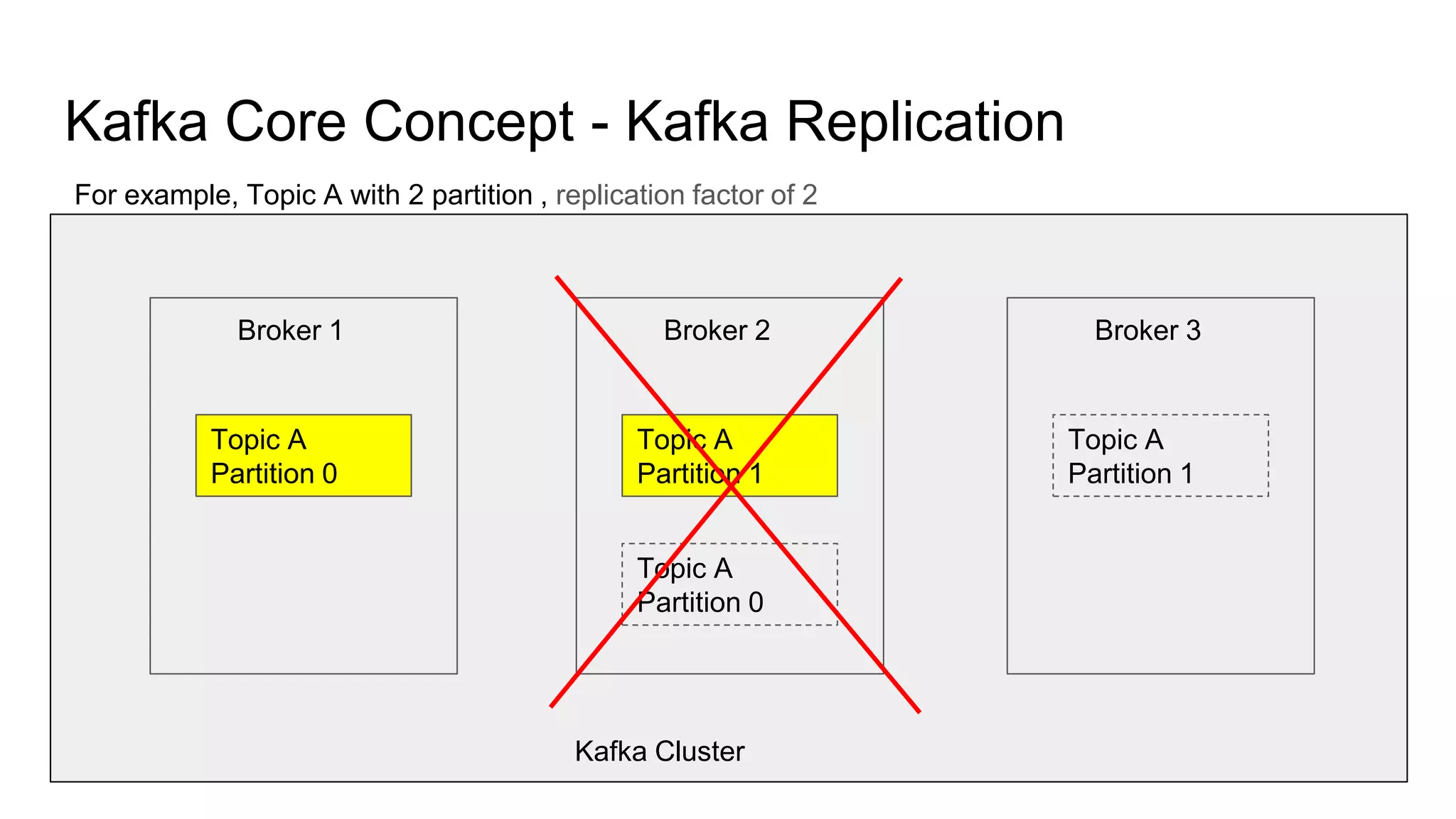

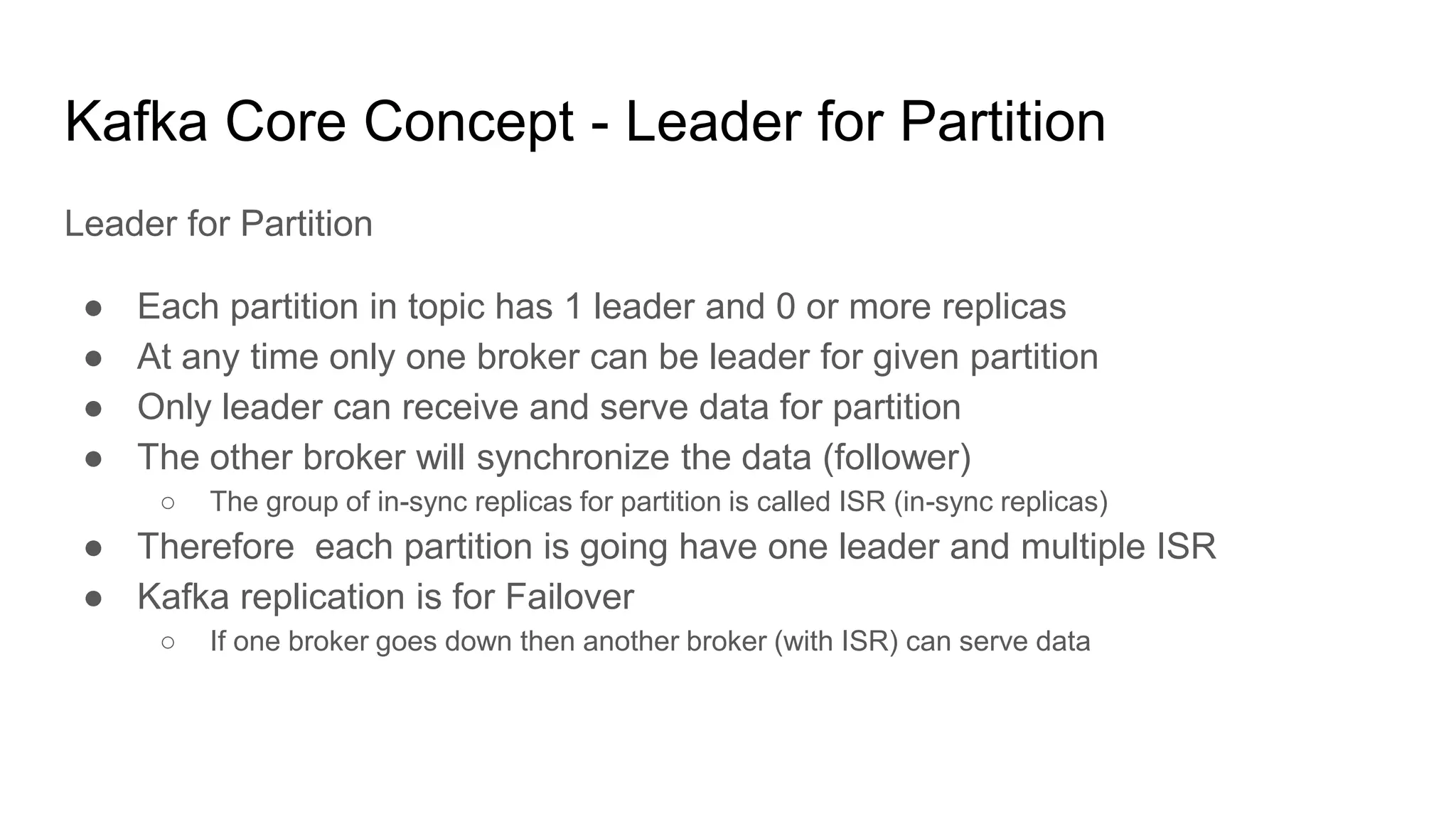

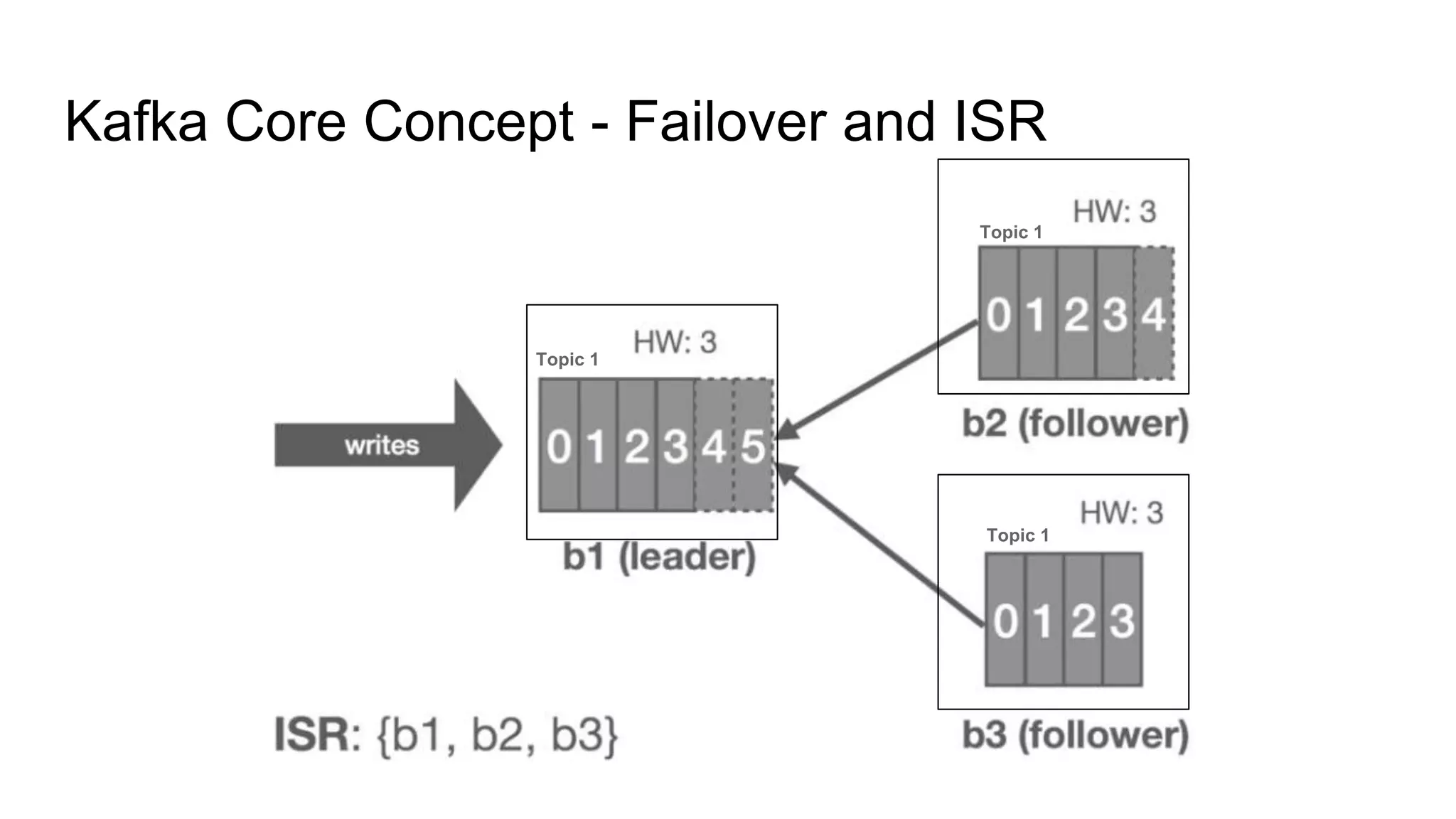

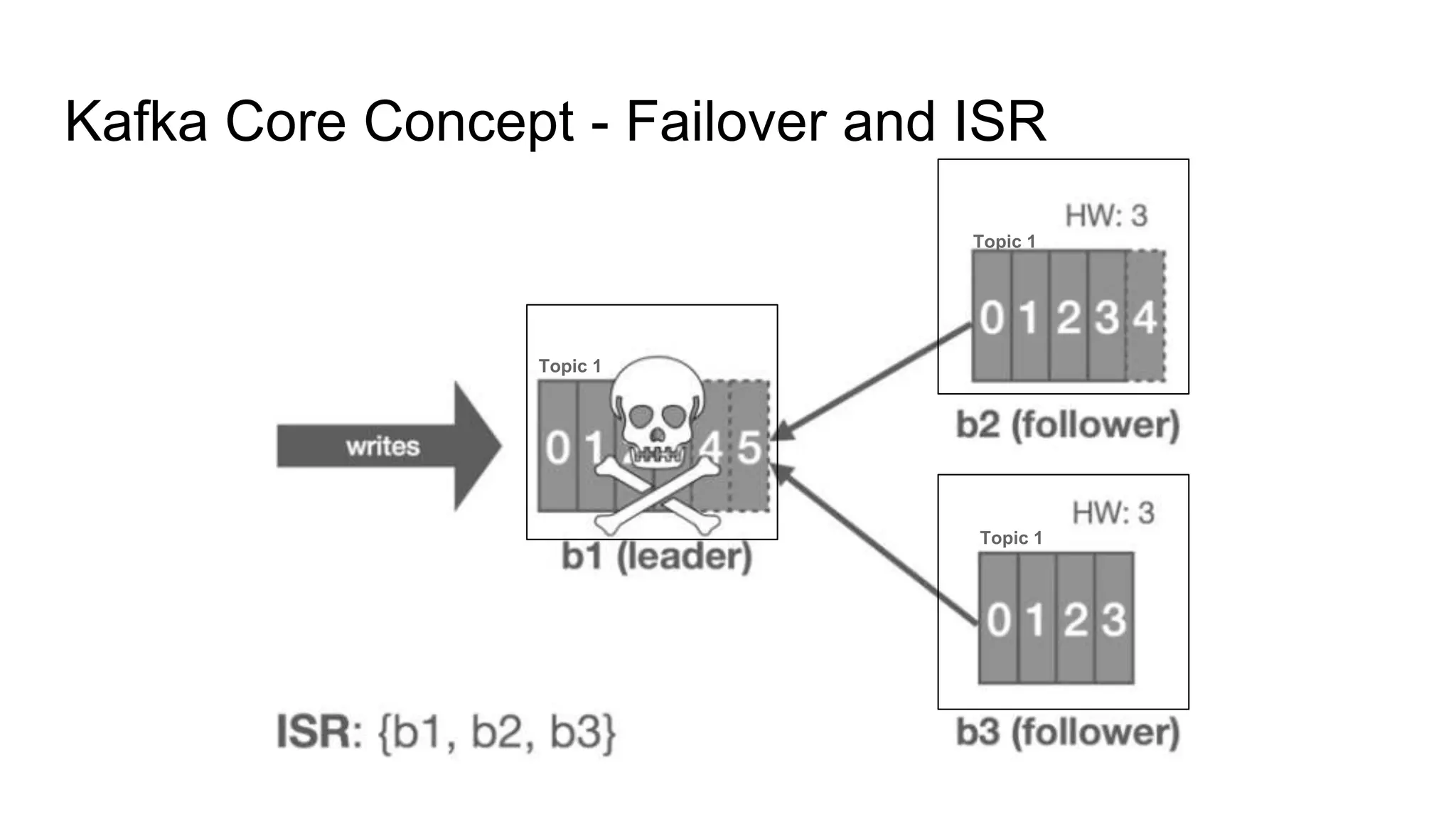

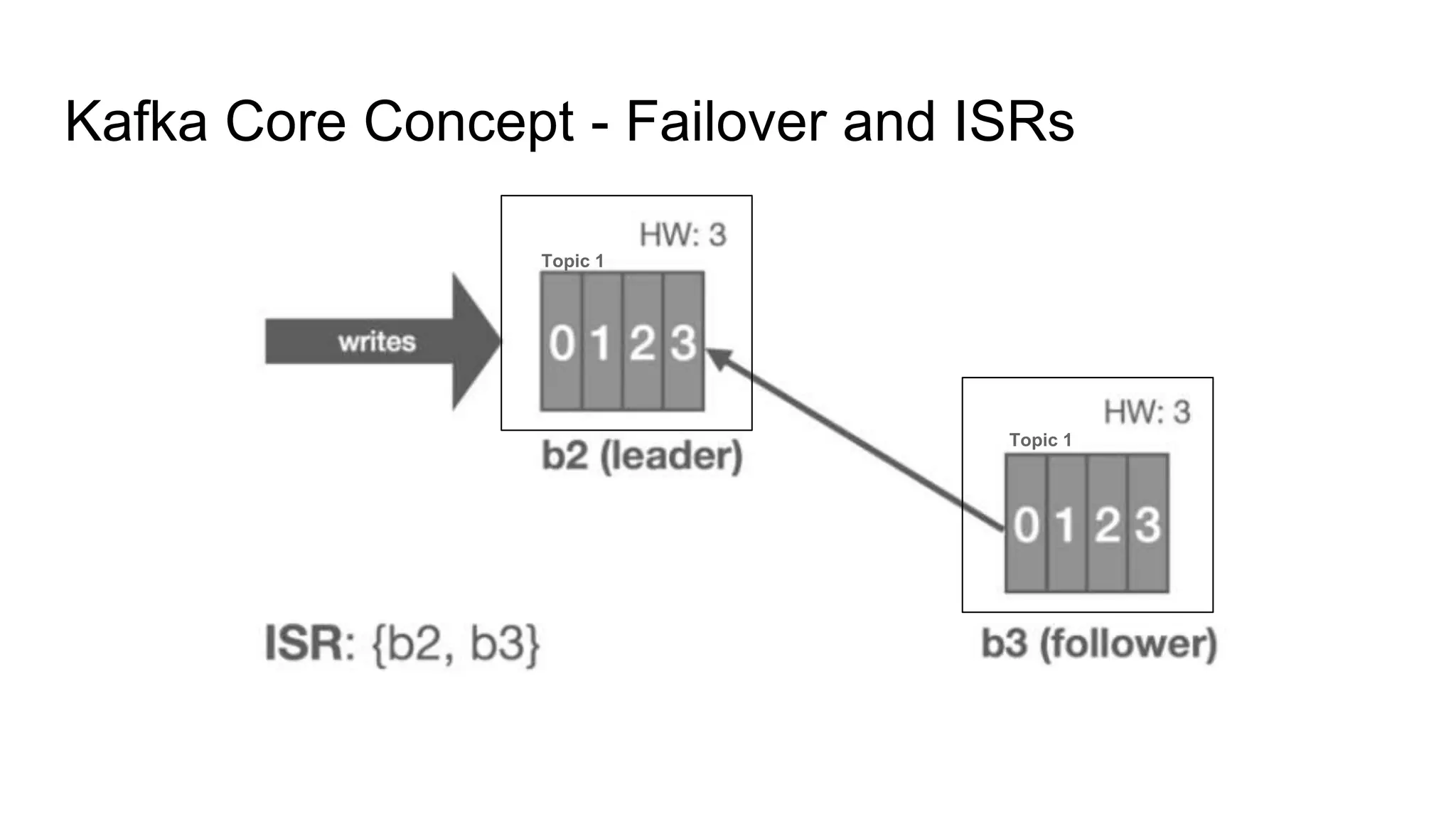

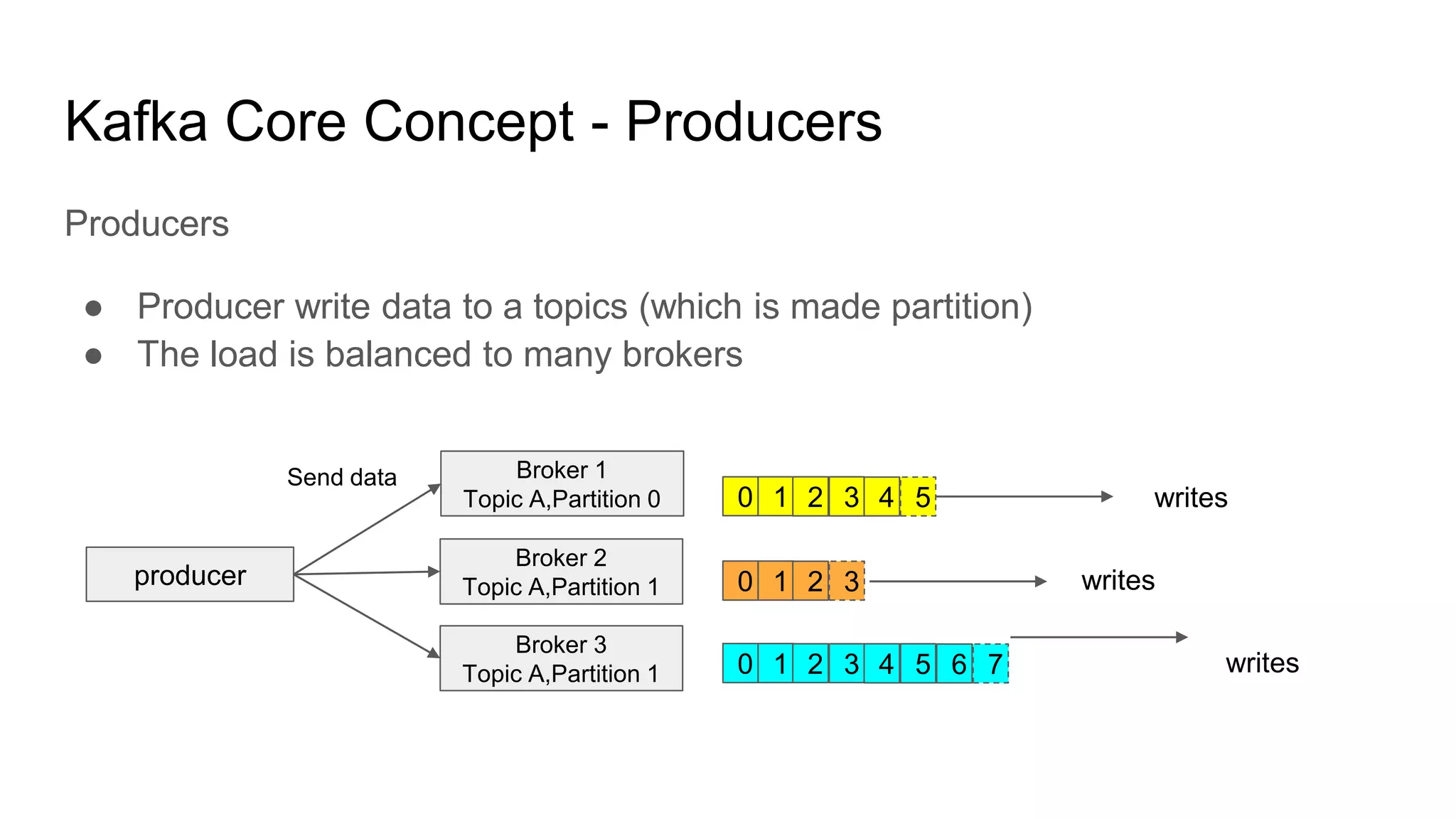

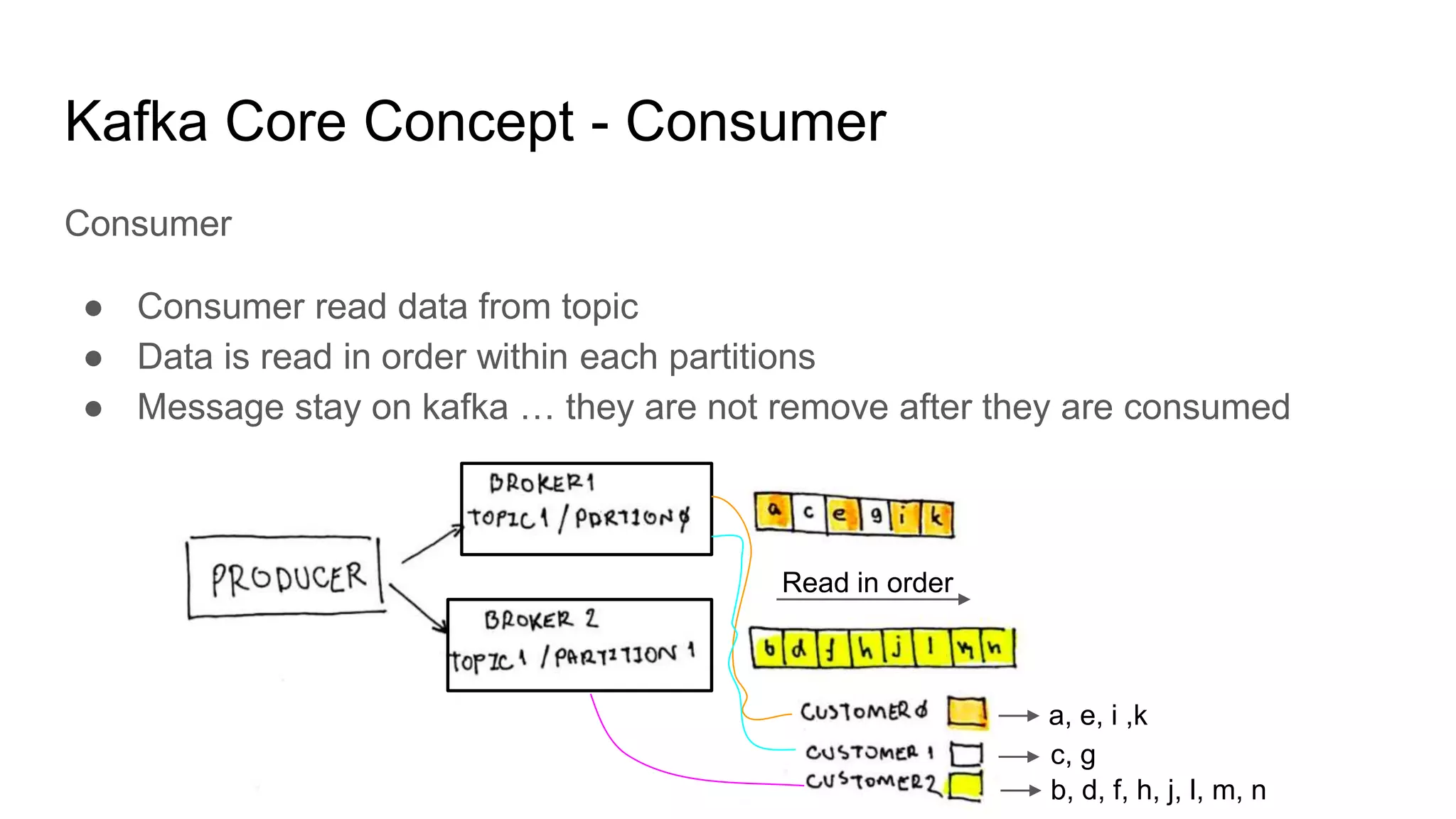

This document provides an overview of Apache Kafka. It begins by defining Kafka as a distributed streaming platform and messaging system. It then lists the agenda, which covers what Kafka is, why it is used, common use cases, major companies that use it, how it achieves high performance, and its core concepts. The core concepts explained are topics, partitions, brokers, replication, leaders, producers, and consumers. The document also provides examples to illustrate these concepts.
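
As a rough illustration of how some of the core concepts listed above fit together, the following is a minimal in-memory sketch, not Kafka itself and not taken from the slides. It models a topic as a fixed set of append-only partitions, a producer that routes records by key, and a consumer that reads one partition from an offset. The `ToyTopic` class, its method names, and the CRC32-based key routing are all assumptions made for this example; brokers, replication, and leader election are not modeled.

```python
import zlib


class ToyTopic:
    """Hypothetical in-memory stand-in for a topic with append-only partitions."""

    def __init__(self, name, num_partitions):
        self.name = name
        self.partitions = [[] for _ in range(num_partitions)]

    def partition_for(self, key):
        # Assumption: CRC32 is a stand-in hash, not the partitioner Kafka clients use.
        return zlib.crc32(key.encode("utf-8")) % len(self.partitions)

    def produce(self, key, value):
        """Append a record and return its (partition, offset)."""
        p = self.partition_for(key)
        self.partitions[p].append((key, value))
        return p, len(self.partitions[p]) - 1

    def consume(self, partition, offset):
        """Return every record in one partition from the given offset onward."""
        return self.partitions[partition][offset:]


topic = ToyTopic("orders", num_partitions=3)
placements = [
    topic.produce(key, value)
    for key, value in [("alice", "order-1"), ("bob", "order-2"), ("alice", "order-3")]
]

# Records with the same key land in the same partition, in the order produced.
alice_partition = placements[0][0]
for key, value in topic.consume(alice_partition, 0):
    print(alice_partition, key, value)
```

In this sketch, ordering is preserved only within a partition, which is why records sharing a key are routed to the same one. A consumer that remembers the last offset it read can resume from that point by passing it to `consume`.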