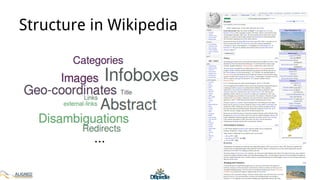

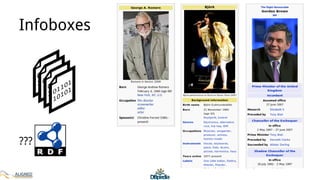

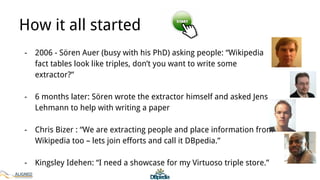

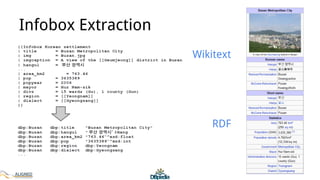

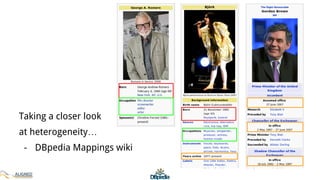

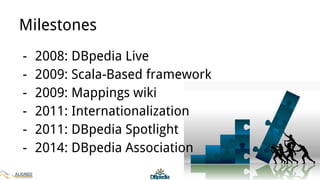

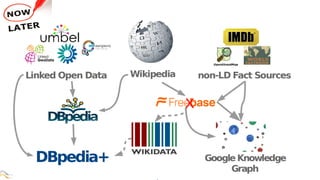

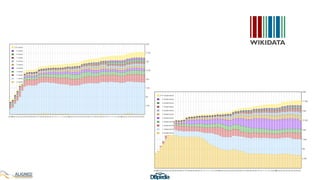

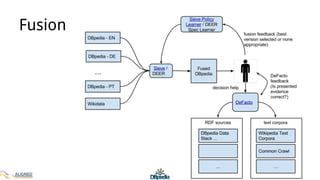

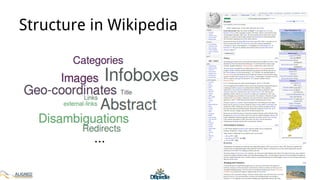

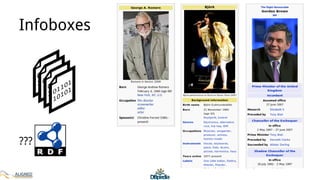

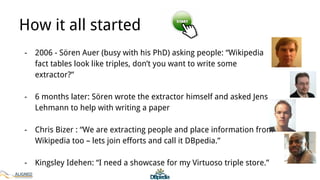

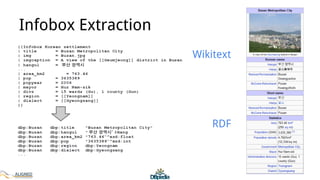

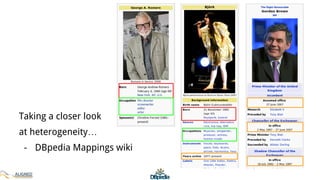

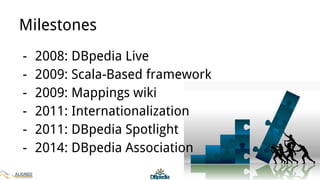

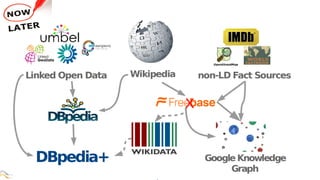

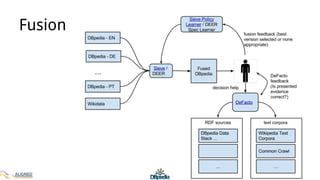

The document discusses the development of DBpedia, detailing its origins, milestones, and the collaborative efforts that led to its evolution. It highlights the extraction of structured data from Wikipedia, the growth in entities and triples over the years, and the need for DBpedia to adapt and integrate with enterprise knowledge. Additionally, it mentions ongoing projects and acknowledges contributors to the presentation.