FewShotImagewithSVM .pptx

•Download as PPTX, PDF•

0 likes•3 views

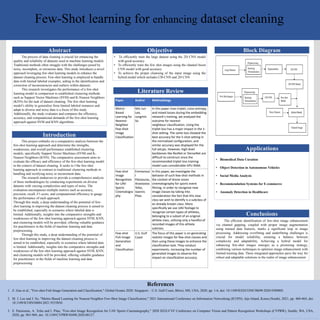

Few-Shot Learning for Enhancing Dataset Cleaning

References
1. Z. Guo et al., "Few-shot Fish Image Generation and Classification," Global Oceans 2020: Singapore – U.S. Gulf Coast, Biloxi, MS, USA, 2020, pp. 1-6, doi: 10.1109/IEEECONF38699.2020.9389005.
2. M. J. Lee and J. So, "Metric-Based Learning for Nearest-Neighbor Few-Shot Image Classification," 2021 International Conference on Information Networking (ICOIN), Jeju Island, Korea (South), 2021, pp. 460-464, doi: 10.1109/ICOIN50884.2021.9333850.
3. E. Patsiouras, A. Tefas and I. Pitas, "Few-shot Image Recognition for UAV Sports Cinematography," 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, WA, USA, 2020, pp. 965-969, doi: 10.1109/CVPRW50498.2020.00127.

Abstract
Data cleaning is crucial for improving the quality and reliability of the datasets used to train machine learning models. Traditional methods often struggle with noisy, incomplete, or erroneous data. This study introduces a novel approach that uses few-shot learning models to enhance the dataset cleaning process. Few-shot learning is employed to handle data with limited labeled examples, helping to identify and correct inconsistencies and outliers within datasets. The research compares the performance of a few-shot learning model with established classification methods, namely Support Vector Machines (SVM) and K-Nearest Neighbors (KNN), on the task of dataset cleaning. A particular focus is the few-shot model's ability to generalize from a small number of labeled instances and to adapt to diverse, noisy data. The study also compares the efficiency, accuracy, and computational cost of the few-shot approach against the SVM and KNN algorithms.
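As a rough illustration of the cleaning task described in the abstract, the sketch below compares a nearest-prototype few-shot classifier (class prototypes averaged from a small trusted support set, in the spirit of prototypical networks) with a leave-one-out KNN baseline for flagging likely mislabeled samples, and scores the flags with precision, recall, and F1. Everything here is an assumption for illustration: the function names, the toy 2-D "embeddings", the class names, and k = 3 are hypothetical, and SVM is omitted to keep the example dependency-free. It is not the authors' implementation.

```python
import math
from collections import Counter

# Hypothetical toy data: 2-D "embeddings" standing in for image features.
# SUPPORT is a small trusted set (2 shots per class); POOL is the noisy
# dataset to clean, stored as (vector, given_label) pairs.
SUPPORT = {
    "cat": [[0.0, 0.0], [0.2, 0.1]],
    "dog": [[5.0, 5.0], [5.1, 4.9]],
}
POOL = [
    ([0.1, 0.0], "cat"),
    ([0.3, 0.2], "cat"),
    ([0.0, 0.3], "dog"),  # mislabeled on purpose
    ([0.2, 0.2], "cat"),
    ([5.0, 5.2], "dog"),
    ([4.8, 5.0], "dog"),
    ([5.2, 4.9], "cat"),  # mislabeled on purpose
    ([5.1, 5.1], "dog"),
]
TRULY_NOISY = {2, 6}


def class_prototypes(support):
    """Mean vector per class, computed from the few trusted support examples."""
    return {
        label: [sum(coords) / len(vecs) for coords in zip(*vecs)]
        for label, vecs in support.items()
    }


def flag_by_prototype(pool, support):
    """Few-shot cleaning (hypothetical helper): flag samples whose given
    label differs from the class of their nearest prototype."""
    protos = class_prototypes(support)
    flagged = set()
    for idx, (vec, label) in enumerate(pool):
        predicted = min(protos, key=lambda c: math.dist(vec, protos[c]))
        if predicted != label:
            flagged.add(idx)
    return flagged


def flag_by_knn(pool, k=3):
    """KNN baseline (hypothetical helper): flag samples whose given label
    disagrees with the majority label of their k nearest neighbors in the
    noisy pool, excluding the sample itself (leave-one-out)."""
    flagged = set()
    for i, (vec, label) in enumerate(pool):
        neighbors = sorted(
            (math.dist(vec, other), other_label)
            for j, (other, other_label) in enumerate(pool)
            if j != i
        )[:k]
        majority, _ = Counter(lbl for _, lbl in neighbors).most_common(1)[0]
        if majority != label:
            flagged.add(i)
    return flagged


def precision_recall_f1(flagged, truly_noisy):
    """Score the flagged indices against the known noisy indices."""
    tp = len(flagged & truly_noisy)
    precision = tp / len(flagged) if flagged else 0.0
    recall = tp / len(truly_noisy) if truly_noisy else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if precision + recall
        else 0.0
    )
    return precision, recall, f1


if __name__ == "__main__":
    # On this well-separated toy data both methods flag indices 2 and 6.
    for name, flags in [
        ("few-shot prototype", flag_by_prototype(POOL, SUPPORT)),
        ("KNN (k=3)", flag_by_knn(POOL, k=3)),
    ]:
        print(name, sorted(flags), precision_recall_f1(flags, TRULY_NOISY))
```

One design difference worth noting: the KNN baseline votes among neighbors whose labels may themselves be wrong, so it can be misled when noise is dense, whereas the prototype approach relies only on the small trusted support set.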
Introduction
This project embarks on a comparative analysis between the few-shot learning approach and established classification models, specifically Support Vector Machines (SVM) and K-Nearest Neighbors (KNN). The comparative assessment aims to evaluate the efficacy and efficiency of the few-shot learning model in the context of dataset cleaning. It seeks to determine the strengths, weaknesses, and overall performance of the few-shot learning approach, in contrast to traditional methods, when handling and rectifying noisy or inconsistent data. The research aims to provide a comprehensive analysis of these methodologies by conducting experiments on diverse datasets with varying complexity and types of noise. The evaluation uses multiple metrics, including accuracy, precision, recall, F1-score, and computational efficiency, to gauge the performance of each approach.

This study aims to establish a deeper understanding of the potential of few-shot learning to improve the dataset cleaning process, especially in scenarios where labeled data is limited. It will also provide insights into the comparative strengths and weaknesses of the few-shot learning approach against SVM, KNN, and clustering models, offering guidance for practitioners in machine learning and data preprocessing.

Literature Review

| Paper | Author(s) | Methodology |
| --- | --- | --- |
| Metric-Based Learning for Nearest-Neighbor Few-Shot Image Classification | Min Jun Lee, Jungmin So | Trains the embedding network with triplet, cross-entropy, and mixed losses and analyzes the results of nearest-neighbor classification. The triplet loss has a major impact in the 1-shot setting; the same loss gave the best 5-shot accuracy in the normalized configuration, and accuracy was similar across the full setups. However, larger backbones such as ResNet or DenseNet are difficult to use, because the proposed triplet-loss training consumes considerable GPU memory. |
| Few-shot Image Recognition for UAV Sports Cinematography | Emmanouil Patsiouras, Anastasios Tefas, Ioannis Pitas | Investigates the behavior of few-shot methods in drone-vision cinematography for filming sports events, recognizing new image classes while taking into account that the new class is a subclass of an already known class. Specifically, UAV footage is used to recognize certain types of athletes belonging to a subset of an original athlete class, using only a handful of recorded images of that subclass. |
| Few-shot Fish Image Generation and Classification | Z. Guo et al. | Generates realistic images for few-shot classes and then uses these images to enhance classification. Experiments increase the number of generated images to observe the effect on classification accuracy. |

Objectives
• To efficiently train a 2D CNN model on the large dataset with good accuracy
• To efficiently train a channel-boosted CNN (CB-CNN) model on the few-shot images with good accuracy
• To achieve proper cleansing of the input images using a hybrid model that combines the CB-CNN and the 2D CNN

Block Diagram
[Block diagram figure not recoverable from the slide text.]

Applications
• Biomedical Data Curation
• Object Detection in Autonomous Vehicles
• Social Media Analysis
• Recommendation Systems for E-commerce
• Anomaly Detection in Healthcare

Conclusions
Efficient few-shot image enhancement via channel gapping, coupled with proper image augmentation using features learned from the training data, marks a significant step forward in image processing. Addressing overfitting and underfitting is crucial for model reliability, because it ensures a balance between model complexity and adaptability. A hybrid model for enhancing few-shot images is a promising strategy, combining several techniques to optimize image enhancement with limited training data. These integrated approaches pave the way for robust and adaptable image enhancement solutions.
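The first Literature Review entry (Lee and So) trains an embedding network with a triplet loss and then classifies queries by their nearest neighbor in embedding space, with and without normalization. A minimal sketch of those two pieces follows, assuming the common squared-Euclidean hinge formulation max(0, ||a - p||^2 - ||a - n||^2 + margin) and L2-normalized embeddings. The margin of 0.2 and all function names are hypothetical, the embedding network itself is not shown, and the paper's exact formulation may differ.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length (returned unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Hinge triplet loss with squared Euclidean distances:
    max(0, ||a - p||^2 - ||a - n||^2 + margin). The margin is an assumption."""
    d_ap = sum((a - p) ** 2 for a, p in zip(anchor, positive))
    d_an = sum((a - n) ** 2 for a, n in zip(anchor, negative))
    return max(0.0, d_ap - d_an + margin)


def nearest_neighbor_classify(query, support, normalize=True):
    """1-nearest-neighbor label over a support set of (embedding, label)
    pairs, optionally after L2-normalizing every embedding."""
    if normalize:
        query = l2_normalize(query)
        support = [(l2_normalize(emb), label) for emb, label in support]
    _, label = min(support, key=lambda item: math.dist(query, item[0]))
    return label


if __name__ == "__main__":
    # Hypothetical 2-way 1-shot support set of already-embedded images.
    support = [([10.0, 0.0], "a"), ([0.0, 1.0], "b")]
    query = [1.0, 0.9]
    print(nearest_neighbor_classify(query, support))                   # a
    print(nearest_neighbor_classify(query, support, normalize=False))  # b
    print(triplet_loss([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], margin=0.5))  # 0.5
```

In this toy case normalization changes the prediction: the support vector for "a" is far from the query in raw space because of its large magnitude, but after normalization only direction matters, and the query's direction is slightly closer to "a". This is one way a normalized configuration can behave differently from an unnormalized one.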