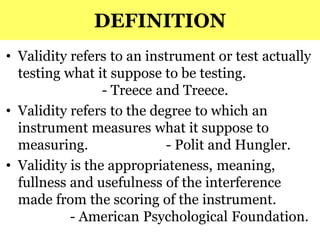

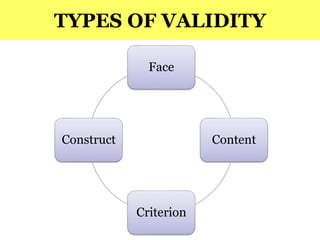

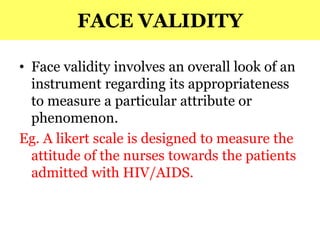

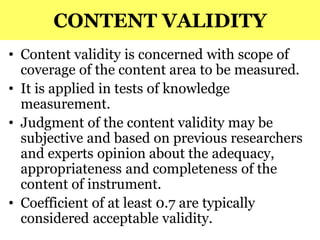

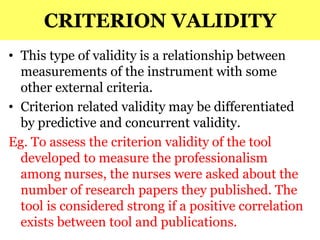

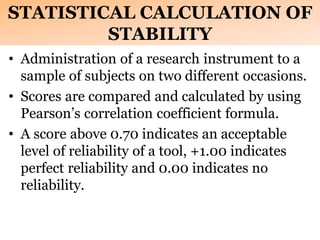

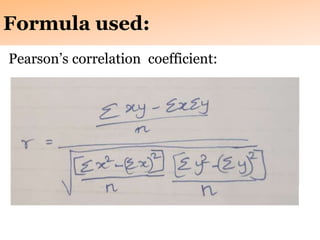

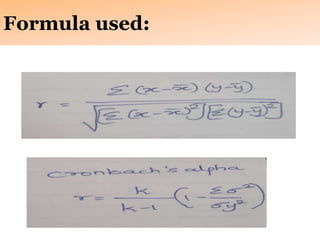

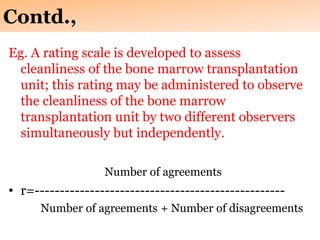

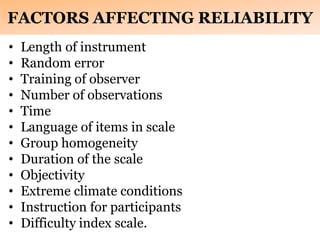

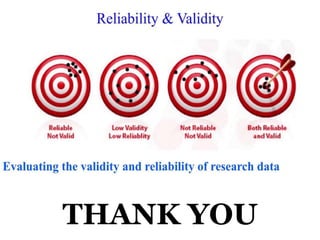

This document discusses the validity and reliability of research tools. Validity is the degree to which a tool measures what it is intended to measure. There are four main types of validity: face validity, content validity, criterion validity, and construct validity.

Reliability is the consistency of a measurement tool, and it has three aspects: stability, internal consistency, and equivalence. Stability assesses whether a tool gives consistent results over time. Internal consistency examines whether the items within a tool agree with one another. Equivalence evaluates whether different raters (or parallel forms of the tool) produce consistent results. Factors such as the length of the tool, the training of the people administering it, and the clarity of its instructions can affect its reliability. Validity and reliability are both needed for a research tool to produce accurate and reproducible results.