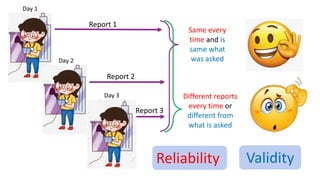

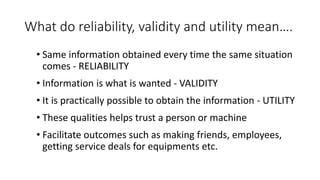

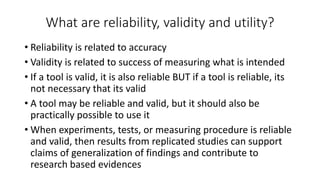

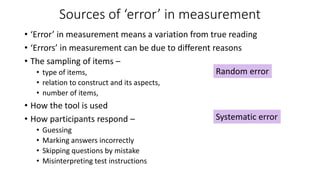

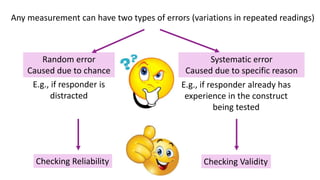

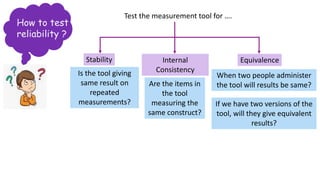

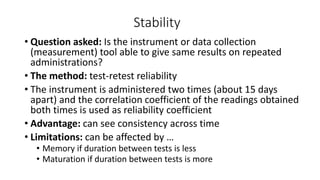

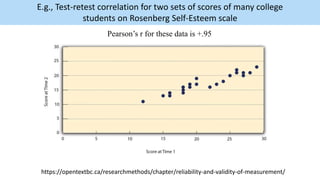

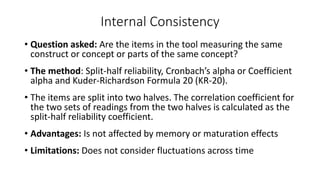

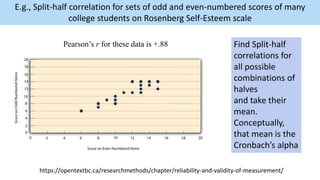

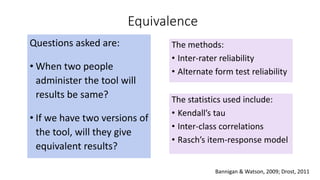

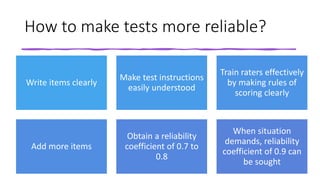

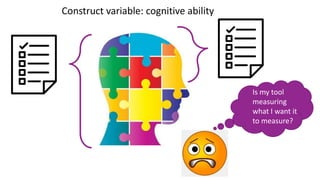

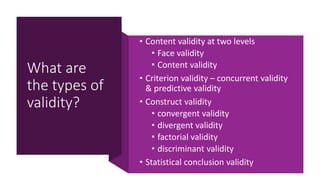

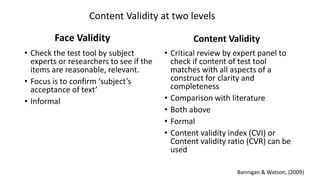

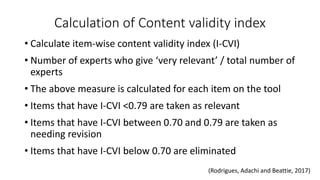

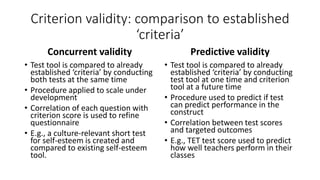

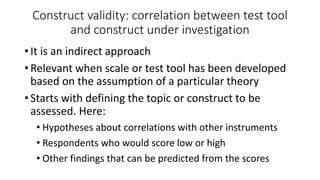

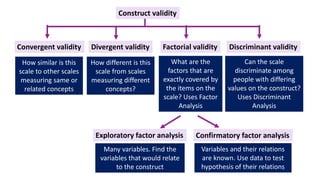

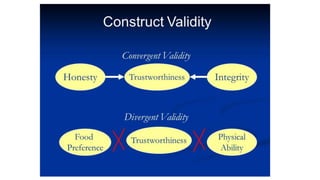

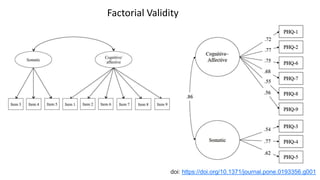

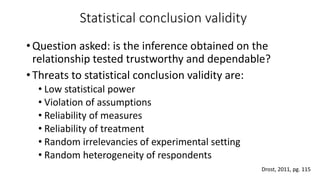

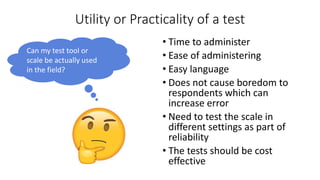

The document covers three properties of a sound measurement tool in research: reliability, validity, and utility. Reliability means the tool gives consistent results, validity means it measures what it is intended to measure, and utility means it is practical to use. The document reviews methods for establishing reliability, including test-retest reliability and internal consistency, and then the main types of validity: content validity, criterion validity, and construct validity. It closes with the factors that determine the utility of a measurement tool, such as administration time and cost.
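
As an illustration of the two reliability methods named above, here is a minimal Python sketch. It assumes the common conventions of estimating test-retest reliability with a Pearson correlation between two administrations, and internal consistency with Cronbach's alpha. The source does not say which statistics it uses, so both choices, the function names `pearson_r` and `cronbach_alpha`, and all scores below are illustrative assumptions rather than material from the document.

```python
from statistics import mean, variance


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ss_x = sum((a - mx) ** 2 for a in x)
    ss_y = sum((b - my) ** 2 for b in y)
    return cov / (ss_x * ss_y) ** 0.5


def cronbach_alpha(items):
    """Cronbach's alpha for a list of items.

    `items` holds one list of scores per item; position i in every
    list belongs to the same respondent.
    """
    k = len(items)
    # Sum of the sample variances of the individual items.
    sum_item_vars = sum(variance(scores) for scores in items)
    # Sample variance of each respondent's total score.
    totals = [sum(resp) for resp in zip(*items)]
    total_var = variance(totals)
    return k / (k - 1) * (1 - sum_item_vars / total_var)


# Hypothetical data: the same five respondents tested twice.
first_administration = [10, 12, 14, 16, 18]
second_administration = [11, 13, 13, 17, 19]
test_retest = pearson_r(first_administration, second_administration)

# Hypothetical data: three questionnaire items, five respondents each.
item_scores = [
    [3, 4, 5, 2, 4],
    [3, 5, 4, 2, 5],
    [2, 4, 5, 3, 4],
]
alpha = cronbach_alpha(item_scores)

print(f"test-retest r = {test_retest:.2f}")
print(f"Cronbach's alpha = {alpha:.2f}")
```

Values closer to 1 indicate more consistent scores in both cases. The threshold that counts as acceptable depends on the field and the purpose of the instrument, so none is assumed here.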