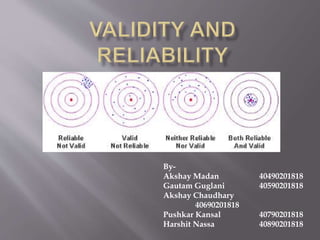

The document discusses different types of validity and reliability in psychological testing. It defines validity as the extent to which a test measures what it is intended to measure. There are several types of validity discussed, including content validity, face validity, criterion validity, construct validity, external validity, and internal validity. Reliability is defined as the degree to which a test consistently measures a construct. Types of reliability covered include test-retest reliability, parallel-forms reliability, internal consistency reliability, and inter-observer reliability.