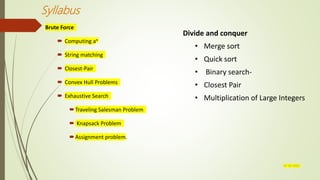

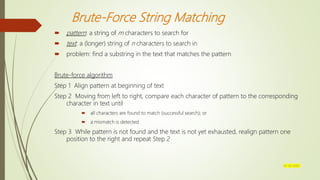

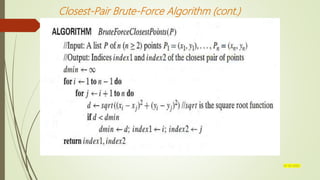

This document gives an overview of brute-force and divide-and-conquer algorithms. It discusses brute-force algorithms such as computing a^n, string matching, the closest-pair problem, and the convex-hull problem, as well as exhaustive-search approaches to the traveling salesman problem and the knapsack problem, and it analyzes the time efficiency of each. It then turns to the divide-and-conquer approach, with examples such as mergesort, quicksort, and matrix multiplication, and provides pseudocode and analysis for mergesort.

![Brute-Force Sorting Algorithm

Selection Sort

Scan the array to find its smallest element and swap it with the first element. Then, starting with the second element, scan the elements to its right to find the smallest among them and swap it with the second element.

In general, on pass i (0 ≤ i ≤ n-2), find the smallest element in A[i..n-1] and swap it with A[i].

07-03-2022](https://image.slidesharecdn.com/unit2-220307075928/85/Unit-2-4-320.jpg)
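The passes described on this slide can be sketched in Python as follows. This is a minimal illustration, not code from the slides; the function name `selection_sort` and the variable `smallest` are chosen here for readability.

```python
def selection_sort(a):
    """Sort the list `a` in place in ascending order and return it."""
    n = len(a)
    for i in range(n - 1):            # passes i = 0 .. n-2
        smallest = i                  # index of the smallest element seen so far
        for j in range(i + 1, n):     # scan A[i+1..n-1]
            if a[j] < a[smallest]:
                smallest = j
        # Put the smallest element of A[i..n-1] into position i.
        a[i], a[smallest] = a[smallest], a[i]
    return a


print(selection_sort([89, 45, 68, 90, 29, 34, 17]))
```

The inner comparison runs n(n-1)/2 times regardless of the input order, so the running time is quadratic in n, while the number of swaps is at most n-1.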

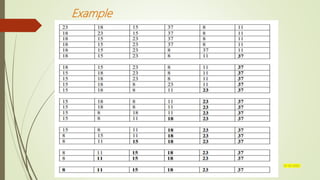

![Example 3: The Assignment Problem

There are n people who need to be assigned to n jobs, one person per job. The cost of assigning person i to job j is C[i,j]. Find an assignment that minimizes the total cost.

| | Job 0 | Job 1 | Job 2 | Job 3 |
|---|---|---|---|---|
| Person 0 | 9 | 2 | 7 | 8 |
| Person 1 | 6 | 4 | 3 | 7 |
| Person 2 | 5 | 8 | 1 | 8 |
| Person 3 | 7 | 6 | 9 | 4 |

Algorithmic plan: generate all legitimate assignments, compute their costs, and select the cheapest one.

How many assignments are there?

](https://image.slidesharecdn.com/unit2-220307075928/85/Unit-2-35-320.jpg)
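Each legitimate assignment corresponds to a permutation of the n jobs, so there are n! of them (24 for the 4-by-4 instance on this slide). The exhaustive-search plan can be sketched in Python as below; the function name `assign_brute_force` is an illustrative choice, and the cost matrix is the one from the slide.

```python
from itertools import permutations


def assign_brute_force(cost):
    """Return (minimum total cost, jobs), where jobs[i] is the job given to person i."""
    n = len(cost)
    best_cost, best_jobs = None, None
    for jobs in permutations(range(n)):          # all n! legitimate assignments
        total = sum(cost[person][jobs[person]] for person in range(n))
        if best_cost is None or total < best_cost:
            best_cost, best_jobs = total, jobs
    return best_cost, best_jobs


C = [
    [9, 2, 7, 8],
    [6, 4, 3, 7],
    [5, 8, 1, 8],
    [7, 6, 9, 4],
]
print(assign_brute_force(C))  # → (13, (1, 0, 2, 3))
```

For this instance the cheapest assignment gives person 0 job 1, person 1 job 0, person 2 job 2, and person 3 job 3, for a total cost of 13. Because the loop visits n! permutations, this approach is only practical for very small n.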

![Mergesort

- Split array A[0..n-1] into two roughly equal halves and copy each half into arrays B and C.
- Sort arrays B and C recursively.
- Merge the sorted arrays B and C into array A as follows:
  - Repeat the following until no elements remain in one of the arrays:
    - Compare the first elements in the remaining unprocessed portions of the arrays.
    - Copy the smaller of the two into A, while incrementing the index indicating the unprocessed portion of that array.
  - Once all elements in one of the arrays are processed, copy the remaining unprocessed elements from the other array into A.

](https://image.slidesharecdn.com/unit2-220307075928/85/Unit-2-46-320.jpg)
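The split, recursive sort, and merge steps on this slide can be sketched in Python as follows. The names `mergesort` and `merge` and the index variables `i`, `j`, `k` are illustrative choices; the arrays `b` and `c` play the roles of B and C.

```python
def merge(b, c, a):
    """Merge the sorted lists b and c into a, where len(a) == len(b) + len(c)."""
    i = j = k = 0
    while i < len(b) and j < len(c):
        # Copy the smaller front element and advance that list's index.
        if b[i] <= c[j]:
            a[k] = b[i]
            i += 1
        else:
            a[k] = c[j]
            j += 1
        k += 1
    # One list is exhausted; copy what remains of the other.
    a[k:] = b[i:] if i < len(b) else c[j:]


def mergesort(a):
    """Sort the list a in place and return it."""
    n = len(a)
    if n > 1:
        b = a[: n // 2]      # copy of the first half
        c = a[n // 2 :]      # copy of the second half
        mergesort(b)
        mergesort(c)
        merge(b, c, a)
    return a


print(mergesort([8, 3, 2, 9, 7, 1, 5, 4]))
```

Using `<=` in the comparison takes equal elements from `b` first, which keeps the sort stable. The copies `b` and `c` are what give this version its linear extra space.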

![Quicksort

- Select a pivot (partitioning element); here, the first element.
- Rearrange the list so that all the elements in the first s positions are smaller than or equal to the pivot and all the elements in the remaining n-s positions are larger than or equal to the pivot (see next slide for an algorithm).
- Exchange the pivot with the last element in the first (i.e., ≤) subarray; the pivot is now in its final position.
- Sort the two subarrays recursively.

A[i] ≤ p | p | A[i] ≥ p

](https://image.slidesharecdn.com/unit2-220307075928/85/Unit-2-51-320.jpg)
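A Python sketch of these steps is given below. The partitioning algorithm itself is not shown in this excerpt, so the `partition` function here is an assumption: a two-directional scan with the first element as pivot, with bounds checks added so the indices cannot run off the subarray. The names `partition`, `quicksort`, `lo`, and `hi` are illustrative.

```python
def partition(a, lo, hi):
    """Partition a[lo..hi] around the pivot a[lo]; return the pivot's final index."""
    p = a[lo]
    i, j = lo + 1, hi
    while True:
        while i <= j and a[i] < p:    # left-to-right scan stops at an element >= p
            i += 1
        while i <= j and a[j] > p:    # right-to-left scan stops at an element <= p
            j -= 1
        if i >= j:
            break
        a[i], a[j] = a[j], a[i]
        i += 1
        j -= 1
    # a[j] is the last element of the "<= p" part; swap the pivot into place.
    a[lo], a[j] = a[j], a[lo]
    return j


def quicksort(a, lo=0, hi=None):
    """Sort the list a in place and return it."""
    if hi is None:
        hi = len(a) - 1
    if lo < hi:
        s = partition(a, lo, hi)
        quicksort(a, lo, s - 1)       # elements <= pivot
        quicksort(a, s + 1, hi)       # elements >= pivot
    return a


print(quicksort([5, 3, 1, 9, 8, 2, 4, 7]))
```

With the first element as pivot, an already sorted input produces maximally unbalanced splits, which is the quadratic worst case for this pivot rule.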

![Binary Search

A very efficient algorithm for searching in a sorted array: compare the search key K with the middle element A[m].

K vs. A[0] . . . A[m] . . . A[n-1]

If K = A[m], stop (successful search); otherwise, continue searching by the same method in A[0..m-1] if K < A[m] and in A[m+1..n-1] if K > A[m].

    l ← 0; r ← n-1
    while l ≤ r do
        m ← ⌊(l+r)/2⌋
        if K = A[m] return m
        else if K < A[m] r ← m-1
        else l ← m+1
    return -1

](https://image.slidesharecdn.com/unit2-220307075928/85/Unit-2-55-320.jpg)
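The pseudocode on this slide translates almost line for line into Python. In the sketch below, `lo`, `hi`, and `mid` stand in for l, r, and m, and the function name `binary_search` is an illustrative choice; the input list is assumed to be sorted in ascending order.

```python
def binary_search(a, key):
    """Return an index of key in the sorted list a, or -1 if key is absent."""
    lo, hi = 0, len(a) - 1
    while lo <= hi:
        mid = (lo + hi) // 2          # floor of the midpoint
        if key == a[mid]:
            return mid
        elif key < a[mid]:
            hi = mid - 1              # continue in a[lo..mid-1]
        else:
            lo = mid + 1              # continue in a[mid+1..hi]
    return -1


data = [3, 14, 27, 31, 39, 42, 55, 70, 74, 81, 85, 93, 98]
print(binary_search(data, 70))  # → 7
print(binary_search(data, 10))  # → -1
```

Each iteration halves the remaining search range, so the loop runs at most about log2(n) + 1 times.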

![Strassen’s Matrix Multiplication

Strassen observed [1969] that the product of two matrices can be computed as follows:

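$$
\begin{bmatrix} C_{00} & C_{01} \\ C_{10} & C_{11} \end{bmatrix}
=
\begin{bmatrix} A_{00} & A_{01} \\ A_{10} & A_{11} \end{bmatrix}
*
\begin{bmatrix} B_{00} & B_{01} \\ B_{10} & B_{11} \end{bmatrix}
=
\begin{bmatrix} M_1 + M_4 - M_5 + M_7 & M_3 + M_5 \\ M_2 + M_4 & M_1 + M_3 - M_2 + M_6 \end{bmatrix}
$$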

](https://image.slidesharecdn.com/unit2-220307075928/85/Unit-2-63-320.jpg)
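The seven products M1 to M7 are not defined in this excerpt. The sketch below assumes the standard definitions that match the combination formula on the slide, and applies them to a 2-by-2 product of plain numbers; the function name `strassen_2x2` is illustrative. In the full algorithm the entries are n/2-by-n/2 submatrices and each product is computed recursively.

```python
def strassen_2x2(a, b):
    """Multiply two 2x2 matrices with seven multiplications instead of eight."""
    (a00, a01), (a10, a11) = a
    (b00, b01), (b10, b11) = b

    # Assumed standard definitions of the seven products (not shown in the source).
    m1 = (a00 + a11) * (b00 + b11)
    m2 = (a10 + a11) * b00
    m3 = a00 * (b01 - b11)
    m4 = a11 * (b10 - b00)
    m5 = (a00 + a01) * b11
    m6 = (a10 - a00) * (b00 + b01)
    m7 = (a01 - a11) * (b10 + b11)

    # Combine exactly as on the slide.
    return [
        [m1 + m4 - m5 + m7, m3 + m5],
        [m2 + m4, m1 + m3 - m2 + m6],
    ]


print(strassen_2x2([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # → [[19, 22], [43, 50]]
```

Trading one multiplication for extra additions matters when the entries are themselves matrices: the recurrence becomes M(n) = 7M(n/2), giving roughly n^2.807 multiplications instead of n^3.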