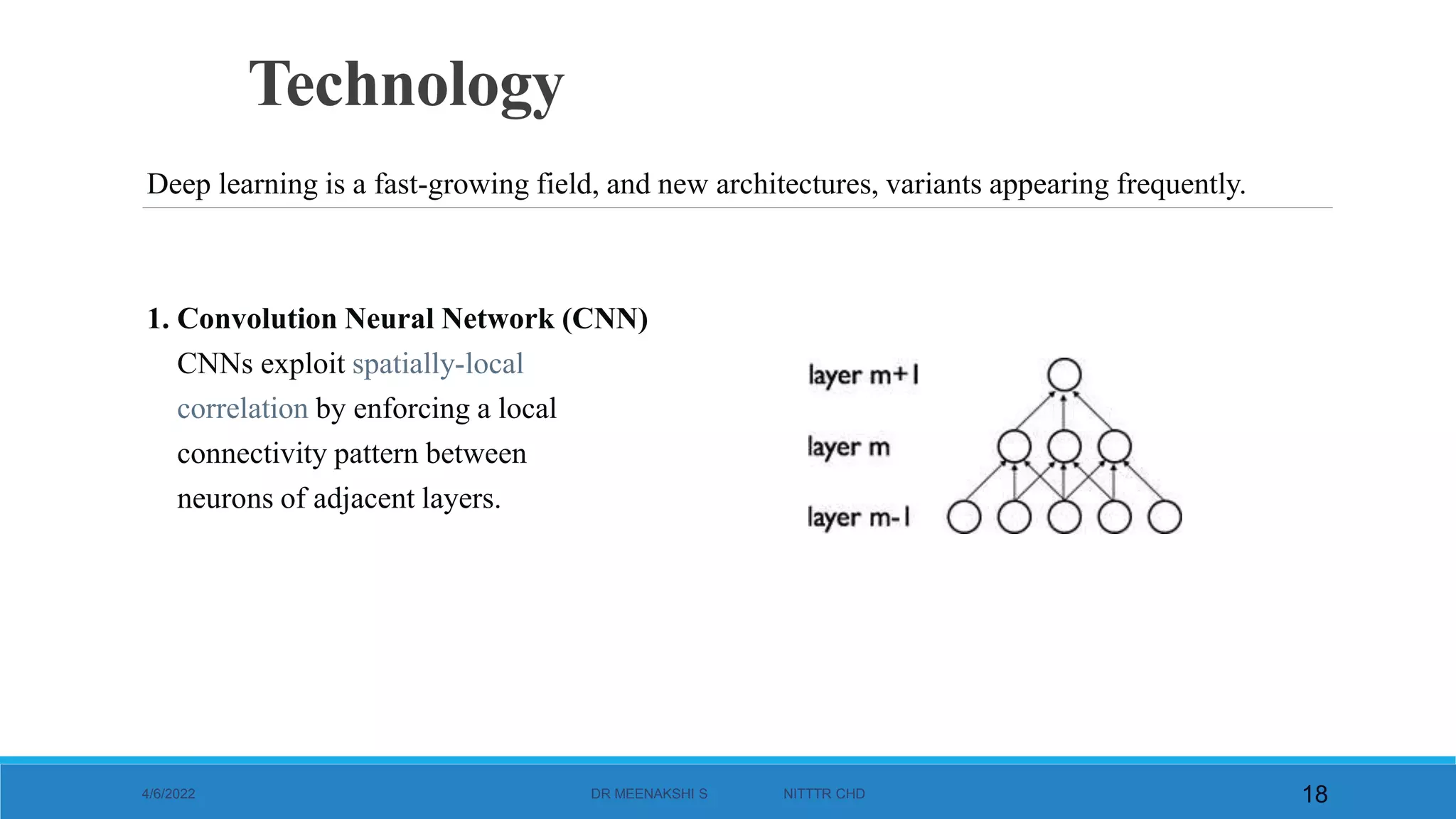

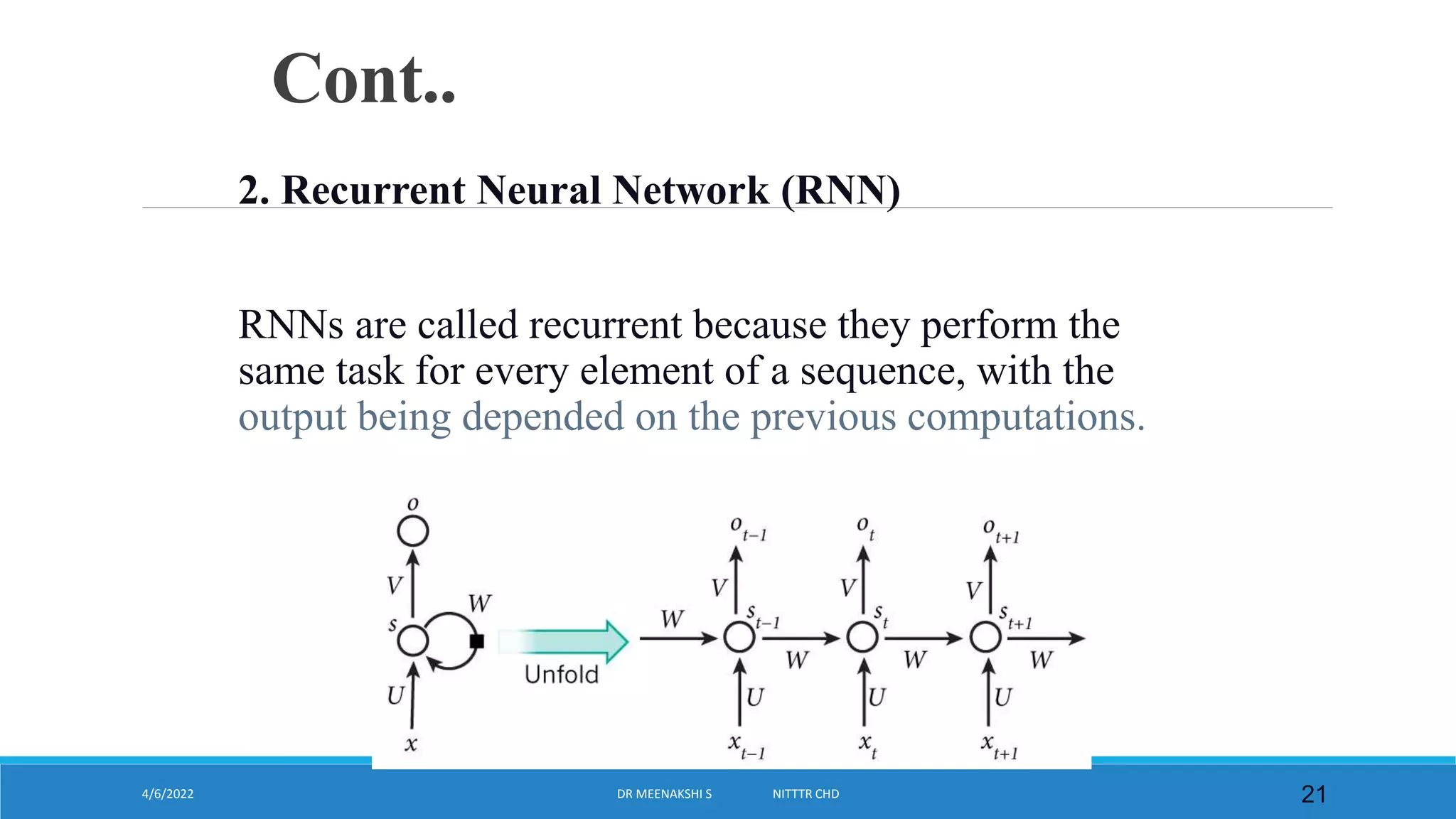

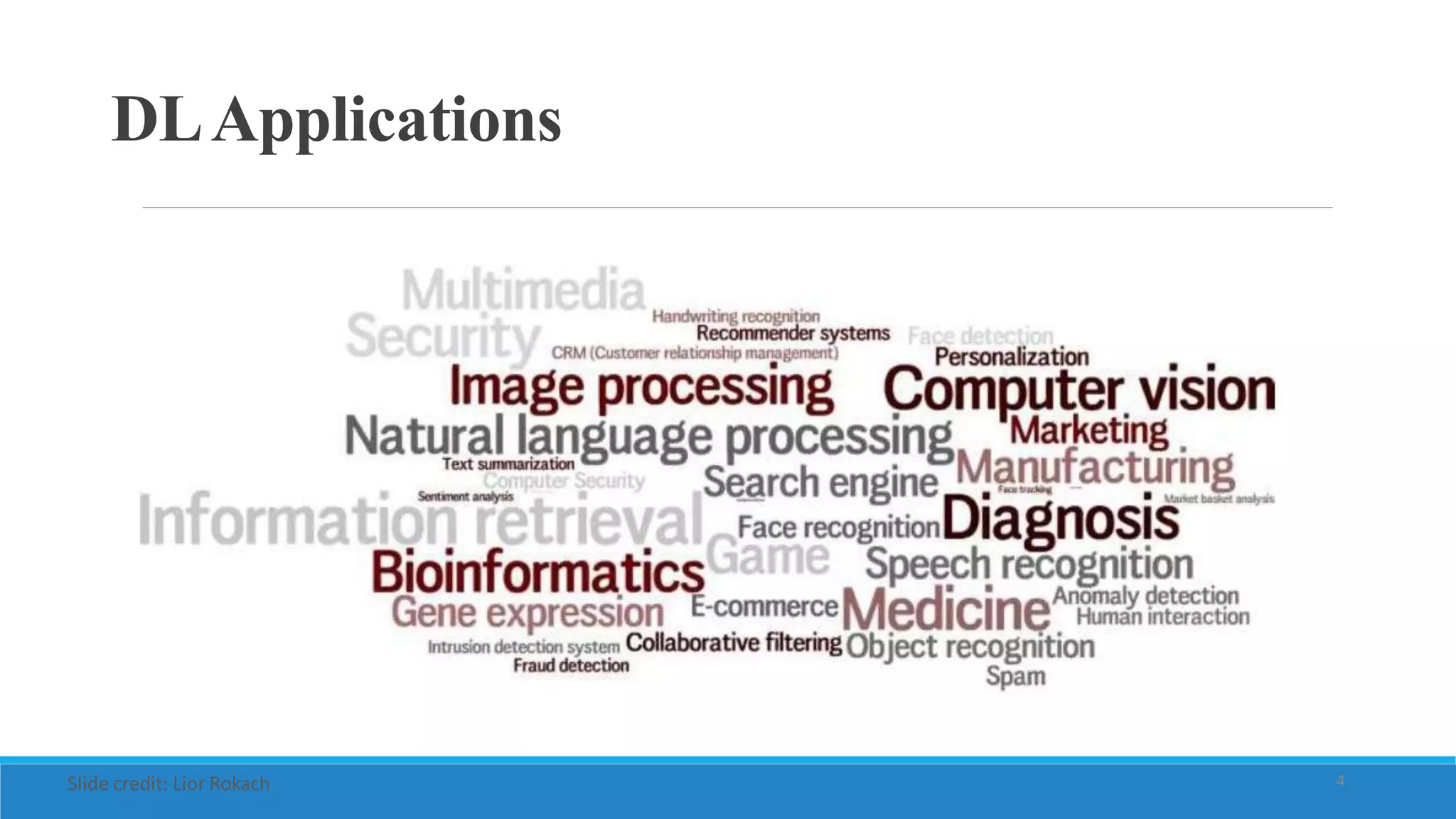

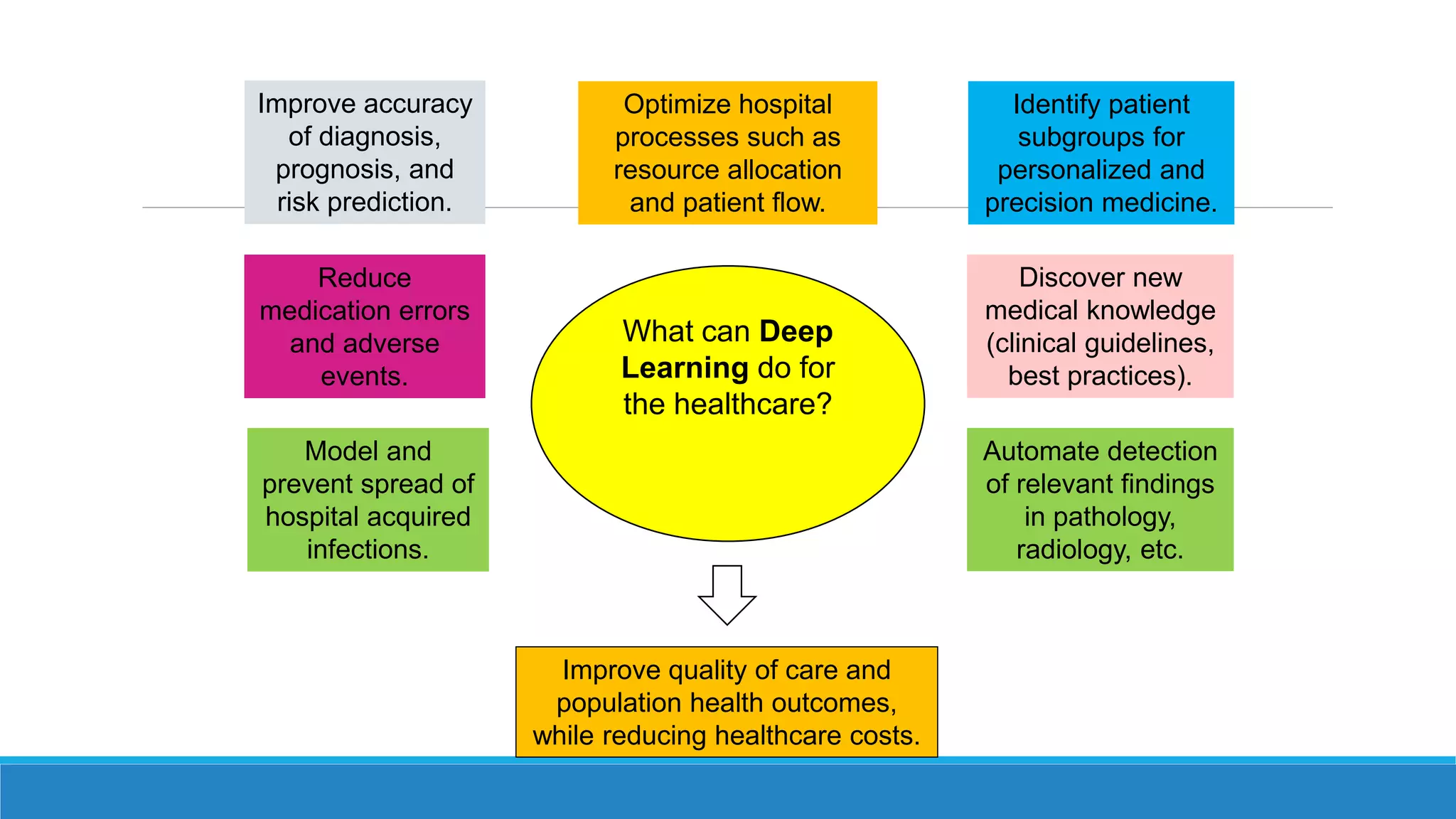

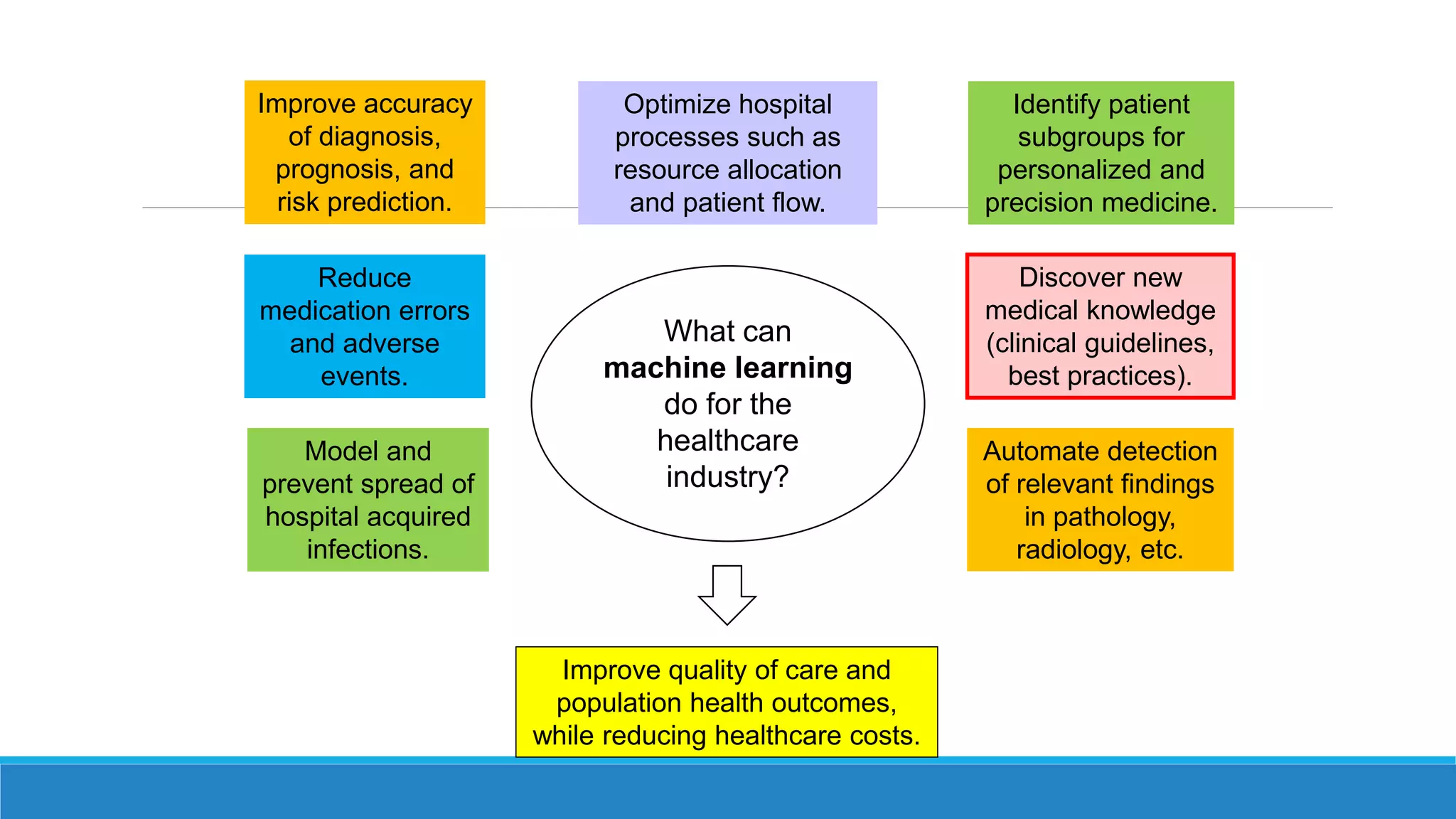

The document discusses the potential applications of deep learning in healthcare. It begins by explaining that deep learning models can improve the accuracy of diagnosis, prognosis, and risk prediction by analyzing large datasets. It then discusses how early and accurate prediction of disease can help optimize hospital processes such as resource allocation and patient flow. Finally, it notes that deep learning can help identify patient subgroups for personalized and precision medicine approaches.

![President Obama’s initiative to create a 1 million person research cohort

[Precision Medicine Initiative (PMI) Working Group Report, Sept. 17, 2015]

The Precision Medicine Initiative

Large datasets

Core data set:

• Baseline health exam

• Clinical data derived from electronic health records (EHRs)

• Healthcare claims

• Laboratory data](https://image.slidesharecdn.com/deeplearninghealthcaremsood-220113155720/75/Deep-learning-health-care-33-2048.jpg)

![Use Case: Tumor Tissue Characterization using Ultrasound

Steps:

1. Understand the problem

2. Define input(s) and output(s)

3. Investigate limitations and boundary conditions

4. Collect representative data

   1. [Labels]

5. [Calculate features/engineer features]

6. Define ML framework

7. Define metric for evaluation

...](https://image.slidesharecdn.com/deeplearninghealthcaremsood-220113155720/75/Deep-learning-health-care-63-2048.jpg)

![1. Understand the problem

2. Define input(s) and output(s)

3. Investigate limitations and boundary conditions

4. Collect representative data

   1. [Labels]

5. [Calculate features/engineer features]

6. Define ML framework

7. Define metric for evaluation

...

Courtesy of Dr. Abolmaesumi](https://image.slidesharecdn.com/deeplearninghealthcaremsood-220113155720/75/Deep-learning-health-care-64-2048.jpg)
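
The steps listed on the slides can be sketched end to end in a few lines of Python. This is a minimal illustration under loud assumptions, not the method used in the tumor tissue characterization work: the "signals" are synthetic random numbers standing in for ultrasound data, the two features (mean absolute amplitude and variance) are arbitrary choices, and the nearest-centroid classifier is a placeholder for whatever ML framework a real project would select. All function names (`make_signal`, `extract_features`, `fit_centroids`, `predict`, `evaluate`, `run_pipeline`) are hypothetical.

```python
import random
import statistics


def make_signal(label, rng, n=64):
    """Step 4: synthetic stand-in for one labeled signal segment."""
    # Assumption: class 1 has a larger amplitude spread than class 0.
    scale = 1.0 if label == 0 else 2.0
    return [rng.gauss(0.0, scale) for _ in range(n)]


def extract_features(signal):
    """Step 5: hand-engineered features (mean absolute amplitude, variance)."""
    mean_abs = sum(abs(x) for x in signal) / len(signal)
    return (mean_abs, statistics.pvariance(signal))


def fit_centroids(features, labels):
    """Step 6: nearest-centroid classifier used as a placeholder model."""
    centroids = {}
    for cls in set(labels):
        rows = [f for f, y in zip(features, labels) if y == cls]
        centroids[cls] = tuple(sum(col) / len(rows) for col in zip(*rows))
    return centroids


def predict(centroids, feature):
    """Assign the class whose centroid is closest in squared distance."""
    def dist2(centroid):
        return sum((a - b) ** 2 for a, b in zip(feature, centroid))
    return min(centroids, key=lambda cls: dist2(centroids[cls]))


def evaluate(y_true, y_pred):
    """Step 7: accuracy, sensitivity, specificity (class 1 is positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn) if tp + fn else 0.0,
        "specificity": tn / (tn + fp) if tn + fp else 0.0,
    }


def run_pipeline(seed=0, n_per_class=100):
    """Steps 2-7: input is a signal, output is a binary class label."""
    rng = random.Random(seed)
    labels = [0, 1] * n_per_class          # alternating, so both splits are balanced
    signals = [make_signal(y, rng) for y in labels]
    features = [extract_features(s) for s in signals]
    split = int(0.7 * len(labels))         # 70% train, 30% held-out test
    centroids = fit_centroids(features[:split], labels[:split])
    predictions = [predict(centroids, f) for f in features[split:]]
    return evaluate(labels[split:], predictions)


if __name__ == "__main__":
    print(run_pipeline())
```

Reporting sensitivity and specificity alongside accuracy is a deliberate choice in this sketch: in diagnostic settings the two error types usually carry different costs, so a single accuracy figure can hide the behavior that matters. Step 3 (limitations and boundary conditions) has no code counterpart here; with real data it would cover issues such as class imbalance and patient-level train/test separation.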