The Validation Attitude

- 1. The Validation Attitude. Bob Colwell, April 2010

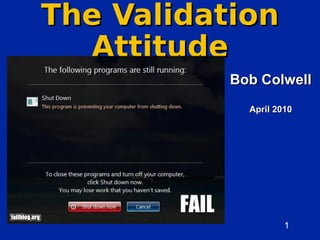

- 2. Attitude
  - I could talk about techniques, tools, FV (formal verification) environments, algorithms, machinery, languages, suites, training...
  - ...but I think attitude is more important than any of those

- 3. No Perfect Designs (footer: 4/4/07, Bob Colwell)
  - Nothing is perfect; everything has bugs
    - Shortcomings, compromises, defects, design errata, gaffes, goofs, fumbles, errors, boneheaded mistakes, bobbles, bungles, boo-boos
    - But not all bugs are equal!
  - Can't test to saturation: schedule matters too
  - Why is everything always so darned buggy?
    - Software... need I say more?
    - Why did Titanic's watertight compartments not reach high enough to contain the flooding?
    - Why did the Ford Pinto have its gas tank in the back?
    - Why did Challenger fly with leaky O-rings?
    - Why did torpedoes fail to explode in WWII?
  - Entropy has a preferred direction
    - Only a genius could paint the Mona Lisa, but any small child could quickly destroy it
    - There are 1000 ways to do things wrong, and only 1 or 2 that work

- 4. Prescription: SW visualization, plus tools to localize bugs, diagnose problems, and instrument behavior

- 5. Accidents Are Inevitable
  - It's the nature of engineering to push designs to the edge of failure (schedule, reliability, thermals, materials, tools, judgment of unknowns)
  - $P(\text{accident}) = \varepsilon$, for $\varepsilon \neq 0$
  - The world rewards this behavior
    - Cool new features and being first to market are often preferred to dependability
    - Other markets (life support) make (or should make) this trade-off differently!
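Because $\varepsilon \neq 0$, the risk compounds with exposure. A minimal sketch, assuming each of n exposures (launches, operating hours, units shipped) is independent and carries the same accident probability eps, which real systems rarely satisfy exactly: the chance of at least one accident is $1 - (1 - \varepsilon)^n$, which approaches 1 as n grows for any eps > 0. The function name is hypothetical.

```python
def p_at_least_one_accident(eps: float, n: int) -> float:
    """Probability of at least one accident in n exposures.

    Simplifying assumption: exposures are independent and each has the
    same accident probability eps.
    """
    if not 0.0 <= eps <= 1.0:
        raise ValueError("eps must be a probability in [0, 1]")
    if n < 0:
        raise ValueError("n must be non-negative")
    # Complement of "no accident on every one of the n exposures".
    return 1.0 - (1.0 - eps) ** n


if __name__ == "__main__":
    # Even a tiny per-exposure risk becomes near-certain at scale.
    for n in (1, 1_000, 100_000, 1_000_000):
        print(n, p_at_least_one_accident(1e-5, n))
```

With eps = 1e-5, a single exposure looks safe, but a million exposures make at least one accident all but certain, which is the slide's point in numbers.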

- 6. Isn't That Just Murphy's Law?
  - Close. But Murphy is not quite right:
    1. Near-misses vastly outnumber disasters (#near-misses ≫ #disasters)
    2. Competent design and test find the simple errors
    3. Complex sequences and unlikely event cascades survive to production

- 7. Failures Getting Worse
  - Mechanical things usually fail predictably, due to physics
    - Wings bend, bridges groan, engines rattle, knees ache
    - By contrast, computer-based things fail "all over the place"
  - Helpful engineering attitude:
    1. Nature does not want your engineered system to work; it will actively work against you
    2. Your design will do only what you've constrained it to do, and only as long as it has to
    3. Watch out for normalization of deviance (Challenger O-rings, Apollo 1 fire)

- 8. The Steely-Eyed Missile Validator
  - Apollo 12: the second crewed lunar landing mission, launched 11/14/69
  - 36 seconds after liftoff, the spacecraft was struck by lightning, causing power surges
    - All telemetry went haywire; the book said to abort
    - Both the spacecraft commander and Flight (the flight director) were furiously considering that option
    - But John Aaron was on shift, and thought he'd seen this malfunction before
  - During testing one year earlier, Aaron had observed a test that went off into the weeds
    - Aaron took it on himself to investigate, which led him to the obscure SCE (Signal Conditioning Equipment) subsystem
  - In the critical few seconds of "abort or not," with lives on the line, Aaron made one of the most famous calls in NASA history
    - "Flight, try SCE to 'Aux'"
    - Neither Flight nor commander Pete Conrad knew what that even meant, but Alan Bean tried it
    - Telemetry came right back, vaulting Aaron into validation stardom
  - He could have blown off that earlier test, but he didn't
    - His inner validator wanted to know "What just happened?"
  - Isaac Asimov once said the three most important words in science are "What was THAT?"

- 9. Complexity Implies Surprises...
  - ...and surprises are bad
  - Chaos effects in complex microprocessors (µPs)
    - Decomposability is a fundamental tenet of complex system design
    - Butterfly wings ruin decomposability
    - "Improve the design, get slower performance" is not at all uncommon
  - We must stop designing large systems as though small ones simply scale up
    - Lesson from comm engineers: assume errors

- 10. Thinking About Validation
  - The ability to think in analogies is the highest form of intelligence
    - IQ tests like "a:b :: c:d"
    - Hofstadter's book: numerical sequences
  - Analogies may illuminate a subject in a way that direct introspection cannot
    - They drive our minds to their creative limits

- 11. Listen to Your Inner Validator
  - Puzzle: 0, 1, 2, …?
  - You knew it wouldn't be 3, didn't you?
    - You sensed something's not quite as it seems
  - Answer: 0, 1, 2, 720!, … = 0, 1, 2, 6!!, … = 0, 1!, 2!!, 3!!!, … (each "!" is a full factorial applied again, so 3!!! = (6!)! = 720!)
  - That was the voice of your inner validator that you were hearing
  - D. Hofstadter, Fluid Concepts and Creative Analogies
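The answer's notation is easy to misread, since "!!" usually denotes the double factorial. Here each "!" is an ordinary factorial applied to the previous result. A short sketch, assuming that reading (the helper name `iterated_factorial` is hypothetical), reproduces the sequence and shows why the fourth term is 720!:

```python
from math import factorial


def iterated_factorial(n: int) -> int:
    """Return n with the factorial applied n times.

    0 -> 0 (no factorials), 1 -> 1!, 2 -> 2!!, 3 -> 3!!!, where each "!"
    is an ordinary factorial of the previous result (not the double factorial).
    """
    value = n
    for _ in range(n):
        value = factorial(value)
    return value


if __name__ == "__main__":
    # The first three terms look like the counting numbers...
    print([iterated_factorial(n) for n in range(3)])  # [0, 1, 2]
    # ...but the fourth is 3 -> 6 -> 720 -> 720!, a huge integer.
    print(iterated_factorial(3) == factorial(720))  # True
    # Do not try n = 4: 4 -> 24 -> 24! -> (24!)! is far too large to compute.
```

Three terms that "obviously" continue as 0, 1, 2, 3 turn out to follow a different rule, which is exactly the kind of pattern an inner validator should refuse to take on faith.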

- 12. Lesson: Trust Nothing
  - Hyatt Regency hotel, Kansas City, Missouri, 1981
  - Walkways hung from the ceiling on rods
    - 40' threaded rods with nuts halfway
  - Killed 114, injured more than 200

- 13. What Happened?
  - The spec was marginal
  - The 40' threaded rods were "too hard," so the contractor changed them to 2 × 20' rods
  - No simulation, no test
  - Who goofed? Engineer, contractor, inspector... everyone
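The rod change matters because it altered the load path. A minimal statics sketch, assuming two identical walkways of weight W and ignoring the self-weight of rods and beams (the function name and units are hypothetical): with one continuous rod, the lower walkway's load travels up the rod to the ceiling, so the connection under the upper walkway's beam carries one walkway; with two 20' rods, the lower walkway hangs from the upper walkway's beam, so that connection carries both.

```python
def upper_beam_connection_load(walkway_weight: float, split_rods: bool) -> float:
    """Load on the nut/beam connection under the upper walkway.

    Simplified model (assumption): two identical walkways of weight
    walkway_weight; rod and beam self-weight ignored.
    - Continuous rod (original design): the lower walkway's load passes up
      the same rod to the ceiling, so this connection carries one walkway.
    - Split rods (as built): the lower walkway's rod hangs from the upper
      walkway's beam, so this connection carries both walkways.
    """
    walkways_carried = 2 if split_rods else 1
    return walkways_carried * walkway_weight


if __name__ == "__main__":
    w = 1.0  # normalized walkway weight (hypothetical units)
    original = upper_beam_connection_load(w, split_rods=False)
    as_built = upper_beam_connection_load(w, split_rods=True)
    print(as_built / original)  # 2.0: the change doubled this connection's load
```

A seemingly harmless fabrication change doubled the demand on a connection that the slide already calls marginal, and no simulation or test caught it.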

- 14. Therac-25
  - Medical linear accelerator
  - Electron and X-ray treatment modes
  - Six massive-overdose accidents, several of them fatal, from poor system/SW design
    - And blind, naïve faith in computers!

- 15. Question Everything
  - Test assumptions as well as design
    - If assumptions are broken, the design surely is too
    - Try to "catch the field goals"

- 16. Fight the Urge to Relax Requirements
  - Challenger
    - Not OK to slip design assumptions (launch temperature, number of unburnt O-rings) to suit desires
  - Airbus
    - Blaming the pilot is not a reasonable explanation; the pilot is part of the system design
  - Runway "incursions" up 71% since '93
    - Near-misses are trying to tell us something
  - Diane Vaughan, The Challenger Launch Decision, University of Chicago Press, 1996; Nancy Leveson, Safeware, Addison-Wesley, 1995

- 17. If You Didn't Test It, It Doesn't Work
  - Mir: fire extinguishers bolted to the wall
    - They still had strong metal launch straps
    - They had never been needed before, so they were never tested
    - This was discovered with a roaring fire several feet away

- 18. Complexity Makes Everything Worse
  - Some things must be complicated to do their job
    - Our brains, for example
  - But complex sequences are the root of most disasters
    - Challenger, Bhopal, Chernobyl, FDIV, Exxon Valdez
  - Where does complexity come from? Why does it keep increasing? Where are the limits?
    - Pentium 4
    - "In the small" vs. "in the large" design (micros vs. comm systems)
  - What to do? Vigilance, testing, awareness... we are all validators

- 19. What To Do
  - Get the spec right
  - Design for correctness, but design knowing perfection is unattainable
  - Users are part of the system
  - Formal methods
  - Pre-production testing and validation
  - Post-production testing and verification
  - Education of the public

- 20. Roles
  - Engineers must stand their ground
    - There are always doubts and incomplete data; don't let 'em use those against you
  - Judgment is crucially needed: YOURS
    - Remember the Challenger: "My God, Thiokol, when do you want me to launch? Next April?"
    - Be careful with "data": "Risk assessment data is like a captured spy; if you torture it long enough, it will tell you anything you want to know..." (Wm. Ruckelshaus)
    - Crushing, conflicting demands are the norm
  - Design must push the envelope without ceding responsibility
  - Validation establishes whether they've pushed it too far
  - Management must beware of overriding technical judgment
  - The public must understand the limits of the human design process
  - All players must value the roles of others!

- 21. Roles, cont.
  - Management
    - wants to assume a product is safe
    - knows nothing's ever perfect; there comes a time to "shoot the engineers" or they'll never stop tinkering
  - Validators
    - want to prove a product is safe
    - assume it is not, by default
    - are the only informed arbiters of when a product is ready
    - don't fall for "might as well sign, we're …"

- 22. Future Directions: Public Expectations
  - Andy Grove's FDIV epiphany
  - Paradoxically, the more high-tech the product, the more the public expects of it
  - Users caused Chernobyl and TMI by going "off book," but prevented many other disasters with real-time creativity... the lessons are subtle
  - It takes exquisite understanding and judgment to tell apart accidents, reasonable risk-taking, and bonehead errors or incompetence
    - This is what a jury must do. How?
  - Can't keep trending this way

- 23. Future of Validation
  - Multiple culture changes needed
  - The public needs to stop expecting perfection
  - Design teams must explicitly limit complexity and avoid auto-scale-up assumptions
  - Companies must mature past viewing validation as an unpleasant overhead
    - Does your company have "Validation Fellows"?
  - Validation is a profession of its own.
  - Cultivate the Validation Attitude!