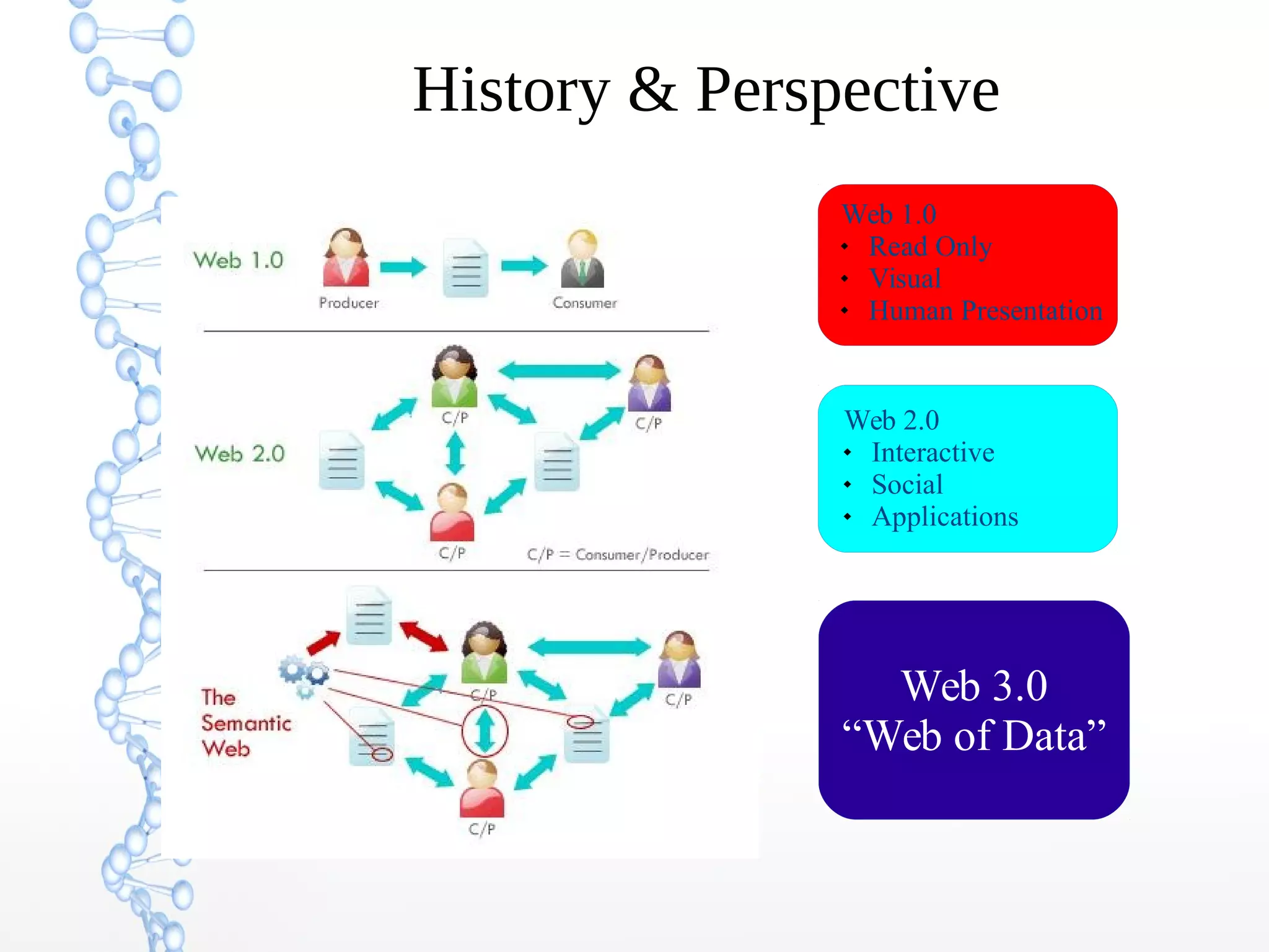

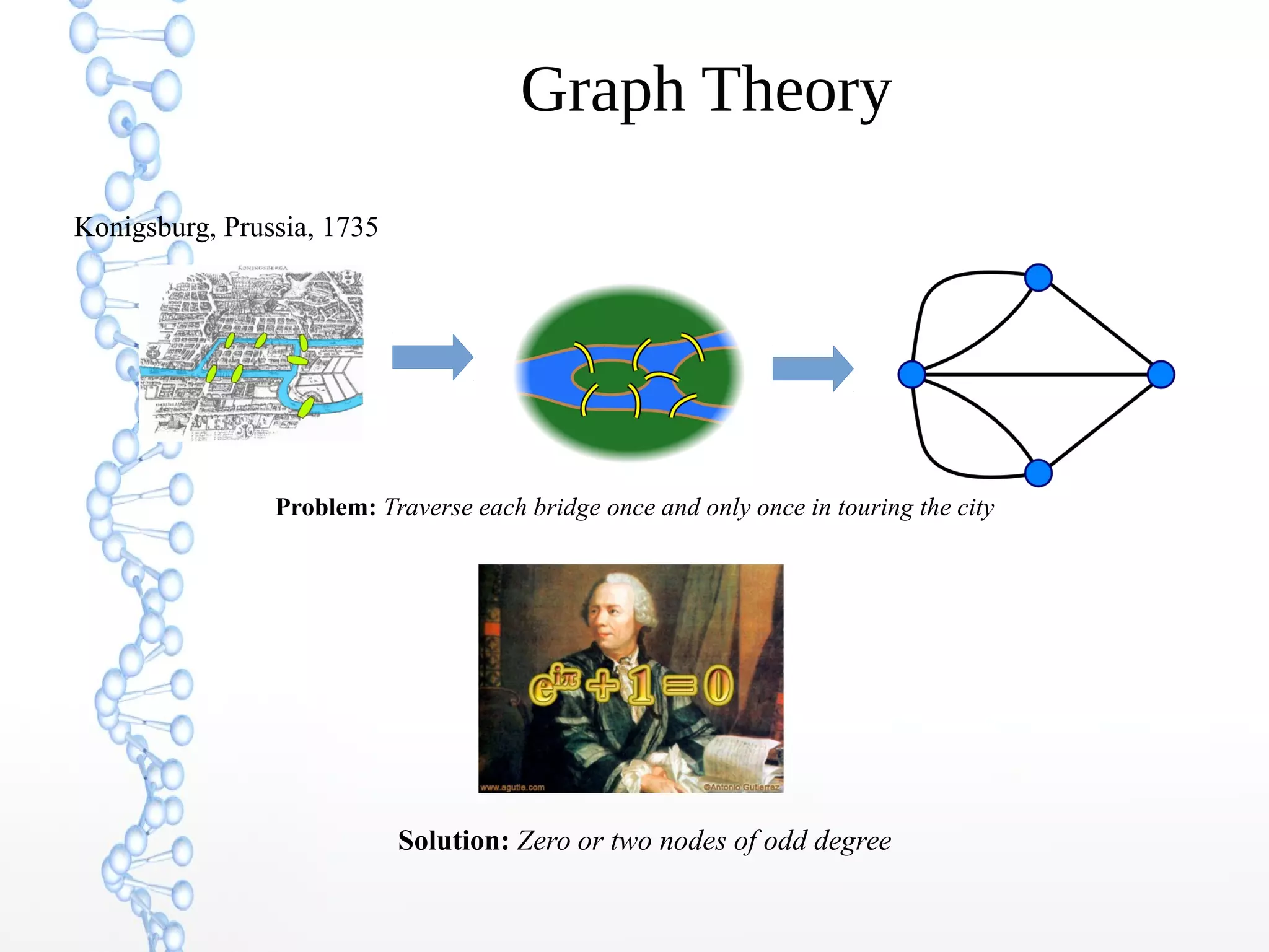

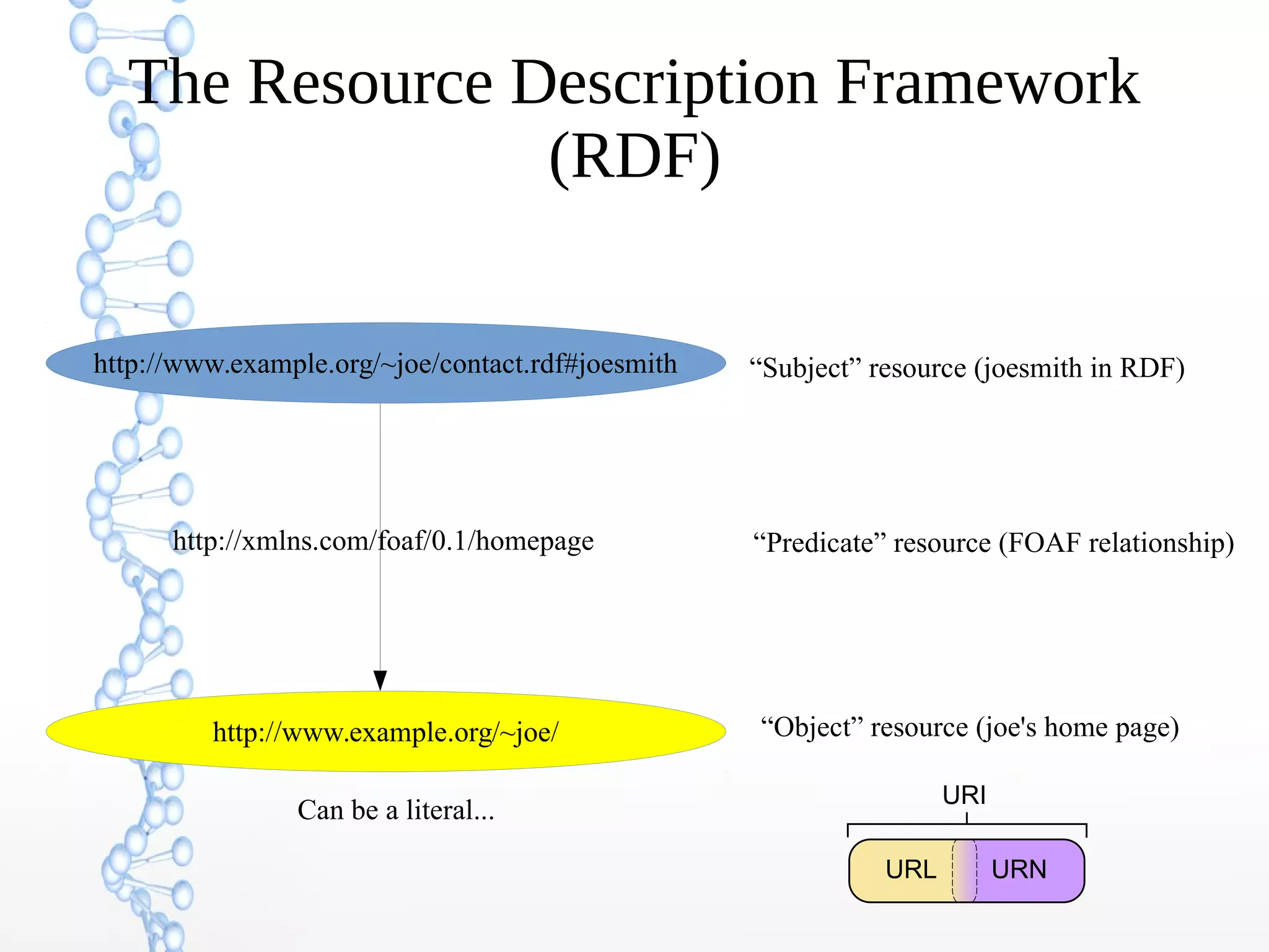

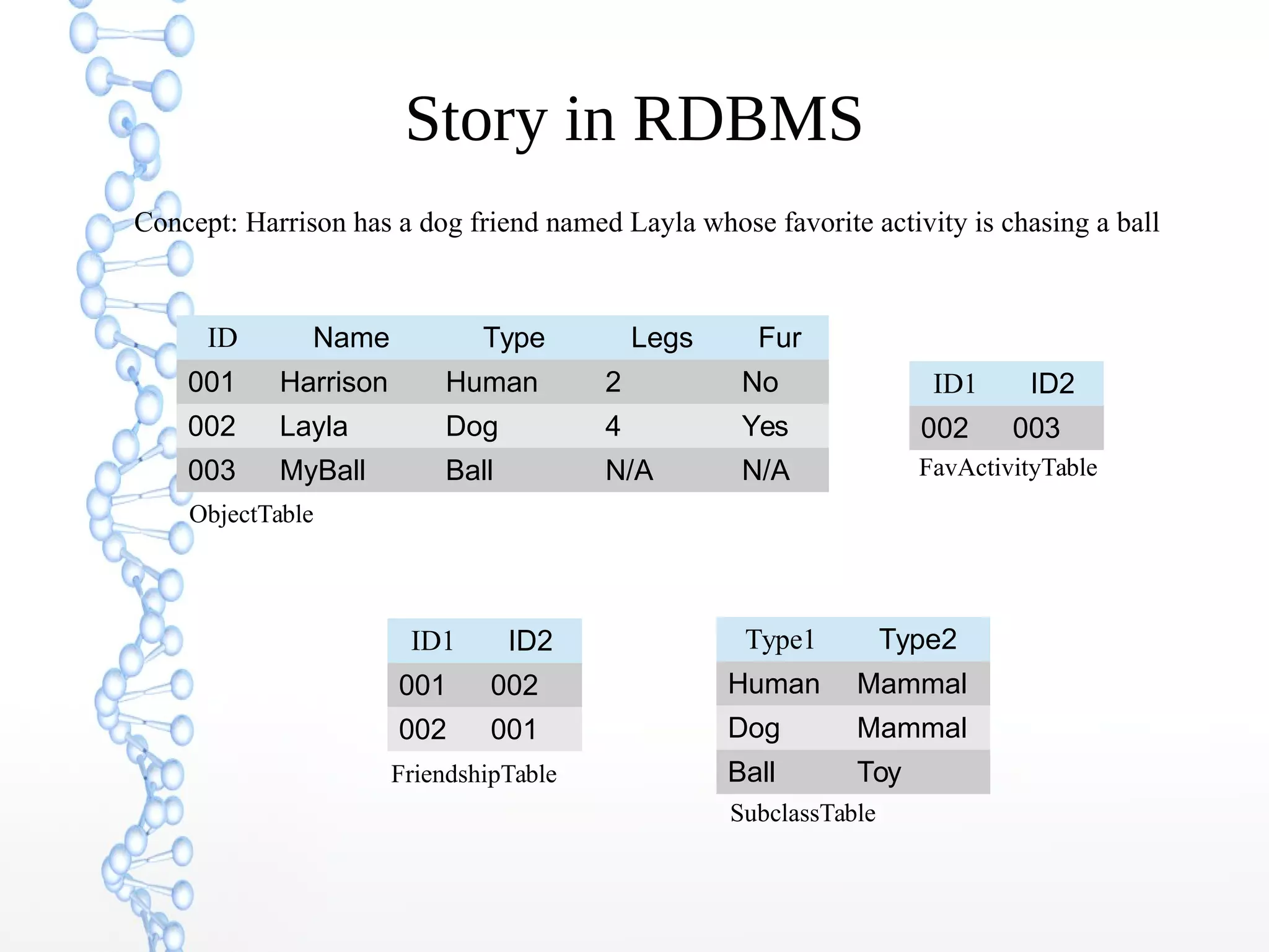

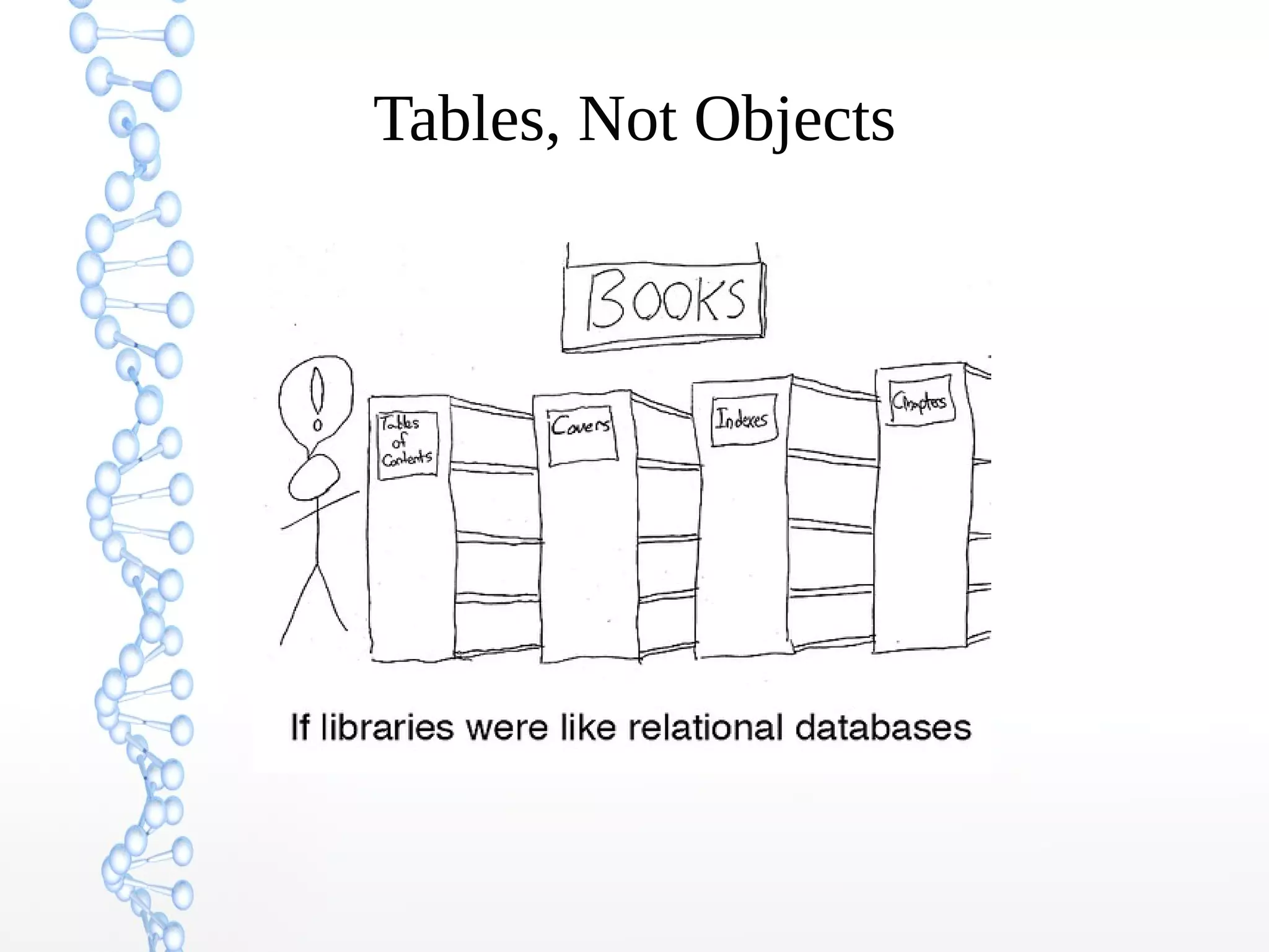

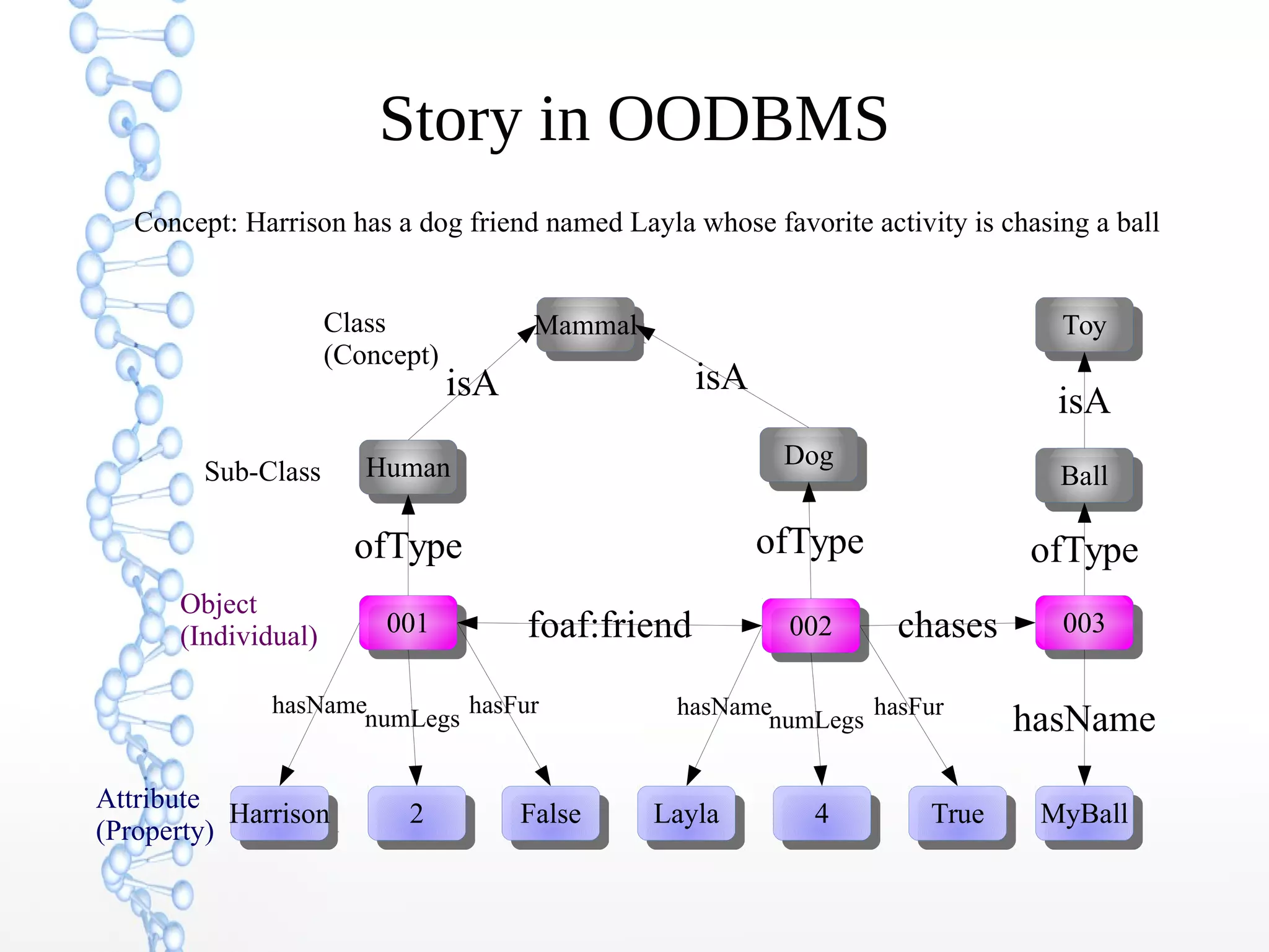

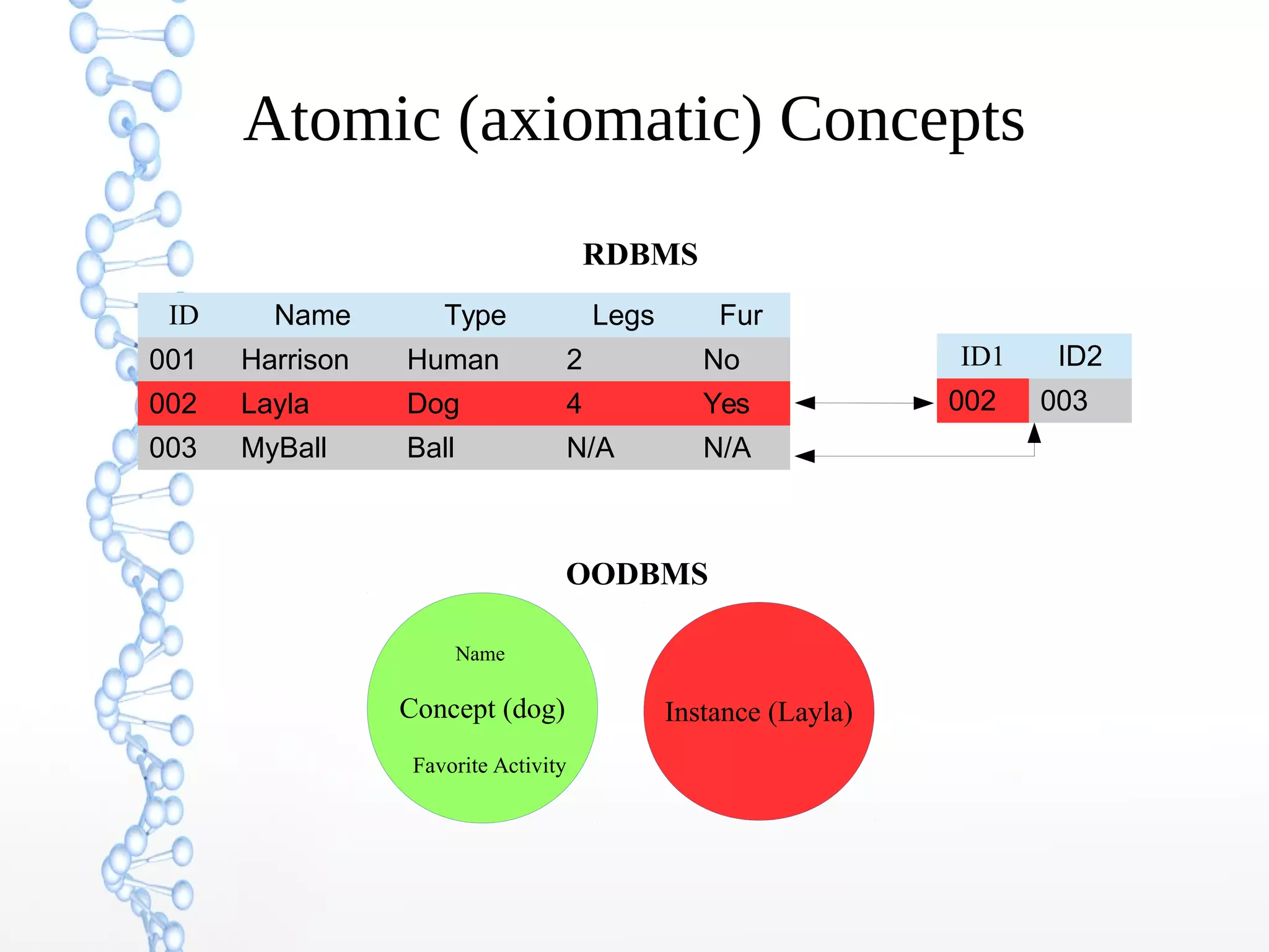

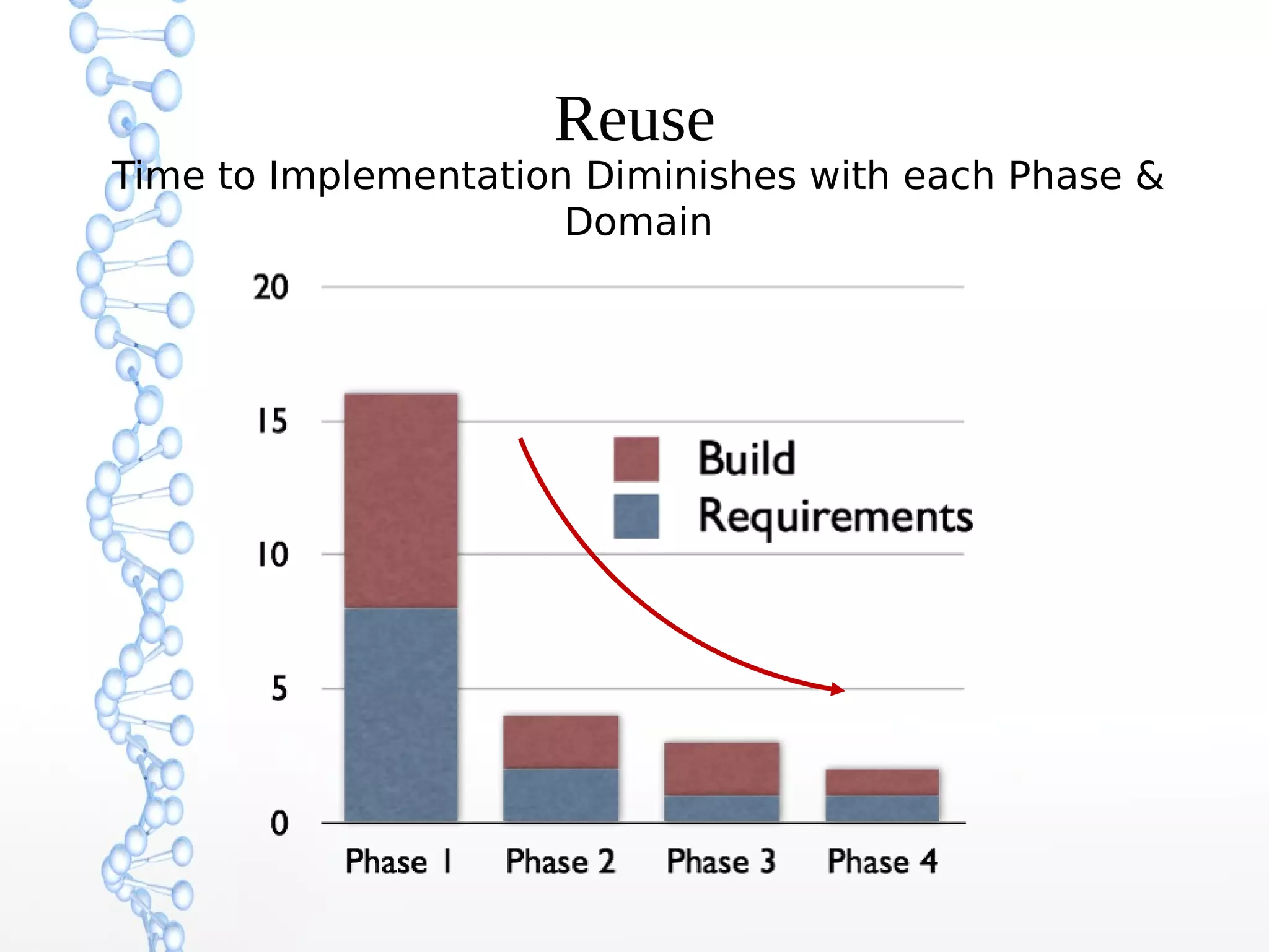

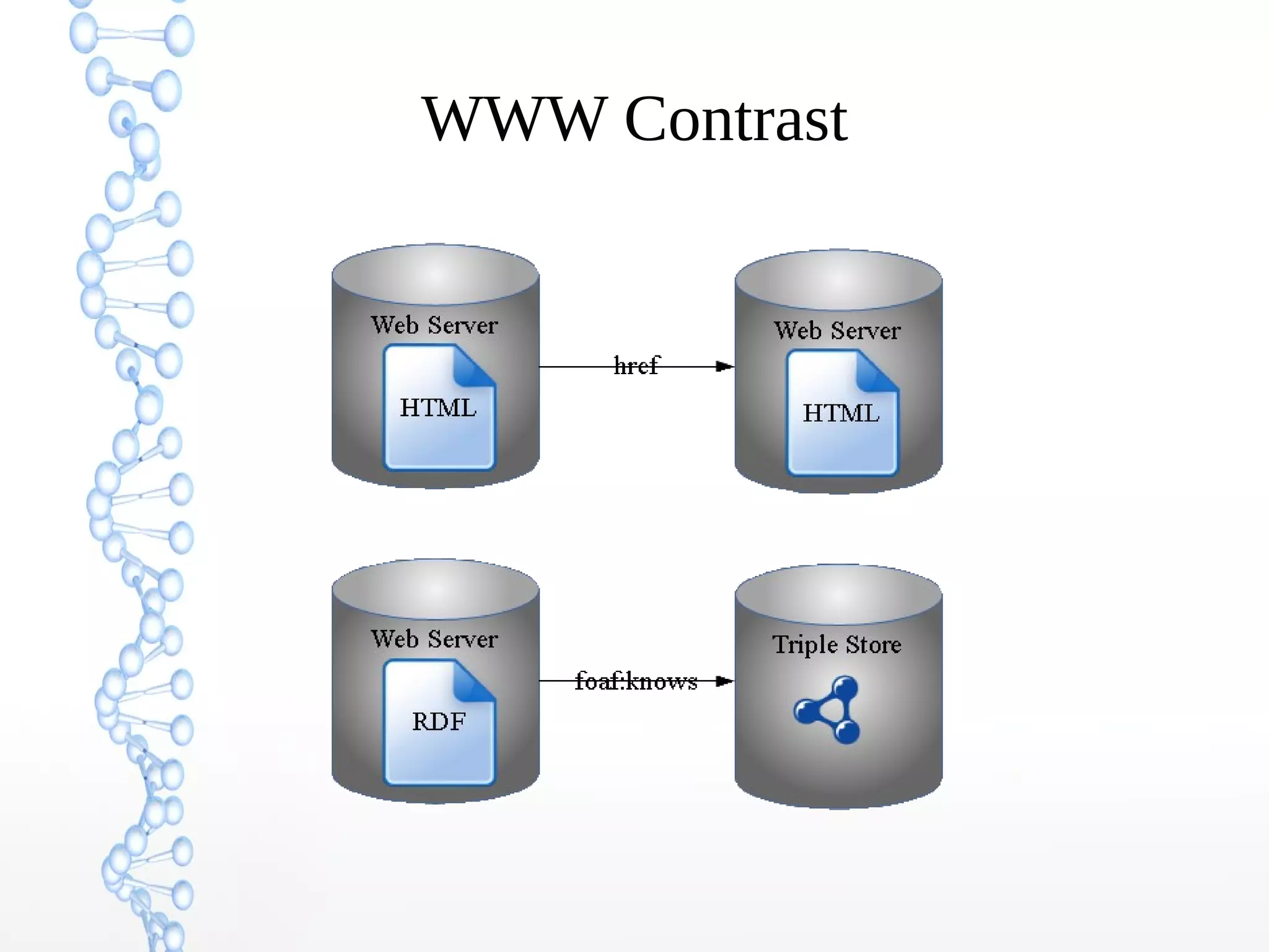

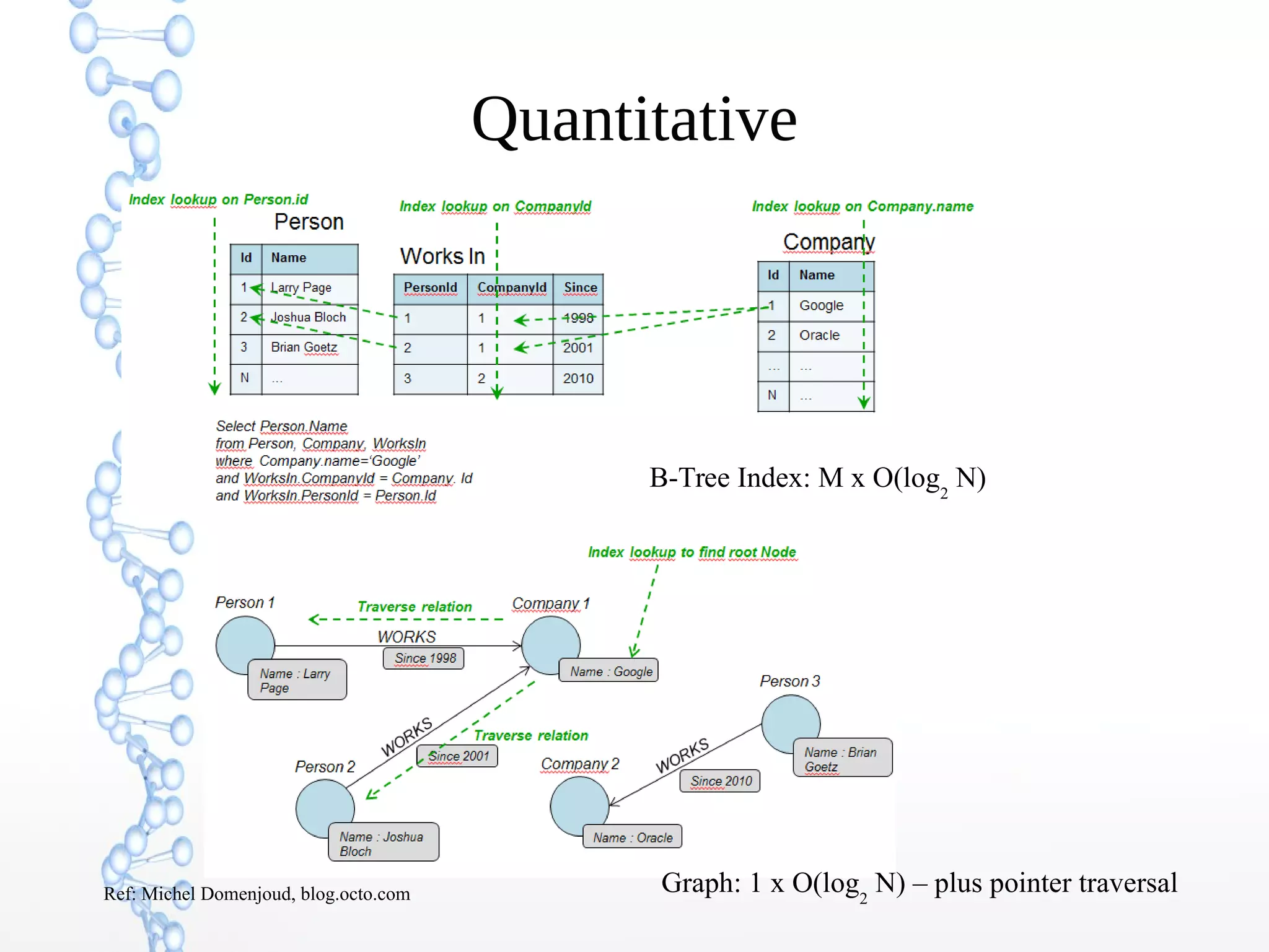

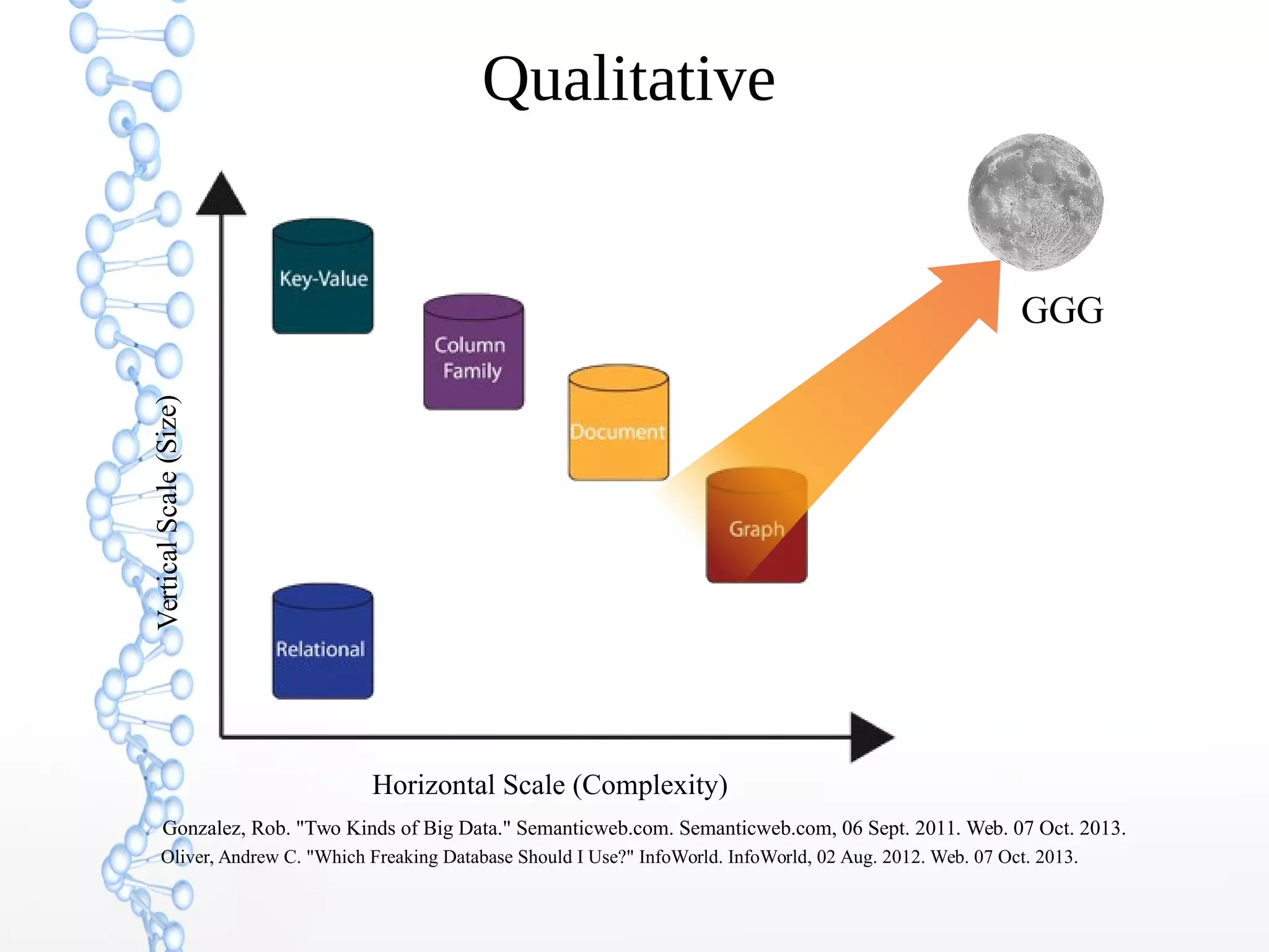

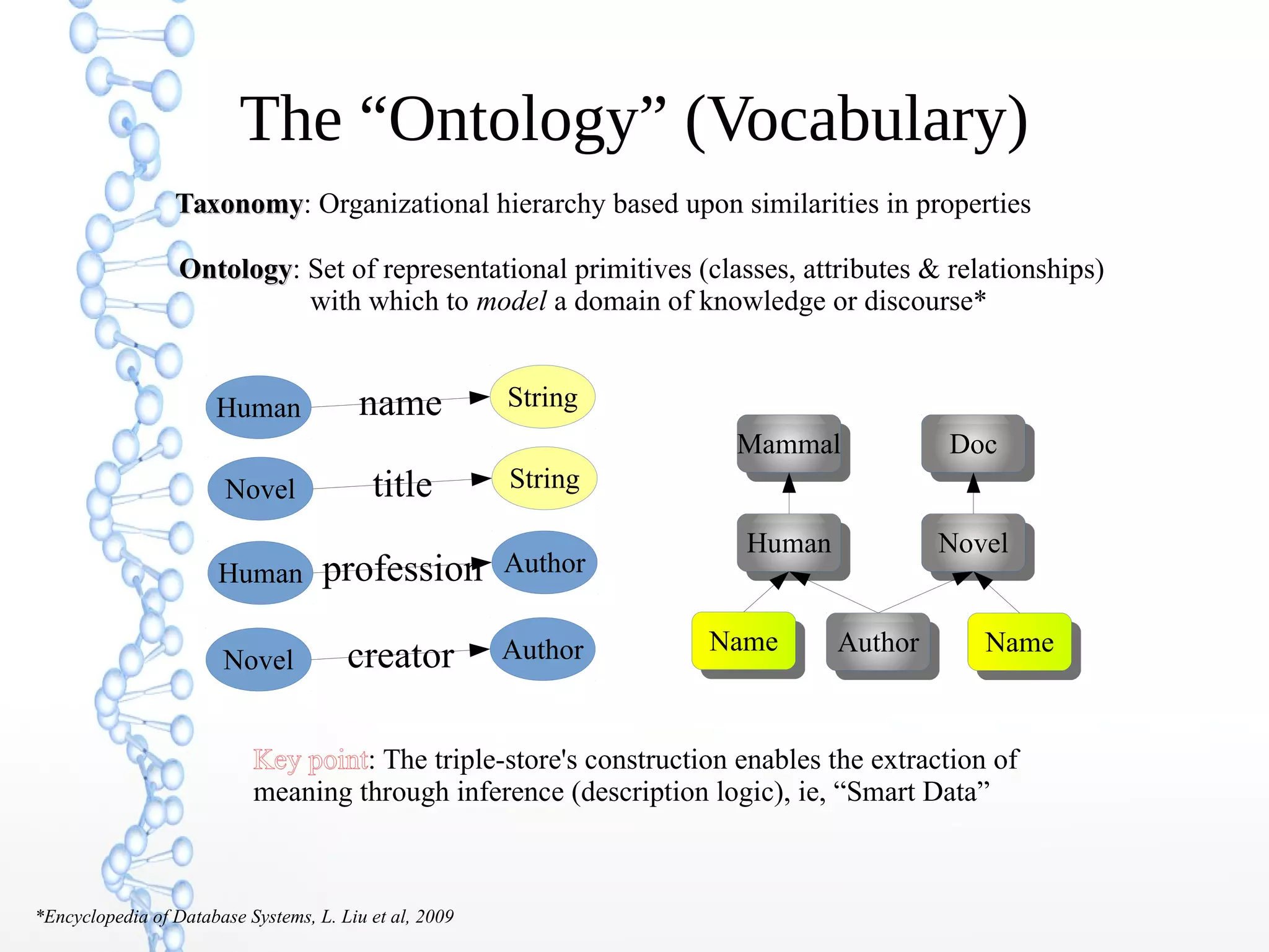

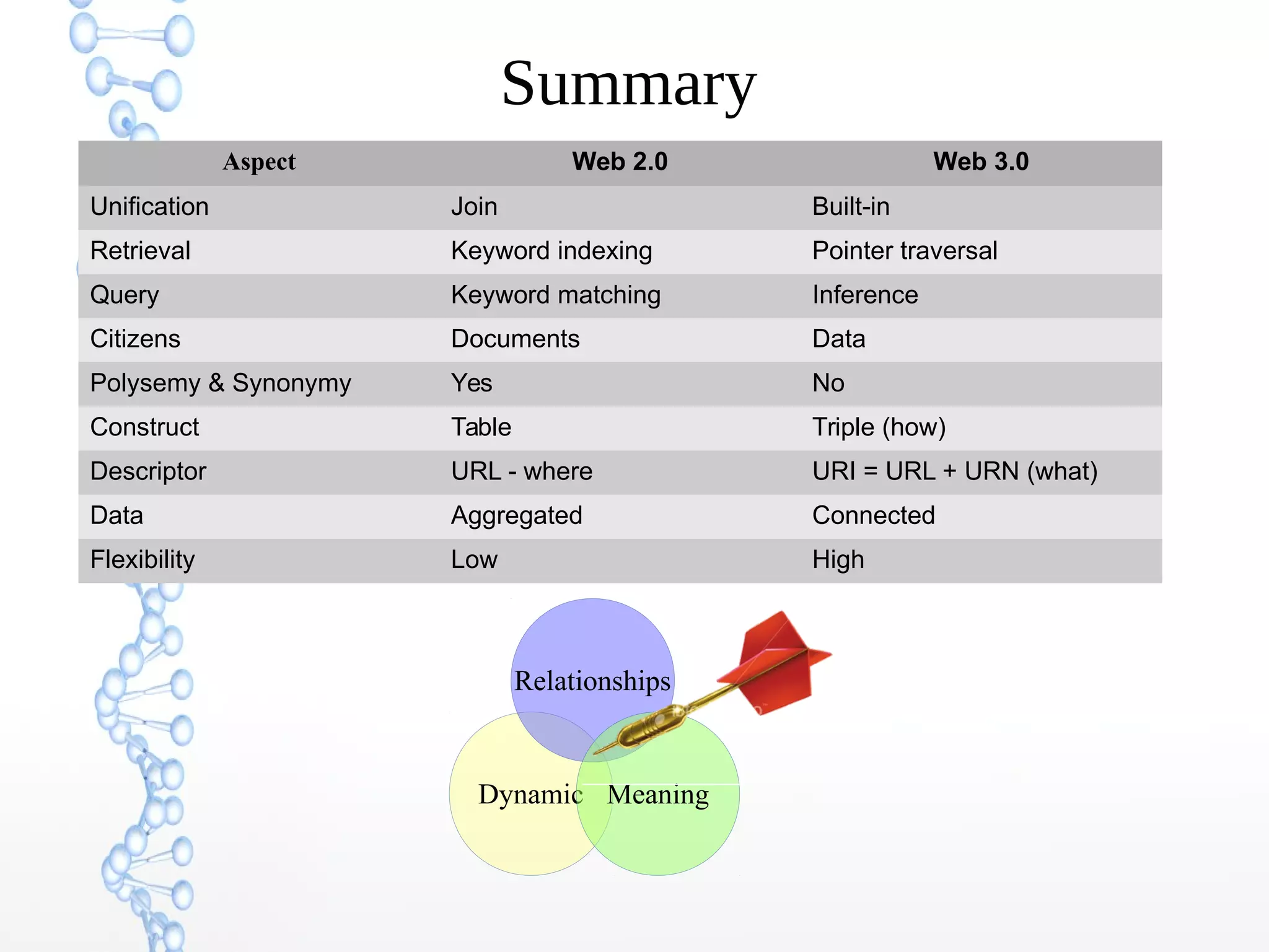

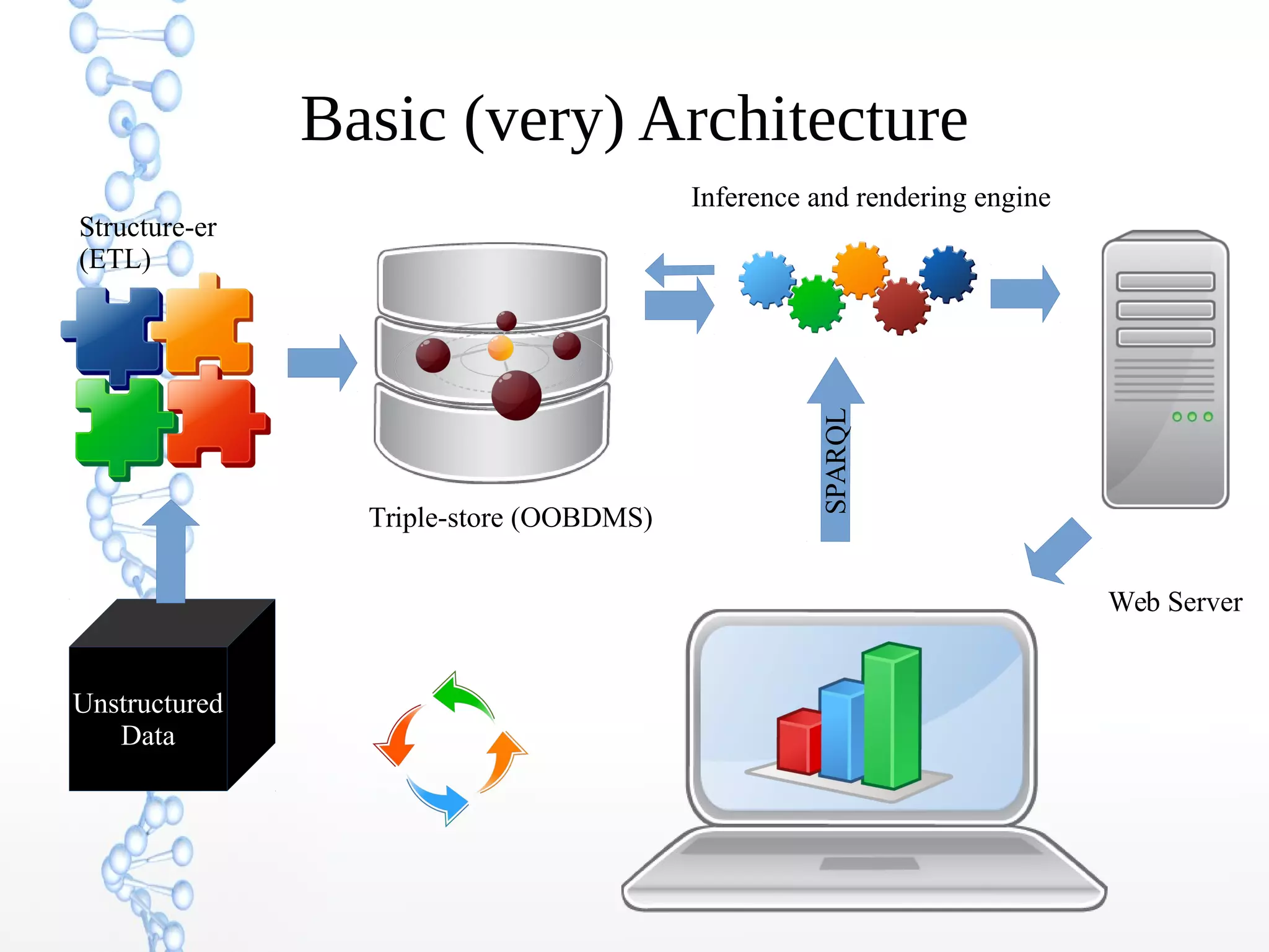

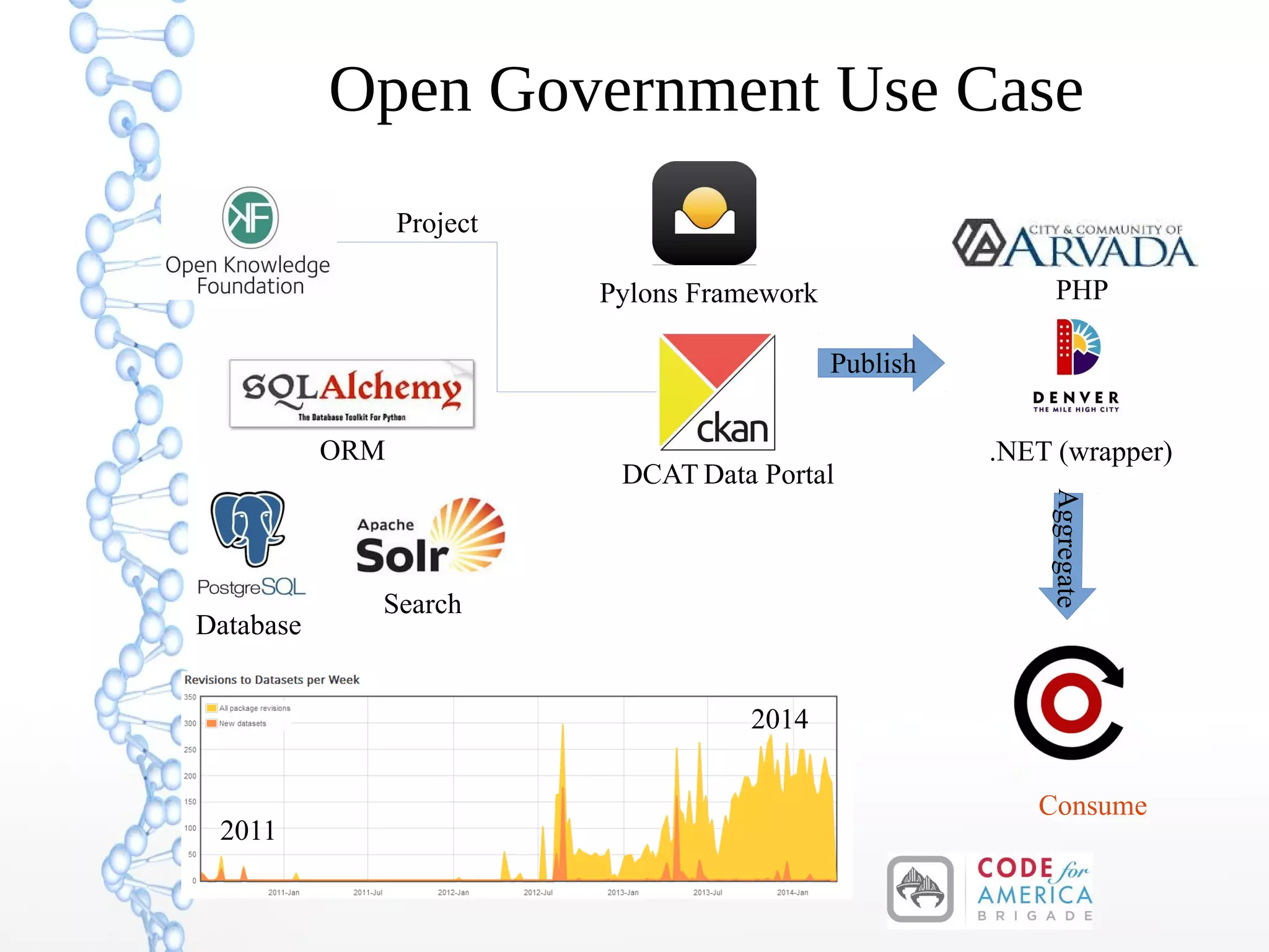

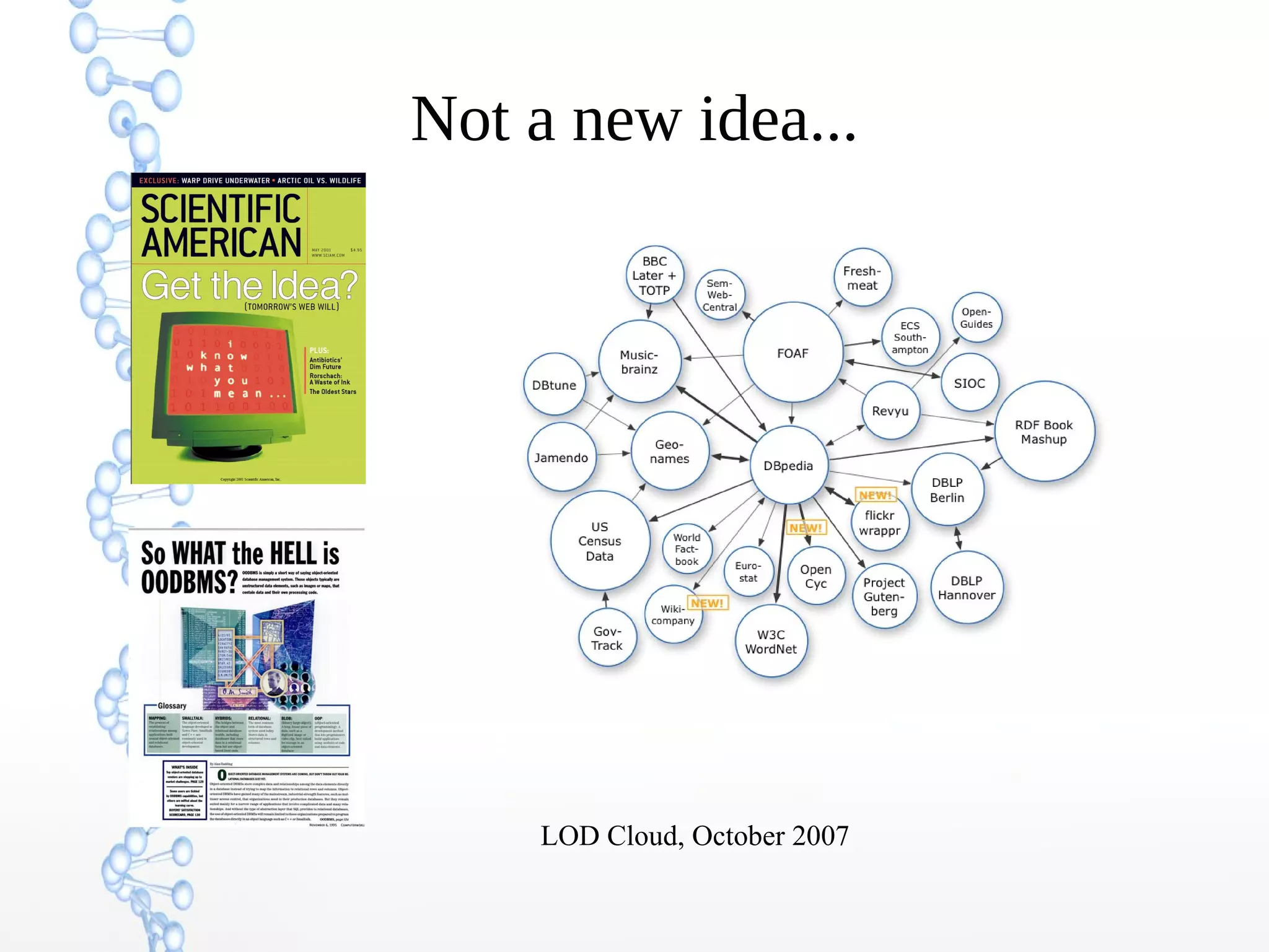

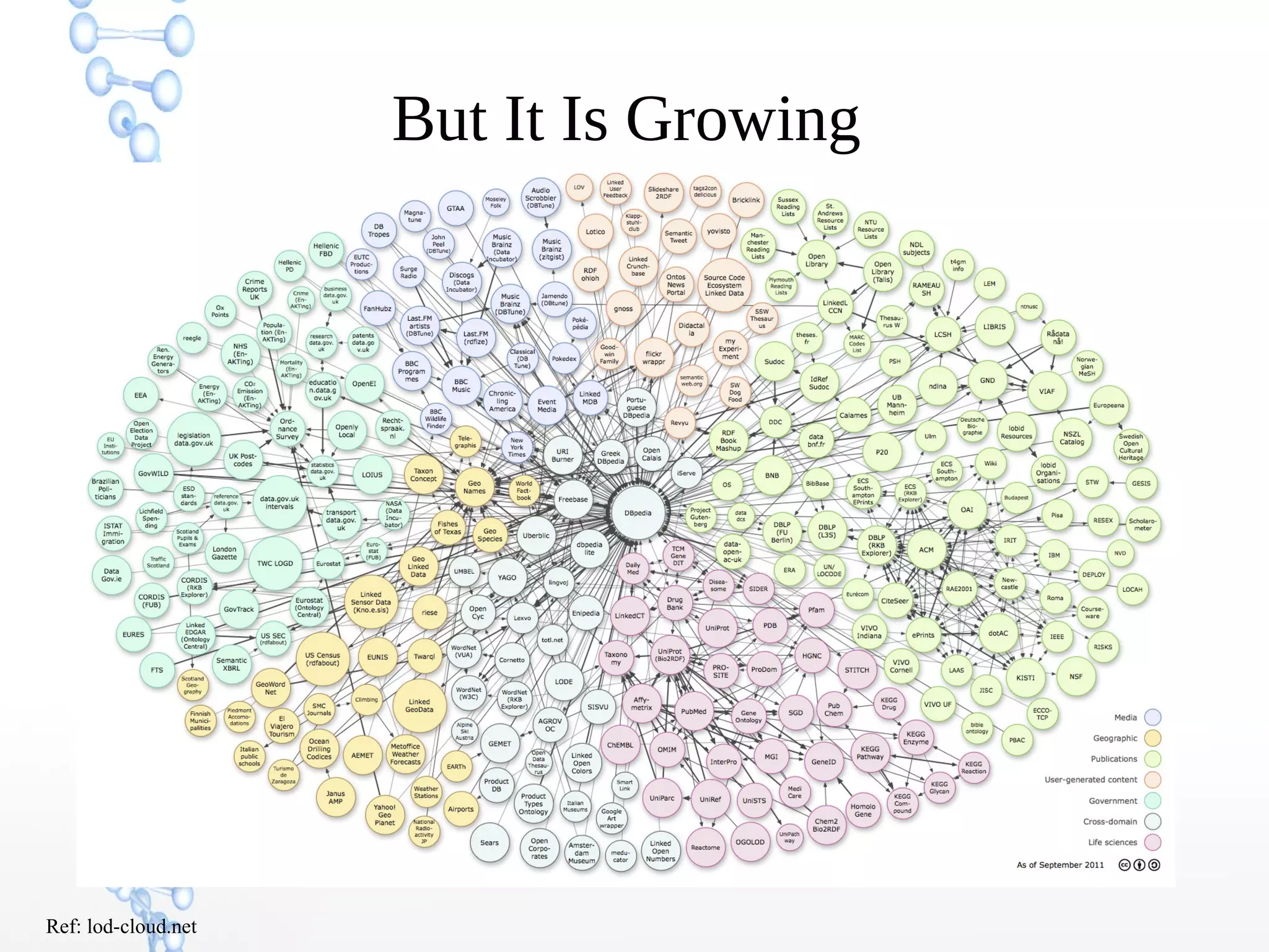

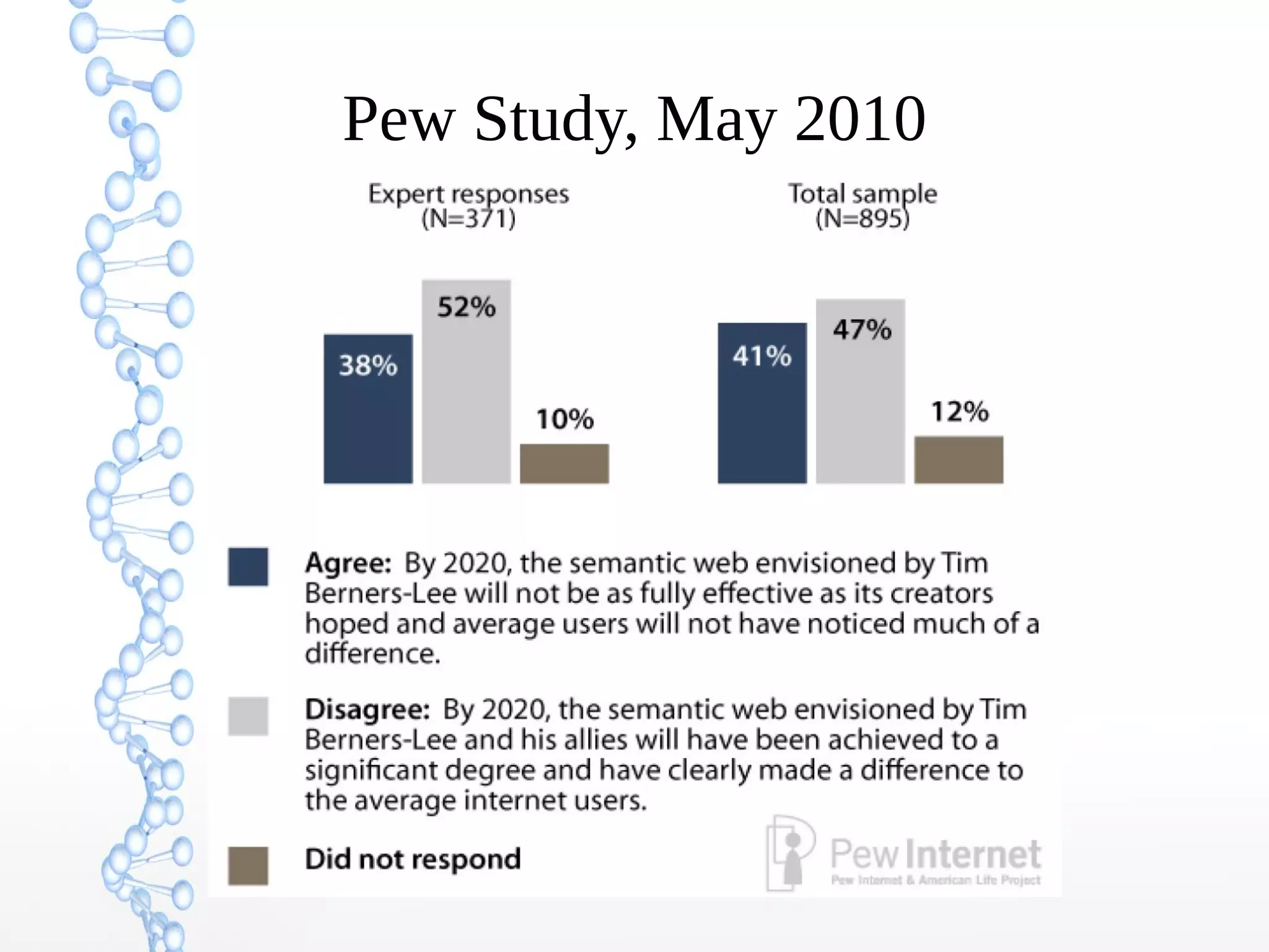

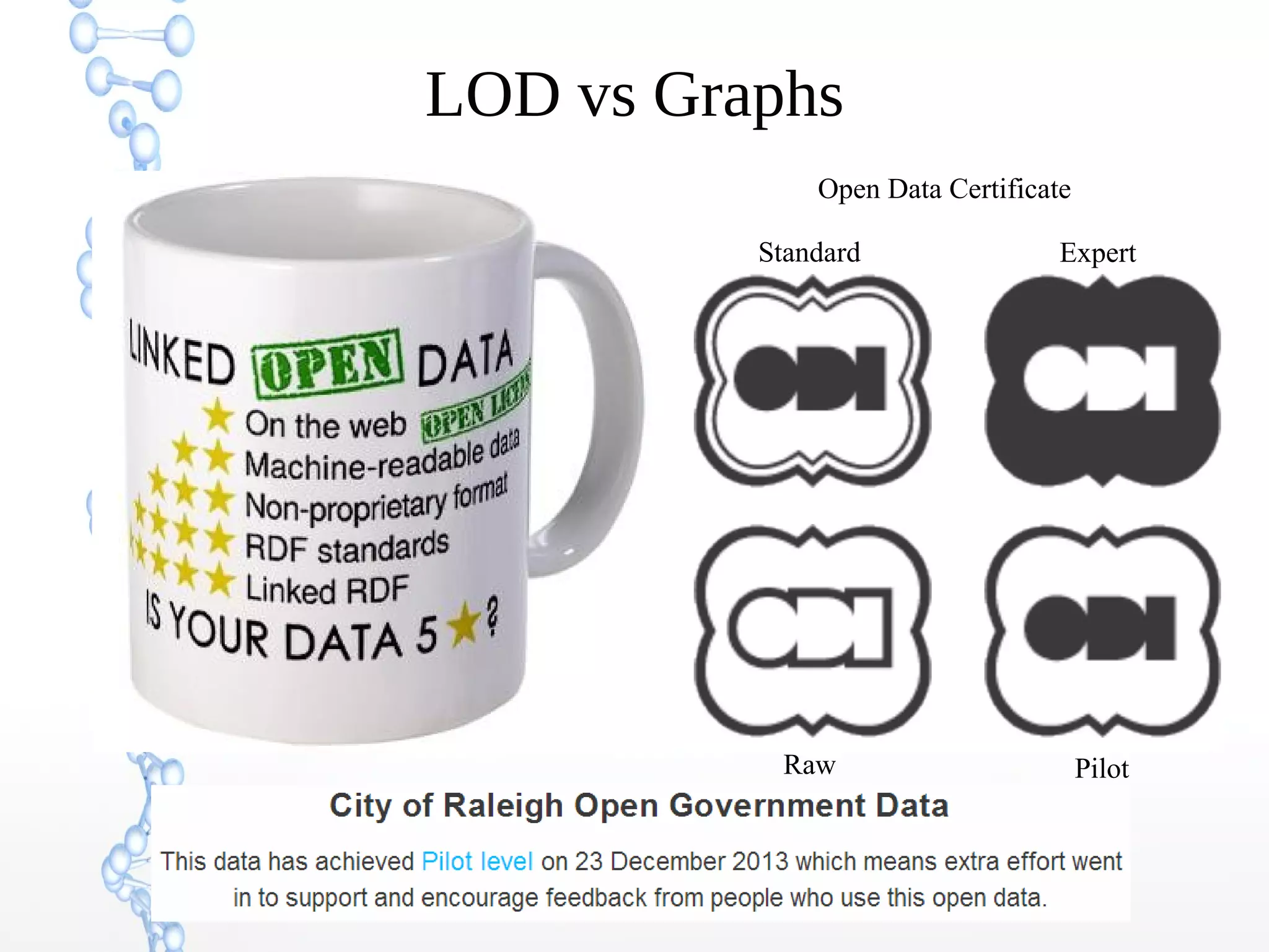

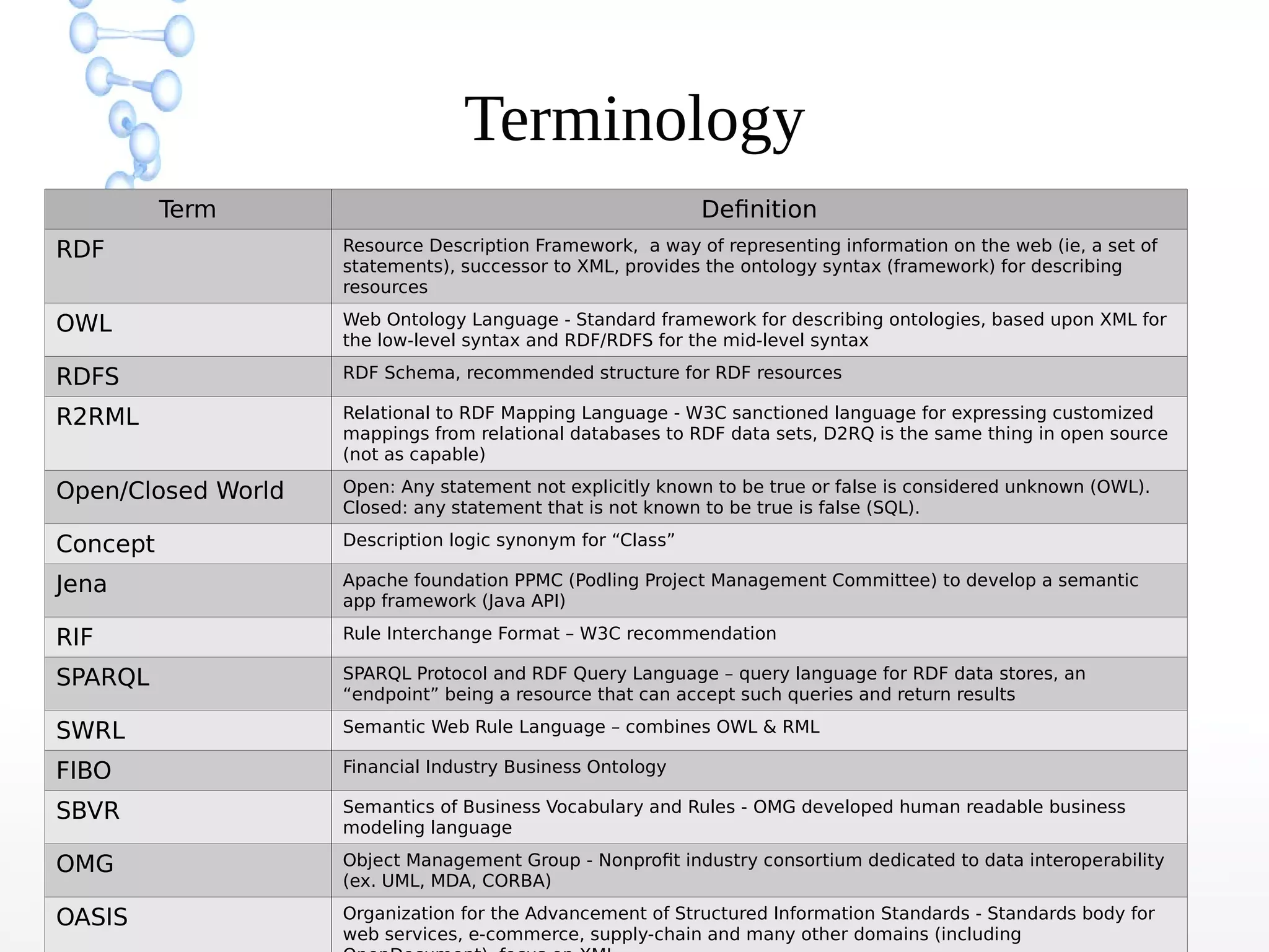

The document traces the evolution of the web from Web 1.0 to Web 3.0, focusing on the shift toward a semantic web in which data is interconnected and can be meaningfully analyzed. It highlights the limitations of traditional relational databases in managing complex, dynamic data, and argues that graph databases and semantic integration make such data more useful. It then details the key components of the semantic web (RDF, ontologies, and inference) and the role each plays in making data easier to access and understand.
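To make the RDF and inference components mentioned above more concrete, here is a minimal sketch in plain Python. It assumes nothing from the slides themselves: the `ex:` names, the sample facts, and the `infer_types` helper are all hypothetical, and a real system would use an RDF store and reasoner rather than a hand-written loop. The sketch only illustrates the general idea that facts are stored as subject-predicate-object triples and that a small ontology (here, a class hierarchy) lets new facts be derived.

```python
# Hypothetical example data: each fact is a (subject, predicate, object) triple.
triples = {
    ("ex:Alice", "rdf:type", "ex:Employee"),
    ("ex:Employee", "rdfs:subClassOf", "ex:Person"),
    ("ex:Person", "rdfs:subClassOf", "ex:Agent"),
    ("ex:Alice", "ex:worksFor", "ex:Acme"),
}


def infer_types(facts):
    """Apply two RDFS-style rules repeatedly until no new triples appear.

    Rule 1: subClassOf is transitive.
    Rule 2: an instance of a class is also an instance of its superclasses.
    """
    inferred = set(facts)
    while True:
        new = set()
        for s, p, o in inferred:
            if p != "rdfs:subClassOf":
                continue
            for s2, p2, o2 in inferred:
                # Rule 1: (s subClassOf o) and (o subClassOf o2) => (s subClassOf o2)
                if p2 == "rdfs:subClassOf" and s2 == o:
                    new.add((s, "rdfs:subClassOf", o2))
                # Rule 2: (s2 type s) and (s subClassOf o) => (s2 type o)
                if p2 == "rdf:type" and o2 == s:
                    new.add((s2, "rdf:type", o))
        if new <= inferred:
            return inferred
        inferred |= new


closure = infer_types(triples)
alice_types = sorted(
    o for s, p, o in closure if s == "ex:Alice" and p == "rdf:type"
)
print(alice_types)  # ['ex:Agent', 'ex:Employee', 'ex:Person']
```

Only one type is asserted for `ex:Alice`; the other two are derived from the class hierarchy. A relational schema would typically need those rows to be stored or joined explicitly.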