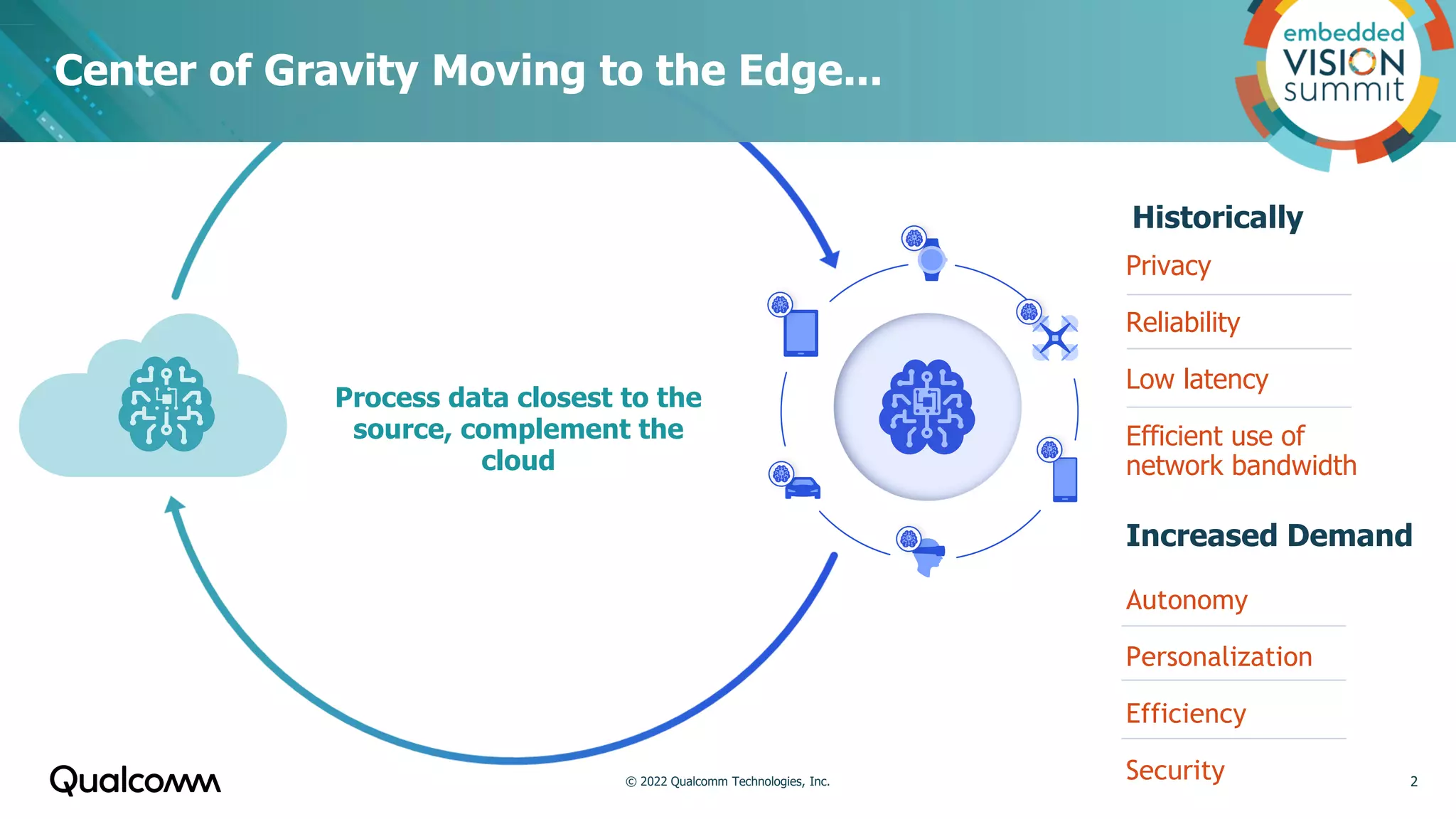

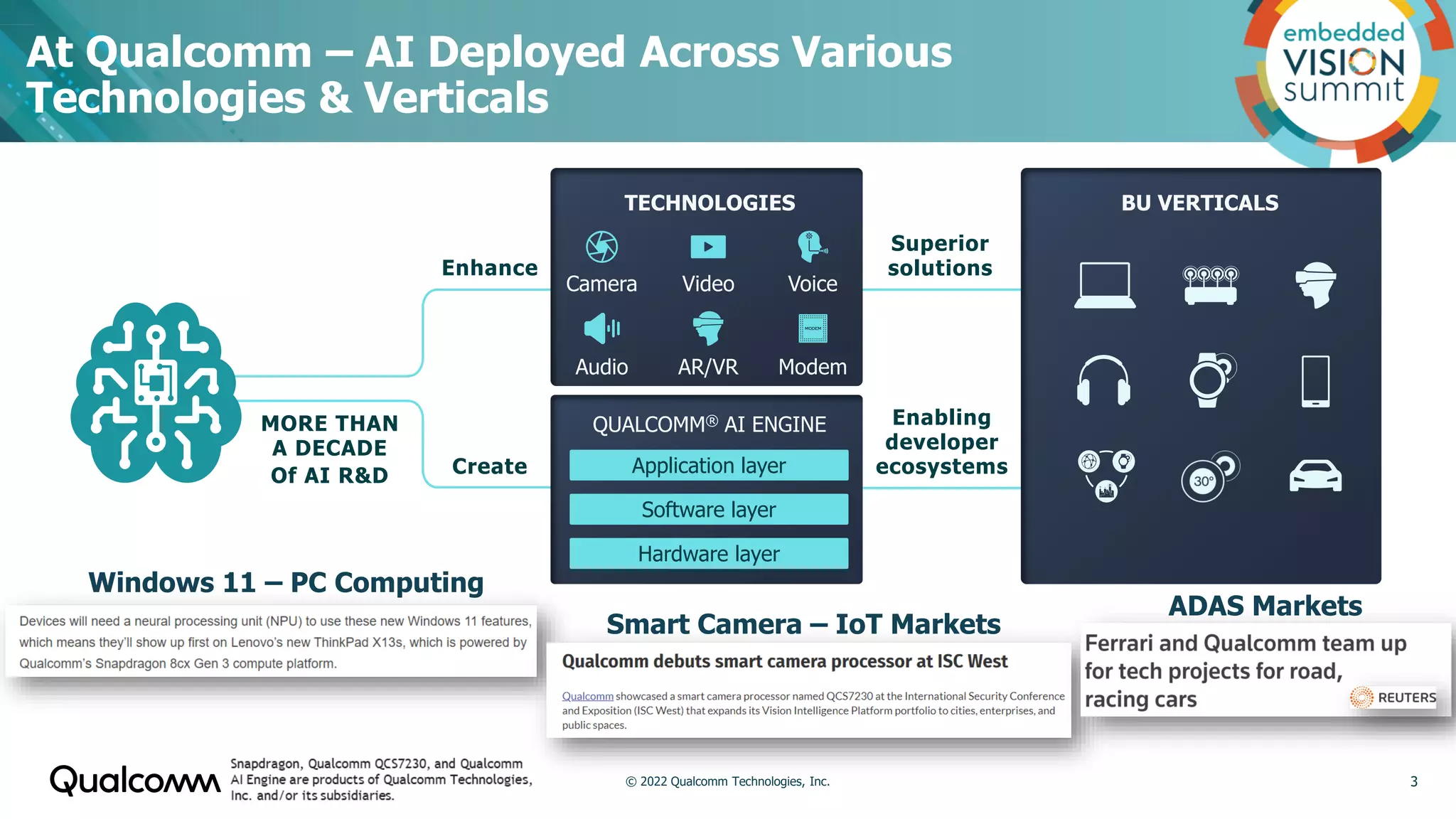

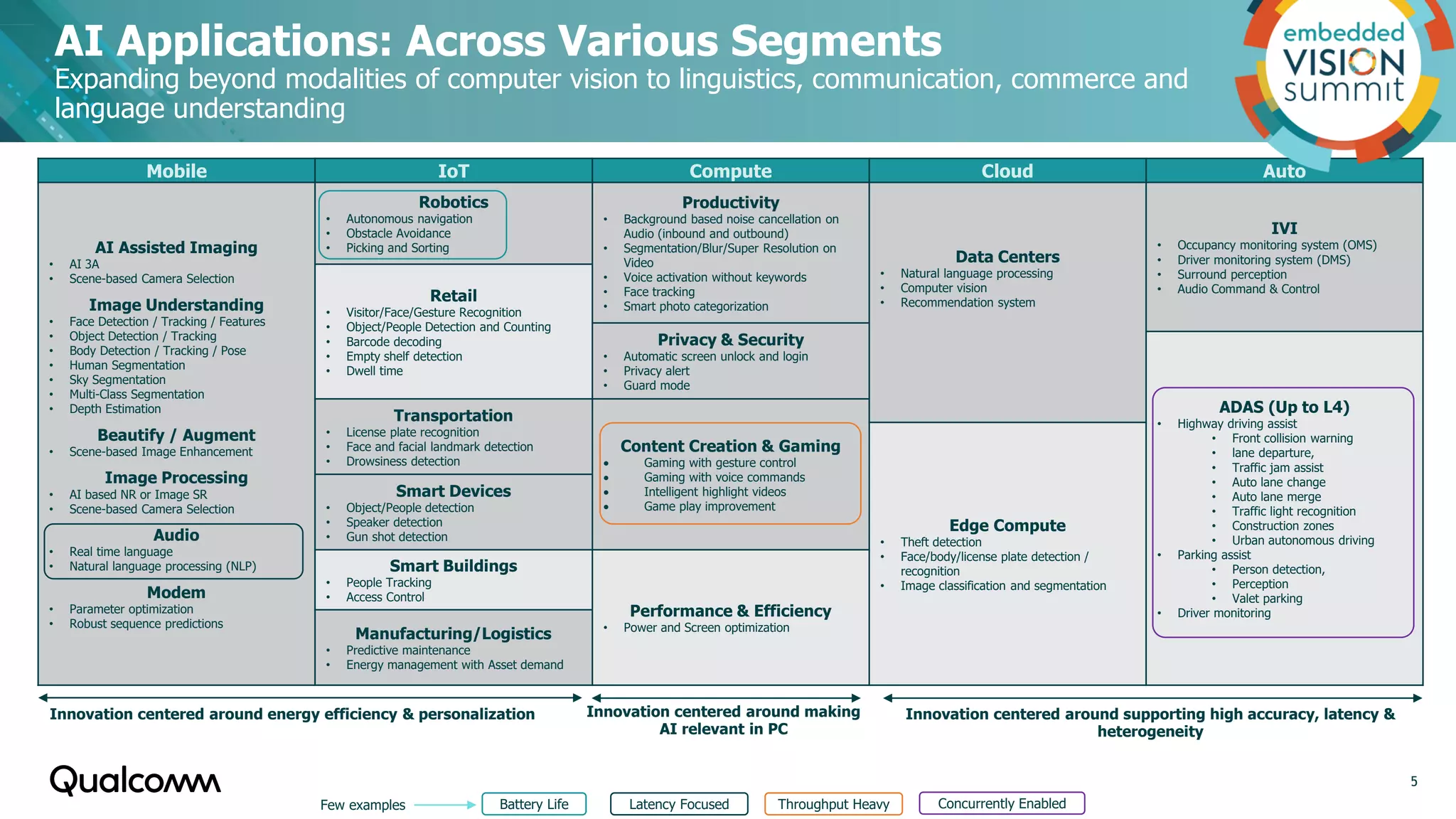

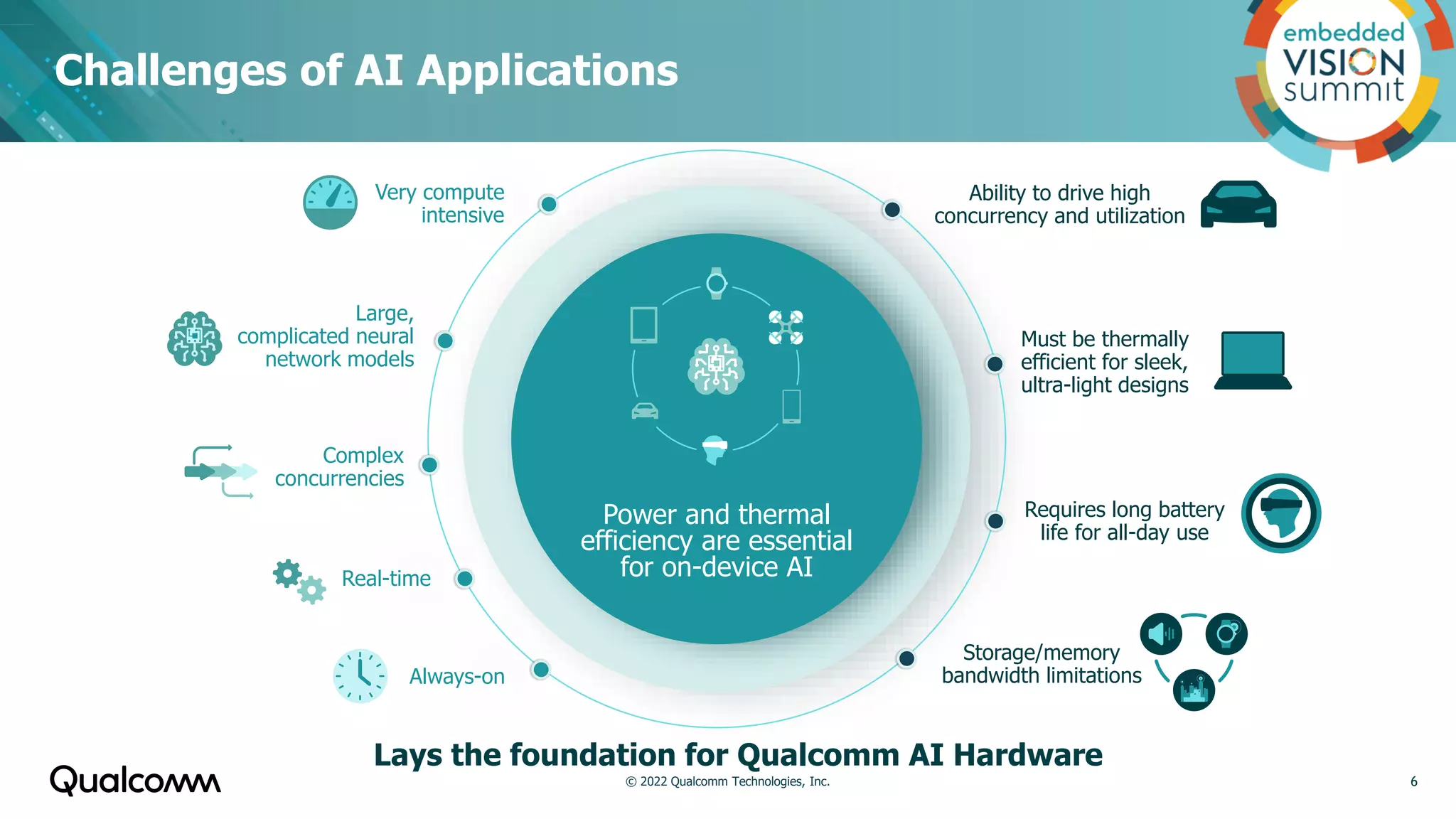

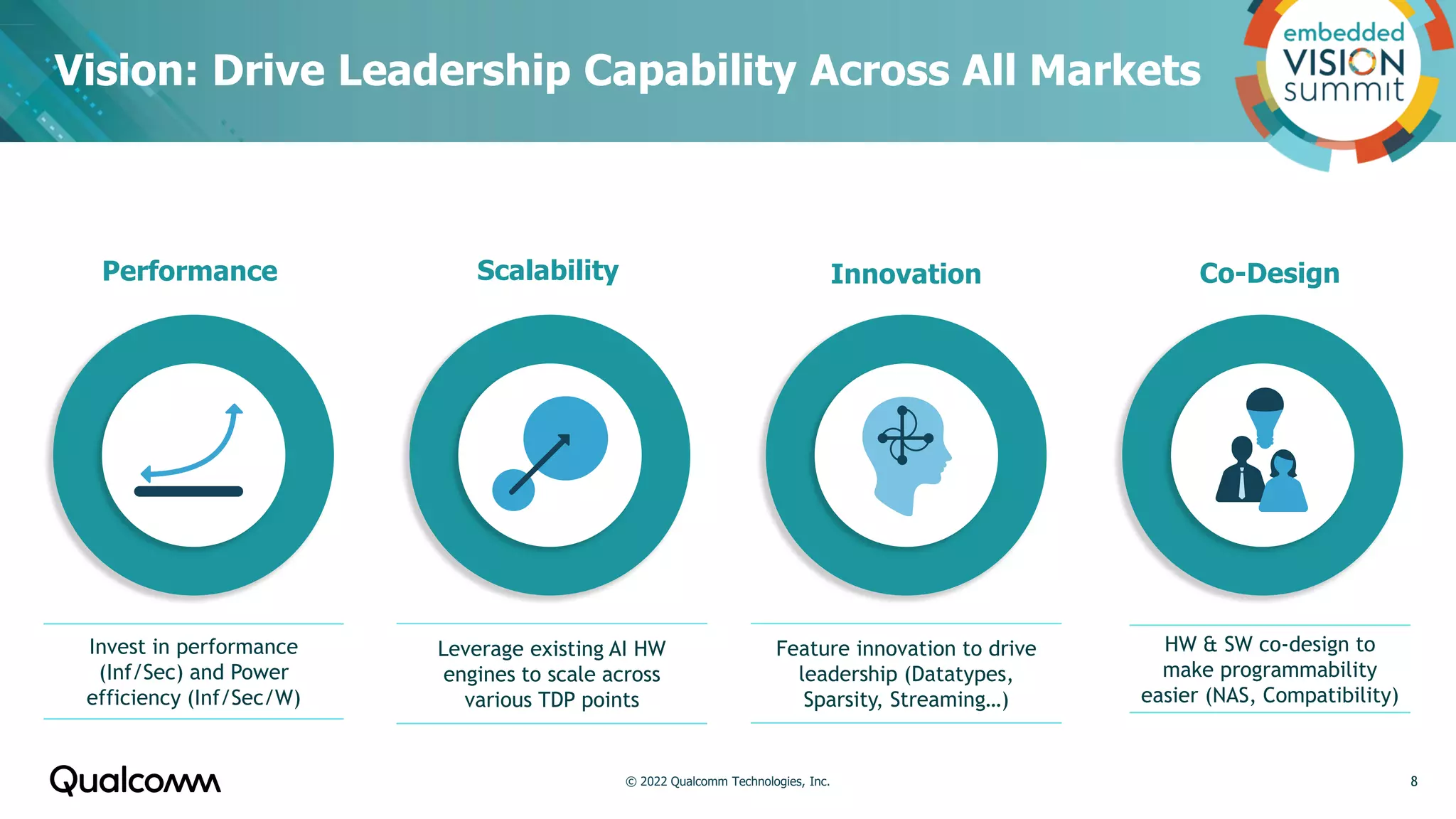

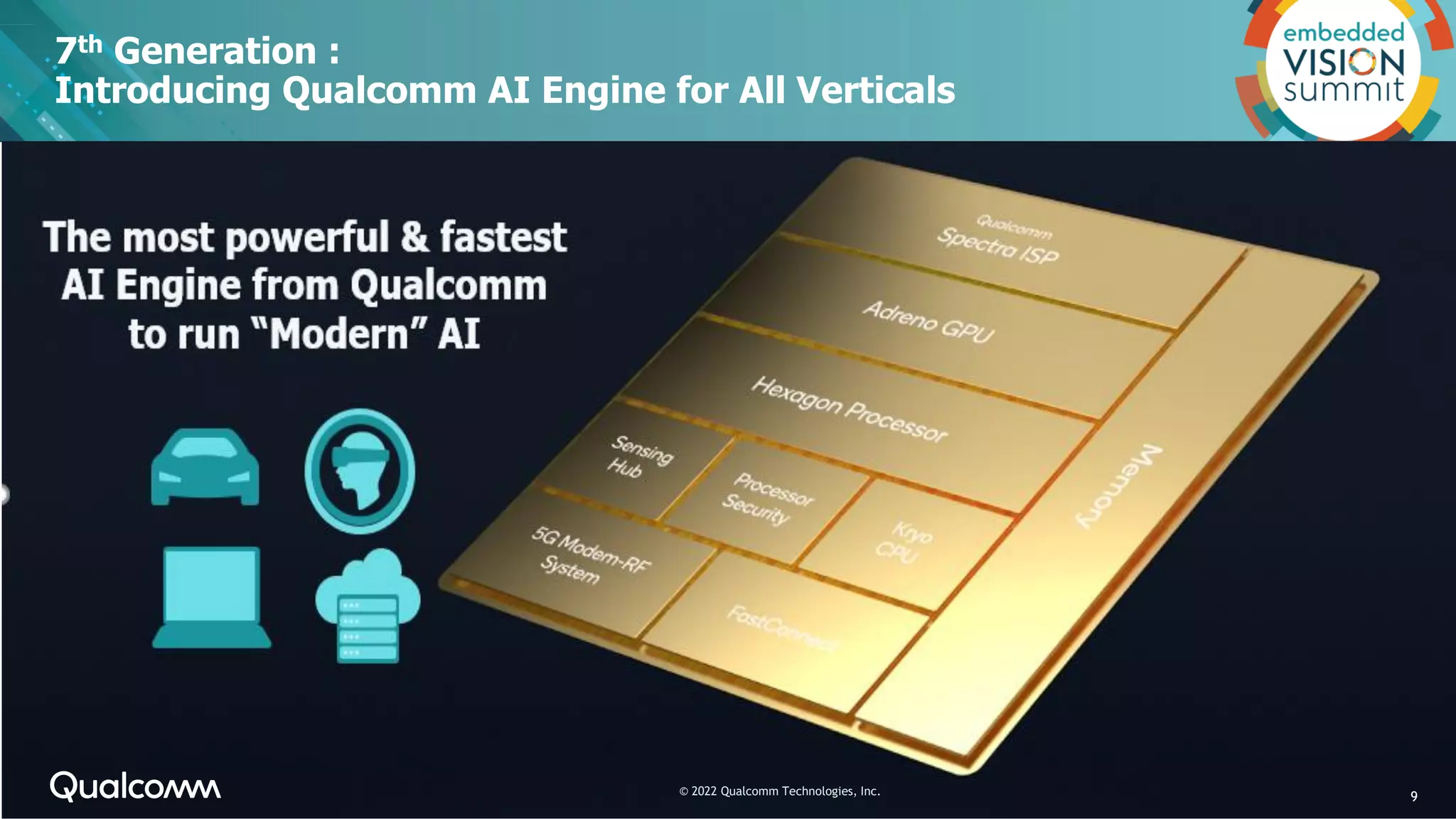

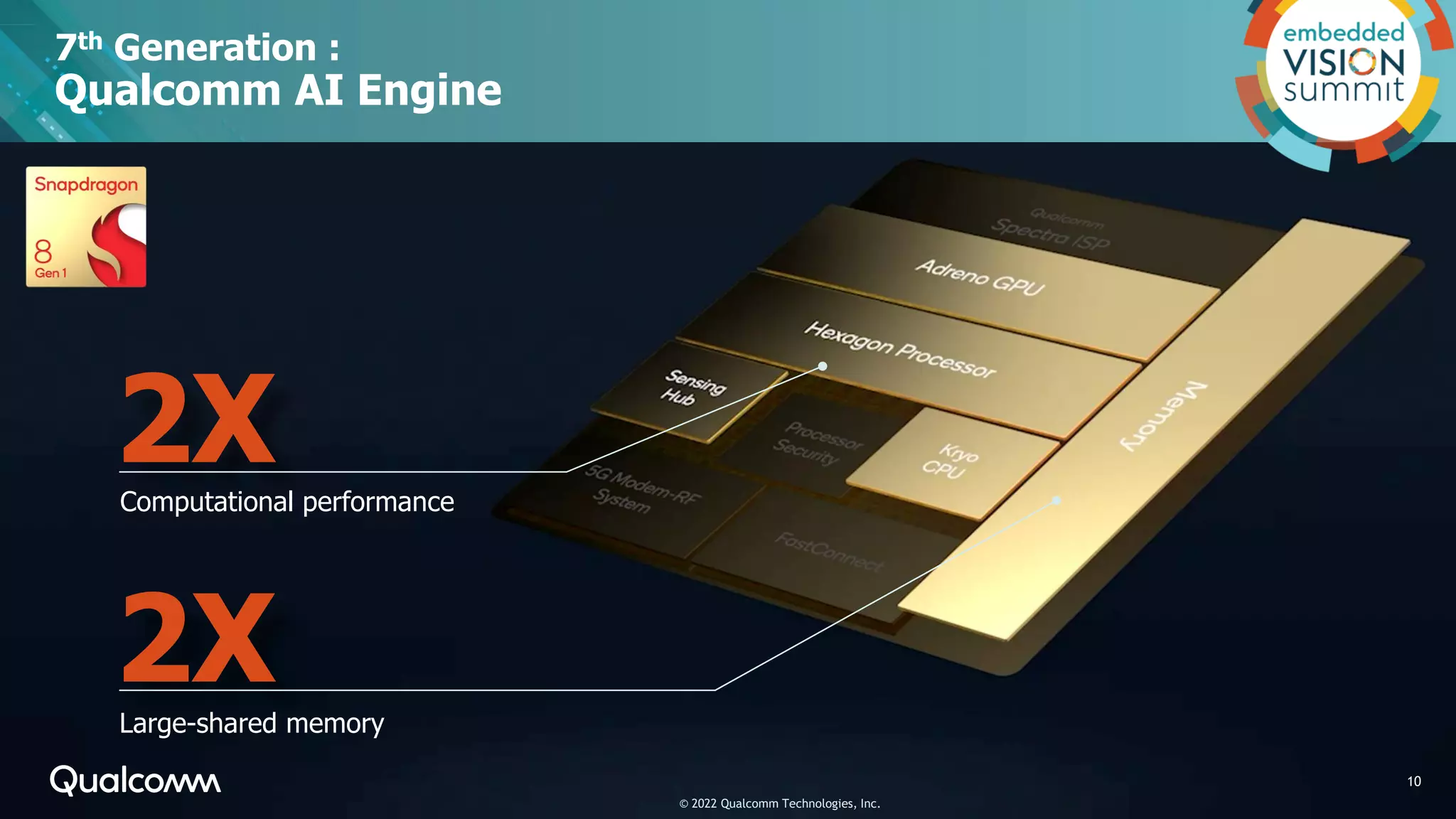

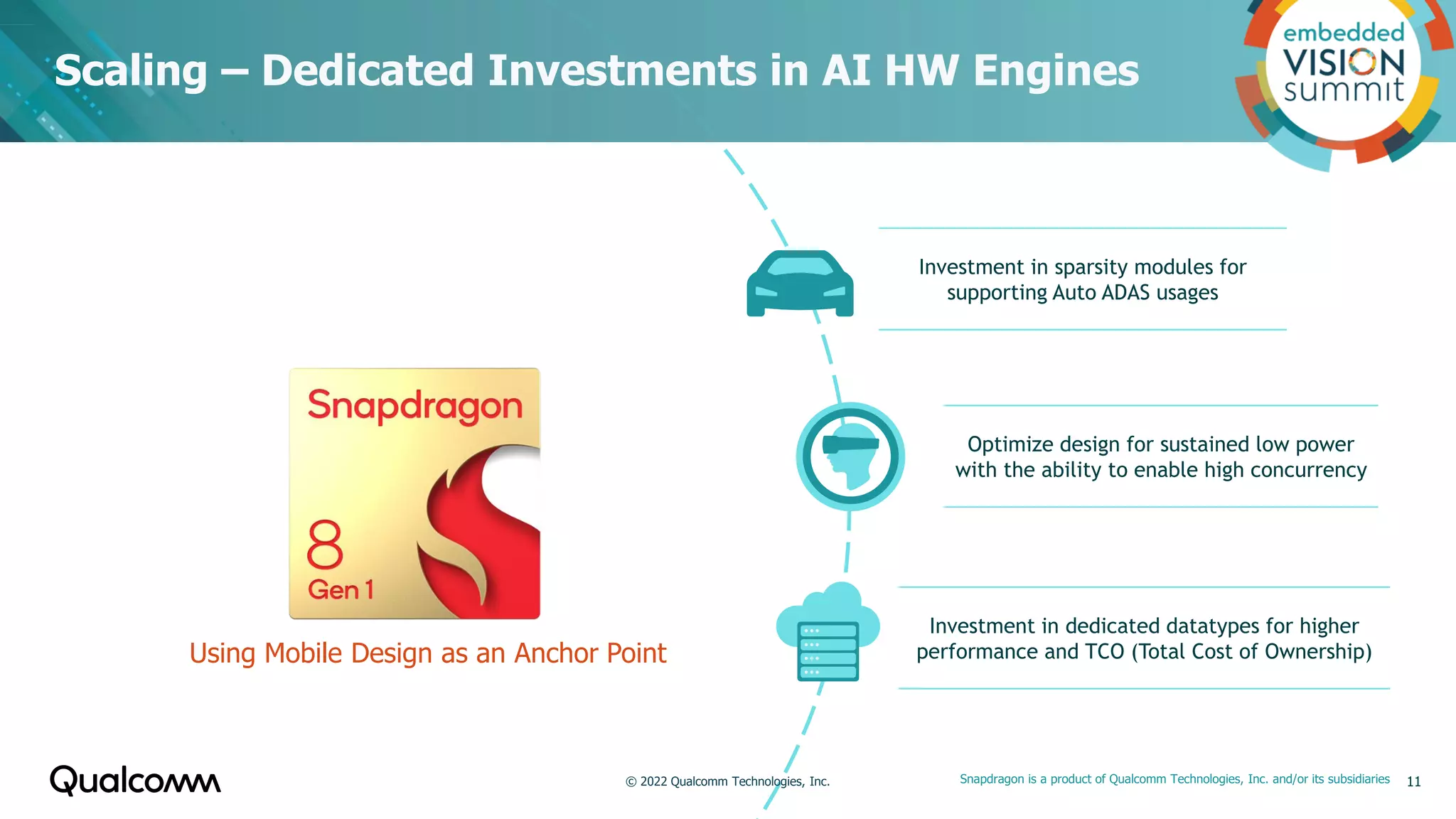

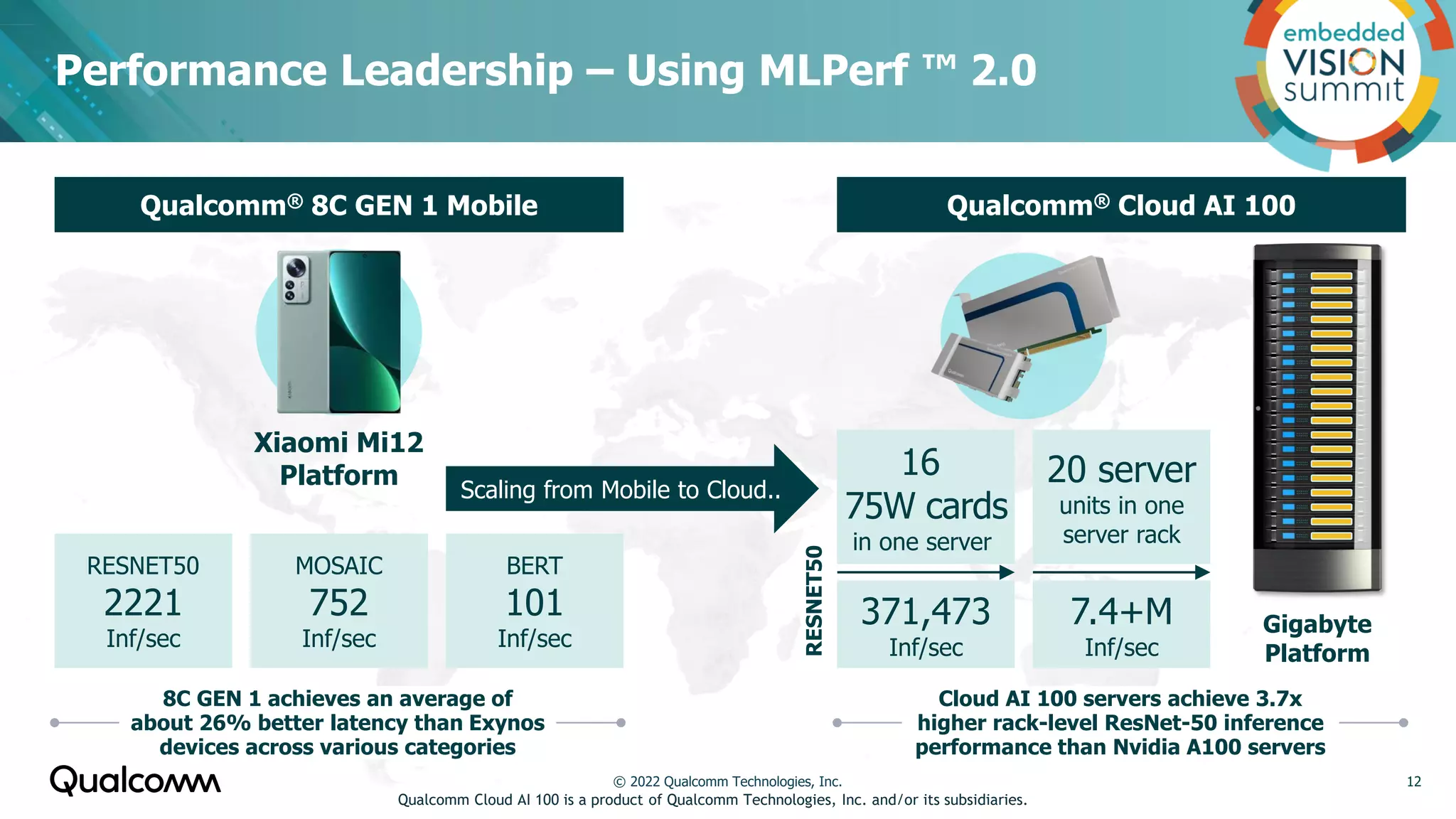

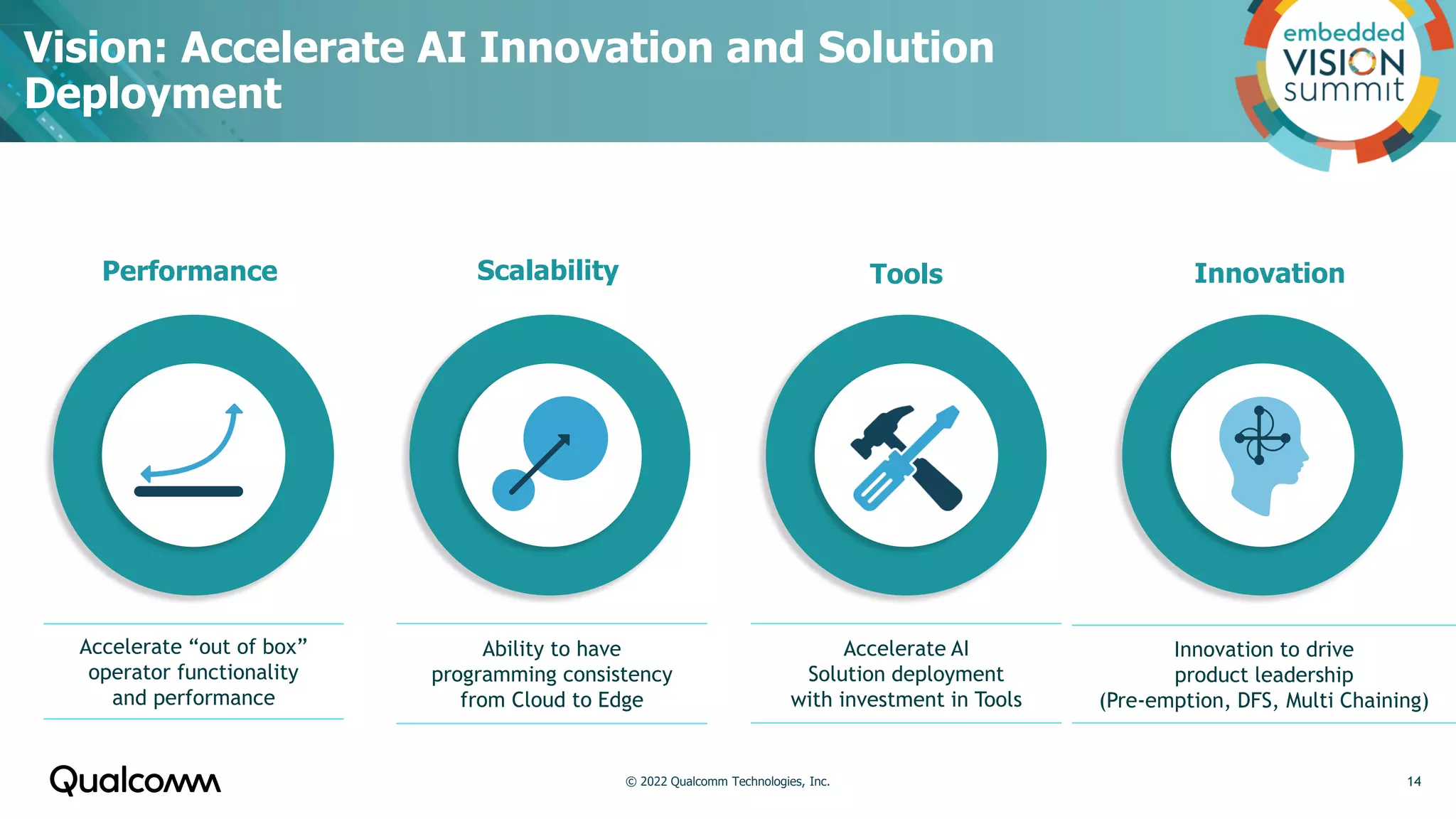

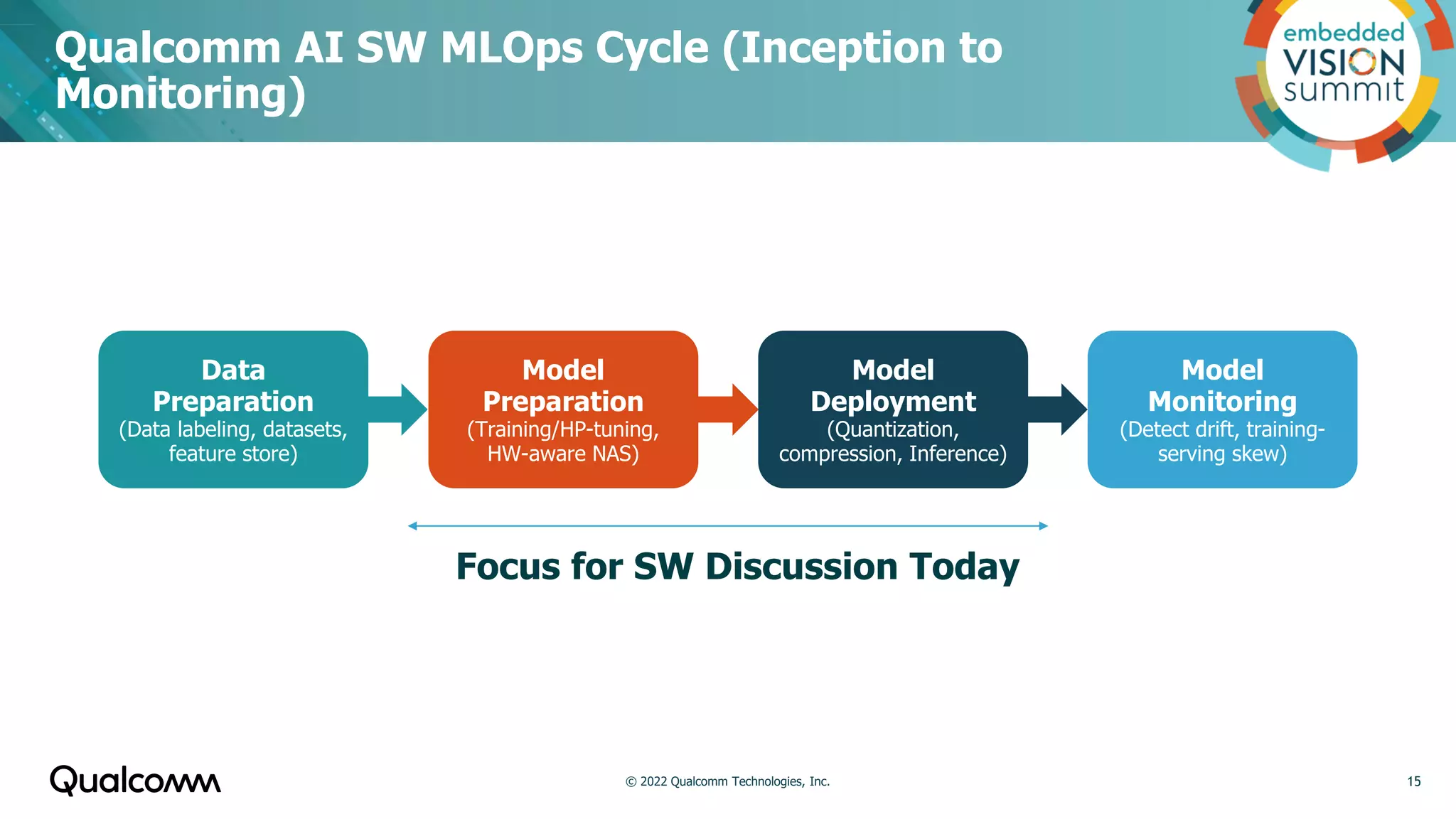

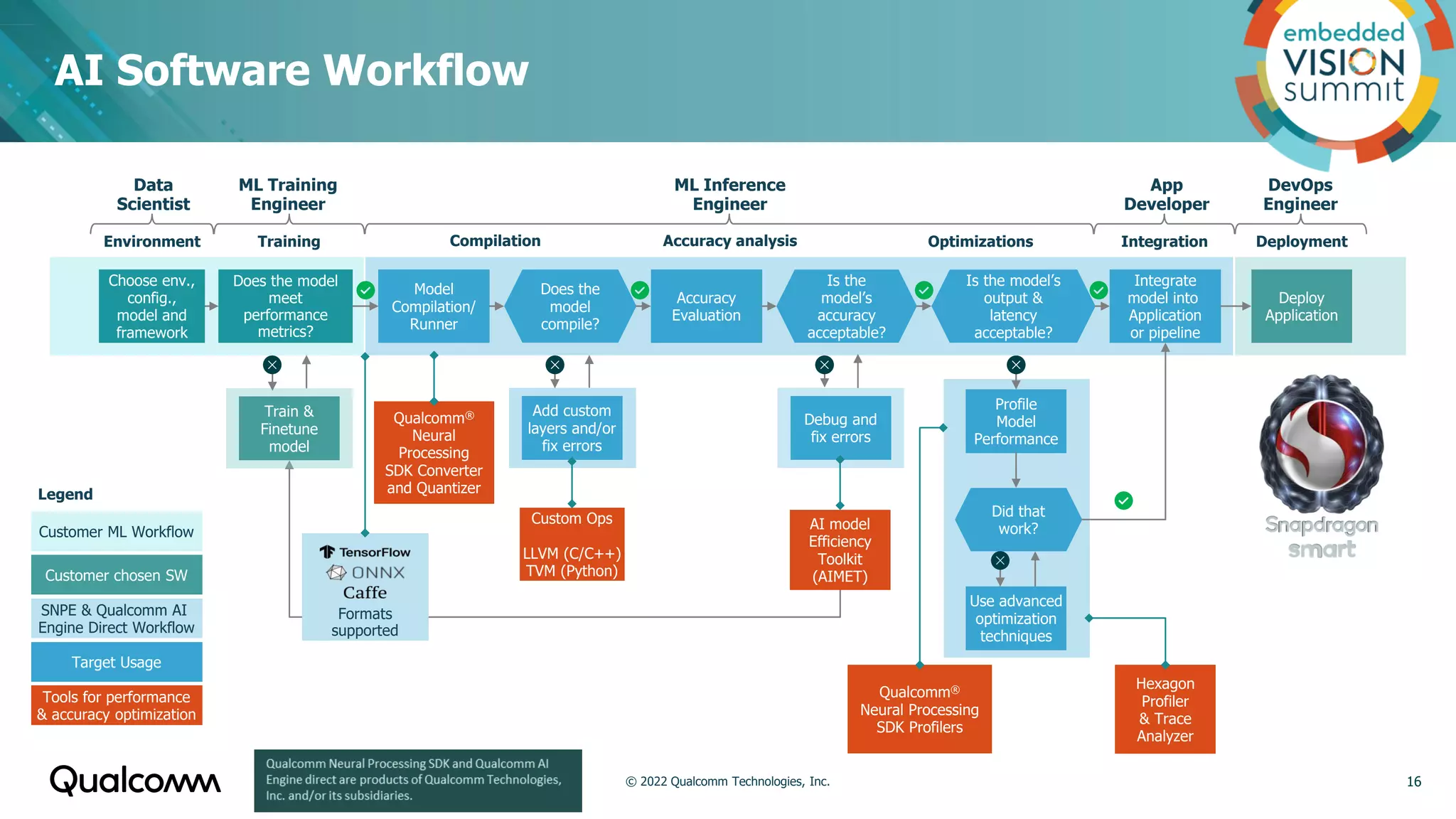

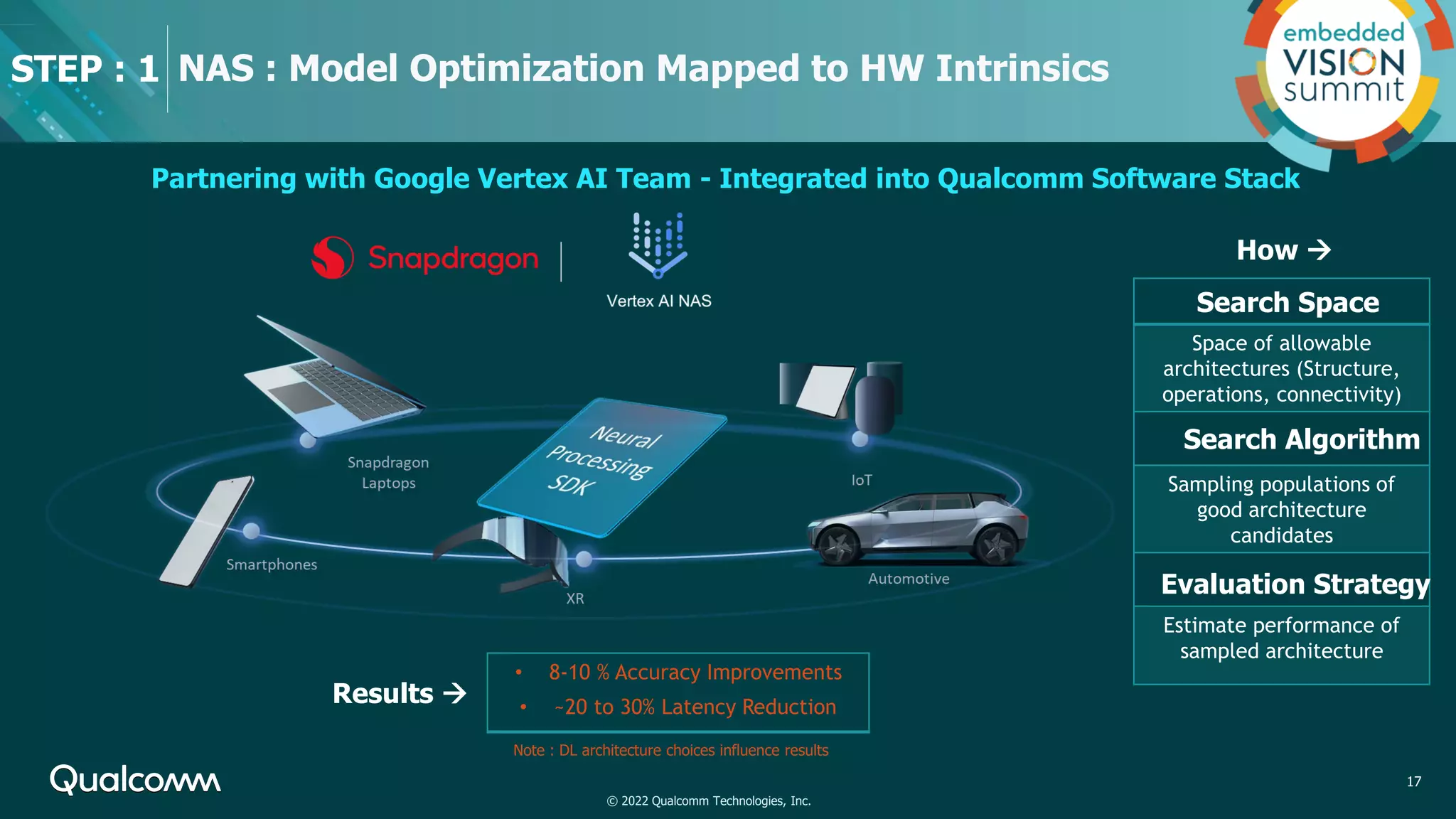

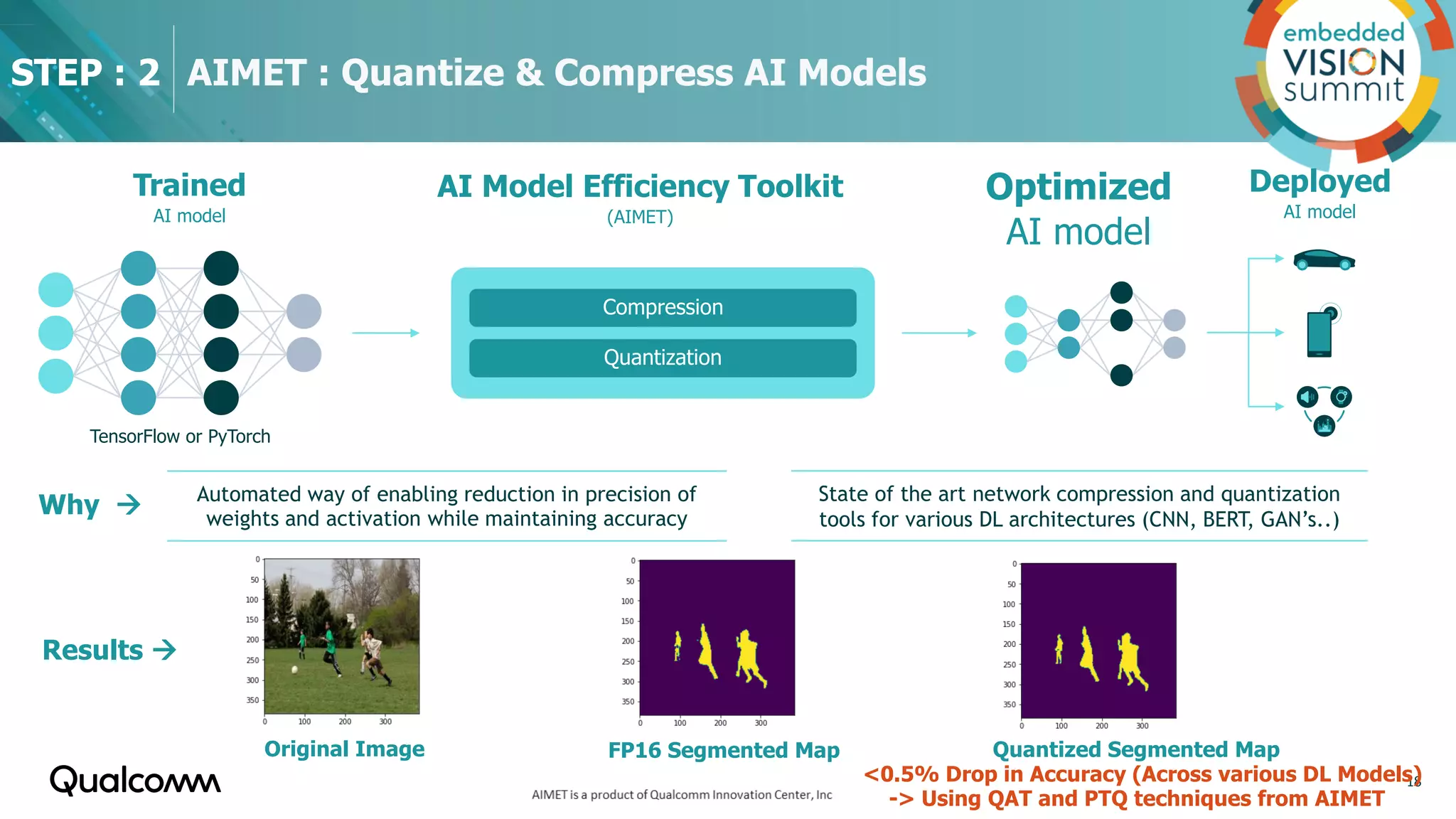

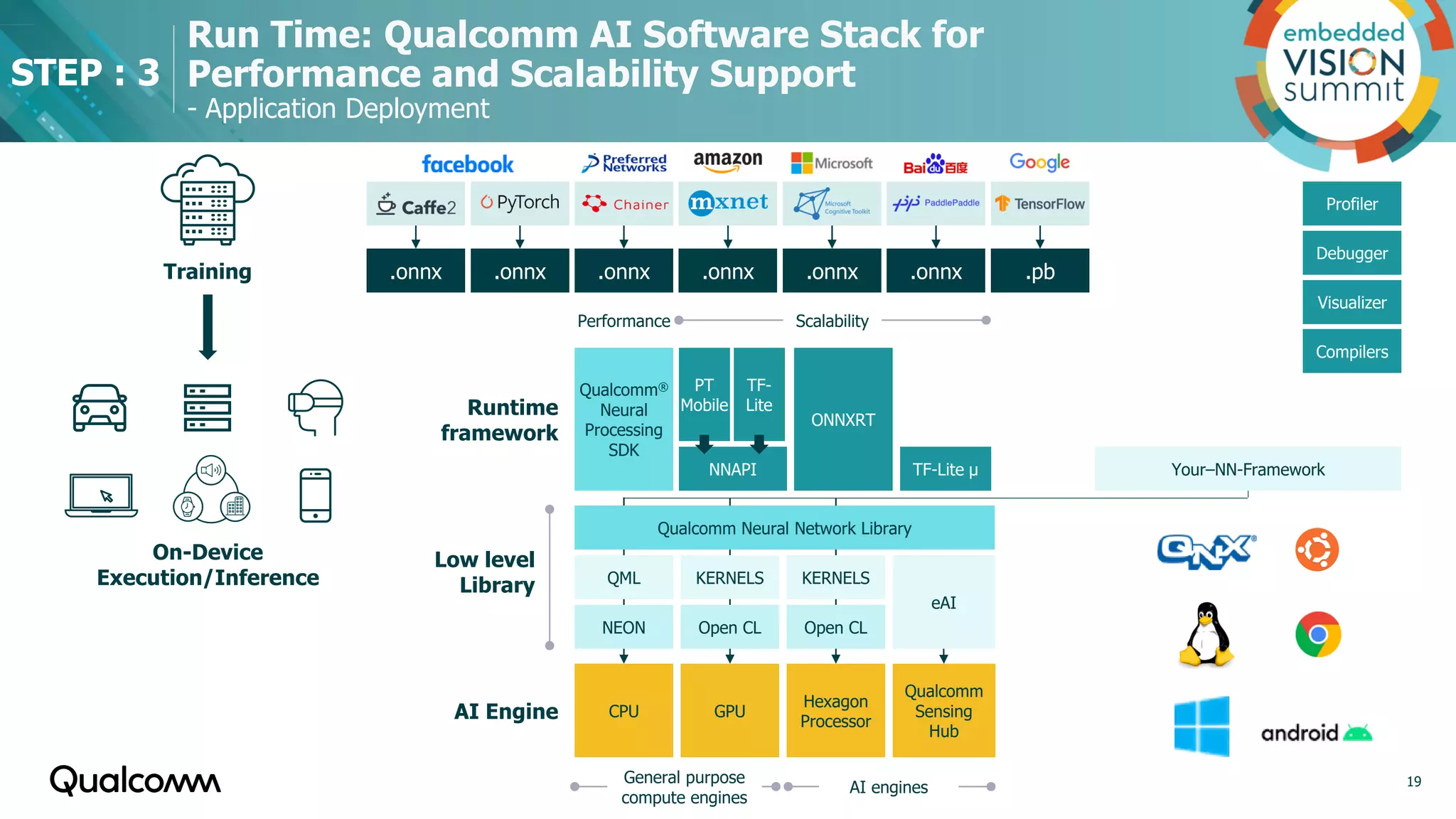

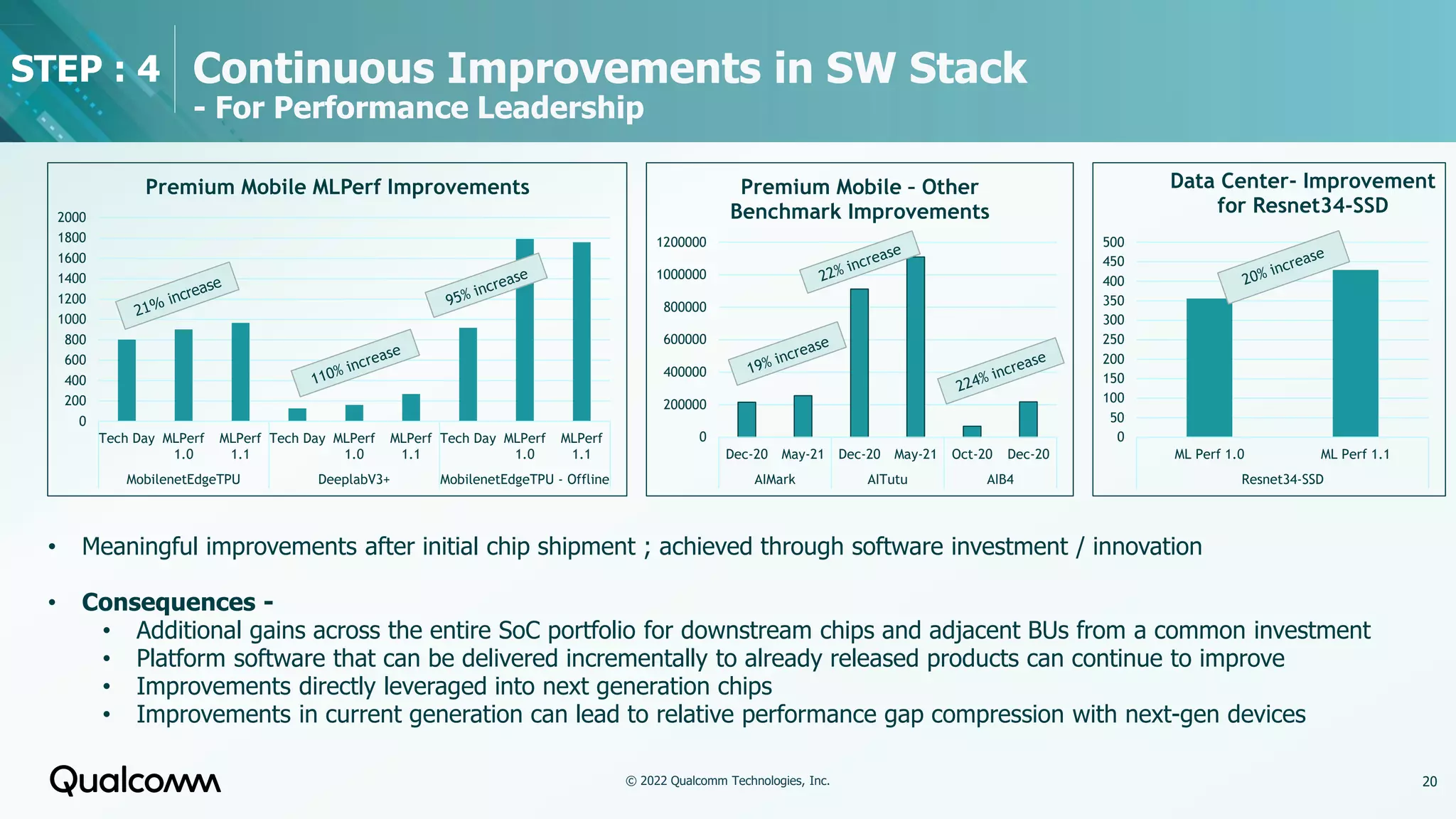

Qualcomm's AI offering centers on on-device AI: running models at the edge on the device itself, which Qualcomm presents as improving privacy, reliability, and latency. The technology targets a range of industries, including automotive, IoT, and smart devices, and builds on Qualcomm's AI research together with its own hardware and software. Ongoing investment aims to improve performance, energy efficiency, and scalability, which Qualcomm positions as making it a leader in AI solutions.