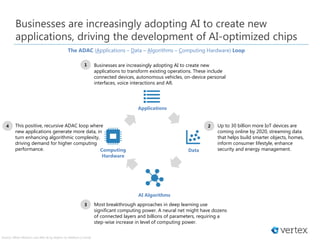

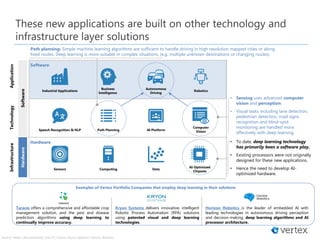

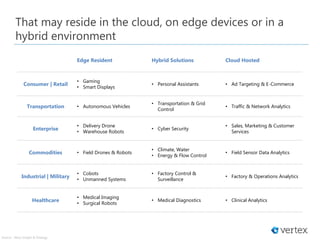

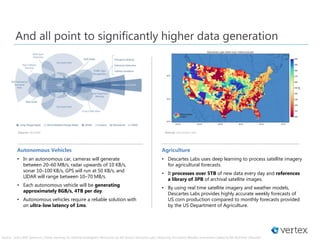

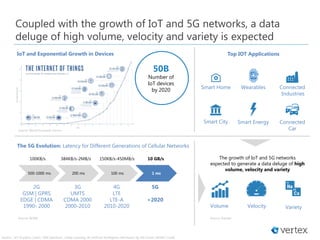

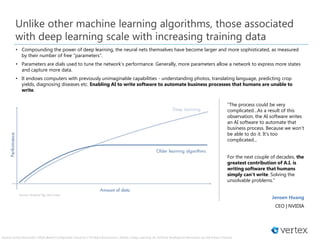

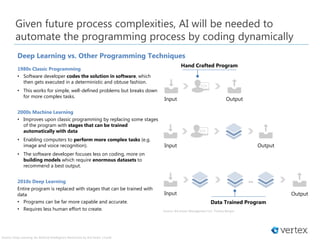

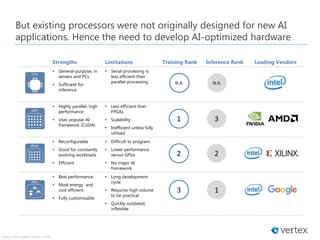

The document discusses how businesses are increasingly adopting AI to build applications that transform their operations, and how that adoption drives demand for AI-optimized chipsets. It traces the evolution of computing needs from general-purpose processors to specialized hardware that can handle complex data and algorithmic demands, particularly in fields like autonomous vehicles and agriculture. Future parts of the series will explore the shifting focus of computing performance, the impact of cloud technology, and emerging technologies like neuromorphic chips and quantum computing.