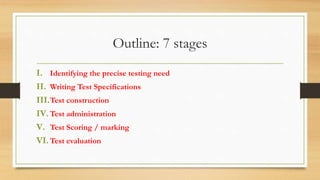

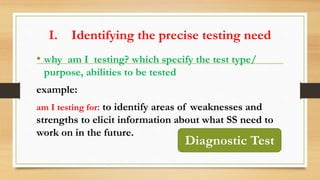

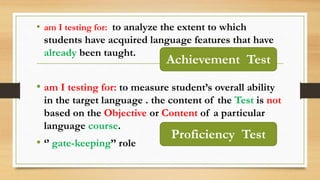

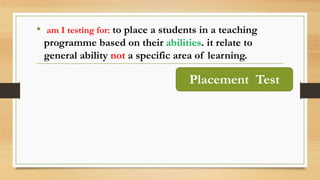

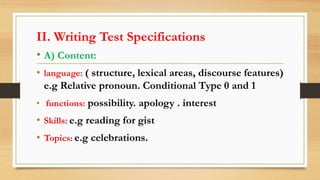

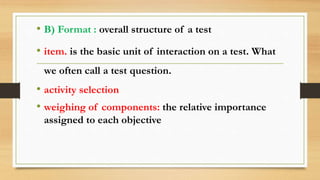

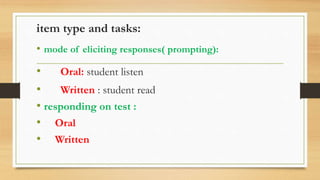

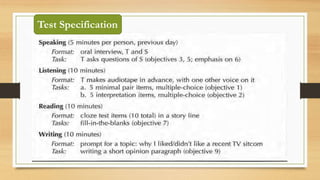

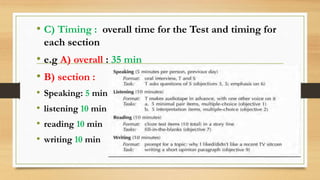

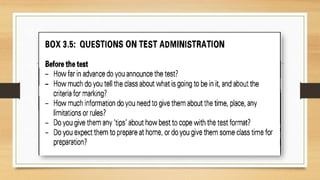

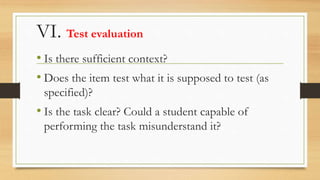

The document outlines a training program on test design, construction, and administration in the Department of English at CRMEF in Morocco for the year 2020-2021. It details a seven-stage process whose stages include identifying testing needs, writing specifications, and constructing, administering, scoring, and evaluating tests. It also discusses test types and their objectives, such as diagnostic, achievement, and placement tests, along with best practices for effective test administration and scoring.