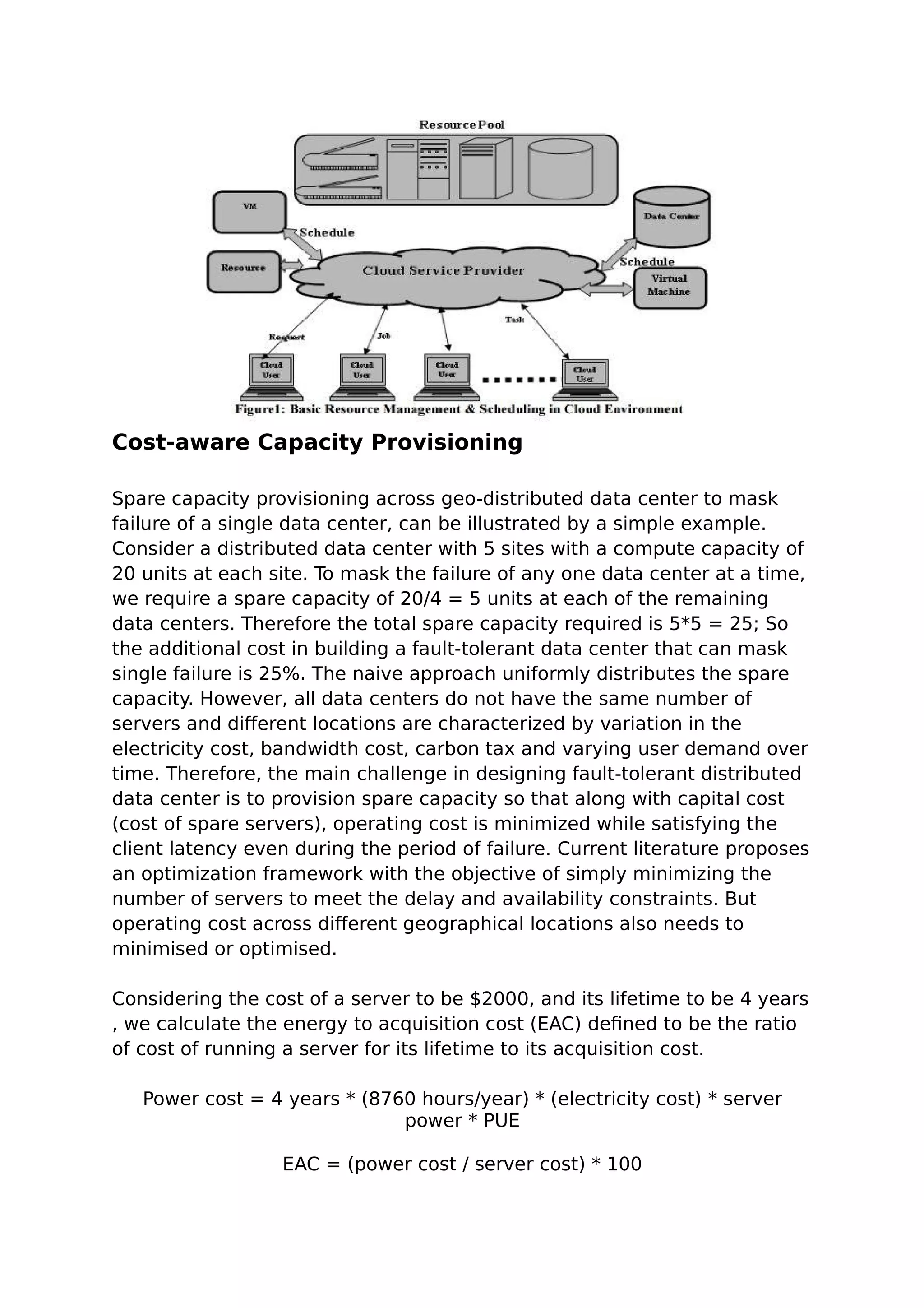

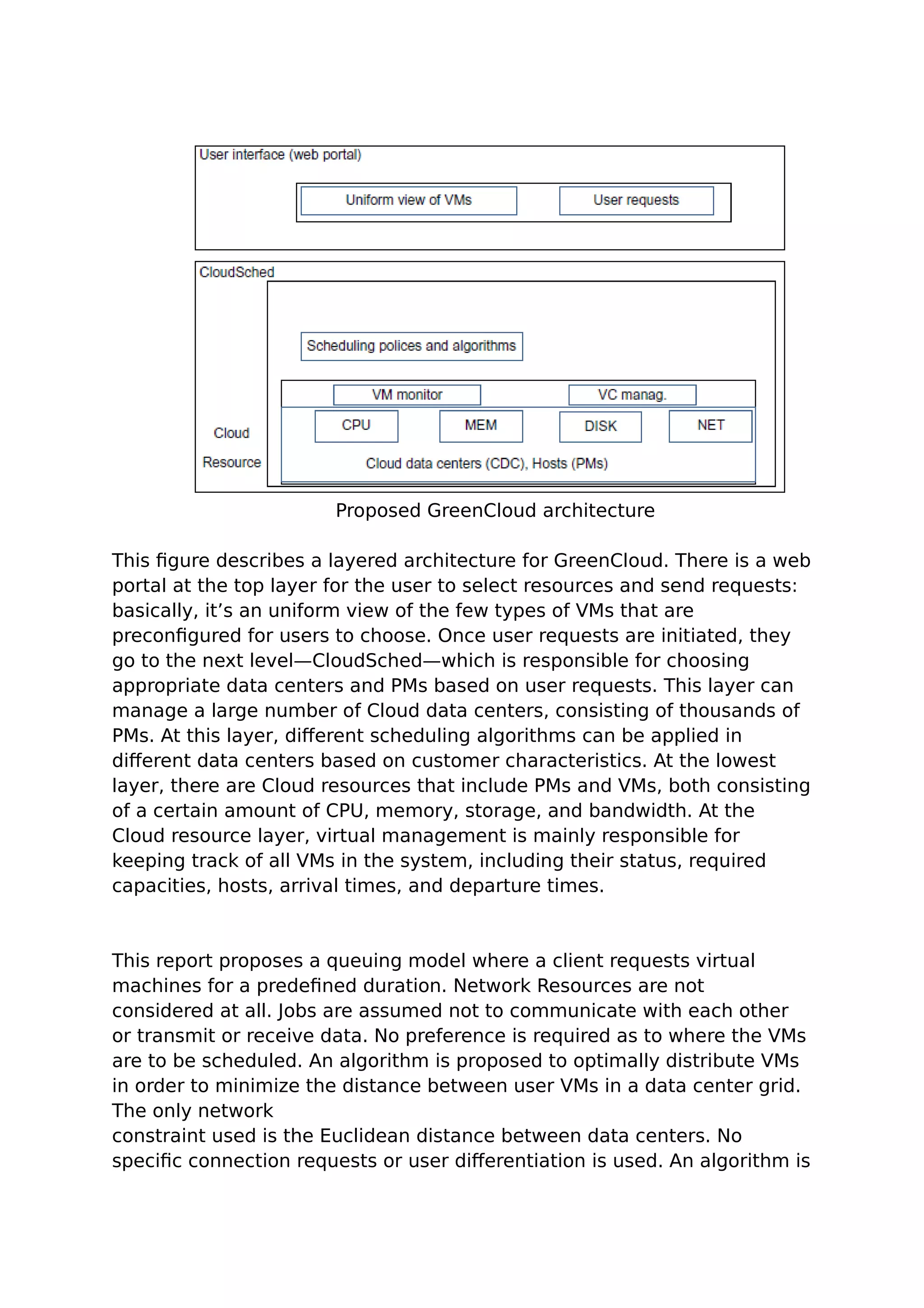

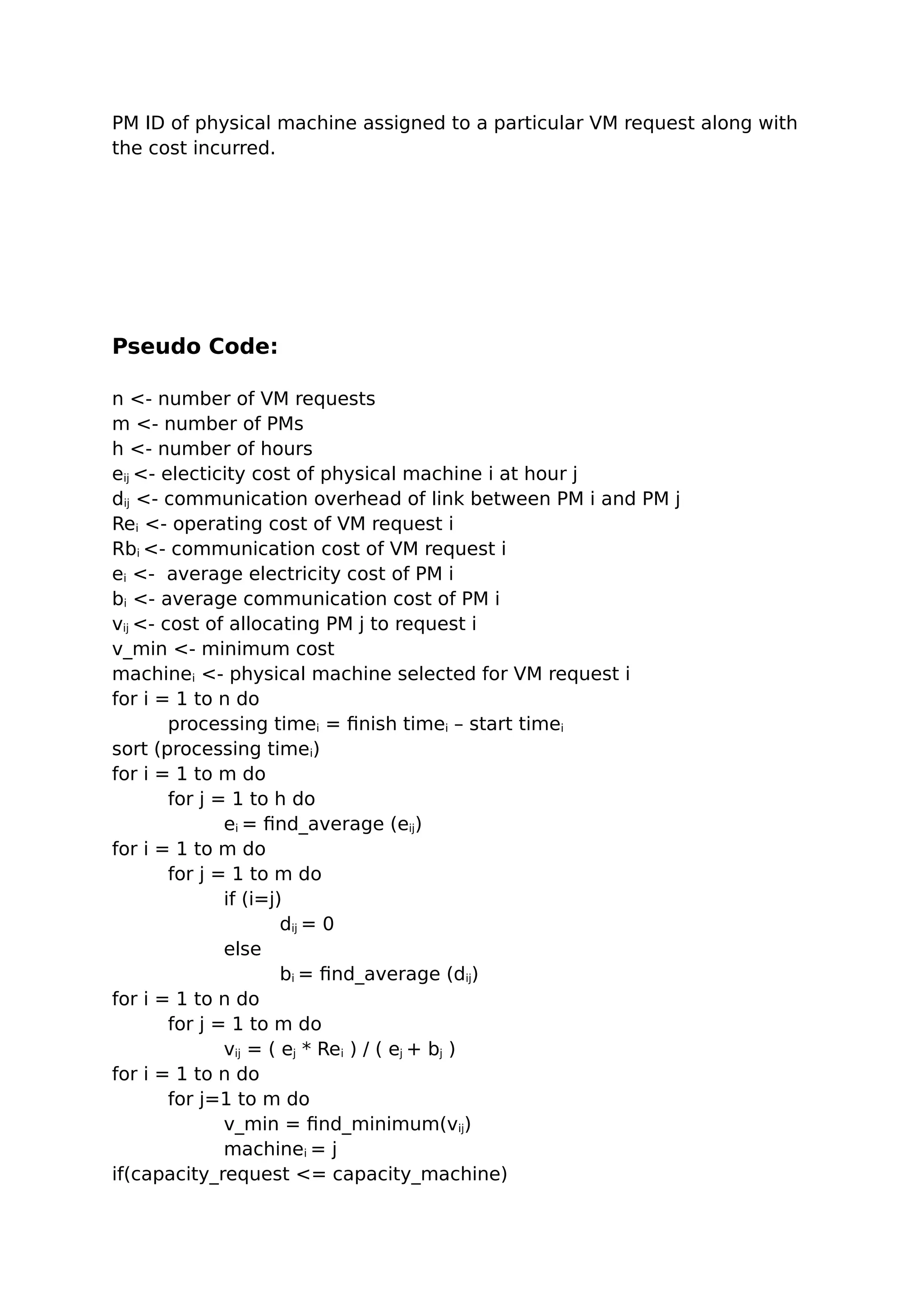

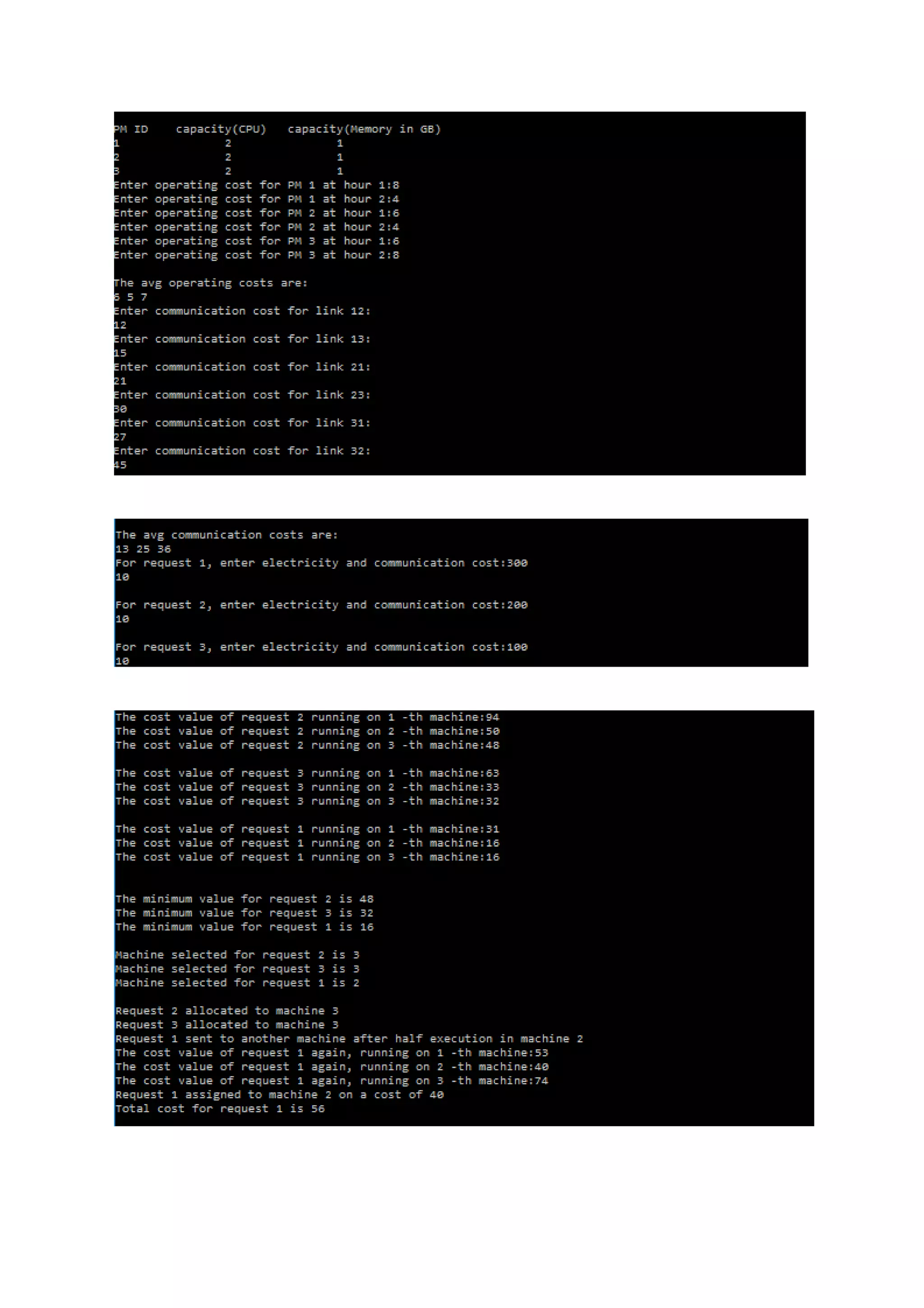

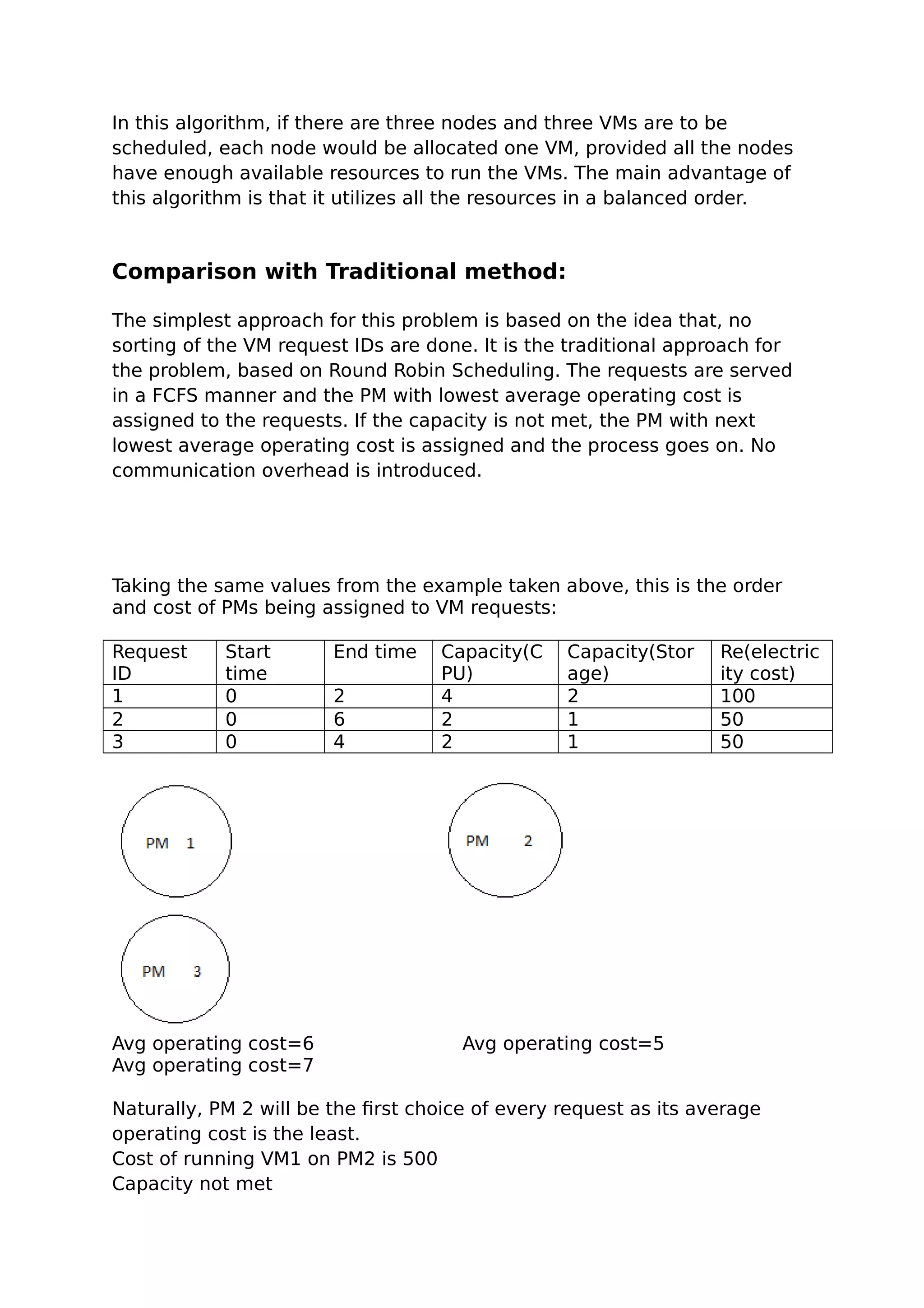

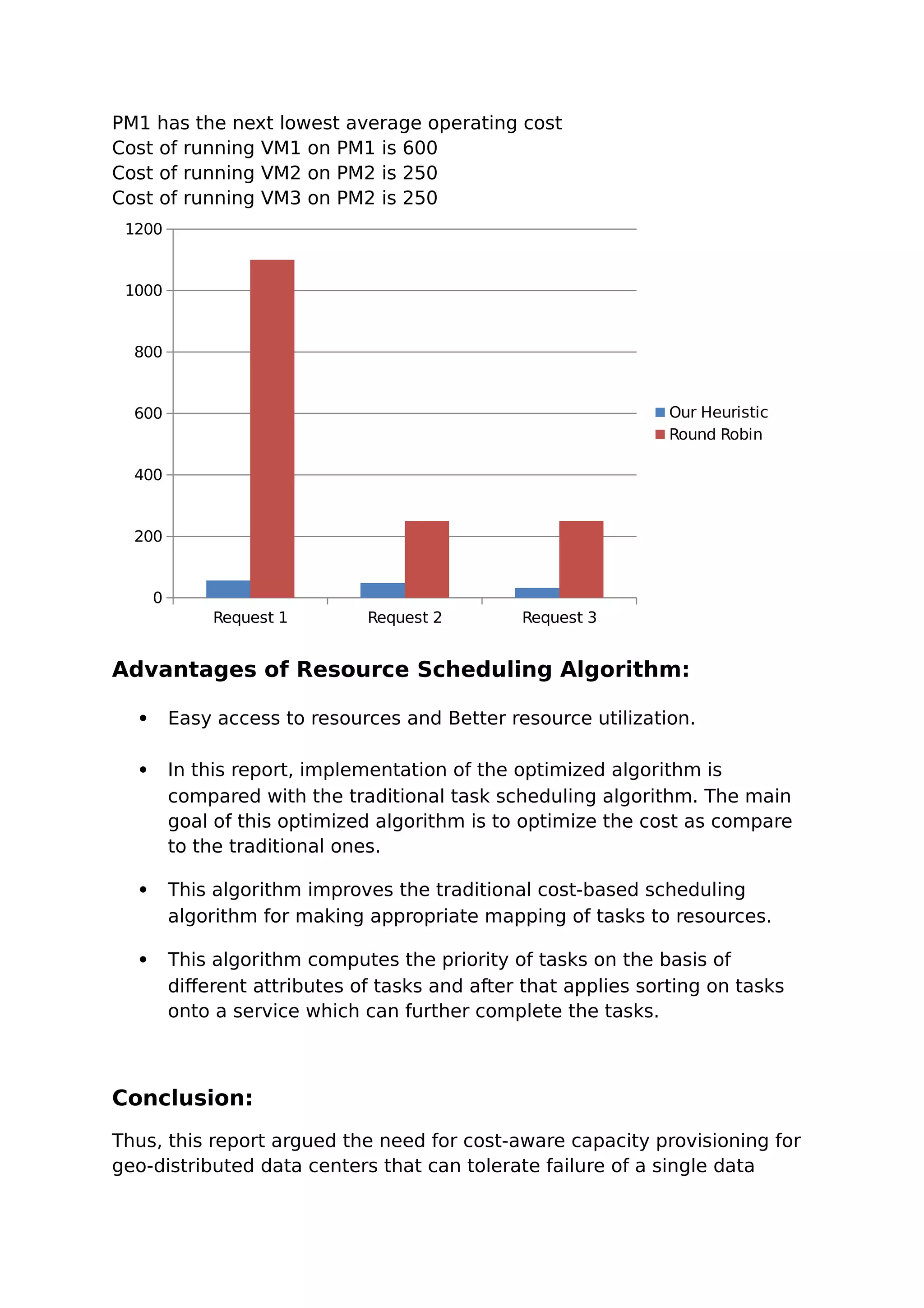

This document summarizes a student report on optimizing virtual machine (VM) placement across geo-distributed data centers to minimize cost. The report proposes an optimization model that determines how much spare capacity to allocate to each data center, taking into account electricity costs, demand variability, and other factors. It also describes a heuristic algorithm that assigns VMs to physical machines across data centers so as to minimize operating costs such as electricity and communication.
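The summary does not spell out the report's heuristic, so the following is only a minimal sketch of one plausible cost-aware greedy placement, not the report's actual algorithm. It assumes each VM has a single resource demand, each data center has a fixed free capacity and a per-unit electricity price, and communication cost is given per (VM, data center) pair. The names `DataCenter`, `VM`, and `place_vms`, and all units, are hypothetical and introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class DataCenter:
    name: str
    capacity: int          # free resource units (hypothetical unit)
    price_per_unit: float  # electricity cost per resource unit (assumed)


@dataclass
class VM:
    name: str
    demand: int            # resource units required
    comm_cost: dict        # data center name -> communication cost (assumed)


def place_vms(vms, data_centers):
    """Greedy sketch: place the largest VMs first, each in the feasible
    data center with the lowest electricity + communication cost.

    Returns (placement, unplaced, total_cost). Inputs are not mutated.
    """
    remaining = {dc.name: dc.capacity for dc in data_centers}
    placement = {}
    unplaced = []
    total_cost = 0.0

    for vm in sorted(vms, key=lambda v: v.demand, reverse=True):
        # Only data centers with enough free capacity are candidates.
        feasible = [dc for dc in data_centers if remaining[dc.name] >= vm.demand]
        if not feasible:
            unplaced.append(vm.name)
            continue

        def cost(dc):
            return dc.price_per_unit * vm.demand + vm.comm_cost[dc.name]

        best = min(feasible, key=cost)
        remaining[best.name] -= vm.demand
        placement[vm.name] = best.name
        total_cost += cost(best)

    return placement, unplaced, total_cost


if __name__ == "__main__":
    dcs = [DataCenter("east", 8, 0.10), DataCenter("west", 16, 0.14)]
    vms = [
        VM("web", 4, {"east": 0.2, "west": 0.5}),
        VM("db", 8, {"east": 0.4, "west": 0.1}),
    ]
    print(place_vms(vms, dcs))
```

A greedy rule like this is fast but can miss the global optimum, because an early choice may consume capacity that a later VM would have used more cheaply. It also ignores demand variability, which is the part the report's spare-capacity model is meant to address.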