This document summarizes a presentation on scaling security in cloud environments. The key points are:

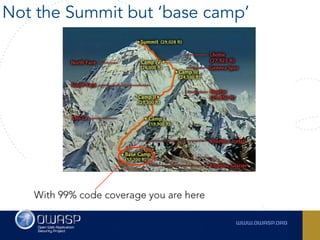

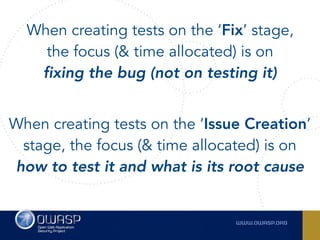

1. Testing and automation are essential for scaling security in the cloud. All aspects of the cloud environment, from provisioning to deployment to scaling, need to be tested.

2. Performance tests should be run daily, including during high-volume periods, to observe how the system behaves under different loads. Quality assurance (QA) tests can also serve as good performance tests when they are executed in random order.
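
One way to reuse QA tests as a performance probe, as the point above suggests, is to shuffle them and run them concurrently while timing each call. The Python sketch below assumes each QA test is a plain zero-argument callable. The names `run_qa_as_load`, `build_schedule`, and `LoadResult`, and the stand-in tests, are hypothetical illustrations, not anything taken from the presentation. Raising the `concurrency` parameter approximates a heavier load.

```python
import random
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class LoadResult:
    latencies: Dict[str, List[float]] = field(default_factory=dict)  # seconds per call
    failures: Dict[str, int] = field(default_factory=dict)           # failed calls per test


def build_schedule(names: List[str], iterations: int, seed: int) -> List[str]:
    """Repeat each test name `iterations` times, then shuffle with a fixed seed."""
    schedule = [name for name in names for _ in range(iterations)]
    random.Random(seed).shuffle(schedule)  # seeded so a slow run can be replayed
    return schedule


def run_qa_as_load(
    tests: Dict[str, Callable[[], None]], iterations: int, concurrency: int, seed: int
) -> LoadResult:
    """Execute QA tests in random order with `concurrency` parallel workers."""

    def timed(name: str) -> Tuple[str, float, bool]:
        start = time.perf_counter()
        try:
            tests[name]()
            ok = True
        except Exception:
            ok = False  # count the failure but keep generating load
        return name, time.perf_counter() - start, ok

    result = LoadResult(
        latencies={name: [] for name in tests}, failures={name: 0 for name in tests}
    )
    schedule = build_schedule(list(tests), iterations, seed)
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        for name, elapsed, ok in pool.map(timed, schedule):
            result.latencies[name].append(elapsed)
            if not ok:
                result.failures[name] += 1
    return result


if __name__ == "__main__":
    # Hypothetical stand-ins for real QA tests; each would normally call the system under test.
    qa_tests = {
        "login": lambda: time.sleep(0.005),
        "upload": lambda: time.sleep(0.01),
        "search": lambda: time.sleep(0.002),
    }
    for workers in (1, 4, 16):  # step through increasing load levels
        res = run_qa_as_load(qa_tests, iterations=20, concurrency=workers, seed=42)
        for name, samples in res.latencies.items():
            print(f"workers={workers:2d} {name:6s} "
                  f"median={statistics.median(samples) * 1000:.1f} ms "
                  f"failures={res.failures[name]}")
```

The fixed seed is a deliberate choice. Randomizing the order can expose interactions that a fixed sequence would hide, and seeding lets a slow or failing run be replayed in exactly the same order.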

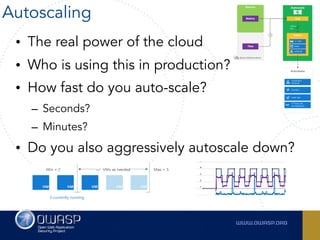

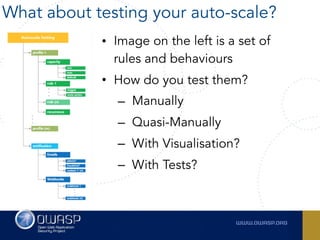

3. Automated scaling is a powerful feature of the cloud, but autoscaling rules and behaviors also need to be tested to confirm that they work as expected under different conditions.
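
Autoscaling rules can be tested like any other logic by expressing the rule as a pure function and checking its decisions against a table of load scenarios. The sketch below assumes a hypothetical rule loosely modeled on target-tracking policies: keep average CPU near a target, clamp to a minimum and maximum instance count, and ignore small deviations. `ScalingRule`, `desired_capacity`, `failing_scenarios`, and the scenario values are illustrative assumptions, not a specific cloud provider's API.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ScalingRule:
    """Hypothetical target-tracking rule: keep average CPU near target_cpu_pct."""
    target_cpu_pct: float  # desired average CPU utilization, in percent
    min_instances: int
    max_instances: int
    tolerance_pct: float = 5.0  # deviations within +/- this many points cause no change


def desired_capacity(rule: ScalingRule, current: int, avg_cpu_pct: float) -> int:
    """Instance count the rule would request for the observed average CPU."""
    if abs(avg_cpu_pct - rule.target_cpu_pct) <= rule.tolerance_pct:
        return current  # inside the tolerance band: avoid flapping
    # Scale proportionally so the projected average lands near the target.
    proposed = math.ceil(current * avg_cpu_pct / rule.target_cpu_pct)
    return max(rule.min_instances, min(rule.max_instances, proposed))


# (description, current instances, observed average CPU %, expected instances)
Scenario = Tuple[str, int, float, int]

SCENARIOS: List[Scenario] = [
    ("traffic spike scales out", 4, 90.0, 6),
    ("small wobble is ignored", 4, 62.0, 4),
    ("extreme spike is capped at max", 8, 100.0, 10),
    ("quiet period scales in", 10, 20.0, 4),
    ("near-idle load never drops below min", 3, 5.0, 2),
]


def failing_scenarios(rule: ScalingRule, scenarios: List[Scenario]) -> List[str]:
    """Return a message for every scenario where the rule's decision is unexpected."""
    failures = []
    for desc, current, cpu, expected in scenarios:
        got = desired_capacity(rule, current, cpu)
        if got != expected:
            failures.append(f"{desc}: expected {expected}, got {got}")
    return failures


if __name__ == "__main__":
    rule = ScalingRule(target_cpu_pct=60.0, min_instances=2, max_instances=10)
    problems = failing_scenarios(rule, SCENARIOS)
    print("all scenarios pass" if not problems else "\n".join(problems))
```

Keeping the rule separate from the infrastructure makes the scenario table cheap to extend. Checking that the deployed autoscaler actually behaves this way under real traffic remains a separate test.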