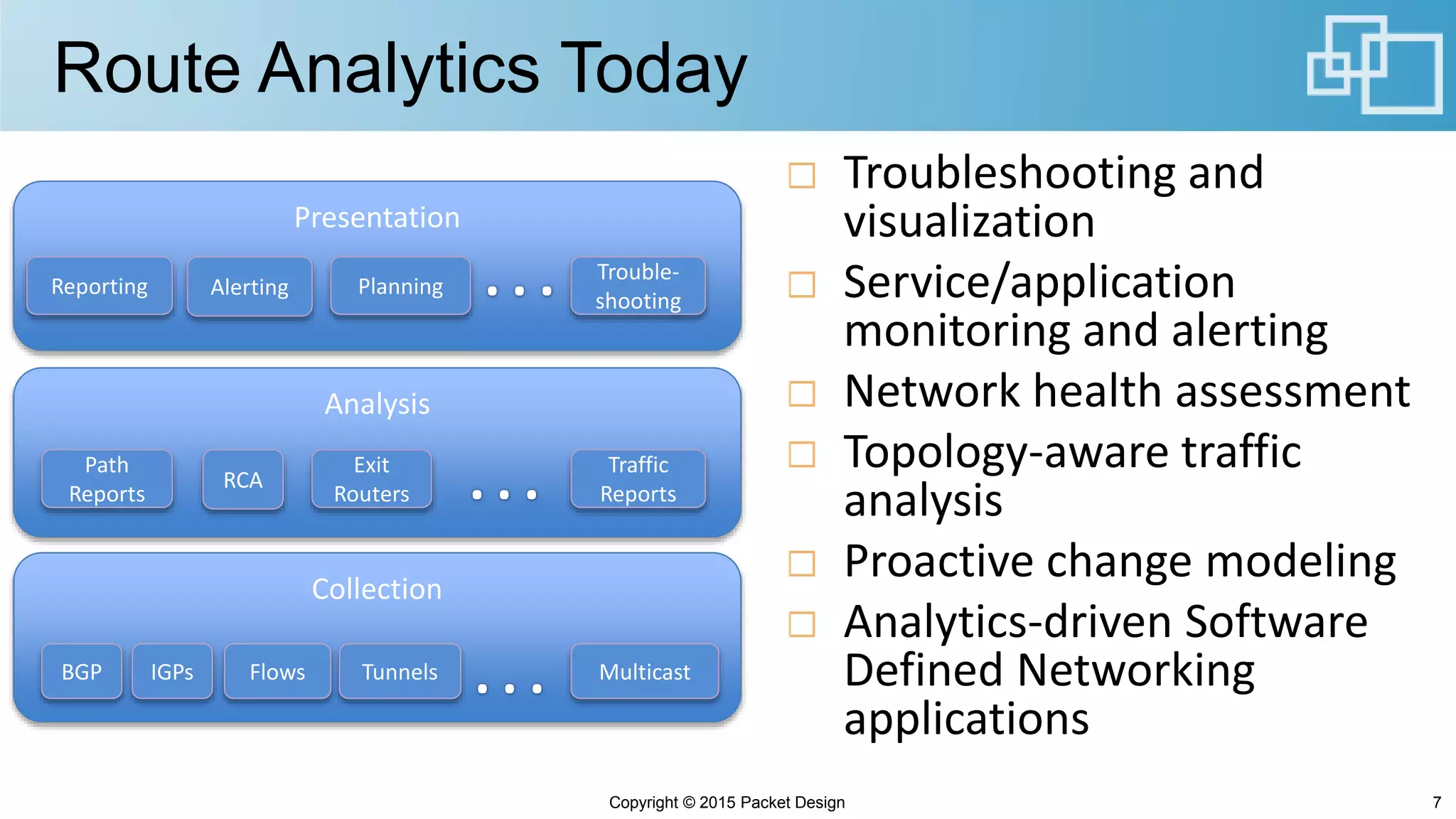

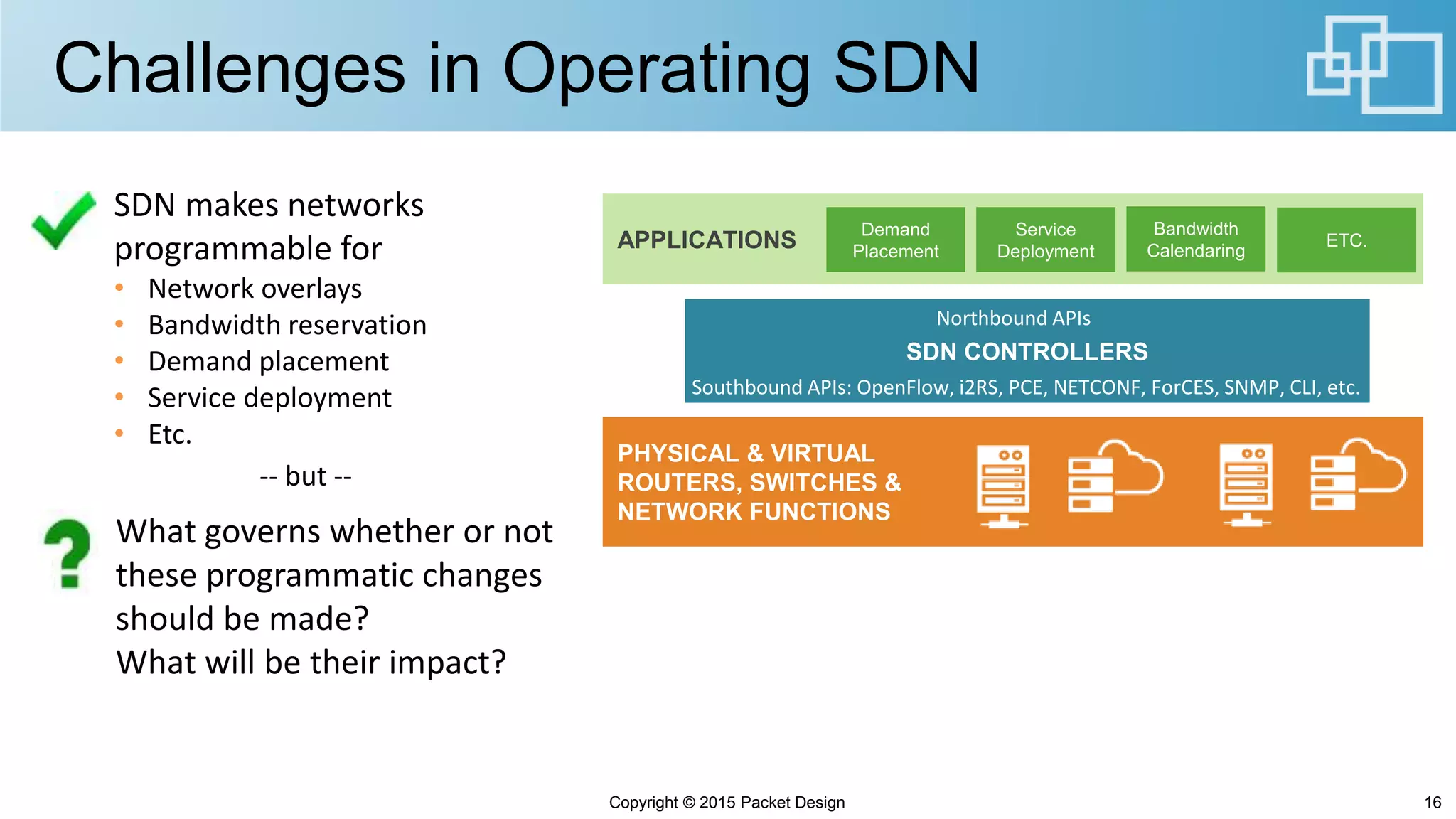

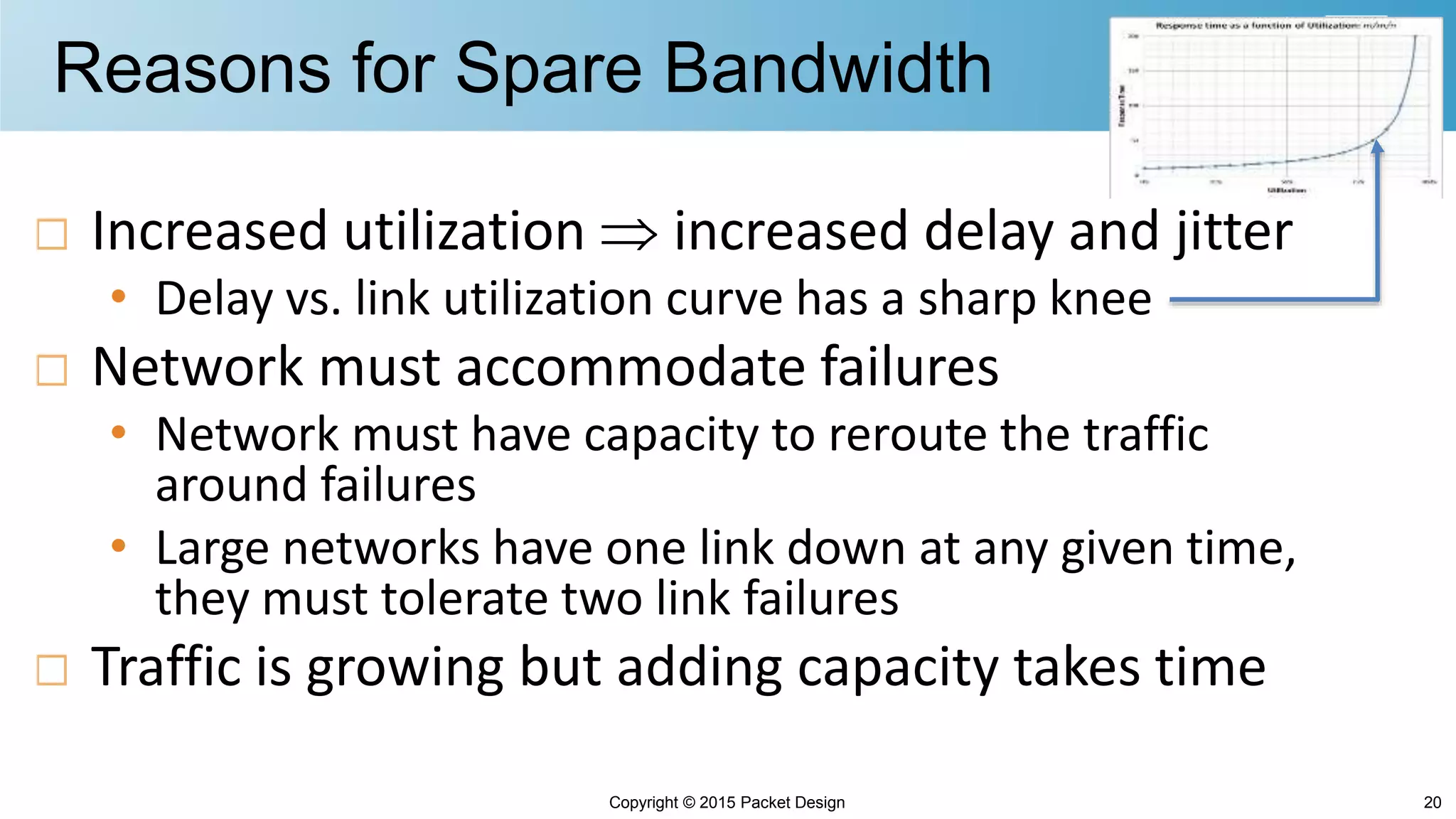

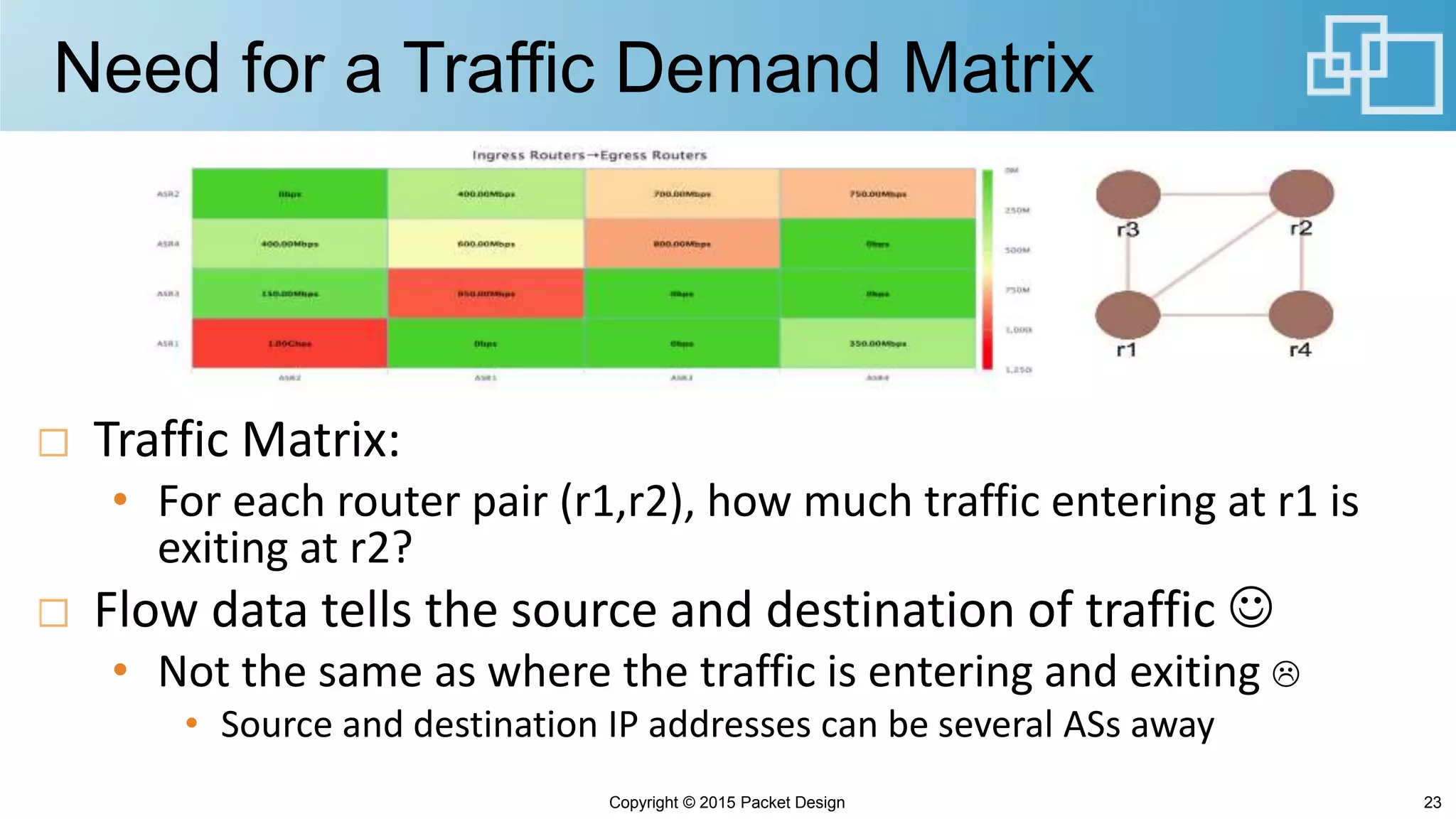

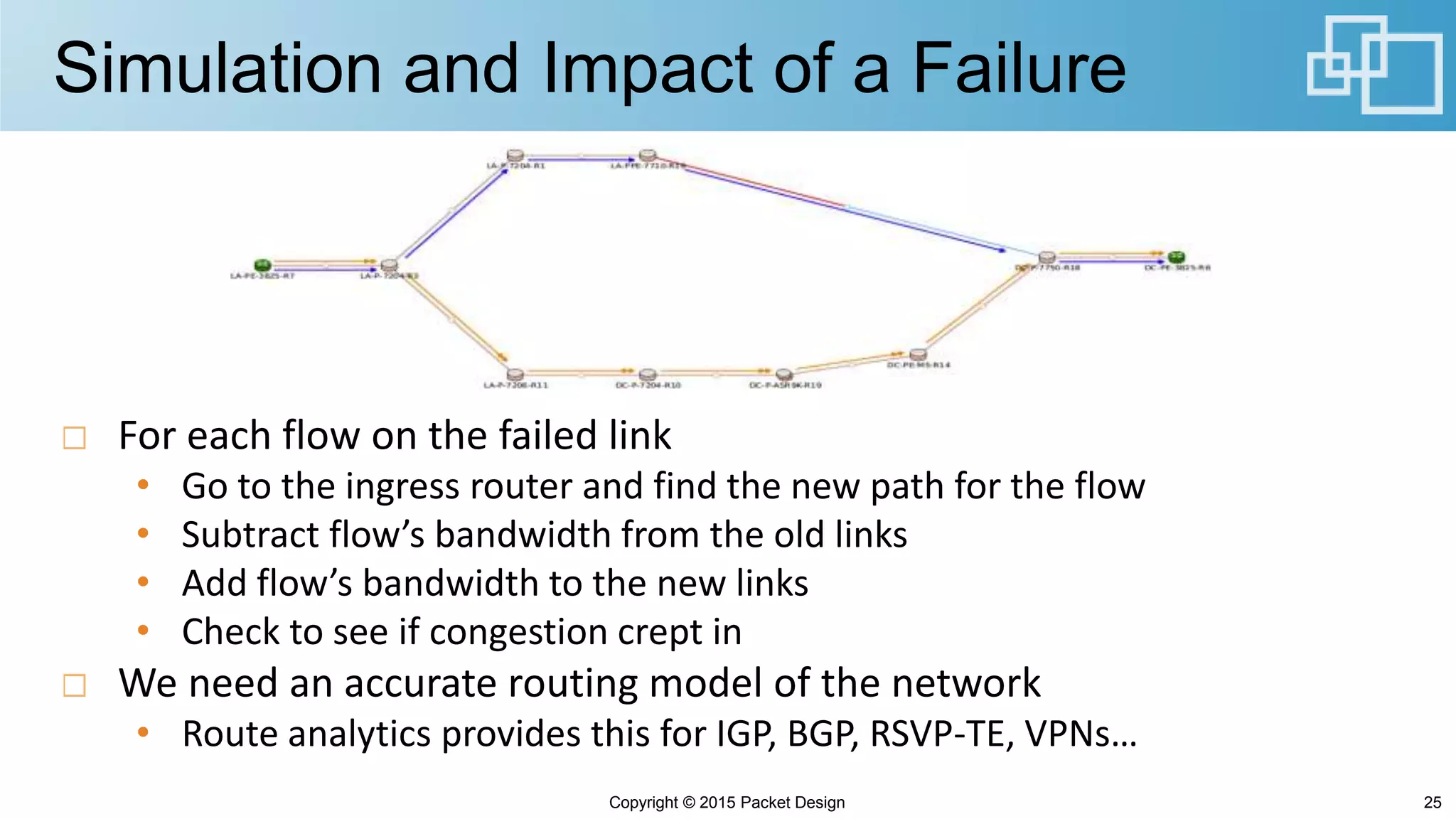

This document discusses how route analytics can help address the challenges of operating software-defined networks (SDNs) and implementing bandwidth scheduling applications. It describes how a naive bandwidth scheduling approach can lead to network congestion, and outlines how route analytics provides richer information, such as traffic demand matrices and routing models, that can be used to simulate failures and ensure spare bandwidth is not overcommitted. Route analytics is presented as key to developing analytics-driven SDN applications because it gives visibility into how routing affects performance, traffic flows, and failure response.
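
To make the contrast between naive and analytics-driven bandwidth scheduling concrete, here is a minimal sketch in Python. It is not taken from the document: the three-node topology, the metrics and capacities, the demand values, and the function names (`naive_admit`, `failure_aware_admit`) are all hypothetical, and it assumes simple shortest-path routing on link metrics with single-link failures only. The naive check admits a reservation if it fits on the current topology, while the failure-aware check replays the traffic demand matrix through a routing model for every single-link failure before admitting it.

```python
import heapq

def bidirectional(edges):
    """Expand (a, b, metric, capacity_mbps) tuples into a directed link map."""
    links = {}
    for a, b, metric, cap in edges:
        links[(a, b)] = (metric, cap)
        links[(b, a)] = (metric, cap)
    return links

def shortest_path(links, src, dst):
    """Dijkstra on link metrics; returns a list of directed links, or None."""
    dist, prev, heap = {src: 0}, {}, [(0, src)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == dst:
            break
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for (a, b), (metric, _cap) in links.items():
            if a == node and d + metric < dist.get(b, float("inf")):
                dist[b] = d + metric
                prev[b] = a
                heapq.heappush(heap, (d + metric, b))
    if dst not in dist:
        return None
    path, node = [], dst
    while node != src:
        path.append((prev[node], node))
        node = prev[node]
    return path[::-1]

def fits(links, demands):
    """Route every demand and check that no link exceeds its capacity."""
    loads = {link: 0.0 for link in links}
    for (src, dst), mbps in demands.items():
        path = shortest_path(links, src, dst)
        if path is None:
            return False  # demand cannot be routed at all
        for link in path:
            loads[link] += mbps
    return all(loads[l] <= links[l][1] for l in links)

def with_request(demands, request):
    """Return a copy of the demand matrix with the requested bandwidth added."""
    pair, mbps = request
    trial = dict(demands)
    trial[pair] = trial.get(pair, 0) + mbps
    return trial

def naive_admit(links, demands, request):
    """Naive scheduler: only checks spare bandwidth on the current topology."""
    return fits(links, with_request(demands, request))

def failure_aware_admit(links, demands, request):
    """Also require that the request fits after any single-link failure."""
    trial = with_request(demands, request)
    if not fits(links, trial):
        return False
    for a, b in {tuple(sorted(link)) for link in links}:
        surviving = {l: v for l, v in links.items() if l not in ((a, b), (b, a))}
        if not fits(surviving, trial):
            return False
    return True

# Hypothetical triangle topology: every link has metric 1 and 100 Mbps capacity.
LINKS = bidirectional([("A", "B", 1, 100), ("A", "C", 1, 100), ("C", "B", 1, 100)])
# Hypothetical traffic demand matrix in Mbps.
DEMANDS = {("A", "B"): 40, ("A", "C"): 30}

print(naive_admit(LINKS, DEMANDS, (("A", "B"), 50)))          # True
print(failure_aware_admit(LINKS, DEMANDS, (("A", "B"), 50)))  # False
print(failure_aware_admit(LINKS, DEMANDS, (("A", "B"), 20)))  # True
```

In this toy case the 50 Mbps request fits on the direct A-B link today, so the naive check accepts it. If A-B fails, however, that traffic reroutes over A-C and joins the existing 30 Mbps A-to-C demand, exceeding the 100 Mbps capacity. The 20 Mbps request survives every single-link failure and is admitted by both checks.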