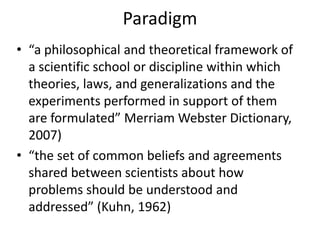

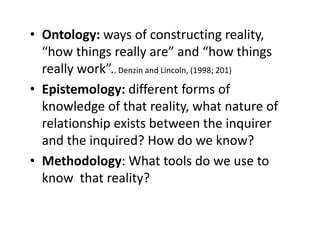

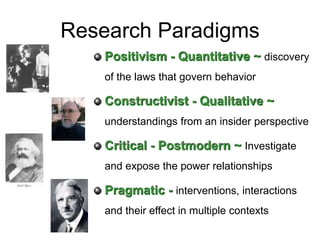

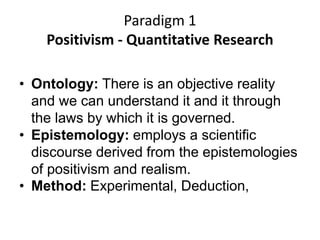

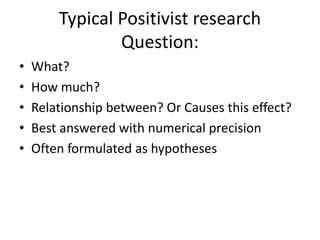

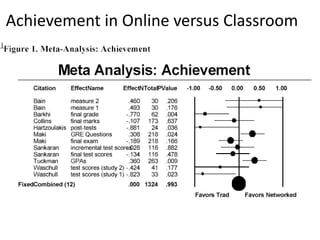

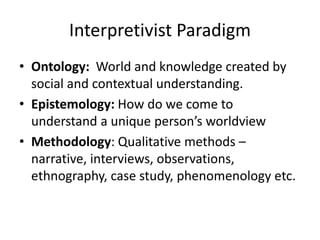

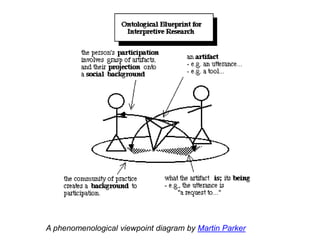

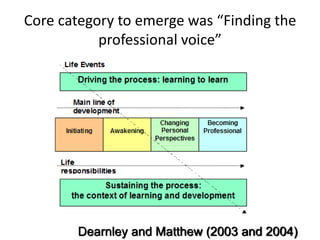

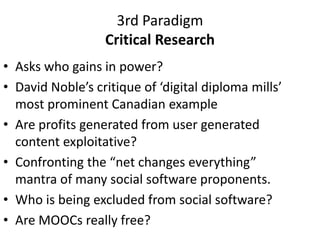

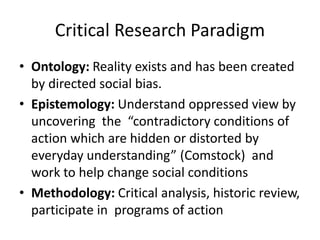

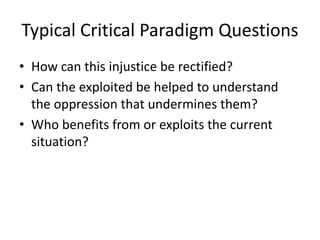

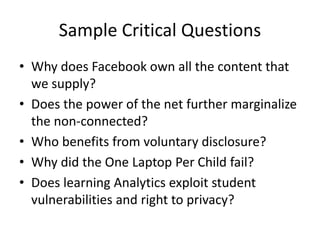

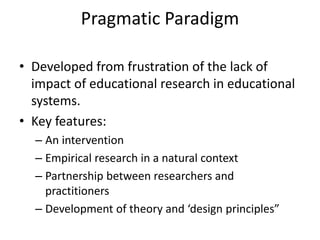

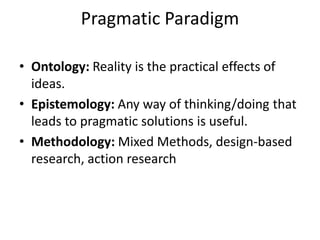

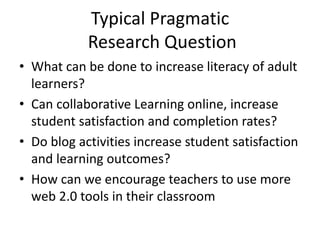

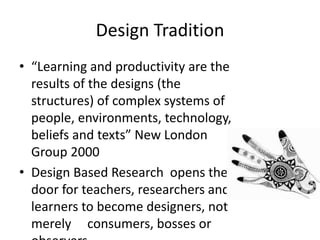

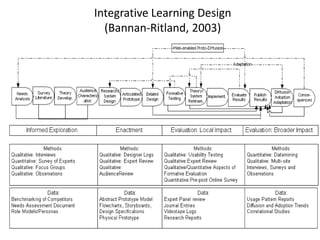

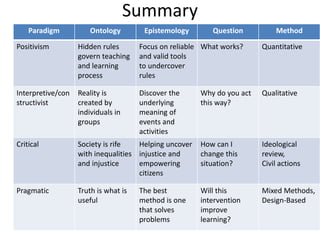

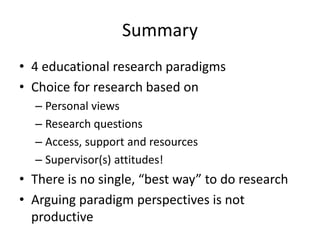

This document discusses four main research paradigms: positivist, interpretivist/constructivist, critical, and pragmatic. It gives an overview of each paradigm's key aspects, including its ontology (nature of reality), epistemology (nature of knowledge), typical research questions, and common methodologies. Examples from educational technology research illustrate the kinds of studies that fall within each paradigm. Overall, it weighs the tradeoffs among the paradigms and argues that no single approach is best: the choice depends on the researcher's personal views, the research question, available resources, and supervisory support.