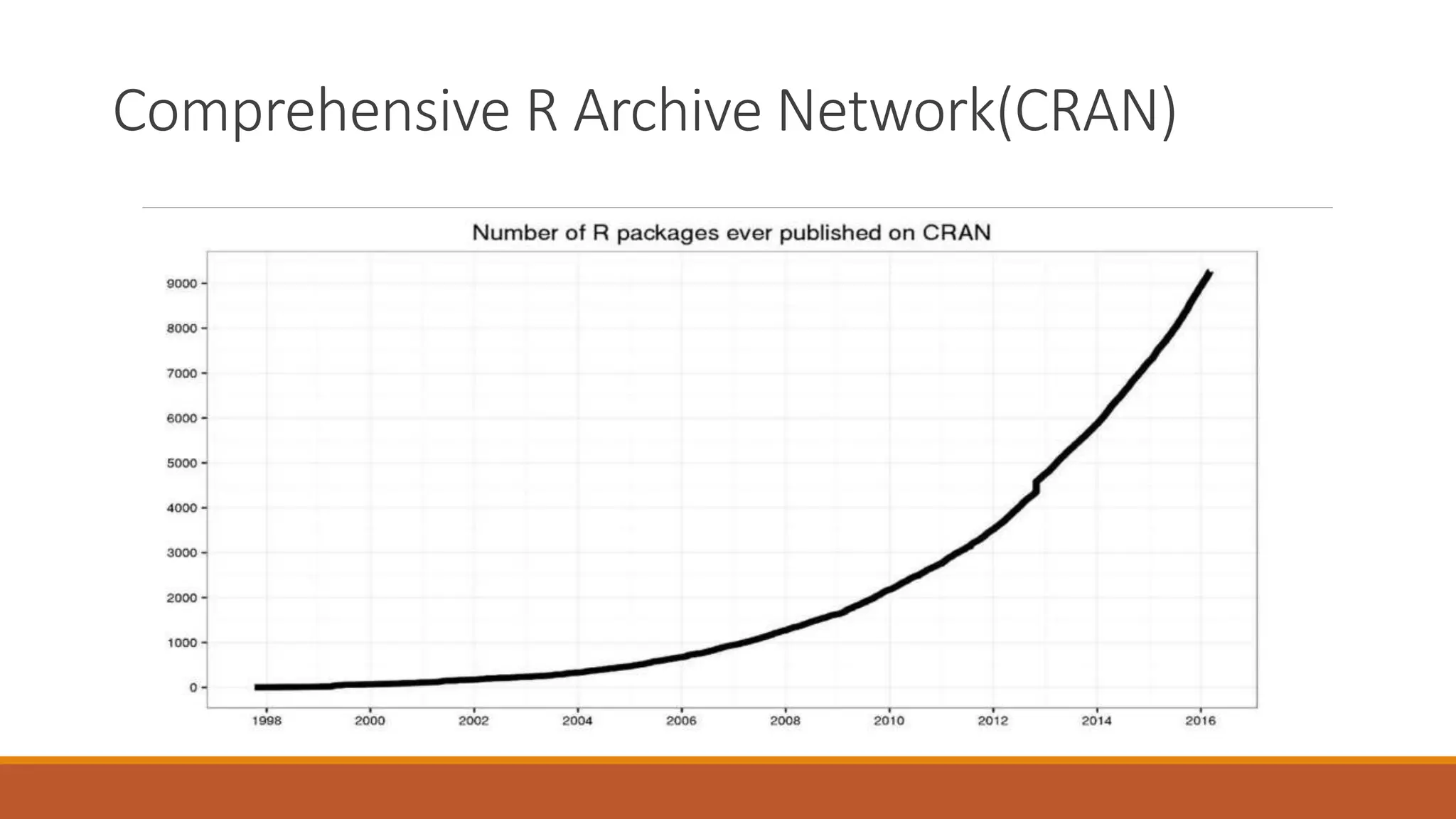

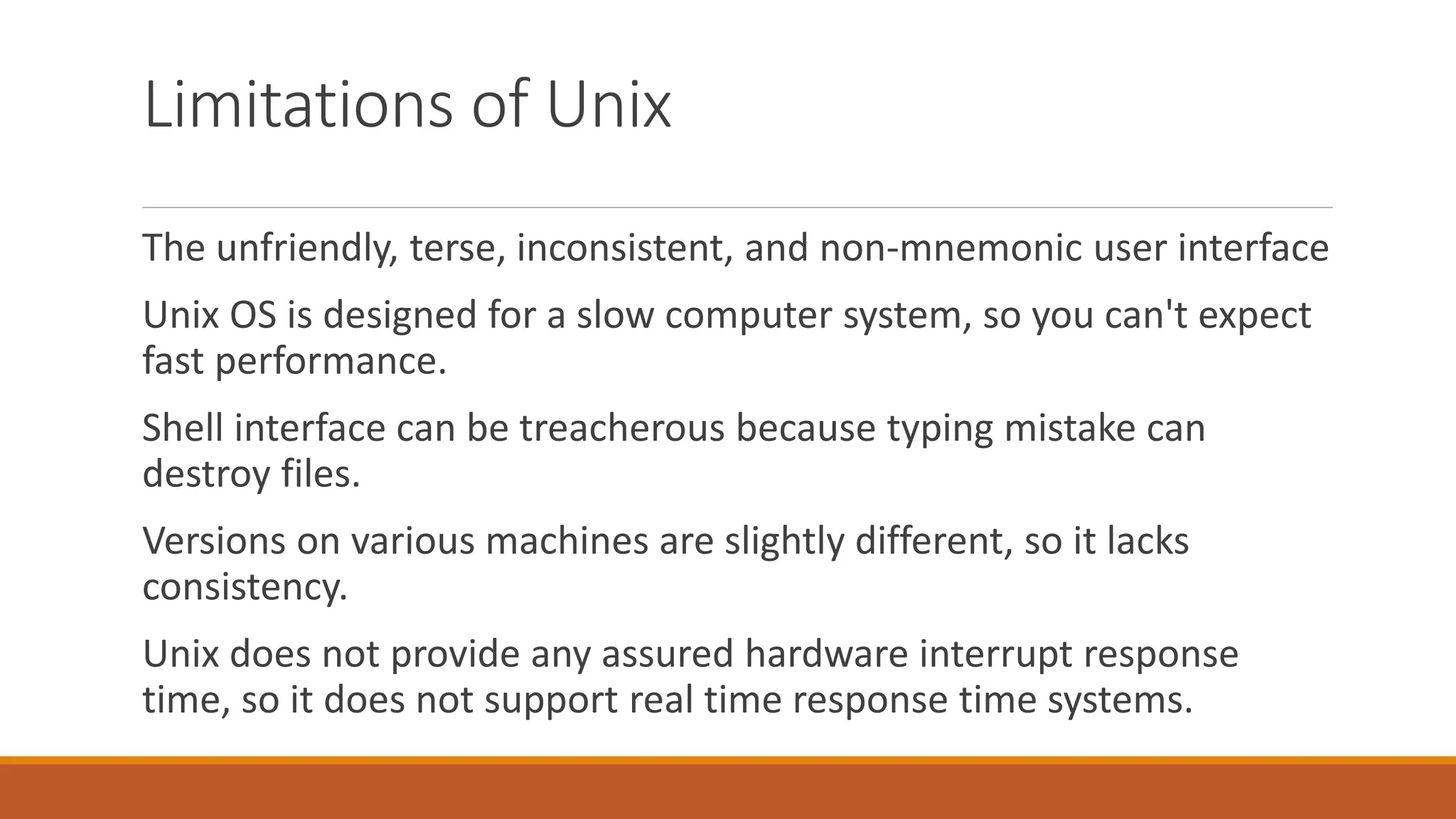

BASIC was designed in 1963 and first ran in 1964 as a teaching language meant to make programming simpler. It influenced computer science education and helped raise awareness of the value of coding skills.

R is a free programming language for statistical computing, used for data analysis, modeling, and visualization. It provides a wide range of statistical and machine learning methods.

UNIX was developed in the late 1960s and went on to become widely used, while Linux is an open-source operating system inspired by UNIX. Both are traditionally operated through commands typed into a terminal rather than through a graphical user interface.