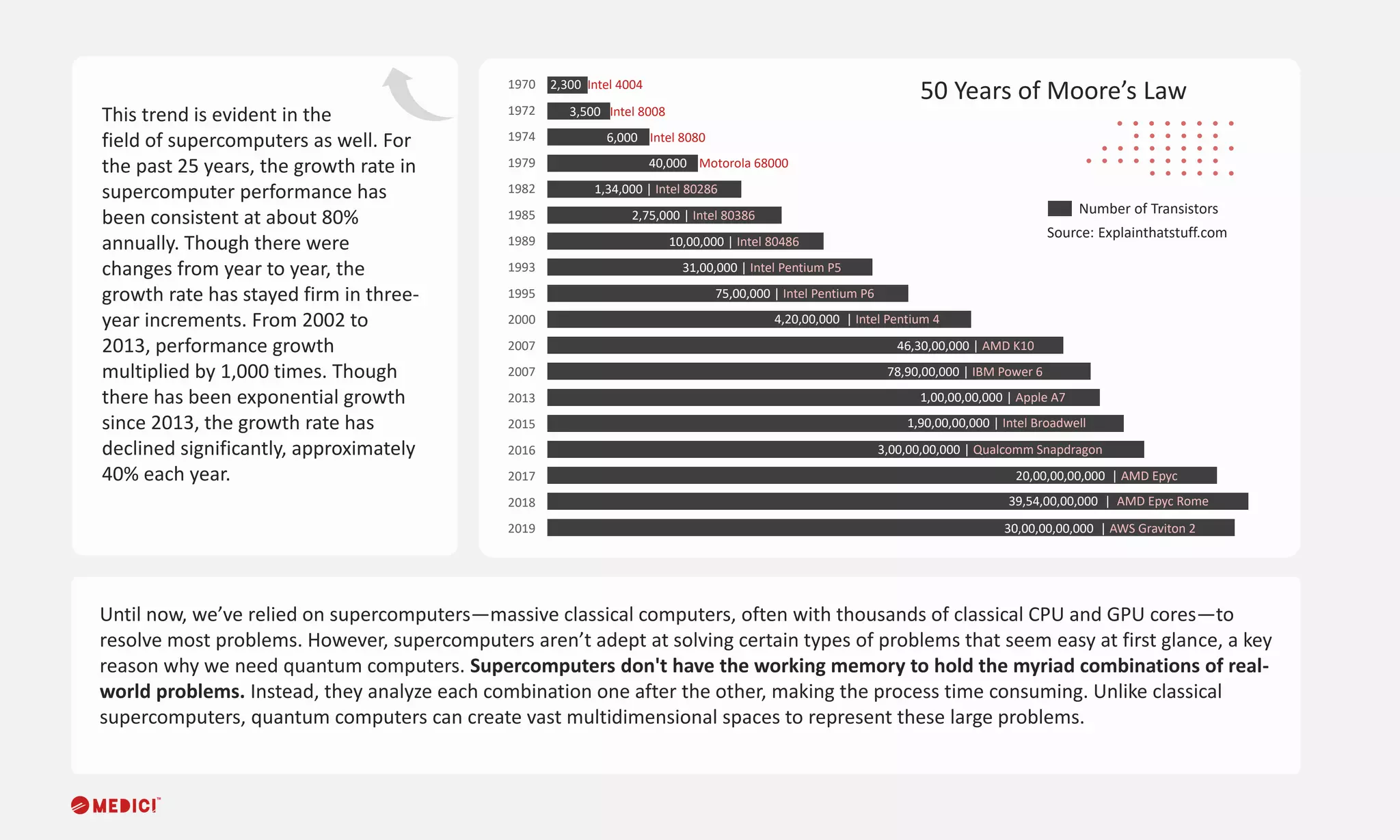

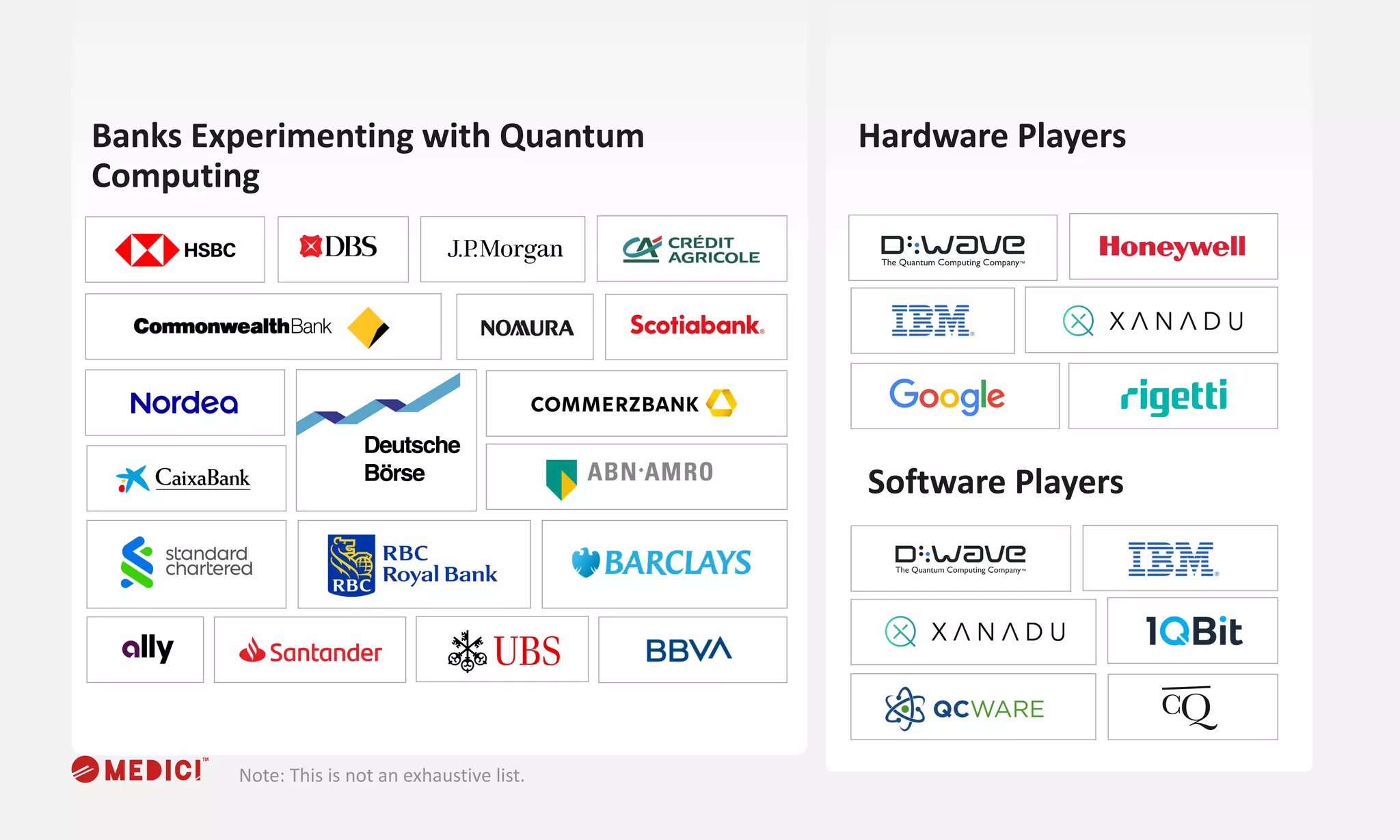

The document discusses the potential of quantum computing in financial services. It highlights quantum computing's advantages over traditional supercomputers, particularly its ability to process certain classes of complex problems quickly and efficiently. It also notes significant investment from governments and companies, ongoing advances in quantum hardware and software, and emerging applications such as Monte Carlo simulations for risk assessment. Hybrid approaches that combine classical and quantum computing are expected in the short term. Over time, quantum computing could transform areas of the financial sector such as investments, insurance, and lending.