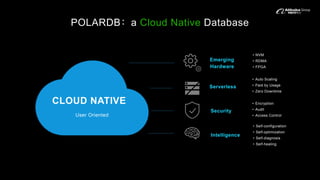

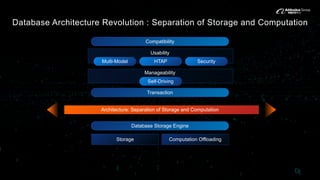

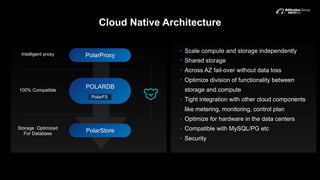

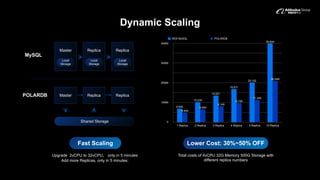

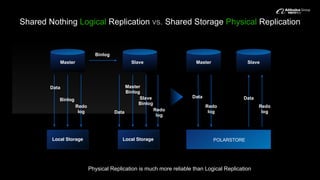

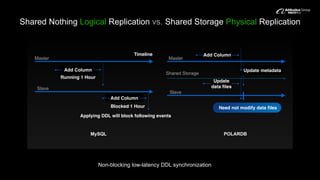

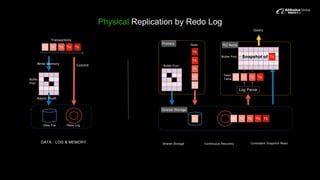

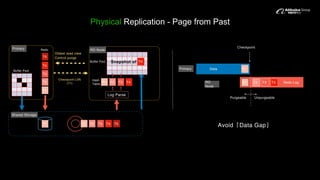

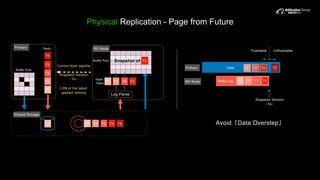

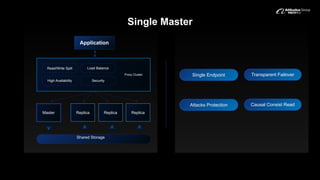

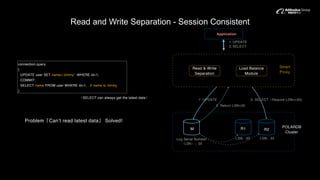

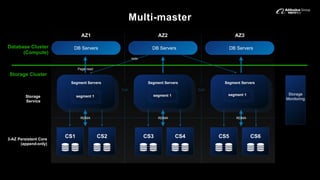

PolarDB is a cloud-native database that separates storage from computation so that each can scale independently, and it provides high availability without data loss, even across availability zones. It uses a shared-storage architecture, with PolarStore as the storage layer and PolarProxy for intelligent routing. PolarStore is optimized for emerging hardware such as NVMe and Optane and provides low-latency access. PolarDB also supports dynamic scaling, physical replication for high reliability, and read/write splitting with session consistency.
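
To make the idea of routing reads and writes separately while keeping session consistency more concrete, here is a minimal sketch. It assumes one common mechanism: the proxy remembers the log sequence number (LSN) of each session's latest write and sends a read to a replica only if that replica has already applied that LSN, falling back to the primary otherwise. The `Node`, `Session`, and `Proxy` classes are hypothetical names invented for illustration; this is not PolarProxy's actual API or implementation.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """A database node (hypothetical model, not a PolarDB type)."""
    name: str
    applied_lsn: int = 0  # highest log sequence number applied on this node


@dataclass
class Session:
    """Client session; tracks the LSN of its most recent write."""
    last_write_lsn: int = 0


class Proxy:
    """Toy read/write splitter with session consistency (assumed mechanism)."""

    def __init__(self, primary: Node, replicas: list[Node]):
        self.primary = primary
        self.replicas = replicas

    def write(self, session: Session) -> Node:
        # Writes always go to the primary, which advances its LSN.
        self.primary.applied_lsn += 1
        session.last_write_lsn = self.primary.applied_lsn
        return self.primary

    def route_read(self, session: Session) -> Node:
        # A replica is safe for this session only if it has replayed
        # the log at least up to the session's own last write.
        for replica in self.replicas:
            if replica.applied_lsn >= session.last_write_lsn:
                return replica
        # No replica has caught up yet: read from the primary instead.
        return self.primary


primary = Node("primary")
replica = Node("replica-1")
proxy = Proxy(primary, [replica])

writer = Session()
proxy.write(writer)                            # primary LSN becomes 1
lagging_choice = proxy.route_read(writer)      # replica is still at LSN 0

replica.applied_lsn = primary.applied_lsn      # replication catches up
caught_up_choice = proxy.route_read(writer)

print(lagging_choice.name, caught_up_choice.name)
```

In this sketch a session that has just written reads from the primary until a replica catches up, while sessions that have not written can use a lagging replica immediately. That is the trade-off session consistency makes: each session sees its own writes without forcing every read onto the primary.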