This document provides an overview of parallel programming concepts such as parallelism, threads, and concurrency. It explains why parallel programming matters as the number of processor cores keeps growing. Key topics include parallelism versus multiprocessing, tasks and threads, Java's thread classes and methods, threading in Swing applications, and the Java ForkJoin framework (introduced in Java 7) for parallel divide-and-conquer tasks. Examples show how to use threads, Runnables, and SwingWorkers in Java programs.
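As a minimal sketch of the thread and Runnable basics described above, the code below runs one Runnable on two threads and waits for both with `join()`. The class name `RunnableDemo` and the method `countWithTwoThreads` are illustrative only and do not come from the slides.

```java
import java.util.concurrent.atomic.AtomicInteger;

public class RunnableDemo {
    // Runs the same Runnable on two threads and returns the shared total.
    static int countWithTwoThreads(int perThread) {
        AtomicInteger counter = new AtomicInteger(); // thread-safe counter

        // The Runnable describes the work; each Thread executes it.
        Runnable work = () -> {
            for (int i = 0; i < perThread; i++) {
                counter.incrementAndGet();
            }
        };

        Thread t1 = new Thread(work, "worker-1");
        Thread t2 = new Thread(work, "worker-2");
        t1.start(); // start() calls work.run() on a new thread
        t2.start();

        try {
            t1.join(); // block until each thread has finished
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve interrupt status
            throw new IllegalStateException("interrupted while joining", e);
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println("count = " + countWithTwoThreads(1000)); // prints "count = 2000"
    }
}
```

Using `AtomicInteger` instead of a plain `int` field avoids lost updates when both threads increment at the same time.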

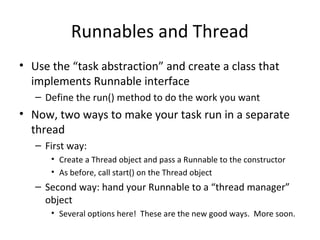

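![Mergesort Example](https://image.slidesharecdn.com/bhkvi0otpczut8xgfmim-signature-91f77658bc948ec5a8dd1dcec04e37ca491feef9f5dec765599dcd7a59873a3d-poli-160512172820/85/Parallel-programming-37-320.jpg)

Mergesort example:

- Top-level call: create the "main" task and submit it to the pool.

```java
// Relies on a ForkJoinPool field named threadPool, a RECURSE_THRESHOLD
// constant, and MergeSort.insertionSort(list, first, last) defined elsewhere.
public static void mergeSortFJRecur(Comparable[] list, int first, int last) {
    // Small ranges are not worth parallelizing: sort them sequentially.
    if (last - first < RECURSE_THRESHOLD) {
        MergeSort.insertionSort(list, first, last);
        return;
    }
    // Scratch array shared by all subtasks for merging.
    Comparable[] tmpList = new Comparable[list.length];
    // invoke() submits the root task and blocks until the whole sort completes.
    threadPool.invoke(new SortTask(list, tmpList, first, last));
}
```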
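The same top-level pattern (a sequential cutoff, then one root task submitted with `ForkJoinPool.invoke`) can be seen on a smaller problem. This is a hypothetical example, not from the slides: it sums an `int` array with a `RecursiveTask`, and the names `ParallelSum`, `THRESHOLD`, `POOL`, and `SumTask` are chosen for illustration.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

public class ParallelSum {
    // Hypothetical cutoff: below this size, summing sequentially is cheaper
    // than creating more tasks (the role RECURSE_THRESHOLD plays above).
    static final int THRESHOLD = 1_000;

    // One pool shared by all calls, like the slide's threadPool field.
    static final ForkJoinPool POOL = new ForkJoinPool();

    static class SumTask extends RecursiveTask<Long> {
        final int[] data;
        final int lo, hi; // half-open range [lo, hi)

        SumTask(int[] data, int lo, int hi) {
            this.data = data; this.lo = lo; this.hi = hi;
        }

        @Override
        protected Long compute() {
            if (hi - lo <= THRESHOLD) { // small range: plain loop
                long sum = 0;
                for (int i = lo; i < hi; i++) sum += data[i];
                return sum;
            }
            int mid = (lo + hi) >>> 1;
            SumTask left = new SumTask(data, lo, mid);
            SumTask right = new SumTask(data, mid, hi);
            left.fork();                     // schedule left half asynchronously
            long rightSum = right.compute(); // do right half in this thread
            return left.join() + rightSum;   // wait for the left half's result
        }
    }

    // Top-level call: create the "main" task and submit it with invoke(),
    // which blocks until the whole computation finishes.
    static long sum(int[] data) {
        return POOL.invoke(new SumTask(data, 0, data.length));
    }

    public static void main(String[] args) {
        int[] data = new int[100_000];
        for (int i = 0; i < data.length; i++) data[i] = i + 1;
        System.out.println(sum(data)); // prints 5000050000
    }
}
```

Forking one half and computing the other in the current thread keeps that worker busy instead of leaving it idle while it waits in `join()`.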

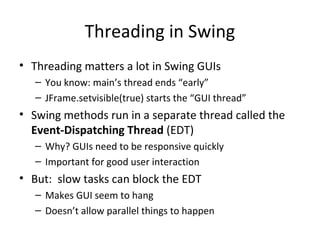

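![Mergesort’s Task-Object Nested Class](https://image.slidesharecdn.com/bhkvi0otpczut8xgfmim-signature-91f77658bc948ec5a8dd1dcec04e37ca491feef9f5dec765599dcd7a59873a3d-poli-160512172820/85/Parallel-programming-38-320.jpg)

Mergesort's task-object nested class:

```java
// RecursiveAction (java.util.concurrent) is a fork/join task that returns
// no result; subclasses must override protected void compute().
static class SortTask extends RecursiveAction {
    Comparable[] list;     // array being sorted, shared by all tasks
    Comparable[] tmpList;  // scratch space used when merging
    int first, last;       // range of list that this task sorts

    public SortTask(Comparable[] a, Comparable[] tmp, int lo, int hi) {
        this.list = a; this.tmpList = tmp;
        this.first = lo; this.last = hi;
    }

    // continued on the next slide
```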
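The slides finish `SortTask` on a later slide that is not included here. For reference only, here is one self-contained way a `RecursiveAction` mergesort could be completed; it is a sketch under assumptions, not the slides' code. The names `FJMergeSort`, `MergeTask`, `THRESHOLD`, and `POOL` and the cutoff value 16 are hypothetical, and the code treats `first` and `last` as inclusive bounds (an assumption about the slides' convention).

```java
import java.util.Arrays;
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveAction;

public class FJMergeSort {
    static final int THRESHOLD = 16;                     // hypothetical cutoff
    static final ForkJoinPool POOL = new ForkJoinPool();

    static class MergeTask<T extends Comparable<? super T>> extends RecursiveAction {
        final T[] list, tmp;
        final int first, last;                           // inclusive bounds

        MergeTask(T[] list, T[] tmp, int first, int last) {
            this.list = list; this.tmp = tmp; this.first = first; this.last = last;
        }

        @Override
        protected void compute() {
            if (last - first < THRESHOLD) {              // small range: sort sequentially
                insertionSort(list, first, last);
                return;
            }
            int mid = (first + last) >>> 1;
            // Sort both halves in parallel; invokeAll returns once both are done.
            invokeAll(new MergeTask<>(list, tmp, first, mid),
                      new MergeTask<>(list, tmp, mid + 1, last));
            merge(list, tmp, first, mid, last);
        }
    }

    // Sequential insertion sort of a[first..last].
    static <T extends Comparable<? super T>> void insertionSort(T[] a, int first, int last) {
        for (int i = first + 1; i <= last; i++) {
            T key = a[i];
            int j = i - 1;
            while (j >= first && a[j].compareTo(key) > 0) {
                a[j + 1] = a[j];
                j--;
            }
            a[j + 1] = key;
        }
    }

    // Merge sorted runs a[first..mid] and a[mid+1..last] using tmp as scratch.
    static <T extends Comparable<? super T>> void merge(T[] a, T[] tmp, int first, int mid, int last) {
        int i = first, j = mid + 1, k = first;
        while (i <= mid && j <= last) {
            tmp[k++] = (a[i].compareTo(a[j]) <= 0) ? a[i++] : a[j++];
        }
        while (i <= mid) tmp[k++] = a[i++];
        while (j <= last) tmp[k++] = a[j++];
        System.arraycopy(tmp, first, a, first, last - first + 1);
    }

    // Top-level call: allocate scratch space once, then invoke the root task.
    static <T extends Comparable<? super T>> void sort(T[] list) {
        if (list.length < 2) return;
        T[] tmp = Arrays.copyOf(list, list.length);      // same runtime array type
        POOL.invoke(new MergeTask<>(list, tmp, 0, list.length - 1));
    }

    public static void main(String[] args) {
        Integer[] data = {5, 3, 9, 1, 7, 2, 8, 6, 4, 0, 15, 11, 13, 12, 14, 10, 19, 17, 18, 16};
        sort(data);
        System.out.println(Arrays.toString(data));
    }
}
```

Sibling tasks only touch disjoint ranges of both `list` and `tmp`, so they need no locking, and each merge runs only after `invokeAll` has returned for both halves.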