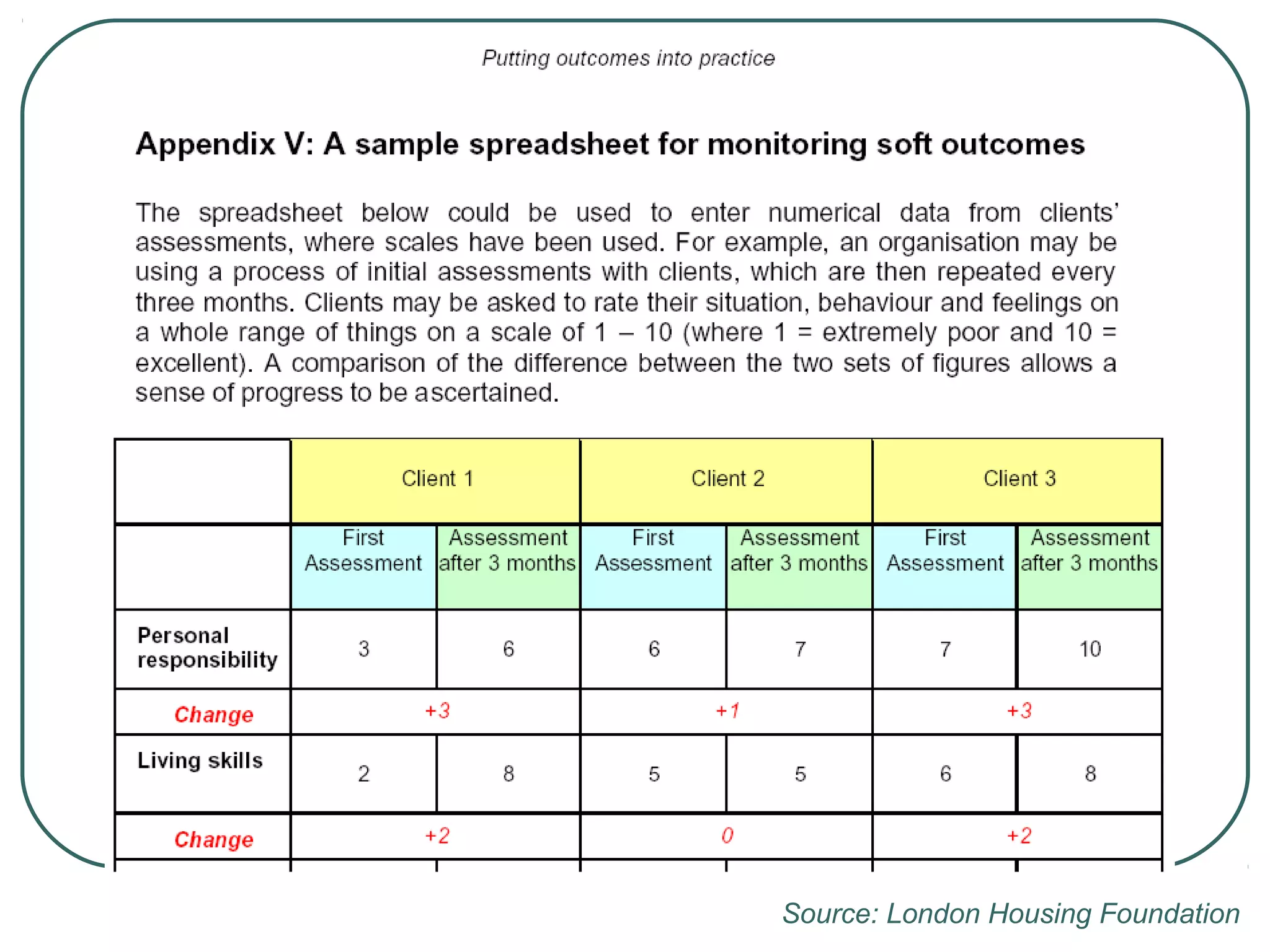

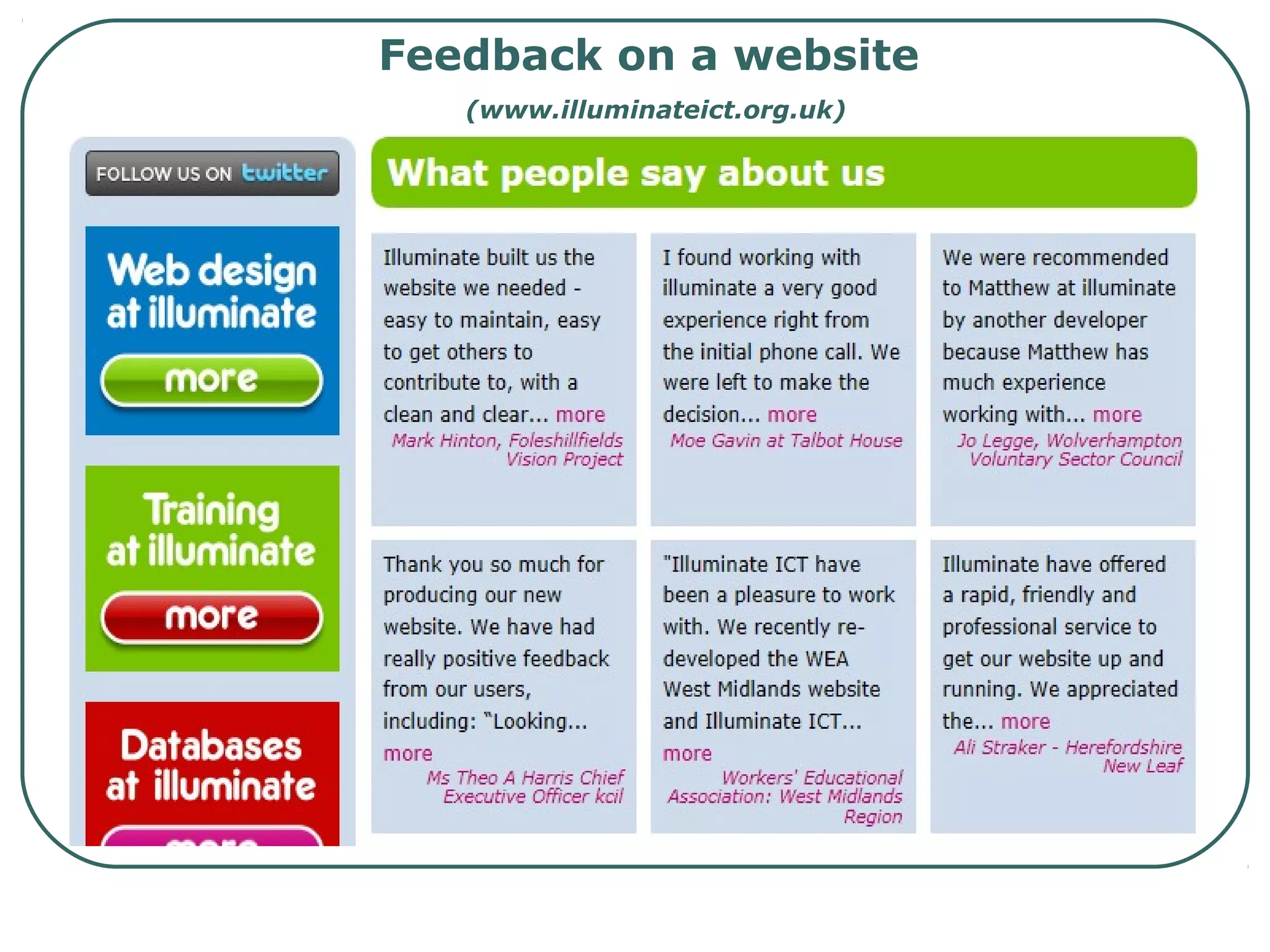

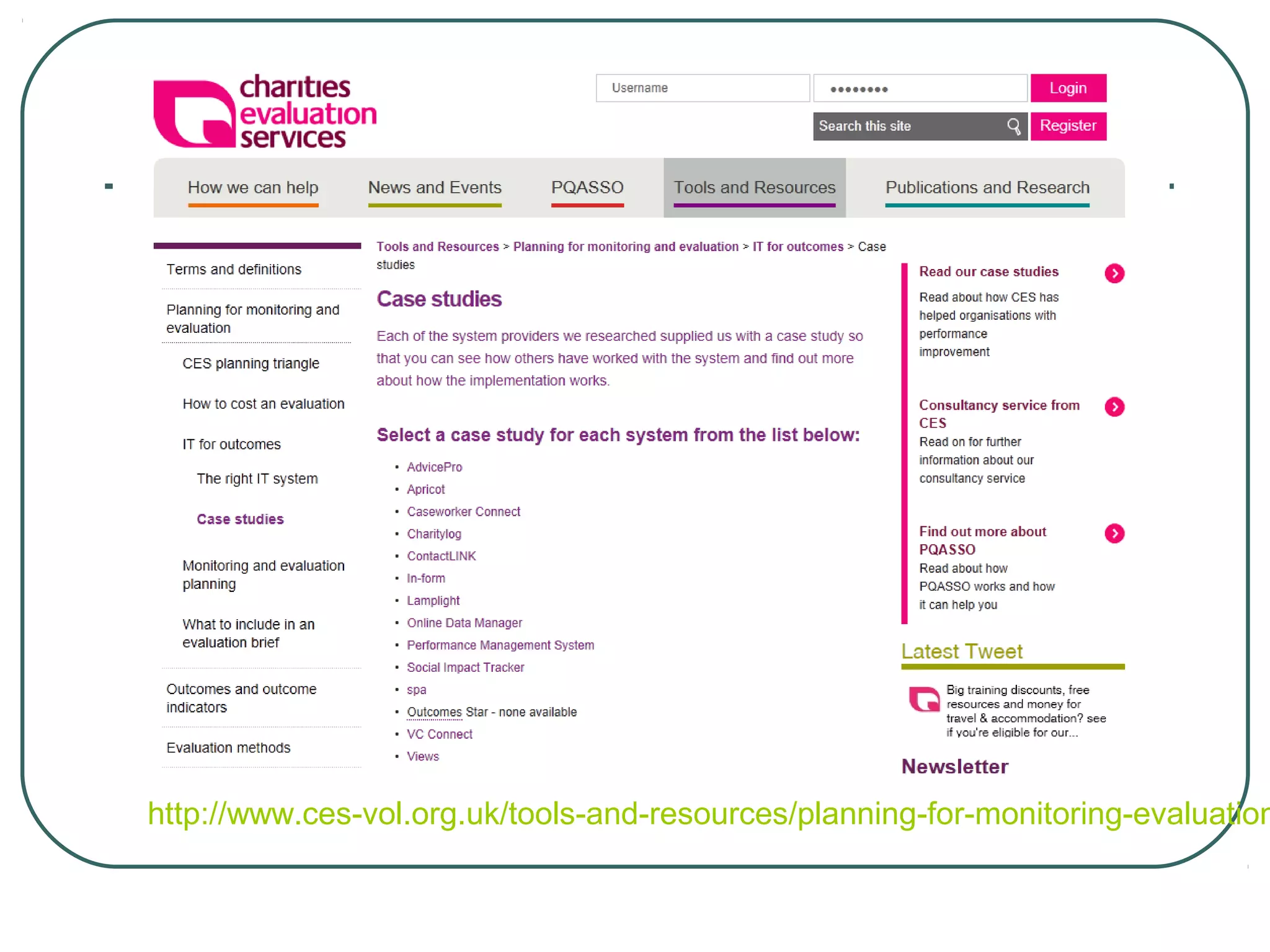

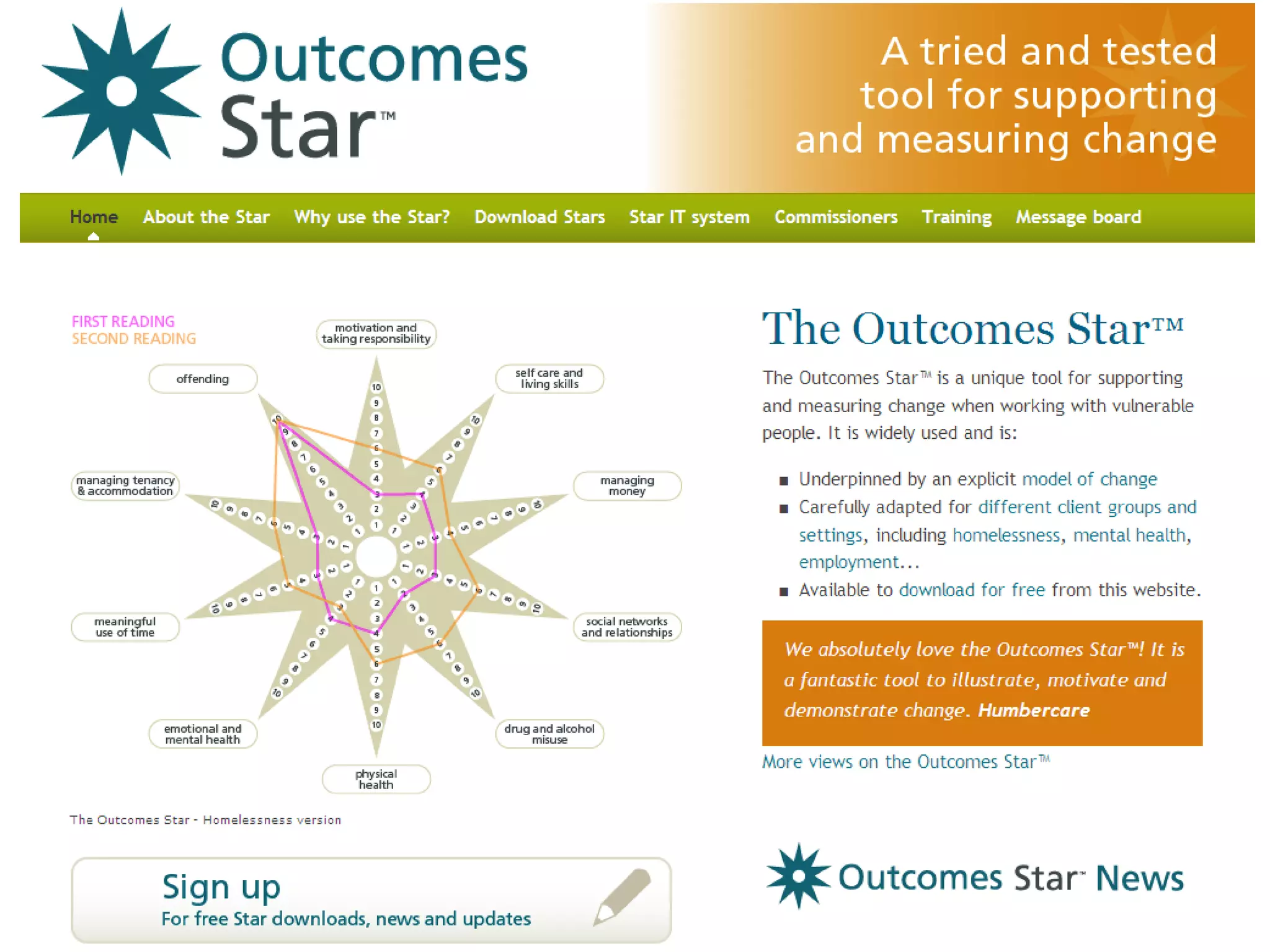

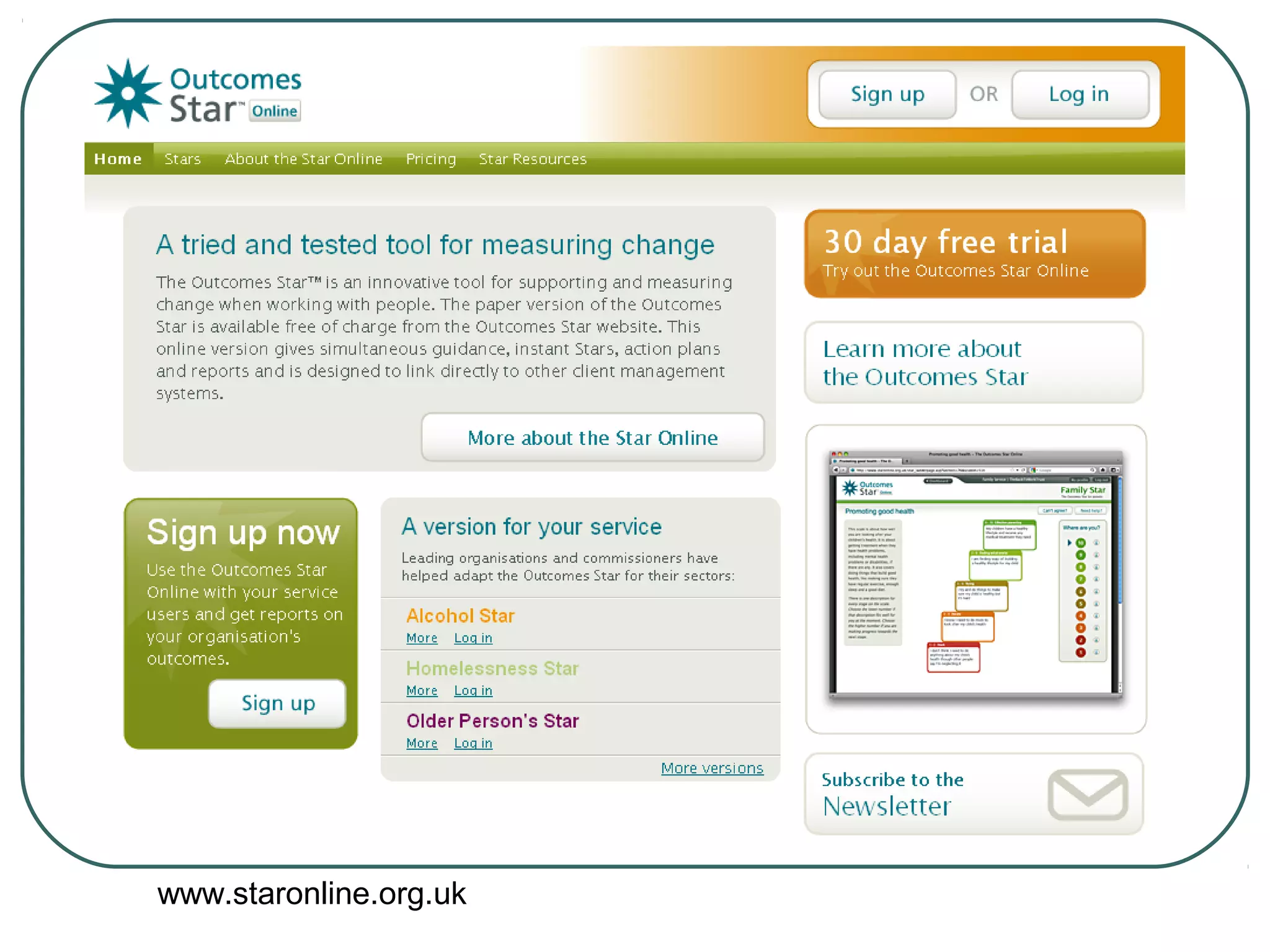

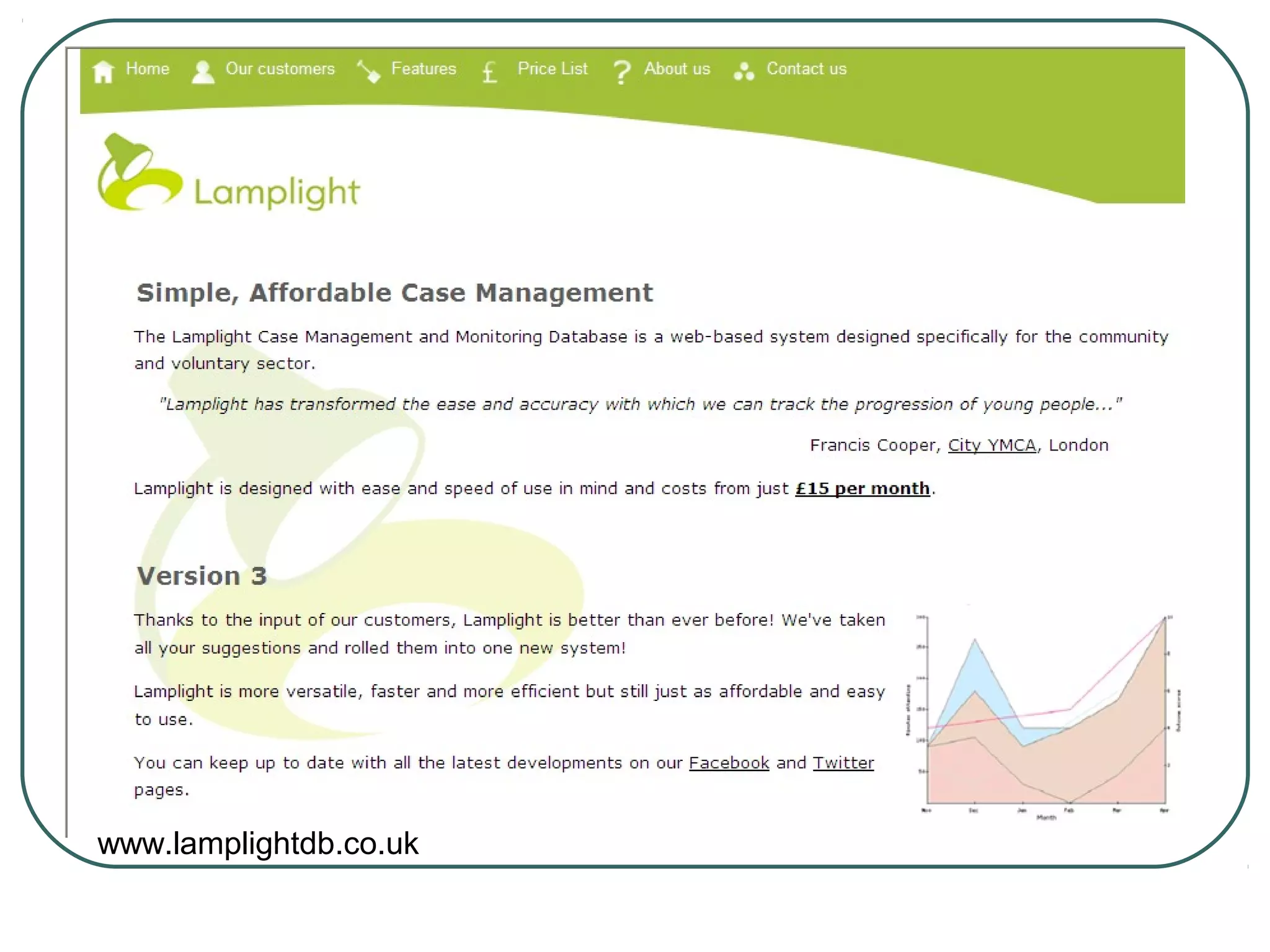

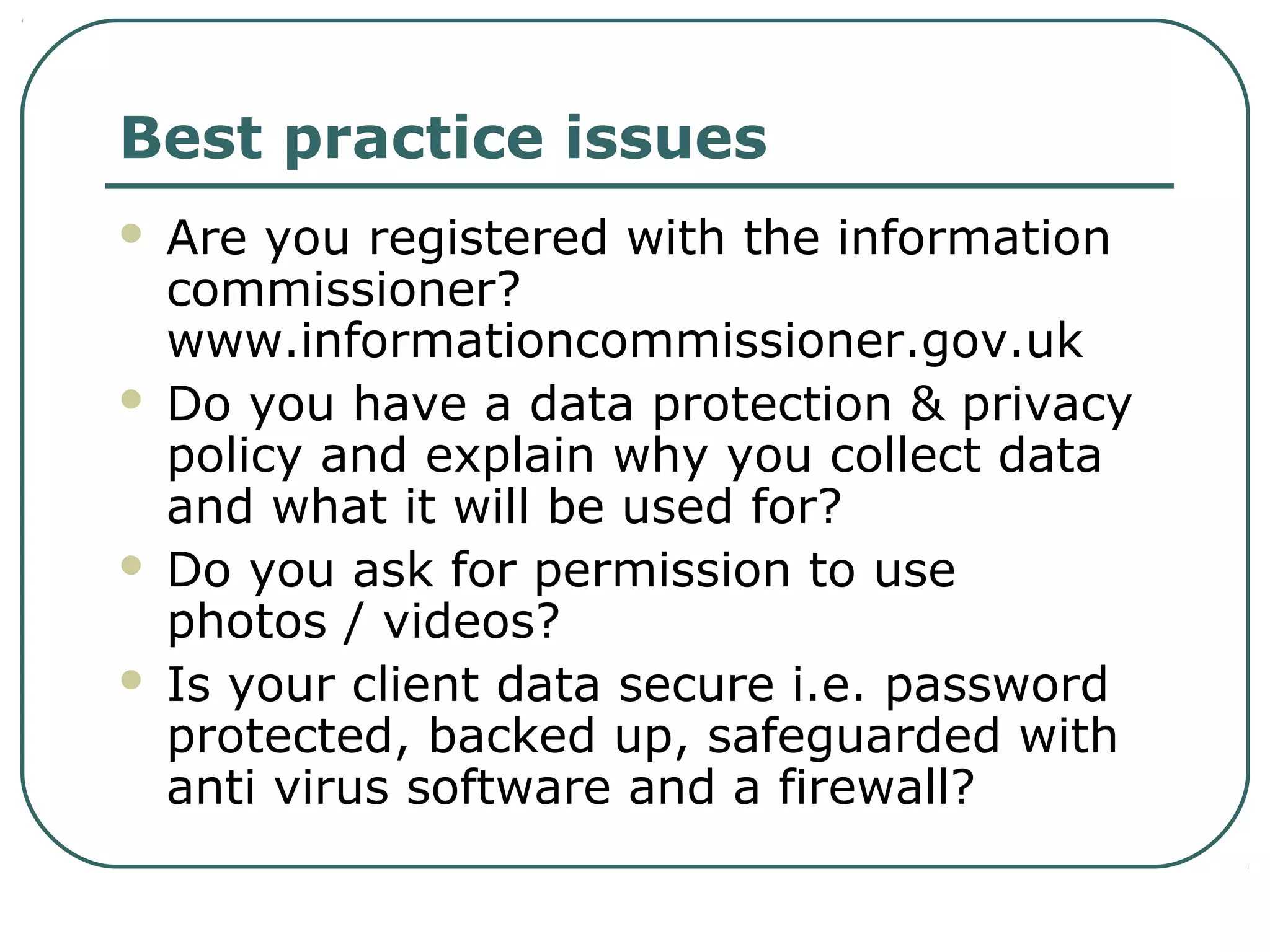

This document discusses how information and communication technologies (ICT) can make outcomes monitoring more efficient and effective. It gives examples of tools for collecting, storing, retrieving, and presenting outcomes information, including online surveys, websites, databases, digital files, and data analysis and visualization tools. It also outlines the steps for developing an ICT system for outcomes monitoring (identifying needs, researching options, allocating resources, and creating an action plan) and emphasizes the importance of data protection policies and practices.