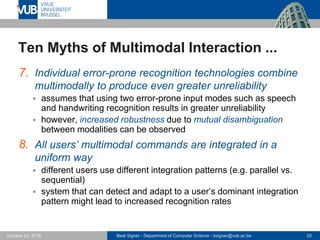

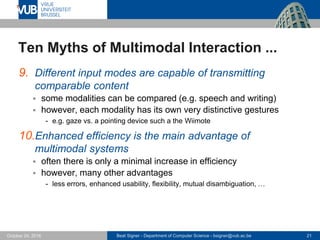

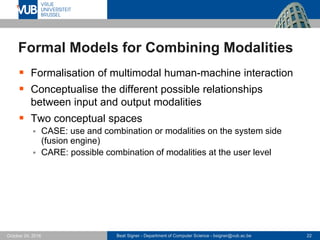

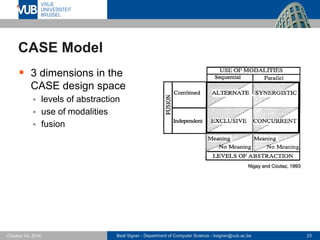

The document discusses the concept of multimodal interaction in human-machine communication, emphasizing the integration of various input and output modalities such as speech, gestures, and visual cues. It outlines the advantages and frameworks for multimodal systems, including challenges, myths, and formal models related to combining modalities effectively. Additionally, it highlights methods of multimodal fusion at different levels and the importance of personalizing user interactions.

![Beat Signer - Department of Computer Science - bsigner@vub.ac.be 29October 24, 2016

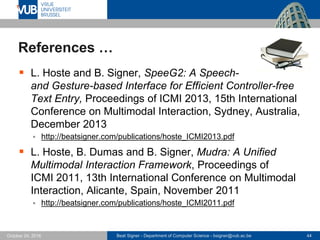

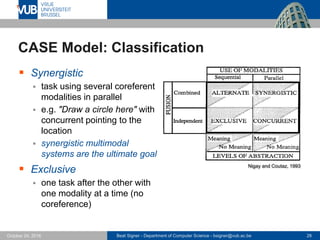

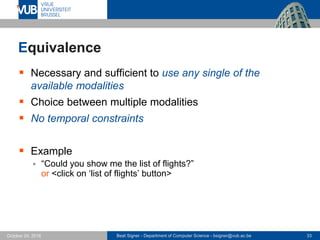

CARE Properties

Four properties to characterise and assess aspects of

multimodal interaction in terms of the combination of

modalities at the user level [Coutaz et al., 1995]

Complementarity

Assignment

Redundancy

Equivalence](https://image.slidesharecdn.com/lecture05multimodalinteraction-161025044651/85/Multimodal-Interaction-Lecture-05-Next-Generation-User-Interfaces-4018166FNR-29-320.jpg)
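To make the four CARE properties more concrete, the sketch below classifies how a set of modalities is combined for a single task. It is a deliberately simplified reading of the properties, not the formal definitions from Coutaz et al.: the names `Care` and `classify` and the two boolean flags are assumptions introduced here for illustration, and temporal windows are ignored entirely.

```python
from enum import Enum
from typing import Iterable


class Care(Enum):
    COMPLEMENTARITY = "complementarity"
    ASSIGNMENT = "assignment"
    REDUNDANCY = "redundancy"
    EQUIVALENCE = "equivalence"


def classify(modalities: Iterable[str], used_together: bool,
             same_information: bool) -> Care:
    """Simplified, hypothetical mapping from a usage pattern to a CARE property.

    modalities       -- modalities available for the task, e.g. {"pen", "speech"}
    used_together    -- True if several modalities are combined for one command
    same_information -- True if each modality carries the whole command
    """
    available = set(modalities)
    if not available:
        raise ValueError("at least one modality is required")
    if len(available) == 1:
        # No choice: the task is bound to a single modality.
        return Care.ASSIGNMENT
    if not used_together:
        # Any one of the modalities is enough; the user picks one.
        return Care.EQUIVALENCE
    # Several modalities are combined: either they repeat the same
    # information, or each contributes a different part of the command.
    return Care.REDUNDANCY if same_information else Care.COMPLEMENTARITY
```

For instance, a command that needs a spoken verb plus a pointing gesture would be classified as complementarity under these assumptions, while a command that can be issued by pen or by speech alone would be classified as equivalence.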

![Example for Equivalence: EdFest 2004](https://image.slidesharecdn.com/lecture05multimodalinteraction-161025044651/85/Multimodal-Interaction-Lecture-05-Next-Generation-User-Interfaces-4018166FNR-34-320.jpg)

Example for Equivalence: EdFest 2004

The EdFest 2004 prototype consisted of a digital pen and paper interface in combination with speech input and voice output [Belotti et al., 2005]. The user had a choice to execute some commands either via pen input or through speech input.
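Equivalence of this kind can be sketched as two input paths that resolve to the same command. The code below is not the EdFest implementation: the event type, the paper region identifier, the utterance, and the command name are all hypothetical and only illustrate the idea that either modality alone is sufficient.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InputEvent:
    modality: str  # "pen" or "speech"
    payload: str   # hypothetical: a tapped paper region id or a recognised utterance


# Hypothetical bindings: both modalities map to the same command name.
BINDINGS = {
    ("pen", "region:next_event"): "show_next_event",
    ("speech", "next event"): "show_next_event",
}


def resolve(event: InputEvent) -> Optional[str]:
    """Return the command bound to this input, or None if nothing matches."""
    key = (event.modality, event.payload.strip().lower())
    return BINDINGS.get(key)


pen_command = resolve(InputEvent("pen", "region:next_event"))
speech_command = resolve(InputEvent("speech", "Next event"))
# Under these bindings both inputs trigger the same command.
```

Keeping the bindings in one table makes the equivalence explicit: adding a third modality for the same command would only require another entry, without touching the command itself.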