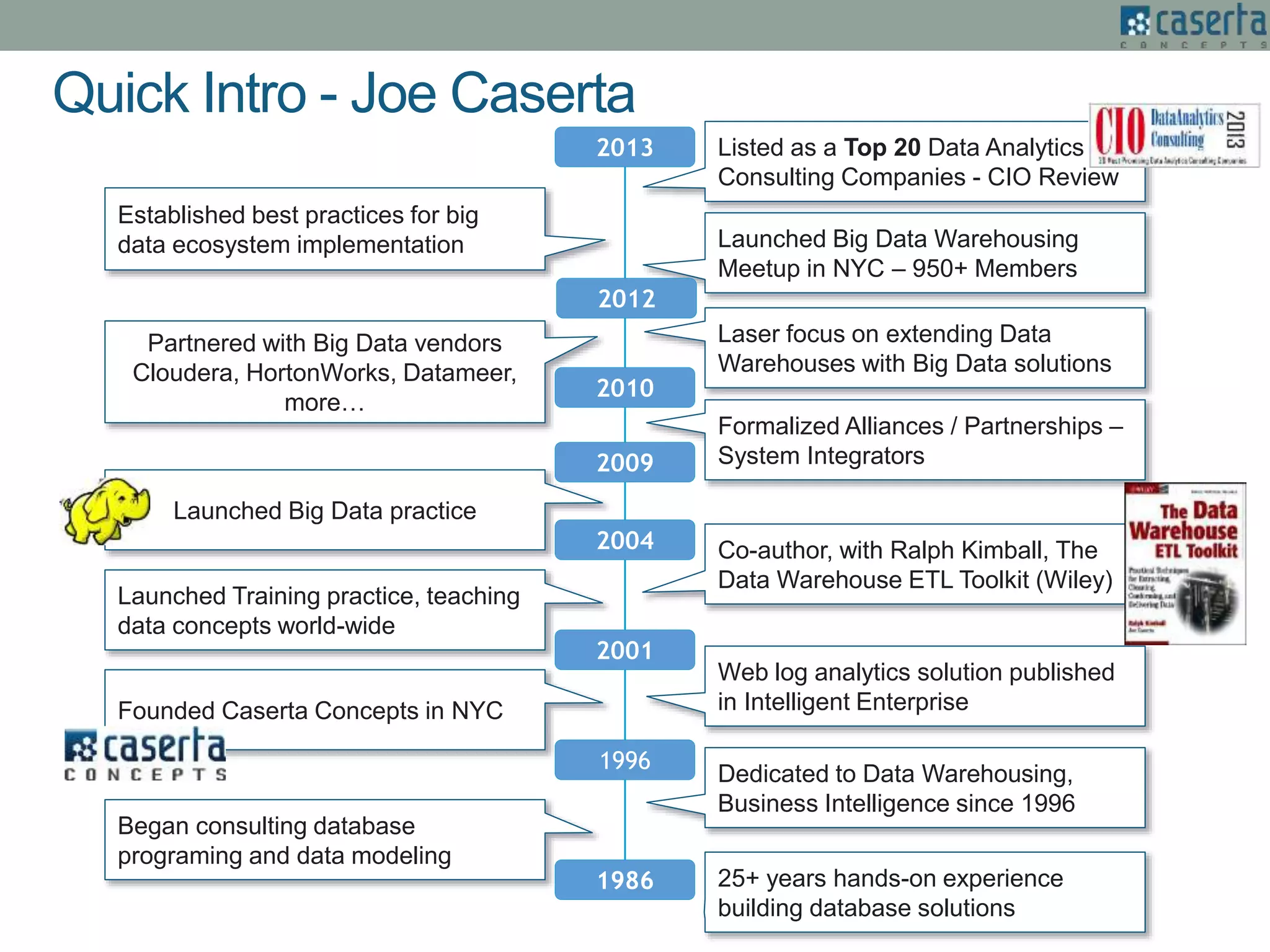

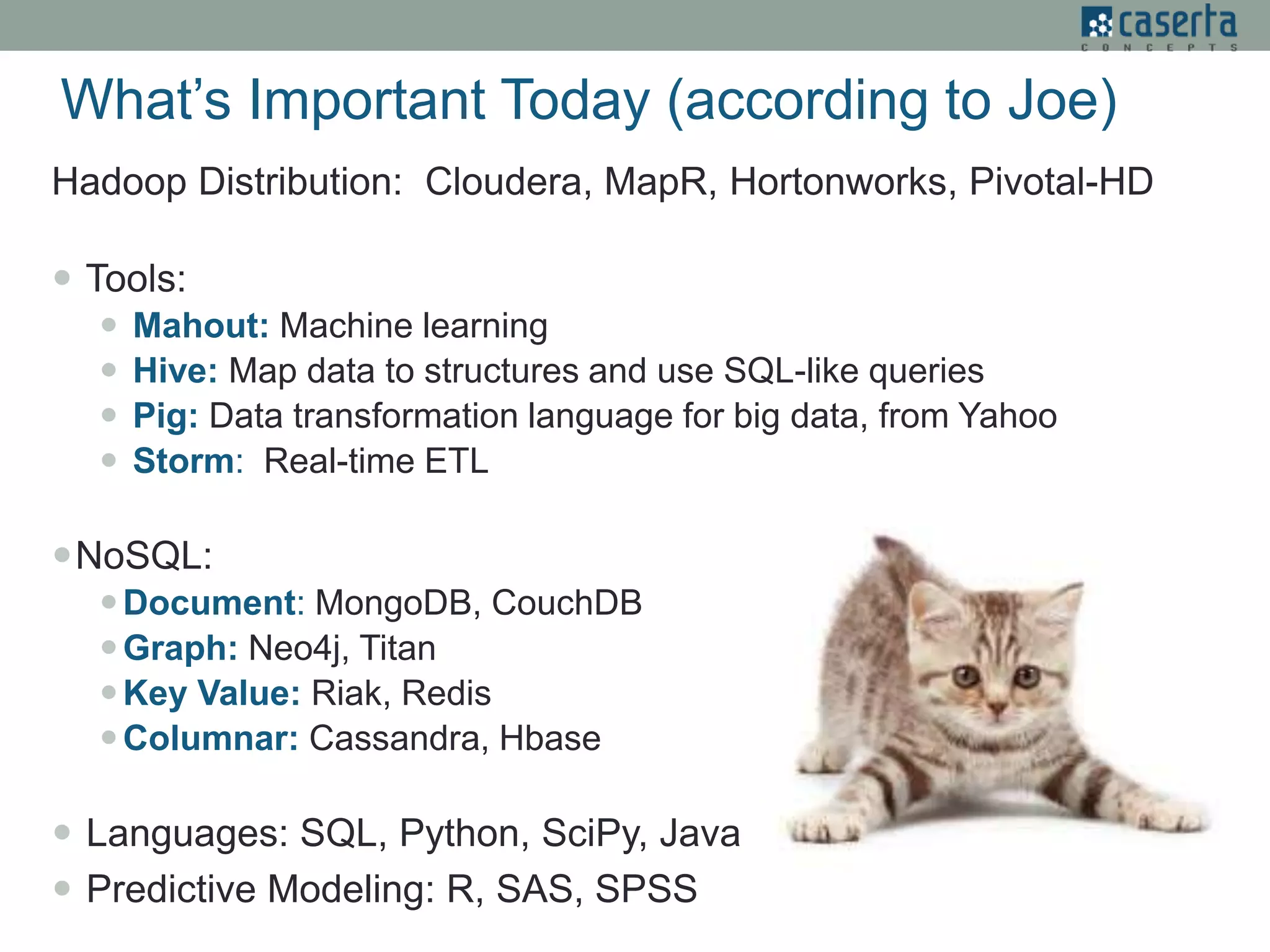

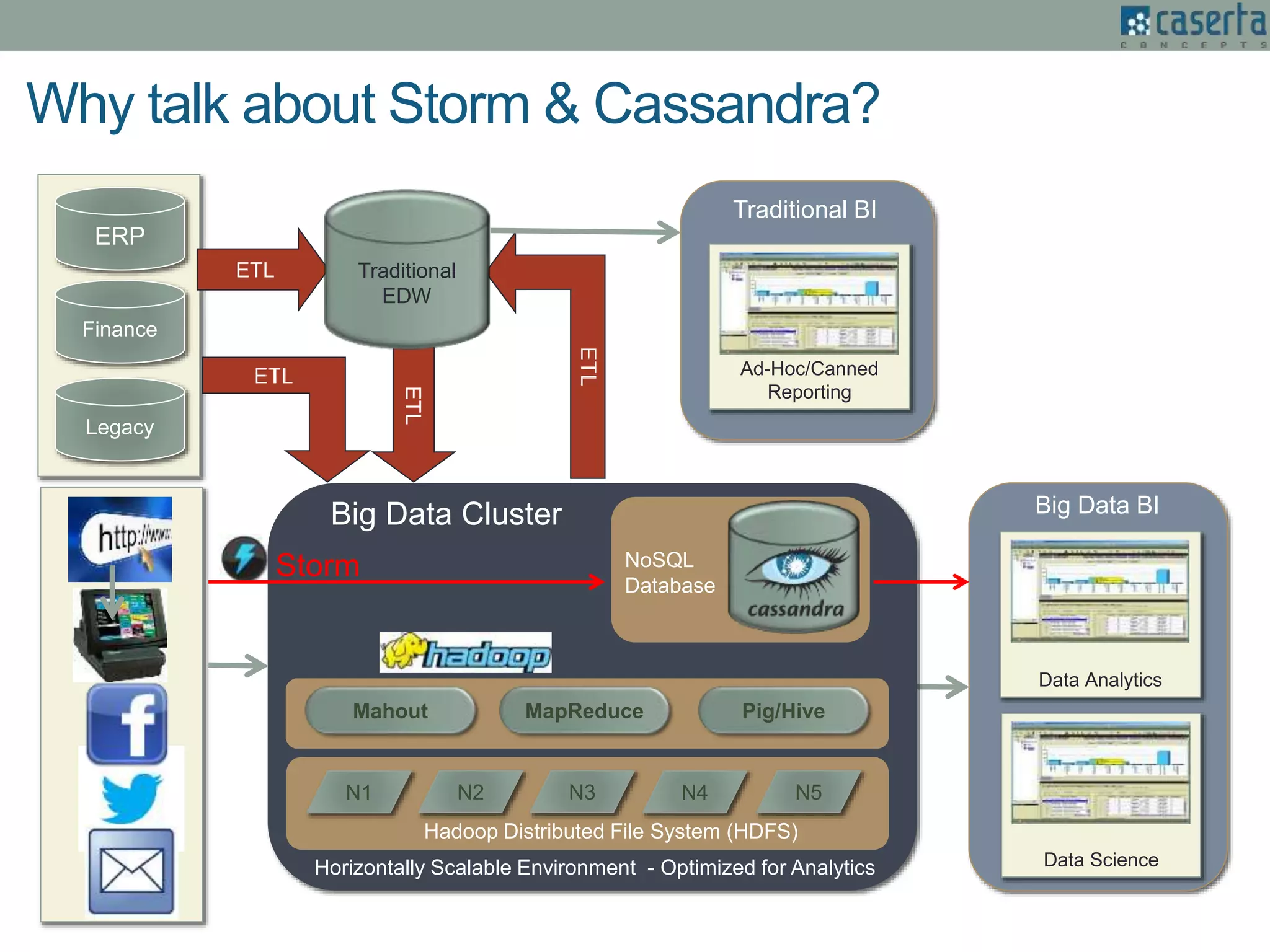

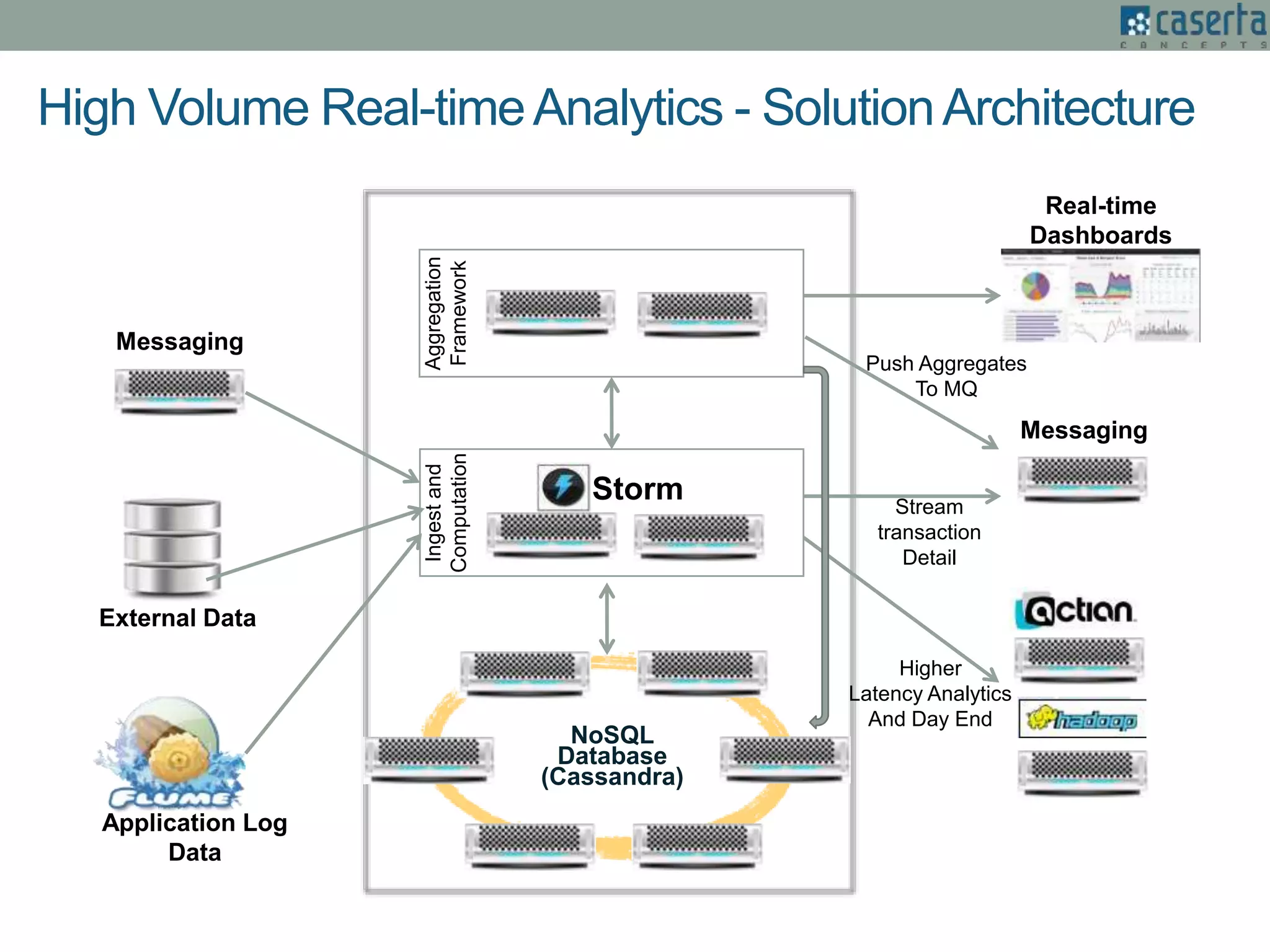

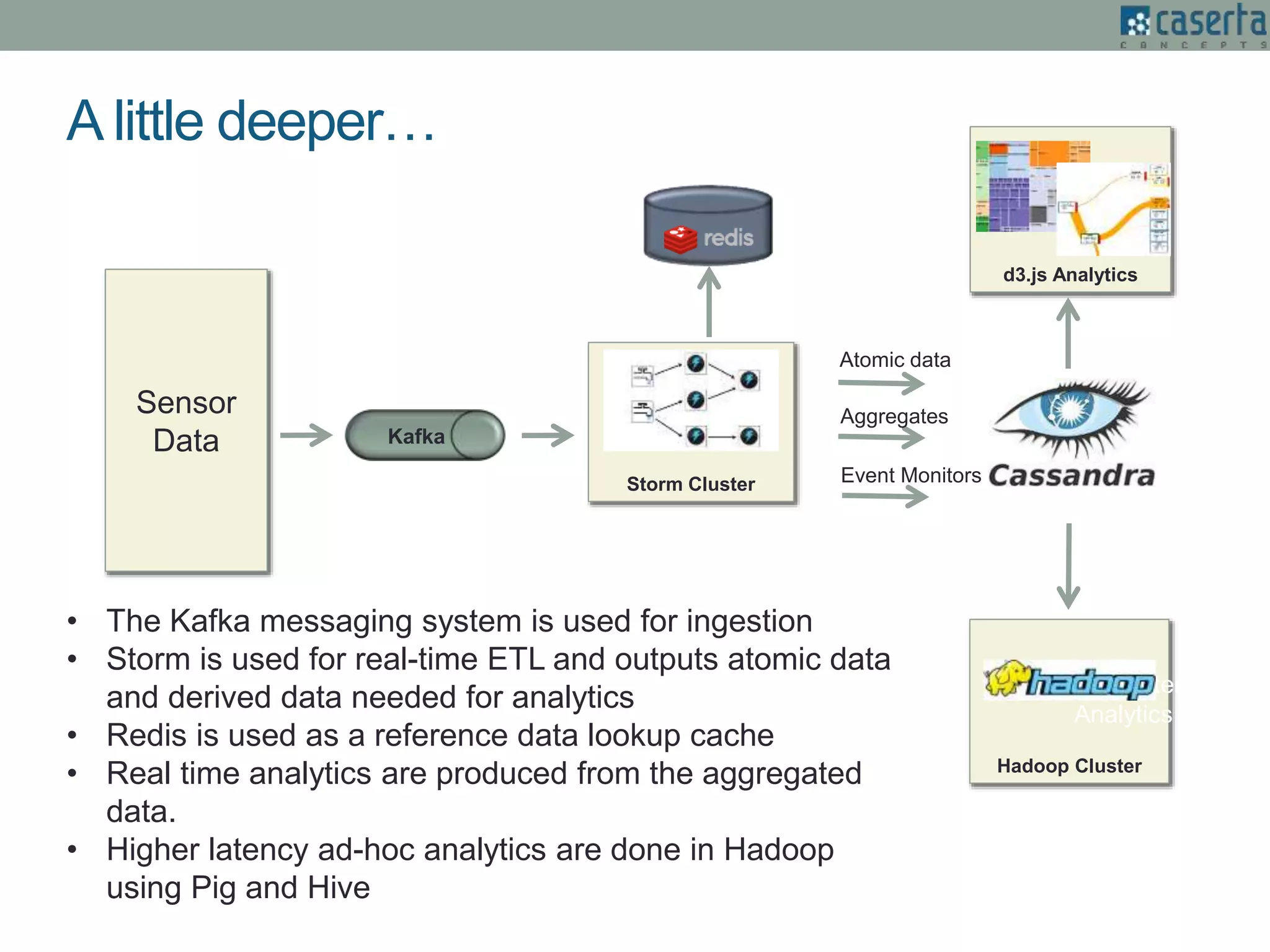

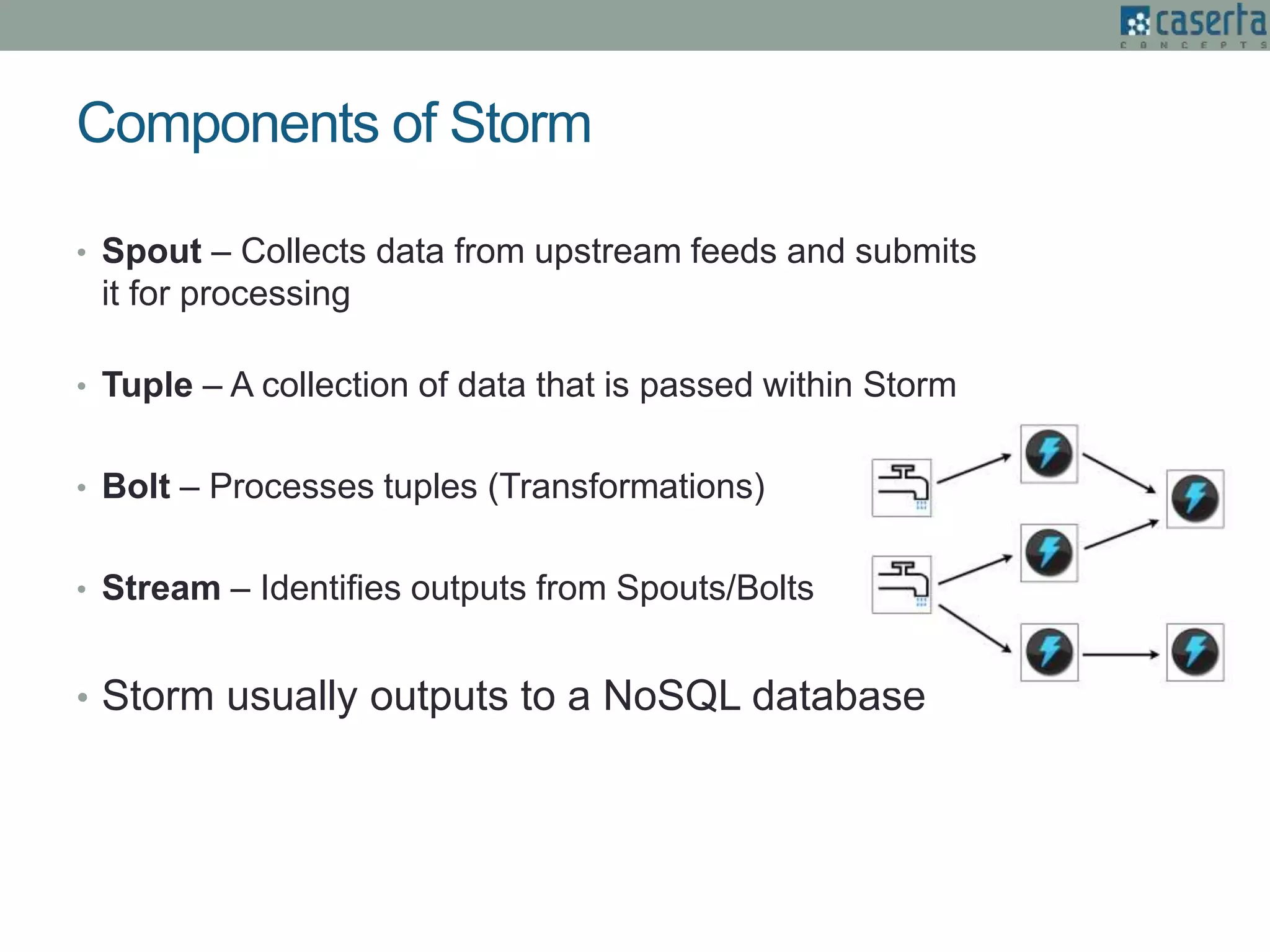

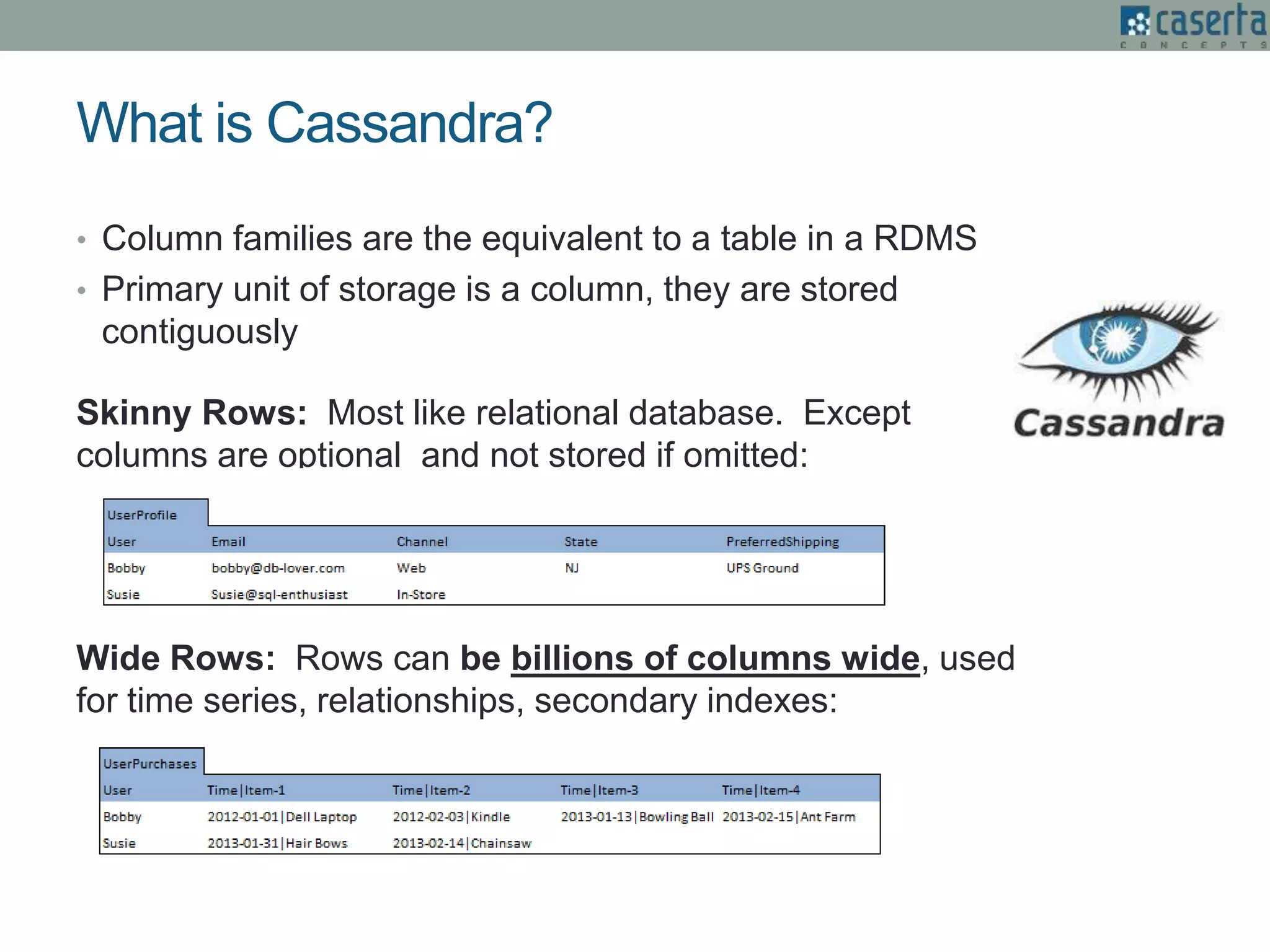

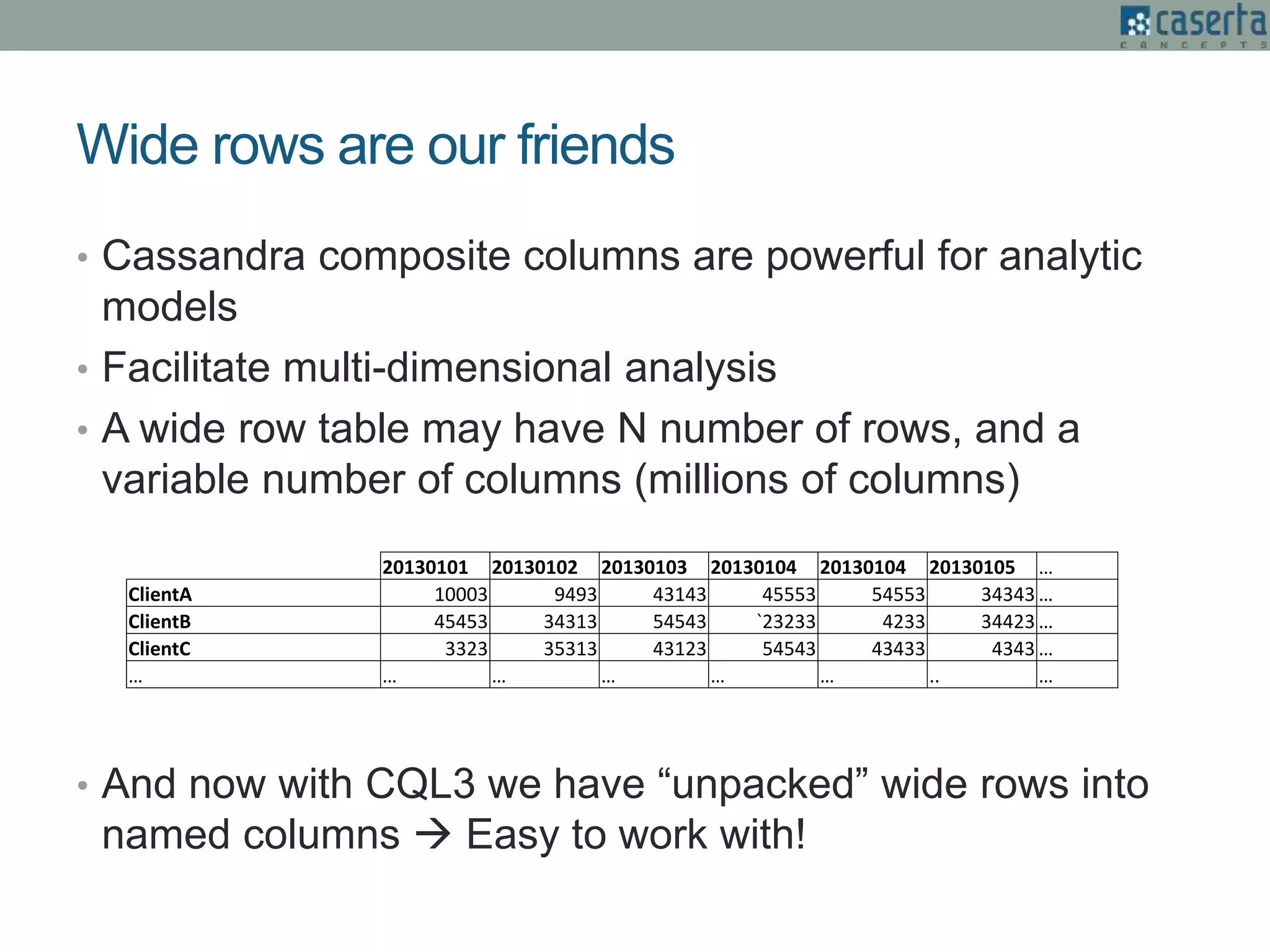

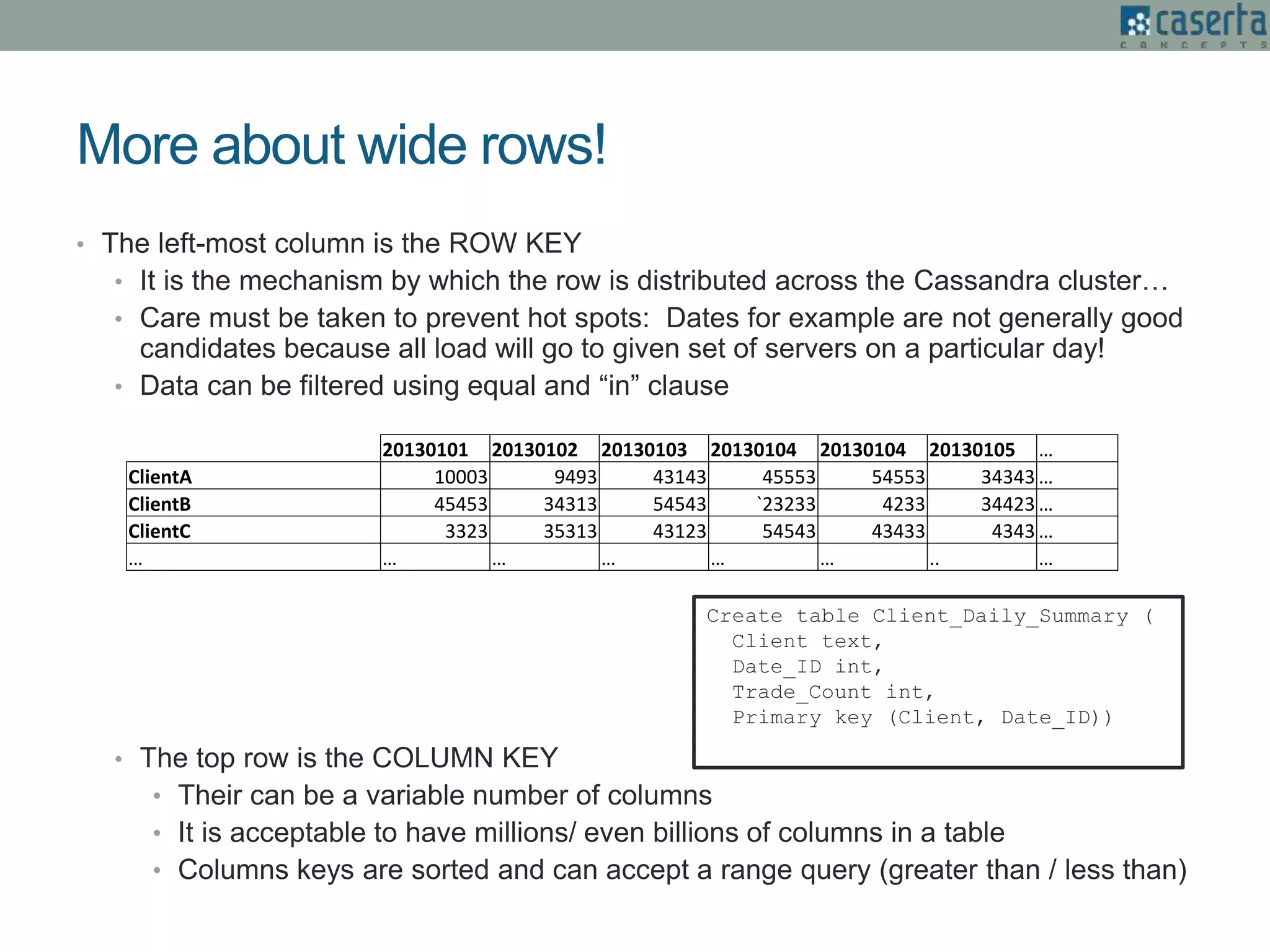

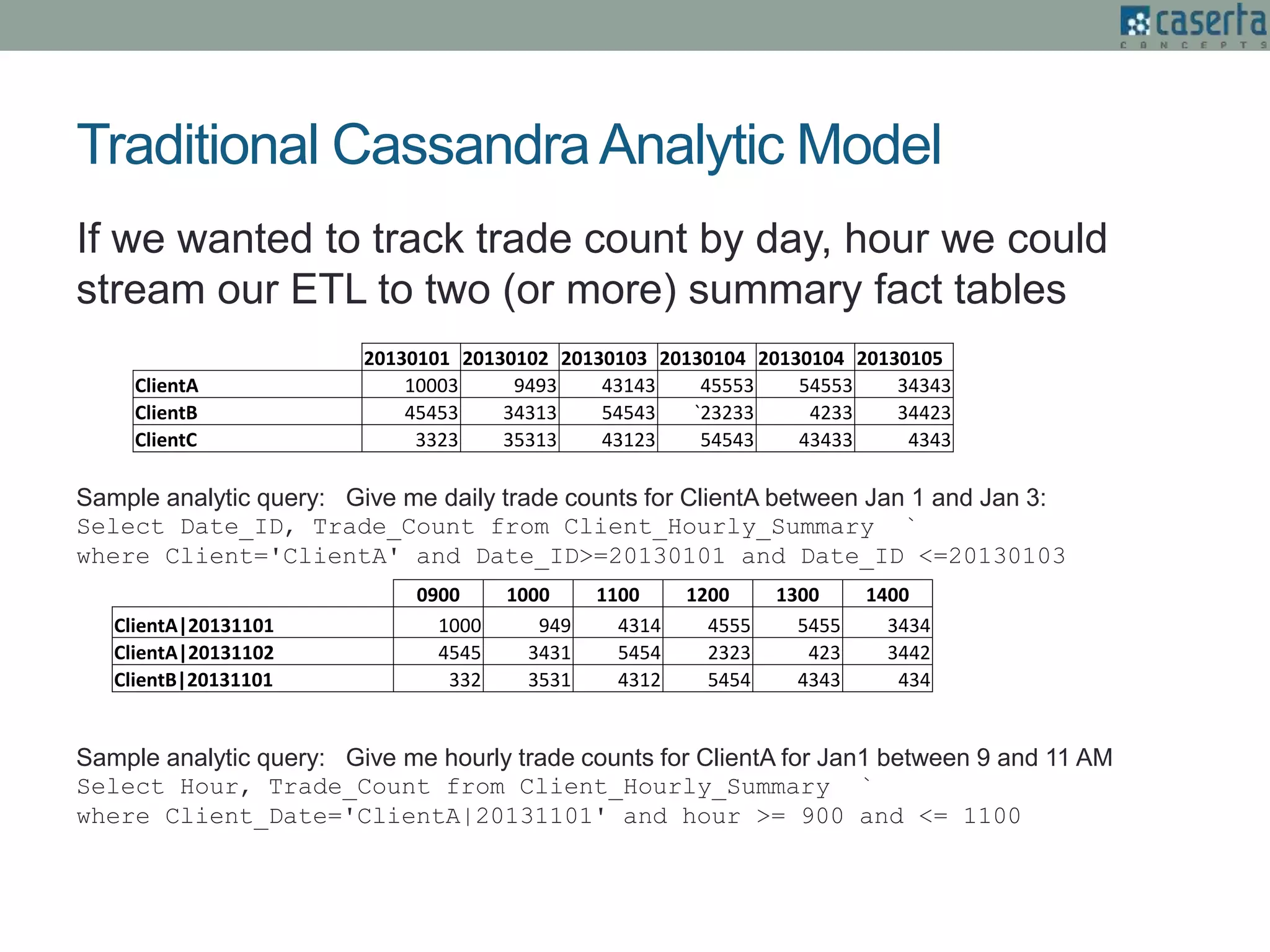

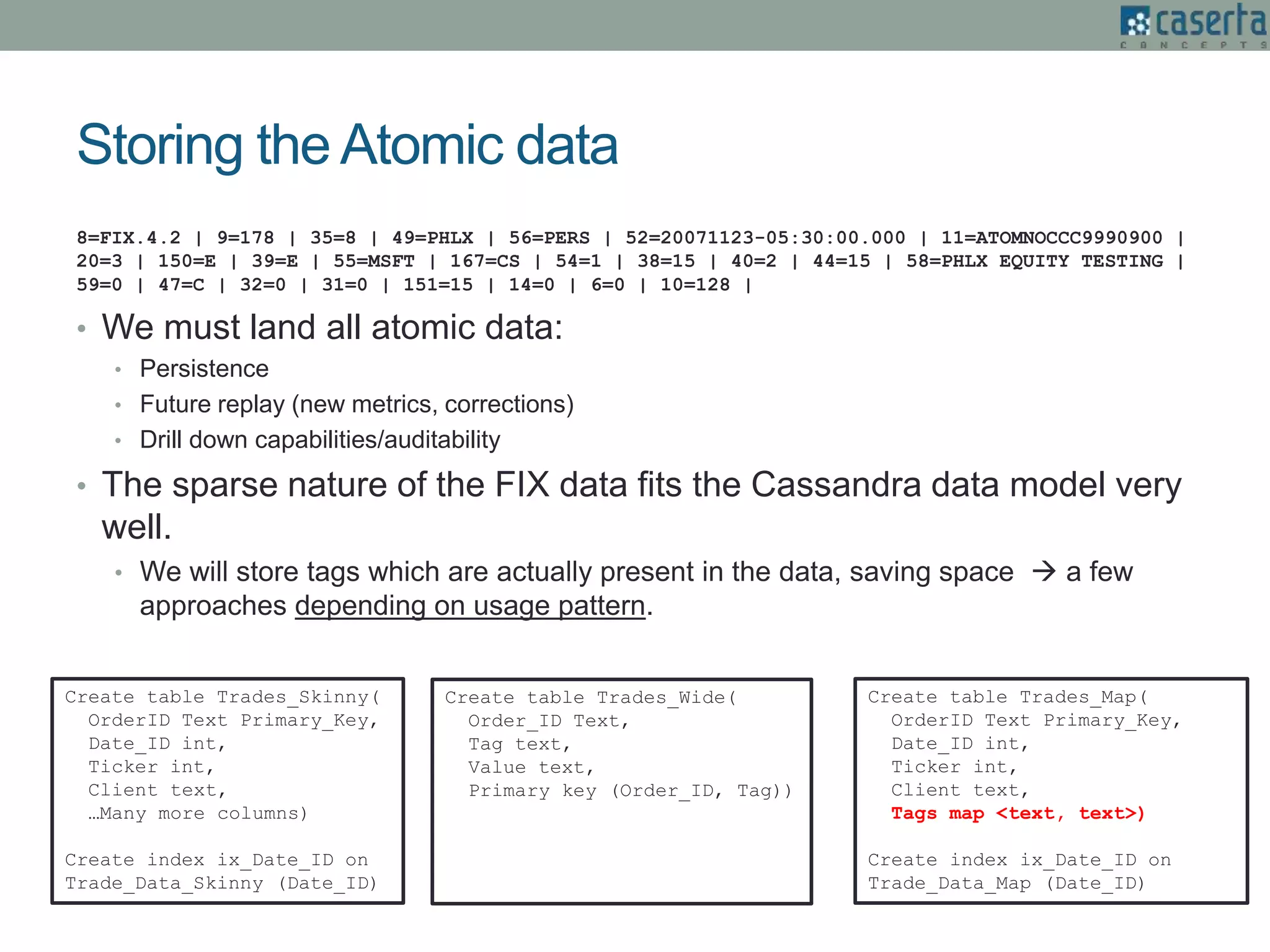

The document outlines Joe Caserta's expertise in low-latency analytics built on the Storm stream-processing framework and the Cassandra NoSQL database, used for real-time data processing and analytics across various industries. It describes a solution for the equity trading arm of a large US bank that needed to process millions of messages per second while still supporting ad-hoc analytics. It also discusses the advantages of NoSQL databases, particularly Cassandra, in scalability, performance, and data-modeling flexibility.