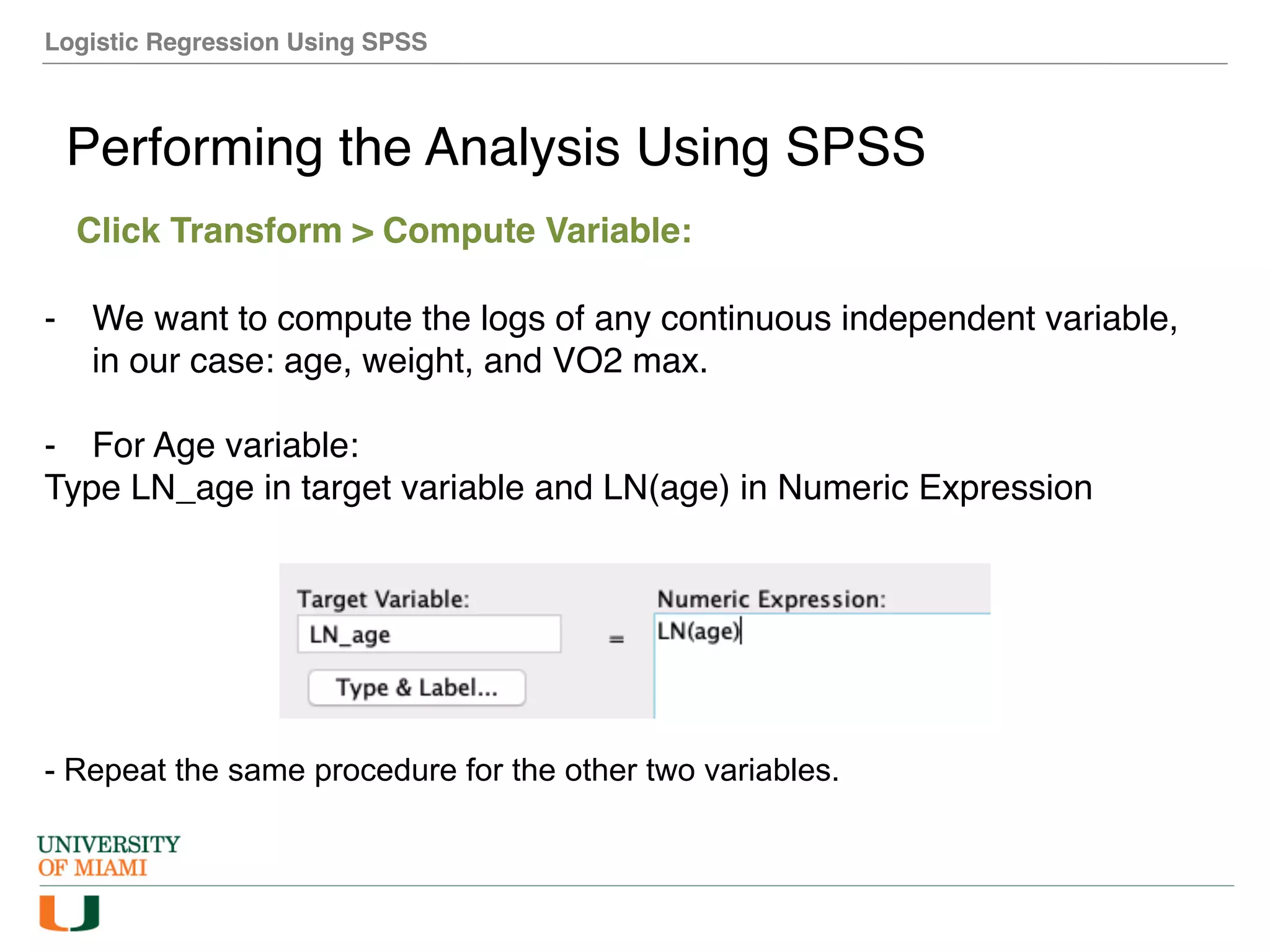

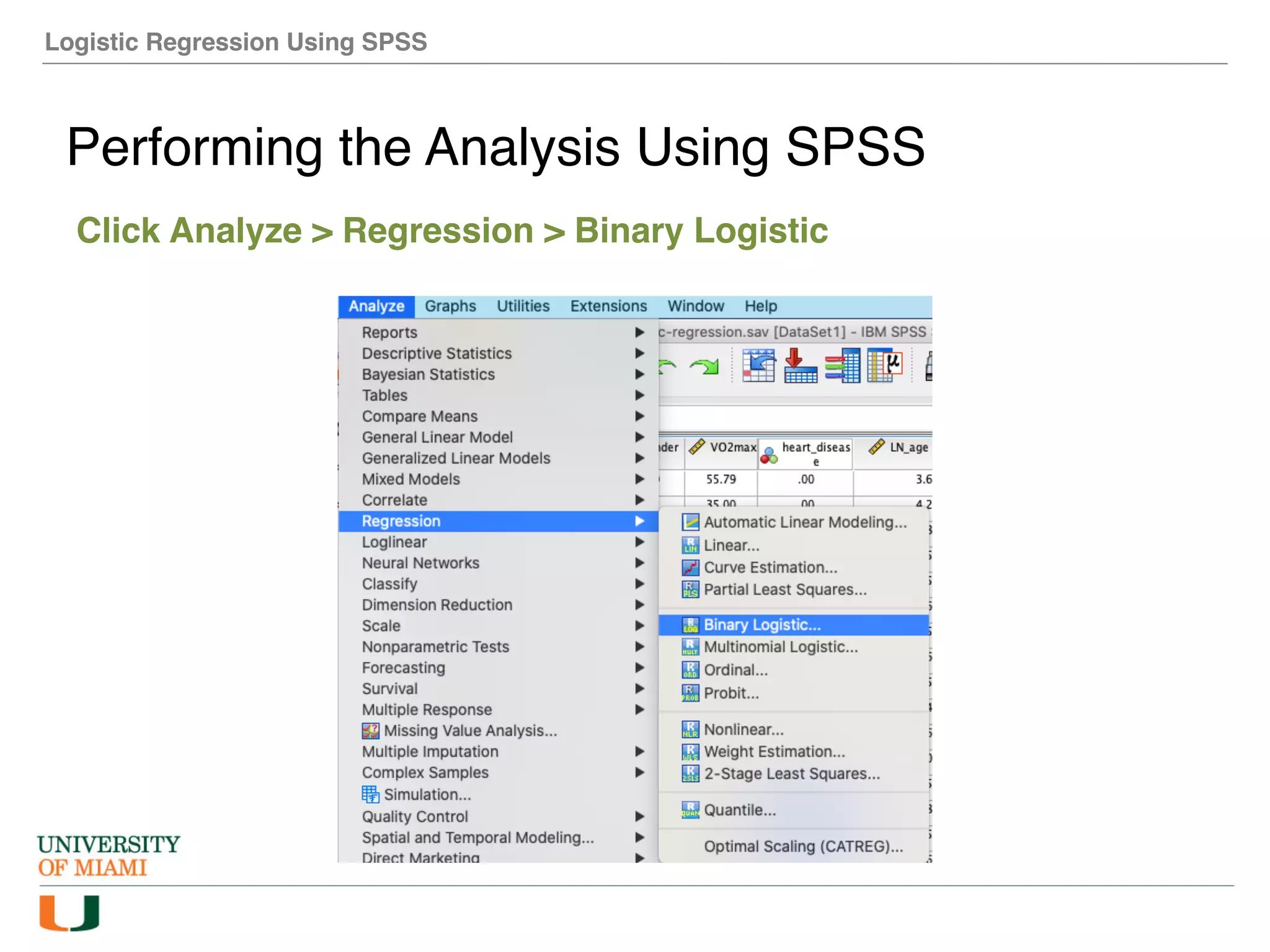

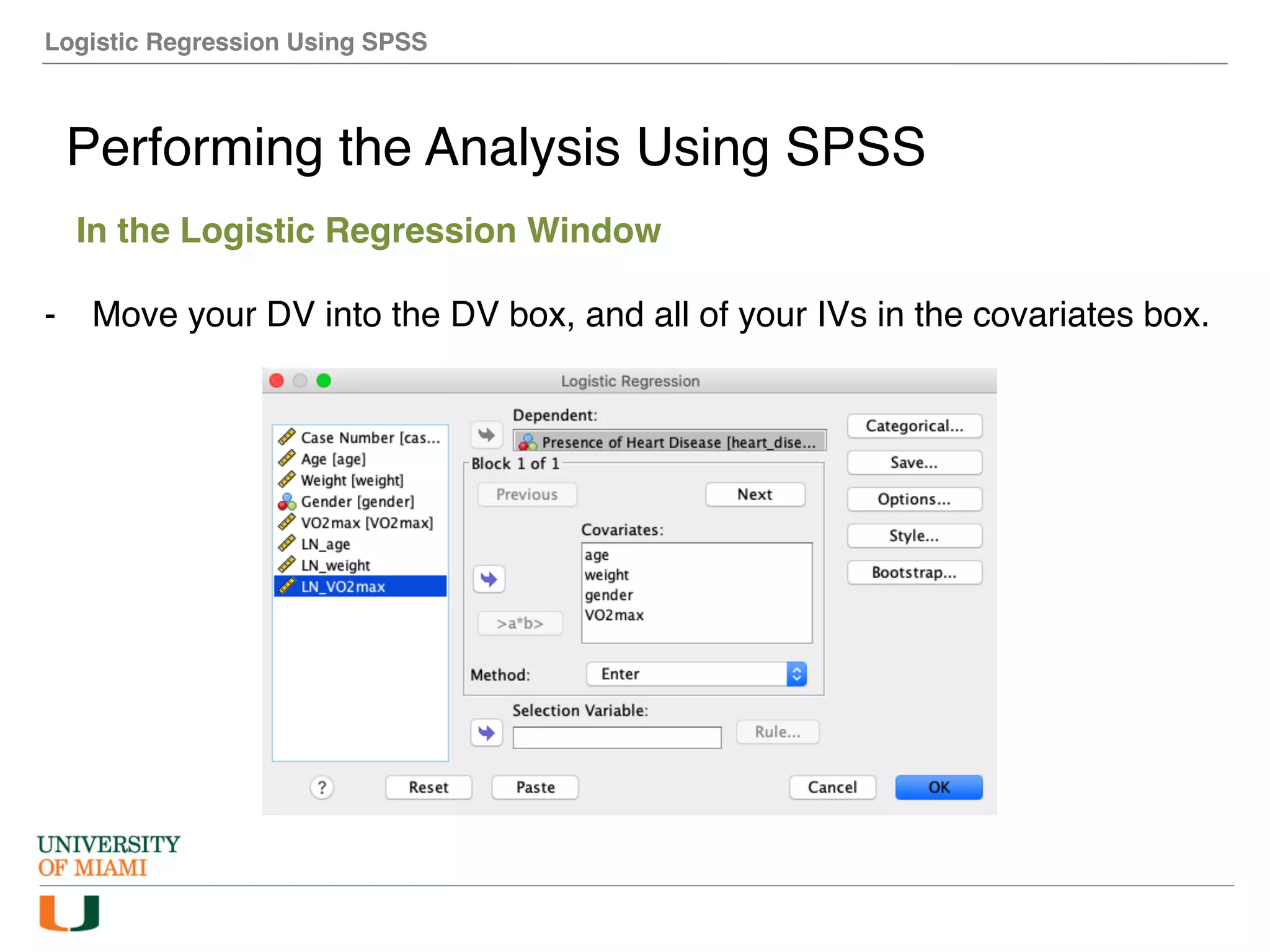

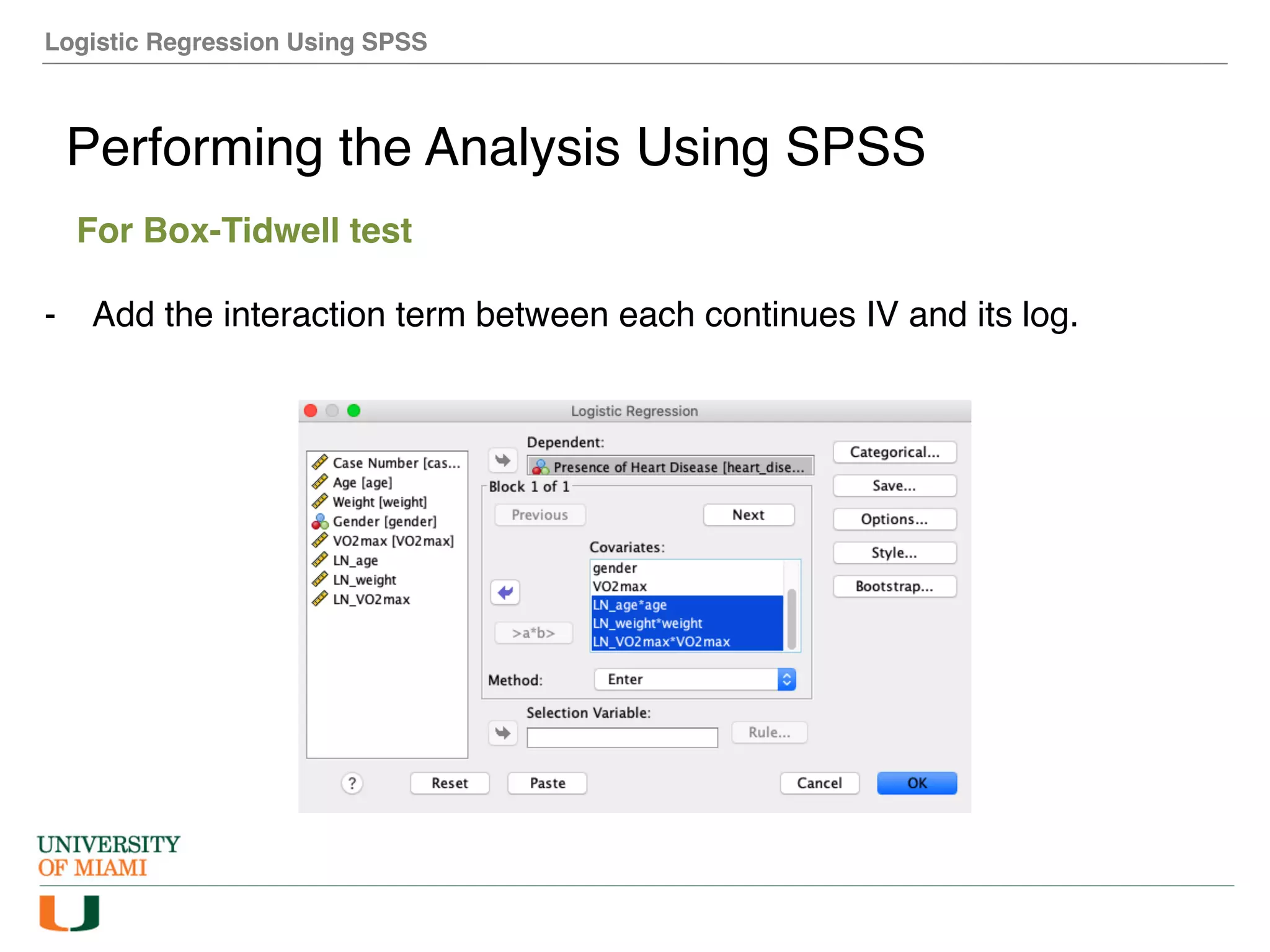

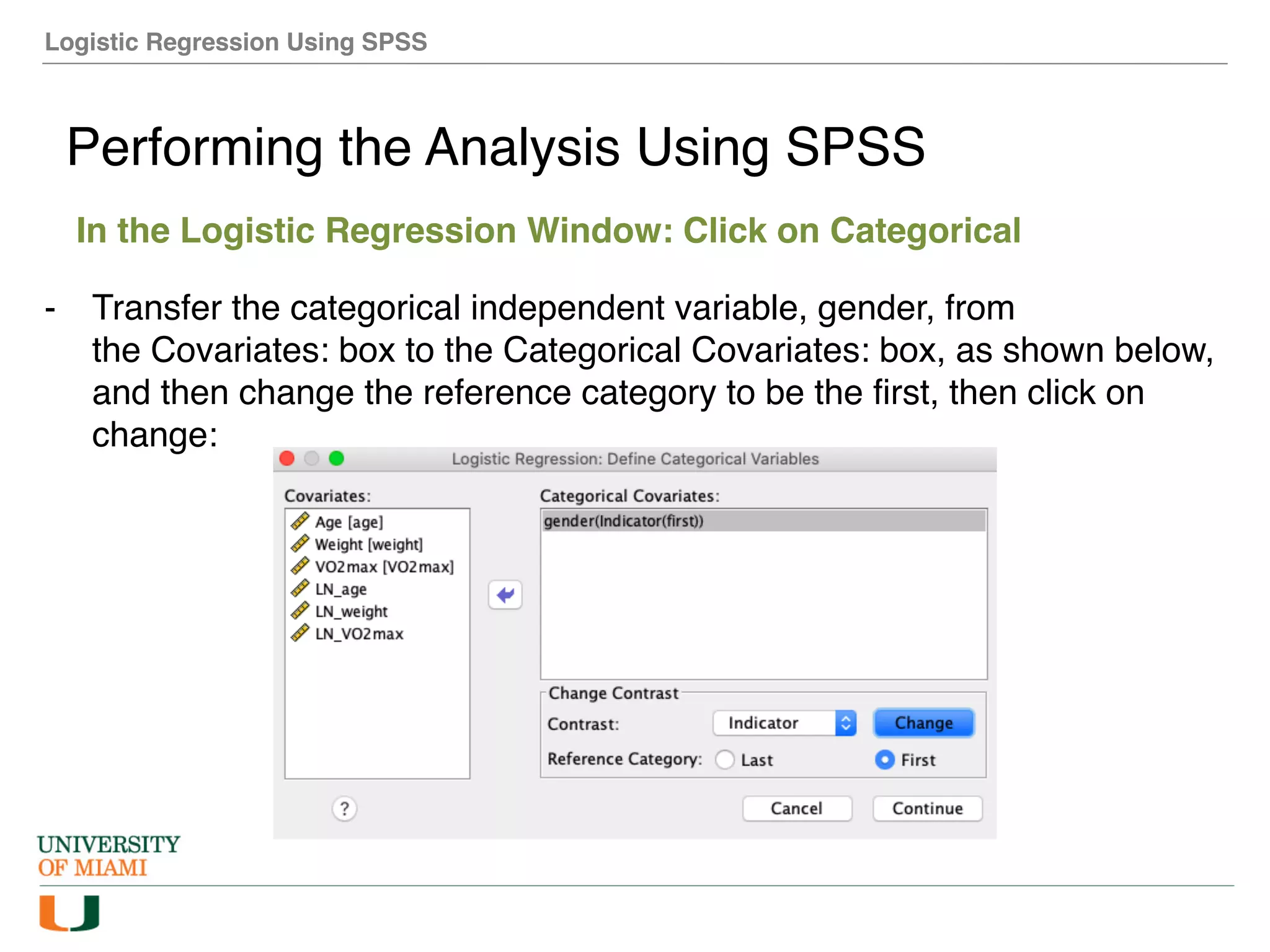

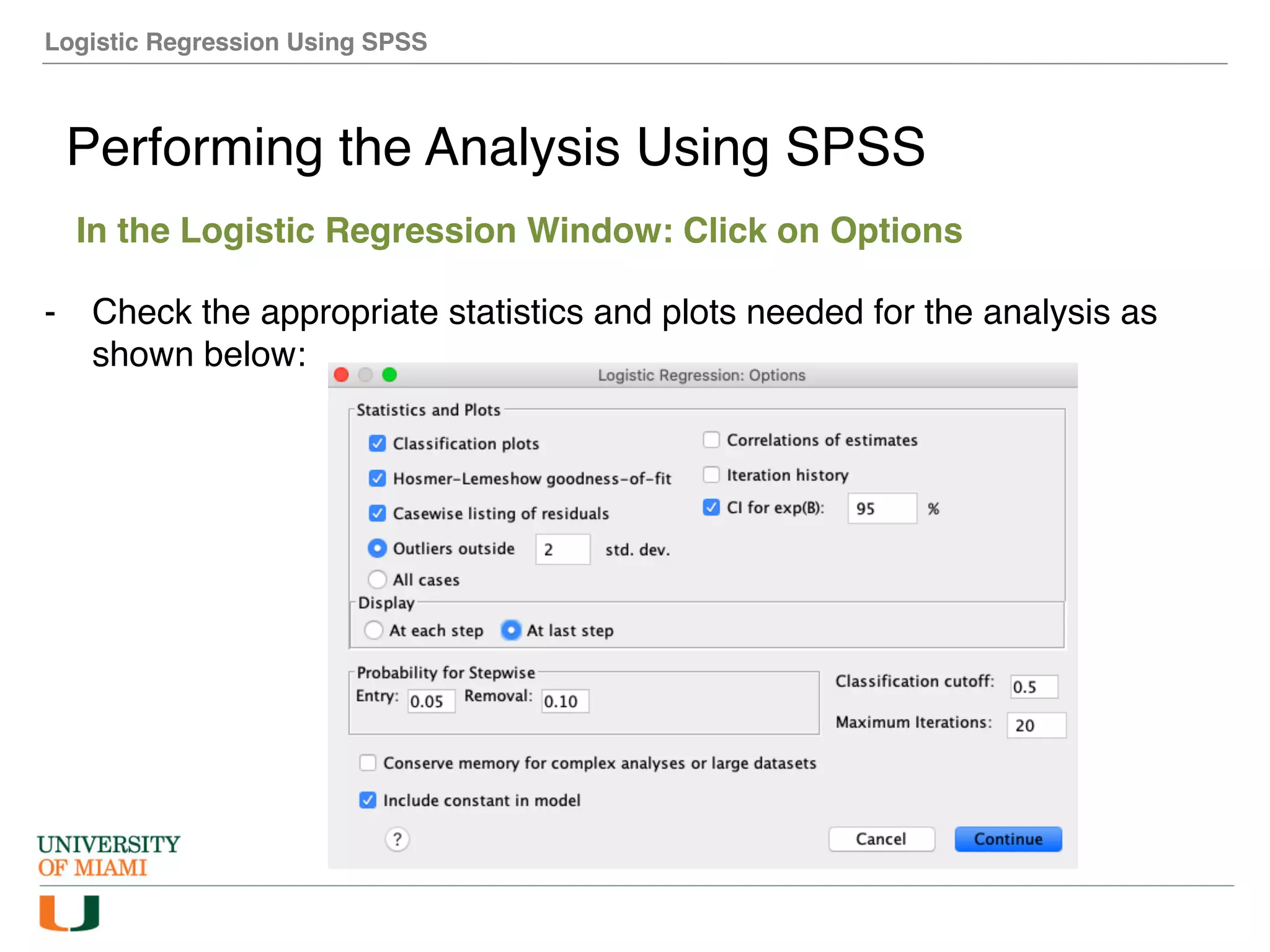

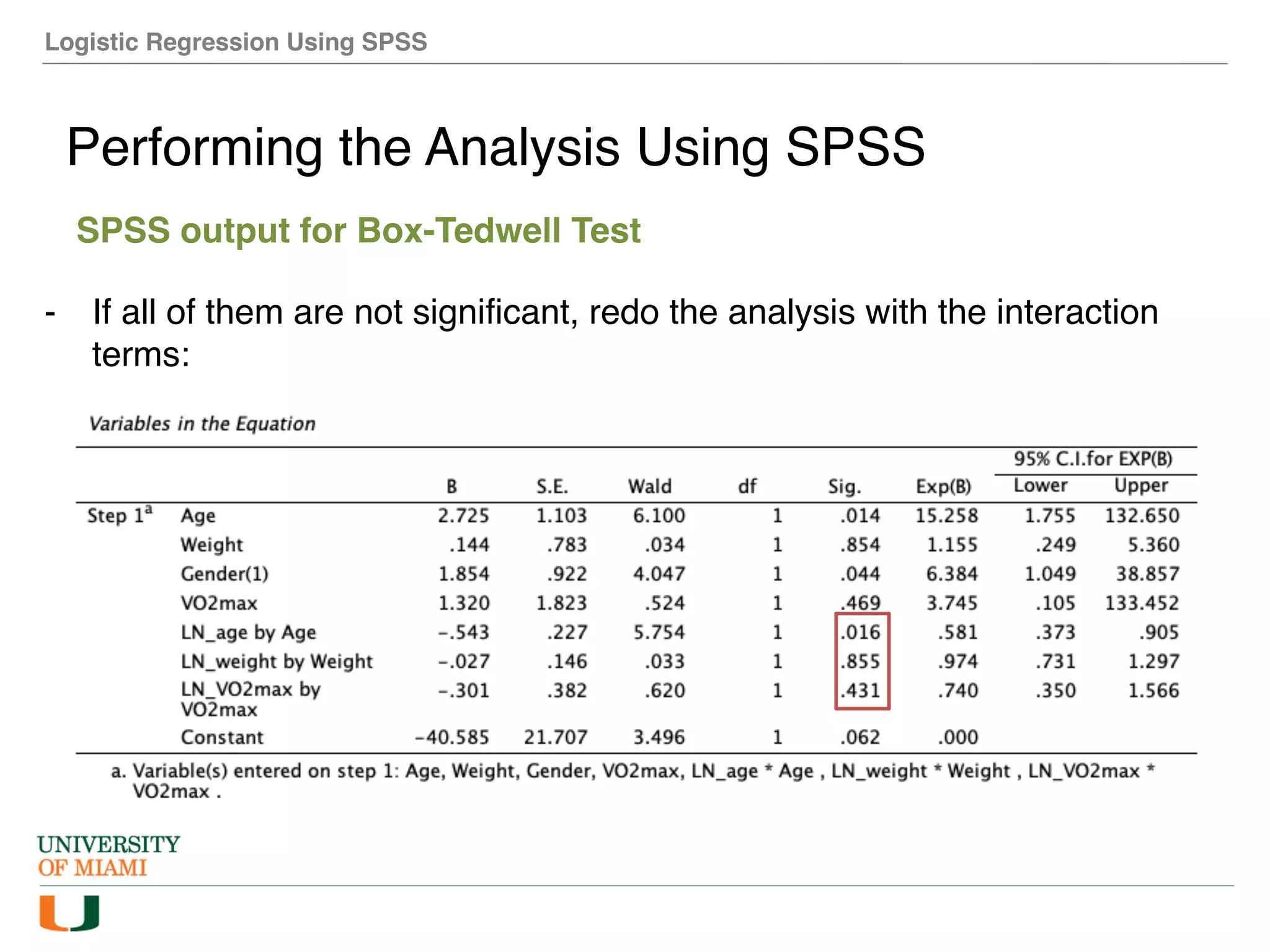

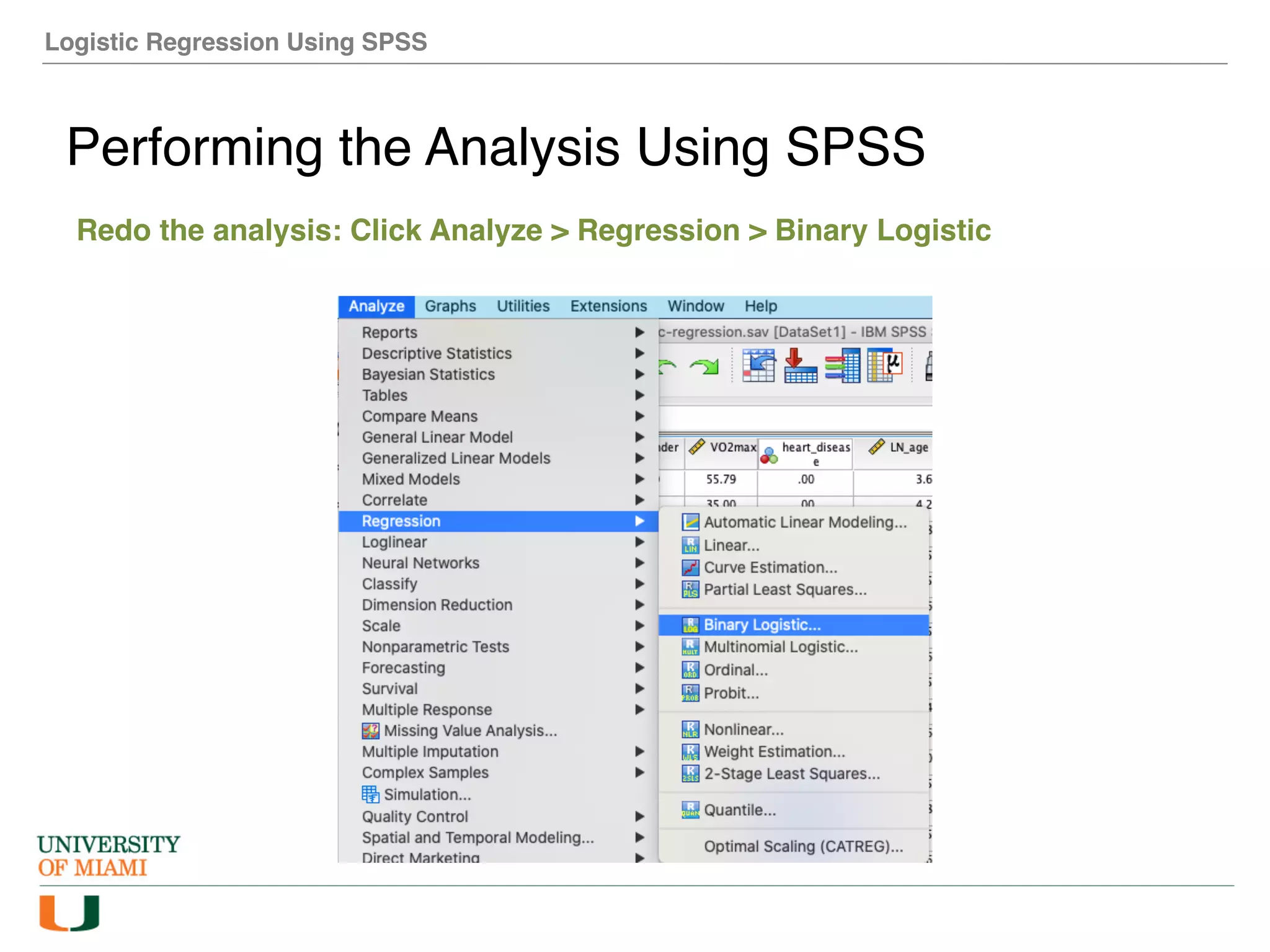

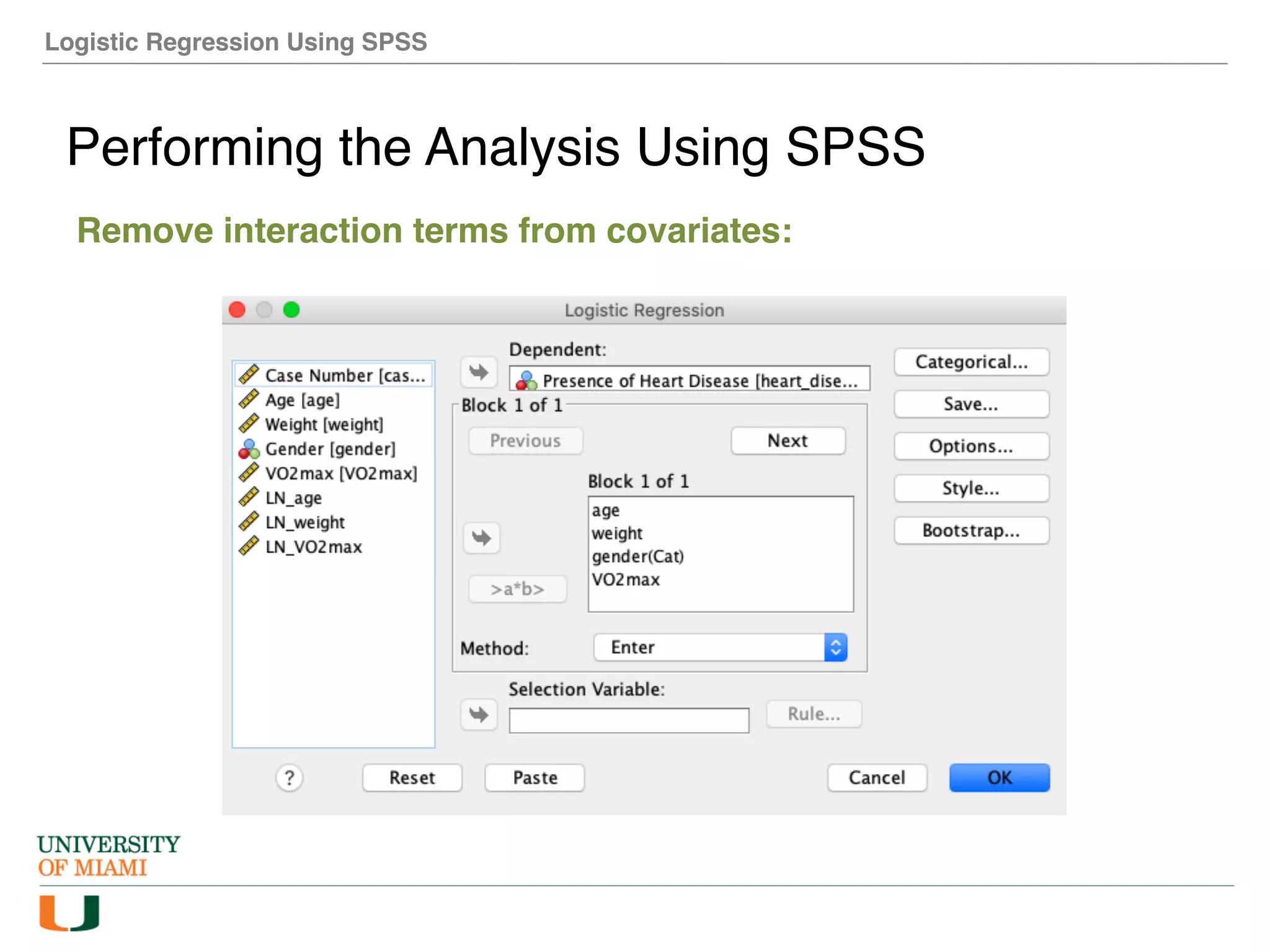

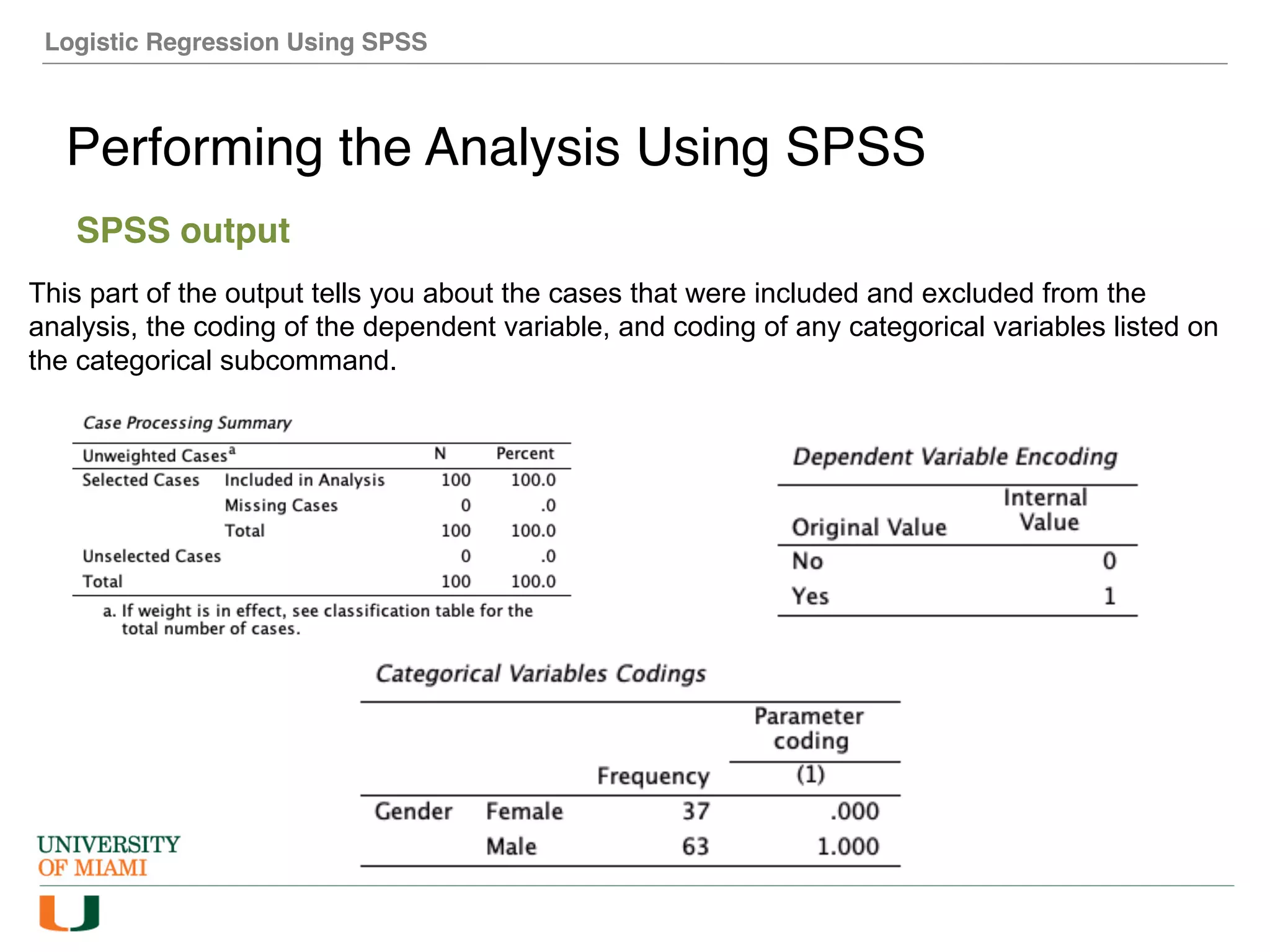

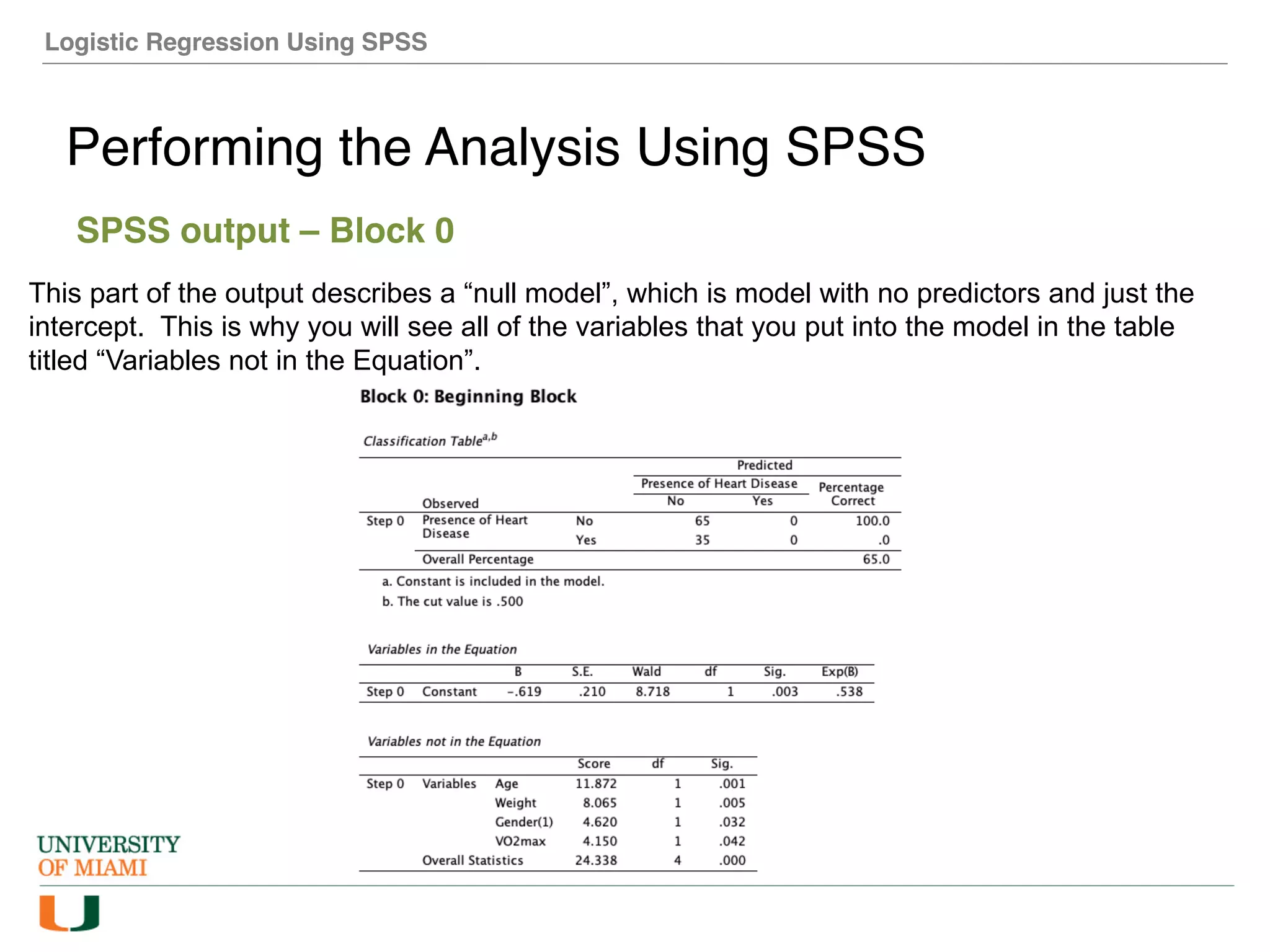

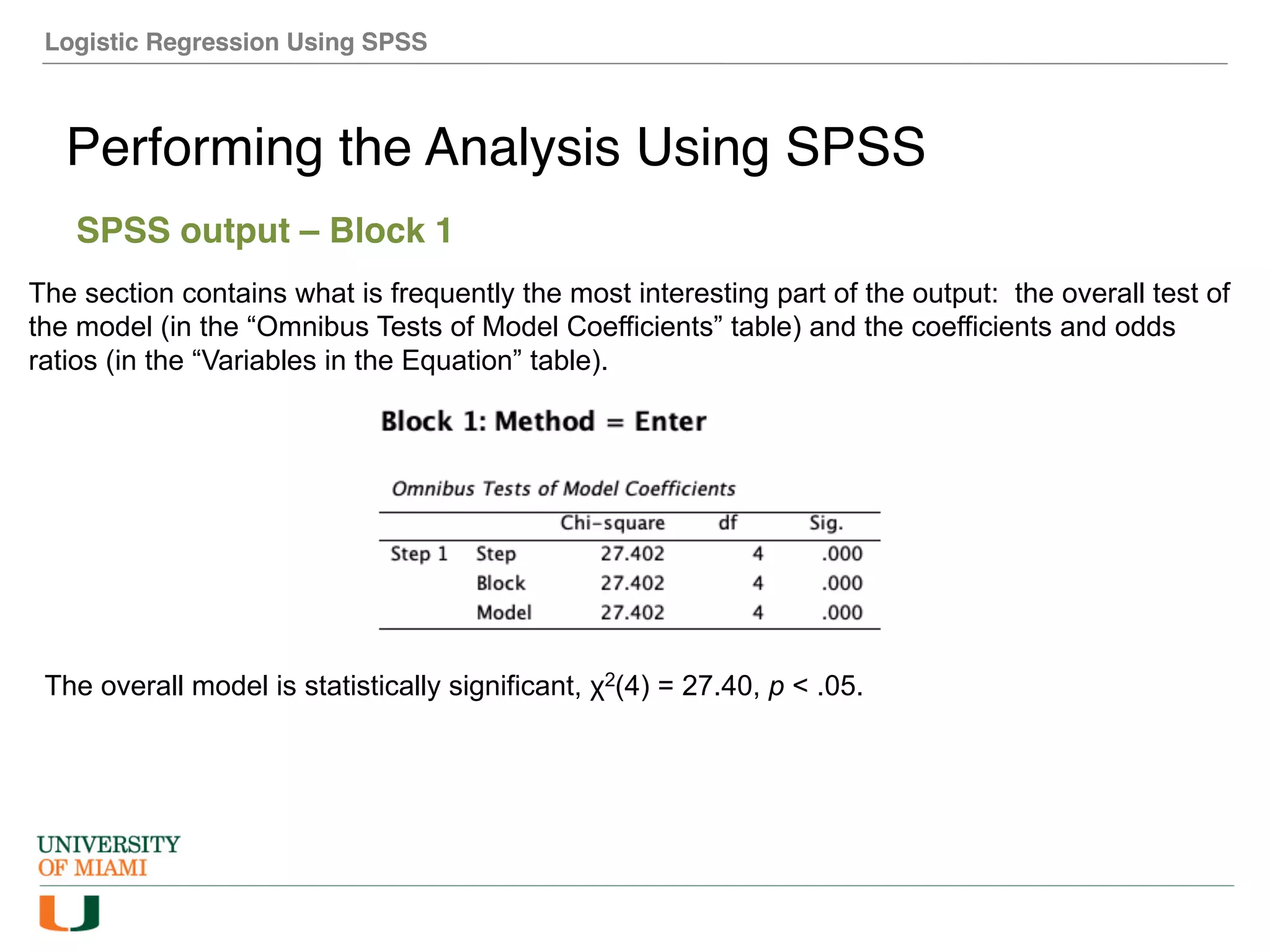

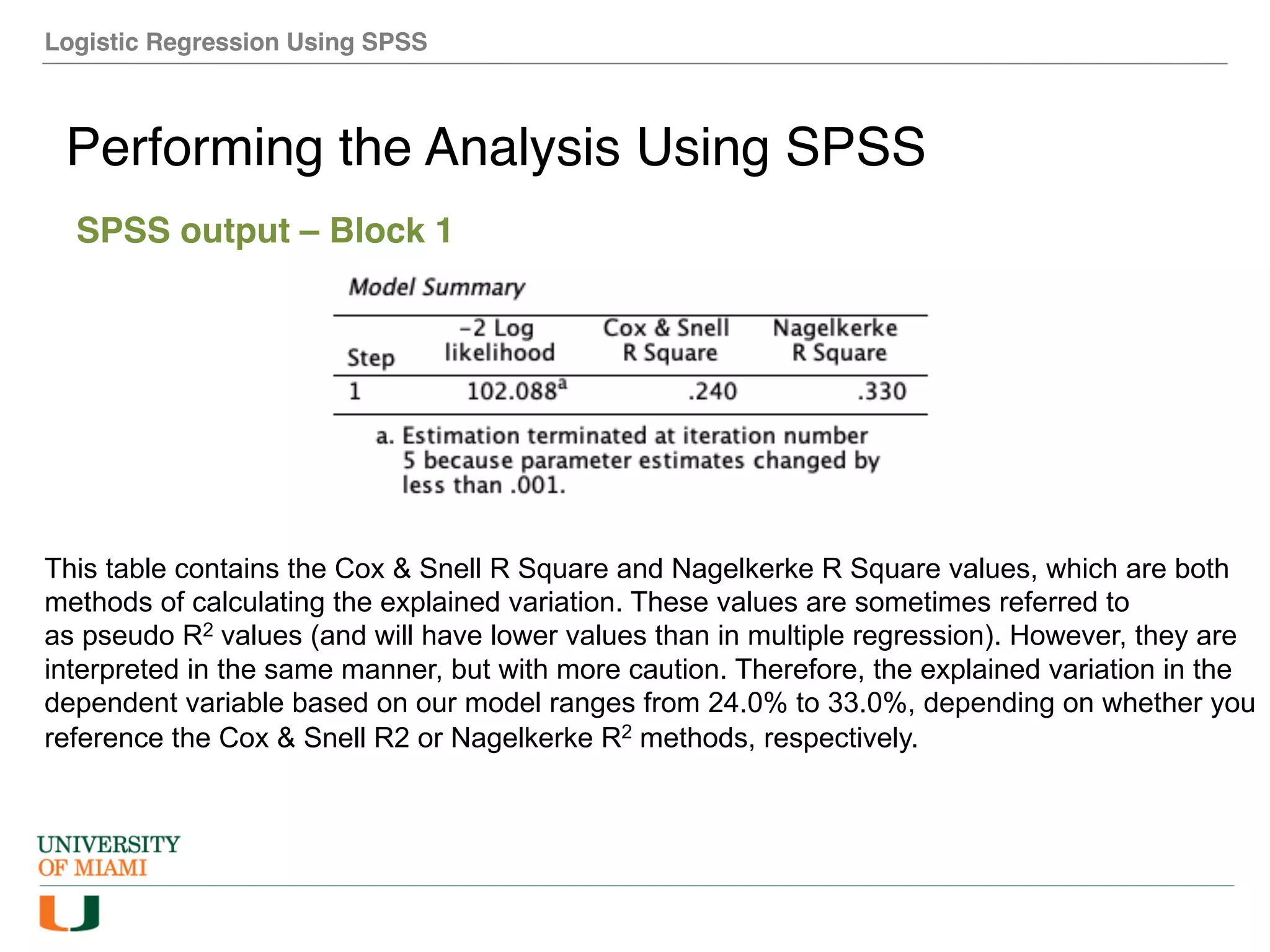

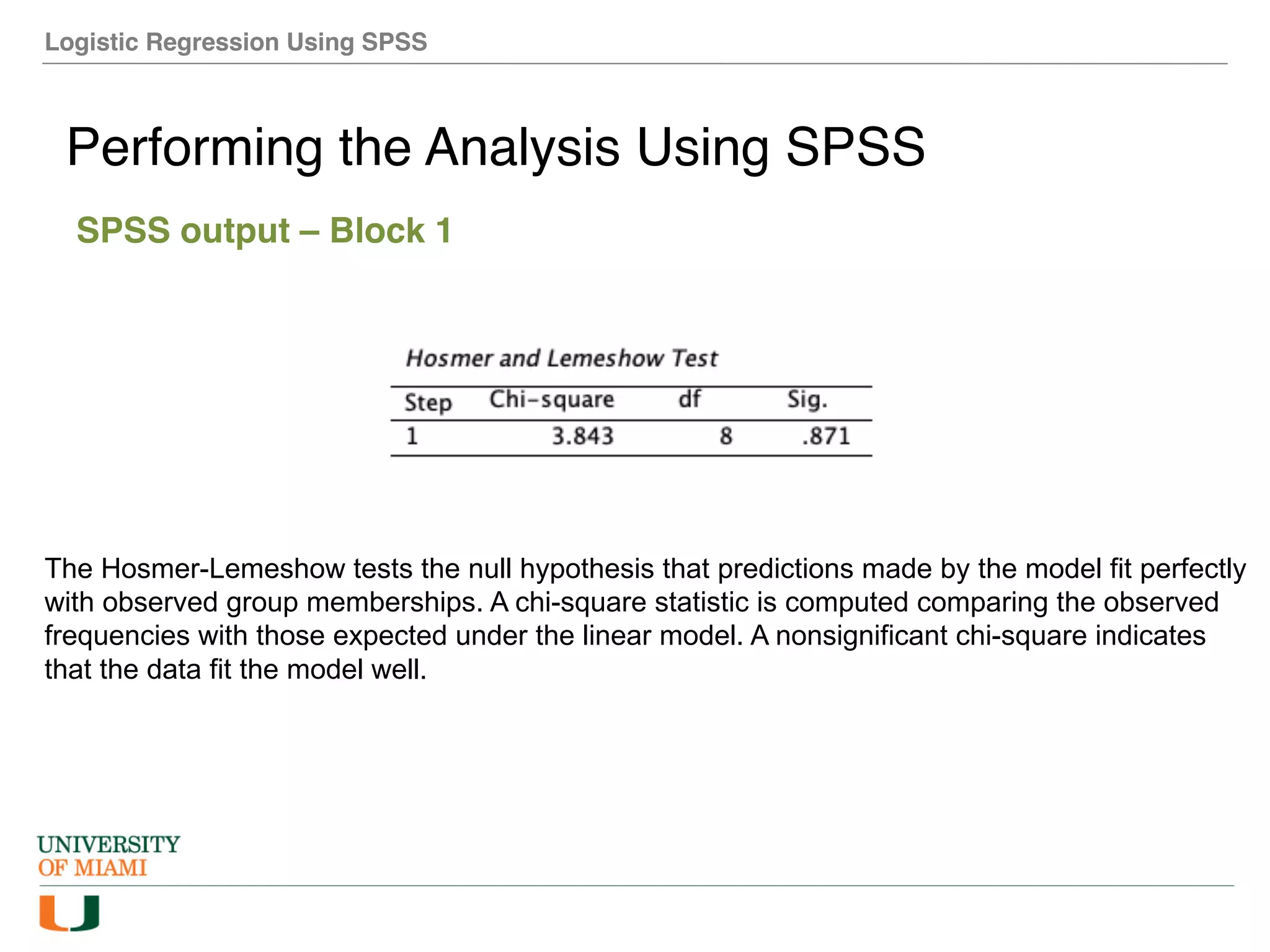

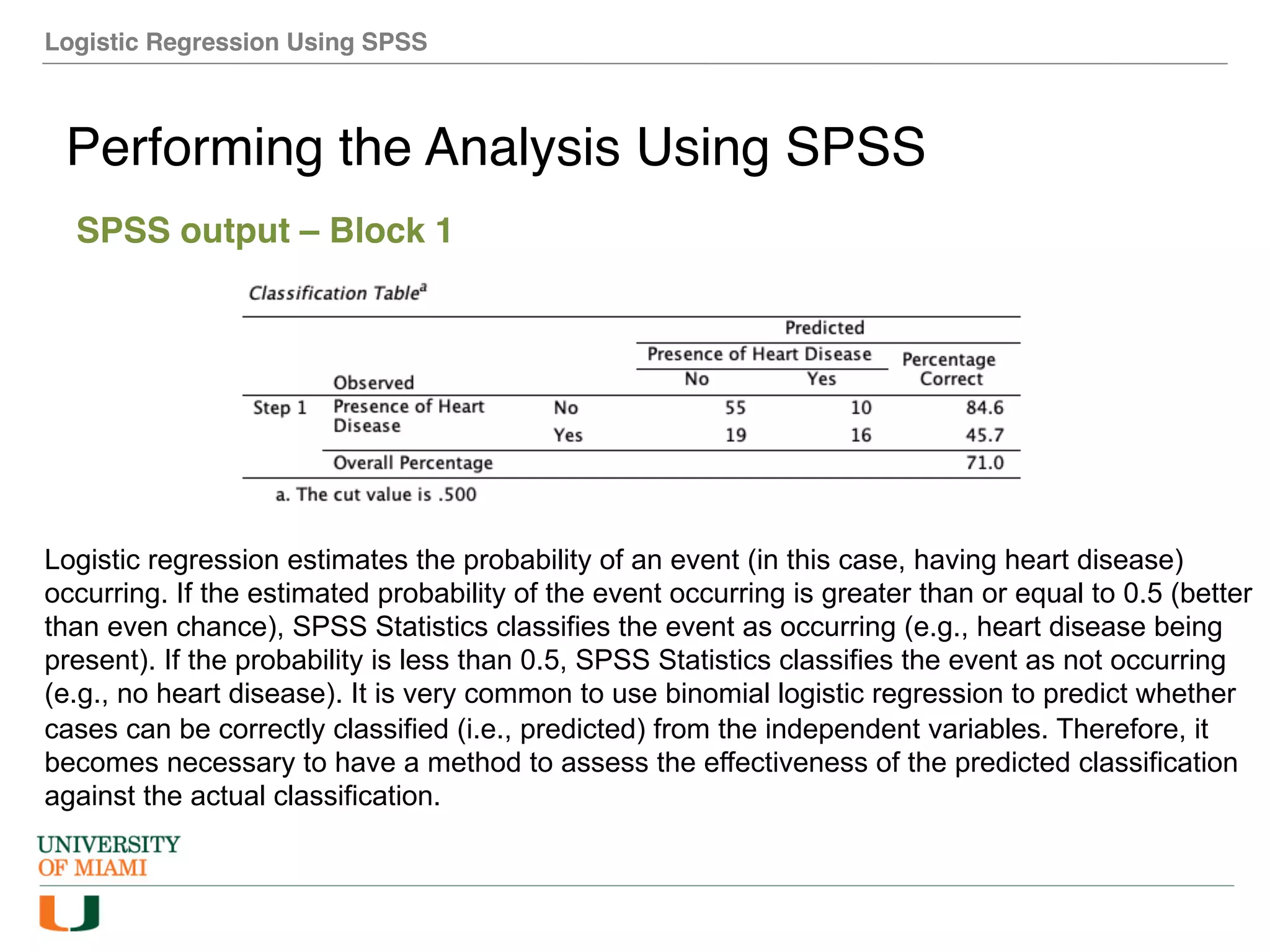

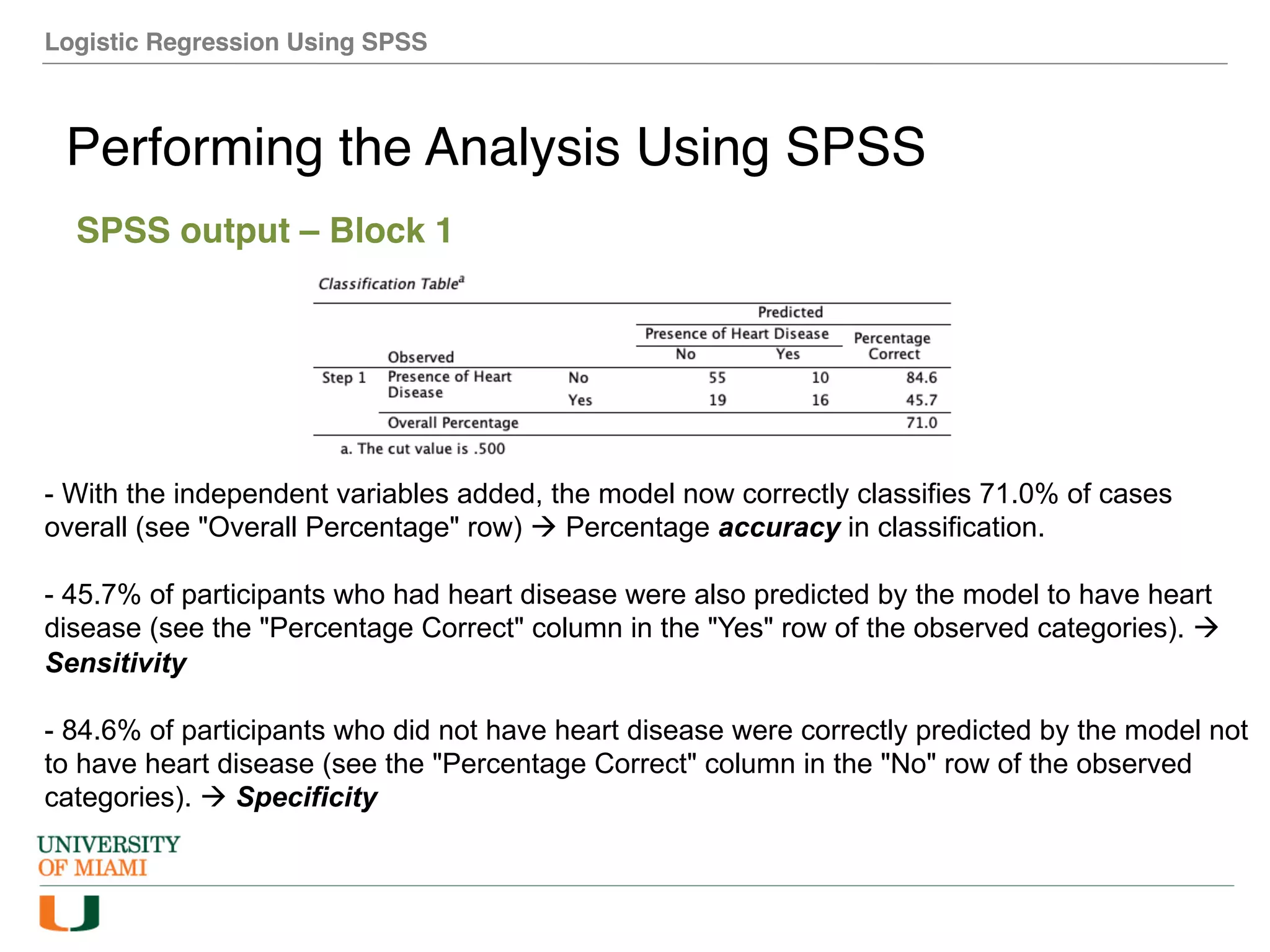

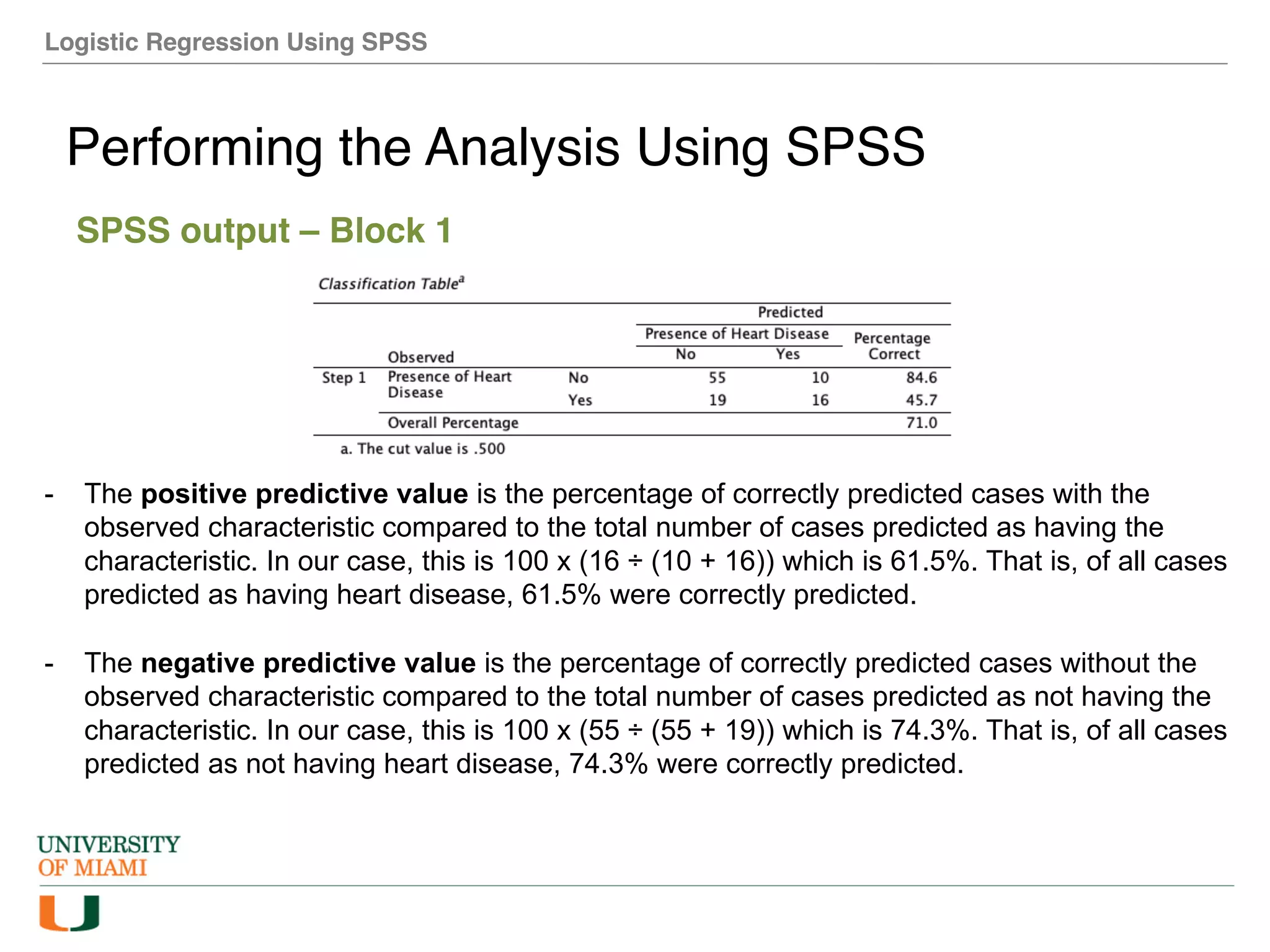

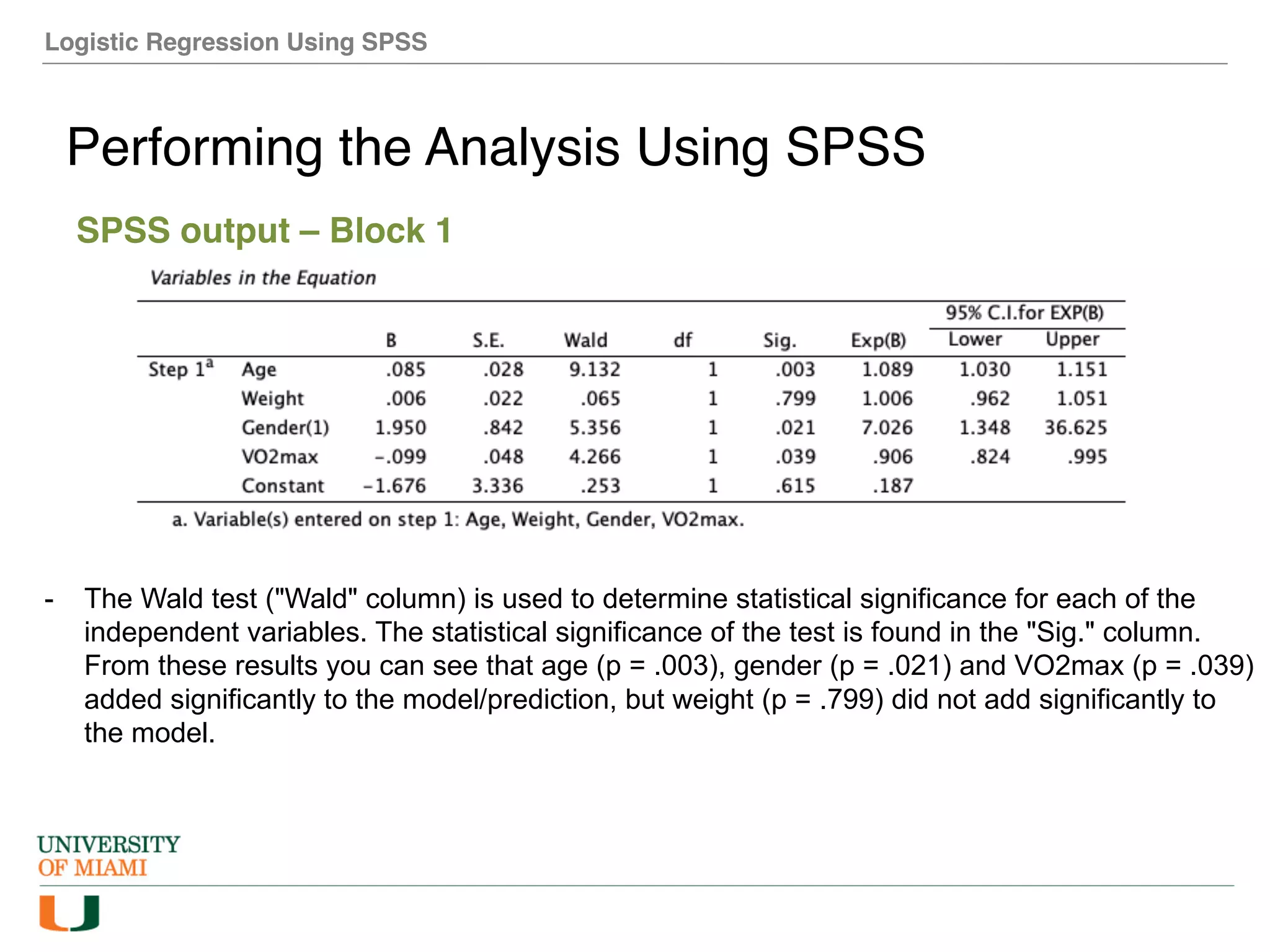

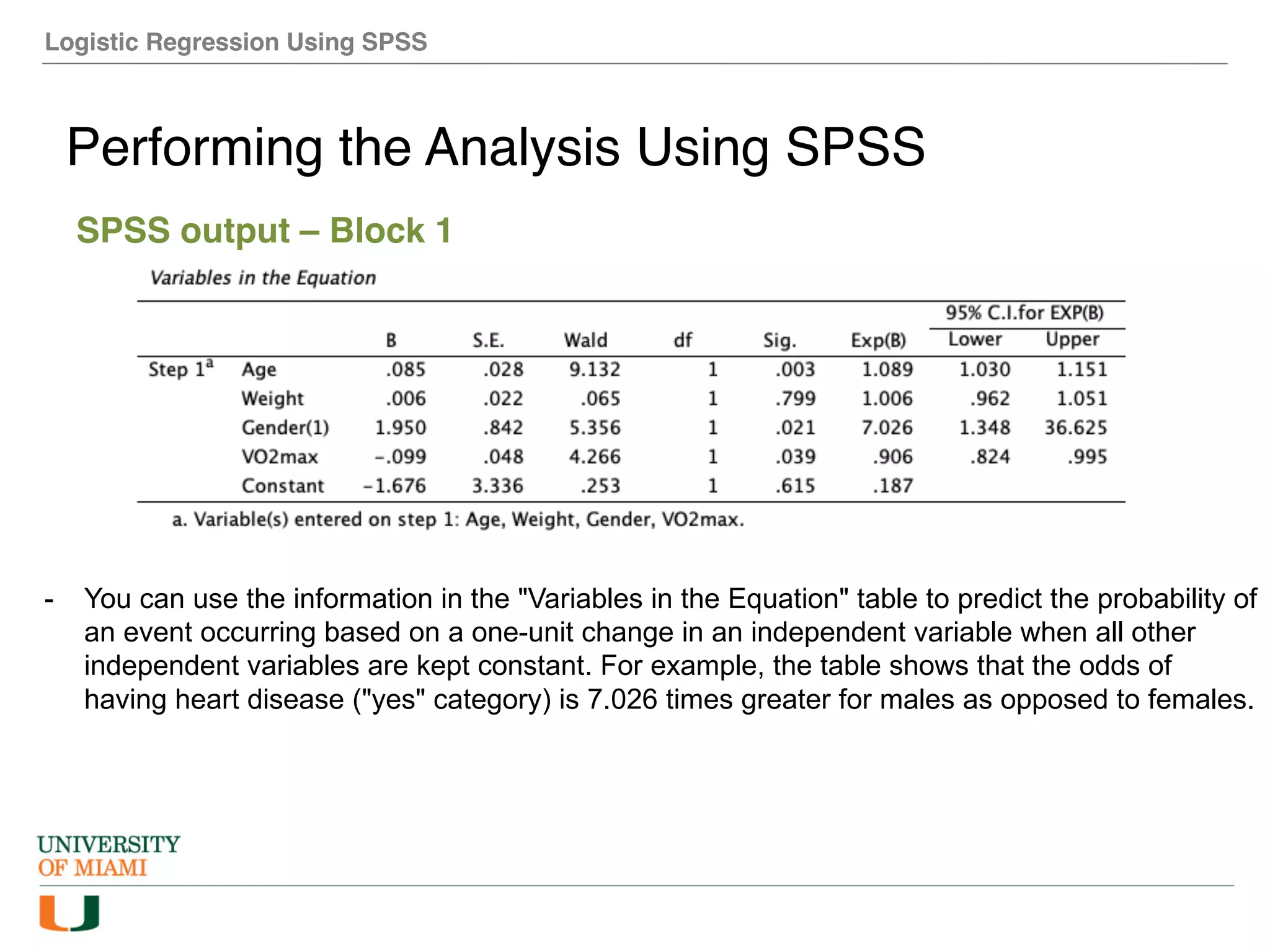

This document gives an overview of logistic regression analysis in SPSS. It covers the key concepts, chiefly the use of predictor variables to predict a categorical outcome, along with the assumptions of logistic regression and how to interpret the SPSS output, including tests of model fit and predicted probabilities. It then works through an example analysis in SPSS that predicts heart disease from age, gender, and VO2 max.
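To make the idea of predicted probabilities concrete, here is a minimal Python sketch of how a fitted logistic regression model turns predictor values into a probability, and how a coefficient maps to an odds ratio (the quantity SPSS reports as Exp(B)). The coefficient values, the variable names `age`, `gender`, and `vo2max`, and the example case are all hypothetical placeholders chosen for illustration. They are not results from the analysis in the slides.

```python
import math


def predicted_probability(intercept, coefficients, values):
    """Return P(outcome = 1) for one case under a logistic model.

    The linear predictor (logit) is intercept + sum(b_i * x_i);
    the logistic function maps it to a probability between 0 and 1.
    """
    logit = intercept + sum(coefficients[name] * values[name] for name in coefficients)
    return 1.0 / (1.0 + math.exp(-logit))


def odds_ratio(coefficient):
    """Odds ratio for a one-unit increase in a predictor: exp(B)."""
    return math.exp(coefficient)


def classify(probability, cutoff=0.5):
    """Assign the predicted category using a probability cutoff."""
    return 1 if probability >= cutoff else 0


# Hypothetical coefficients, NOT taken from the slides.
intercept = -1.0
coefficients = {"age": 0.08, "gender": 1.5, "vo2max": -0.1}

# Hypothetical case: a 55-year-old, gender coded 1, with VO2 max of 40.
case = {"age": 55, "gender": 1, "vo2max": 40.0}

p = predicted_probability(intercept, coefficients, case)
print(f"Predicted probability of heart disease: {p:.3f}")
print(f"Predicted category at cutoff 0.5: {classify(p)}")
for name, b in coefficients.items():
    print(f"Odds ratio for {name}: {odds_ratio(b):.3f}")
```

An odds ratio above 1 means the odds of the outcome rise as the predictor increases, and an odds ratio below 1 means they fall. The 0.5 cutoff here matches the default classification cutoff in SPSS binary logistic regression, but it can be changed.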