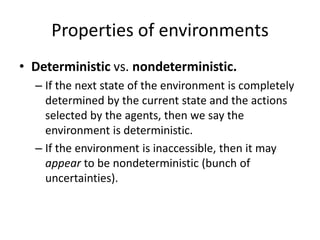

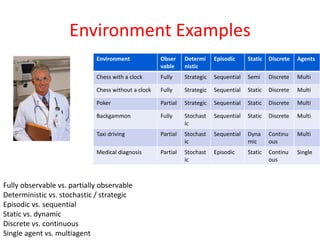

An intelligent agent is an entity that is situated in an environment, autonomous, and flexible. It perceives its environment through sensors and acts upon it through effectors. Agent types include simple reflex agents, model-based reflex agents, goal-based agents, and utility-based agents. Environments can be fully or partially observable, deterministic or stochastic, static or dynamic, discrete or continuous, and single-agent or multi-agent. Examples of environments include chess, poker, backgammon, taxi driving, medical diagnosis, and image analysis.
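The sensor/effector cycle described above can be illustrated with a minimal Python sketch of the perceive-act loop. Everything here is a hypothetical toy chosen for illustration: a one-dimensional corridor of dirty or clean cells (`CorridorWorld`), the action names `"Suck"`, `"Left"` and `"Right"`, and the `cleaner` agent function. In the terms used above, this environment is partially observable (the sensor reports only the current cell), deterministic, static, discrete, and single-agent. The code assumes Python 3.9+ for the built-in generic type hints.

```python
from typing import Callable

# Hypothetical toy environment: a 1-D corridor of cells, each dirty or clean.
# Sensor: reports (position, is_dirty) for the CURRENT cell only, so the
# environment is partially observable. Effectors: "Suck", "Left", "Right".
Percept = tuple[int, bool]
AgentFunction = Callable[[Percept], str]


class CorridorWorld:
    def __init__(self, dirt: list[bool]) -> None:
        self.dirt = list(dirt)  # dirt[i] is True if cell i is dirty
        self.position = 0       # the agent starts in the leftmost cell

    def percept(self) -> Percept:
        """What the agent's sensors observe right now."""
        return (self.position, self.dirt[self.position])

    def execute(self, action: str) -> None:
        """Apply the chosen action through the agent's effectors."""
        if action == "Suck":
            self.dirt[self.position] = False
        elif action == "Right" and self.position < len(self.dirt) - 1:
            self.position += 1
        elif action == "Left" and self.position > 0:
            self.position -= 1
        # Any other action (or a move into a wall) leaves the world unchanged.


def run(env: CorridorWorld, agent: AgentFunction, steps: int) -> list[str]:
    """Perceive-act loop: sense, choose an action, act; repeat."""
    history: list[str] = []
    for _ in range(steps):
        action = agent(env.percept())  # sensors -> agent
        env.execute(action)            # agent -> effectors
        history.append(action)
    return history


def cleaner(percept: Percept) -> str:
    """A hypothetical agent function: clean if dirty, else move right."""
    _, dirty = percept
    return "Suck" if dirty else "Right"


env = CorridorWorld([True, False, True])
print(run(env, cleaner, 5))  # ['Suck', 'Right', 'Right', 'Suck', 'Right']
```

The agent only ever sees what `percept()` returns and only changes the world through `execute()`. That separation is what lets the same loop drive different agent programs, such as the reflex agents below.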

![Overall Intelligent Agent](https://image.slidesharecdn.com/artificialintelligence03-191009161342/85/Artificial-intelligence-03-9-320.jpg)

Overall Intelligent Agent [Terziyan, 1993]. Such an agent:

1) is goal-oriented, because it should have at least one goal: to continuously keep a balance between its internal and external environments;
2) is creative, because of its ability to change the external environment;
3) is adaptive, because of its ability to change the internal environment;
4) is mobile, because of its ability to move to another place;
5) is social, because of its ability to communicate to create a community;
6) is self-configurable, because of its ability to protect its “mental health” by sensing only a “suitable” part of the environment.

![Three groups of agents](https://image.slidesharecdn.com/artificialintelligence03-191009161342/85/Artificial-intelligence-03-11-320.jpg)

Three groups of agents [Etzioni and Weld, 1995]:

- Backseat driver: helps the user during some task (e.g., Microsoft Office Assistant);
- Taxi driver: knows where to go when you tell it the destination;
- Caretaker: knows where to go, when, and why.

![Simple Reflex Agent](https://image.slidesharecdn.com/artificialintelligence03-191009161342/85/Artificial-intelligence-03-23-320.jpg)

Simple Reflex Agent:

- function SIMPLE-REFLEX-AGENT(percept) returns an action
  - static: rules, a set of condition-action rules
  - state ← INTERPRET-INPUT(percept)
  - rule ← RULE-MATCH(state, rules)
  - action ← RULE-ACTION[rule]
  - return action
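The SIMPLE-REFLEX-AGENT pseudocode can be sketched in Python as follows. This is a minimal illustration under stated assumptions: a hypothetical two-square vacuum world with squares `"A"` and `"B"`, percepts of the form `(location, status)`, and a hypothetical rule list in which each condition-action rule is a `(predicate, action)` pair. `interpret_input` is the identity here only because the percept already serves as the state description. Python 3.9+ is assumed.

```python
from typing import Callable

# Hypothetical vacuum world: a percept is (location, status), e.g. ("A", "Dirty").
Percept = tuple[str, str]
State = tuple[str, str]

# A condition-action rule pairs a condition (predicate on the state) with an
# action. RULE-MATCH below returns the first rule whose condition holds.
Rule = tuple[Callable[[State], bool], str]

RULES: list[Rule] = [
    (lambda s: s[1] == "Dirty", "Suck"),
    (lambda s: s[0] == "A", "Right"),
    (lambda s: s[0] == "B", "Left"),
]


def interpret_input(percept: Percept) -> State:
    """INTERPRET-INPUT: abstract the raw percept into a state description."""
    return percept  # identity, since the percept is already (location, status)


def rule_match(state: State, rules: list[Rule]) -> Rule:
    """RULE-MATCH: return the first rule whose condition holds."""
    for rule in rules:
        condition, _ = rule
        if condition(state):
            return rule
    raise LookupError(f"no rule matches state {state!r}")


def simple_reflex_agent(percept: Percept) -> str:
    """SIMPLE-REFLEX-AGENT: the action depends on the current percept only."""
    state = interpret_input(percept)
    rule = rule_match(state, RULES)
    _, action = rule  # RULE-ACTION[rule]
    return action


print(simple_reflex_agent(("A", "Dirty")))  # Suck
print(simple_reflex_agent(("B", "Clean")))  # Left
```

Because the agent keeps no memory, it cannot tell whether the other square is already clean. In this world it therefore shuttles between `"A"` and `"B"` forever once both are clean.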

![Model-based reflex agents](https://image.slidesharecdn.com/artificialintelligence03-191009161342/85/Artificial-intelligence-03-29-320.jpg)

Model-based reflex agents:

- function REFLEX-AGENT-WITH-STATE(percept) returns an action
  - static:
    - state, a description of the current world state
    - rules, a set of condition-action rules
    - action, the most recent action, initially none
  - state ← UPDATE-STATE(state, action, percept)
  - rule ← RULE-MATCH(state, rules)
  - action ← RULE-ACTION[rule]
  - return action
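A minimal Python sketch of REFLEX-AGENT-WITH-STATE follows, using the `UPDATE-STATE(state, action, percept)` form in which the most recent action is stored and fed into the next state update. Assumptions, all hypothetical: a two-square vacuum world (`"A"`, `"B"`) where the percept is only the status of the current square, so the agent must infer its location from its own last move; a known starting square `"A"`; dirt that never reappears; and the names `WorldState`, `update_state`, `rule_match`, `RULES` and `ModelBasedReflexAgent`. Python 3.9+ is assumed.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical two-square vacuum world with squares "A" and "B".
# Assumption: the percept is ONLY the status of the current square
# ("Dirty" or "Clean"), so the agent must track its location itself.


@dataclass
class WorldState:
    """Internal model: believed location and believed square statuses."""
    location: str = "A"  # assumed known starting square
    status: dict[str, Optional[str]] = field(
        default_factory=lambda: {"A": None, "B": None}  # None = not yet seen
    )


Rule = tuple[Callable[[WorldState], bool], str]

RULES: list[Rule] = [
    (lambda s: s.status[s.location] == "Dirty", "Suck"),
    (lambda s: all(v == "Clean" for v in s.status.values()), "NoOp"),
    (lambda s: s.location == "A", "Right"),
    (lambda s: s.location == "B", "Left"),
]


def update_state(state: WorldState, action: Optional[str], percept: str) -> WorldState:
    """UPDATE-STATE(state, action, percept): predict, then observe."""
    # Use the most recent action to predict where the agent now is.
    if action == "Right":
        state.location = "B"
    elif action == "Left":
        state.location = "A"
    # Record what the sensor reports about the current square.
    state.status[state.location] = percept
    return state


def rule_match(state: WorldState, rules: list[Rule]) -> Rule:
    """RULE-MATCH: return the first rule whose condition holds."""
    for rule in rules:
        if rule[0](state):
            return rule
    raise LookupError(f"no rule matches {state!r}")


class ModelBasedReflexAgent:
    def __init__(self, rules: list[Rule]) -> None:
        self.state = WorldState()           # static: state
        self.rules = rules                  # static: rules
        self.action: Optional[str] = None   # static: action, initially none

    def __call__(self, percept: str) -> str:
        self.state = update_state(self.state, self.action, percept)
        rule = rule_match(self.state, self.rules)
        self.action = rule[1]               # RULE-ACTION[rule]
        return self.action


agent = ModelBasedReflexAgent(RULES)
print([agent(p) for p in ["Dirty", "Clean", "Dirty", "Clean", "Clean"]])
# ['Suck', 'Right', 'Suck', 'NoOp', 'NoOp']
```

The internal state is what lets this agent stop (`"NoOp"`) once it believes both squares are clean, which a memoryless simple reflex agent cannot do. The belief about an unobserved square is never refreshed, so the sketch relies on the assumption that dirt does not reappear.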