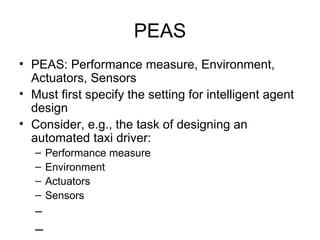

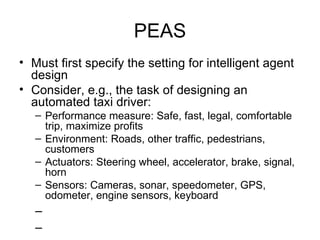

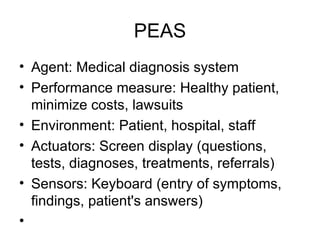

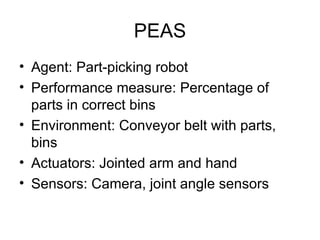

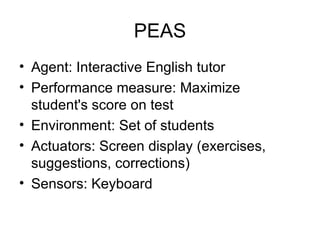

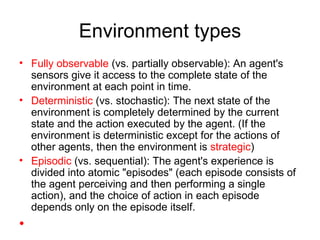

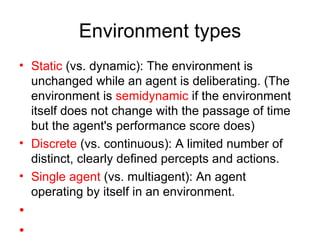

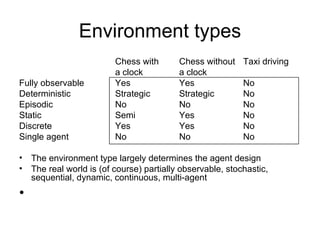

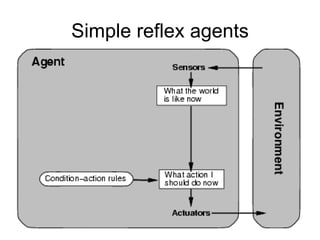

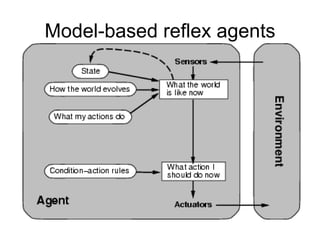

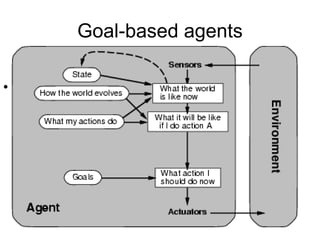

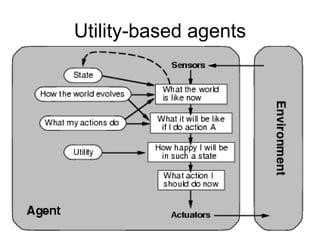

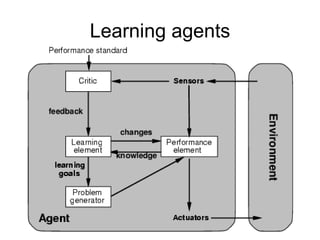

The document introduces intelligent agents and the environments they operate in. It covers agents and environments, rational agents, and the PEAS framework, which specifies an agent's Performance measure, Environment, Actuators, and Sensors. Environments are classified as fully or partially observable, deterministic or stochastic, episodic or sequential, static or dynamic, discrete or continuous, and single-agent or multi-agent. The document also covers agent functions and agent programs, and four basic agent types: simple reflex agents, model-based reflex agents, goal-based agents, and utility-based agents.

![Agents and environments. The agent function maps from percept histories to actions: f : P* → A. The agent program runs on the physical architecture to produce f. agent = architecture + program](https://image.slidesharecdn.com/m2-agents-120118104054-phpapp01/85/Artificial-Intelligence-Chapter-two-agents-4-320.jpg)
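
The distinction between the agent function f : P* → A and the agent program that produces it can be illustrated with a small sketch. The code below is not from the slides; it assumes a table-driven design in which the program stores the percept history and looks the whole history up in a table. The names `make_table_driven_agent`, `table`, and the `(location, status)` percept encoding are hypothetical choices made for illustration.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical encodings: a percept is (location, status), an action is a string.
Percept = Tuple[str, str]
Action = str


def make_table_driven_agent(
    table: Dict[Tuple[Percept, ...], Action],
    default: Action = "NoOp",
) -> Callable[[Percept], Action]:
    """Return an agent program that realizes f : P* -> A by table lookup."""
    history: List[Percept] = []  # the percept sequence seen so far

    def program(percept: Percept) -> Action:
        # The program receives one percept at a time, but the action is
        # chosen from the entire percept history, as in f : P* -> A.
        history.append(percept)
        return table.get(tuple(history), default)

    return program


# A tiny, partial table, assumed for illustration only.
table = {
    (("A", "Dirty"),): "Suck",
    (("A", "Clean"),): "Right",
    (("A", "Dirty"), ("A", "Clean")): "Right",
}

agent = make_table_driven_agent(table)
first = agent(("A", "Dirty"))   # history has length 1
second = agent(("A", "Clean"))  # history has length 2
third = agent(("B", "Dirty"))   # history not in the table, falls back to default
```

The table here is the agent function written out explicitly, while `program` is one possible agent program that implements it. A table keyed on full histories grows very quickly with the length of the history, which is one reason more compact agent programs are usually preferred.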

![Vacuum-cleaner world. Percepts: location and contents, e.g., [A, Dirty]. Actions: Left, Right, Suck, NoOp](https://image.slidesharecdn.com/m2-agents-120118104054-phpapp01/85/Artificial-Intelligence-Chapter-two-agents-5-320.jpg)
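
The vacuum-cleaner world is small enough to simulate directly. The following is a minimal sketch, assuming a two-square world with locations A and B and a simple reflex policy (suck if the current square is dirty, otherwise move to the other square). The policy, the `simulate` helper, and the dictionary representation of the world are assumptions made for illustration and are not taken from the slide.

```python
def reflex_vacuum_agent(percept):
    """Choose an action from the current percept only: (location, status)."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    # Square is clean: move to the other square.
    return "Right" if location == "A" else "Left"


def simulate(world, location, steps):
    """Run the agent for a fixed number of steps in a two-square world.

    world maps each location ("A" or "B") to "Dirty" or "Clean".
    Returns the list of actions taken and the final world state.
    """
    world = dict(world)  # copy so the caller's dictionary is not modified
    trace = []
    for _ in range(steps):
        action = reflex_vacuum_agent((location, world[location]))
        trace.append(action)
        if action == "Suck":
            world[location] = "Clean"
        elif action == "Right":
            location = "B"
        elif action == "Left":
            location = "A"
    return trace, world


trace, final_world = simulate({"A": "Dirty", "B": "Dirty"}, "A", 4)
```

Starting in square A with both squares dirty, this agent cleans A, moves right, cleans B, and then moves back left. Note that this particular policy never chooses `NoOp`, so it keeps moving back and forth even after both squares are clean.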